torch.nn.utils.clip_grad_norm_()

用法

参数列表

- parameters 一个由张量或单个张量组成的可迭代对象(模型参数)

- max_norm 梯度的最大范数

- nort_type 所使用的范数类型。默认为L2范数,可以是无穷大范数inf

设parameters里所有参数的梯度的范数为total_norm,

若max_norm>total_norm,parameters里面的参数的梯度不做改变;

若max_norm<total_norm,parameters里面的参数的梯度都要乘以一个系数clip_coef

官方代码

def clip_grad_norm_(parameters, max_norm, norm_type=2):

r"""Clips gradient norm of an iterable of parameters.

The norm is computed over all gradients together, as if they were

concatenated into a single vector. Gradients are modified in-place.

Arguments:

parameters (Iterable[Tensor] or Tensor): an iterable of Tensors or a

single Tensor that will have gradients normalized

max_norm (float or int): max norm of the gradients

norm_type (float or int): type of the used p-norm. Can be ``'inf'`` for

infinity norm.

Returns:

Total norm of the parameters (viewed as a single vector).

"""

if isinstance(parameters, torch.Tensor):

parameters = [parameters]

#第一步

parameters = list(filter(lambda p: p.grad is not None, parameters))

max_norm = float(max_norm)

norm_type = float(norm_type)

if norm_type == inf:

total_norm = max(p.grad.data.abs().max() for p in parameters)

else:

total_norm = 0

for p in parameters:

#第二步

param_norm = p.grad.data.norm(norm_type)

#第三步

total_norm += param_norm.item() ** norm_type

total_norm = total_norm ** (1. / norm_type)

clip_coef = max_norm / (total_norm + 1e-6)

if clip_coef < 1:

for p in parameters:

p.grad.data.mul_(clip_coef)

return total_norm

意义

这个函数的主要目的是对parameters里的所有参数的梯度进行规范化

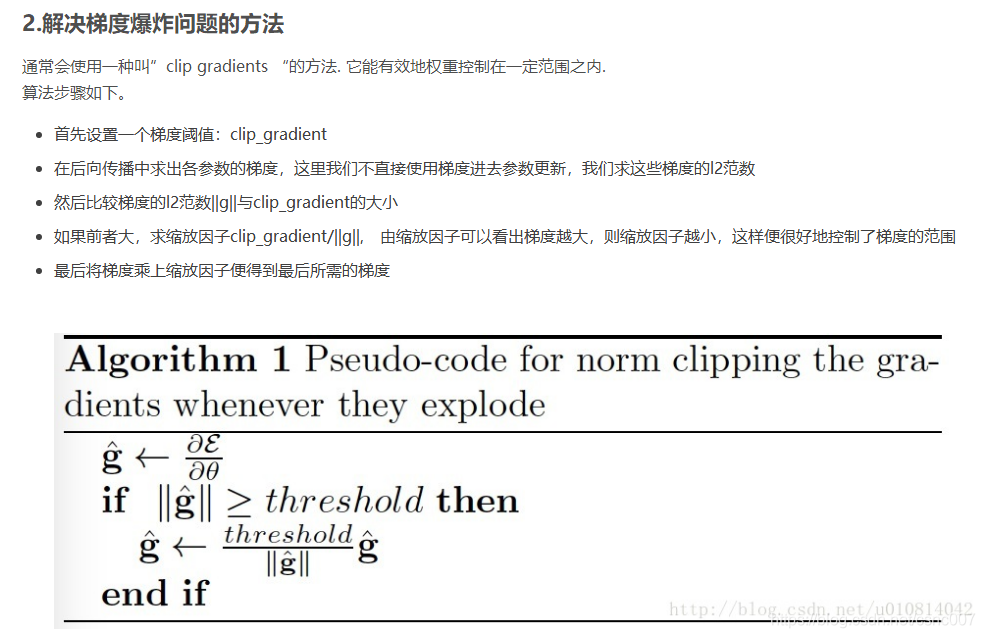

梯度裁剪解决的是梯度消失或爆炸的问题,即设定阈值,如果梯度超过阈值,那么就截断,将梯度变为阈值