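Building the Collection Pipeline for an Offline Data Warehouse

Offline Data Warehouse Setup: Installing the Data Collection Tools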

I. ZooKeeper installation and configuration

(1) Installing zookeeper-3.5.9

Download the zookeeper-3.5.9 package and place it in the package directory on flink102.

Then extract the package.
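A minimal sketch of the extraction step. The directories /opt/software and /opt/module and the archive name apache-zookeeper-3.5.9-bin.tar.gz are assumptions (this section only says "the package directory"), and `unpack` is a hypothetical helper name.

```shell
# unpack ARCHIVE DEST_DIR: extract a .tar.gz archive into DEST_DIR
unpack() {
  local archive="$1" dest="$2"
  mkdir -p "$dest" || return 1
  tar -xzf "$archive" -C "$dest"
}

# Assumed locations and archive name; run on flink102:
# unpack /opt/software/apache-zookeeper-3.5.9-bin.tar.gz /opt/module
```

The archive unpacks into a folder named after itself; the later sketches assume it was renamed to zookeeper-3.5.9.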

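(2) Editing the ZooKeeper configuration file

Go into the zookeeper folder and look at the directory layout.

Create a zkData directory to hold ZooKeeper's data (snapshots and, unless dataLogDir is set, the transaction logs).

Next, edit ZooKeeper's configuration file.

First rename it: the shipped sample is conf/zoo_sample.cfg, while ZooKeeper reads conf/zoo.cfg.

Then edit it so that dataDir points at the zkData directory created above.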
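The three steps above (create zkData, rename the sample file, change dataDir) can be sketched as one function. The original configuration listing is not available here, so this only touches dataDir; `configure_zookeeper` is a hypothetical name and the install path in the usage comment is an assumption.

```shell
# configure_zookeeper ZK_HOME
# Creates zkData, renames zoo_sample.cfg to zoo.cfg, and points dataDir at zkData.
configure_zookeeper() {
  local zk_home="$1"
  local cfg="$zk_home/conf/zoo.cfg"
  mkdir -p "$zk_home/zkData" || return 1
  # Rename the sample file if it has not been renamed yet.
  if [ -f "$zk_home/conf/zoo_sample.cfg" ]; then
    mv "$zk_home/conf/zoo_sample.cfg" "$cfg" || return 1
  fi
  # Rewrite only the dataDir line; every other setting is left untouched.
  sed "s|^dataDir=.*|dataDir=$zk_home/zkData|" "$cfg" > "$cfg.tmp" && mv "$cfg.tmp" "$cfg"
}

# Assumed install path:
# configure_zookeeper /opt/module/zookeeper-3.5.9
```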

(3) Adding the ZooKeeper environment variables

The previous article defined a custom environment variable file; append to it.

Add a zoo_home entry.
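A sketch of the lines to append. The upper-case spelling ZOO_HOME and the install path are assumptions, and the name of the custom environment file comes from the earlier article, so it is not repeated here.

```shell
# Lines to append to the custom environment file.
# ZOO_HOME (upper case) and the install path are assumptions.
export ZOO_HOME=/opt/module/zookeeper-3.5.9
export PATH="$PATH:$ZOO_HOME/bin"
```

After saving the file, source it (or log in again) so the current shell picks up the new variables.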

(4) Starting ZooKeeper

Start the ZooKeeper server.

Start the ZooKeeper client.
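A sketch of the start-up commands, using the zkServer.sh and zkCli.sh scripts that ship in ZooKeeper's bin directory. `start_zookeeper` is a hypothetical wrapper and the path in the usage comment is an assumption.

```shell
# start_zookeeper ZK_HOME: start the server, then print its status
start_zookeeper() {
  local zk_home="$1"
  "$zk_home/bin/zkServer.sh" start && "$zk_home/bin/zkServer.sh" status
}

# Assumed install path:
# start_zookeeper /opt/module/zookeeper-3.5.9
# The client is interactive, so run it by hand once the server is up:
# /opt/module/zookeeper-3.5.9/bin/zkCli.sh
```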

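(5) Configuring the ZooKeeper cluster

The single-node setup is now complete. Next, distribute the files to nodes 103 and 104.

Remember to run source on nodes 103 and 104 as well.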
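One way to do the distribution, sketched with rsync. This assumes passwordless SSH, rsync on every node, and the host names flink103 and flink104 (only flink102 is named in this post); `distribute` is a hypothetical helper, and a distribution script from the earlier article would do the same job.

```shell
# distribute DIR HOST...: copy DIR to the same path on each host
distribute() {
  local dir="$1"
  shift
  local host
  for host in "$@"; do
    rsync -a "$dir/" "$host:$dir/" || return 1
  done
}

# Assumed path and host names:
# distribute /opt/module/zookeeper-3.5.9 flink103 flink104
```

The environment variable file has to be copied the same way before source on the other nodes has any effect.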

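Create a myid file in the zkData folder and edit it.

Distribute it to nodes 103 and 104.

Then change the id on 103 and 104 so that every node has a unique one.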
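A sketch of the myid step. The ids 2, 3 and 4 and the host names are assumptions, and both function names are hypothetical. This section does not show it, but a ZooKeeper ensemble also needs matching server.N entries in zoo.cfg on every node, so the sketch adds them.

```shell
# write_myid ZK_HOME ID: record this node's id in zkData/myid
write_myid() {
  local zk_home="$1" id="$2"
  mkdir -p "$zk_home/zkData" || return 1
  printf '%s\n' "$id" > "$zk_home/zkData/myid"
}

# add_servers ZK_HOME: append the ensemble members to zoo.cfg (once)
add_servers() {
  local cfg="$1/conf/zoo.cfg"
  # Do nothing if server entries are already present.
  if grep -q '^server\.' "$cfg" 2>/dev/null; then
    return 0
  fi
  # server.N must match the number stored in that host's myid file.
  cat >> "$cfg" <<'EOF'
server.2=flink102:2888:3888
server.3=flink103:2888:3888
server.4=flink104:2888:3888
EOF
}

# On flink102 (assumed path), before distributing:
# write_myid /opt/module/zookeeper-3.5.9 2
# add_servers /opt/module/zookeeper-3.5.9
# Afterwards set the id to 3 on flink103 and 4 on flink104.
```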

(6) Writing the ZooKeeper cluster script

cd into our scripts directory.

Create the cluster script there.
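The original script listing is not available here, so the following is only a sketch of what such a script typically looks like: it runs zkServer.sh on each node over SSH. The file name zk.sh, the host names, and the install path are all assumptions.

```shell
#!/usr/bin/env bash
# zk.sh: run zkServer.sh start|stop|status on every node over SSH.
# Host names and ZK_HOME are assumptions; passwordless SSH is required.
ZK_HOSTS="${ZK_HOSTS:-flink102 flink103 flink104}"
ZK_HOME="${ZK_HOME:-/opt/module/zookeeper-3.5.9}"

zk_cluster() {
  local action="$1" host
  case "$action" in
    start|stop|status)
      for host in $ZK_HOSTS; do
        echo "---------- zookeeper $host $action ----------"
        ssh "$host" "$ZK_HOME/bin/zkServer.sh $action"
      done
      ;;
    *)
      echo "Usage: zk.sh {start|stop|status}" >&2
      return 1
      ;;
  esac
}

# Only dispatch when an argument was given, so the file is safe to source.
if [ "$#" -gt 0 ]; then
  zk_cluster "$@"
fi
```

Usage would be `zk.sh start`, then `zk.sh status` to see which node became leader. A command run through `ssh host "..."` may not load the login profile, so if Java is not found on the remote side, the environment file may need to be sourced inside the remote command.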

Grant it execute permission.

Now try starting ZooKeeper on all nodes at once.

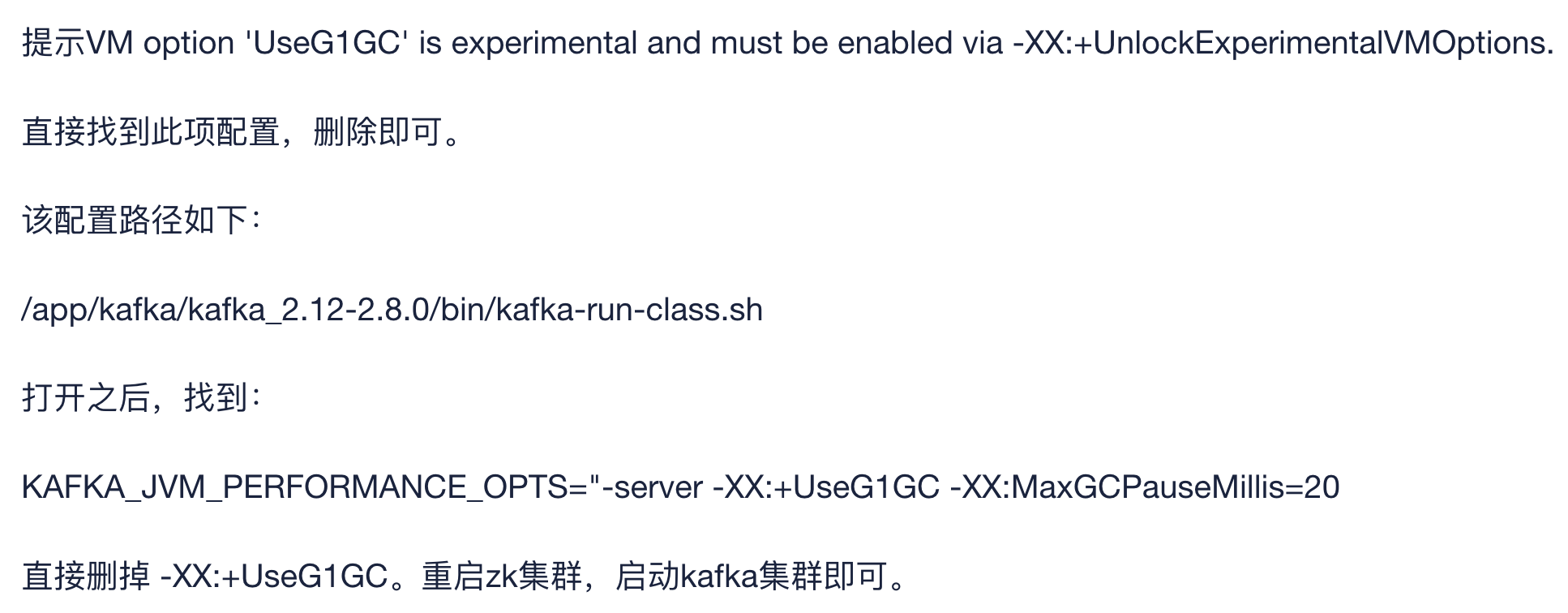

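II. Kafka installation and configuration

(1) Installing Kafka

I use kafka_2.11-2.4.1.tgz here, which can be downloaded from the official site.

As with ZooKeeper, place the package in the package directory.

(2) Editing the Kafka configuration file

Go into the kafka folder and look at the file structure.

Go into the config directory to edit the configuration file.

Edit server.properties with this node's settings.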
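The original listing of changes is not available here, so this sketch only sets the three properties a multi-broker setup normally needs: broker.id, log.dirs and zookeeper.connect. The data directory, the host names and the helper names `set_prop` and `configure_kafka` are assumptions.

```shell
# set_prop FILE KEY VALUE: replace an existing "KEY=" line or append one
set_prop() {
  local file="$1" key="$2" value="$3"
  if grep -q "^$key=" "$file"; then
    sed "s|^$key=.*|$key=$value|" "$file" > "$file.tmp" && mv "$file.tmp" "$file"
  else
    printf '%s=%s\n' "$key" "$value" >> "$file"
  fi
}

# configure_kafka KAFKA_HOME: settings for the first broker (flink102)
configure_kafka() {
  local kafka_home="$1"
  local props="$kafka_home/config/server.properties"
  set_prop "$props" broker.id 0 || return 1
  # Where the broker stores its partition data (assumed location).
  set_prop "$props" log.dirs "$kafka_home/data" || return 1
  # The ZooKeeper ensemble set up in the previous part (assumed host names).
  set_prop "$props" zookeeper.connect "flink102:2181,flink103:2181,flink104:2181"
}

# Assumed install path:
# configure_kafka /opt/module/kafka
```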

(3) Configuring the Kafka environment variables

Add the Kafka entries to the environment variable file.
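A sketch of the entries, assuming Kafka lives in /opt/module/kafka (the path used later in this post) and that the variable is called KAFKA_HOME.

```shell
# Lines to append to the custom environment file.
# KAFKA_HOME as the variable name is an assumption.
export KAFKA_HOME=/opt/module/kafka
export PATH="$PATH:$KAFKA_HOME/bin"
```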

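Remember to source it.

(4) Distributing to nodes 103 and 104

Remember to run source on every node, then go to /opt/module/kafka/config and edit server.properties, setting broker.id to 1 on 103 and 2 on 104.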
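The broker.id change can also be made without opening an editor. A small sketch; `set_broker_id` is a hypothetical helper.

```shell
# set_broker_id PROPS_FILE ID: rewrite the broker.id line in server.properties
set_broker_id() {
  local file="$1" id="$2"
  sed "s/^broker\.id=.*/broker.id=$id/" "$file" > "$file.tmp" && mv "$file.tmp" "$file"
}

# On node 103: set_broker_id /opt/module/kafka/config/server.properties 1
# On node 104: set_broker_id /opt/module/kafka/config/server.properties 2
```

Every broker in a cluster needs a distinct broker.id; two brokers with the same id cannot both register in ZooKeeper.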

(5) Kafka cluster start script

First try starting the Kafka service on node 102.
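A sketch of the single-node start, using the kafka-server-start.sh script that ships with Kafka and its -daemon option to run the broker in the background. `start_kafka` is a hypothetical wrapper.

```shell
# start_kafka KAFKA_HOME: launch the broker as a background daemon
start_kafka() {
  local kafka_home="$1"
  "$kafka_home/bin/kafka-server-start.sh" -daemon "$kafka_home/config/server.properties"
}

# On node 102:
# start_kafka /opt/module/kafka
```

ZooKeeper has to be running first, since the broker registers itself there on start-up.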

Write the Kafka cluster start script.
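The original script is not shown here; the following is a sketch of a typical version that starts or stops the broker on each node over SSH. The file name kf.sh and the host names are assumptions.

```shell
#!/usr/bin/env bash
# kf.sh: start or stop the Kafka broker on every node over SSH.
# Host names are assumptions; passwordless SSH is required.
KAFKA_HOSTS="${KAFKA_HOSTS:-flink102 flink103 flink104}"
KAFKA_HOME="${KAFKA_HOME:-/opt/module/kafka}"

kafka_cluster() {
  local action="$1" host cmd
  case "$action" in
    start)
      cmd="$KAFKA_HOME/bin/kafka-server-start.sh -daemon $KAFKA_HOME/config/server.properties"
      ;;
    stop)
      cmd="$KAFKA_HOME/bin/kafka-server-stop.sh"
      ;;
    *)
      echo "Usage: kf.sh {start|stop}" >&2
      return 1
      ;;
  esac
  for host in $KAFKA_HOSTS; do
    echo "---------- kafka $host $action ----------"
    ssh "$host" "$cmd"
  done
}

# Only dispatch when an argument was given, so the file is safe to source.
if [ "$#" -gt 0 ]; then
  kafka_cluster "$@"
fi
```

As with the ZooKeeper script, make it executable with chmod +x before the first run.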

Try starting Kafka on all nodes, then use jpsall to check the background processes.

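III. Flume installation and configuration

(1) Installing and configuring Flume

For Flume I chose 1.9.0, which can be downloaded from the official site.

Place the package in /opt/software.

Rename the extracted directory.

After installing Flume, delete one jar, the bundled lib/guava-11.0.2.jar, so that it is compatible with hadoop 3.1.3.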
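The Flume steps above can be sketched as one function. The archive name apache-flume-1.9.0-bin.tar.gz, the target name flume, and /opt/module as the install root are assumptions, and `install_flume` is a hypothetical helper. The jar removed is Flume 1.9.0's older Guava, which clashes with the newer Guava that Hadoop 3.x uses.

```shell
# install_flume ARCHIVE MODULE_DIR
# Extracts Flume, renames the folder to "flume", and removes the old Guava jar.
install_flume() {
  local archive="$1" module_dir="$2"
  mkdir -p "$module_dir" || return 1
  tar -xzf "$archive" -C "$module_dir" || return 1
  mv "$module_dir/apache-flume-1.9.0-bin" "$module_dir/flume" || return 1
  # -f keeps this quiet if the jar has already been removed.
  rm -f "$module_dir/flume/lib/guava-11.0.2.jar"
}

# Assumed locations:
# install_flume /opt/software/apache-flume-1.9.0-bin.tar.gz /opt/module
```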

At this point, all the tools needed for the data collection pipeline are installed.

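Author: z x z

Link: https://www.cnblogs.com/xiaolongbaoxiangfei/p/15800845.html

About the author: comments and private messages will be answered as soon as possible. Or just message me directly.

Copyright: unless otherwise stated, all articles on this blog are licensed under BY-NC-SA. Please credit the source when reposting!

Support the author: if you found this article helpful, you can click [Recommend] at the bottom right of the article. Your encouragement is my biggest motivation!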
