Deep Neural Network for Image Classification: Application

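Assignment overview

Using the functions completed in the previous assignment, we build a deep neural network and apply it to the cat classification problem. The resulting model is more accurate than the earlier logistic regression model.

Packages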

import time

import numpy as np

import h5py

import matplotlib.pyplot as plt

import scipy

from PIL import Image

from scipy import ndimage

from dnn_app_utils_v2 import *

#matplotlib inline

plt.rcParams['figure.figsize'] = (5.0, 4.0) # set default size of plots

plt.rcParams['image.interpolation'] = 'nearest'

plt.rcParams['image.cmap'] = 'gray'

#load_ext autoreload

#autoreload 2

np.random.seed(1)

Dataset

We use the same dataset as in the logistic regression cat classification problem:

train_x_orig, train_y, test_x_orig, test_y, classes = load_data()

# Example of a picture

index = 7

plt.imshow(train_x_orig[index])

print ("y = " + str(train_y[0,index]) + ". It's a " + classes[train_y[0,index]].decode("utf-8") + " picture.")

plt.show()

Output:

y = 1. It's a cat picture.

Dataset details:

# Explore your dataset

m_train = train_x_orig.shape[0]

num_px = train_x_orig.shape[1]

m_test = test_x_orig.shape[0]

print ("Number of training examples: " + str(m_train))

print ("Number of testing examples: " + str(m_test))

print ("Each image is of size: (" + str(num_px) + ", " + str(num_px) + ", 3)")

print ("train_x_orig shape: " + str(train_x_orig.shape))

print ("train_y shape: " + str(train_y.shape))

print ("test_x_orig shape: " + str(test_x_orig.shape))

print ("test_y shape: " + str(test_y.shape))

Output:

Number of training examples: 209

Number of testing examples: 50

Each image is of size: (64, 64, 3)

train_x_orig shape: (209, 64, 64, 3)

train_y shape: (1, 209)

test_x_orig shape: (50, 64, 64, 3)

test_y shape: (1, 50)

Dataset preprocessing:

# Reshape the training and test examples

train_x_flatten = train_x_orig.reshape(train_x_orig.shape[0], -1).T # The "-1" makes reshape flatten the remaining dimensions

test_x_flatten = test_x_orig.reshape(test_x_orig.shape[0], -1).T

# Standardize data to have feature values between 0 and 1.

train_x = train_x_flatten/255.

test_x = test_x_flatten/255.

print ("train_x's shape: " + str(train_x.shape))

print ("test_x's shape: " + str(test_x.shape))

Output:

train_x's shape: (12288, 209)

test_x's shape: (12288, 50)
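To make the reshape step concrete, here is a small sketch on a synthetic batch. The array `batch` and its sizes are made up for illustration and are not part of the dataset. It shows that `reshape(m, -1).T` turns each image into one column, and that dividing by 255 maps pixel values into [0, 1].

```python
import numpy as np

# Hypothetical mini-batch: 3 RGB images of 4x4 pixels, values in 0..255.
rng = np.random.default_rng(0)
batch = rng.integers(0, 256, size=(3, 4, 4, 3)).astype(np.uint8)

# Flatten each image into a column vector: (m, h, w, c) -> (h*w*c, m).
flat = batch.reshape(batch.shape[0], -1).T

# Scale pixel values into [0, 1].
scaled = flat / 255.0

print(flat.shape)  # (48, 3)
# Column i holds exactly the pixels of image i, in row-major order.
print(np.array_equal(flat[:, 1], batch[1].ravel()))  # True
print(scaled.min() >= 0.0, scaled.max() <= 1.0)  # True True
```

The same arithmetic gives 64 * 64 * 3 = 12288 rows for the real images, which matches the shapes printed above.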

构建模型

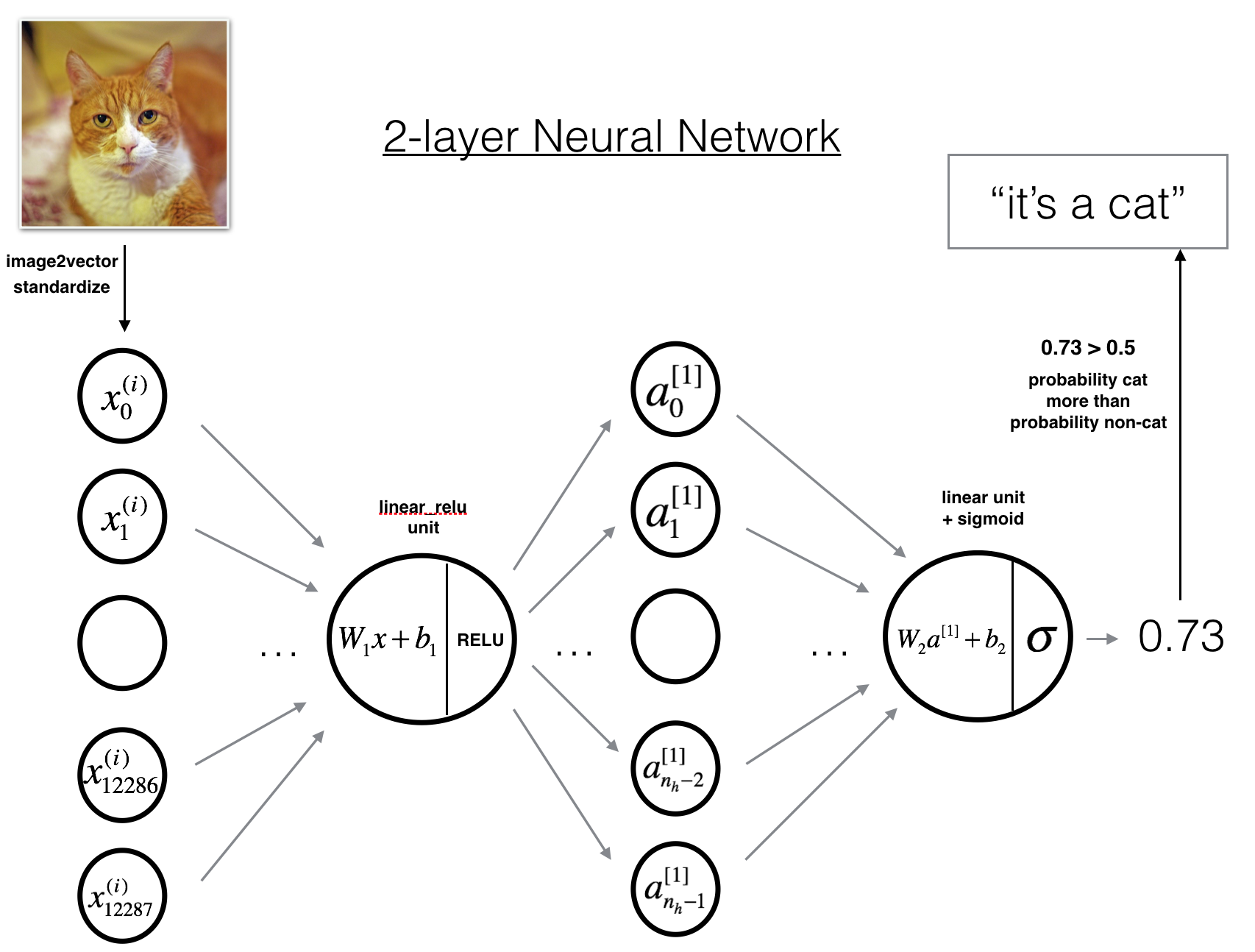

两层网络

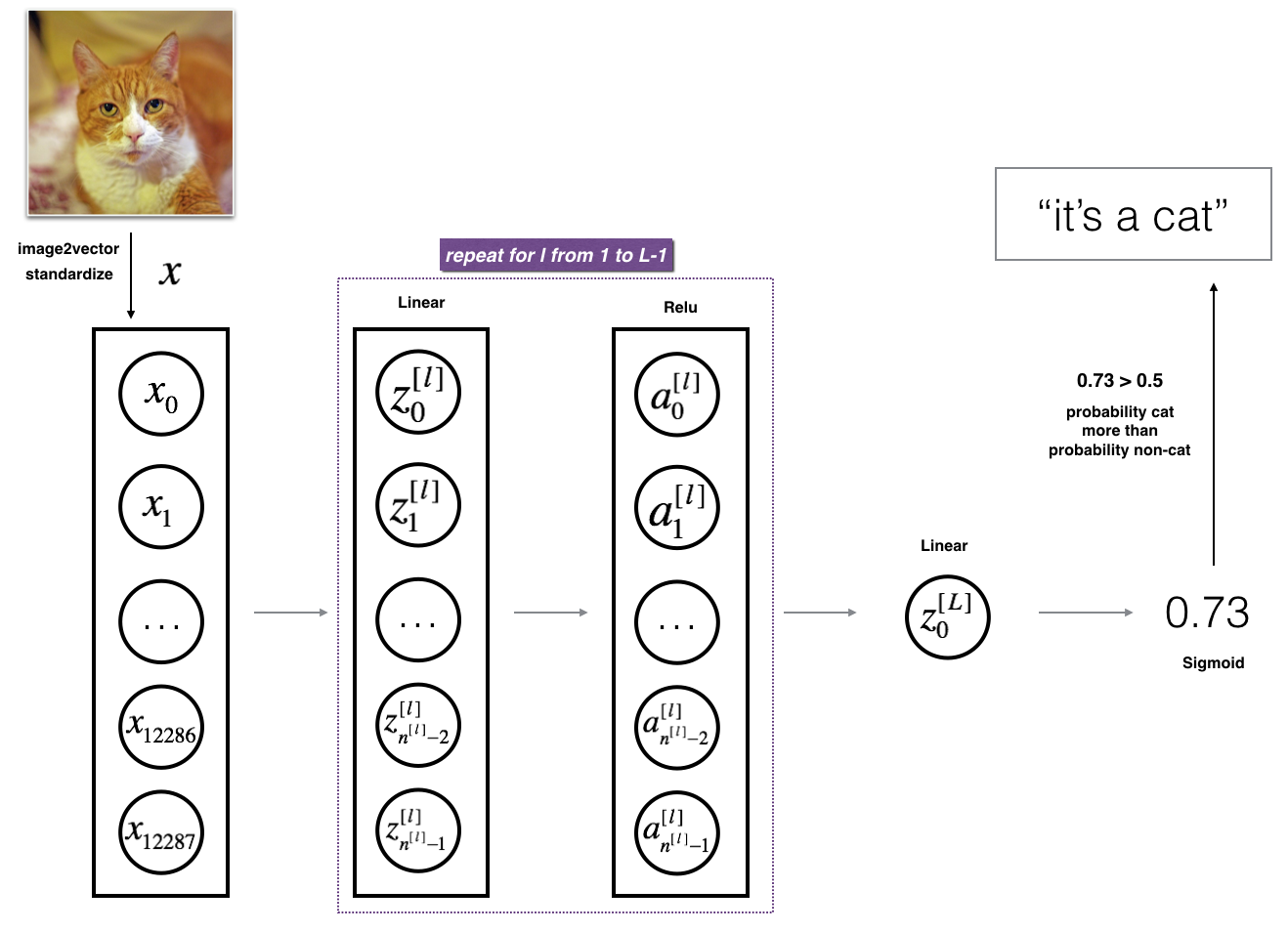

L层网络

基本流程

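- Initialize the parameters and define the hyperparameters

- Loop for the given number of iterations:

    - Forward propagation

    - Compute the cost function

    - Backward propagation

    - Update the parameters

- Use the trained parameters to make predictions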
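The steps above can be sketched on a much smaller problem. The following is a minimal illustration with a single sigmoid unit and made-up toy data. It does not use the assignment's helper functions, and `train_toy` is a hypothetical name; it only shows where each step sits in the loop.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train_toy(X, Y, learning_rate=0.5, num_iterations=500):
    """Gradient descent for one sigmoid unit; X is (n_x, m), Y is (1, m)."""
    n_x, m = X.shape
    # Initialize parameters.
    W = np.zeros((1, n_x))
    b = 0.0
    costs = []
    # Loop for a fixed number of iterations.
    for i in range(num_iterations):
        # Forward propagation.
        A = sigmoid(W @ X + b)
        # Cross-entropy cost.
        cost = -np.mean(Y * np.log(A) + (1 - Y) * np.log(1 - A))
        # Backward propagation.
        dZ = A - Y
        dW = dZ @ X.T / m
        db = np.sum(dZ) / m
        # Parameter update.
        W -= learning_rate * dW
        b -= learning_rate * db
        costs.append(cost)
    return W, b, costs

# Toy data: the label is 1 when the first feature exceeds the second.
rng = np.random.default_rng(1)
X = rng.normal(size=(2, 200))
Y = (X[0] > X[1]).astype(float).reshape(1, -1)

W, b, costs = train_toy(X, Y)
# Predict with the trained parameters.
predictions = (sigmoid(W @ X + b) > 0.5).astype(float)
print("final cost below initial cost:", costs[-1] < costs[0])
print("training accuracy:", np.mean(predictions == Y))
```

The two-layer and L-layer models follow the same loop; only the forward and backward passes grow with the number of layers.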

Two-layer neural network implementation

# GRADED FUNCTION: two_layer_model

def two_layer_model(X, Y, layers_dims, learning_rate = 0.0075, num_iterations = 3000, print_cost=False):

np.random.seed(1)

grads = {}

costs = [] # to keep track of the cost

m = X.shape[1] # number of examples

(n_x, n_h, n_y) = layers_dims

# Initialize parameters dictionary, by calling one of the functions you'd previously implemented

parameters = initialize_parameters(n_x, n_h, n_y)

# Get W1, b1, W2 and b2 from the dictionary parameters.

W1 = parameters["W1"]

b1 = parameters["b1"]

W2 = parameters["W2"]

b2 = parameters["b2"]

# Loop (gradient descent)

for i in range(0, num_iterations):

# Forward propagation: LINEAR -> RELU -> LINEAR -> SIGMOID. Inputs: "X, W1, b1". Output: "A1, cache1, A2, cache2".

A1, cache1 = linear_activation_forward(X, W1, b1, "relu")

A2, cache2 = linear_activation_forward(A1, W2, b2, "sigmoid")

# Compute cost

cost = compute_cost(A2, Y)

# Initializing backward propagation

dA2 = - (np.divide(Y, A2) - np.divide(1 - Y, 1 - A2))

# Backward propagation. Inputs: "dA2, cache2, cache1". Outputs: "dA1, dW2, db2; also dA0 (not used), dW1, db1".

dA1, dW2, db2 = linear_activation_backward(dA2, cache2, "sigmoid")

dA0, dW1, db1 = linear_activation_backward(dA1, cache1, "relu")

# Set grads['dWl'] to dW1, grads['db1'] to db1, grads['dW2'] to dW2, grads['db2'] to db2

grads['dW1'] = dW1

grads['db1'] = db1

grads['dW2'] = dW2

grads['db2'] = db2

# Update parameters.

parameters = update_parameters(parameters, grads, learning_rate)

# Retrieve W1, b1, W2, b2 from parameters

W1 = parameters["W1"]

b1 = parameters["b1"]

W2 = parameters["W2"]

b2 = parameters["b2"]

# Print the cost every 100 training example

if print_cost and i % 100 == 0:

print("Cost after iteration {}: {}".format(i, np.squeeze(cost)))

if print_cost and i % 100 == 0:

costs.append(cost)

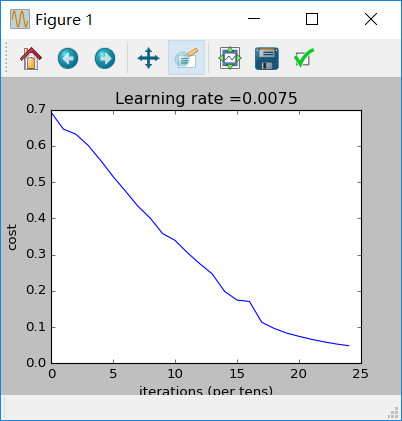

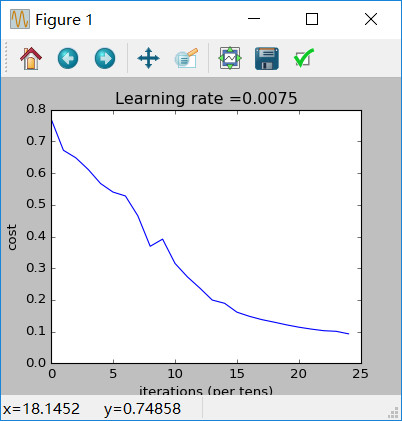

# plot the cost

plt.plot(np.squeeze(costs))

plt.ylabel('cost')

plt.xlabel('iterations (per tens)')

plt.title("Learning rate =" + str(learning_rate))

plt.show()

return parameters
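The expression used to initialize `dA2` comes from differentiating the cross-entropy loss with respect to the output activation. The source of `compute_cost` is not shown in this post, so the sketch below assumes it implements the usual binary cross-entropy. For a single example with label y and output activation a:

```latex
\begin{align}
\mathcal{L}(a, y) &= -\bigl( y \log a + (1 - y) \log (1 - a) \bigr) \\
\frac{\partial \mathcal{L}}{\partial a} &= -\left( \frac{y}{a} - \frac{1 - y}{1 - a} \right)
\end{align}
```

Applied element-wise to `Y` and `A2`, the second line is exactly `- (np.divide(Y, A2) - np.divide(1 - Y, 1 - A2))`. The 1/m factor of the averaged cost does not appear here; it is presumably applied inside the backward helper when `dW` and `db` are computed.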

Test:

n_x = 12288 # num_px * num_px * 3

n_h = 7

n_y = 1

layers_dims = (n_x, n_h, n_y)

parameters = two_layer_model(train_x, train_y, layers_dims = (n_x, n_h, n_y), num_iterations = 2500, print_cost=True)

Output:

Cost after iteration 0: 0.6930497356599888

Cost after iteration 100: 0.6464320953428849

Cost after iteration 200: 0.6325140647912678

Cost after iteration 300: 0.6015024920354665

Cost after iteration 400: 0.5601966311605747

Cost after iteration 500: 0.515830477276473

Cost after iteration 600: 0.4754901313943325

Cost after iteration 700: 0.4339163151225749

Cost after iteration 800: 0.400797753620389

Cost after iteration 900: 0.3580705011323798

Cost after iteration 1000: 0.3394281538366412

Cost after iteration 1100: 0.3052753636196263

Cost after iteration 1200: 0.27491377282130175

Cost after iteration 1300: 0.2468176821061483

Cost after iteration 1400: 0.19850735037466116

Cost after iteration 1500: 0.17448318112556632

Cost after iteration 1600: 0.17080762978096647

Cost after iteration 1700: 0.1130652456216472

Cost after iteration 1800: 0.09629426845937152

Cost after iteration 1900: 0.08342617959726863

Cost after iteration 2000: 0.07439078704319081

Cost after iteration 2100: 0.06630748132267934

Cost after iteration 2200: 0.05919329501038171

Cost after iteration 2300: 0.053361403485605544

Cost after iteration 2400: 0.04855478562877018

Training set accuracy:

predictions_train = predict(train_x, train_y, parameters)

Output:

Accuracy: 1.0

Test set accuracy:

predictions_test = predict(test_x, test_y, parameters)

Output:

Accuracy: 0.72
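`predict` comes from `dnn_app_utils_v2` and its source is not reproduced in this post. A minimal sketch of what such a function presumably does after the forward pass, thresholding the output probabilities at 0.5 and reporting the fraction of matching labels, is shown below. The name `predict_from_probs` is hypothetical, and it takes probabilities directly instead of running the network.

```python
import numpy as np

def predict_from_probs(probs, y):
    """Threshold probabilities of shape (1, m) at 0.5; return (labels, accuracy)."""
    labels = (probs > 0.5).astype(float)
    accuracy = float(np.mean(labels == y))
    return labels, accuracy

# Made-up output probabilities and true labels for 5 examples.
probs = np.array([[0.9, 0.2, 0.7, 0.4, 0.6]])
y = np.array([[1, 0, 0, 0, 1]])

labels, accuracy = predict_from_probs(probs, y)
print(labels)    # [[1. 0. 1. 0. 1.]]
print(accuracy)  # 0.8
```

Read this way, a training accuracy of 1.0 next to a test accuracy of 0.72 suggests that the two-layer model fits the 209 training images far better than it generalizes.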

L-layer neural network implementation

layers_dims = [12288, 20, 7, 5, 1] # 4-layer model (the input layer is not counted)

# GRADED FUNCTION: L_layer_model

def L_layer_model(X, Y, layers_dims, learning_rate = 0.0075, num_iterations = 3000, print_cost=False): # lr was 0.009
    np.random.seed(1)
    costs = [] # keep track of cost

    # Parameters initialization.
    parameters = initialize_parameters_deep(layers_dims)

    # Loop (gradient descent)
    for i in range(0, num_iterations):

        # Forward propagation: [LINEAR -> RELU]*(L-1) -> LINEAR -> SIGMOID.
        AL, caches = L_model_forward(X, parameters)

        # Compute cost.
        cost = compute_cost(AL, Y)

        # Backward propagation.
        grads = L_model_backward(AL, Y, caches)

        # Update parameters.
        parameters = update_parameters(parameters, grads, learning_rate)

        # Print and record the cost every 100 iterations
        if print_cost and i % 100 == 0:
            print ("Cost after iteration %i: %f" %(i, cost))
            costs.append(cost)

    # plot the cost (one point per 100 iterations)
    plt.plot(np.squeeze(costs))
    plt.ylabel('cost')
    plt.xlabel('iterations (per hundreds)')
    plt.title("Learning rate =" + str(learning_rate))
    plt.show()

    return parameters
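To see what `layers_dims` implies for the size of the model, the following sketch lists the shape of each weight matrix and bias vector. It assumes the common convention that `W[l]` has shape `(layers_dims[l], layers_dims[l-1])` and `b[l]` has shape `(layers_dims[l], 1)`. `initialize_parameters_deep` is not shown in this post, so that convention is an assumption, and `parameter_shapes` is a hypothetical helper.

```python
def parameter_shapes(layers_dims):
    """Return a dict of parameter name -> shape for a fully connected network."""
    shapes = {}
    for l in range(1, len(layers_dims)):
        shapes["W" + str(l)] = (layers_dims[l], layers_dims[l - 1])
        shapes["b" + str(l)] = (layers_dims[l], 1)
    return shapes

layers_dims = [12288, 20, 7, 5, 1]
shapes = parameter_shapes(layers_dims)
for name, shape in shapes.items():
    print(name, shape)

# Count every entry of every W and b.
total = sum(rows * cols for rows, cols in shapes.values())
print("trainable parameters:", total)  # 245973
```

Almost all of the parameters sit in `W1`, because the input has 12288 features while the hidden layers are small.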

Test:

parameters = L_layer_model(train_x, train_y, layers_dims, num_iterations = 2500, print_cost = True)

Output:

Cost after iteration 0: 0.771749

Cost after iteration 100: 0.672053

Cost after iteration 200: 0.648263

Cost after iteration 300: 0.611507

Cost after iteration 400: 0.567047

Cost after iteration 500: 0.540138

Cost after iteration 600: 0.527930

Cost after iteration 700: 0.465477

Cost after iteration 800: 0.369126

Cost after iteration 900: 0.391747

Cost after iteration 1000: 0.315187

Cost after iteration 1100: 0.272700

Cost after iteration 1200: 0.237419

Cost after iteration 1300: 0.199601

Cost after iteration 1400: 0.189263

Cost after iteration 1500: 0.161189

Cost after iteration 1600: 0.148214

Cost after iteration 1700: 0.137775

Cost after iteration 1800: 0.129740

Cost after iteration 1900: 0.121225

Cost after iteration 2000: 0.113821

Cost after iteration 2100: 0.107839

Cost after iteration 2200: 0.102855

Cost after iteration 2300: 0.100897

Cost after iteration 2400: 0.092878

Training set accuracy:

pred_train = predict(train_x, train_y, parameters)

Output:

Accuracy: 0.985645933014

Test set accuracy:

pred_test = predict(test_x, test_y, parameters)

Output:

Accuracy: 0.8

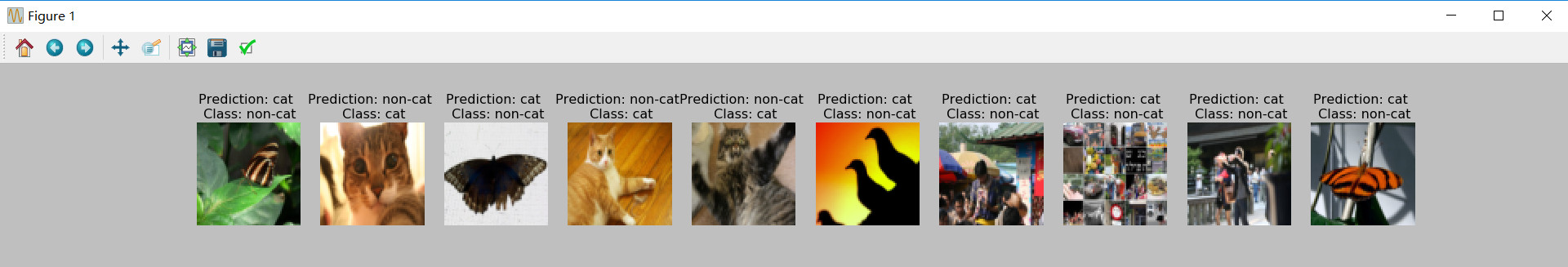

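Result analysis:

Let us look at the images that the L-layer network misclassified.

- The causes of misclassification can be summarized as:

    - The cat's body is in an unusual pose

    - The cat's color is very similar to the background

    - Unusual cat colors and breeds

    - The camera angle

    - The brightness of the photo

    - The cat appears too small or too large in the frame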
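Finding the misclassified images comes down to comparing the prediction vector with the label vector. The sketch below uses made-up arrays in the same `(1, m)` layout as `pred_test` and `test_y`; the names `y_true` and `y_pred` are illustrative and are not the variables from this post.

```python
import numpy as np

# Made-up labels and predictions for 6 test examples, shape (1, m).
y_true = np.array([[1, 0, 1, 1, 0, 0]])
y_pred = np.array([[1, 1, 1, 0, 0, 0]])

# Column indices where prediction and label disagree.
mislabeled = np.flatnonzero(y_pred[0] != y_true[0])
print(mislabeled)  # [1 3]

for i in mislabeled:
    print("example", i, "predicted", y_pred[0, i], "actual", y_true[0, i])
```

With the real data, each index in `mislabeled` could then be used to reshape the corresponding column of `test_x` back to `(64, 64, 3)` and display it.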

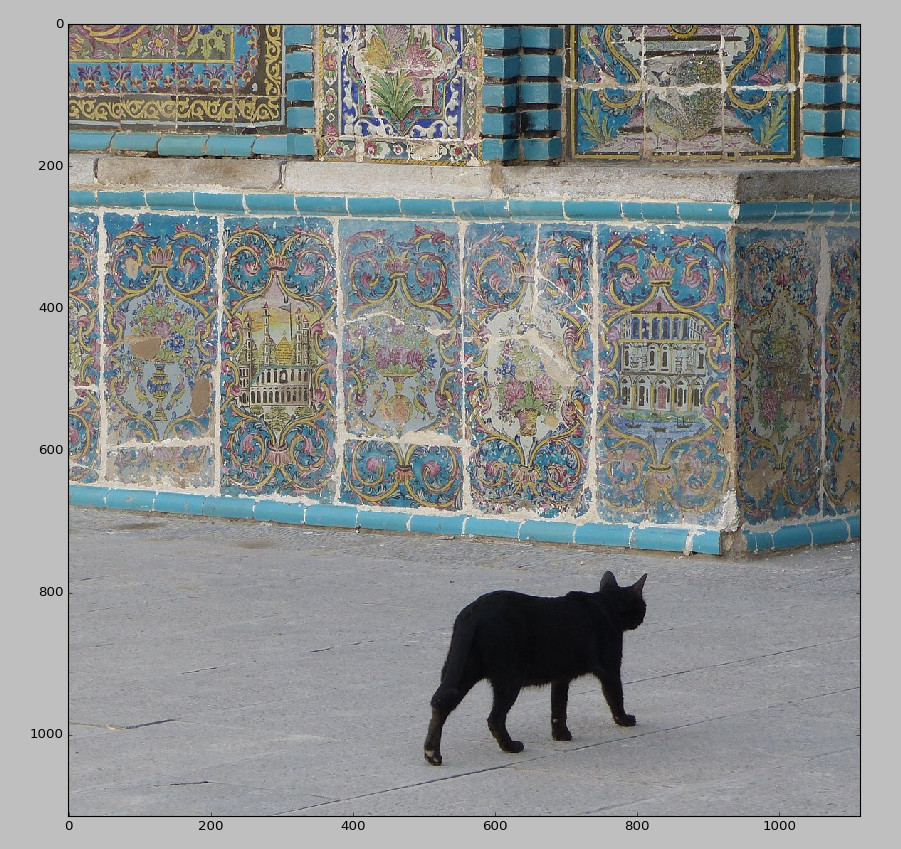

Testing with your own image

my_image = "cat.jpg" # change this to the name of your image file
my_label_y = [1] # the true class of your image (1 -> cat, 0 -> non-cat)

fname = "D:/Anaconda342/assignment4/images/" + my_image

# scipy.ndimage.imread and scipy.misc.imresize were removed from recent SciPy releases, so PIL (imported above) is used instead
image = np.array(Image.open(fname).convert("RGB"))
my_image = np.array(Image.fromarray(image).resize((num_px, num_px))).reshape((num_px*num_px*3, 1))
my_image = my_image / 255. # scale to [0, 1], matching the training data

my_predicted_image = predict(my_image, my_label_y, parameters)

plt.imshow(image)
print ("y = " + str(np.squeeze(my_predicted_image)) + ", your L-layer model predicts a \"" + classes[int(np.squeeze(my_predicted_image))].decode("utf-8") + "\" picture.")
plt.show()

Result:

y = 1.0, your L-layer model predicts a "cat" picture.
