Spark Core Source Code Analysis, Part 8: DAGScheduler Principles and Source Walkthrough

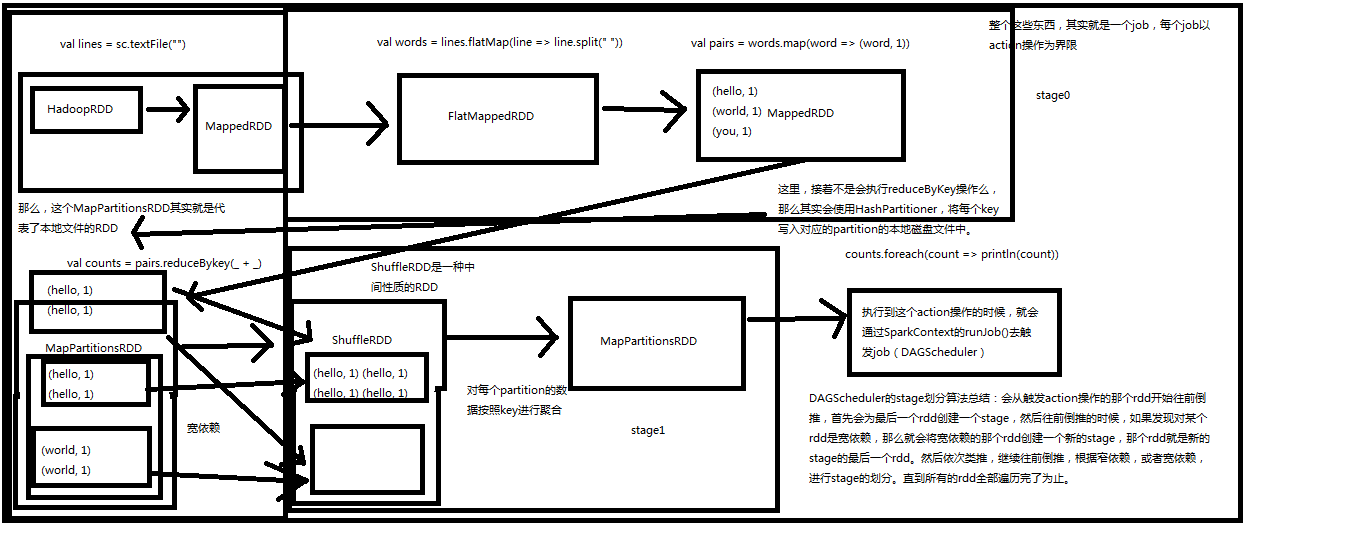

The diagram above shows how the DAGScheduler works.

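Key point: the DAGScheduler starts dividing stages at the RDD on which the action was triggered and then walks backward through its lineage. If a parent RDD is reached through a narrow dependency, it is placed in the same stage; if it is reached through a wide (shuffle) dependency, a new stage is created.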
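The backward walk can be sketched with a toy lineage graph. This is only an illustration under stated assumptions: `Node`, `Narrow`, `Wide`, and `splitStages` are hypothetical names, not Spark classes, and the sketch assumes a tree-shaped lineage (no RDD shared by two children).

```scala
object StageSplitSketch {
  // Hypothetical toy model of a lineage graph; these are not Spark classes.
  sealed trait Dep { def parent: Node }
  final case class Narrow(parent: Node) extends Dep // e.g. map, filter
  final case class Wide(parent: Node) extends Dep   // e.g. reduceByKey (shuffle)
  final case class Node(name: String, deps: List[Dep] = Nil)

  /** Walks backward from `last` and returns the RDD names of each stage, final stage first. */
  def splitStages(last: Node): List[List[String]] = {
    // Collects the RDDs of one stage plus the RDDs that end its parent stages.
    def collect(root: Node): (List[String], List[Node]) = {
      var names = List.empty[String]
      var boundaries = List.empty[Node]
      var todo = List(root)
      while (todo.nonEmpty) {
        val n = todo.head
        todo = todo.tail
        names = n.name :: names
        n.deps.foreach {
          case Narrow(p) => todo = p :: todo             // narrow: same stage
          case Wide(p)   => boundaries = p :: boundaries // wide: start a new stage
        }
      }
      (names.reverse, boundaries.reverse)
    }
    val (names, parents) = collect(last)
    names :: parents.flatMap(splitStages)
  }

  // textFile -> map -> reduceByKey -> map, with an action on the last RDD
  val textFile = Node("textFile")
  val pairs    = Node("pairs", List(Narrow(textFile)))
  val counts   = Node("counts", List(Wide(pairs)))
  val result   = Node("result", List(Narrow(counts)))
  val stages   = splitStages(result)
}
```

With this lineage the sketch yields two stages: `result` and `counts` in the final stage, and `pairs` and `textFile` in the parent stage, because the only wide dependency sits between `counts` and `pairs`.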

DAGScheduler

runJob

submitJob

eventProcessLoop.post

JobSubmitted

handleJobSubmitted

finalStage = newStage
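The call chain above hands the job over through an event queue rather than calling the handler directly. A minimal sketch of that pattern follows, assuming simplified event types: `Scheduler`, `processPending`, and the `log` buffer are hypothetical, and the real `JobSubmitted` event carries the RDD, the function, the partitions, and more. In Spark the loop runs on its own thread; here it is drained by hand so the example stays deterministic.

```scala
import scala.collection.mutable

object EventLoopSketch {
  // Hypothetical, simplified event types.
  sealed trait SchedulerEvent
  final case class JobSubmitted(jobId: Int) extends SchedulerEvent
  final case class StageCancelled(stageId: Int) extends SchedulerEvent

  class Scheduler {
    private val queue = mutable.Queue.empty[SchedulerEvent]
    val log = mutable.ArrayBuffer.empty[String]
    private var nextJobId = 0

    // runJob -> submitJob: allocate a job id and post an event
    // instead of doing the scheduling work inline.
    def submitJob(): Int = {
      val jobId = nextJobId
      nextJobId += 1
      post(JobSubmitted(jobId))
      jobId
    }

    def post(event: SchedulerEvent): Unit = queue.enqueue(event)

    // Drains the queue; stands in for the dedicated event-loop thread.
    def processPending(): Unit =
      while (queue.nonEmpty) onReceive(queue.dequeue())

    // Dispatches each event to its handler.
    private def onReceive(event: SchedulerEvent): Unit = event match {
      case JobSubmitted(jobId)     => handleJobSubmitted(jobId)
      case StageCancelled(stageId) => log += s"cancel stage $stageId"
    }

    private def handleJobSubmitted(jobId: Int): Unit =
      log += s"handleJobSubmitted($jobId)"
  }
}
```

Posting an event returns immediately; the handler only runs when the loop picks the event up, which keeps all scheduling state changes on a single consumer.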

    private[scheduler] def handleJobSubmitted(jobId: Int,
        finalRDD: RDD[_],
        func: (TaskContext, Iterator[_]) => _,
        partitions: Array[Int],
        allowLocal: Boolean,
        callSite: CallSite,
        listener: JobListener,
        properties: Properties = null)
    {
      var finalStage: Stage = null
      try {
        // New stage creation may throw an exception if, for example, jobs are run on a
        // HadoopRDD whose underlying HDFS files have been deleted.
        // Create the final stage and add it to the DAGScheduler's caches.
        finalStage = newStage(finalRDD, partitions.size, None, jobId, callSite)
      } catch {
        case e: Exception =>
          logWarning("Creating new stage failed due to exception - job: " + jobId, e)
          listener.jobFailed(e)
          return
      }
      if (finalStage != null) {
        // Create the job around the final stage: the job is represented by its last stage.
        val job = new ActiveJob(jobId, finalStage, func, partitions, callSite, listener, properties)
        clearCacheLocs()
        logInfo("Got job %s (%s) with %d output partitions (allowLocal=%s)".format(
          job.jobId, callSite.shortForm, partitions.length, allowLocal))
        logInfo("Final stage: " + finalStage + "(" + finalStage.name + ")")
        logInfo("Parents of final stage: " + finalStage.parents)
        logInfo("Missing parents: " + getMissingParentStages(finalStage))
        val shouldRunLocally =
          localExecutionEnabled && allowLocal && finalStage.parents.isEmpty && partitions.length == 1
        val jobSubmissionTime = clock.getTimeMillis()
        if (shouldRunLocally) {
          // Compute very short actions like first() or take() with no parent stages locally.
          listenerBus.post(
            SparkListenerJobStart(job.jobId, jobSubmissionTime, Seq.empty, properties))
          runLocally(job)
        } else {
          // Register the job in the in-memory caches.
          jobIdToActiveJob(jobId) = job
          activeJobs += job
          finalStage.resultOfJob = Some(job)
          val stageIds = jobIdToStageIds(jobId).toArray
          val stageInfos = stageIds.flatMap(id => stageIdToStage.get(id).map(_.latestInfo))
          listenerBus.post(
            SparkListenerJobStart(job.jobId, jobSubmissionTime, stageInfos, properties))
          // Submit the final stage. This causes the earliest stage to be submitted first,
          // while every other stage is carved out and placed in the waitingStages queue.
          submitStage(finalStage)
          // The stage-division algorithm matters: you need to know how many jobs your Spark
          // application is split into, how many stages each job has, and which code each
          // stage contains, so that a problem can be traced to the right stage.
        }
      }
      submitWaitingStages()
    }

    /** Submits stage, but first recursively submits any missing parents. */
    private def submitStage(stage: Stage) {
      val jobId = activeJobForStage(stage)
      if (jobId.isDefined) {
        logDebug("submitStage(" + stage + ")")
        if (!waitingStages(stage) && !runningStages(stage) && !failedStages(stage)) {
          // Look up the missing parent stages. submitStage and getMissingParentStages together
          // form the stage-division algorithm: submitStage calls itself recursively on every
          // missing parent and adds the current stage to waitingStages, repeating until it
          // reaches a stage that has no missing parents.
          val missing = getMissingParentStages(stage).sortBy(_.id)
          logDebug("missing: " + missing)
          if (missing == Nil) {
            logInfo("Submitting " + stage + " (" + stage.rdd + "), which has no missing parents")
            // Create and submit the tasks for this stage.
            submitMissingTasks(stage, jobId.get)
          } else {
            for (parent <- missing) {
              submitStage(parent)
            }
            waitingStages += stage
          }
        }
      } else {
        abortStage(stage, "No active job for stage " + stage.id)
      }
    }
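The recursion in `submitStage` can be hard to picture, so here is a toy version that records the order in which stages actually start. It is a sketch under stated assumptions: `ToyStage`, `finish`, and the `submitted` buffer are hypothetical, `finish` stands in for a stage-completion event followed by `submitWaitingStages()`, and failed stages are ignored.

```scala
import scala.collection.mutable

object SubmitStageSketch {
  // Hypothetical stand-in for Stage: an id, parent stages, and an availability flag.
  final class ToyStage(val id: Int, val parents: List[ToyStage]) {
    var available = false
    override def toString: String = s"Stage$id"
  }

  val waiting   = mutable.LinkedHashSet.empty[ToyStage]
  val running   = mutable.LinkedHashSet.empty[ToyStage]
  val submitted = mutable.ArrayBuffer.empty[Int] // order in which stages launched tasks

  def missingParents(stage: ToyStage): List[ToyStage] =
    stage.parents.filterNot(_.available).sortBy(_.id)

  def submitStage(stage: ToyStage): Unit =
    if (!waiting(stage) && !running(stage)) {
      val missing = missingParents(stage)
      if (missing.isEmpty) {
        running += stage
        submitted += stage.id        // stands in for submitMissingTasks
      } else {
        missing.foreach(submitStage) // recurse toward the earliest stages
        waiting += stage             // retried once the parents finish
      }
    }

  // Marks a stage as done and retries everything that was waiting.
  def finish(stage: ToyStage): Unit = {
    stage.available = true
    running -= stage
    val retry = waiting.toList.sortBy(_.id)
    waiting.clear()
    retry.foreach(submitStage)
  }
}
```

For a chain Stage0 <- Stage1 <- Stage2, calling `submitStage` on Stage2 launches only Stage0 and leaves Stage1 and Stage2 waiting; each call to `finish` then releases the next stage in the chain.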

    // Gets the missing parent stages of a stage. If all dependencies of an RDD are narrow,
    // no new stage is created. If a dependency of the stage's RDD is a wide dependency,
    // a ShuffleMapStage is created (or looked up) for it and the traversal stops along that branch.
    private def getMissingParentStages(stage: Stage): List[Stage] = {
      val missing = new HashSet[Stage]
      val visited = new HashSet[RDD[_]]
      // We are manually maintaining a stack here to prevent StackOverflowError
      // caused by recursively visiting
      // Stack of RDDs that still have to be visited.
      val waitingForVisit = new Stack[RDD[_]]
      def visit(rdd: RDD[_]) {
        if (!visited(rdd)) {
          visited += rdd
          if (getCacheLocs(rdd).contains(Nil)) {
            for (dep <- rdd.dependencies) {
              dep match {
                // Wide dependency: get the ShuffleMapStage for it and add it to the set if
                // its output is not yet available. By default the last stage is the final stage.
                // According to the author, every shuffle operation, such as groupByKey,
                // reduceByKey, or countByKey, maps to three RDDs underneath:
                // MapPartitionsRDD, ShuffledRDD, MapPartitionsRDD.
                case shufDep: ShuffleDependency[_, _, _] =>
                  val mapStage = getShuffleMapStage(shufDep, stage.jobId)
                  if (!mapStage.isAvailable) {
                    missing += mapStage
                  }
                // Narrow dependency: push the parent RDD onto the stack.
                case narrowDep: NarrowDependency[_] =>
                  waitingForVisit.push(narrowDep.rdd)
              }
            }
          }
        }
      }
      waitingForVisit.push(stage.rdd)
      while (!waitingForVisit.isEmpty) {
        visit(waitingForVisit.pop())
      }
      missing.toList
    }
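The explicit-stack traversal in `getMissingParentStages` can be reproduced on a toy graph. This is a simplified sketch, not Spark's code: `ToyRdd`, `NarrowDep`, and `ShuffleDep` are hypothetical types, the `outputAvailable` flag stands in for `mapStage.isAvailable`, and the `getCacheLocs` check is left out.

```scala
object MissingParentsSketch {
  // Hypothetical toy types, not Spark's RDD/Dependency classes.
  sealed trait Dep { def rdd: ToyRdd }
  final case class NarrowDep(rdd: ToyRdd) extends Dep
  // `outputAvailable` models mapStage.isAvailable for the stage that ends at `rdd`.
  final case class ShuffleDep(rdd: ToyRdd, outputAvailable: Boolean) extends Dep
  final case class ToyRdd(name: String, deps: List[Dep] = Nil)

  /** Names of the RDDs that end a missing parent stage of the stage ending at `stageRdd`. */
  def missingParents(stageRdd: ToyRdd): Set[String] = {
    var missing = Set.empty[String]
    var visited = Set.empty[String]
    var stack = List(stageRdd) // explicit stack instead of recursion
    while (stack.nonEmpty) {
      val rdd = stack.head
      stack = stack.tail
      if (!visited(rdd.name)) {
        visited += rdd.name
        rdd.deps.foreach {
          case ShuffleDep(parent, available) =>
            // Stage boundary: record the parent stage, do not walk past it.
            if (!available) missing += parent.name
          case NarrowDep(parent) =>
            // Same stage: keep walking backward.
            stack = parent :: stack
        }
      }
    }
    missing
  }
}
```

Only shuffle dependencies whose output is not yet available are reported, so a parent stage that already finished is skipped and the current stage can run as soon as the remaining ones complete.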

    /** Called when stage's parents are available and we can now do its task. */
    private def submitMissingTasks(stage: Stage, jobId: Int) {
      logDebug("submitMissingTasks(" + stage + ")")
      // Get our pending tasks and remember them in our pendingTasks entry
      stage.pendingTasks.clear()

      // First figure out the indexes of partition ids to compute.
      val partitionsToCompute: Seq[Int] = {
        if (stage.isShuffleMap) {
          (0 until stage.numPartitions).filter(id => stage.outputLocs(id) == Nil)
        } else {
          val job = stage.resultOfJob.get
          (0 until job.numPartitions).filter(id => !job.finished(id))
        }
      }

      val properties = if (jobIdToActiveJob.contains(jobId)) {
        jobIdToActiveJob(stage.jobId).properties
      } else {
        // this stage will be assigned to "default" pool
        null
      }

      runningStages += stage
      // SparkListenerStageSubmitted should be posted before testing whether tasks are
      // serializable. If tasks are not serializable, a SparkListenerStageCompleted event
      // will be posted, which should always come after a corresponding SparkListenerStageSubmitted
      // event.
      stage.latestInfo = StageInfo.fromStage(stage, Some(partitionsToCompute.size))
      outputCommitCoordinator.stageStart(stage.id)
      listenerBus.post(SparkListenerStageSubmitted(stage.latestInfo, properties))

      // TODO: Maybe we can keep the taskBinary in Stage to avoid serializing it multiple times.
      // Broadcasted binary for the task, used to dispatch tasks to executors. Note that we broadcast
      // the serialized copy of the RDD and for each task we will deserialize it, which means each
      // task gets a different copy of the RDD. This provides stronger isolation between tasks that
      // might modify state of objects referenced in their closures. This is necessary in Hadoop
      // where the JobConf/Configuration object is not thread-safe.
      var taskBinary: Broadcast[Array[Byte]] = null
      try {
        // For ShuffleMapTask, serialize and broadcast (rdd, shuffleDep).
        // For ResultTask, serialize and broadcast (rdd, func).
        val taskBinaryBytes: Array[Byte] =
          if (stage.isShuffleMap) {
            closureSerializer.serialize((stage.rdd, stage.shuffleDep.get) : AnyRef).array()
          } else {
            closureSerializer.serialize((stage.rdd, stage.resultOfJob.get.func) : AnyRef).array()
          }
        taskBinary = sc.broadcast(taskBinaryBytes)
      } catch {
        // In the case of a failure during serialization, abort the stage.
        case e: NotSerializableException =>
          abortStage(stage, "Task not serializable: " + e.toString)
          runningStages -= stage
          return
        case NonFatal(e) =>
          abortStage(stage, s"Task serialization failed: $e\n${e.getStackTraceString}")
          runningStages -= stage
          return
      }

      // Create the required number of tasks for the stage.
      val tasks: Seq[Task[_]] = if (stage.isShuffleMap) {
        // Create one task per partition and compute the preferred location of each task.
        partitionsToCompute.map { id =>
          val locs = getPreferredLocs(stage.rdd, id)
          val part = stage.rdd.partitions(id)
          new ShuffleMapTask(stage.id, taskBinary, part, locs)
        }
      } else {
        // A stage that is not a ShuffleMapStage is the final stage, so create ResultTasks.
        val job = stage.resultOfJob.get
        partitionsToCompute.map { id =>
          val p: Int = job.partitions(id)
          val part = stage.rdd.partitions(p)
          val locs = getPreferredLocs(stage.rdd, p)
          new ResultTask(stage.id, taskBinary, part, locs, id)
        }
      }

      if (tasks.size > 0) {
        logInfo("Submitting " + tasks.size + " missing tasks from " + stage + " (" + stage.rdd + ")")
        stage.pendingTasks ++= tasks
        logDebug("New pending tasks: " + stage.pendingTasks)
        taskScheduler.submitTasks(
          new TaskSet(tasks.toArray, stage.id, stage.newAttemptId(), stage.jobId, properties))
        stage.latestInfo.submissionTime = Some(clock.getTimeMillis())
      } else {
        // Because we posted SparkListenerStageSubmitted earlier, we should post
        // SparkListenerStageCompleted here in case there are no tasks to run.
        outputCommitCoordinator.stageEnd(stage.id)
        listenerBus.post(SparkListenerStageCompleted(stage.latestInfo))
        logDebug("Stage " + stage + " is actually done; %b %d %d".format(
          stage.isAvailable, stage.numAvailableOutputs, stage.numPartitions))
        runningStages -= stage
      }
    }
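The core of `submitMissingTasks` is "find the partitions that still lack output, then create one task for each". A small sketch of that step for a shuffle map stage follows, assuming a hypothetical `ToyTask` type and a `preferredLocs` function passed in by the caller; real tasks also carry the broadcast task binary, which is omitted here.

```scala
object MissingTasksSketch {
  // Hypothetical toy task type; not Spark's ShuffleMapTask.
  final case class ToyTask(stageId: Int, partition: Int, preferredLocs: Seq[String])

  /**
   * outputLocs(i) lists the hosts that already hold the map output of partition i;
   * an empty list means the partition still has to be computed.
   */
  def tasksFor(stageId: Int,
               outputLocs: IndexedSeq[List[String]],
               preferredLocs: Int => Seq[String]): Seq[ToyTask] = {
    // Same idea as partitionsToCompute: keep only partitions without output.
    val partitionsToCompute = outputLocs.indices.filter(i => outputLocs(i).isEmpty)
    // One task per missing partition, each with its preferred locations.
    partitionsToCompute.map(i => ToyTask(stageId, i, preferredLocs(i)))
  }
}
```

Because only missing partitions get a task, resubmitting a partially finished stage recomputes just the lost partitions rather than the whole stage.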

