Quickly Setting Up a Kubernetes 1.15.2 Cluster on Ubuntu Using the Alibaba Cloud Mirror

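1. Overview

Setting up a k8s cluster normally requires access to Google in order to download the required images and install the packages, which is a real nuisance.

Conveniently, Alibaba Cloud provides a mirror of the k8s package repository that users in China can use directly.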

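2. Environment

| Operating system | Hostname | IP address | Role | Specs |
| --- | --- | --- | --- | --- |
| ubuntu-16.04.5-server-amd64 | k8s-master | 192.168.10.130 | Master node | 2 cores, 4 GB |
| ubuntu-16.04.5-server-amd64 | k8s-node1 | 192.168.10.131 | Worker node | 2 cores, 4 GB |
| ubuntu-16.04.5-server-amd64 | k8s-node2 | 192.168.10.132 | Worker node | 2 cores, 4 GB |

Note: make sure every host has at least 2 CPU cores and at least 2 GB of memory.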
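These minimums can be checked on each host before going any further. The sketch below is only an illustration: the function name `meets_minimum` and the 1700 MiB memory floor are assumptions made here (a nominal 2 GB machine reports somewhat less than 2048 MiB once the kernel has reserved its share), not values taken from the article.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeeds when the given CPU count and memory (MiB)
# meet the minimums assumed for this guide (2 cores, roughly 2 GB).
meets_minimum() {
  local cpus="$1" mem_mib="$2"
  [ "$cpus" -ge 2 ] && [ "$mem_mib" -ge 1700 ]
}

# Read the values of the current host (Linux only: uses nproc and /proc/meminfo).
cpus="$(nproc)"
mem_mib="$(awk '/^MemTotal:/ { print int($2 / 1024) }' /proc/meminfo)"

if meets_minimum "$cpus" "$mem_mib"; then
  echo "OK: ${cpus} cores, ${mem_mib} MiB"
else
  echo "Too small for this guide: ${cpus} cores, ${mem_mib} MiB"
fi
```

Running it on all three hosts gives a quick yes/no answer per machine.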

3. Pre-installation Preparation

Hostnames

Make sure /etc/hostname on each of the 3 hosts has been changed to the correct hostname, then reboot the system.

Time

All 3 servers must use the same time zone. To force the time zone to Asia/Shanghai, run the following commands:

ln -snf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

bash -c "echo 'Asia/Shanghai' > /etc/timezone"

Install ntpdate

apt-get install -y ntpdate

If the following error appears:

    E: Could not get lock /var/lib/dpkg/lock - open (11: Resource temporarily unavailable)
    E: Unable to lock the administration directory (/var/lib/dpkg/), is another process using it?

first make sure no other apt or dpkg process is still running, then remove the lock files with these 2 commands:

sudo rm /var/cache/apt/archives/lock

sudo rm /var/lib/dpkg/lock

Synchronize the clock against Alibaba Cloud's time server

ntpdate ntp1.aliyun.com

Run this on all 3 servers to make sure their clocks agree!

Make sure the firewall is turned off on every host!

4. Getting Started

Disable swap

(all hosts)

sudo sed -i '/swap/ s/^/#/' /etc/fstab

sudo swapoff -a
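To see what the `sed` expression does before running it against the real file, it can be tried on a scratch copy first. The two fstab entries below are invented sample data, not taken from the article's hosts.

```shell
#!/usr/bin/env bash
# Work on a throwaway file so the real /etc/fstab is never touched.
sample_fstab="$(mktemp)"
cat > "$sample_fstab" <<'EOF'
UUID=1111-aaaa /     ext4 errors=remount-ro 0 1
UUID=2222-bbbb none  swap sw                0 0
EOF

# Same expression as above: prefix every line that contains "swap" with '#'.
sed -i '/swap/ s/^/#/' "$sample_fstab"

# Show the result: only the swap entry is commented out.
cat "$sample_fstab"
```

Because the pattern matches any line containing `swap`, running the command a second time adds another `#` in front of the line that is already commented out, which is harmless.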

Install Docker

Update the apt package index and add HTTPS support (all hosts)

sudo apt-get update && sudo apt-get install apt-transport-https ca-certificates curl software-properties-common -y

Add the GPG key from the USTC mirror (all hosts)

curl -fsSL https://mirrors.ustc.edu.cn/docker-ce/linux/ubuntu/gpg | sudo apt-key add -

Add the Docker CE stable repository (all hosts)

sudo add-apt-repository "deb [arch=amd64] https://mirrors.ustc.edu.cn/docker-ce/linux/ubuntu $(lsb_release -cs) stable"

Install docker-ce (all hosts)

This installs Docker 19.03.1, the latest version at the time of writing:

sudo apt-get update

sudo apt-get install -y docker-ce=5:19.03.1~3-0~ubuntu-xenial
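During `kubeadm init`, kubeadm warns that Docker is using the `cgroupfs` cgroup driver and recommends `systemd`. The article treats that warning as harmless. For readers who would rather follow the recommendation, the sketch below writes a candidate `daemon.json` to a scratch directory for review; the scratch path is an assumption of this example. Docker reads the file from `/etc/docker/daemon.json`, and the change is best made before `kubeadm init` so that the kubelet and Docker end up on the same driver.

```shell
#!/usr/bin/env bash
# Write the candidate configuration to a scratch directory, not to /etc/docker.
scratch_dir="$(mktemp -d)"
cat > "$scratch_dir/daemon.json" <<'EOF'
{
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
cat "$scratch_dir/daemon.json"

# After review, on each host (commented out because it needs root and
# would overwrite an existing /etc/docker/daemon.json):
#   sudo cp "$scratch_dir/daemon.json" /etc/docker/daemon.json
#   sudo systemctl restart docker
#   docker info | grep -i 'cgroup driver'
```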

Install kubelet, kubeadm, and kubectl

Add the apt key and repository (all hosts)

sudo apt update && sudo apt install -y apt-transport-https curl

curl -s https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg | sudo apt-key add -

echo "deb https://mirrors.aliyun.com/kubernetes/apt/ kubernetes-xenial main" | sudo tee -a /etc/apt/sources.list.d/kubernetes.list

Install (all hosts)

This installs version 1.15.2, the latest at the time of writing, and then holds the packages so that a routine upgrade does not change them:

sudo apt update

sudo apt install -y kubelet=1.15.2-00 kubeadm=1.15.2-00 kubectl=1.15.2-00

sudo apt-mark hold kubelet kubeadm kubectl

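Initialize the Kubernetes cluster (master only)

sudo kubeadm init --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.15.2 --pod-network-cidr=192.169.0.0/16 | sudo tee /etc/kube-server-key

Parameter explanation:

--image-repository sets the image registry. Pointing it at the Alibaba Cloud registry avoids timeouts while pulling images; if nothing goes wrong, the success message appears in the log output after a few minutes.

--kubernetes-version sets the version to install.

--pod-network-cidr sets the address range of the pod network. Use a private (internal) range! Note that 192.169.0.0/16, as passed above, lies just outside the private 192.168.x.x block listed below, so a private range that does not overlap the hosts' own 192.168.10.x addresses is the safer choice.

The three private address ranges are:

Class C: 192.168.0.0-192.168.255.255

Class B: 172.16.0.0-172.31.255.255

Class A: 10.0.0.0-10.255.255.255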
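A candidate address can be checked against these three ranges with a few lines of shell. The function name `is_private_ipv4` is made up for this sketch, which assumes a well-formed dotted-quad address without leading zeros.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeeds when the IPv4 address in $1 falls inside
# 10.0.0.0/8, 172.16.0.0/12 or 192.168.0.0/16.
is_private_ipv4() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  if   (( a == 10 )); then return 0
  elif (( a == 172 && b >= 16 && b <= 31 )); then return 0
  elif (( a == 192 && b == 168 )); then return 0
  fi
  return 1
}

# Try it on a few addresses, including the first host of 192.169.0.0/16.
for ip in 10.96.0.1 172.16.0.1 192.168.10.130 192.169.0.1; do
  if is_private_ipv4 "$ip"; then
    echo "$ip is private"
  else
    echo "$ip is NOT private"
  fi
done
```

With the addresses used in this article, the hosts (192.168.10.130) fall inside the private ranges, while an address from 192.169.0.0/16 does not.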

Output:

    [init] Using Kubernetes version: v1.15.2
    [preflight] Running pre-flight checks
        [WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/
        [WARNING SystemVerification]: this Docker version is not on the list of validated versions: 19.03.1. Latest validated version: 18.09
    [preflight] Pulling images required for setting up a Kubernetes cluster
    [preflight] This might take a minute or two, depending on the speed of your internet connection
    [preflight] You can also perform this action in beforehand using 'kubeadm config images pull'
    [kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
    [kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
    [kubelet-start] Activating the kubelet service
    [certs] Using certificateDir folder "/etc/kubernetes/pki"
    [certs] Generating "ca" certificate and key
    [certs] Generating "apiserver" certificate and key
    [certs] apiserver serving cert is signed for DNS names [k8s-master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.10.130]
    [certs] Generating "apiserver-kubelet-client" certificate and key
    [certs] Generating "front-proxy-ca" certificate and key
    [certs] Generating "front-proxy-client" certificate and key
    [certs] Generating "etcd/ca" certificate and key
    [certs] Generating "etcd/peer" certificate and key
    [certs] etcd/peer serving cert is signed for DNS names [k8s-master localhost] and IPs [192.168.10.130 127.0.0.1 ::1]
    [certs] Generating "etcd/healthcheck-client" certificate and key
    [certs] Generating "etcd/server" certificate and key
    [certs] etcd/server serving cert is signed for DNS names [k8s-master localhost] and IPs [192.168.10.130 127.0.0.1 ::1]
    [certs] Generating "apiserver-etcd-client" certificate and key
    [certs] Generating "sa" key and public key
    [kubeconfig] Using kubeconfig folder "/etc/kubernetes"
    [kubeconfig] Writing "admin.conf" kubeconfig file
    [kubeconfig] Writing "kubelet.conf" kubeconfig file
    [kubeconfig] Writing "controller-manager.conf" kubeconfig file
    [kubeconfig] Writing "scheduler.conf" kubeconfig file
    [control-plane] Using manifest folder "/etc/kubernetes/manifests"
    [control-plane] Creating static Pod manifest for "kube-apiserver"
    [control-plane] Creating static Pod manifest for "kube-controller-manager"
    [control-plane] Creating static Pod manifest for "kube-scheduler"
    [etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"
    [wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s
    [kubelet-check] Initial timeout of 40s passed.
    [apiclient] All control plane components are healthy after 41.507944 seconds
    [upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace
    [kubelet] Creating a ConfigMap "kubelet-config-1.15" in namespace kube-system with the configuration for the kubelets in the cluster
    [upload-certs] Skipping phase. Please see --upload-certs
    [mark-control-plane] Marking the node k8s-master as control-plane by adding the label "node-role.kubernetes.io/master=''"
    [mark-control-plane] Marking the node k8s-master as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]
    [bootstrap-token] Using token: bz16uu.olqxoh5q5bnt50sd
    [bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles
    [bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials
    [bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token
    [bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster
    [bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace
    [addons] Applied essential addon: CoreDNS
    [addons] Applied essential addon: kube-proxy

    Your Kubernetes control-plane has initialized successfully!

    To start using your cluster, you need to run the following as a regular user:

      mkdir -p $HOME/.kube
      sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
      sudo chown $(id -u):$(id -g) $HOME/.kube/config

    You should now deploy a pod network to the cluster.
    Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
      https://kubernetes.io/docs/concepts/cluster-administration/addons/

    Then you can join any number of worker nodes by running the following on each as root:

    kubeadm join 192.168.10.130:6443 --token bz16uu.olqxoh5q5bnt50sd \
        --discovery-token-ca-cert-hash sha256:9177017ff3016dbb2aadf7484f7823f8b963c989fe9ecdccbe601c9305ce000f

The WARNING messages can be ignored.

The output is also saved to the file /etc/kube-server-key.

Copy the kubeconfig file into the .kube directory under your home directory (master only)

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

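Install a network plugin so that pods can communicate with each other (master only)

This uses Calico v3.8, the latest version at the time of writing: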

kubectl apply -f https://docs.projectcalico.org/v3.8/manifests/calico.yaml

Check the status of the pods in the kube-system namespace (master only)

kubectl get pod -n kube-system

After about 1 minute, the output looks like this:

    NAME                                       READY   STATUS    RESTARTS   AGE
    calico-kube-controllers-7bd78b474d-lpfvf   0/1     Running   0          67s
    calico-node-vfm28                          1/1     Running   0          67s
    coredns-bccdc95cf-dm4pb                    1/1     Running   0          111s
    coredns-bccdc95cf-lvhcg                    1/1     Running   0          111s
    etcd-k8s-master                            1/1     Running   0          69s
    kube-apiserver-k8s-master                  1/1     Running   0          67s
    kube-controller-manager-k8s-master         1/1     Running   0          59s
    kube-proxy-jpqsq                           1/1     Running   0          111s
    kube-scheduler-k8s-master                  1/1     Running   0          56s
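A `Running` status does not by itself mean a pod is ready: in the listing above, `calico-kube-controllers` is still at `0/1`. The helper below, whose name `not_ready_pods` is an invention of this sketch, reduces the table printed by `kubectl get pod` to the pods whose READY column is not yet complete. It is fed a copy of two rows from the listing so that it can be tried without a cluster.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `kubectl get pod` table output on stdin and
# prints the NAME of every pod whose READY column (ready/total) is incomplete.
not_ready_pods() {
  awk 'NR > 1 { split($2, ready, "/"); if (ready[1] != ready[2]) print $1 }'
}

# Sample input copied from the listing above.
sample_output='NAME                                       READY   STATUS    RESTARTS   AGE
calico-kube-controllers-7bd78b474d-lpfvf   0/1     Running   0          67s
calico-node-vfm28                          1/1     Running   0          67s'

printf '%s\n' "$sample_output" | not_ready_pods

# On the master, the same filter can read live data:
#   kubectl get pod -n kube-system | not_ready_pods
```

An empty result means every pod in the table reports all of its containers as ready.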

View the join command (master only)

tail -2 /etc/kube-server-key

Output:

    kubeadm join 192.168.10.130:6443 --token bz16uu.olqxoh5q5bnt50sd \
        --discovery-token-ca-cert-hash sha256:9177017ff3016dbb2aadf7484f7823f8b963c989fe9ecdccbe601c9305ce000f
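Because kubeadm prints the join command across two lines joined by a backslash, it can be convenient to collapse it into a single line before pasting it on the worker nodes. The sketch below does this on a scratch file that holds a copy of the last lines of the output; the variable names are illustrative. Bootstrap tokens expire (after 24 hours by default), so if the saved command no longer works, running `kubeadm token create --print-join-command` on the master prints a fresh one.

```shell
#!/usr/bin/env bash
# Scratch copy of the tail of the kubeadm init output (sample data from above).
sample_log="$(mktemp)"
cat > "$sample_log" <<'EOF'
Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.10.130:6443 --token bz16uu.olqxoh5q5bnt50sd \
    --discovery-token-ca-cert-hash sha256:9177017ff3016dbb2aadf7484f7823f8b963c989fe9ecdccbe601c9305ce000f
EOF

# Take the last two lines, drop the trailing backslash, and squeeze the
# line break and surrounding spaces into single spaces.
join_cmd="$(tail -2 "$sample_log" | sed -e 's/\\$//' | tr -s ' \n' ' ' | sed -e 's/ $//')"
echo "$join_cmd"
```

On the real master, `sample_log` would simply point at `/etc/kube-server-key`.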

Join the worker nodes (worker nodes only)

Run the following on each worker node:

kubeadm join 192.168.10.130:6443 --token bz16uu.olqxoh5q5bnt50sd --discovery-token-ca-cert-hash sha256:9177017ff3016dbb2aadf7484f7823f8b963c989fe9ecdccbe601c9305ce000f

Wait about 5 minutes, then check the cluster status (master only)

    root@k8s-master:~# kubectl get nodes
    NAME         STATUS   ROLES    AGE     VERSION
    k8s-master   Ready    master   5m54s   v1.15.2
    k8s-node1    Ready    <none>   73s     v1.15.2
    k8s-node2    Ready    <none>   71s     v1.15.2

Command completion

(master only)

apt-get install bash-completion

source <(kubectl completion bash)

echo "source <(kubectl completion bash)" >> ~/.bashrc

source ~/.bashrc

5. Deploying an Application

(master only)

This example uses Flask:

vim flask.yaml

with the following content:

    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      name: flaskapp-1
    spec:
      replicas: 1
      template:
        metadata:
          labels:
            name: flaskapp-1
        spec:
          containers:
            - name: flaskapp-1
              image: jcdemo/flaskapp
              ports:
                - containerPort: 5000
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: flaskapp-1
      labels:
        name: flaskapp-1
    spec:
      type: NodePort
      ports:
        - port: 5000
          name: flaskapp-port
          targetPort: 5000
          protocol: TCP
          nodePort: 30005
      selector:
        name: flaskapp-1
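The `extensions/v1beta1` Deployment API used above is accepted by Kubernetes 1.15 but is no longer served from 1.16 onward. On newer clusters the Deployment half of the manifest would need `apps/v1` and an explicit `spec.selector`. The sketch below writes such a variant to a scratch directory; the file name `flask-apps-v1.yaml` is made up here, and the Service part is omitted because it stays the same.

```shell
#!/usr/bin/env bash
# Write an apps/v1 variant of the Deployment to a scratch directory.
manifest_dir="$(mktemp -d)"
cat > "$manifest_dir/flask-apps-v1.yaml" <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: flaskapp-1
spec:
  replicas: 1
  selector:            # required in apps/v1; must match the template labels
    matchLabels:
      name: flaskapp-1
  template:
    metadata:
      labels:
        name: flaskapp-1
    spec:
      containers:
        - name: flaskapp-1
          image: jcdemo/flaskapp
          ports:
            - containerPort: 5000
EOF
echo "wrote $manifest_dir/flask-apps-v1.yaml"

# To apply it on the master:
#   kubectl apply -f "$manifest_dir/flask-apps-v1.yaml"
```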

Start the application

kubectl apply -f flask.yaml

Check the application status

    root@k8s-master:~# kubectl get pods -o wide
    NAME                          READY   STATUS    RESTARTS   AGE   IP              NODE        NOMINATED NODE   READINESS GATES
    flaskapp-1-59698bc97d-2xnhb   1/1     Running   0          24s   192.168.36.65   k8s-node1   <none>           <none>

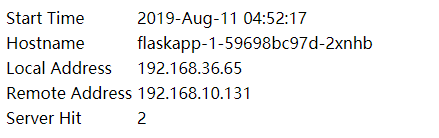

The output above shows that this pod is running on the host k8s-node1.

Ping the pod's IP address. If the ping succeeds, the Calico plugin is working properly.

    root@k8s-master:~# ping 192.168.36.65 -c 1
    PING 192.168.36.65 (192.168.36.65) 56(84) bytes of data.
    64 bytes from 192.168.36.65: icmp_seq=1 ttl=63 time=6.77 ms

    --- 192.168.36.65 ping statistics ---
    1 packets transmitted, 1 received, 0% packet loss, time 0ms
    rtt min/avg/max/mdev = 6.777/6.777/6.777/0.000 ms

Test whether the pod can reach the Internet

    root@k8s-master:~# kubectl exec -it flaskapp-1-59698bc97d-2xnhb -- ping www.baidu.com -c 1
    PING www.baidu.com (61.135.169.125): 56 data bytes
    64 bytes from 61.135.169.125: seq=0 ttl=53 time=27.079 ms

    --- www.baidu.com ping statistics ---
    1 packets transmitted, 1 packets received, 0% packet loss
    round-trip min/avg/max = 27.079/27.079/27.079 ms

Use curl to access the service at the pod IP

    root@k8s-master:~# curl 192.168.36.65:5000
    <html><head><title>Docker + Flask Demo</title></head><body><table><tr><td> Start Time </td> <td>2019-Aug-11 04:52:17</td> </tr><tr><td> Hostname </td> <td>flaskapp-1-59698bc97d-2xnhb</td> </tr><tr><td> Local Address </td> <td>192.168.36.65</td> </tr><tr><td> Remote Address </td> <td>192.168.235.192</td> </tr><tr><td> Server Hit </td> <td>1</td> </tr></table></body></html>

Check the Service ports

    root@k8s-master:~# kubectl get svc
    NAME         TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)          AGE
    flaskapp-1   NodePort    10.107.181.43   <none>        5000:30005/TCP   3m40s
    kubernetes   ClusterIP   10.96.0.1       <none>        443/TCP          10m
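In the PORT(S) column, `5000:30005/TCP` reads as service port 5000 mapped to node port 30005. The sketch below pulls the node port out of that column for a named NodePort service; `node_port_of` is a hypothetical helper, and the sample text is copied from the output above. Against a live cluster, `kubectl get svc flaskapp-1 -o jsonpath='{.spec.ports[0].nodePort}'` returns the same value without any text parsing.

```shell
#!/usr/bin/env bash
# Hypothetical helper: $1 is the service name, the `kubectl get svc` table
# arrives on stdin. Prints the node port from a "port:nodePort/PROTO" entry.
node_port_of() {
  awk -v svc="$1" '$1 == svc { split($5, parts, "[:/]"); print parts[2] }'
}

# Sample input copied from the output above.
sample_svc='NAME         TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)          AGE
flaskapp-1   NodePort    10.107.181.43   <none>        5000:30005/TCP   3m40s
kubernetes   ClusterIP   10.96.0.1       <none>        443/TCP          10m'

printf '%s\n' "$sample_svc" | node_port_of flaskapp-1
```

The helper only makes sense for NodePort services; a ClusterIP entry such as `443/TCP` has no node port to extract.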

Open port 30005 on k8s-node1 directly in a browser:

http://192.168.10.131:30005/

The "Docker + Flask Demo" page should appear.

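6. Deploying the Dashboard Visualization Add-on

Overview

In the Kubernetes Dashboard you can view the running state of the applications in the cluster and create or modify Kubernetes resources such as Deployments, Jobs, and DaemonSets. You can scale a Deployment up or down, perform a rolling update, restart a pod, or deploy a new application through a wizard. The Dashboard also shows the status and logs of the resources in the cluster.

In short, the Kubernetes Dashboard offers most of what kubectl can do, so pick whichever suits the situation.

GitHub repository: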

https://github.com/kubernetes/dashboard

Installation

Kubernetes does not deploy the Dashboard by default. It can be installed with the following command:

kubectl apply -f http://mirror.faasx.com/kubernetes/dashboard/master/src/deploy/recommended/kubernetes-dashboard.yaml

Check the Deployment and Service

    root@k8s-master:~# kubectl --namespace=kube-system get deployment kubernetes-dashboard
    NAME                   READY   UP-TO-DATE   AVAILABLE   AGE
    kubernetes-dashboard   1/1     1            1           5m23s
    root@k8s-master:~# kubectl --namespace=kube-system get service kubernetes-dashboard
    NAME                   TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)   AGE
    kubernetes-dashboard   ClusterIP   10.100.111.103   <none>        443/TCP   5m28s

Check the pod

Make sure its status is Running

    root@k8s-master:~# kubectl get pod --namespace=kube-system -o wide | grep dashboard
    kubernetes-dashboard-8594bd9565-t78bj   1/1   Running   0   8m41s   192.169.2.7   k8s-node2   <none>   <none>

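Allow external access

Note: this command occupies the terminal. It also exposes the API server proxy, without authentication, to every machine that can reach the master, so use it only on a trusted test network.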

kubectl proxy --address='0.0.0.0' --accept-hosts='^*$'

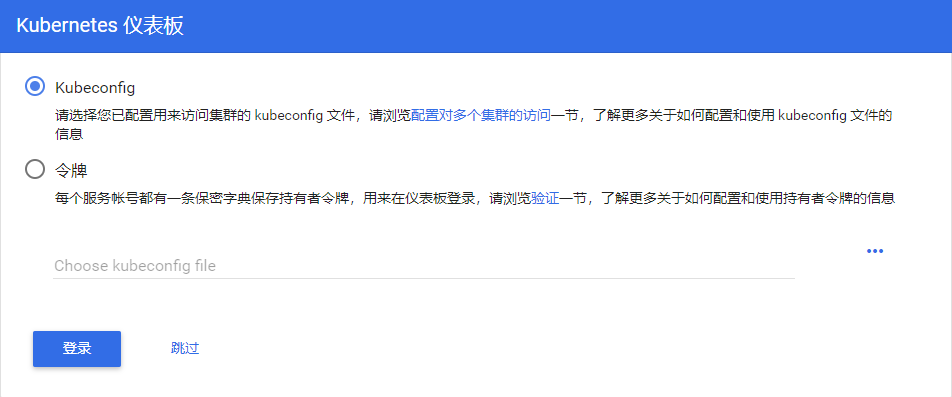

Configure login permissions

The Dashboard supports two authentication methods, Kubeconfig and Token. To keep the configuration simple, we use the file dashboard-admin.yml to grant admin privileges to the Dashboard's default service account.

vim dashboard-admin.yml

with the following content:

    apiVersion: rbac.authorization.k8s.io/v1beta1
    kind: ClusterRoleBinding
    metadata:
      name: kubernetes-dashboard
      labels:
        k8s-app: kubernetes-dashboard
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: cluster-admin
    subjects:
      - kind: ServiceAccount
        name: kubernetes-dashboard
        namespace: kube-system

Run kubectl apply to make it take effect

kubectl apply -f dashboard-admin.yml

Access through a browser

Note: 192.168.10.130 is the master IP

http://192.168.10.130:8001/api/v1/namespaces/kube-system/services/https:kubernetes-dashboard:/proxy/

Now click Skip on the login page to enter the Dashboard.

For an introduction to the layout of the Dashboard interface, see:

https://www.cnblogs.com/kenken2018/p/10340157.html

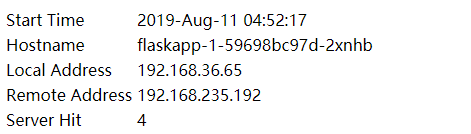

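7. Fixing NodePort Being Unreachable from Outside the Cluster

In the Flask example above, the application cannot be reached through the master IP plus the NodePort.

This is because the firewall blocks it: the default policy of the FORWARD chain is DROP.

    root@k8s-master:~# iptables -xnL|grep FORWARD
    Chain FORWARD (policy DROP)
    cali-FORWARD  all  --  0.0.0.0/0            0.0.0.0/0            /* cali:wUHhoiAYhphO9Mso */
    KUBE-FORWARD  all  --  0.0.0.0/0            0.0.0.0/0            /* kubernetes forwarding rules */
    Chain KUBE-FORWARD (1 references)
    Chain cali-FORWARD (1 references)

Solution: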

iptables -P FORWARD ACCEPT
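Before and after changing the policy, the current default of the FORWARD chain can be read from the first line that `iptables -nL FORWARD` prints. The helper name `forward_policy` is invented for this sketch, which runs on a pasted sample so that it needs neither root nor iptables. Keep in mind that a policy set with `iptables -P` is not persistent and has to be reapplied after a reboot.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads an iptables listing on stdin and prints the
# default policy of the FORWARD chain (for example DROP or ACCEPT).
forward_policy() {
  awk '/^Chain FORWARD / { gsub(/[()]/, ""); print $4; exit }'
}

# Sample input copied from the listing above.
sample_listing='Chain FORWARD (policy DROP)
cali-FORWARD  all  --  0.0.0.0/0  0.0.0.0/0'

printf '%s\n' "$sample_listing" | forward_policy

# On a live host:
#   sudo iptables -nL FORWARD | forward_policy
```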

Access via the master IP plus the NodePort:

http://192.168.10.130:30005/

The same Flask demo page should now load.

Reference:

https://blog.csdn.net/a610786189/article/details/80321727

References for this article:

https://www.toutiao.com/i6703112655323791884

https://www.cnblogs.com/busigulang/p/10736040.html

https://www.cnblogs.com/qingfeng2010/p/10540832.html

https://www.cnblogs.com/kenken2018/p/10340157.html
