(2) Collecting nginx logs with ELK

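I. Preface

To record how the site is accessed and make it easy to count visits per IP and the requested URLs, we use the lightweight filebeat tool to collect the nginx logs. filebeat ships the log data to logstash, which writes it to Elasticsearch, and kibana displays the logs.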

II. Implementation

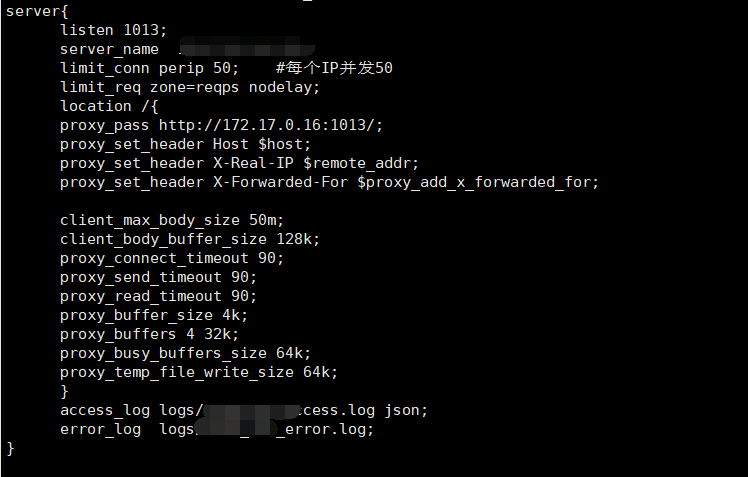

1. Configure nginx

1.1 Change the nginx log output to JSON format.

vim nginx.conf

    log_format json '{"@timestamp":"$time_iso8601",'
                    '"@version":"1",'
                    '"client":"$remote_addr",'
                    '"url":"$uri",'
                    '"status":"$status",'
                    '"domain":"$host",'
                    '"host":"$server_addr",'
                    '"size":"$body_bytes_sent",'
                    '"responsentime":"$request_time",'
                    '"referer":"$http_referer",'
                    '"useragent":"$http_user_agent"'
                    '}';

The complete nginx.conf, with the json format extended by three upstream fields:

    user nginx nginx;
    worker_processes auto;
    worker_cpu_affinity auto;
    worker_rlimit_nofile 100000;

    events {
        use epoll;
        multi_accept on;
        worker_connections 20480;
    }

    http {
        include mime.types;
        default_type application/octet-stream;

        log_format main '$remote_addr - $remote_user [$time_local] "$request" '
                        '$status $body_bytes_sent "$http_referer" '
                        '"$http_user_agent" "$http_x_forwarded_for"';

        log_format json '{"@timestamp":"$time_iso8601",'
                        '"@version":"1",'
                        '"client":"$remote_addr",'
                        '"url":"$uri",'
                        '"status":"$status",'
                        '"domain":"$host",'
                        '"host":"$server_addr",'
                        '"size":"$body_bytes_sent",'
                        '"responsentime":"$request_time",'
                        '"referer":"$http_referer",'
                        '"useragent":"$http_user_agent",'
                        '"upstreampstatus":"$upstream_status",'
                        '"upstreamaddr":"$upstream_addr",'
                        '"upstreamresponsetime":"$upstream_response_time"'
                        '}';

        sendfile on;
        keepalive_timeout 65;
        include /opt/nginx/conf/vhost/*.conf;
    }
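Before wiring up filebeat, it can help to confirm that a line written in the json format really parses as JSON. The following is a minimal sketch, not part of the original setup: the sample line, its values, and the helper name `parse_access_line` are assumptions made for illustration. It also shows one way the format can break: a value containing a double quote (for example in the user agent) produces invalid JSON unless nginx escapes it, which nginx 1.11.8 and later can do with the `escape=json` parameter of `log_format`.

```python
import json

# Hypothetical sample line in the shape produced by the json log_format.
SAMPLE_LINE = (
    '{"@timestamp":"2019-01-01T12:00:00+08:00","@version":"1",'
    '"client":"10.0.0.8","url":"/index.html","status":"200",'
    '"domain":"example.com","host":"10.0.0.1","size":"612",'
    '"responsentime":"0.003","referer":"-","useragent":"curl/7.29.0"}'
)


def parse_access_line(line):
    """Parse one access-log line; return None if it is not a JSON object."""
    try:
        record = json.loads(line)
    except json.JSONDecodeError:
        return None
    if not isinstance(record, dict):
        return None
    # The format quotes every value, so numeric fields arrive as strings.
    record["status"] = int(record["status"])
    record["size"] = int(record["size"])
    record["responsentime"] = float(record["responsentime"])
    return record


record = parse_access_line(SAMPLE_LINE)
print(record["client"], record["url"], record["status"])

# An unescaped double quote inside a value breaks the JSON structure.
broken = parse_access_line('{"useragent":"bad"agent"}')
print(broken)
```

The second call returns `None`, which is the kind of line that would fail JSON decoding further down the pipeline.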

1.2 Edit the site's nginx configuration file and add the following line

access_log logs/web_access.log json;

2. Configure filebeat

2.1 Install filebeat

rpm -ivh filebeat-*x86_64.rpm

2.2 Edit the filebeat configuration file

vim /etc/filebeat/filebeat.yml

    filebeat.inputs:
    - type: log
      paths:
        - /opt/nginx/logs/web1_access.log
      json.keys_under_root: true
      json.overwrite_keys: true
      fields:
        app_id: web1_nginx
    - type: log
      paths:
        - /opt/nginx/logs/web2.access.log
      json.keys_under_root: true
      json.overwrite_keys: true
      fields:
        app_id: web2_nginx

    output.logstash:
      hosts: ["1.1.1.1:5055"]

Restart filebeat

systemctl restart filebeat.service
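The `json.keys_under_root` and `fields` options in filebeat.yml decide where each value ends up in the event that reaches logstash. The sketch below is a simplified model of that behaviour, not Filebeat's actual code: `build_event` is a hypothetical helper, the log line is made up, and real events carry additional metadata that is left out here.

```python
import json


def build_event(line, custom_fields, keys_under_root=True):
    """Rough model of how Filebeat shapes one event from a JSON log line."""
    decoded = json.loads(line)
    # Entries from the `fields:` option are grouped under a "fields" key.
    event = {"fields": dict(custom_fields)}
    if keys_under_root:
        # json.keys_under_root: true -> decoded keys sit at the top level.
        event.update(decoded)
    else:
        # Default behaviour -> decoded keys are nested under "json".
        event["json"] = decoded
    return event


# Hypothetical log line, shortened to three keys for readability.
line = '{"client":"10.0.0.8","url":"/index.html","status":"200"}'

event = build_event(line, {"app_id": "web1_nginx"})
print(event["fields"]["app_id"], event["client"])

nested = build_event(line, {"app_id": "web1_nginx"}, keys_under_root=False)
print(nested["json"]["client"])
```

This is why the nginx keys such as `client` and `url` can be used directly as fields, while the application id has to be addressed as `[fields][app_id]`.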

3. Configure logstash

    input {
      beats {
        add_field => {"myid" => "nginx"}
        port => 5055
      }
      tcp {
        host => "0.0.0.0"
        port => 5044
        mode => "server"
        tags => ["tags"]
        codec => json_lines
        type => "log"
      }
    }

    output {
      if [type] == "log" {
        elasticsearch {
          hosts => "localhost:9200"
          index => "%{[appname]}"
        }
      }
      if [myid] == "nginx" {
        elasticsearch {
          hosts => ["localhost:9200"]      # address of the Elasticsearch server
          index => "%{[fields][app_id]}"   # index name
        }
      }
    }

Start logstash

cd /opt/logstash-6.5.3 && /usr/bin/nohup ./bin/logstash -f ./config/logstash-tanlu.conf > /dev/null 2>&1 &
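The output section of the logstash configuration routes events with two independent `if` blocks and builds each index name from a field reference. The sketch below mimics that logic in plain Python to show which index an event would land in. It is an illustration under assumptions, not Logstash code: the helper names and the `orders` application name are hypothetical. It also reproduces one Logstash behaviour worth knowing: a reference to a missing field is left in the index name as literal text.

```python
import re

# Matches references such as %{[fields][app_id]}.
FIELD_REF = re.compile(r"%\{((?:\[[^\]]+\])+)\}")


def lookup(event, ref):
    """Resolve a reference such as [fields][app_id] against a nested dict."""
    value = event
    for key in re.findall(r"\[([^\]]+)\]", ref):
        if not isinstance(value, dict) or key not in value:
            return None
        value = value[key]
    return value


def sprintf(template, event):
    """Substitute field references; unresolved ones are left unchanged."""
    def replace(match):
        value = lookup(event, match.group(1))
        return match.group(0) if value is None else str(value)
    return FIELD_REF.sub(replace, template)


def target_indices(event):
    """Mirror the two independent `if` blocks of the output section."""
    indices = []
    if event.get("type") == "log":
        indices.append(sprintf("%{[appname]}", event))
    if event.get("myid") == "nginx":
        indices.append(sprintf("%{[fields][app_id]}", event))
    return indices


beats_event = {"myid": "nginx", "fields": {"app_id": "web1_nginx"}}
tcp_event = {"type": "log", "appname": "orders"}  # hypothetical application

print(target_indices(beats_event))       # ['web1_nginx']
print(target_indices(tcp_event))         # ['orders']
print(target_indices({"type": "log"}))   # ['%{[appname]}']
```

Because the two conditions are not chained with `else`, an event that carried both `type` and `myid` would be written to both indices.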

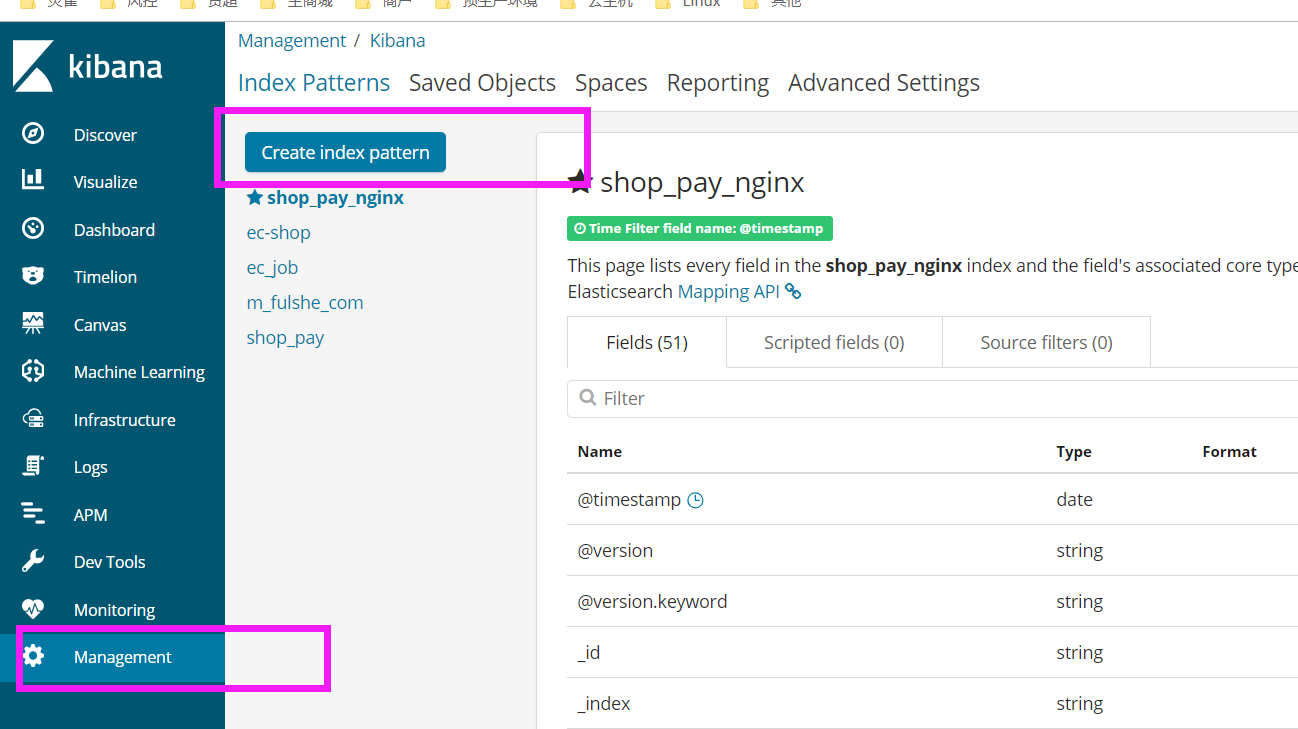

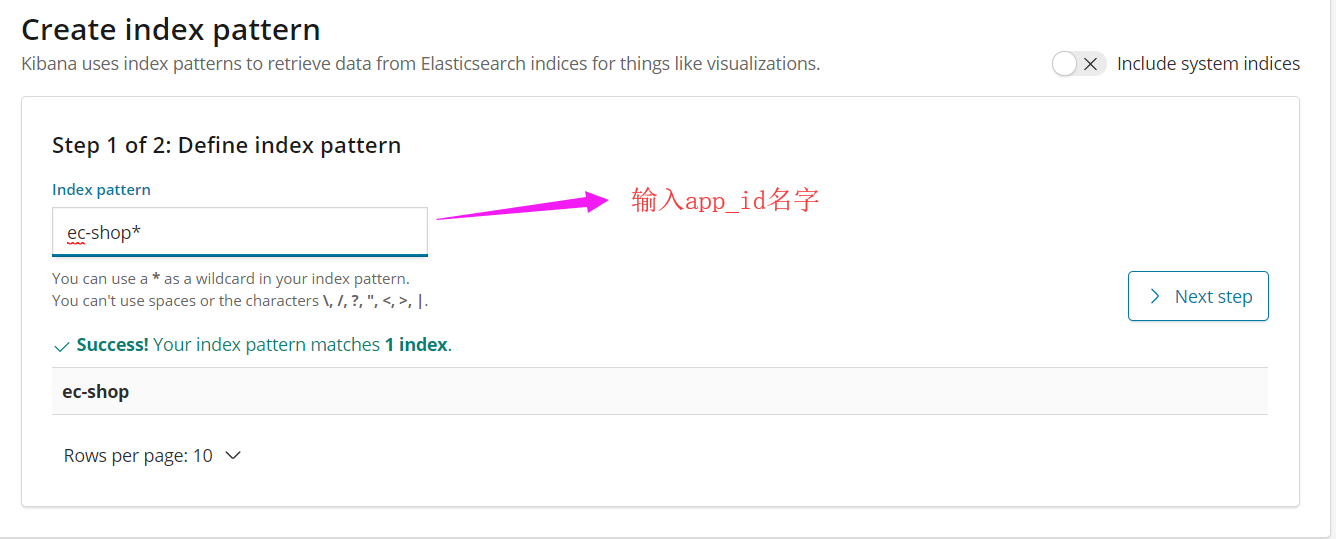

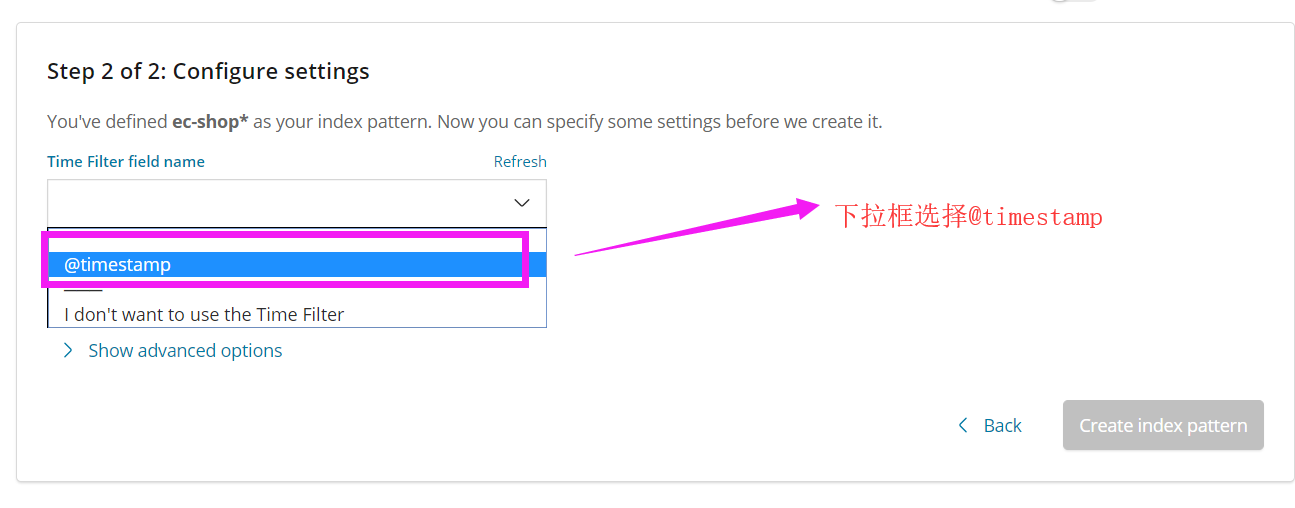

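4. Configure kibana

In Kibana, go to Management -> Create index pattern and create a pattern that matches the indices written by logstash (web1_nginx and web2_nginx in the filebeat configuration above).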

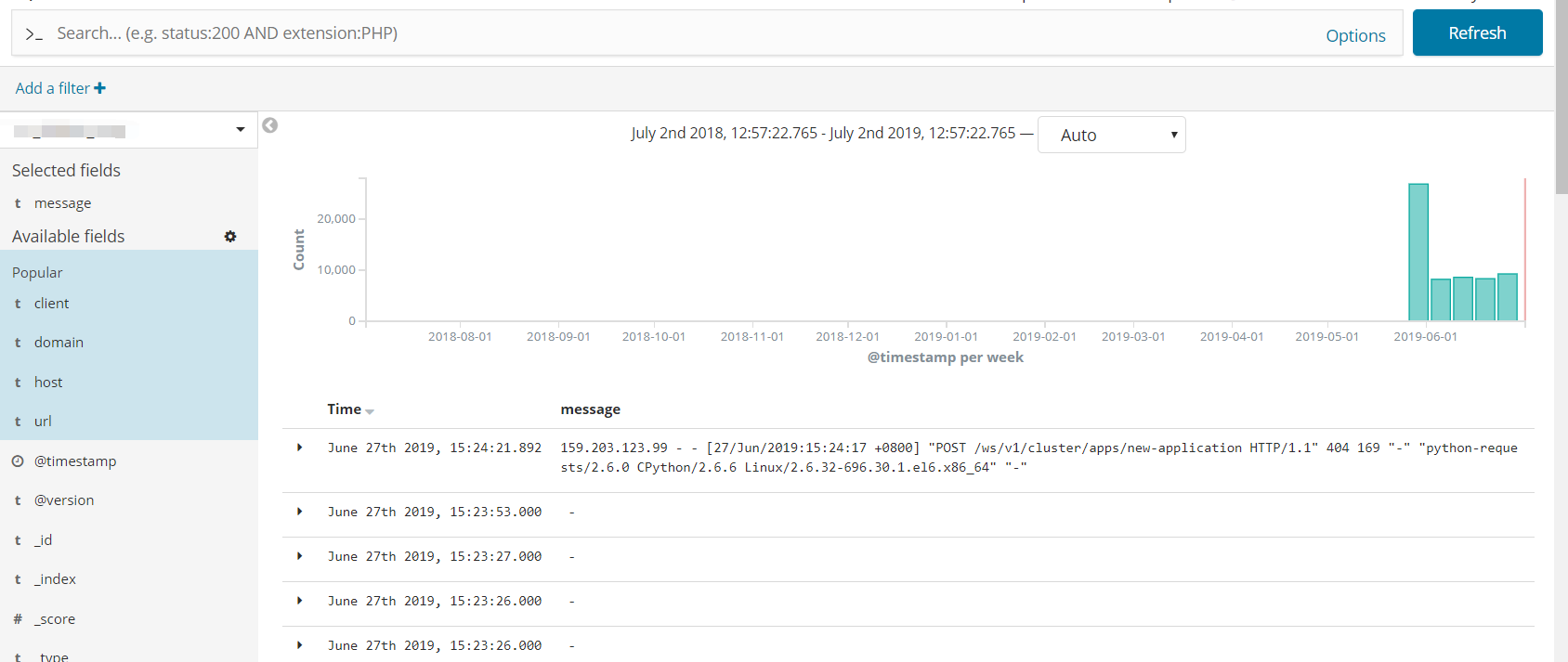

Final result
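To cross-check the per-IP and per-URL numbers shown in Kibana against the raw log file, the same counts can be computed directly from the JSON access log. This is a minimal sketch under assumptions: `count_requests` is a hypothetical helper and the sample lines are made up. It relies only on the `client` and `url` keys defined in the json log format.

```python
import json
from collections import Counter


def count_requests(lines):
    """Count requests per client IP and per URL from JSON access-log lines."""
    by_client, by_url = Counter(), Counter()
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip lines that are not valid JSON
        if not isinstance(record, dict):
            continue
        by_client[record.get("client", "-")] += 1
        by_url[record.get("url", "-")] += 1
    return by_client, by_url


# Hypothetical sample lines, reduced to the two keys that are counted.
sample = [
    '{"client":"10.0.0.8","url":"/index.html"}',
    '{"client":"10.0.0.8","url":"/about.html"}',
    '{"client":"10.0.0.9","url":"/index.html"}',
]

by_client, by_url = count_requests(sample)
print(by_client.most_common(3))
print(by_url.most_common(3))
```

`count_requests` accepts any iterable of lines, so an open file object for one of the access logs can be passed in place of `sample`.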
