Deploying an ES cluster with Nomad

Deploying Elasticsearch on Nomad

Reference: https://itnext.io/elasticsearch-on-nomad-ae685b762779

Preparing the ES image

FROM centos:7

ENV APP_HOME /opt/elasticsearch

ENV ES_TMPDIR=${APP_HOME}/temp

ENV ES_USER elasticsearch

RUN useradd -ms /bin/bash -d ${APP_HOME} ${ES_USER}

RUN yum install nmap-ncat -y

RUN set -ex \
    && ES_PACK_NAME=elasticsearch-7.8.1 \
    && FILE_NAME=${ES_PACK_NAME}-linux-x86_64.tar.gz \
    && curl -O https://artifacts.elastic.co/downloads/elasticsearch/${FILE_NAME} \
    && tar -zxf ${FILE_NAME} \
    && cp -R ${ES_PACK_NAME}/* ${APP_HOME} \
    && rm -rf ${APP_HOME}/config/elasticsearch.yml \
    && rm -rf ${APP_HOME}/config/jvm.options \
    && rm -rf ${FILE_NAME} \
    && rm -rf ${ES_PACK_NAME}

RUN mkdir -p ${APP_HOME}/temp

RUN ls -al ${APP_HOME}

RUN chmod a+x -R ${APP_HOME}/bin

RUN chown ${ES_USER}: -R ${APP_HOME}

RUN set -ex \
    && echo 'elasticsearch - nofile 65536' >> /etc/security/limits.d/elasticsearch.conf \
    && echo 'elasticsearch - nproc 65536' >> /etc/security/limits.d/elasticsearch.conf \
    && echo 'root soft memlock unlimited' >> /etc/security/limits.d/elasticsearch.conf \
    && echo 'root hard memlock unlimited' >> /etc/security/limits.d/elasticsearch.conf

RUN cat /etc/security/limits.d/elasticsearch.conf

USER ${ES_USER}

WORKDIR ${APP_HOME}

EXPOSE 9200 9300

Pushing the image

# Log in to Docker Hub

docker login

# Build and push

export ES_IMAGE=x602/elasticsearch:7.8.1

docker build . -t ${ES_IMAGE};

docker push ${ES_IMAGE};

Deploying Elasticsearch on Nomad

Creating the namespace

nomad namespace apply -description "Elasticsearch Cluster" elasticsearch;

Creating and registering the volumes

# 1.2 ceph-csi es

########## register volume: es-master-0

## es volume.

cat <<EOF > es-volume-master-0.hcl

type = "csi"

id = "es-master-0"

name = "es-master-0"

external_id = "0001-0024-ef8394c8-2b17-435d-ae47-9dff271875d1-0000000000000001-00000000-1111-2222-bbcc-cacacacacae2"

capability {

access_mode = "single-node-writer"

attachment_mode = "file-system"

}

mount_options {

fs_type = "ext4"

}

plugin_id = "ceph-csi"

secrets {

userID = "admin"

userKey = "AQBWYPVhs0XZIhAACN0PAHEJTitvq514oVC12A=="

}

context {

clusterID = "ef8394c8-2b17-435d-ae47-9dff271875d1"

pool = "myPool"

imageFeatures = "layering"

}

EOF

########## register volume: es-master-1

## es volume.

cat <<EOF > es-volume-master-1.hcl

type = "csi"

id = "es-master-1"

name = "es-master-1"

external_id = "0001-0024-ef8394c8-2b17-435d-ae47-9dff271875d1-0000000000000001-00000000-1111-2222-bbcc-cacacacacac3"

capability {

access_mode = "single-node-writer"

attachment_mode = "file-system"

}

mount_options {

fs_type = "ext4"

}

plugin_id = "ceph-csi"

secrets {

userID = "admin"

userKey = "AQBWYPVhs0XZIhAACN0PAHEJTitvq514oVC12A=="

}

context {

clusterID = "ef8394c8-2b17-435d-ae47-9dff271875d1"

pool = "myPool"

imageFeatures = "layering"

}

EOF

########## register volume: es-master-2

## es volume.

cat <<EOF > es-volume-master-2.hcl

type = "csi"

id = "es-master-2"

name = "es-master-2"

external_id = "0001-0024-ef8394c8-2b17-435d-ae47-9dff271875d1-0000000000000001-00000000-1111-2222-bbcc-cacacacacac4"

capability {

access_mode = "single-node-writer"

attachment_mode = "file-system"

}

mount_options {

fs_type = "ext4"

}

plugin_id = "ceph-csi"

secrets {

userID = "admin"

userKey = "AQBWYPVhs0XZIhAACN0PAHEJTitvq514oVC12A=="

}

context {

clusterID = "ef8394c8-2b17-435d-ae47-9dff271875d1"

pool = "myPool"

imageFeatures = "layering"

}

EOF

########## register volume: es-data-0

## es volume.

cat <<EOF > es-volume-data-0.hcl

type = "csi"

id = "es-data-0"

name = "es-data-0"

external_id = "0001-0024-ef8394c8-2b17-435d-ae47-9dff271875d1-0000000000000001-00000000-1111-2222-bbcc-cacacacacaf0"

capability {

access_mode = "single-node-writer"

attachment_mode = "file-system"

}

mount_options {

fs_type = "ext4"

}

plugin_id = "ceph-csi"

secrets {

userID = "admin"

userKey = "AQBWYPVhs0XZIhAACN0PAHEJTitvq514oVC12A=="

}

context {

clusterID = "ef8394c8-2b17-435d-ae47-9dff271875d1"

pool = "myPool"

imageFeatures = "layering"

}

EOF

########## register volume: es-data-1

## es volume.

cat <<EOF > es-volume-data-1.hcl

type = "csi"

id = "es-data-1"

name = "es-data-1"

external_id = "0001-0024-ef8394c8-2b17-435d-ae47-9dff271875d1-0000000000000001-00000000-1111-2222-bbcc-cacacacacaf1"

capability {

access_mode = "single-node-writer"

attachment_mode = "file-system"

}

mount_options {

fs_type = "ext4"

}

plugin_id = "ceph-csi"

secrets {

userID = "admin"

userKey = "AQBWYPVhs0XZIhAACN0PAHEJTitvq514oVC12A=="

}

context {

clusterID = "ef8394c8-2b17-435d-ae47-9dff271875d1"

pool = "myPool"

imageFeatures = "layering"

}

EOF

# Create the RBD images ----------

sudo rbd --pool myPool create csi-vol-00000000-1111-2222-bbcc-cacacacacae2 --size 500000 --image-feature layering;

sudo rbd --pool myPool create csi-vol-00000000-1111-2222-bbcc-cacacacacac3 --size 500000 --image-feature layering;

sudo rbd --pool myPool create csi-vol-00000000-1111-2222-bbcc-cacacacacac4 --size 500000 --image-feature layering;

sudo rbd --pool myPool create csi-vol-00000000-1111-2222-bbcc-cacacacacaf0 --size 500000 --image-feature layering;

sudo rbd --pool myPool create csi-vol-00000000-1111-2222-bbcc-cacacacacaf1 --size 500000 --image-feature layering;

nomad volume register -namespace=elasticsearch es-volume-data-0.hcl;

nomad volume register -namespace=elasticsearch es-volume-data-1.hcl;

nomad volume register -namespace=elasticsearch es-volume-master-0.hcl;

nomad volume register -namespace=elasticsearch es-volume-master-1.hcl;

nomad volume register -namespace=elasticsearch es-volume-master-2.hcl;
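The external_id values above appear to follow the ceph-csi volume handle layout: a version field, the length of the cluster ID in hex, the cluster ID, the pool ID as 16 hex digits, and the UUID that also names the RBD image (csi-vol-<uuid>). The sketch below rebuilds a handle from those parts, so the specification files and the rbd create commands can be checked against each other. The function names are hypothetical and the layout is inferred from the examples in these notes, not taken from the ceph-csi documentation.

```shell
# Hypothetical helper: compose a volume handle in the layout used by the
# external_id values in these notes (an assumption, not an official API).
csi_external_id() {
  cluster_id=$1   # Ceph cluster ID (fsid)
  pool_id=$2      # numeric pool ID, decimal
  image_uuid=$3   # UUID part of the RBD image name csi-vol-<uuid>
  # 0001 is the version field; the next field is the cluster ID length in hex.
  printf '0001-%04x-%s-%016x-%s\n' "${#cluster_id}" "$cluster_id" "$pool_id" "$image_uuid"
}

# Hypothetical helper: name of the RBD image that backs a given UUID.
rbd_image_name() {
  printf 'csi-vol-%s\n' "$1"
}
```

Calling csi_external_id with the cluster ID ef8394c8-2b17-435d-ae47-9dff271875d1, pool ID 1 and UUID 00000000-1111-2222-bbcc-cacacacacae2 reproduces the external_id of es-master-0, and rbd_image_name with the same UUID gives the image name passed to rbd create.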

Checking the status

nomad volume status -namespace elasticsearch;

Container Storage Interface

ID Name Plugin ID Schedulable Access Mode

es-data-0 es-data-0 ceph-csi true single-node-writer

es-data-1 es-data-1 ceph-csi true single-node-writer

es-master-0 es-master-0 ceph-csi true single-node-writer

es-master-1 es-master-1 ceph-csi true single-node-writer

es-master-2 es-master-2 ceph-csi true single-node-writer

job

# 1.2 ceph 7.8

job "elasticsearch" {

namespace = "elasticsearch"

datacenters = ["dc1"]

type = "service"

update {

max_parallel = 1

health_check = "checks"

min_healthy_time = "30s"

healthy_deadline = "5m"

auto_revert = true

canary = 0

stagger = "30s"

}

##################################### master-0 ############################################

group "master-0" {

count = 1

restart {

attempts = 3

delay = "30s"

interval = "5m"

mode = "fail"

}

network {

port "request" {

}

port "communication" {

}

}

volume "ceph-volume" {

type = "csi"

read_only = false

source = "es-master-0"

access_mode = "single-node-writer"

attachment_mode = "file-system"

}

task "elasticsearch" {

driver = "docker"

kill_timeout = "300s"

kill_signal = "SIGTERM"

volume_mount {

volume = "ceph-volume"

destination = "/srv"

read_only = false

}

env {

ES_TMPDIR = "/opt/elasticsearch/temp"

}

template {

data = <<EOF

cluster:
  name: my-cluster
  publish:
    timeout: 300s
  join:
    timeout: 300s
  initial_master_nodes:
    - {{ env "NOMAD_IP_communication" }}:{{ env "NOMAD_HOST_PORT_communication" }}
node:
  name: es-master-0
  master: true
  data: false
  ingest: false
network:
  host: 0.0.0.0
discovery:
  seed_hosts:
    - {{ env "NOMAD_IP_communication" }}:{{ env "NOMAD_HOST_PORT_communication" }}
path:
  data:
    - /srv/data
  logs: /srv/log
bootstrap.memory_lock: true
indices.query.bool.max_clause_count: 10000

EOF

destination = "local/elasticsearch.yml"

}

template {

data = <<EOF

-Xms512m

-Xmx512m

8-13:-XX:+UseConcMarkSweepGC

8-13:-XX:CMSInitiatingOccupancyFraction=75

8-13:-XX:+UseCMSInitiatingOccupancyOnly

14-:-XX:+UseG1GC

-Djava.io.tmpdir=${ES_TMPDIR}

-XX:+HeapDumpOnOutOfMemoryError

-XX:HeapDumpPath=data

-XX:ErrorFile=logs/hs_err_pid%p.log

8:-XX:+PrintGCDetails

8:-XX:+PrintGCDateStamps

8:-XX:+PrintTenuringDistribution

8:-XX:+PrintGCApplicationStoppedTime

8:-Xloggc:logs/gc.log

8:-XX:+UseGCLogFileRotation

8:-XX:NumberOfGCLogFiles=32

8:-XX:GCLogFileSize=64m

9-:-Xlog:gc*,gc+age=trace,safepoint:file=logs/gc.log:utctime,pid,tags:filecount=32,filesize=64m

EOF

destination = "local/jvm.options"

}

config {

image = "x602/elasticsearch:7.8.1"

force_pull = false

volumes = [

"./local/elasticsearch.yml:/opt/elasticsearch/config/elasticsearch.yml",

"./local/jvm.options:/opt/elasticsearch/config/jvm.options"

]

command = "bin/elasticsearch"

args = [

"-Enetwork.publish_host=${NOMAD_IP_request}",

"-Ehttp.publish_port=${NOMAD_HOST_PORT_request}",

"-Ehttp.port=${NOMAD_PORT_request}",

"-Etransport.publish_port=${NOMAD_HOST_PORT_communication}",

"-Etransport.tcp.port=${NOMAD_PORT_communication}"

]

ports = [

"request",

"communication"

]

ulimit {

memlock = "-1"

nofile = "65536"

nproc = "65536"

}

}

resources {

cpu = 100

memory = 1024

}

service {

name = "es-req"

port = "request"

check {

name = "rest-tcp"

type = "tcp"

interval = "10s"

timeout = "2s"

}

check {

name = "rest-http"

type = "http"

path = "/"

interval = "5s"

timeout = "4s"

}

}

service {

name = "es-master-0-comm"

port = "communication"

check {

type = "tcp"

interval = "10s"

timeout = "2s"

}

}

}

}

##################################### master-1 ############################################

group "master-1" {

count = 1

restart {

attempts = 3

delay = "30s"

interval = "5m"

mode = "fail"

}

network {

port "request" {

}

port "communication" {

}

}

volume "ceph-volume" {

type = "csi"

read_only = false

source = "es-master-1"

access_mode = "single-node-writer"

attachment_mode = "file-system"

}

task "await-es-master-0-comm" {

driver = "docker"

config {

image = "busybox:1.28"

command = "sh"

args = ["-c", "echo -n 'Waiting for service'; until nslookup es-master-0-comm.service.consul 2>&1 >/dev/null; do echo '.'; sleep 2; done"]

network_mode = "host"

}

resources {

cpu = 200

memory = 128

}

lifecycle {

hook = "prestart"

sidecar = false

}

}

task "elasticsearch" {

driver = "docker"

kill_timeout = "300s"

kill_signal = "SIGTERM"

volume_mount {

volume = "ceph-volume"

destination = "/srv"

read_only = false

}

env {

ES_TMPDIR = "/opt/elasticsearch/temp"

}

template {

data = <<EOF

cluster:
  name: my-cluster
  publish:
    timeout: 300s
  join:
    timeout: 300s
  initial_master_nodes:
    - {{ range service "es-master-0-comm" }}{{ .Address }}:{{ .Port }}{{ end }}
node:
  name: es-master-1
  master: true
  data: false
  ingest: false
network:
  host: 0.0.0.0
discovery:
  seed_hosts:
    - {{ env "NOMAD_IP_communication" }}:{{ env "NOMAD_HOST_PORT_communication" }}
    - {{ range service "es-master-0-comm" }}{{ .Address }}:{{ .Port }}{{ end }}
path:
  data:
    - /srv/data
  logs: /srv/log
bootstrap.memory_lock: true
indices.query.bool.max_clause_count: 10000

EOF

destination = "local/elasticsearch.yml"

}

template {

data = <<EOF

-Xms512m

-Xmx512m

8-13:-XX:+UseConcMarkSweepGC

8-13:-XX:CMSInitiatingOccupancyFraction=75

8-13:-XX:+UseCMSInitiatingOccupancyOnly

14-:-XX:+UseG1GC

-Djava.io.tmpdir=${ES_TMPDIR}

-XX:+HeapDumpOnOutOfMemoryError

-XX:HeapDumpPath=data

-XX:ErrorFile=logs/hs_err_pid%p.log

8:-XX:+PrintGCDetails

8:-XX:+PrintGCDateStamps

8:-XX:+PrintTenuringDistribution

8:-XX:+PrintGCApplicationStoppedTime

8:-Xloggc:logs/gc.log

8:-XX:+UseGCLogFileRotation

8:-XX:NumberOfGCLogFiles=32

8:-XX:GCLogFileSize=64m

9-:-Xlog:gc*,gc+age=trace,safepoint:file=logs/gc.log:utctime,pid,tags:filecount=32,filesize=64m

EOF

destination = "local/jvm.options"

}

config {

image = "x602/elasticsearch:7.8.1"

force_pull = false

volumes = [

"./local/elasticsearch.yml:/opt/elasticsearch/config/elasticsearch.yml",

"./local/jvm.options:/opt/elasticsearch/config/jvm.options"

]

command = "bin/elasticsearch"

args = [

"-Enetwork.publish_host=${NOMAD_IP_request}",

"-Ehttp.publish_port=${NOMAD_HOST_PORT_request}",

"-Ehttp.port=${NOMAD_PORT_request}",

"-Etransport.publish_port=${NOMAD_HOST_PORT_communication}",

"-Etransport.tcp.port=${NOMAD_PORT_communication}"

]

ports = [

"request",

"communication"

]

ulimit {

memlock = "-1"

nofile = "65536"

nproc = "65536"

}

}

resources {

cpu = 100

memory = 1024

}

service {

name = "es-req"

port = "request"

check {

name = "rest-tcp"

type = "tcp"

interval = "10s"

timeout = "2s"

}

check {

name = "rest-http"

type = "http"

path = "/"

interval = "5s"

timeout = "4s"

}

}

service {

name = "es-master-1-comm"

port = "communication"

check {

type = "tcp"

interval = "10s"

timeout = "2s"

}

}

}

}

##################################### master-2 ############################################

group "master-2" {

count = 1

restart {

attempts = 3

delay = "30s"

interval = "5m"

mode = "fail"

}

network {

port "request" {

}

port "communication" {

}

}

volume "ceph-volume" {

type = "csi"

read_only = false

source = "es-master-2"

access_mode = "single-node-writer"

attachment_mode = "file-system"

}

task "await-es-master-0-comm" {

driver = "docker"

config {

image = "busybox:1.28"

command = "sh"

args = ["-c", "echo -n 'Waiting for service'; until nslookup es-master-0-comm.service.consul 2>&1 >/dev/null; do echo '.'; sleep 2; done"]

network_mode = "host"

}

resources {

cpu = 200

memory = 128

}

lifecycle {

hook = "prestart"

sidecar = false

}

}

task "await-es-master-1-comm" {

driver = "docker"

config {

image = "busybox:1.28"

command = "sh"

args = ["-c", "echo -n 'Waiting for service'; until nslookup es-master-1-comm.service.consul 2>&1 >/dev/null; do echo '.'; sleep 2; done"]

network_mode = "host"

}

resources {

cpu = 200

memory = 128

}

lifecycle {

hook = "prestart"

sidecar = false

}

}

task "elasticsearch" {

driver = "docker"

kill_timeout = "300s"

kill_signal = "SIGTERM"

volume_mount {

volume = "ceph-volume"

destination = "/srv"

read_only = false

}

env {

ES_TMPDIR = "/opt/elasticsearch/temp"

}

template {

data = <<EOF

cluster:
  name: my-cluster
  publish:
    timeout: 300s
  join:
    timeout: 300s
  initial_master_nodes:
    - {{ range service "es-master-0-comm" }}{{ .Address }}:{{ .Port }}{{ end }}
node:
  name: es-master-2
  master: true
  data: false
  ingest: false
network:
  host: 0.0.0.0
discovery:
  seed_hosts:
    - {{ env "NOMAD_IP_communication" }}:{{ env "NOMAD_HOST_PORT_communication" }}
    - {{ range service "es-master-0-comm" }}{{ .Address }}:{{ .Port }}{{ end }}
    - {{ range service "es-master-1-comm" }}{{ .Address }}:{{ .Port }}{{ end }}
path:
  data:
    - /srv/data
  logs: /srv/log
bootstrap.memory_lock: true
indices.query.bool.max_clause_count: 10000

EOF

destination = "local/elasticsearch.yml"

}

template {

data = <<EOF

-Xms512m

-Xmx512m

8-13:-XX:+UseConcMarkSweepGC

8-13:-XX:CMSInitiatingOccupancyFraction=75

8-13:-XX:+UseCMSInitiatingOccupancyOnly

14-:-XX:+UseG1GC

-Djava.io.tmpdir=${ES_TMPDIR}

-XX:+HeapDumpOnOutOfMemoryError

-XX:HeapDumpPath=data

-XX:ErrorFile=logs/hs_err_pid%p.log

8:-XX:+PrintGCDetails

8:-XX:+PrintGCDateStamps

8:-XX:+PrintTenuringDistribution

8:-XX:+PrintGCApplicationStoppedTime

8:-Xloggc:logs/gc.log

8:-XX:+UseGCLogFileRotation

8:-XX:NumberOfGCLogFiles=32

8:-XX:GCLogFileSize=64m

9-:-Xlog:gc*,gc+age=trace,safepoint:file=logs/gc.log:utctime,pid,tags:filecount=32,filesize=64m

EOF

destination = "local/jvm.options"

}

config {

image = "x602/elasticsearch:7.8.1"

force_pull = false

volumes = [

"./local/elasticsearch.yml:/opt/elasticsearch/config/elasticsearch.yml",

"./local/jvm.options:/opt/elasticsearch/config/jvm.options"

]

command = "bin/elasticsearch"

args = [

"-Enetwork.publish_host=${NOMAD_IP_request}",

"-Ehttp.publish_port=${NOMAD_HOST_PORT_request}",

"-Ehttp.port=${NOMAD_PORT_request}",

"-Etransport.publish_port=${NOMAD_HOST_PORT_communication}",

"-Etransport.tcp.port=${NOMAD_PORT_communication}"

]

ports = [

"request",

"communication"

]

ulimit {

memlock = "-1"

nofile = "65536"

nproc = "65536"

}

}

resources {

cpu = 100

memory = 1024

}

service {

name = "es-req"

port = "request"

check {

name = "rest-tcp"

type = "tcp"

interval = "10s"

timeout = "2s"

}

check {

name = "rest-http"

type = "http"

path = "/"

interval = "5s"

timeout = "4s"

}

}

service {

name = "es-master-2-comm"

port = "communication"

check {

type = "tcp"

interval = "10s"

timeout = "2s"

}

}

}

}

##################################### data-0 ############################################

group "data-0" {

count = 1

restart {

attempts = 3

delay = "30s"

interval = "5m"

mode = "fail"

}

network {

port "request" {

}

port "communication" {

}

}

volume "ceph-volume" {

type = "csi"

read_only = false

source = "es-data-0"

access_mode = "single-node-writer"

attachment_mode = "file-system"

}

task "await-es-master-0-comm" {

driver = "docker"

config {

image = "busybox:1.28"

command = "sh"

args = ["-c", "echo -n 'Waiting for service'; until nslookup es-master-0-comm.service.consul 2>&1 >/dev/null; do echo '.'; sleep 2; done"]

network_mode = "host"

}

resources {

cpu = 200

memory = 128

}

lifecycle {

hook = "prestart"

sidecar = false

}

}

task "await-es-master-1-comm" {

driver = "docker"

config {

image = "busybox:1.28"

command = "sh"

args = ["-c", "echo -n 'Waiting for service'; until nslookup es-master-1-comm.service.consul 2>&1 >/dev/null; do echo '.'; sleep 2; done"]

network_mode = "host"

}

resources {

cpu = 200

memory = 128

}

lifecycle {

hook = "prestart"

sidecar = false

}

}

task "await-es-master-2-comm" {

driver = "docker"

config {

image = "busybox:1.28"

command = "sh"

args = ["-c", "echo -n 'Waiting for service'; until nslookup es-master-2-comm.service.consul 2>&1 >/dev/null; do echo '.'; sleep 2; done"]

network_mode = "host"

}

resources {

cpu = 200

memory = 128

}

lifecycle {

hook = "prestart"

sidecar = false

}

}

task "elasticsearch" {

driver = "docker"

kill_timeout = "300s"

kill_signal = "SIGTERM"

volume_mount {

volume = "ceph-volume"

destination = "/srv"

read_only = false

}

env {

ES_TMPDIR = "/opt/elasticsearch/temp"

}

template {

data = <<EOF

cluster:
  name: my-cluster
  publish:
    timeout: 300s
  join:
    timeout: 300s
node:
  name: es-data-0
  master: false
  data: true
  ingest: true
network:
  host: 0.0.0.0
discovery:
  seed_hosts:
    - {{ range service "es-master-0-comm" }}{{ .Address }}:{{ .Port }}{{ end }}
    - {{ range service "es-master-1-comm" }}{{ .Address }}:{{ .Port }}{{ end }}
    - {{ range service "es-master-2-comm" }}{{ .Address }}:{{ .Port }}{{ end }}
path:
  data:
    - /srv/data
  logs: /srv/log
bootstrap.memory_lock: true
indices.query.bool.max_clause_count: 10000

EOF

destination = "local/elasticsearch.yml"

}

template {

data = <<EOF

-Xms512m

-Xmx512m

8-13:-XX:+UseConcMarkSweepGC

8-13:-XX:CMSInitiatingOccupancyFraction=75

8-13:-XX:+UseCMSInitiatingOccupancyOnly

14-:-XX:+UseG1GC

-Djava.io.tmpdir=${ES_TMPDIR}

-XX:+HeapDumpOnOutOfMemoryError

-XX:HeapDumpPath=data

-XX:ErrorFile=logs/hs_err_pid%p.log

8:-XX:+PrintGCDetails

8:-XX:+PrintGCDateStamps

8:-XX:+PrintTenuringDistribution

8:-XX:+PrintGCApplicationStoppedTime

8:-Xloggc:logs/gc.log

8:-XX:+UseGCLogFileRotation

8:-XX:NumberOfGCLogFiles=32

8:-XX:GCLogFileSize=64m

9-:-Xlog:gc*,gc+age=trace,safepoint:file=logs/gc.log:utctime,pid,tags:filecount=32,filesize=64m

EOF

destination = "local/jvm.options"

}

config {

image = "x602/elasticsearch:7.8.1"

force_pull = false

volumes = [

"./local/elasticsearch.yml:/opt/elasticsearch/config/elasticsearch.yml",

"./local/jvm.options:/opt/elasticsearch/config/jvm.options"

]

command = "bin/elasticsearch"

args = [

"-Enetwork.publish_host=${NOMAD_IP_request}",

"-Ehttp.publish_port=${NOMAD_HOST_PORT_request}",

"-Ehttp.port=${NOMAD_PORT_request}",

"-Etransport.publish_port=${NOMAD_HOST_PORT_communication}",

"-Etransport.tcp.port=${NOMAD_PORT_communication}"

]

ports = [

"request",

"communication"

]

ulimit {

memlock = "-1"

nofile = "65536"

nproc = "65536"

}

}

resources {

cpu = 100

memory = 1024

}

service {

name = "es-req"

port = "request"

check {

name = "rest-tcp"

type = "tcp"

interval = "10s"

timeout = "2s"

}

check {

name = "rest-http"

type = "http"

path = "/"

interval = "5s"

timeout = "4s"

}

}

service {

name = "es-data-comm"

port = "communication"

check {

type = "tcp"

interval = "10s"

timeout = "2s"

}

}

}

}

##################################### data-1 ############################################

group "data-1" {

count = 1

restart {

attempts = 3

delay = "30s"

interval = "5m"

mode = "fail"

}

network {

port "request" {

}

port "communication" {

}

}

volume "ceph-volume" {

type = "csi"

read_only = false

source = "es-data-1"

access_mode = "single-node-writer"

attachment_mode = "file-system"

}

task "await-es-master-0-comm" {

driver = "docker"

config {

image = "busybox:1.28"

command = "sh"

args = ["-c", "echo -n 'Waiting for service'; until nslookup es-master-0-comm.service.consul 2>&1 >/dev/null; do echo '.'; sleep 2; done"]

network_mode = "host"

}

resources {

cpu = 200

memory = 128

}

lifecycle {

hook = "prestart"

sidecar = false

}

}

task "await-es-master-1-comm" {

driver = "docker"

config {

image = "busybox:1.28"

command = "sh"

args = ["-c", "echo -n 'Waiting for service'; until nslookup es-master-1-comm.service.consul 2>&1 >/dev/null; do echo '.'; sleep 2; done"]

network_mode = "host"

}

resources {

cpu = 200

memory = 128

}

lifecycle {

hook = "prestart"

sidecar = false

}

}

task "await-es-master-2-comm" {

driver = "docker"

config {

image = "busybox:1.28"

command = "sh"

args = ["-c", "echo -n 'Waiting for service'; until nslookup es-master-2-comm.service.consul 2>&1 >/dev/null; do echo '.'; sleep 2; done"]

network_mode = "host"

}

resources {

cpu = 200

memory = 128

}

lifecycle {

hook = "prestart"

sidecar = false

}

}

task "elasticsearch" {

driver = "docker"

kill_timeout = "300s"

kill_signal = "SIGTERM"

volume_mount {

volume = "ceph-volume"

destination = "/srv"

read_only = false

}

env {

ES_TMPDIR = "/opt/elasticsearch/temp"

}

template {

data = <<EOF

cluster:
  name: my-cluster
  publish:
    timeout: 300s
  join:
    timeout: 300s
node:
  name: es-data-1
  master: false
  data: true
  ingest: true
network:
  host: 0.0.0.0
discovery:
  seed_hosts:
    - {{ range service "es-master-0-comm" }}{{ .Address }}:{{ .Port }}{{ end }}
    - {{ range service "es-master-1-comm" }}{{ .Address }}:{{ .Port }}{{ end }}
    - {{ range service "es-master-2-comm" }}{{ .Address }}:{{ .Port }}{{ end }}
path:
  data:
    - /srv/data
  logs: /srv/log
bootstrap.memory_lock: true
indices.query.bool.max_clause_count: 10000

EOF

destination = "local/elasticsearch.yml"

}

template {

data = <<EOF

-Xms512m

-Xmx512m

8-13:-XX:+UseConcMarkSweepGC

8-13:-XX:CMSInitiatingOccupancyFraction=75

8-13:-XX:+UseCMSInitiatingOccupancyOnly

14-:-XX:+UseG1GC

-Djava.io.tmpdir=${ES_TMPDIR}

-XX:+HeapDumpOnOutOfMemoryError

-XX:HeapDumpPath=data

-XX:ErrorFile=logs/hs_err_pid%p.log

8:-XX:+PrintGCDetails

8:-XX:+PrintGCDateStamps

8:-XX:+PrintTenuringDistribution

8:-XX:+PrintGCApplicationStoppedTime

8:-Xloggc:logs/gc.log

8:-XX:+UseGCLogFileRotation

8:-XX:NumberOfGCLogFiles=32

8:-XX:GCLogFileSize=64m

9-:-Xlog:gc*,gc+age=trace,safepoint:file=logs/gc.log:utctime,pid,tags:filecount=32,filesize=64m

EOF

destination = "local/jvm.options"

}

config {

image = "x602/elasticsearch:7.8.1"

force_pull = false

volumes = [

"./local/elasticsearch.yml:/opt/elasticsearch/config/elasticsearch.yml",

"./local/jvm.options:/opt/elasticsearch/config/jvm.options"

]

command = "bin/elasticsearch"

args = [

"-Enetwork.publish_host=${NOMAD_IP_request}",

"-Ehttp.publish_port=${NOMAD_HOST_PORT_request}",

"-Ehttp.port=${NOMAD_PORT_request}",

"-Etransport.publish_port=${NOMAD_HOST_PORT_communication}",

"-Etransport.tcp.port=${NOMAD_PORT_communication}"

]

ports = [

"request",

"communication"

]

ulimit {

memlock = "-1"

nofile = "65536"

nproc = "65536"

}

}

resources {

cpu = 100

memory = 1024

}

service {

name = "es-req"

port = "request"

check {

name = "rest-tcp"

type = "tcp"

interval = "10s"

timeout = "2s"

}

check {

name = "rest-http"

type = "http"

path = "/"

interval = "5s"

timeout = "4s"

}

}

service {

name = "es-data-comm"

port = "communication"

check {

type = "tcp"

interval = "10s"

timeout = "2s"

}

}

}

}

}
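Every task in the job pairs memory = 1024 with -Xms512m and -Xmx512m, which matches the common Elasticsearch guidance of giving the heap no more than about half of the memory available to the node. A small sketch of that arithmetic, in case the memory value is changed later. The helper names and the plain 50% split are my own assumptions, not something the job file enforces.

```shell
# Hypothetical helper: heap size in MB for a task memory limit in MB,
# assuming a plain 50% split (integer division).
heap_mb() {
  memory_mb=$1
  echo $(( memory_mb / 2 ))
}

# Hypothetical helper: the two jvm.options heap lines for that limit.
jvm_heap_flags() {
  h=$(heap_mb "$1")
  printf '%s\n' "-Xms${h}m" "-Xmx${h}m"
}
```

With the value used in the job, jvm_heap_flags 1024 prints -Xms512m and -Xmx512m, the same two lines as in the jvm.options templates.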

Get the IP address and port of every node in the service.

cat <<EOF > service.tpl

{{ range service "es-req" }}

- {{ .Address }}:{{ .Port }}{{ end }}

EOF

consul-template -template service.tpl -dry;

>

- 10.103.3.41:20102

- 10.103.3.41:28131

- 10.103.3.41:29898

- 10.103.3.41:28413

- 10.103.3.41:28185

Verification

curl http://10.103.3.41:20102/_cluster/health?pretty

{

"cluster_name" : "my-cluster",

"status" : "green",

"timed_out" : false,

"number_of_nodes" : 7,

"number_of_data_nodes" : 4,

"active_primary_shards" : 5,

"active_shards" : 5,

"relocating_shards" : 0,

"initializing_shards" : 0,

"unassigned_shards" : 0,

"delayed_unassigned_shards" : 0,

"number_of_pending_tasks" : 0,

"number_of_in_flight_fetch" : 0,

"task_max_waiting_in_queue_millis" : 0,

"active_shards_percent_as_number" : 100.0

}
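When scripting the check, it can help to pull only the status field out of the health response instead of reading the whole JSON by eye. The sketch below does that with sed, so it needs no extra tools. The function names are hypothetical, and it assumes the response has the shape shown above.

```shell
# Hypothetical helper: read a _cluster/health response on stdin and print
# the value of its "status" field (green, yellow or red).
health_status() {
  sed -n 's/.*"status"[[:space:]]*:[[:space:]]*"\([a-z]*\)".*/\1/p'
}

# Hypothetical helper: succeed only when the response on stdin is green.
is_green() {
  [ "$(health_status)" = "green" ]
}

# Example (not run here): poll one node until the cluster reports green.
# until curl -s "http://10.103.3.41:20102/_cluster/health" | is_green; do sleep 5; done
```

The sed pattern accepts both the pretty-printed form shown above and a single-line response.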

Running a benchmark

https://esrally.readthedocs.io/en/stable/docker.html

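For how to use tracks, see https://esrally.readthedocs.io/en/stable/race.html

We use the http_logs track here because it is close to our production workload. For a detailed explanation I referred to https://blog.csdn.net/wuxiao5570/article/details/56291834

# For target-hosts, use the ES node addresses obtained below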

cat <<EOF > service.tpl

{{ range service "es-req" }}{{ .Address }}:{{ .Port }},{{ end }}

EOF

consul-template -template service.tpl -dry;

# Run once with --test-mode first as a quick check

docker run --name rally_test elastic/rally race --track=nyc_taxis --test-mode --pipeline=benchmark-only --challenge=append-no-conflicts --target-hosts=10.103.3.41:29126,10.103.3.41:20765,10.103.3.41:29429,10.103.3.41:22198,10.103.3.41:26775

# The directory mounted below was copied out of the container above, to avoid downloading the data again

docker cp rally_test:/rally/.rally myrally

# Run the real benchmark

docker run --rm -v $PWD/myrally:/rally/.rally elastic/rally race --track=http_logs --pipeline=benchmark-only --target-hosts=10.103.3.41:29126,10.103.3.41:20765,10.103.3.41:29429,10.103.3.41:22198,10.103.3.41:26775

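Follow the log with tail -f myrally/logs/rally.log. This is important: if the benchmark seems unusually slow, check the log first; the usual causes are a slow disk or an ES node that cannot be reached.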
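The comma-joined template above leaves a trailing comma, and the earlier dry-run output starts with a > marker line, so the result cannot be pasted into --target-hosts as is. A minimal sketch of a filter that cleans it up; the function name is hypothetical, and it assumes the dry-run output looks like the one shown earlier.

```shell
# Hypothetical helper: turn consul-template dry-run output on stdin into a
# value usable for --target-hosts.
target_hosts() {
  # Drop the "> ..." marker line, remove spaces and newlines,
  # then strip the trailing comma left by the template.
  grep -v '^>' | tr -d ' \n' | sed 's/,$//'
}

# Example (not run here):
# HOSTS=$(consul-template -template service.tpl -dry | target_hosts)
# docker run --rm -v $PWD/myrally:/rally/.rally elastic/rally race --track=http_logs --pipeline=benchmark-only --target-hosts="$HOSTS"
```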

Additional notes

# Clear the uncommitted-changes warning: [WARNING] Local changes in [/rally/.rally/benchmarks/tracks/default] prevent tracks update from remote. Please commit your changes.

cd myrally/benchmarks/tracks/default

git status

git checkout .

# https://www.jb51.net/article/177582.htm

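# The benchmark can be left running in the background with screen

screen -S yourname -> create a new session named yourname

screen -ls -> list all current sessions

screen -r yourname -> reattach to the session yourname

screen -d yourname -> detach the session yourname from wherever it is attached

screen -d -r yourname -> detach the session yourname elsewhere if needed and reattach to it here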

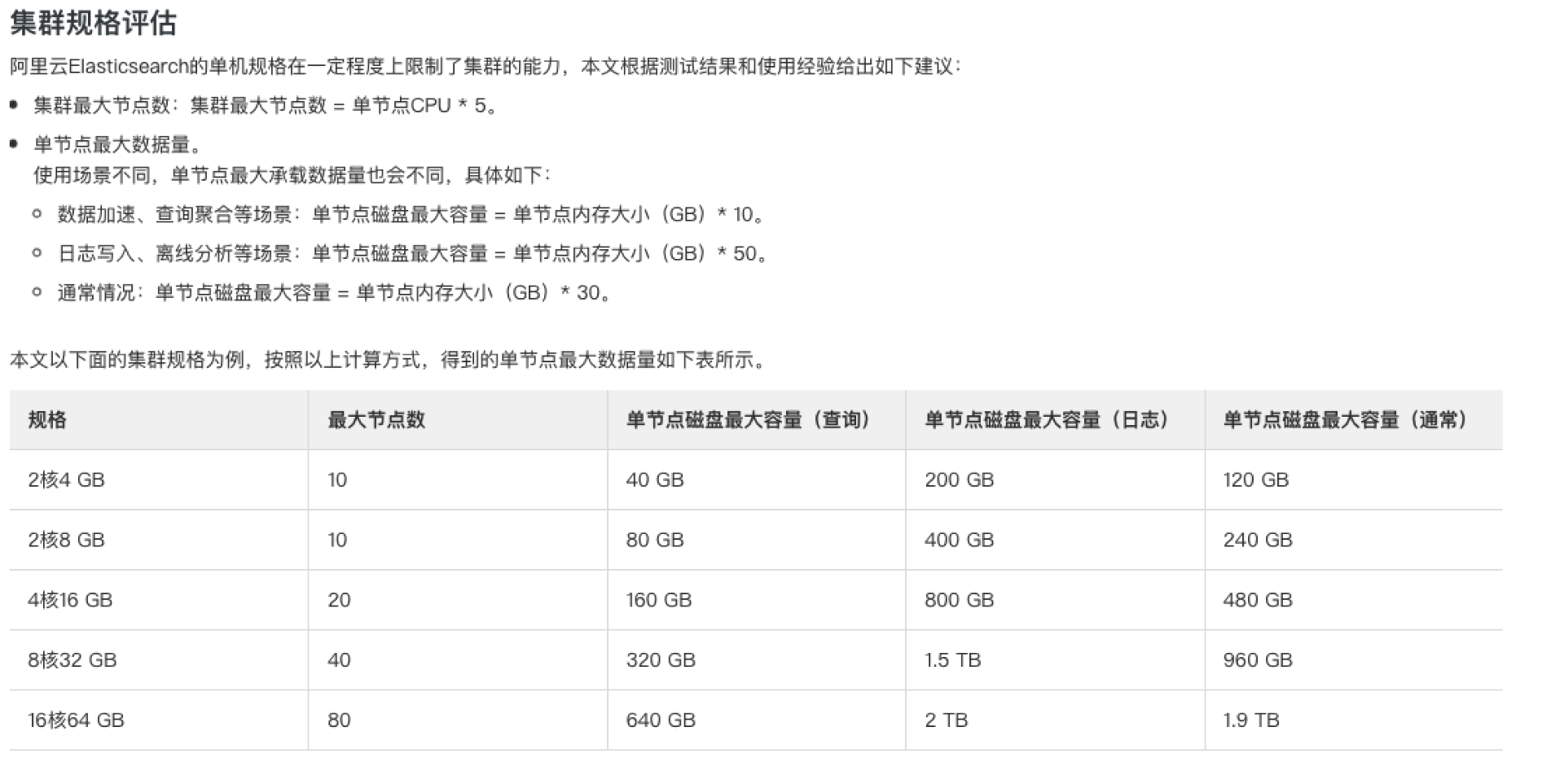

Benchmark cluster configuration for reference

Wiping the data before a test

# Deregister the volumes from Nomad

## Method 1

# yum install expect -y

expect <<-EOF

spawn nomad volume deregister -force -namespace=elasticsearch es-data-0

expect "Are*"

send "y\r"

expect eof;

EOF

expect <<-EOF

spawn nomad volume deregister -force -namespace=elasticsearch es-data-1

expect "Are*"

send "y\r"

expect eof;

EOF

expect <<-EOF

spawn nomad volume deregister -force -namespace=elasticsearch es-master-0

expect "Are*"

send "y\r"

expect eof;

EOF

expect <<-EOF

spawn nomad volume deregister -force -namespace=elasticsearch es-master-1

expect "Are*"

send "y\r"

expect eof;

EOF

expect <<-EOF

spawn nomad volume deregister -force -namespace=elasticsearch es-master-2

expect "Are*"

send "y\r"

expect eof;

EOF

nomad volume status -namespace=elasticsearch
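The five expect blocks above differ only in the volume ID, so they can be folded into a loop. A sketch under the same assumptions as the original commands: expect is installed and the confirmation prompt starts with "Are". The function names are hypothetical.

```shell
# Hypothetical helper: the volume IDs registered in these notes.
es_volume_ids() {
  for i in 0 1 2; do echo "es-master-$i"; done
  for i in 0 1; do echo "es-data-$i"; done
}

# Hypothetical helper: force-deregister every volume, answering the
# confirmation prompt with "y" exactly as the expect blocks above do.
deregister_all() {
  for id in $(es_volume_ids); do
    expect <<EOF
spawn nomad volume deregister -force -namespace=elasticsearch $id
expect "Are*"
send "y\r"
expect eof
EOF
  done
}
```

Running deregister_all and then nomad volume status -namespace=elasticsearch should show that no volumes are left.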

# Delete the RBD images in Ceph

# rbd --pool myPool list # list all RBD images in myPool

# sudo rbd --pool myPool resize csi-vol-00000000-1111-2222-bbcc-cacacacacaf1 --size 20480 # resize an image

# Find the client address that is still attached to an image

rbd status --pool myPool csi-vol-00000000-1111-2222-bbcc-cacacacacac3

## Add that client to the blacklist (on recent Ceph releases the subcommand is blocklist)

ceph osd blacklist add 10.103.3.40:0/1018593199

## Delete the image

sudo rbd --pool myPool rm csi-vol-00000000-1111-2222-bbcc-cacacacacac3

## ceph osd blacklist ls # list the blacklist

ceph osd blacklist clear # clear the blacklist

# Delete the RBD images; if a deletion fails, run the blacklist steps above first

sudo rbd --pool myPool rm csi-vol-00000000-1111-2222-bbcc-cacacacacae2

sudo rbd --pool myPool rm csi-vol-00000000-1111-2222-bbcc-cacacacacac3

sudo rbd --pool myPool rm csi-vol-00000000-1111-2222-bbcc-cacacacacac4

sudo rbd --pool myPool rm csi-vol-00000000-1111-2222-bbcc-cacacacacaf0

sudo rbd --pool myPool rm csi-vol-00000000-1111-2222-bbcc-cacacacacaf1

# Re-create the images

sudo rbd --pool myPool create csi-vol-00000000-1111-2222-bbcc-cacacacacae2 --size 204800 --image-feature layering;

sudo rbd --pool myPool create csi-vol-00000000-1111-2222-bbcc-cacacacacac3 --size 204800 --image-feature layering;

sudo rbd --pool myPool create csi-vol-00000000-1111-2222-bbcc-cacacacacac4 --size 204800 --image-feature layering;

sudo rbd --pool myPool create csi-vol-00000000-1111-2222-bbcc-cacacacacaf0 --size 204800 --image-feature layering;

sudo rbd --pool myPool create csi-vol-00000000-1111-2222-bbcc-cacacacacaf1 --size 204800 --image-feature layering;

# Register the volumes again

nomad volume register -namespace=elasticsearch es-volume-data-0.hcl;

nomad volume register -namespace=elasticsearch es-volume-data-1.hcl;

nomad volume register -namespace=elasticsearch es-volume-master-0.hcl;

nomad volume register -namespace=elasticsearch es-volume-master-1.hcl;

nomad volume register -namespace=elasticsearch es-volume-master-2.hcl;

# Check the status

nomad volume status -namespace elasticsearch;
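As far as I know, rbd create reads a bare --size value as megabytes, so the numbers in these notes are easier to judge after converting them. A tiny sketch of that conversion; the helper name is hypothetical and the result is rounded down.

```shell
# Hypothetical helper: convert an rbd --size value (assumed to be in MiB)
# to whole GiB, rounding down.
mib_to_gib() {
  echo $(( $1 / 1024 ))
}
```

For the sizes used here, mib_to_gib 204800 gives 200 and mib_to_gib 500000 gives 488, so the re-created images are less than half the size of the original ones.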


Job file fields explained

### bsy ###

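job "elasticsearch-ssd" { # Job name; shown by nomad job status

namespace = "elasticsearch-ssd" # Namespace; it must already exist, e.g. nomad namespace apply -description "Elasticsearch Cluster" elasticsearch-ssd;

datacenters = ["dc1"] # Datacenters; see the DC column of nomad node status

type = "service" # Job type (scheduler): service is for long-running services and is the default; other types include batch and system. Registration in Consul comes from the service blocks further down.

update { # Update strategy

max_parallel = 1 # Number of allocations updated at the same time

health_check = "checks"

min_healthy_time = "30s" # Minimum time an allocation must stay healthy before it is marked healthy

healthy_deadline = "5m" # Deadline by which an allocation must become healthy; after that it is marked unhealthy

auto_revert = true # Whether the job automatically reverts to the last stable version when a deployment fails

canary = 0 # Number of canary allocations created when the job changes; the old version keeps running until the canaries are healthy and promoted. 0 disables canaries.

stagger = "30s"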

}

##################################### master-0 ############################################

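group "master-0" { # Defines the group master-0

count = 1 # Number of instances to run

restart { # Restart policy

attempts = 3 # Maximum number of restarts within interval

delay = "30s" # Time to wait before restarting the task

interval = "5m" # Window in which attempts are counted; once they are used up within this window, mode decides what happens

mode = "fail" # fail: stop restarting and mark the allocation as failed

}

network { # Network definition

port "request" { # Port label, referenced further down

}

port "communication" {

}

}

volume "ceph-volume" { # Volume name, referenced by task.volume_mount.volume

type = "host" # host is a host volume on the client node; the other option is csi

read_only = false # Volume access mode

source = "es-ssd-master-0"

}

task "elasticsearch" { # A task is a single unit of work

driver = "docker" # Task driver; others include java, exec and so on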

kill_timeout = "300s"

kill_signal = "SIGTERM"

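#volume_mount { # Mounts the volume declared above

# volume = "ceph-volume"

# destination = "/srv" # Mount path inside the container

# read_only = false

#}

env { # Environment variables, like docker's -e option

ES_TMPDIR = "/opt/elasticsearch/temp"

}

template { # Renders a configuration file; Nomad variables can be interpolated into it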

data = <<EOF

cluster:
  name: my-cluster
  publish:
    timeout: 300s
  join:
    timeout: 300s
  initial_master_nodes:
    - {{ env "NOMAD_IP_communication" }}:{{ env "NOMAD_HOST_PORT_communication" }} # communication is the port label from network.port above
node:
  name: es-master-0
  master: true
  data: false
  ingest: false
network:
  host: 0.0.0.0
discovery:
  seed_hosts:
    - {{ env "NOMAD_IP_communication" }}:{{ env "NOMAD_HOST_PORT_communication" }}
path:
  data:
    - /srv/data
  logs: /srv/log
bootstrap.memory_lock: true
indices.query.bool.max_clause_count: 10000

EOF

# End of the configuration file content

destination = "local/elasticsearch.yml" # Where the rendered file is written, relative to the task directory on the Nomad client

}

template {

data = <<EOF

-Xms512m

-Xmx512m

8-13:-XX:+UseConcMarkSweepGC

8-13:-XX:CMSInitiatingOccupancyFraction=75

8-13:-XX:+UseCMSInitiatingOccupancyOnly

14-:-XX:+UseG1GC

-Djava.io.tmpdir=${ES_TMPDIR}

-XX:+HeapDumpOnOutOfMemoryError

-XX:HeapDumpPath=data

-XX:ErrorFile=logs/hs_err_pid%p.log

8:-XX:+PrintGCDetails

8:-XX:+PrintGCDateStamps

8:-XX:+PrintTenuringDistribution

8:-XX:+PrintGCApplicationStoppedTime

8:-Xloggc:logs/gc.log

8:-XX:+UseGCLogFileRotation

8:-XX:NumberOfGCLogFiles=32

8:-XX:GCLogFileSize=64m

9-:-Xlog:gc*,gc+age=trace,safepoint:file=logs/gc.log:utctime,pid,tags:filecount=32,filesize=64m

EOF

destination = "local/jvm.options"

}

config { # container settings: image, start command, etc.

image = "x602/elasticsearch:7.8.1" # image reference

force_pull = false

volumes = [ # mount the config files rendered above, as <rendered path>:<path inside the container>

"./local/elasticsearch.yml:/opt/elasticsearch/config/elasticsearch.yml",

"./local/jvm.options:/opt/elasticsearch/config/jvm.options"

]

command = "bin/elasticsearch" # command used to start the container

args = [ # arguments passed to the command

"-Enetwork.publish_host=${NOMAD_IP_request}",

"-Ehttp.publish_port=${NOMAD_HOST_PORT_request}",

"-Ehttp.port=${NOMAD_PORT_request}",

"-Etransport.publish_port=${NOMAD_HOST_PORT_communication}",

"-Etransport.tcp.port=${NOMAD_PORT_communication}"

]

ports = [ # port labels defined in the network block above

"request",

"communication"

]

ulimit { # ulimits for the container

memlock = "-1"

nofile = "65536"

nproc = "65536"

}

}

resources { # resource limits

cpu = 100 # MHz

memory = 1024 # MB

}

service { # service registration

name = "es-req" # service name

port = "request" # port label defined above

check { # health check

name = "rest-tcp"

type = "tcp"

interval = "10s"

timeout = "2s"

}

check {

name = "rest-http"

type = "http"

path = "/"

interval = "5s"

timeout = "4s"

}

}

service {

name = "es-master-0-comm"

port = "communication"

check {

type = "tcp"

interval = "10s"

timeout = "2s"

}

}

}

}

##################################### master-1 ############################################

... ...

}
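
To make the template lines in the job above concrete, the following sketch mimics in plain shell what Nomad substitutes for `{{ env "NOMAD_IP_communication" }}:{{ env "NOMAD_HOST_PORT_communication" }}`. The IP and port values are made-up assumptions; on a real client Nomad sets them per allocation, based on the `communication` port label.

```shell
#!/bin/sh
# Hypothetical values: Nomad sets these per allocation for the "communication" port label.
NOMAD_IP_communication="10.0.0.5"
NOMAD_HOST_PORT_communication="25001"

# Same substitution that the two {{ env ... }} calls in the template perform.
seed="${NOMAD_IP_communication}:${NOMAD_HOST_PORT_communication}"

# Build the fragment as it would appear in the rendered local/elasticsearch.yml.
rendered=$(printf 'discovery:\n  seed_hosts:\n    - %s\n' "$seed")
printf '%s\n' "$rendered"
```

Running it prints the `discovery.seed_hosts` entry with the address filled in, which is a quick way to check what a node will advertise before submitting the job.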

Dynamically creating CSI volumes

# New: 1.2 ceph-csi es

########## register volume: es-master-0

## es volume.

cat <<EOF > es-volume-master-0.hcl

type = "csi"

id = "es-master-0"

name = "es-master-0"

capacity_min = "10GB"

capacity_max = "100GB"

capability {

access_mode = "single-node-writer"

attachment_mode = "file-system"

}

capability {

access_mode = "single-node-writer"

attachment_mode = "block-device"

}

plugin_id = "ceph-csi"

secrets {

userID = "admin"

userKey = "AQBWYPVhs0XZIhAACN0PAHEJTitvq514oVC12A=="

}

parameters {

clusterID = "ef8394c8-2b17-435d-ae47-9dff271875d1"

pool = "nomad"

imageFeatures = "layering"

}

EOF

########## register volume: es-master-1

## es volume.

cat <<EOF > es-volume-master-1.hcl

type = "csi"

id = "es-master-1"

name = "es-master-1"

capacity_min = "10GB"

capacity_max = "100GB"

capability {

access_mode = "single-node-writer"

attachment_mode = "file-system"

}

capability {

access_mode = "single-node-writer"

attachment_mode = "block-device"

}

plugin_id = "ceph-csi"

secrets {

userID = "admin"

userKey = "AQBWYPVhs0XZIhAACN0PAHEJTitvq514oVC12A=="

}

parameters {

clusterID = "ef8394c8-2b17-435d-ae47-9dff271875d1"

pool = "nomad"

imageFeatures = "layering"

}

EOF

########## register volume: es-master-2

## es volume.

cat <<EOF > es-volume-master-2.hcl

type = "csi"

id = "es-master-2"

name = "es-master-2"

capacity_min = "10GB"

capacity_max = "100GB"

capability {

access_mode = "single-node-writer"

attachment_mode = "file-system"

}

capability {

access_mode = "single-node-writer"

attachment_mode = "block-device"

}

plugin_id = "ceph-csi"

secrets {

userID = "admin"

userKey = "AQBWYPVhs0XZIhAACN0PAHEJTitvq514oVC12A=="

}

parameters {

clusterID = "ef8394c8-2b17-435d-ae47-9dff271875d1"

pool = "nomad"

imageFeatures = "layering"

}

EOF

########## register volume: es-data-0

## es volume.

cat <<EOF > es-volume-data-0.hcl

type = "csi"

id = "es-data-0"

name = "es-data-0"

capacity_min = "100GB"

capacity_max = "100GB"

capability {

access_mode = "single-node-writer"

attachment_mode = "file-system"

}

capability {

access_mode = "single-node-writer"

attachment_mode = "block-device"

}

plugin_id = "ceph-csi"

secrets {

userID = "admin"

userKey = "AQBWYPVhs0XZIhAACN0PAHEJTitvq514oVC12A=="

}

parameters {

clusterID = "ef8394c8-2b17-435d-ae47-9dff271875d1"

pool = "nomad"

imageFeatures = "layering"

}

EOF

########## register volume: es-data-1

## es volume.

cat <<EOF > es-volume-data-1.hcl

type = "csi"

id = "es-data-1"

name = "es-data-1"

capacity_min = "100GB"

capacity_max = "100GB"

capability {

access_mode = "single-node-writer"

attachment_mode = "file-system"

}

capability {

access_mode = "single-node-writer"

attachment_mode = "block-device"

}

plugin_id = "ceph-csi"

secrets {

userID = "admin"

userKey = "AQBWYPVhs0XZIhAACN0PAHEJTitvq514oVC12A=="

}

parameters {

clusterID = "ef8394c8-2b17-435d-ae47-9dff271875d1"

pool = "nomad"

imageFeatures = "layering"

}

EOF

nomad volume create -namespace=elasticsearch es-volume-data-0.hcl;

nomad volume create -namespace=elasticsearch es-volume-data-1.hcl;

nomad volume create -namespace=elasticsearch es-volume-master-0.hcl;

nomad volume create -namespace=elasticsearch es-volume-master-1.hcl;

nomad volume create -namespace=elasticsearch es-volume-master-2.hcl;

# Cleanup: delete the volumes once they are no longer needed

nomad volume delete -namespace=elasticsearch es-data-0

nomad volume delete -namespace=elasticsearch es-data-1

nomad volume delete -namespace=elasticsearch es-master-0

nomad volume delete -namespace=elasticsearch es-master-1

nomad volume delete -namespace=elasticsearch es-master-2
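
The five volume specs above differ only in `id`, `name`, and `capacity_min`, so they could also be generated in a loop. The sketch below is one way to do that, not the author's method. The `write_volume_spec` helper and the `CEPH_USER_KEY` environment variable are assumptions introduced here so that the key does not have to be pasted into the script.

```shell
#!/bin/sh
# Hypothetical helper: write es-volume-<suffix>.hcl for one volume.
# $1 = volume id (e.g. es-master-0), $2 = capacity_min
write_volume_spec() {
  cat <<EOF > "es-volume-${1#es-}.hcl"
type = "csi"
id = "$1"
name = "$1"
capacity_min = "$2"
capacity_max = "100GB"

capability {
  access_mode = "single-node-writer"
  attachment_mode = "file-system"
}

capability {
  access_mode = "single-node-writer"
  attachment_mode = "block-device"
}

plugin_id = "ceph-csi"

secrets {
  userID = "admin"
  userKey = "${CEPH_USER_KEY:-REPLACE_ME}"
}

parameters {
  clusterID = "ef8394c8-2b17-435d-ae47-9dff271875d1"
  pool = "nomad"
  imageFeatures = "layering"
}
EOF
}

# Master volumes start at 10GB, data volumes at 100GB, as in the specs above.
for id in es-master-0 es-master-1 es-master-2; do
  write_volume_spec "$id" "10GB"
done
for id in es-data-0 es-data-1; do
  write_volume_spec "$id" "100GB"
done
```

The generated files can then be passed to the same `nomad volume create -namespace=elasticsearch <file>` commands shown above.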

Author: 鸣昊

Link: https://www.cnblogs.com/x602/p/15872274.html

Copyright notice: This work is licensed under the Creative Commons Attribution-NonCommercial-NoDerivs 2.5 China Mainland License.
