Simple TensorFlow Examples for Deep Learning

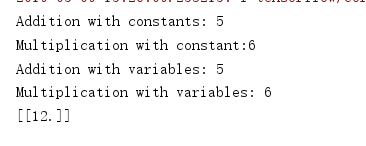

1. Basic TensorFlow operations

    import tensorflow as tf
    import os

    # These examples use the TensorFlow 1.x graph API (tf.Session, tf.placeholder).
    os.environ["CUDA_VISIBLE_DEVICES"] = "0"  # expose only GPU 0 to TensorFlow

    # Constants: the values are fixed when the graph is built.
    a = tf.constant(2)
    b = tf.constant(3)

    with tf.Session() as sess:
        print("a:%i" % sess.run(a), "b:%i" % sess.run(b))
        print("Addition with constants: %i" % sess.run(a + b))
        print("Multiplication with constant:%i" % sess.run(a * b))

    # Placeholders: the values are supplied at run time through feed_dict.
    a = tf.placeholder(tf.int16)
    b = tf.placeholder(tf.int16)

    add = tf.add(a, b)
    mul = tf.multiply(a, b)

    with tf.Session() as sess:
        print("Addition with variables: %i" % sess.run(add, feed_dict={a: 2, b: 3}))
        print("Multiplication with variables: %i" % sess.run(mul, feed_dict={a: 2, b: 3}))

    # Matrix product of a 1x2 matrix and a 2x1 matrix.
    with tf.Session() as sess:
        matrix1 = tf.constant([[3., 3.]])
        matrix2 = tf.constant([[2.], [2.]])
        product = tf.matmul(matrix1, matrix2)
        result = sess.run(product)
        print(result)

Result screenshot

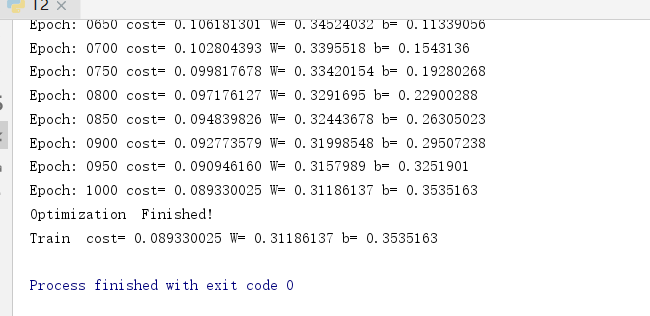
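As a quick cross-check of the matrix product at the end of the example, the same arithmetic can be reproduced with NumPy. This is only a sketch: `np.matmul` stands in for `tf.matmul`, and no TensorFlow session is involved.

```python
import numpy as np

# Same operands as matrix1 and matrix2 in the TensorFlow example.
matrix1 = np.array([[3.0, 3.0]])    # shape (1, 2)
matrix2 = np.array([[2.0], [2.0]])  # shape (2, 1)

# (1, 2) x (2, 1) -> (1, 1): 3*2 + 3*2 = 12
product = np.matmul(matrix1, matrix2)
print(product.shape, product)
```

The inner dimensions (2 and 2) must match; the result takes the outer dimensions, which is why a single-element matrix is printed.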

2. Linear regression

    import tensorflow as tf
    import numpy as np
    import matplotlib.pyplot as plt
    import os

    os.environ["CUDA_VISIBLE_DEVICES"] = "0"

    # Training parameters
    learning_rate = 0.01
    training_epochs = 1000
    display_step = 50

    # Training data
    train_X = np.asarray([3.3, 4.4, 5.5, 6.71, 6.93, 4.168, 9.779, 6.182, 7.59, 2.167,
                          7.042, 10.791, 5.313, 7.997, 5.654, 9.27, 3.1])
    train_Y = np.asarray([1.7, 2.76, 2.09, 3.19, 1.694, 1.573, 3.366, 2.596, 2.53, 1.221,
                          2.827, 3.465, 1.65, 2.904, 2.42, 2.94, 1.3])
    n_samples = train_X.shape[0]

    # Graph inputs: the placeholders X and Y are fed with data at run time
    X = tf.placeholder("float")
    Y = tf.placeholder("float")

    # Model weights, randomly initialized
    W = tf.Variable(np.random.randn(), name="weight")
    b = tf.Variable(np.random.randn(), name="bias")

    # Linear model: pred = X * W + b
    pred = tf.add(tf.multiply(X, W), b)

    # Cost: half the mean squared error
    cost = tf.reduce_sum(tf.pow(pred - Y, 2)) / (2 * n_samples)

    # Minimize the cost with gradient descent
    optimizer = tf.train.GradientDescentOptimizer(learning_rate).minimize(cost)

    # Op that initializes all variables
    init = tf.global_variables_initializer()

    # A Session encapsulates the state and control of the TensorFlow runtime
    with tf.Session() as sess:
        sess.run(init)

        # Run the graph to train the model, one sample per update step
        for epoch in range(training_epochs):
            for (x, y) in zip(train_X, train_Y):
                sess.run(optimizer, feed_dict={X: x, Y: y})

            # Display logs every display_step epochs
            if (epoch + 1) % display_step == 0:
                c = sess.run(cost, feed_dict={X: train_X, Y: train_Y})
                print("Epoch:", '%04d' % (epoch + 1), "cost=", "{:.9f}".format(c),
                      "W=", sess.run(W), "b=", sess.run(b))

        print("Optimization Finished!")

        # Print the final cost of the trained model
        training_cost = sess.run(cost, feed_dict={X: train_X, Y: train_Y})
        print("Train cost=", training_cost, "W=", sess.run(W), "b=", sess.run(b))

        # Plot the data and the fitted line
        plt.plot(train_X, train_Y, 'ro', label='Original data')
        plt.plot(train_X, sess.run(W) * train_X + sess.run(b), label="Fitting line")
        plt.legend()
        plt.show()

Result screenshot

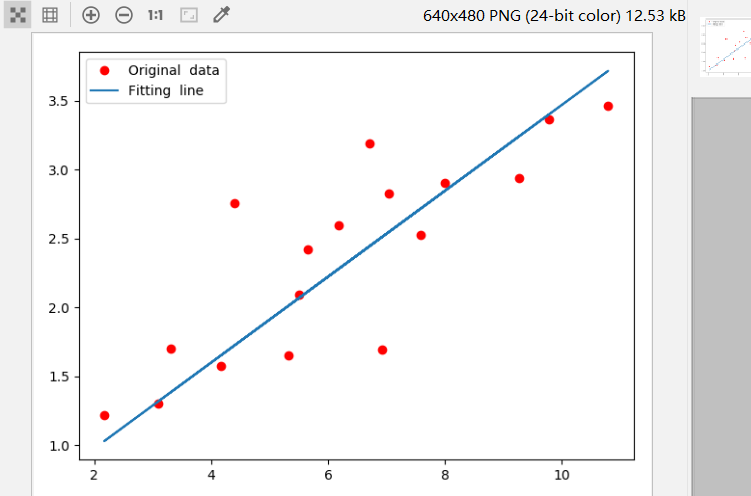
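One way to sanity-check the trained W and b is to compare them with the closed-form least-squares line for the same data. The sketch below swaps in NumPy's `np.polyfit` for the TensorFlow optimizer, so it is a reference point rather than the method used above; `half_mse` is a helper name introduced here for illustration.

```python
import numpy as np

# Same data as the TensorFlow linear regression example.
train_X = np.asarray([3.3, 4.4, 5.5, 6.71, 6.93, 4.168, 9.779, 6.182, 7.59, 2.167,
                      7.042, 10.791, 5.313, 7.997, 5.654, 9.27, 3.1])
train_Y = np.asarray([1.7, 2.76, 2.09, 3.19, 1.694, 1.573, 3.366, 2.596, 2.53, 1.221,
                      2.827, 3.465, 1.65, 2.904, 2.42, 2.94, 1.3])
n_samples = train_X.shape[0]


def half_mse(W, b):
    """Same quantity as the `cost` tensor: sum of squared errors / (2 * n_samples)."""
    return np.sum((W * train_X + b - train_Y) ** 2) / (2 * n_samples)


# A degree-1 polynomial fit returns [slope, intercept] of the least-squares line.
W_ls, b_ls = np.polyfit(train_X, train_Y, 1)

print("W=", W_ls, "b=", b_ls, "cost=", half_mse(W_ls, b_ls))
```

Because the TensorFlow run starts from random weights and stops after a fixed number of epochs, its W and b should land near this line but will generally not match it exactly.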

3. Logistic regression

    import tensorflow as tf
    from tensorflow.examples.tutorials.mnist import input_data
    import os

    os.environ["CUDA_VISIBLE_DEVICES"] = "0"

    mnist = input_data.read_data_sets("/home/yxcx/tf_data", one_hot=True)

    # Parameters
    learning_rate = 0.01
    training_epochs = 25
    batch_size = 100
    display_step = 1

    # Graph inputs: 28x28 images flattened to 784 values, 10 one-hot classes
    x = tf.placeholder(tf.float32, [None, 784])
    y = tf.placeholder(tf.float32, [None, 10])

    # Model weights
    W = tf.Variable(tf.zeros([784, 10]))
    b = tf.Variable(tf.zeros([10]))

    # Construct the model
    pred = tf.nn.softmax(tf.matmul(x, W) + b)

    # Minimize the error using cross entropy
    cost = tf.reduce_mean(-tf.reduce_sum(y * tf.log(pred), reduction_indices=1))

    # Gradient descent
    optimizer = tf.train.GradientDescentOptimizer(learning_rate).minimize(cost)

    # Op that initializes the variables
    init = tf.global_variables_initializer()

    # Start training
    with tf.Session() as sess:
        sess.run(init)

        # Training cycle
        for epoch in range(training_epochs):
            avg_cost = 0
            total_batch = int(mnist.train.num_examples / batch_size)
            # Loop over all batches
            for i in range(total_batch):
                batch_xs, batch_ys = mnist.train.next_batch(batch_size)
                # Fit the model using the batch data
                _, c = sess.run([optimizer, cost], feed_dict={x: batch_xs, y: batch_ys})
                # Compute the average loss
                avg_cost += c / total_batch
            if (epoch + 1) % display_step == 0:
                print("Epoch:", '%04d' % (epoch + 1), "Cost:", "{:.09f}".format(avg_cost))

        print("Optimization Finished!")

        # Test the model
        correct_prediction = tf.equal(tf.argmax(pred, 1), tf.argmax(y, 1))
        # Calculate accuracy for 3000 examples
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
        print("Accuracy:", accuracy.eval({x: mnist.test.images[:3000], y: mnist.test.labels[:3000]}))

Result screenshot:

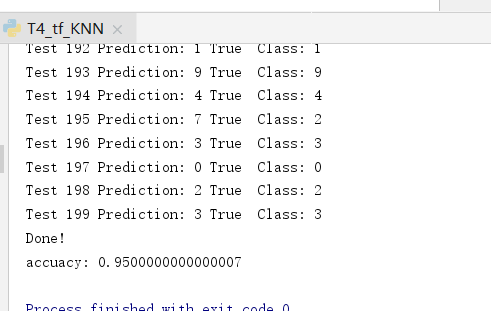
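The cross-entropy cost and the accuracy computed in this example can be traced by hand on a tiny batch. The sketch below uses NumPy instead of TensorFlow, and the two predictions and labels are made-up values chosen only to keep the arithmetic visible.

```python
import numpy as np

# Toy batch: 2 samples, 3 classes (values made up for illustration).
pred = np.array([[0.7, 0.2, 0.1],
                 [0.1, 0.8, 0.1]])  # each row sums to 1, like softmax output
y = np.array([[1.0, 0.0, 0.0],
              [0.0, 0.0, 1.0]])     # one-hot labels

# Cross entropy per sample (sum over classes), then the mean over the batch.
per_sample = -np.sum(y * np.log(pred), axis=1)
cost = np.mean(per_sample)

# A prediction is correct when the most probable class matches the label.
correct = np.argmax(pred, axis=1) == np.argmax(y, axis=1)
accuracy = np.mean(correct.astype(np.float32))

print("cost:", cost, "accuracy:", accuracy)
```

Only the probability assigned to the true class contributes to each sample's loss, so the second sample, which is confidently wrong, dominates the cost here.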

4. K-nearest neighbors algorithm

    import numpy as np
    import tensorflow as tf
    from tensorflow.examples.tutorials.mnist import input_data
    import os

    os.environ["CUDA_VISIBLE_DEVICES"] = "0"

    mnist = input_data.read_data_sets("/home/yxcx/tf_data/MNIST_data", one_hot=True)
    # mnist=input_data.read_data_sets("MNIST_data",one_hot=True,source_url='http://yann.lecun.com/exdb/mnist/')

    # Use 5000 training samples as the reference set and 200 test samples
    Xtr, Ytr = mnist.train.next_batch(5000)
    Xte, Yte = mnist.test.next_batch(200)

    # Graph inputs: all training images and a single test image
    xtr = tf.placeholder("float", [None, 784])
    xte = tf.placeholder("float", [784])

    # L1 distance between the test image and every training image
    distance = tf.reduce_sum(tf.abs(tf.add(xtr, tf.negative(xte))), reduction_indices=1)
    # Index of the training image with the smallest distance
    pred = tf.argmin(distance, 0)

    accuracy = 0

    init = tf.global_variables_initializer()

    # Start the session
    with tf.Session() as sess:
        sess.run(init)

        for i in range(len(Xte)):
            # Get the nearest neighbor
            nn_index = sess.run(pred, feed_dict={xtr: Xtr, xte: Xte[i, :]})

            print("Test", i, "Prediction:", np.argmax(Ytr[nn_index]),
                  "True Class:", np.argmax(Yte[i]))

            if np.argmax(Ytr[nn_index]) == np.argmax(Yte[i]):
                accuracy += 1. / len(Xte)

        print("Done!")
        print("accuracy:", accuracy)

Result screenshot:

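Note: the dataset paths used in experiments 3 and 4 do not need to be changed. The programs need network access when they run, because the dataset is downloaded automatically. The download may fail because of network problems; if it does, rerunning the program a few times usually succeeds.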
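The code in section 4 classifies each test image by its single nearest training image (k = 1) under the L1 distance. The same step can be sketched in NumPy on a toy dataset; the points, labels, and the `nearest_neighbor` helper below are made up for illustration and are not part of the original program.

```python
import numpy as np

# Toy training set: 3 points in 2-D with integer class labels (made-up values).
Xtr = np.array([[0.0, 0.0],
                [1.0, 1.0],
                [5.0, 5.0]])
Ytr = np.array([0, 0, 1])


def nearest_neighbor(xte):
    """Return the index of the training point closest to xte in L1 distance."""
    # Broadcasting subtracts xte from every row of Xtr.
    distance = np.sum(np.abs(Xtr - xte), axis=1)
    return int(np.argmin(distance))


xte = np.array([4.0, 6.0])
nn_index = nearest_neighbor(xte)
print(nn_index, Ytr[nn_index])  # distances are 10, 8, 2, so this prints: 2 1
```

There is no training phase: every prediction scans the whole reference set, which is why the TensorFlow version feeds all 5000 training images on each `sess.run` call.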

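Notes on some of the methods and parameters used in the code:

1. x=tf.placeholder(dtype,shape,name)

The three parameters mean:

- dtype: the data type. Commonly a numeric type such as tf.float32 or tf.float64.

- shape: the shape of the data. The default is None, which leaves the shape unconstrained, so a tensor of any shape can be fed. It can also be multidimensional; for example, [None,784] means the number of rows is not fixed and there are 784 columns.

- name: the name of the operation.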

2. tf.Variable(initial_value,name)

The parameters mean:

1. initial_value: the value the variable is initialized with

2. name: the name of the variable

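3. tf.zeros( shape, dtype=tf.float32, name=None )

The parameters mean:

1. shape: the shape of the tensor to create. For example, tf.zeros([784,10]) in the code creates a tensor of zeros with 784 rows and 10 columns

2. dtype: the element type

3. name: the name of the operation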

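4. tf.nn.softmax()

Meaning: maps a vector of scores to values between 0 and 1 that sum to 1, so the largest score receives the largest share and stands out.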
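What softmax computes can be sketched in a few lines of NumPy. This is an illustrative reimplementation, not TensorFlow's own code; subtracting the maximum before exponentiating is a common stability trick added here as an assumption about good practice.

```python
import numpy as np


def softmax(logits):
    """Softmax over the last axis of a NumPy array."""
    # Subtracting the max does not change the result but avoids overflow in exp.
    shifted = logits - np.max(logits, axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / np.sum(exp, axis=-1, keepdims=True)


probs = softmax(np.array([1.0, 2.0, 3.0]))
print(probs)  # approximately [0.090 0.245 0.665]
```

The outputs keep the ordering of the inputs, and the gap between them grows, which is the sense in which the largest score "stands out".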

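5. tf.reduce_mean(input_tensor,axis=None,keep_dims=False,name=None,reduction_indices=None)

This method computes the mean of the elements across the dimensions of a tensor.

1. input_tensor: the tensor to reduce. It should have a numeric type.

2. axis: the dimensions to reduce. If None (the default), all dimensions are reduced. Must be in the range [-rank(input_tensor), rank(input_tensor)).

3. keep_dims: if true, the reduced dimensions are retained with length 1.

4. name: a name for the operation (optional).

5. reduction_indices: the old, deprecated name for axis.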
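The effect of the axis argument is easiest to see on a small matrix. The sketch below uses `np.mean` as a stand-in for `tf.reduce_mean`; note that NumPy spells the flag `keepdims`, while the signature above uses `keep_dims`.

```python
import numpy as np

x = np.array([[1.0, 2.0],
              [3.0, 4.0]])

mean_all = np.mean(x)                          # all elements: 2.5
mean_axis0 = np.mean(x, axis=0)                # one value per column: [2. 3.]
mean_axis1 = np.mean(x, axis=1)                # one value per row: [1.5 3.5]
mean_keep = np.mean(x, axis=1, keepdims=True)  # same values, shape (2, 1)

print(mean_all, mean_axis0, mean_axis1, mean_keep.shape)
```

In the logistic regression cost, the inner sum uses axis 1 to collapse the class dimension, and the outer mean with no axis then averages over the batch.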

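6. tf.train.GradientDescentOptimizer

An optimizer that implements the gradient descent algorithm with a fixed learning rate. Calling minimize(cost) on it returns the operation that performs one update step.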
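The update rule behind gradient descent is small enough to write out directly. The following is a minimal plain-Python sketch on a one-variable function; the `gradient_descent` helper and the function being minimized are hypothetical examples, not TensorFlow code.

```python
def gradient_descent(grad, w, learning_rate=0.1, steps=100):
    """Repeatedly apply w <- w - learning_rate * grad(w)."""
    for _ in range(steps):
        w = w - learning_rate * grad(w)
    return w


# Minimize f(w) = (w - 3)**2, whose gradient is 2 * (w - 3).
w_min = gradient_descent(lambda w: 2.0 * (w - 3.0), w=0.0)
print(w_min)  # close to 3.0
```

The learning rate stays the same for every step and every parameter, which is what separates plain gradient descent from adaptive optimizers.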
