Activation functions for neural networks in TensorFlow

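The purpose of an activation function is to introduce nonlinearity into the network. Without one, stacked layers compose into a single linear transformation, no matter how many weights and biases are trained.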
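To see why nonlinearity matters, here is a minimal pure-Python sketch using two hypothetical one-neuron "layers" of the form w*x + b (the `linear` helper and the weights are illustrative assumptions, not TensorFlow code). Stacking them directly gives another linear function; putting a ReLU between them does not.

```python
def linear(w, b):
    """Return a hypothetical one-neuron linear layer x -> w*x + b."""
    return lambda x: w * x + b

def relu(x):
    return max(0.0, x)

layer1 = linear(2.0, -1.0)
layer2 = linear(-3.0, 0.5)

# Without an activation: -3 * (2x - 1) + 0.5 == -6x + 3.5, i.e. still one linear layer
stacked_linear = lambda x: layer2(layer1(x))
collapsed = linear(-6.0, 3.5)

# With ReLU between the layers the result is piecewise linear, not linear
stacked_relu = lambda x: layer2(relu(layer1(x)))

for x in [-2.0, 0.0, 0.5, 1.0, 3.0]:
    print(x, stacked_linear(x), collapsed(x), stacked_relu(x))
```

The ReLU version is constant (0.5) for x <= 0.5 and slopes downward afterwards, which no single w*x + b can reproduce.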

relu

max(0,x)
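A plain-Python sketch of ReLU applied elementwise to a list. In TensorFlow the built-in op is `tf.nn.relu`; this sketch does not call it and only mirrors the formula.

```python
def relu(x: float) -> float:
    """ReLU: pass positive inputs through unchanged, clamp negatives to 0."""
    return max(0.0, x)

inputs = [-3.0, -0.5, 0.0, 2.0, 7.5]
print([relu(v) for v in inputs])  # [0.0, 0.0, 0.0, 2.0, 7.5]
```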

relu6

min(max(0,x),6)

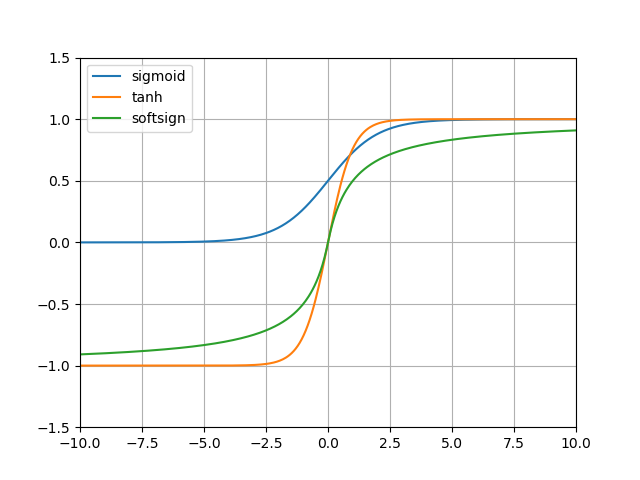
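ReLU6 is ReLU with its output additionally capped at 6, so every output lies in [0, 6]. A plain-Python sketch of the formula (TensorFlow's op is `tf.nn.relu6`, not used here):

```python
def relu6(x: float) -> float:
    """ReLU6: clamp negatives to 0 and cap large values at 6."""
    return min(max(0.0, x), 6.0)

inputs = [-2.0, 3.0, 6.0, 10.0]
print([relu6(v) for v in inputs])  # [0.0, 3.0, 6.0, 6.0]
```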

sigmoid

1/(1+exp(-x))
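A plain-Python sketch of the sigmoid (TensorFlow provides `tf.nn.sigmoid`). Evaluating 1/(1+exp(-x)) literally makes `math.exp` raise `OverflowError` for very negative x, so this version switches to the algebraically equivalent exp(x)/(1+exp(x)) when x < 0.

```python
import math

def sigmoid(x: float) -> float:
    """Logistic sigmoid 1 / (1 + exp(-x)), written to avoid overflow."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    # For negative x, exp(-x) can overflow; exp(x) / (1 + exp(x)) is the same value.
    e = math.exp(x)
    return e / (1.0 + e)

print(sigmoid(0.0))      # 0.5
print(sigmoid(-1000.0))  # 0.0 (no OverflowError)
```

The output always lies in (0, 1), and sigmoid(x) + sigmoid(-x) = 1.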

tanh

(exp(x)-exp(-x))/(exp(x)+exp(-x))

The hyperbolic tangent has range (-1, 1).
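A quick check in plain Python that the formula above matches the standard library's `math.tanh` (TensorFlow's op is `tf.nn.tanh`, not used here). It also verifies the identity tanh(x) = 2*sigmoid(2x) - 1, which shows tanh is a sigmoid rescaled to (-1, 1) and centered at 0.

```python
import math

def tanh_formula(x: float) -> float:
    """tanh from the definition (exp(x) - exp(-x)) / (exp(x) + exp(-x)).
    Overflows for |x| above roughly 710; math.tanh does not."""
    return (math.exp(x) - math.exp(-x)) / (math.exp(x) + math.exp(-x))

for v in [-3.0, -0.5, 0.0, 0.5, 3.0]:
    # The textbook formula agrees with the standard-library tanh.
    print(v, tanh_formula(v), math.tanh(v))

# Identity linking tanh and sigmoid: tanh(x) = 2 * sigmoid(2x) - 1
sigmoid = lambda z: 1.0 / (1.0 + math.exp(-z))
print(2 * sigmoid(2 * 0.5) - 1, math.tanh(0.5))
```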

softsign

x/(abs(x)+1)

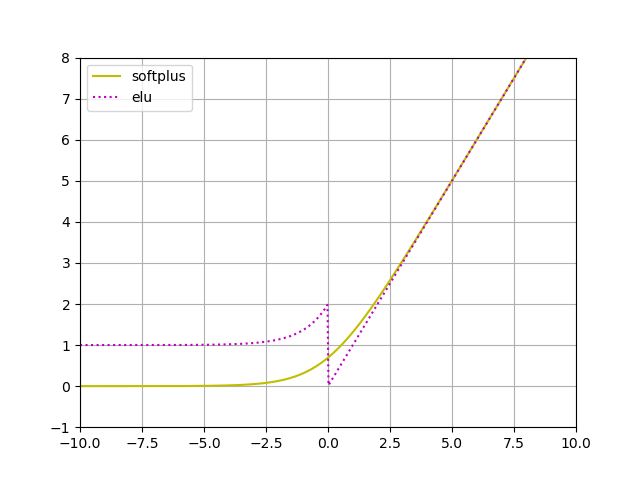
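Softsign also has range (-1, 1), but for x > 0 it equals 1 - 1/(x+1), so it approaches its bounds polynomially rather than exponentially like tanh. A plain-Python sketch comparing the two (TensorFlow's op is `tf.nn.softsign`, not used here):

```python
import math

def softsign(x: float) -> float:
    """Softsign x / (|x| + 1): range (-1, 1), like tanh."""
    return x / (abs(x) + 1.0)

for v in [1.0, 3.0, 10.0]:
    # softsign saturates much more slowly than tanh
    print(v, softsign(v), math.tanh(v))
```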

softplus

log(exp(x)+1)
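Softplus is a smooth approximation of ReLU. Computing log(exp(x)+1) literally overflows once exp(x) exceeds the float range (x above roughly 709), so this plain-Python sketch also shows the equivalent form max(x, 0) + log1p(exp(-|x|)). TensorFlow's own op is `tf.nn.softplus`; its internal formula is not assumed here.

```python
import math

def softplus_naive(x: float) -> float:
    """log(exp(x) + 1) exactly as written; math.exp overflows for x > ~709."""
    return math.log(math.exp(x) + 1.0)

def softplus(x: float) -> float:
    """Equivalent form max(x, 0) + log1p(exp(-|x|)) that never overflows."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

print(softplus(0.0))     # log(2), about 0.693
print(softplus(1000.0))  # 1000.0, where softplus_naive would raise OverflowError
```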

elu

(exp(x)-1) if x<0 else x
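ELU equals x for x >= 0 and exp(x) - 1 for x < 0, so it is continuous at 0 and its negative outputs saturate at -1. A plain-Python sketch; the `alpha` scale on the negative branch is an added assumption (it matches the common ELU definition), and with the default alpha = 1 it reduces to the formula above. TensorFlow's op is `tf.nn.elu`, not used here.

```python
import math

def elu(x: float, alpha: float = 1.0) -> float:
    """ELU: x for x >= 0, alpha * (exp(x) - 1) for x < 0."""
    return x if x >= 0 else alpha * (math.exp(x) - 1.0)

print(elu(2.0))    # 2.0
print(elu(0.0))    # 0.0
print(elu(-1.0))   # about -0.632
print(elu(-50.0))  # about -1.0: negative outputs saturate at -alpha
```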

The following script evaluates each function with NumPy and plots them in three groups with pandas and matplotlib:

```python
import numpy as np
import pandas as pd
import matplotlib.pyplot as plt

x = np.linspace(-10, 10, 500)

# Evaluate each activation elementwise over x
relu = np.maximum(0, x)
relu6 = np.minimum(np.maximum(0, x), 6)
sigmoid = 1 / (np.exp(-x) + 1)
tanh = (np.exp(x) - np.exp(-x)) / (np.exp(x) + np.exp(-x))
softsign = x / (np.abs(x) + 1)
softplus = np.log(np.exp(x) + 1)
elu = np.where(x < 0, np.exp(x) - 1, x)  # exp(x) - 1 for x < 0, x otherwise

data = {
    'relu': relu,
    'relu6': relu6,
    'sigmoid': sigmoid,
    'tanh': tanh,
    'softsign': softsign,
    'softplus': softplus,
    'elu': elu,
}

df = pd.DataFrame(data, index=x)
# print(df)

# relu vs. relu6
df[["relu", "relu6"]].plot(
    kind="line", grid=True,
    style={"relu": "y-", "relu6": "r:"},
    yticks=np.linspace(-1, 8, 10),
    xlim=[-10, 10], ylim=[-1, 8])

# softplus vs. elu
df[["softplus", "elu"]].plot(
    kind="line", grid=True,
    style={"softplus": "y-", "elu": "m:"},
    yticks=np.linspace(-1, 8, 10),
    xlim=[-10, 10], ylim=[-1, 8])

# Bounded S-shaped functions: sigmoid, tanh, softsign
df[["sigmoid", "tanh", "softsign"]].plot(
    kind="line", grid=True,
    yticks=np.linspace(-1.5, 1.5, 7),
    xlim=[-10, 10], ylim=[-1.5, 1.5])

plt.show()
```

浙公网安备 33010602011771号
