Logstash 6.5.3 on Windows: file input has no effect, and after configuring an Elasticsearch template, indexing fails with: Rejecting mapping update to [hello-world-2020.09.10] as the final mapping would have more than 1 type: [_doc, doc]

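First, a short digression. The Logstash template file hello-world.json was also covered in the previous post, but that was for version 7.9.0. Note that there is no type under mappings, because the default type there is _doc: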

    {
      "index_patterns": ["hello-world-%{+YYYY.MM.dd}"],
      "order": 0,
      "settings": {
        "index.refresh_interval": "10s"
      },
      "mappings": {
        "properties": {
          "createTime": { "type": "long" },
          "sessionId": {
            "type": "text",
            "fielddata": true,
            "fields": {
              "keyword": { "type": "keyword", "ignore_above": 256 }
            }
          },
          "chart": {
            "type": "text",
            "analyzer": "ik_max_word",
            "search_analyzer": "ik_max_word"
          }
        }
      }
    }

If we take the template above and run it with Logstash 6.5.3, we get a mapper_parsing_exception. The reason given is:

Root mapping definition has unsupported parameters: [createTime : {type=long}] [sessionId : {fielddata=true, type=text, fields={keyword={ignore_above=256, type=keyword}}}] [chart : {search_analyzer=ik_max_word, analyzer=ik_max_word, type=text}]

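Why is this unsupported? Because we did not provide a mapping type. In 6.x the mappings are expected to be keyed by a type name, so without one the field definitions end up being read as root-level mapping parameters. The fix is to add a mapping type. Here we use _doc, so the template gains a new _doc node: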

    {
      "index_patterns": ["hello-world-%{+YYYY.MM.dd}"],
      "order": 0,
      "settings": {
        "index.refresh_interval": "10s"
      },
      "mappings": {
        "_doc": {
          "properties": {
            "createTime": { "type": "long" },
            "sessionId": {
              "type": "text",
              "fielddata": true,
              "fields": {
                "keyword": { "type": "keyword", "ignore_above": 256 }
              }
            },
            "chart": {
              "type": "text",
              "analyzer": "ik_max_word",
              "search_analyzer": "ik_max_word"
            }
          }
        }
      }
    }
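The change between the two templates is mechanical: the typeless mappings body is nested under a type name. A minimal Python sketch of that step follows. The helper name `wrap_mappings_for_es6` and the trimmed-down sample template are assumptions made for illustration; they are not part of Logstash or Elasticsearch.

```python
import copy


def wrap_mappings_for_es6(template: dict, type_name: str = "_doc") -> dict:
    """Return a copy of `template` with its mappings nested under `type_name`.

    Hypothetical helper: a template is treated as typeless when "properties"
    sits directly under "mappings". Anything else is returned unchanged.
    """
    result = copy.deepcopy(template)
    mappings = result.get("mappings", {})
    if "properties" in mappings:
        result["mappings"] = {type_name: mappings}
    return result


# Trimmed-down sample with a single field from the template above.
typeless = {
    "mappings": {
        "properties": {
            "createTime": {"type": "long"},
        }
    }
}

typed = wrap_mappings_for_es6(typeless)
print(typed["mappings"])
```

The helper copies its input rather than mutating it, so the 7.x style template can be kept as the single source and converted only when targeting 6.x.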

Restart Logstash, and this time it starts without errors. Now back to the main topic. The input source we configured for Logstash is:

    input {
      file {
        path => "D:\wlf\logs\*"
        start_position => "beginning"
        type => "log"
      }
    }

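Startup reports no errors, but nothing happens. The files are on the D: drive and they do contain data, yet there is no activity and Elasticsearch receives nothing. The odd part is the directory separator in path: it must not be the backslash we are used to on Windows, but the forward slash used on Linux. So change path to: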

    path => "D:/wlf/logs/*"
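If such paths are generated by a script rather than typed by hand, the conversion is a single string replacement. A small sketch, where the function name `to_forward_slashes` is a hypothetical helper and not a Logstash API:

```python
def to_forward_slashes(windows_path: str) -> str:
    """Replace every backslash with a forward slash; the drive letter is kept."""
    return windows_path.replace("\\", "/")


print(to_forward_slashes(r"D:\wlf\logs\*"))  # D:/wlf/logs/*
```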

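Restart Logstash again. This time there is finally some activity once startup completes: data is being sent to Elasticsearch. The indexing fails, though, and we are greeted by a new error: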

[2020-09-10T09:46:23,198][WARN ][logstash.outputs.elasticsearch] Could not index event to Elasticsearch. {:status=>400, :action=>["index", {:_id=>nil, :_index=>"hello-world-2020.09.10", :_type=>"doc", :routing=>nil}, #<LogStash::Event:0x15dfbf91>], :response=>{"index"=>{"_index"=>"after-cdr-2020.09.10", "_type"=>"doc", "_id"=>"jX-xdXQBTQnatMJTUOJU", "status"=>400, "error"=>{"type"=>"illegal_argument_exception", "reason"=>"Rejecting mapping update to [hello-world-2020.09.10] as the final mapping would have more than 1 type: [_doc, doc]"}}}}

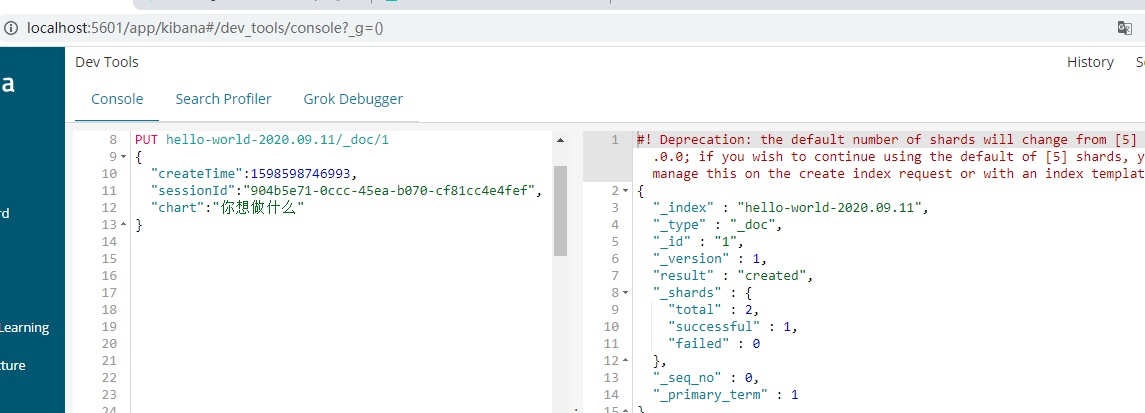
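The reason string in the warning above names both the index and the two conflicting mapping types. When scanning many such log lines, a sketch like the following can pull them out. The regular expression is written only against the message wording shown in this post, and `parse_type_conflict` is a hypothetical helper name.

```python
import re

# Pattern based solely on the wording of the reason string quoted above.
_CONFLICT = re.compile(
    r"Rejecting mapping update to \[(?P<index>[^\]]+)\] as the final mapping "
    r"would have more than 1 type: \[(?P<types>[^\]]+)\]"
)


def parse_type_conflict(reason: str):
    """Return (index_name, [type, ...]) or None if the text does not match."""
    match = _CONFLICT.search(reason)
    if match is None:
        return None
    types = [name.strip() for name in match.group("types").split(",")]
    return match.group("index"), types


reason = (
    "Rejecting mapping update to [hello-world-2020.09.10] as the final "
    "mapping would have more than 1 type: [_doc, doc]"
)
print(parse_type_conflict(reason))
```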

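The log says that the hello-world-2020.09.10 index in Elasticsearch expects mapping type _doc, while we are sending doc, so the mapping update for this index is rejected. Let's confirm this in Kibana: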

The template is correct. Let's try inserting a document:

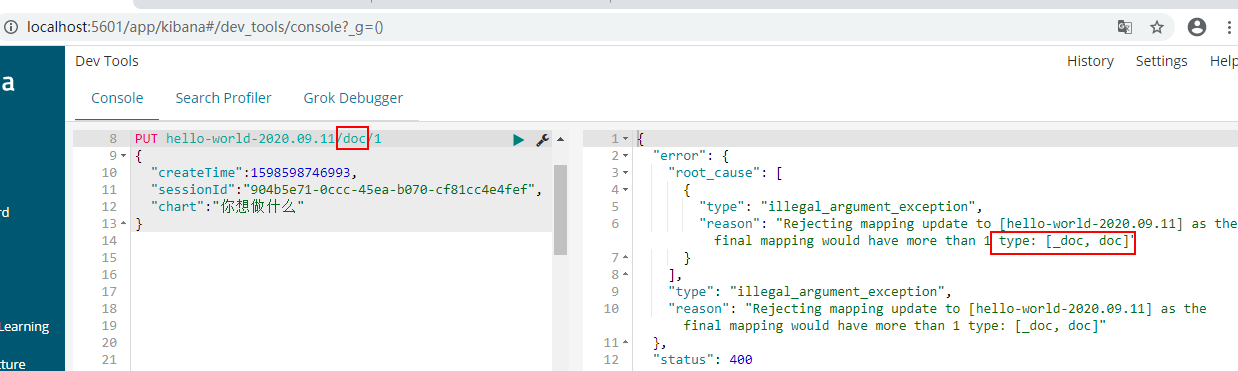

No problem at all. Next, following the hint in the log, let's try the same thing with mapping type doc:

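The problem is reproduced. So why does Logstash use type doc rather than _doc? The default value is to blame: the log above shows events being sent with _type doc even though we never configured a type. The fix is to stop relying on the default and set the mapping type to _doc explicitly in the output: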

    output {
      elasticsearch {
        hosts => "localhost:9200"
        index => "hello-world-%{+YYYY.MM.dd}"
        manage_template => true
        template_name => "hello"
        template_overwrite => true
        template => "D:\elk\logstash-6.5.3\config\hello-world.json"
        document_type => "_doc"
      }
    }

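Restart Logstash once more. Startup is fine, but the files on the D: drive have already been read once (even though indexing failed), and the file input remembers how far it has read each file, so Elasticsearch stays quiet. To trigger a new transfer, open one of the files by hand, duplicate one of its records and save, or simply copy a whole file. The new data is then sent to Elasticsearch, this time without any exception, and the synced documents can be queried directly in Elasticsearch.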
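The reason only appended data gets picked up can be illustrated with a toy model of offset tracking. This is a simplified sketch for intuition, not Logstash's implementation: the `offsets` dictionary stands in for the position bookkeeping of the file input, and keying by path is an assumption made for brevity.

```python
import os
import tempfile


def read_new_lines(path: str, offsets: dict) -> list:
    """Return lines added to `path` since the offset remembered in `offsets`."""
    start = offsets.get(path, 0)
    with open(path, "rb") as handle:
        handle.seek(start)
        data = handle.read()
        offsets[path] = handle.tell()  # remember where this read stopped
    return data.decode("utf-8").splitlines()


def demo() -> tuple:
    """Read a file, read it again unchanged, then read it after an append."""
    offsets = {}
    with tempfile.TemporaryDirectory() as tmp:
        log_path = os.path.join(tmp, "sample.log")
        with open(log_path, "w", encoding="utf-8") as handle:
            handle.write("first\n")
        first = read_new_lines(log_path, offsets)
        again = read_new_lines(log_path, offsets)  # nothing new to report
        with open(log_path, "a", encoding="utf-8") as handle:
            handle.write("second\n")
        appended = read_new_lines(log_path, offsets)
    return first, again, appended


print(demo())
```

The second read returns nothing because the remembered offset already points at the end of the file, which mirrors the quiet restart described above. Only the appended record shows up on the third read.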
