When to Use L1 vs. L2 Regularization

How to decide which regularization (L1 or L2) to use?

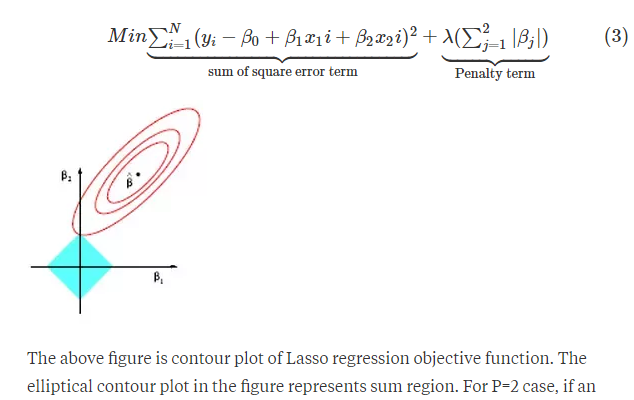

Is there collinearity among some features? L2 regularization can improve prediction quality in this case, as implied by its alternative name, "ridge regression." More generally, either form of regularization tends to improve out-of-sample prediction, whether or not there is multicollinearity and whether or not there are irrelevant features, simply because of the shrinkage properties of the regularized estimators. L1 regularization can't help with multicollinearity; among a group of correlated features it will just pick the one with the largest correlation to the outcome. Ridge regression can obtain coefficient estimates even when you have more features than examples... but the probability that any will be estimated precisely at 0 is 0.
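To make the collinearity point concrete, here is a minimal sketch in plain Python, assuming a toy model with exactly two features, no intercept, and the ridge objective ||y - Xβ||² + λ||β||². The helper name `ridge_two_features` and the data are made up for illustration. With a duplicated feature the ordinary least-squares system is singular, while the ridge system stays solvable and splits the weight evenly between the two copies.

```python
def ridge_two_features(x1, x2, y, lam):
    """Solve (X^T X + lam * I) beta = X^T y for two features via Cramer's rule."""
    # Entries of the symmetric 2x2 matrix X^T X = [[a, b], [b, d]].
    a = sum(u * u for u in x1)
    b = sum(u * v for u, v in zip(x1, x2))
    d = sum(v * v for v in x2)
    # Right-hand side X^T y.
    c1 = sum(u * t for u, t in zip(x1, y))
    c2 = sum(v * t for v, t in zip(x2, y))
    # The ridge penalty adds lam to the diagonal.
    a_r, d_r = a + lam, d + lam
    det = a_r * d_r - b * b
    if abs(det) < 1e-12:
        raise ValueError("singular system: no unique solution")
    beta1 = (c1 * d_r - b * c2) / det
    beta2 = (a_r * c2 - b * c1) / det
    return beta1, beta2


# Toy data (hypothetical): x2 is an exact copy of x1, and y = 2 * x1.
x1 = [1.0, 2.0, 3.0, 4.0]
x2 = list(x1)
y = [2.0, 4.0, 6.0, 8.0]

# lam = 0 is ordinary least squares: perfect collinearity makes it singular.
try:
    ridge_two_features(x1, x2, y, lam=0.0)
    ols_is_singular = False
except ValueError:
    ols_is_singular = True

# Any lam > 0 makes the system solvable; the two copies share the weight.
ridge_coefs = ridge_two_features(x1, x2, y, lam=1.0)
print(ols_is_singular, ridge_coefs)
```

Both ridge coefficients come out equal and sum to slightly less than 2, the true slope, which is the shrinkage effect described above. Neither is exactly zero.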

What are the pros & cons of each of L1 / L2 regularization?

L1 regularization can't help with multicollinearity. L2 regularization can't help with feature selection. Elastic net regression can solve both problems. L1 and L2 regularization are taught for pedagogical reasons, but I'm not aware of any situation where you want to use regularized regressions but not try an elastic net as a more general solution, since it includes both as special cases.
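The "special cases" remark can be illustrated with a small sketch. It assumes an orthonormal design (X^T X = I) and the objective ½||y - Xβ||² + λ(α||β||₁ + (1 - α)/2 · ||β||²), which is one common parameterization and not the only one. Under those assumptions each coefficient has a closed form in terms of its least-squares estimate `b`. The function names are hypothetical.

```python
def soft_threshold(b, t):
    """Shrink b toward zero by t, returning exactly 0.0 when |b| <= t."""
    if b > t:
        return b - t
    if b < -t:
        return b + t
    return 0.0


def elastic_net_coef(b, lam, alpha):
    """Per-coefficient elastic net solution under an orthonormal design.

    alpha = 1 gives the lasso (pure L1), alpha = 0 gives ridge (pure L2).
    """
    return soft_threshold(b, lam * alpha) / (1.0 + lam * (1.0 - alpha))


# Hypothetical least-squares estimates for three features.
ols = [2.0, 0.3, -1.0]
lam = 0.5

lasso = [elastic_net_coef(b, lam, alpha=1.0) for b in ols]
ridge = [elastic_net_coef(b, lam, alpha=0.0) for b in ols]
mixed = [elastic_net_coef(b, lam, alpha=0.5) for b in ols]

print("lasso:", lasso)  # the small coefficient is set exactly to zero
print("ridge:", ridge)  # every coefficient shrinks, none reaches zero
print("mixed:", mixed)
```

In this sketch the L1 end of the range performs feature selection by zeroing the small coefficient, while the L2 end only rescales. Intermediate values of `alpha` do some of both.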

In practice, when the dataset is not very large, L2 regularization tends to give better accuracy.

Multicollinearity means that the explanatory variables in your model are highly correlated with one another.
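One simple way to look for this is to compute pairwise Pearson correlations between the explanatory variables and flag pairs above a cutoff. The sketch below uses made-up data, and the 0.9 threshold is an arbitrary assumption rather than a standard. Pairwise correlation also cannot detect collinearity that involves three or more variables jointly.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((u - mean_x) * (v - mean_y) for u, v in zip(x, y))
    var_x = sum((u - mean_x) ** 2 for u in x)
    var_y = sum((v - mean_y) ** 2 for v in y)
    return cov / sqrt(var_x * var_y)


# Hypothetical features: x2 is roughly 2 * x1, x3 is unrelated.
features = {
    "x1": [1.0, 2.0, 3.0, 4.0, 5.0],
    "x2": [2.1, 3.9, 6.2, 8.0, 9.9],
    "x3": [5.0, 1.0, 4.0, 2.0, 3.0],
}

THRESHOLD = 0.9  # illustrative cutoff, not a standard value

# Collect every pair of features whose absolute correlation exceeds the cutoff.
flagged = [
    (a, b)
    for a, b in combinations(features, 2)
    if abs(pearson(features[a], features[b])) > THRESHOLD
]
print(flagged)
```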
