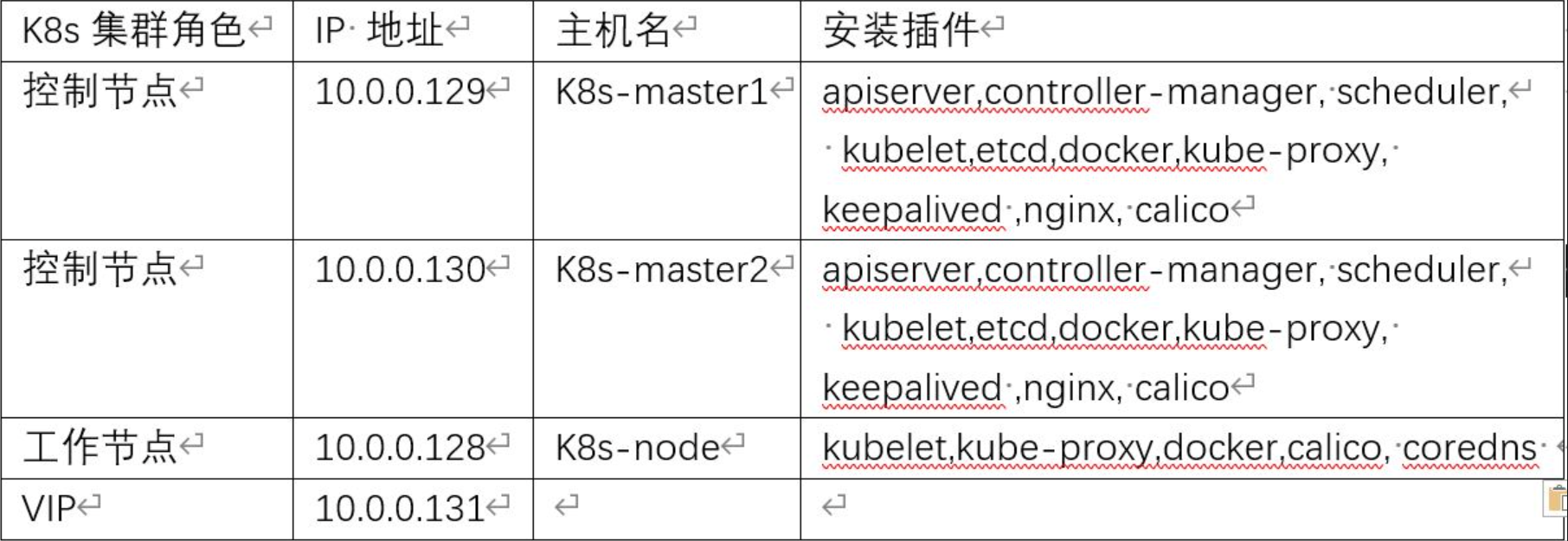

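Installing a production-grade, highly available, multi-master k8s cluster with kubeadm

Environment preparation

Three virtual machines (configure host resolution on every node)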

cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

10.0.0.128 k8s-node

10.0.0.129 k8s-master1

10.0.0.130 k8s-master2
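Since every later step addresses the machines by name, it can help to confirm that each node's hosts file really contains all three names. The following is a minimal sketch, not part of the original procedure; the function name missing_hosts is made up for illustration, and the hosts file path is passed in so it can be tried on a copy.

```shell
#!/bin/bash
# Print each given hostname that has no (uncommented) entry in a hosts file.
# Usage: missing_hosts /etc/hosts k8s-node k8s-master1 k8s-master2
missing_hosts() {
    local hosts_file=$1
    shift
    local name
    for name in "$@"; do
        # Skip comment lines; columns 2..NF of a hosts line are the names.
        if ! awk -v n="$name" '
                !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
                END { exit !found }' "$hosts_file"; then
            echo "$name"
        fi
    done
}
```

An empty result means every name is present; any name that is printed still needs an entry.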

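kubeadm is a tool for bootstrapping a cluster quickly: it automates the deployment and cuts down on manual steps. It suits environments where k8s is deployed often or where a high degree of automation is required.

I. Prepare the lab environment for the k8s cluster

1. Edit the network interface configuration file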

[root@k8s-node ~]# cat /etc/sysconfig/network-scripts/ifcfg-eth0

TYPE=Ethernet

BOOTPROTO=static

DEFROUTE=yes

NAME=eth0

DEVICE=eth0

ONBOOT=yes

IPADDR=10.0.0.128

NETMASK=255.255.255.0

GATEWAY=10.0.0.254

DNS1=8.8.8.8

[root@k8s-master1 ~]# cat /etc/sysconfig/network-scripts/ifcfg-eth0

TYPE=Ethernet

BOOTPROTO=static

DEFROUTE=yes

NAME=eth0

DEVICE=eth0

ONBOOT=yes

IPADDR=10.0.0.129

NETMASK=255.255.255.0

GATEWAY=10.0.0.254

DNS1=8.8.8.8

[root@k8s-master2 ~]# cat /etc/sysconfig/network-scripts/ifcfg-eth0

TYPE=Ethernet

BOOTPROTO=static

DEFROUTE=yes

NAME=eth0

DEVICE=eth0

ONBOOT=yes

IPADDR=10.0.0.130

NETMASK=255.255.255.0

GATEWAY=10.0.0.254

DNS1=8.8.8.8

After editing the configuration file, restart the network service for the change to take effect:

systemctl restart network

2. Set up passwordless SSH login between the hosts

On k8s-node:

[root@k8s-node ~]# ssh-keygen

[root@k8s-node ~]# ssh-copy-id k8s-master2

[root@k8s-node ~]# ssh-copy-id k8s-master1

On k8s-master1:

[root@k8s-master1 ~]# ssh-keygen

[root@k8s-master1 ~]# ssh-copy-id k8s-master2

[root@k8s-master1 ~]# ssh-copy-id k8s-node

On k8s-master2:

[root@k8s-master2 ~]# ssh-keygen

[root@k8s-master2 ~]# ssh-copy-id k8s-master1

[root@k8s-master2 ~]# ssh-copy-id k8s-node

Alternatively, script it (run on each host; SSHPASS holds the root password of the target hosts):

yum install -y sshpass

ssh-keygen -f /root/.ssh/id_rsa -P ''

export IP="10.0.0.128 10.0.0.129 10.0.0.130"

export SSHPASS=kvm-kvm@ECS

for HOST in $IP;do

sshpass -e ssh-copy-id -o StrictHostKeyChecking=no $HOST;

done

3. Disable swap to improve performance

Temporarily:

[root@k8s-node ~]# swapoff -a

[root@k8s-master1 ~]# swapoff -a

[root@k8s-master2 ~]# swapoff -a

To disable it permanently, comment out the swap mount: put a # at the start of the swap line in /etc/fstab.

[root@k8s-node ~]# cat /etc/fstab

#

# /etc/fstab

# Created by anaconda on Thu Jul 21 04:56:59 2022

#

# Accessible filesystems, by reference, are maintained under '/dev/disk'

# See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info

#

/dev/mapper/centos-root / xfs defaults 0 0

UUID=25df1604-f316-4d70-b007-e905a7ec9d55 /boot xfs defaults 0 0

#/dev/mapper/centos-swap swap swap defaults 0 0

[root@k8s-master1 ~]# cat /etc/fstab

#

# /etc/fstab

# Created by anaconda on Sun Jul 24 17:18:04 2022

#

# Accessible filesystems, by reference, are maintained under '/dev/disk'

# See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info

#

/dev/mapper/centos-root / xfs defaults 0 0

UUID=ec12e2e1-faeb-432d-b5f6-2768a14c6e9c /boot xfs defaults 0 0

#/dev/mapper/centos-swap swap swap defaults 0 0

[root@k8s-master2 ~]# cat /etc/fstab

#

# /etc/fstab

# Created by anaconda on Sun Jul 24 17:18:04 2022

#

# Accessible filesystems, by reference, are maintained under '/dev/disk'

# See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info

#

/dev/mapper/centos-root / xfs defaults 0 0

#/dev/mapper/centos-swap swap swap defaults 0 0

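Why swap is disabled:

Swap is the swap partition: when a machine runs short of memory it spills to swap, but swap is slow. To keep performance up, k8s by default does not allow swap to be used. kubeadm checks during initialization whether swap is off and fails if it is not. If you do not want to disable swap, pass --ignore-preflight-errors=Swap when installing k8s to get past this check.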
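The manual fstab edit shown above can also be scripted. This is a minimal sketch under the assumption that swap entries have "swap" in the third (filesystem type) column, as in the listings above; the function name comment_out_swap is made up for illustration, and the file is a parameter so the function can be tried on a copy before touching /etc/fstab.

```shell
#!/bin/bash
# Comment out every active swap entry in an fstab-style file.
# Usage (as root, after taking a backup): comment_out_swap /etc/fstab
comment_out_swap() {
    local fstab=$1 tmp rc
    tmp=$(mktemp) || return 1
    # An fstab line is: device mountpoint fstype options dump pass.
    # Prefix '#' to uncommented lines whose third field is "swap".
    awk '!/^[[:space:]]*#/ && $3 == "swap" { print "#" $0; next } { print }' \
        "$fstab" > "$tmp" && cat "$tmp" > "$fstab"
    rc=$?
    rm -f "$tmp"
    return $rc
}
```

Running it a second time changes nothing, because lines that are already commented are skipped.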

4. Adjust kernel parameters

[root@k8s-master1 ~]# modprobe br_netfilter

[root@k8s-master1 ~]# cat > /etc/sysctl.d/k8s.conf <<EOF

>net.bridge.bridge-nf-call-ip6tables = 1

>net.bridge.bridge-nf-call-iptables = 1

>net.ipv4.ip_forward = 1

>EOF

[root@k8s-master1 ~]# sysctl -p /etc/sysctl.d/k8s.conf

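Repeat the same steps on the other nodes.

For a Linux system to forward packets between interfaces, the kernel parameter net.ipv4.ip_forward must be enabled. The parameter reflects the current state of IP forwarding: 0 means forwarding is disabled, 1 means it is enabled. The two net.bridge.bridge-nf-call-* parameters make bridged traffic pass through iptables and ip6tables; they only exist once the br_netfilter module is loaded, which is why modprobe runs first.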
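One gap worth noting: modprobe loads br_netfilter only until the next boot, and step 5 reboots the machine. A possible way to keep the module loaded is a modules-load.d entry, which systemd-modules-load reads at boot. The sketch below assumes that mechanism is in use; the function name persist_modules and the file name k8s.conf are illustrative choices, not something the original procedure prescribes.

```shell
#!/bin/bash
# Register kernel modules to be loaded at boot via a modules-load.d file.
# Usage (as root): persist_modules /etc/modules-load.d/k8s.conf br_netfilter
persist_modules() {
    local conf=$1
    shift
    local mod
    touch "$conf" || return 1
    for mod in "$@"; do
        # Append the module only if it is not already listed (idempotent).
        grep -qxF "$mod" "$conf" || echo "$mod" >> "$conf"
    done
}
```

After the next reboot, the bridge sysctl settings in /etc/sysctl.d/k8s.conf should then apply without a manual modprobe.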

5. Disable the firewalld firewall and SELinux

[root@k8s-master1 ~]# systemctl stop firewalld ; systemctl disable firewalld

[root@k8s-master1 ~]# sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config

[root@k8s-master1 ~]# reboot

[root@k8s-master1 ~]# getenforce

After the reboot, getenforce should print Disabled. Repeat on the other nodes.

6. Configure the Tsinghua k8s yum repository

[root@k8s-master1 ~]# cat /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=kubernetes

baseurl=https://mirrors.tuna.tsinghua.edu.cn/kubernetes/yum/repos/kubernetes-el7-$basearch

enabled=1

Configure the same repository on the other nodes.

7. Enable ipvs

[root@k8s-master1 ~]# cat /etc/sysconfig/modules/ipvs.modules

#!/bin/bash

ipvs_modules="ip_vs ip_vs_lc ip_vs_wlc ip_vs_rr ip_vs_wrr ip_vs_lblc ip_vs_lblcr ip_vs_dh ip_vs_sh ip_vs_nq ip_vs_sed ip_vs_ftp nf_conntrack"

for kernel_module in ${ipvs_modules}; do

/sbin/modinfo -F filename ${kernel_module} > /dev/null 2>&1

if [ $? -eq 0 ]; then

/sbin/modprobe ${kernel_module}

fi

done

[root@k8s-master1 ~]# bash /etc/sysconfig/modules/ipvs.modules

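Repeat the same steps on the other nodes.

ipvs (IP Virtual Server) implements transport-layer load balancing. It runs on a host and acts as a load balancer in front of a cluster of real servers: it forwards TCP- and UDP-based service requests to the real servers and makes their services appear as a virtual service on a single IP address.

kube-proxy supports two modes, iptables and ipvs; iptables is the default. Both are built on netfilter, but ipvs stores its rules in hash tables, so once the number of services grows large, the faster hash lookups pay off and service performance improves.

1) ipvs offers better scalability and performance for large clusters

2) ipvs supports more sophisticated load-balancing algorithms than iptables (least load, least connections, weighted, and so on)

3) ipvs supports server health checks, connection retries, and similar features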
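After running the module script it is worth confirming that the ipvs modules are actually loaded. The sketch below reads a /proc/modules-style listing on stdin and prints whichever requested modules are absent; the function name missing_modules is made up for illustration. Modules compiled into the kernel do not appear in /proc/modules, so treat a reported name as a hint rather than proof.

```shell
#!/bin/bash
# Print the modules from the argument list that are absent from a
# /proc/modules-style listing on stdin (module name is the first column).
# Usage: missing_modules ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack < /proc/modules
missing_modules() {
    local loaded mod
    loaded=$(awk '{ print $1 }')
    for mod in "$@"; do
        printf '%s\n' "$loaded" | grep -qxF "$mod" || echo "$mod"
    done
}
```

No output means every listed module is loaded.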

II. Install the docker service

[root@k8s-master1 ~]# yum install yum-utils device-mapper-persistent-data lvm2

[root@k8s-master1 ~]# curl -o /etc/yum.repos.d/docker-ce.repo https://mirrors.ustc.edu.cn/docker-ce/linux/centos/docker-ce.repo

[root@k8s-master1 ~]# sed -i 's#download.docker.com#mirrors.tuna.tsinghua.edu.cn/docker-ce#g' /etc/yum.repos.d/docker-ce.repo

[root@k8s-master1 ~]# yum install docker-ce-20.10.6 docker-ce-cli-20.10.6 containerd.io -y

[root@k8s-master1 ~]# systemctl start docker

[root@k8s-master1 ~]# systemctl enable docker

Created symlink from /etc/systemd/system/multi-user.target.wants/docker.service to /usr/lib/systemd/system/docker.service.

# Configure registry mirrors

[root@k8s-master1 ~]# cat /etc/docker/daemon.json

{

"registry-mirrors": ["https://docker.mirrors.ustc.edu.cn","https://reg-mirror.qiniu.com/","https://hub-mirror.c.163.com/"],

"exec-opts": ["native.cgroupdriver=systemd"]

}

[root@k8s-master1 ~]# systemctl daemon-reload && systemctl restart docker

Do the same on k8s-master2 and k8s-node.

III. Install the packages needed to initialize k8s

[root@k8s-master1 ~]# yum install kubelet-1.20.6 kubeadm-1.20.6 kubectl-1.20.6 --nogpgcheck -y

[root@k8s-master1 ~]# systemctl enable kubelet && systemctl start kubelet

[root@k8s-master1 ~]# systemctl status kubelet

● kubelet.service - kubelet: The Kubernetes Node Agent

Loaded: loaded (/usr/lib/systemd/system/kubelet.service; enabled; vendor preset: disabled)

Drop-In: /usr/lib/systemd/system/kubelet.service.d

└─10-kubeadm.conf

Active: activating (auto-restart) (Result: exit-code) since Wed 2022-07-27 23:47:32 CST; 9s ago

Docs: https://kubernetes.io/docs/

Process: 4919 ExecStart=/usr/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_CONFIG_ARGS $KUBELET_KUBEADM_ARGS $KUBELET_EXTRA_ARGS (code=exited, status=255)

Main PID: 4919 (code=exited, status=255)

Jul 27 23:47:32 k8s-master1 systemd[1]: kubelet.service: main process exited, code=exited, status=255/n/a

Jul 27 23:47:32 k8s-master1 systemd[1]: Unit kubelet.service entered failed state.

Jul 27 23:47:32 k8s-master1 systemd[1]: kubelet.service failed.

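Note: the kubelet is not in the running state at this point. That is expected and can be ignored: it keeps restarting until kubeadm init (or kubeadm join) has set up the k8s components, after which it runs normally.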

[root@k8s-master1 ~]# kubelet --version

Kubernetes v1.20.6

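Repeat on the other nodes.

kubeadm: the tool that initializes the k8s cluster

kubelet: installed on every node in the cluster; it starts the Pods

kubectl: used to deploy and manage applications, inspect resources, and create, delete, and update components

IV. Make the k8s apiserver highly available with keepalived + nginx

1. Install keepalived and nginx

Install nginx on k8s-master1 and k8s-master2 as a primary/backup pair (same configuration file on both)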

[root@k8s-master1 ~]# yum install nginx keepalived -y

2. Edit the nginx configuration file (identical on primary and backup)

[root@k8s-master1 ~]# cat /etc/nginx/nginx.conf

user nginx;

worker_processes auto;

error_log /var/log/nginx/error.log;

pid /run/nginx.pid;

include /usr/share/nginx/modules/*.conf;

events {

worker_connections 1024;

}

# Layer-4 load balancing across the apiserver on the two masters

stream {

log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-apiserver {

server 10.0.0.129:6443; # Master1 APISERVER IP:PORT

server 10.0.0.130:6443; # Master2 APISERVER IP:PORT

}

server {

listen 16443; # nginx shares the host with a master node, so this port must not be 6443 or it would conflict with the apiserver

proxy_pass k8s-apiserver;

}

}

http {

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/access.log main;

sendfile on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout 65;

types_hash_max_size 2048;

include /etc/nginx/mime.types;

default_type application/octet-stream;

server {

listen 80 default_server;

server_name _;

location / {

}

}

}

Check that the configuration file is valid:

[root@k8s-master1 ~]# nginx -t

nginx: [emerg] unknown directive "stream" in /etc/nginx/nginx.conf:13

nginx: configuration file /etc/nginx/nginx.conf test failed

The cause is that nginx is missing its modules (here, the stream module):

[root@k8s-master1 ~]# yum install -y nginx-all-modules.noarch

Checking the configuration again now succeeds:

[root@k8s-master1 ~]# nginx -t

nginx: the configuration file /etc/nginx/nginx.conf syntax is ok

nginx: configuration file /etc/nginx/nginx.conf test is successful

3. Configure keepalived

Primary keepalived:

[root@k8s-master1 ~]# cat /etc/keepalived/keepalived.conf

global_defs {

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id NGINX_MASTER

}

vrrp_script check_nginx {

script "/etc/keepalived/check_nginx.sh"

}

vrrp_instance VI_1 {

state MASTER

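interface eth0 # change to the actual NIC name

virtual_router_id 51 # VRRP router ID; unique per instance, identical on primary and backup

priority 100 # priority: 100 on the primary, 90 on the backup

advert_int 1 # VRRP advertisement (heartbeat) interval; default is 1 second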

authentication {

auth_type PASS

auth_pass 1111

}

# Virtual IP

virtual_ipaddress {

10.0.0.131/24

}

track_script {

check_nginx

}

}

vrrp_script: the script that checks whether nginx is working (failover is decided from nginx's state)

virtual_ipaddress: the virtual IP (VIP)

[root@k8s-master1 ~]# cat /etc/keepalived/check_nginx.sh

#!/bin/bash

#1. Check whether nginx is alive

counter=`ps -C nginx --no-header | wc -l`

if [ $counter -eq 0 ]; then

#2. If it is not, try to start nginx

service nginx start

sleep 2

#3. After waiting 2 seconds, check nginx again

counter=`ps -C nginx --no-header | wc -l`

#4. If nginx is still not running, stop keepalived so that the VIP fails over

if [ $counter -eq 0 ]; then

service keepalived stop

fi

fi

[root@k8s-master1 ~]# scp /etc/keepalived/check_nginx.sh root@k8s-master2:/etc/keepalived/check_nginx.sh

[root@k8s-master1 ~]# chmod +x /etc/keepalived/check_nginx.sh

Backup keepalived:

[root@k8s-master2 ~]# cat /etc/keepalived/keepalived.conf

global_defs {

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id NGINX_BACKUP

}

vrrp_script check_nginx {

script "/etc/keepalived/check_nginx.sh"

}

vrrp_instance VI_1 {

state BACKUP

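interface eth0 # change to the actual NIC name

virtual_router_id 51 # VRRP router ID; unique per instance, identical on primary and backup

priority 90 # priority: 90 on the backup

advert_int 1 # VRRP advertisement (heartbeat) interval; default is 1 second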

authentication {

auth_type PASS

auth_pass 1111

}

# Virtual IP

virtual_ipaddress {

10.0.0.131/24

}

track_script {

check_nginx

}

}

[root@k8s-master2 ~]# cat /etc/keepalived/check_nginx.sh

#!/bin/bash

counter=`ps -C nginx --no-header | wc -l`

if [ $counter -eq 0 ]; then

service nginx start

sleep 2

counter=`ps -C nginx --no-header | wc -l`

if [ $counter -eq 0 ]; then

service keepalived stop

fi

fi

[root@k8s-master2 ~]# chmod +x /etc/keepalived/check_nginx.sh

4. Start the services

[root@k8s-master1 keepalived]# systemctl daemon-reload

[root@k8s-master1 keepalived]# systemctl start nginx

[root@k8s-master1 keepalived]# systemctl status nginx

[root@k8s-master1 keepalived]# systemctl start keepalived

[root@k8s-master1 keepalived]# systemctl status keepalived

[root@k8s-master1 ~]# systemctl enable nginx

Created symlink from /etc/systemd/system/multi-user.target.wants/nginx.service to /usr/lib/systemd/system/nginx.service.

[root@k8s-master1 ~]# systemctl enable keepalived

Created symlink from /etc/systemd/system/multi-user.target.wants/keepalived.service to /usr/lib/systemd/system/keepalived.service.

Do the same on k8s-master2 and start the services there.

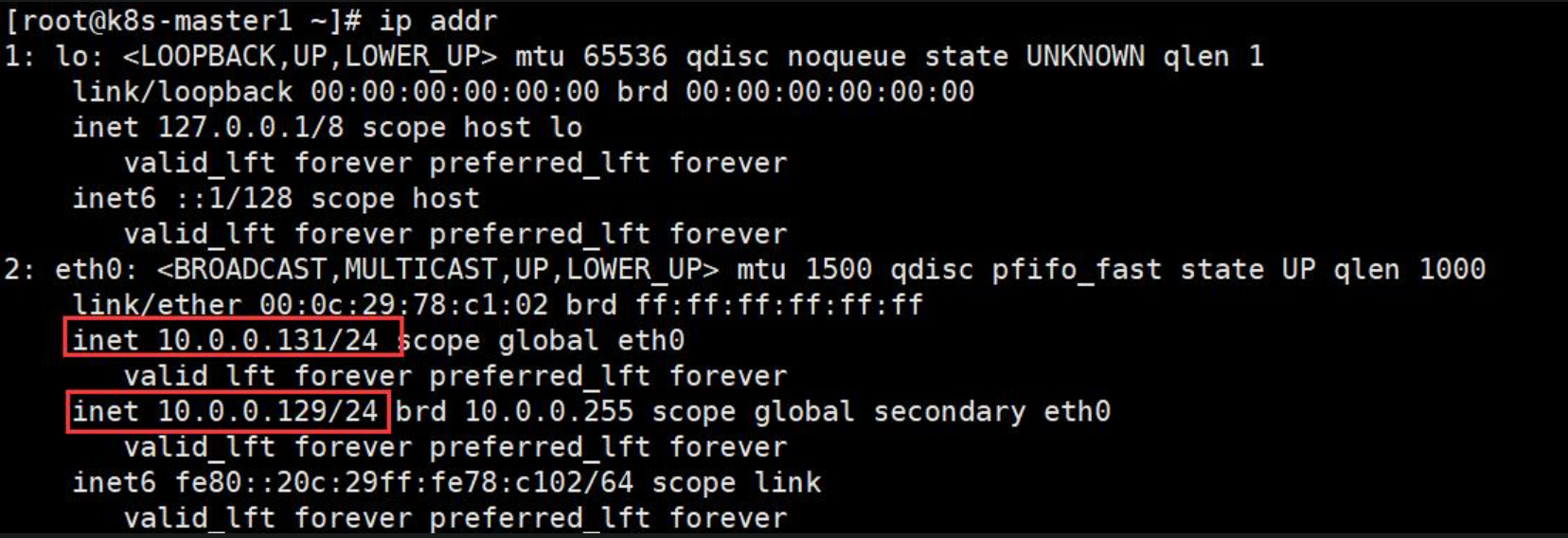

The VIP is bound successfully.

5. Test keepalived

# Stop nginx or keepalived on k8s-master1 and check whether the VIP fails over to k8s-master2

[root@k8s-master1 ~]# systemctl stop keepalived
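To see where the VIP currently lives, the address list of the interface can be checked on each master. The sketch below is one way to do that from a script; the function name has_address is made up for illustration, and it assumes the one-line output format of ip -4 -o addr show.

```shell
#!/bin/bash
# Succeed if the given IPv4 address appears in `ip -4 -o addr show` output on stdin.
# Usage: ip -4 -o addr show dev eth0 | has_address 10.0.0.131 && echo "VIP is here"
has_address() {
    # With -o, each line looks like: "2: eth0    inet 10.0.0.131/24 scope global ..."
    awk -v want="$1" '
        {
            for (i = 1; i < NF; i++)
                if ($i == "inet") {
                    split($(i + 1), a, "/")   # strip the /prefix length
                    if (a[1] == want) found = 1
                }
        }
        END { exit !found }'
}
```

Before the test the check should succeed on k8s-master1 only; after keepalived is stopped there, it should succeed on k8s-master2 instead.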

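V. Initialize the k8s cluster with kubeadm

Upload the offline image archive needed for cluster initialization to all three nodes, then load it on each: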

[root@k8s-master1 images]# docker load -i k8simage-1-20-6.tar.gz

[root@k8s-master2 images]# docker load -i k8simage-1-20-6.tar.gz

[root@k8s-node images]# docker load -i k8simage-1-20-6.tar.gz

Create the kubeadm-config.yaml file:

[root@k8s-master1 ~]# cat kubeadm-config.yaml

apiVersion: kubeadm.k8s.io/v1beta2

kind: ClusterConfiguration

kubernetesVersion: v1.20.6

controlPlaneEndpoint: 10.0.0.131:16443

imageRepository: registry.aliyuncs.com/google_containers

apiServer:

  certSANs:

  - 10.0.0.128

  - 10.0.0.129

  - 10.0.0.130

  - 10.0.0.131

networking:

  podSubnet: 10.244.0.0/16

  serviceSubnet: 10.10.0.0/16

---

apiVersion: kubeproxy.config.k8s.io/v1alpha1

kind: KubeProxyConfiguration

mode: ipvs

Note: to generate kubeadm's default configuration file for reference, run:

[root@k8s-master1 ~]# kubeadm config print init-defaults > init-config.yaml

Initialize the cluster:

[root@k8s-master1 ~]# kubeadm init --config kubeadm-config.yaml --ignore-preflight-errors=SystemVerification

Note: if initialization fails, fix kubeadm-config.yaml, reset kubeadm, and then run kubeadm init again.

[root@k8s-master1 ~]# kubeadm reset -f # reset

[root@k8s-master1 ~]# kubeadm init --config kubeadm-config.yaml --ignore-preflight-errors=SystemVerification

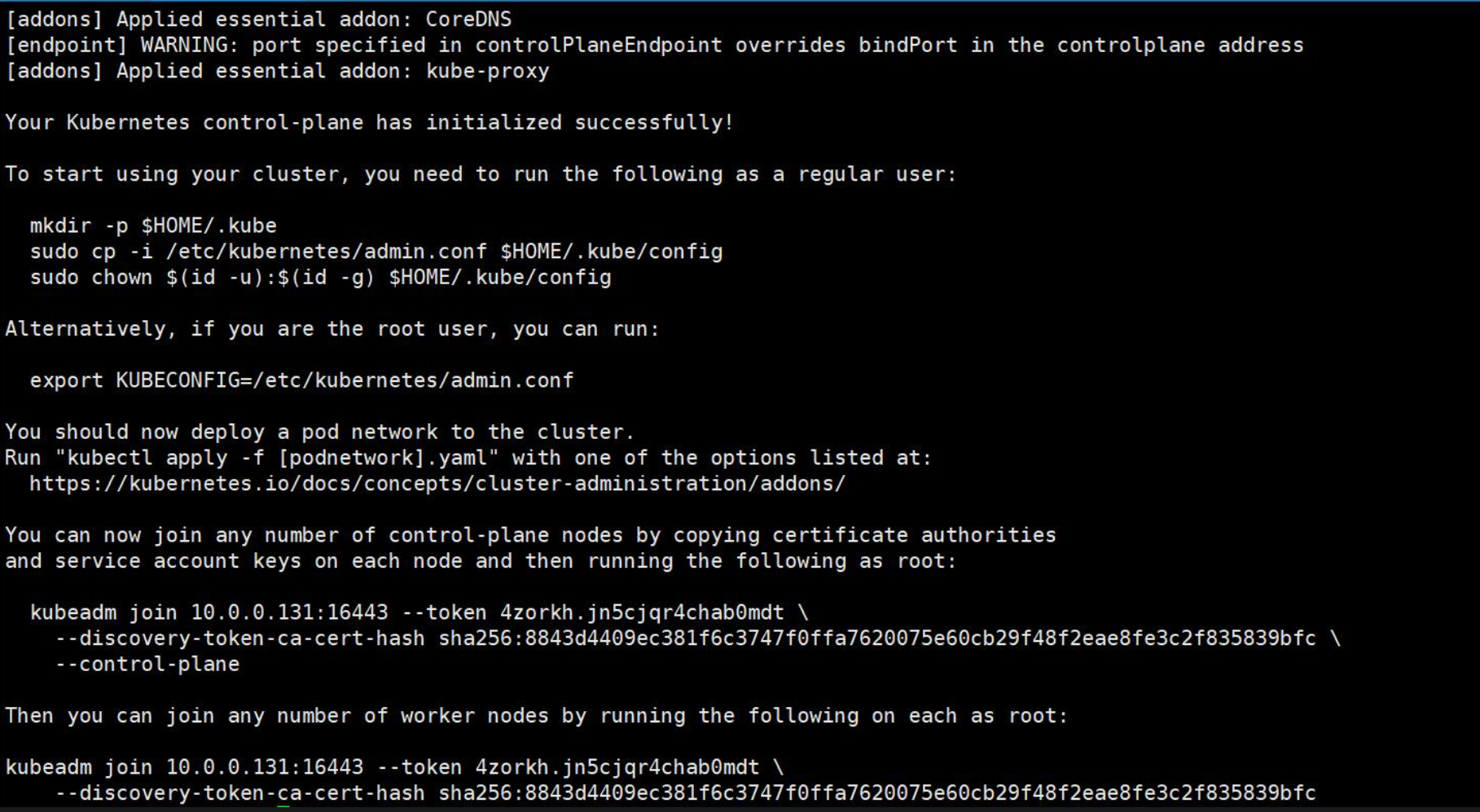

Output like the following indicates that initialization succeeded:

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of control-plane nodes by copying certificate authorities

and service account keys on each node and then running the following as root:

kubeadm join 10.0.0.131:16443 --token 4zorkh.jn5cjqr4chab0mdt \

--discovery-token-ca-cert-hash sha256:8843d4409ec381f6c3747f0ffa7620075e60cb29f48f2eae8fe3c2f835839bfc \

--control-plane

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 10.0.0.131:16443 --token 4zorkh.jn5cjqr4chab0mdt \

--discovery-token-ca-cert-hash sha256:8843d4409ec381f6c3747f0ffa7620075e60cb29f48f2eae8fe3c2f835839bfc

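Following the instructions above, set up the kubectl config file. This effectively authorizes kubectl: it uses the admin credentials in this file to manage the cluster.

[root@k8s-master1 ~]# mkdir -p $HOME/.kube

[root@k8s-master1 ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config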

[root@k8s-master1 ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

Check the cluster status:

[root@k8s-master1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 NotReady control-plane,master 8m42s v1.20.6

The node is still NotReady because no network plugin has been installed yet.

VI. Scale out the k8s cluster: add a master node

1. Create the certificate directories on k8s-master2

[root@k8s-master2 ~]# cd /root && mkdir -p /etc/kubernetes/pki/etcd &&mkdir -p ~/.kube/

2. Copy the certificates from k8s-master1 to k8s-master2

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/ca.* k8s-master2:/etc/kubernetes/pki/

ca.crt 100% 1066 381.7KB/s 00:00

ca.key 100% 1679 579.4KB/s 00:00

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/sa.* k8s-master2:/etc/kubernetes/pki/

sa.key 100% 1679 451.8KB/s 00:00

sa.pub 100% 451 139.6KB/s 00:00

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/front-proxy-ca.* k8s-master2:/etc/kubernetes/pki/

front-proxy-ca.crt 100% 1078 566.0KB/s 00:00

front-proxy-ca.key 100% 1675 28.4KB/s 00:00

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/etcd/ca.* k8s-master2:/etc/kubernetes/pki/etcd/

ca.crt 100% 1058 318.9KB/s 00:00

ca.key 100% 1679 585.2KB/s 00:00

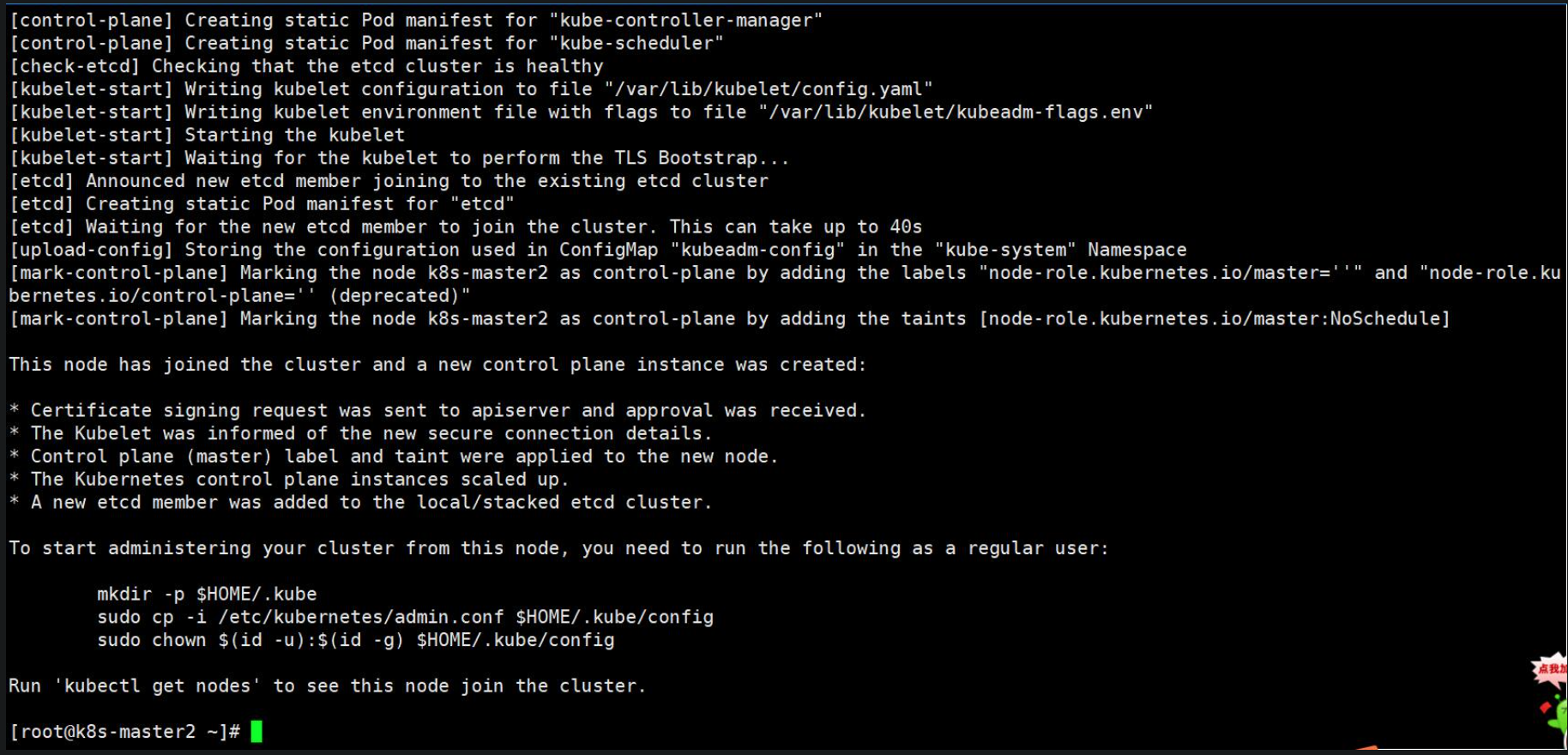
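The four scp commands above copy eight files in total. As a sketch of how the same copy could be driven from one list, the function below prints those file names relative to the PKI directory, skipping any that are missing; the function name shared_pki_files is made up for illustration.

```shell
#!/bin/bash
# Print the CA and service-account files a joining master needs, relative to
# the given PKI directory, skipping any that do not exist there.
# Usage: shared_pki_files /etc/kubernetes/pki
shared_pki_files() {
    local pki_dir=$1 f
    for f in ca.crt ca.key sa.key sa.pub \
             front-proxy-ca.crt front-proxy-ca.key \
             etcd/ca.crt etcd/ca.key; do
        if [ -f "$pki_dir/$f" ]; then
            echo "$f"
        fi
    done
}

# Copy them while preserving the etcd/ subdirectory, for example:
#   for f in $(shared_pki_files /etc/kubernetes/pki); do
#       scp "/etc/kubernetes/pki/$f" "k8s-master2:/etc/kubernetes/pki/$f"
#   done
```

If fewer than eight names are printed on k8s-master1, the cluster initialization did not complete and the join will fail.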

3. Add the master node by running the following on k8s-master2:

[root@k8s-master2 ~]# kubeadm join 10.0.0.131:16443 --token 4zorkh.jn5cjqr4chab0mdt --discovery-token-ca-cert-hash sha256:8843d4409ec381f6c3747f0ffa7620075e60cb29f48f2eae8fe3c2f835839bfc --control-plane

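Output like that in the screenshot indicates the node has joined the cluster.

Note: tokens expire (after 24 hours by default). If the old token has expired, create a new one with kubeadm token create --print-join-command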
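The sha256 value passed to --discovery-token-ca-cert-hash does not change when a new token is created: it is the SHA-256 digest of the cluster CA's public key. If only the token is at hand, the hash can be recomputed from ca.crt with openssl, following the pipeline given in the kubeadm documentation. The wrapper function name ca_cert_hash is made up for illustration, and the pipeline assumes an RSA CA key, which is what kubeadm generates by default.

```shell
#!/bin/bash
# Compute the hex digest used in --discovery-token-ca-cert-hash:
# SHA-256 over the DER-encoded public key of the CA certificate.
# Usage: echo "sha256:$(ca_cert_hash /etc/kubernetes/pki/ca.crt)"
ca_cert_hash() {
    openssl x509 -pubkey -noout -in "$1" |
        openssl rsa -pubin -outform der 2>/dev/null |
        openssl dgst -sha256 -hex |
        sed 's/^.* //'
}
```

Run on k8s-master1, the result should match the sha256 value printed by kubeadm init.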

4. Check the cluster from k8s-master1

[root@k8s-master1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 NotReady control-plane,master 17m v1.20.6

k8s-master2 NotReady control-plane,master 3m15s v1.20.6

k8s-master2 has joined the cluster.

VII. Scale out the k8s cluster: add a worker node

1. On k8s-master1, print the command for joining a node

[root@k8s-master1 ~]# kubeadm token create --print-join-command

kubeadm join 10.0.0.131:16443 --token 6cidtt.kgl82ugmll1e9fbi --discovery-token-ca-cert-hash sha256:8843d4409ec381f6c3747f0ffa7620075e60cb29f48f2eae8fe3c2f835839bfc

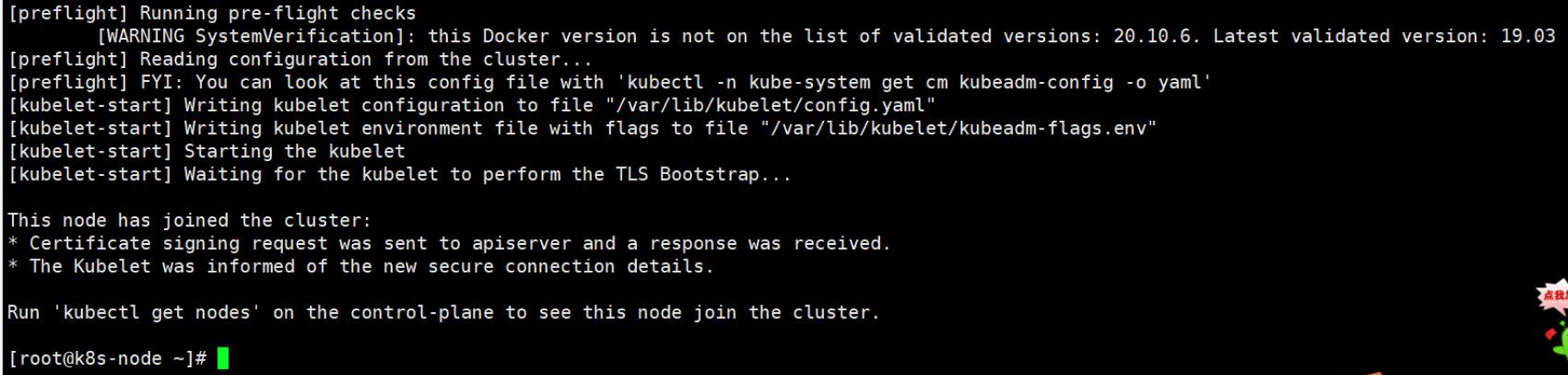

2. Run the following on k8s-node to join it to the cluster

[root@k8s-node ~]# kubeadm join 10.0.0.131:16443 --token 6cidtt.kgl82ugmll1e9fbi --discovery-token-ca-cert-hash sha256:8843d4409ec381f6c3747f0ffa7620075e60cb29f48f2eae8fe3c2f835839bfc

Output like that in the screenshot indicates the join succeeded.

3. Check the cluster from k8s-master1

[root@k8s-master1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 NotReady control-plane,master 22m v1.20.6

k8s-master2 NotReady control-plane,master 8m41s v1.20.6

k8s-node NotReady <none> 46s v1.20.6

VIII. Install the Kubernetes network add-on: Calico

Note: the manifest is available online at https://docs.projectcalico.org/manifests/calico.yaml

[root@k8s-master1 ~]# kubectl apply -f https://docs.projectcalico.org/manifests/calico.yaml

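The command fails with an error, most likely because the manifest declares its PodDisruptionBudget as policy/v1, which Kubernetes v1.20 does not serve.

Download the file, change that apiVersion to policy/v1beta1, and apply the local copy: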
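The edit can be made by hand or with sed. The sketch below assumes the failing line reads exactly apiVersion: policy/v1 (an assumption, since the error output is not shown here) and rewrites only such lines; the function name use_policy_v1beta1 is made up for illustration.

```shell
#!/bin/bash
# Rewrite "apiVersion: policy/v1" to "apiVersion: policy/v1beta1" in a manifest.
# Lines that already say policy/v1beta1, or use another API group, are left alone.
# Usage: use_policy_v1beta1 calico.yaml
use_policy_v1beta1() {
    local manifest=$1 tmp rc
    tmp=$(mktemp) || return 1
    sed -E 's#^([[:space:]]*apiVersion:[[:space:]]*policy/v1)[[:space:]]*$#\1beta1#' \
        "$manifest" > "$tmp" && cat "$tmp" > "$manifest"
    rc=$?
    rm -f "$tmp"
    return $rc
}

# Download first, then patch (URL taken from the text above):
#   curl -fsSLo calico.yaml https://docs.projectcalico.org/manifests/calico.yaml
#   use_policy_v1beta1 calico.yaml
```

A quick grep -n 'policy/' calico.yaml afterwards shows whether the change landed where expected.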

[root@k8s-master1 ~]# kubectl apply -f calico.yaml

Check the nodes:

[root@k8s-master1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 Ready control-plane,master 37m v1.20.6

k8s-master2 Ready control-plane,master 23m v1.20.6

k8s-node Ready <none> 15m v1.20.6

All nodes now show Ready.
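The same check can be scripted, for instance to poll until the network plugin has finished starting. A minimal sketch, assuming the default column layout of kubectl get nodes shown above; the function name not_ready_nodes is made up for illustration.

```shell
#!/bin/bash
# Print the names of nodes whose STATUS column is not exactly "Ready",
# reading `kubectl get nodes` output on stdin (the header row is skipped).
# Usage: kubectl get nodes | not_ready_nodes
not_ready_nodes() {
    awk 'NR > 1 && $2 != "Ready" { print $1 }'
}
```

An empty result means every node reports Ready. A cordoned node (status Ready,SchedulingDisabled) is also listed, because the comparison is exact.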

Check the system pods:

[root@k8s-master1 ~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

calico-kube-controllers-6949477b58-mx6wz 1/1 Running 1 19m

calico-node-58k68 1/1 Running 3 19m

calico-node-cnkd4 1/1 Running 2 19m

calico-node-vk766 1/1 Running 3 19m

coredns-7f89b7bc75-5l98h 1/1 Running 0 49m

coredns-7f89b7bc75-gcqx6 1/1 Running 0 49m

etcd-k8s-master1 1/1 Running 2 49m

etcd-k8s-master2 1/1 Running 2 35m

kube-apiserver-k8s-master1 1/1 Running 4 49m

kube-apiserver-k8s-master2 1/1 Running 2 35m

kube-controller-manager-k8s-master1 1/1 Running 6 49m

kube-controller-manager-k8s-master2 1/1 Running 5 35m

kube-proxy-4js8h 1/1 Running 0 49m

kube-proxy-fq2nb 1/1 Running 0 35m

kube-proxy-t4ptw 1/1 Running 0 27m

kube-scheduler-k8s-master1 1/1 Running 5 49m

kube-scheduler-k8s-master2 1/1 Running 4 35m

IX. Verify that a pod created in k8s can reach the network

[root@k8s-master1 ~]# kubectl run busybox --image busybox:latest --restart=Never --rm -it busybox -- sh

If you don't see a command prompt, try pressing enter.

/ # ifconfig

eth0 Link encap:Ethernet HWaddr 4E:53:0F:E0:E0:D4

inet addr:10.244.113.133 Bcast:10.244.113.133 Mask:255.255.255.255

UP BROADCAST RUNNING MULTICAST MTU:1480 Metric:1

RX packets:5 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:438 (438.0 B) TX bytes:0 (0.0 B)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

/ # ping baidu.com

PING baidu.com (39.156.66.10): 56 data bytes

64 bytes from 39.156.66.10: seq=0 ttl=127 time=28.332 ms

64 bytes from 39.156.66.10: seq=1 ttl=127 time=27.305 ms

64 bytes from 39.156.66.10: seq=2 ttl=127 time=27.632 ms

64 bytes from 39.156.66.10: seq=3 ttl=127 time=31.171 ms

^C

--- baidu.com ping statistics ---

4 packets transmitted, 4 packets received, 0% packet loss

round-trip min/avg/max = 27.305/28.610/31.171 ms

The pod can reach the network, which shows that the Calico network plugin is installed and working.

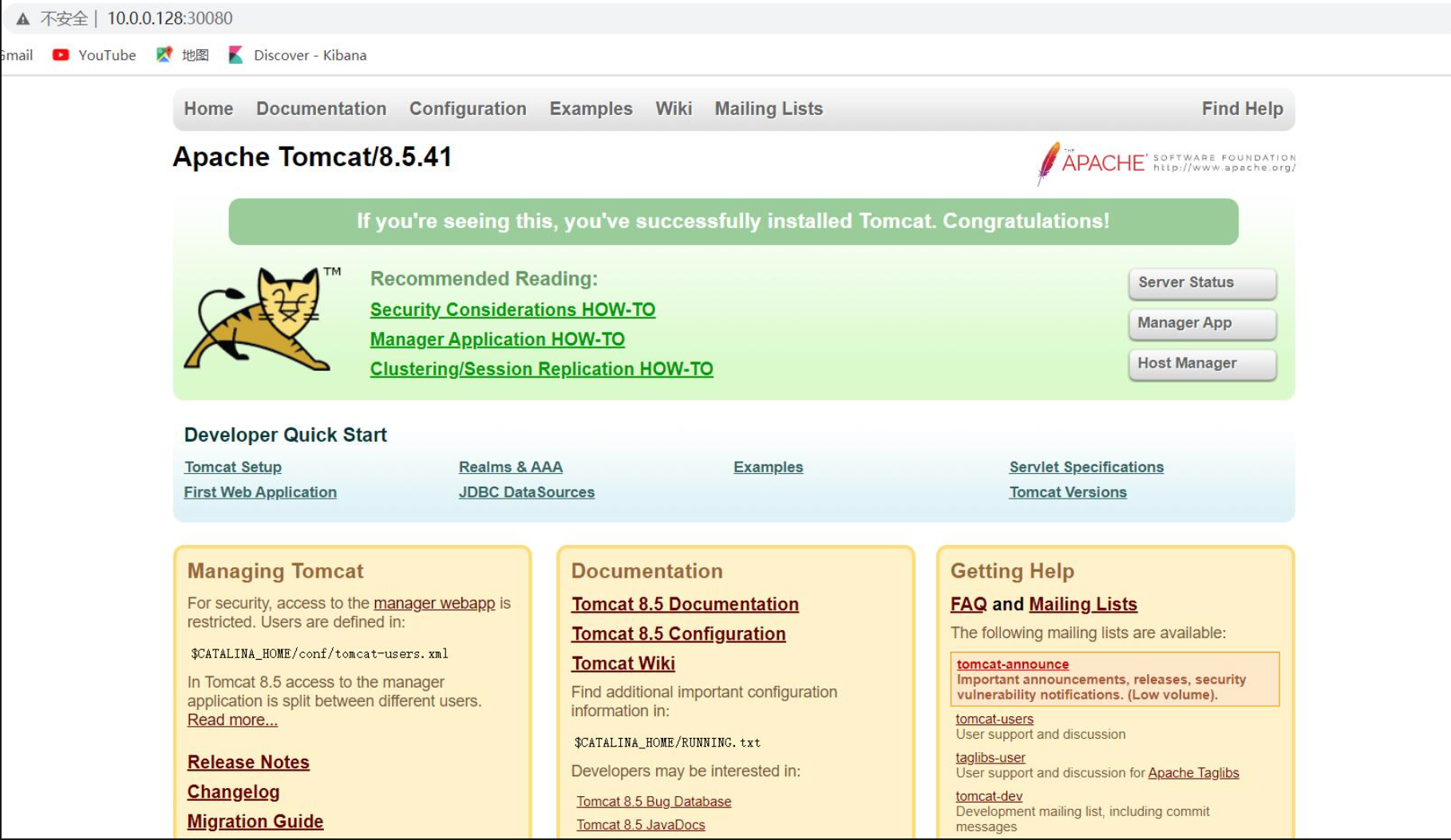

X. Deploy a tomcat service in the cluster as a test

Upload the tomcat image archive to k8s-node and load it to obtain the tomcat:8.5-jre8-alpine image

[root@k8s-node images]# docker load -i tomcat.tar.gz

f1b5933fe4b5: Loading layer [==================================================>] 5.796MB/5.796MB

9b9b7f3d56a0: Loading layer [==================================================>] 3.584kB/3.584kB

edd61588d126: Loading layer [==================================================>] 80.28MB/80.28MB

48988bb7b861: Loading layer [==================================================>] 2.56kB/2.56kB

8e0feedfd296: Loading layer [==================================================>] 24.06MB/24.06MB

aac21c2169ae: Loading layer [==================================================>] 2.048kB/2.048kB

Loaded image: tomcat:8.5-jre8-alpine

Create a tomcat pod:

[root@k8s-master1 ~]# cat >tomcat.yaml <<EOF

> apiVersion: v1 # Pod belongs to the core v1 API group

> kind: Pod # the resource being created is a Pod

> metadata: # metadata

>   name: demo-pod # pod name

>   namespace: default # namespace the pod belongs to

>   labels:

>     app: myapp # pod label

>     env: dev # pod label

> spec:

>   containers: # a list of container objects; it may hold several entries, each with its own name

>   - name: tomcat-pod-java # container name

>     ports:

>     - containerPort: 8080

>     image: tomcat:8.5-jre8-alpine # image used by the container

>     imagePullPolicy: IfNotPresent

> EOF

[root@k8s-master1 ~]# kubectl apply -f tomcat.yaml

pod/demo-pod created

[root@k8s-master1 ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

demo-pod 0/1 ContainerCreating 0 12s

[root@k8s-master1 ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

demo-pod 1/1 Running 0 18s

Create a service to expose tomcat:

[root@k8s-master1 ~]# cat >tomcat-service.yaml <<EOF

> apiVersion: v1

> kind: Service

> metadata:

>   name: tomcat

> spec:

>   type: NodePort

>   ports:

>   - port: 8080

>     nodePort: 30080 # port opened on the nodes for external access

>   selector:

>     app: myapp

>     env: dev

> EOF

[root@k8s-master1 ~]# kubectl apply -f tomcat-service.yaml

service/tomcat created

[root@k8s-master1 ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.10.0.1 <none> 443/TCP 78m

tomcat NodePort 10.10.104.169 <none> 8080:30080/TCP 19s

Open http://<k8s-node IP>:30080 in a browser (http://10.0.0.128:30080 in this setup) to see the tomcat page.
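Without a browser, the same check can be done from the command line. The sketch below pulls the NodePort out of the kubectl get svc output, assuming the default column layout shown above; the function name node_port_of is made up for illustration.

```shell
#!/bin/bash
# Extract the NodePort of a named service from `kubectl get svc` output on stdin.
# The PORT(S) column looks like "8080:30080/TCP"; the part after ':' is the NodePort.
# Usage: kubectl get svc | node_port_of tomcat
node_port_of() {
    awk -v svc="$1" '$1 == svc && $5 ~ /:/ { split($5, p, "[/:]"); print p[2] }'
}

# Then, from any machine that can reach the node (10.0.0.128 is k8s-node here):
#   curl -sI "http://10.0.0.128:$(kubectl get svc | node_port_of tomcat)/" | head -n 1
```

For a ClusterIP service such as kubernetes the function prints nothing, since its PORT(S) column has no NodePort part.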