Reference notes: https://www.cnblogs.com/yinzhengjie/p/17069566.html

I. Environment preparation

Prepare five machines for a binary deployment of a highly available K8S cluster:

| Host | IP |
| k8s-master01 | 10.0.0.201 |
| k8s-master02 | 10.0.0.202 |
| k8s-master03 | 10.0.0.203 |
| k8s-node01 | 10.0.0.204 |
| k8s-node02 | 10.0.0.205 |

II. Preparing the environment for the K8S binary deployment

1. Download the software packages on all nodes

| [root@k8s-master01 ~] |

| [root@k8s-master01 ~] |

| [root@k8s-master01 ~/softwares] |

| total 24 |

| drwxr-xr-x 2 root root 4096 Apr 27 21:00 01-Linux常用的软件包 |

| drwxr-xr-x 2 root root 110 Apr 27 21:00 02-Linux-kernel |

| drwxr-xr-x 2 root root 8192 Apr 27 21:00 03-Linux-yum-update |

| drwxr-xr-x 2 root root 4096 Apr 27 21:00 04-Linux-ipvsadm |

| drwxr-xr-x 2 root root 4096 Apr 27 21:00 05-Linux-docker-ce-19_03 |

| drwxr-xr-x 2 root root 88 Apr 27 21:00 06-etcd_k8s |

| drwxr-xr-x 11 root root 171 Apr 27 21:01 07-k8s-ha-install |

| drwxr-xr-x 2 root root 36 Apr 27 21:01 08-cfssl |

| drwxr-xr-x 2 root root 195 Apr 27 21:01 09-keepalive-haproxy |

2. Install common packages on all nodes

| yum -y install bind-utils expect rsync wget jq psmisc vim net-tools telnet yum-utils device-mapper-persistent-data lvm2 git ntpdate |

| [root@k8s-master01 ~/softwares] |

3. Set up passwordless SSH login across the cluster and write a sync script

3.1 Set the hostname; run the matching command on each node

| hostnamectl set-hostname k8s-master01 |

| hostnamectl set-hostname k8s-master02 |

| hostnamectl set-hostname k8s-master03 |

| hostnamectl set-hostname k8s-node01 |

| hostnamectl set-hostname k8s-node02 |

3.2 Configure hosts-file resolution for the hostnames on all nodes

| [root@k8s-master01 ~] |

| 10.0.0.201 k8s-master01 |

| 10.0.0.202 k8s-master02 |

| 10.0.0.203 k8s-master03 |

| 10.0.0.204 k8s-node01 |

| 10.0.0.205 k8s-node02 |

| EOF |
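The command that opened the heredoc above did not survive in these notes. As a minimal sketch, the same entries can be generated from a single node list, which keeps the hosts file and the later scripts in agreement. The helper name `render_hosts` and the `hostname=ip` argument format are assumptions made for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print one "IP hostname" line per "hostname=ip" argument.
render_hosts() {
  local entry
  for entry in "$@"; do
    # Text after the first "=" is the IP, text before it is the hostname.
    printf '%s %s\n' "${entry#*=}" "${entry%%=*}"
  done
}

render_hosts \
  k8s-master01=10.0.0.201 k8s-master02=10.0.0.202 k8s-master03=10.0.0.203 \
  k8s-node01=10.0.0.204 k8s-node02=10.0.0.205
```

Redirecting the output with `>> /etc/hosts` would append the entries; review the output first so that repeated runs do not add duplicates.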

3.3 Configure passwordless login from k8s-master01 to the other nodes

| [root@k8s-master01 ~] |

| |

| |

| |

| |

| ssh-keygen -t rsa -P "" -f /root/.ssh/id_rsa -q |

| |

| |

| export mypasswd=1 |

| |

| |

| k8s_host_list=(k8s-master01 k8s-master02 k8s-master03 k8s-node01 k8s-node02) |

| |

| |

| for i in ${k8s_host_list[@]};do |

| expect -c " |

| spawn ssh-copy-id -i /root/.ssh/id_rsa.pub root@$i |

| expect { |

| \"*yes/no*\" {send \"yes\r\"; exp_continue} |

| \"*password*\" {send \"$mypasswd\r\"; exp_continue} |

| }" |

| done |

| EOF |

| [root@k8s-master01 ~] |

3.4 Write the sync script on k8s-master01

| [root@k8s-master01 ~] |

| |

| |

| |

| if [ $# -ne 1 ];then |

| echo "Usage: $0 /path/to/file (absolute path)" |

| exit 1 |

| fi |

| |

| if [ ! -e "$1" ];then |

| echo "[ $1 ] dir or file not found!" |

| exit 1 |

| fi |

| |

| fullpath=`dirname $1` |

| |

| basename=`basename $1` |

| |

| cd $fullpath |

| |

| k8s_host_list=(k8s-master01 k8s-master02 k8s-master03 k8s-node01 k8s-node02) |

| |

| for host in ${k8s_host_list[@]};do |

| tput setaf 2 |

| echo ===== rsyncing ${host}: $basename ===== |

| tput setaf 7 |

| rsync -az $basename `whoami`@${host}:$fullpath |

| if [ $? -eq 0 ];then |

| echo "Command succeeded!" |

| fi |

| done |

| EOF |

| [root@k8s-master01 ~] |

| |

3.5 Test that the sync script works

| [root@k8s-master01 ~] |

| [root@k8s-master01 ~] |

| ===== rsyncing k8s-master01: hosts ===== |

| Command succeeded! |

| ===== rsyncing k8s-master02: hosts ===== |

| Command succeeded! |

| ===== rsyncing k8s-master03: hosts ===== |

| Command succeeded! |

| ===== rsyncing k8s-node01: hosts ===== |

| Command succeeded! |

| ===== rsyncing k8s-node02: hosts ===== |

| Command succeeded! |
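The sync script splits its argument into a directory and a file name, changes into the directory, and rsyncs the file name to the same directory on every host. A small sketch of that split with quoting added, so that paths containing spaces survive; the function name `split_path` is hypothetical and not part of the original script.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the parent directory and the base name of a path,
# one per line, quoting the argument so spaces are preserved.
split_path() {
  local target=$1
  printf '%s\n%s\n' "$(dirname -- "$target")" "$(basename -- "$target")"
}

split_path /etc/hosts
```

The first line of the output is what the script calls `fullpath` and the second is `basename`.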

4. Basic Linux environment tuning

4.1 Disable firewalld, selinux and NetworkManager on all nodes

| systemctl disable --now firewalld |

| systemctl disable --now NetworkManager |

| setenforce 0 |

| sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/sysconfig/selinux |

| sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config |

4.2 Disable the swap partition on all nodes and comment out swap in fstab

| [root@k8s-master01 ~/softwares] |

| vm.swappiness = 0 |

| [root@k8s-master01 ~/softwares] |

| [root@k8s-master01 ~/softwares] |

| total used free shared buff/cache available |

| Mem: 1.9G 177M 838M 9.5M 965M 1.6G |

| Swap: 0B 0B 0B |
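The commands for this step were lost after the prompts; only their output remains. The `vm.swappiness = 0` line and the empty Swap row are consistent with running `swapoff -a` and `sysctl -w vm.swappiness=0`, but that is an inference. For the fstab part, here is a minimal sketch written as a filter so it can be tried on a copy first; the function name `comment_swap` is an assumption.

```shell
#!/usr/bin/env bash
# Hypothetical filter: read fstab content on stdin and prefix "#" to every
# line that is not already a comment and has "swap" as a whitespace-delimited field.
comment_swap() {
  sed -E '/^[[:space:]]*#/! { /[[:space:]]swap[[:space:]]/ s/^/#/; }'
}

# Example on sample input rather than on the real /etc/fstab.
printf '%s\n' \
  '/dev/mapper/centos-root / xfs defaults 0 0' \
  '/dev/mapper/centos-swap swap swap defaults 0 0' | comment_swap
```

A cautious way to apply it is `comment_swap < /etc/fstab > /tmp/fstab.new`, then compare the two files before replacing the original.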

4.3 Synchronize time on all nodes

| - Manually set the time zone and sync the clock |

| ln -svf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime |

| ntpdate ntp.aliyun.com |

| |

| - Sync periodically with a cron job ("crontab -e") |

| */5 * * * * /usr/sbin/ntpdate ntp.aliyun.com |

4.4 Configure limits on all nodes

| [root@k8s-master01 ~/softwares] |

| * soft nofile 131072 |

| * hard nofile 131072 |

| * soft nproc 655350 |

| * hard nproc 655350 |

| * soft memlock unlimited |

| * hard memlock unlimited |

| EOF |

4.5 Tune the sshd service on all nodes

| sed -i 's@#UseDNS yes@UseDNS no@g' /etc/ssh/sshd_config |

| sed -i 's@^GSSAPIAuthentication yes@GSSAPIAuthentication no@g' /etc/ssh/sshd_config |

| |

| |

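| - UseDNS option: |

| When enabled, the server handles each SSH login attempt by first running a reverse DNS (PTR) lookup on the client IP to get a hostname, then a forward A-record lookup on that hostname to check that it maps back to the original IP. This is a guard against client spoofing, but hosts on dynamic IPs usually have no PTR record, so leaving the option on only wastes time; it is better to turn it off. |

| |

| - GSSAPIAuthentication: |

| When this parameter is on (GSSAPIAuthentication yes), some SSH logins become very slow because the server has GSSAPI enabled. During login the client has to reverse-resolve the server IP, and if that IP has no PTR record the login tends to hang at this step. |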

4.6 Linux kernel tuning

| [root@k8s-master01 ~/softwares] |

| net.ipv4.ip_forward = 1 |

| net.bridge.bridge-nf-call-iptables = 1 |

| net.bridge.bridge-nf-call-ip6tables = 1 |

| net.ipv6.conf.all.disable_ipv6 = 1 |

| fs.may_detach_mounts = 1 |

| vm.overcommit_memory=1 |

| vm.panic_on_oom=0 |

| fs.inotify.max_user_watches=89100 |

| fs.file-max=52706963 |

| fs.nr_open=52706963 |

| net.netfilter.nf_conntrack_max=2310720 |

| net.ipv4.tcp_keepalive_time = 600 |

| net.ipv4.tcp_keepalive_probes = 3 |

| net.ipv4.tcp_keepalive_intvl =15 |

| net.ipv4.tcp_max_tw_buckets = 36000 |

| net.ipv4.tcp_tw_reuse = 1 |

| net.ipv4.tcp_max_orphans = 327680 |

| net.ipv4.tcp_orphan_retries = 3 |

| net.ipv4.tcp_syncookies = 1 |

| net.ipv4.tcp_max_syn_backlog = 16384 |

| net.ipv4.ip_conntrack_max = 65536 |

| net.ipv4.tcp_max_syn_backlog = 16384 |

| net.ipv4.tcp_timestamps = 0 |

| net.core.somaxconn = 16384 |

| EOF |

| [root@k8s-master01 ~/softwares] |
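The parameter list above sets `net.ipv4.tcp_max_syn_backlog` twice. Both lines carry the same value here, so nothing breaks, but repeated keys are easy to miss in a long file. A minimal sketch that lists keys appearing more than once; the function name `dup_sysctl_keys` is hypothetical, and the file path in the usage note is an assumption because the original heredoc target was lost.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read sysctl-style "key = value" lines on stdin and
# print each key once, at its second occurrence.
dup_sysctl_keys() {
  awk -F= '
    /^[ \t]*(#|;|$)/ { next }          # skip comments and blank lines
    {
      key = $1
      gsub(/[ \t]/, "", key)           # strip spaces around the key
      if (seen[key]++ == 1) print key  # report on the second sighting only
    }
  '
}

printf '%s\n' \
  'net.ipv4.tcp_max_syn_backlog = 16384' \
  'net.ipv4.ip_conntrack_max = 65536' \
  'net.ipv4.tcp_max_syn_backlog = 16384' | dup_sysctl_keys
```

Against a real file the call would look like `dup_sysctl_keys < /etc/sysctl.d/k8s.conf`, with the path adjusted to wherever the parameters were written.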

4.7 Change the terminal prompt colors

| cat <<EOF >> ~/.bashrc |

| PS1='[\[\e[34;1m\]\u@\[\e[0m\]\[\e[32;1m\]\H\[\e[0m\]\[\e[31;1m\] \W\[\e[0m\]]# ' |

| EOF |

| source ~/.bashrc |

5. Upgrade the Linux kernel on all nodes

5.1 Download and install the kernel packages

| wget http://193.49.22.109/elrepo/kernel/el7/x86_64/RPMS/kernel-ml-devel-4.19.12-1.el7.elrepo.x86_64.rpm |

| wget http://193.49.22.109/elrepo/kernel/el7/x86_64/RPMS/kernel-ml-4.19.12-1.el7.elrepo.x86_64.rpm |

| |

| [root@k8s-master01 ~/softwares] |

5.2 Change the kernel boot order

| [root@k8s-master01 ~/softwares] |

| Generating grub configuration file ... |

| Found linux image: /boot/vmlinuz-4.19.12-1.el7.elrepo.x86_64 |

| Found initrd image: /boot/initramfs-4.19.12-1.el7.elrepo.x86_64.img |

| Found linux image: /boot/vmlinuz-3.10.0-1160.el7.x86_64 |

| Found initrd image: /boot/initramfs-3.10.0-1160.el7.x86_64.img |

| Found linux image: /boot/vmlinuz-0-rescue-3f247cb2747e45bc9e7695f23024427b |

| Found initrd image: /boot/initramfs-0-rescue-3f247cb2747e45bc9e7695f23024427b.img |

| done |

| [root@k8s-master01 ~/softwares] |

| [root@k8s-master01 ~/softwares] |

| /boot/vmlinuz-4.19.12-1.el7.elrepo.x86_64 |

5.3 Update the installed packages but skip the kernel, since the kernel has already been upgraded to the target version

| |

| [root@k8s-master01 ~/softwares] |

| |

| |

6. Install ipvsadm on all nodes so that kube-proxy can load-balance with IPVS

6.1 Install ipvsadm and related tools

| |

| [root@k8s-master01 ~/softwares] |

6.2 Load the modules manually

| [root@k8s-master01 ~/softwares] |

| [root@k8s-master01 ~/softwares] |

| [root@k8s-master01 ~/softwares] |

| [root@k8s-master01 ~/softwares] |

| [root@k8s-master01 ~/softwares] |

6.3 Create the configuration file for the modules to load at boot

| [root@k8s-master01 ~/softwares] |

| ip_vs |

| ip_vs_lc |

| ip_vs_wlc |

| ip_vs_rr |

| ip_vs_wrr |

| ip_vs_lblc |

| ip_vs_lblcr |

| ip_vs_dh |

| ip_vs_sh |

| ip_vs_fo |

| ip_vs_nq |

| ip_vs_sed |

| ip_vs_ftp |

| ip_vs_sh |

| nf_conntrack |

| ip_tables |

| ip_set |

| xt_set |

| ipt_set |

| ipt_rpfilter |

| ipt_REJECT |

| ipip |

| EOF |

6.4 Load the modules. As the output below shows, these are the modules of the Linux 3.10.X kernel, which is not the kernel we need!

| [root@k8s-master01 ~/softwares] |

| ip_vs_sh 12688 0 |

| ip_vs_wrr 12697 0 |

| ip_vs_rr 12600 0 |

| ip_vs 145458 6 ip_vs_rr,ip_vs_sh,ip_vs_wrr |

| nf_conntrack 139264 1 ip_vs |

| libcrc32c 12644 3 xfs,ip_vs,nf_conntrack |

| |

| Tip: |

| In Linux kernel 4.19 and later, the former "nf_conntrack_ipv4" module has been renamed to "nf_conntrack". |
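The rename matters when the module is loaded by hand, because the name to pass to `modprobe` depends on the running kernel. A minimal sketch that picks the name from a kernel release string; the function name `conntrack_module` is an assumption, and the 4.19 cut-off is taken from the tip above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the conntrack module name for a kernel release
# string such as the output of `uname -r`.
conntrack_module() {
  local release=$1 major minor
  major=${release%%.*}     # text before the first dot
  minor=${release#*.}      # drop "major."
  minor=${minor%%.*}       # keep text up to the next dot
  if [ "$major" -gt 4 ] || { [ "$major" -eq 4 ] && [ "$minor" -ge 19 ]; }; then
    echo nf_conntrack
  else
    echo nf_conntrack_ipv4
  fi
}

conntrack_module "$(uname -r)"
```

On a node this could be used as `modprobe -- "$(conntrack_module "$(uname -r)")"`.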

7. Reboot all nodes and verify that the kernel and modules are configured correctly

7.1 Check the running kernel version

| [root@k8s-master01 ~/softwares] |

| 3.10.0-1160.el7.x86_64 |

7.2 Check the default boot kernel

| [root@k8s-master01 ~/softwares] |

| /boot/vmlinuz-4.19.12-1.el7.elrepo.x86_64 |

7.3 Reboot all nodes

| [root@k8s-master01 ~/softwares] |

7.4 Check that the IPVS kernel modules loaded successfully. As the output below shows, more modules are now available

| [root@k8s-master01 ~] |

| ip_vs_ftp 16384 0 |

| nf_nat 32768 1 ip_vs_ftp |

| ip_vs_sed 16384 0 |

| ip_vs_nq 16384 0 |

| ip_vs_fo 16384 0 |

| ip_vs_sh 16384 0 |

| ip_vs_dh 16384 0 |

| ip_vs_lblcr 16384 0 |

| ip_vs_lblc 16384 0 |

| ip_vs_wrr 16384 0 |

| ip_vs_rr 16384 0 |

| ip_vs_wlc 16384 0 |

| ip_vs_lc 16384 0 |

| ip_vs 151552 24 ip_vs_wlc,ip_vs_rr,ip_vs_dh,ip_vs_lblcr,ip_vs_sh,ip_vs_fo,ip_vs_nq,ip_vs_lblc,ip_vs_wrr,ip_vs_lc,ip_vs_sed,ip_vs_ftp |

| nf_conntrack 143360 2 nf_nat,ip_vs |

| nf_defrag_ipv6 20480 1 nf_conntrack |

| nf_defrag_ipv4 16384 1 nf_conntrack |

| libcrc32c 16384 4 nf_conntrack,nf_nat,xfs,ip_vs |

7.5 Check the kernel version again

| [root@k8s-master01 ~] |

| 4.19.12-1.el7.elrepo.x86_64 |

III. Installing the base components

1. Deploy the docker environment on all nodes

1.1 Install docker

| |

| [root@k8s-master01 ~/softwares] |

1.2 Switch docker's CgroupDriver to systemd and configure the registry mirrors and private registry address

| [root@k8s-master01 ~/softwares] |

| { |

| "exec-opts": ["native.cgroupdriver=systemd"], |

| "registry-mirrors": ["https://registry.docker-cn.com","https://tuv7rqqq.mirror.aliyuncs.com"], |

| "log-driver": "json-file", |

| "log-opts": {"max-size": "200m"}, |

| "storage-driver": "overlay2" |

| } |

| EOF |

1.3 Enable start on boot

| [root@k8s-master01 ~/softwares] |

| Created symlink from /etc/systemd/system/multi-user.target.wants/docker.service to /usr/lib/systemd/system/docker.service. |

| [root@k8s-master01 ~/softwares] |

| ● docker.service - Docker Application Container Engine |

| Loaded: loaded (/usr/lib/systemd/system/docker.service; enabled; vendor preset: disabled) |

| Active: active (running) since Thu 2023-04-27 22:18:56 CST; 8ms ago |

| Docs: https://docs.docker.com |

| Main PID: 1687 (dockerd) |

| Tasks: 10 |

| Memory: 40.4M |

| CGroup: /system.slice/docker.service |

| └─1687 /usr/bin/dockerd -H fd:// --containerd=/run/contai... |

| |

| Apr 27 22:18:55 k8s-master01 dockerd[1687]: time="2023-04-27T22:18:5... |

| Apr 27 22:18:55 k8s-master01 dockerd[1687]: time="2023-04-27T22:18:5... |

| Apr 27 22:18:55 k8s-master01 dockerd[1687]: time="2023-04-27T22:18:5... |

| Apr 27 22:18:55 k8s-master01 dockerd[1687]: time="2023-04-27T22:18:5... |

| Apr 27 22:18:56 k8s-master01 dockerd[1687]: time="2023-04-27T22:18:5... |

| Apr 27 22:18:56 k8s-master01 dockerd[1687]: time="2023-04-27T22:18:5... |

| Apr 27 22:18:56 k8s-master01 dockerd[1687]: time="2023-04-27T22:18:5... |

| Apr 27 22:18:56 k8s-master01 dockerd[1687]: time="2023-04-27T22:18:5... |

| Apr 27 22:18:56 k8s-master01 dockerd[1687]: time="2023-04-27T22:18:5... |

| Apr 27 22:18:56 k8s-master01 systemd[1]: Started Docker Application ... |

| Hint: Some lines were ellipsized, use -l to show in full. |

| [root@k8s-master01 ~/softwares]# docker info | grep "Cgroup Driver" |

| Cgroup Driver: systemd |

| [root@k8s-master01 ~/softwares]# docker info | grep "Registry Mirrors" -A 2 |

| Registry Mirrors: |

| https://registry.docker-cn.com/ |

| https://tuv7rqqq.mirror.aliyuncs.com/ |

| |

1.4 Configure command auto-completion

| |

| [root@k8s-master01 ~/softwares] |

2. Deploy the etcd and K8S binaries

2.1 Download the K8S and etcd packages on all nodes

| wget https://dl.k8s.io/v1.23.15/kubernetes-server-linux-amd64.tar.gz |

| wget https://github.com/etcd-io/etcd/releases/download/v3.5.2/etcd-v3.5.2-linux-amd64.tar.gz |

2.2 Extract the K8S binaries into a directory on PATH (master nodes)

| [root@k8s-master01 ~/softwares] |

2.3 Extract the etcd binaries into a directory on PATH (master nodes)

| [root@k8s-master01 ~/softwares] |

2.4 Send the components from k8s-master01 to the node machines

| [root@k8s-master01 ~/softwares] |

2.5 Check the kubernetes version on all nodes

| [root@k8s-master01 ~/softwares] |

| Kubernetes v1.23.15 |

| [root@k8s-master01 ~/softwares] |

| Kubernetes v1.23.15 |

| [root@k8s-master01 ~/softwares] |

| Kubernetes v1.23.15 |

| [root@k8s-master01 ~/softwares] |

| etcdctl version: 3.5.2 |

| API version: 3.5 |

| [root@k8s-master01 ~/softwares] |

| Kubernetes v1.23.15 |

| [root@k8s-master01 ~/softwares] |

| Kubernetes v1.23.15 |

| [root@k8s-master01 ~/softwares] |

| Client Version: version.Info{Major:"1", Minor:"23", GitVersion:"v1.23.15", GitCommit:"b84cb8ab29366daa1bba65bc67f54de2f6c34848", GitTreeState:"clean", BuildDate:"2022-12-08T10:49:13Z", GoVersion:"go1.17.13", Compiler:"gc", Platform:"linux/amd64"} |

| The connection to the server localhost:8080 was refused - did you specify the right host or port? |

| |

| |

| [root@k8s-node02 softwares] |

| -bash: kube-apiserver: command not found |

| [root@k8s-node02 softwares] |

| -bash: kube-controller-manager: command not found |

| [root@k8s-node02 softwares] |

| -bash: kube-scheduler: command not found |

| [root@k8s-node02 softwares] |

| -bash: etcdctl: command not found |

| [root@k8s-node02 softwares] |

| [root@k8s-node02 softwares] |

| Kubernetes v1.23.15 |

| [root@k8s-node02 softwares] |

| -bash: kubectl: command not found |

2.6 Create the working directories on all nodes

| [root@k8s-master01 ~/softwares] |

2.7 Switch to the branch that matches the K8S version being deployed

| git clone https://github.com/dotbalo/k8s-ha-install.git |

| cd k8s-ha-install/ |

| git checkout manual-installation-v1.23.x |

| |

| |

IV. Generating the K8S cluster certificates

1. Download the certificate management tools

1.1 Download the certificate management tools on k8s-master01

| |

| |

| |

| |

| [root@k8s-master01 ~/softwares] |

| [root@k8s-master01 ~/softwares] |

1.2 Create the etcd certificate directory on all master nodes

| [root@k8s-master01 ~/softwares] |

1.3 Create the kubernetes directories on all nodes

| [root@k8s-master01 ~/softwares] |

2. Generate the etcd certificates on k8s-master01

2.1 Generate the etcd CA certificate and the CA key on k8s-master01

| [root@k8s-master01 ~/softwares] |

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/pki] |

| |

| |

| 2023/04/27 22:40:46 [INFO] generating a new CA key and certificate from CSR |

| 2023/04/27 22:40:46 [INFO] generate received request |

| 2023/04/27 22:40:46 [INFO] received CSR |

| 2023/04/27 22:40:46 [INFO] generating key: rsa-2048 |

| 2023/04/27 22:40:47 [INFO] encoded CSR |

| 2023/04/27 22:40:47 [INFO] signed certificate with serial number 487485893086809550096199093314791134992298961086 |

2.2 Issue the etcd certificate on k8s-master01

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/pki] |

| -ca=/etc/etcd/ssl/etcd-ca.pem \ |

| -ca-key=/etc/etcd/ssl/etcd-ca-key.pem \ |

| -config=ca-config.json \ |

| -hostname=127.0.0.1,k8s-master01,k8s-master02,k8s-master03,10.0.0.201,10.0.0.202,10.0.0.203 \ |

| -profile=kubernetes \ |

| etcd-csr.json | cfssljson -bare /etc/etcd/ssl/etcd |

| |

| |

| 2023/04/27 22:41:20 [INFO] generate received request |

| 2023/04/27 22:41:20 [INFO] received CSR |

| 2023/04/27 22:41:20 [INFO] generating key: rsa-2048 |

| 2023/04/27 22:41:21 [INFO] encoded CSR |

| 2023/04/27 22:41:21 [INFO] signed certificate with serial number 675924064101716663788964686872284057430188149903 |
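The `-hostname` value above is the certificate's subject alternative name list, so every name or IP that clients use to reach etcd has to appear in it. A minimal sketch that builds the same comma-separated value from an array, which makes it harder to forget an entry when a master is added; the function name `join_by_comma` and the variable names are assumptions.

```shell
#!/usr/bin/env bash
# Hypothetical helper: join all arguments with commas.
join_by_comma() {
  local IFS=,
  printf '%s\n' "$*"   # "$*" joins arguments with the first character of IFS
}

# Names and IPs taken from the -hostname flag shown above.
sans=(127.0.0.1 k8s-master01 k8s-master02 k8s-master03 10.0.0.201 10.0.0.202 10.0.0.203)
etcd_hostnames=$(join_by_comma "${sans[@]}")
echo "-hostname=${etcd_hostnames}"
```

The resulting string can be passed to `cfssl gencert` as `-hostname="${etcd_hostnames}"`.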

2.3 Copy the certificates to the other master nodes

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/pki] |

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/pki] |

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/pki] |

| ssh $NODE "mkdir -p /etc/etcd/ssl" |

| for FILE in etcd-ca-key.pem etcd-ca.pem etcd-key.pem etcd.pem; do |

| scp /etc/etcd/ssl/${FILE} $NODE:/etc/etcd/ssl/${FILE} |

| done |

| done |

| |

| |

| etcd-ca-key.pem 100% 1679 553.5KB/s 00:00 |

| etcd-ca.pem 100% 1367 905.8KB/s 00:00 |

| etcd-key.pem 100% 1679 151.8KB/s 00:00 |

| etcd.pem 100% 1509 922.1KB/s 00:00 |

| etcd-ca-key.pem 100% 1679 1.6MB/s 00:00 |

| etcd-ca.pem 100% 1367 927.7KB/s 00:00 |

| etcd-key.pem 100% 1679 734.7KB/s 00:00 |

| etcd.pem 100% 1509 89.9KB/s 00:00 |
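The first line of the copy loop, the one that names the target nodes, was lost from the listing above. The scp output shows two rounds of four files, which fits two target masters, but the exact loop header is not recoverable. A dry-run sketch that only prints the commands for a given node list, so they can be reviewed before anything is copied; the function name `plan_etcd_cert_copy` and the node arguments are assumptions.

```shell
#!/usr/bin/env bash
# Hypothetical dry-run helper: print the ssh/scp commands for each node
# given as an argument, without executing them.
plan_etcd_cert_copy() {
  local node file
  for node in "$@"; do
    echo "ssh ${node} \"mkdir -p /etc/etcd/ssl\""
    for file in etcd-ca-key.pem etcd-ca.pem etcd-key.pem etcd.pem; do
      echo "scp /etc/etcd/ssl/${file} ${node}:/etc/etcd/ssl/${file}"
    done
  done
}

plan_etcd_cert_copy k8s-master02 k8s-master03
```

Piping the output to `bash` would run the plan once it looks right.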

3. Certificates for the apiserver component

3.1 Generate the kubernetes CA certificate on k8s-master01

| [root@k8s-master01 ~/softwares] |

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/pki] |

| |

| 2023/04/27 22:44:07 [INFO] generating a new CA key and certificate from CSR |

| 2023/04/27 22:44:07 [INFO] generate received request |

| 2023/04/27 22:44:07 [INFO] received CSR |

| 2023/04/27 22:44:07 [INFO] generating key: rsa-2048 |

| 2023/04/27 22:44:08 [INFO] encoded CSR |

| 2023/04/27 22:44:08 [INFO] signed certificate with serial number 622916043139950813454231265196884373573668287194 |

3.2 Generate the apiserver client certificate on k8s-master01

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/pki] |

| |

| |

| 2023/04/27 22:45:09 [INFO] generate received request |

| 2023/04/27 22:45:09 [INFO] received CSR |

| 2023/04/27 22:45:09 [INFO] generating key: rsa-2048 |

| 2023/04/27 22:45:09 [INFO] encoded CSR |

| 2023/04/27 22:45:09 [INFO] signed certificate with serial number 71180299814883969154672874268501953413837726194 |

3.3 Generate the apiserver aggregation certificates on k8s-master01

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/pki] |

| |

| |

| 2023/04/27 22:45:51 [INFO] generating a new CA key and certificate from CSR |

| 2023/04/27 22:45:51 [INFO] generate received request |

| 2023/04/27 22:45:51 [INFO] received CSR |

| 2023/04/27 22:45:51 [INFO] generating key: rsa-2048 |

| 2023/04/27 22:45:51 [INFO] encoded CSR |

| 2023/04/27 22:45:51 [INFO] signed certificate with serial number 374782127566380677065060880043584181844441098837 |

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/pki] |

| |

| |

| 2023/04/27 22:45:51 [INFO] generate received request |

| 2023/04/27 22:45:51 [INFO] received CSR |

| 2023/04/27 22:45:51 [INFO] generating key: rsa-2048 |

| 2023/04/27 22:45:51 [INFO] encoded CSR |

| 2023/04/27 22:45:51 [INFO] signed certificate with serial number 271552184398450791592402315047208603715393230593 |

| 2023/04/27 22:45:51 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for |

| websites. For more information see the Baseline Requirements for the Issuance and Management |

| of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org); |

| specifically, section 10.2.3 ("Information Requirements"). |

| |

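Tips:

(1) "10.96.0.0" is the k8s service network. If the service network is changed, "10.96.0.1" has to be changed to match;

(2) If this is not a highly available cluster, use the IP of Master01 in place of the VIP. In this setup 10.0.0.222 is the highly available VIP;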
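The "10.96.0.1" address in tip (1) is the first usable IP of the service network, which kube-apiserver assigns to the built-in `kubernetes` service. A minimal sketch that derives it from a CIDR, useful when a different service network is chosen; the function name `first_service_ip` is an assumption, and the `/12` prefix in the example is assumed because the notes do not show the prefix length.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the first host address of an IPv4 CIDR,
# i.e. the network address plus one.
first_service_ip() {
  local cidr=$1 ip prefix a b c d n mask
  ip=${cidr%/*}
  prefix=${cidr#*/}
  IFS=. read -r a b c d <<<"$ip"
  n=$(( (a << 24) | (b << 16) | (c << 8) | d ))
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  n=$(( (n & mask) + 1 ))
  echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}

first_service_ip 10.96.0.0/12
```

Whatever address this prints for the chosen network is the one that belongs in the apiserver certificate's host list.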

4. Generate the certificate for the controller manager component on k8s-master01

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/pki] |

| -ca=/etc/kubernetes/pki/ca.pem \ |

| -ca-key=/etc/kubernetes/pki/ca-key.pem \ |

| -config=ca-config.json \ |

| -profile=kubernetes \ |

| manager-csr.json | cfssljson -bare /etc/kubernetes/pki/controller-manager |

| |

| |

| |

| |

| kubectl config set-cluster kubernetes \ |

| --certificate-authority=/etc/kubernetes/pki/ca.pem \ |

| --embed-certs=true \ |

| --server=https://10.0.0.222:6443 \ |

| --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig |

| |

| |

| kubectl config set-credentials system:kube-controller-manager \ |

| --client-certificate=/etc/kubernetes/pki/controller-manager.pem \ |

| --client-key=/etc/kubernetes/pki/controller-manager-key.pem \ |

| --embed-certs=true \ |

| --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig |

| |

| |

| kubectl config set-context system:kube-controller-manager@kubernetes \ |

| --cluster=kubernetes \ |

| --user=system:kube-controller-manager \ |

| --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig |

| |

| |

| kubectl config use-context system:kube-controller-manager@kubernetes \ |

| --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig |

5. Generate the certificate for the scheduler component on k8s-master01

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/pki] |

| -ca=/etc/kubernetes/pki/ca.pem \ |

| -ca-key=/etc/kubernetes/pki/ca-key.pem \ |

| -config=ca-config.json \ |

| -profile=kubernetes \ |

| scheduler-csr.json | cfssljson -bare /etc/kubernetes/pki/scheduler |

| |

| |

| kubectl config set-cluster kubernetes \ |

| --certificate-authority=/etc/kubernetes/pki/ca.pem \ |

| --embed-certs=true \ |

| --server=https://10.0.0.222:6443 \ |

| --kubeconfig=/etc/kubernetes/scheduler.kubeconfig |

| |

| kubectl config set-credentials system:kube-scheduler \ |

| --client-certificate=/etc/kubernetes/pki/scheduler.pem \ |

| --client-key=/etc/kubernetes/pki/scheduler-key.pem \ |

| --embed-certs=true \ |

| --kubeconfig=/etc/kubernetes/scheduler.kubeconfig |

| |

| kubectl config set-context system:kube-scheduler@kubernetes \ |

| --cluster=kubernetes \ |

| --user=system:kube-scheduler \ |

| --kubeconfig=/etc/kubernetes/scheduler.kubeconfig |

| |

| kubectl config use-context system:kube-scheduler@kubernetes \ |

| --kubeconfig=/etc/kubernetes/scheduler.kubeconfig |

6. Generate the admin certificate on k8s-master01

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/pki] |

| -ca=/etc/kubernetes/pki/ca.pem \ |

| -ca-key=/etc/kubernetes/pki/ca-key.pem \ |

| -config=ca-config.json \ |

| -profile=kubernetes \ |

| admin-csr.json | cfssljson -bare /etc/kubernetes/pki/admin |

| |

| |

| kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/pki/ca.pem --embed-certs=true --server=https://10.0.0.222:6443 --kubeconfig=/etc/kubernetes/admin.kubeconfig |

| |

| kubectl config set-credentials kubernetes-admin --client-certificate=/etc/kubernetes/pki/admin.pem --client-key=/etc/kubernetes/pki/admin-key.pem --embed-certs=true --kubeconfig=/etc/kubernetes/admin.kubeconfig |

| |

| kubectl config set-context kubernetes-admin@kubernetes --cluster=kubernetes --user=kubernetes-admin --kubeconfig=/etc/kubernetes/admin.kubeconfig |

| |

| kubectl config use-context kubernetes-admin@kubernetes --kubeconfig=/etc/kubernetes/admin.kubeconfig |

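Tip:

We generated admin.kubeconfig, scheduler.kubeconfig and controller-manager.kubeconfig with the same commands, so how are they told apart?

Each kubeconfig embeds a different client certificate, and the apiserver reads the user name from the certificate's CN field and the group from its O field. The admin certificate defines a user, admin, that belongs to the system:masters group. When K8S is installed it creates a ClusterRole, cluster-admin, which is essentially a permission set holding the highest administrative privileges in the cluster. It also creates a ClusterRoleBinding that binds the system:masters group to that ClusterRole, so every user in the group receives those cluster-wide privileges.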
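The four `kubectl config` steps are identical for all three kubeconfig files; only the user name, the client certificate pair and the output path change. A dry-run sketch that prints the four commands for a given user and file stem, using the paths and server address that appear in the commands above; the function name `kubeconfig_cmds` is an assumption.

```shell
#!/usr/bin/env bash
# Hypothetical dry-run helper: print the four kubectl config commands for one
# component. $1 is the user name, $2 the file stem shared by the certificate
# pair and the kubeconfig.
kubeconfig_cmds() {
  local user=$1 name=$2
  local server=https://10.0.0.222:6443
  local pki=/etc/kubernetes/pki
  local out=/etc/kubernetes/${name}.kubeconfig
  cat <<EOF
kubectl config set-cluster kubernetes --certificate-authority=${pki}/ca.pem --embed-certs=true --server=${server} --kubeconfig=${out}
kubectl config set-credentials ${user} --client-certificate=${pki}/${name}.pem --client-key=${pki}/${name}-key.pem --embed-certs=true --kubeconfig=${out}
kubectl config set-context ${user}@kubernetes --cluster=kubernetes --user=${user} --kubeconfig=${out}
kubectl config use-context ${user}@kubernetes --kubeconfig=${out}
EOF
}

kubeconfig_cmds system:kube-controller-manager controller-manager
kubeconfig_cmds system:kube-scheduler scheduler
kubeconfig_cmds kubernetes-admin admin
```

Printing rather than executing keeps the sketch safe to run anywhere; the output can be compared line by line with the commands in sections 4 to 6.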

7. Create the ServiceAccount key

7.1 ServiceAccount is one of the K8S authentication mechanisms. Creating a ServiceAccount also creates a secret bound to it, and that secret holds a generated token

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/pki] |

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/pki] |

| Generating RSA private key, 2048 bit long modulus |

| .......................................................................................................................+++ |

| ......................................................................................................................+++ |

| e is 65537 (0x10001) |

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/pki] |

| writing RSA key |
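The two commands behind the output above were lost. The messages "Generating RSA private key, 2048 bit long modulus" and "writing RSA key" are what `openssl genrsa` and `openssl rsa -pubout` print, so the step was most likely a key pair generation along the following lines. This is a sketch under that assumption; the function name `make_sa_keypair` and the file names `sa.key` and `sa.pub` are not confirmed by the notes.

```shell
#!/usr/bin/env bash
# Hypothetical helper: create an RSA key pair for signing and verifying
# ServiceAccount tokens inside the directory given as $1.
make_sa_keypair() {
  local dir=$1
  # Private key, later used to sign tokens.
  openssl genrsa -out "${dir}/sa.key" 2048
  # Matching public key, later used to verify tokens.
  openssl rsa -in "${dir}/sa.key" -pubout -out "${dir}/sa.pub"
}
```

A call such as `make_sa_keypair /etc/kubernetes/pki` would place the pair next to the other certificates, where the copy loop in 7.2 picks it up.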

7.2 Send the certificates to the other nodes

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/pki] |

| do |

| for FILE in $(ls /etc/kubernetes/pki | grep -v etcd); |

| do |

| scp /etc/kubernetes/pki/${FILE} $NODE:/etc/kubernetes/pki/${FILE}; |

| done; |

| for FILE in admin.kubeconfig controller-manager.kubeconfig scheduler.kubeconfig; |

| do |

| scp /etc/kubernetes/${FILE} $NODE:/etc/kubernetes/${FILE}; |

| done; |

| done |

7.3 Check the validity period of the CA certificates

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/pki] |

| { |

| "signing": { |

| "default": { |

| "expiry": "876000h" |

| }, |

| "profiles": { |

| "kubernetes": { |

| "usages": [ |

| "signing", |

| "key encipherment", |

| "server auth", |

| "client auth" |

| ], |

| "expiry": "876000h" |

| } |

| } |

| } |

| } |
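The `expiry` value is given in hours, which is hard to read at a glance. A one-line conversion, ignoring leap days, shows what 876000h amounts to; the function name `hours_to_years` is an assumption.

```shell
#!/usr/bin/env bash
# Hypothetical helper: convert a number of hours to whole 365-day years.
hours_to_years() {
  echo $(( $1 / 24 / 365 ))
}

hours_to_years 876000   # prints 100
```

So certificates signed with this profile are valid for roughly one hundred years.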

V. Binary high availability and etcd configuration

1. Create the configuration files

1.1 Configuration file for the k8s-master01 node

| [root@k8s-master01 ~] |

| name: 'k8s-master01' |

| data-dir: /var/lib/etcd |

| wal-dir: /var/lib/etcd/wal |

| snapshot-count: 5000 |

| heartbeat-interval: 100 |

| election-timeout: 1000 |

| quota-backend-bytes: 0 |

| listen-peer-urls: 'https://10.0.0.201:2380' |

| listen-client-urls: 'https://10.0.0.201:2379,http://127.0.0.1:2379' |

| max-snapshots: 3 |

| max-wals: 5 |

| cors: |

| initial-advertise-peer-urls: 'https://10.0.0.201:2380' |

| advertise-client-urls: 'https://10.0.0.201:2379' |

| discovery: |

| discovery-fallback: 'proxy' |

| discovery-proxy: |

| discovery-srv: |

| initial-cluster: 'k8s-master01=https://10.0.0.201:2380,k8s-master02=https://10.0.0.202:2380,k8s-master03=https://10.0.0.203:2380' |

| initial-cluster-token: 'etcd-k8s-cluster' |

| initial-cluster-state: 'new' |

| strict-reconfig-check: false |

| enable-v2: true |

| enable-pprof: true |

| proxy: 'off' |

| proxy-failure-wait: 5000 |

| proxy-refresh-interval: 30000 |

| proxy-dial-timeout: 1000 |

| proxy-write-timeout: 5000 |

| proxy-read-timeout: 0 |

| client-transport-security: |

| cert-file: '/etc/kubernetes/pki/etcd/etcd.pem' |

| key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem' |

| client-cert-auth: true |

| trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem' |

| auto-tls: true |

| peer-transport-security: |

| cert-file: '/etc/kubernetes/pki/etcd/etcd.pem' |

| key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem' |

| peer-client-cert-auth: true |

| trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem' |

| auto-tls: true |

| debug: false |

| log-package-levels: |

| log-outputs: [default] |

| force-new-cluster: false |

| EOF |

| |

1.2 Configuration file for the k8s-master02 node

| [root@k8s-master02 ~] |

| name: 'k8s-master02' |

| data-dir: /var/lib/etcd |

| wal-dir: /var/lib/etcd/wal |

| snapshot-count: 5000 |

| heartbeat-interval: 100 |

| election-timeout: 1000 |

| quota-backend-bytes: 0 |

| listen-peer-urls: 'https://10.0.0.202:2380' |

| listen-client-urls: 'https://10.0.0.202:2379,http://127.0.0.1:2379' |

| max-snapshots: 3 |

| max-wals: 5 |

| cors: |

| initial-advertise-peer-urls: 'https://10.0.0.202:2380' |

| advertise-client-urls: 'https://10.0.0.202:2379' |

| discovery: |

| discovery-fallback: 'proxy' |

| discovery-proxy: |

| discovery-srv: |

| initial-cluster: 'k8s-master01=https://10.0.0.201:2380,k8s-master02=https://10.0.0.202:2380,k8s-master03=https://10.0.0.203:2380' |

| initial-cluster-token: 'etcd-k8s-cluster' |

| initial-cluster-state: 'new' |

| strict-reconfig-check: false |

| enable-v2: true |

| enable-pprof: true |

| proxy: 'off' |

| proxy-failure-wait: 5000 |

| proxy-refresh-interval: 30000 |

| proxy-dial-timeout: 1000 |

| proxy-write-timeout: 5000 |

| proxy-read-timeout: 0 |

| client-transport-security: |

| cert-file: '/etc/kubernetes/pki/etcd/etcd.pem' |

| key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem' |

| client-cert-auth: true |

| trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem' |

| auto-tls: true |

| peer-transport-security: |

| cert-file: '/etc/kubernetes/pki/etcd/etcd.pem' |

| key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem' |

| peer-client-cert-auth: true |

| trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem' |

| auto-tls: true |

| debug: false |

| log-package-levels: |

| log-outputs: [default] |

| force-new-cluster: false |

| EOF |

1.3 Configuration file for the k8s-master03 node

| [root@k8s-master03 ~] |

| name: 'k8s-master03' |

| data-dir: /var/lib/etcd |

| wal-dir: /var/lib/etcd/wal |

| snapshot-count: 5000 |

| heartbeat-interval: 100 |

| election-timeout: 1000 |

| quota-backend-bytes: 0 |

| listen-peer-urls: 'https://10.0.0.203:2380' |

| listen-client-urls: 'https://10.0.0.203:2379,http://127.0.0.1:2379' |

| max-snapshots: 3 |

| max-wals: 5 |

| cors: |

| initial-advertise-peer-urls: 'https://10.0.0.203:2380' |

| advertise-client-urls: 'https://10.0.0.203:2379' |

| discovery: |

| discovery-fallback: 'proxy' |

| discovery-proxy: |

| discovery-srv: |

| initial-cluster: 'k8s-master01=https://10.0.0.201:2380,k8s-master02=https://10.0.0.202:2380,k8s-master03=https://10.0.0.203:2380' |

| initial-cluster-token: 'etcd-k8s-cluster' |

| initial-cluster-state: 'new' |

| strict-reconfig-check: false |

| enable-v2: true |

| enable-pprof: true |

| proxy: 'off' |

| proxy-failure-wait: 5000 |

| proxy-refresh-interval: 30000 |

| proxy-dial-timeout: 1000 |

| proxy-write-timeout: 5000 |

| proxy-read-timeout: 0 |

| client-transport-security: |

| cert-file: '/etc/kubernetes/pki/etcd/etcd.pem' |

| key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem' |

| client-cert-auth: true |

| trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem' |

| auto-tls: true |

| peer-transport-security: |

| cert-file: '/etc/kubernetes/pki/etcd/etcd.pem' |

| key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem' |

| peer-client-cert-auth: true |

| trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem' |

| auto-tls: true |

| debug: false |

| log-package-levels: |

| log-outputs: [default] |

| force-new-cluster: false |

| EOF |
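The three configuration files above are identical except for the member name and the node's own IP in five fields. A minimal sketch that prints just those per-node fields, which can help when checking a hand-edited copy for a leftover IP from another node; the function name `etcd_node_fields` is an assumption.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the etcd config fields that differ per node.
# $1 is the member name, $2 the node IP.
etcd_node_fields() {
  local name=$1 ip=$2
  cat <<EOF
name: '${name}'
listen-peer-urls: 'https://${ip}:2380'
listen-client-urls: 'https://${ip}:2379,http://127.0.0.1:2379'
initial-advertise-peer-urls: 'https://${ip}:2380'
advertise-client-urls: 'https://${ip}:2379'
EOF
}

etcd_node_fields k8s-master02 10.0.0.202
```

Every other key, including `initial-cluster`, stays the same on all three masters.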

2. Start the service on all master nodes

2.1 Create the systemd unit file

| [root@k8s-master01 ~] |

| [Unit] |

| Description=Etcd Service |

| Documentation=https://coreos.com/etcd/docs/latest/ |

| After=network.target |

| |

| [Service] |

| Type=notify |

| ExecStart=/usr/local/bin/etcd --config-file=/etc/etcd/etcd.config.yml |

| Restart=on-failure |

| RestartSec=10 |

| LimitNOFILE=65536 |

| |

| [Install] |

| WantedBy=multi-user.target |

| Alias=etcd3.service |

| EOF |

2.2 Start the service

| [root@k8s-master01 ~] |

| [root@k8s-master01 ~] |

| [root@k8s-master01 ~] |

| Created symlink from /etc/systemd/system/etcd3.service to /usr/lib/systemd/system/etcd.service. |

| Created symlink from /etc/systemd/system/multi-user.target.wants/etcd.service to /usr/lib/systemd/system/etcd.service. |

| [root@k8s-master01 ~] |

| ● etcd.service - Etcd Service |

| Loaded: loaded (/usr/lib/systemd/system/etcd.service; enabled; vendor preset: disabled) |

| Active: active (running) since Sat 2023-04-29 15:14:27 CST; 442ms ago |

| Docs: https://coreos.com/etcd/docs/latest/ |

| Main PID: 1799 (etcd) |

| Tasks: 7 |

| Memory: 53.2M |

| CGroup: /system.slice/etcd.service |

| └─1799 /usr/local/bin/etcd --config-file=/etc/etcd/etcd.c... |

| |

| Apr 29 15:14:27 k8s-master01 etcd[1799]: {"level":"info","ts":"2023-... |

| Apr 29 15:14:27 k8s-master01 etcd[1799]: {"level":"info","ts":"2023-... |

| Apr 29 15:14:27 k8s-master01 etcd[1799]: {"level":"info","ts":"2023-... |

| Apr 29 15:14:27 k8s-master01 etcd[1799]: {"level":"info","ts":"2023-... |

| Apr 29 15:14:27 k8s-master01 etcd[1799]: {"level":"info","ts":"2023-... |

| Apr 29 15:14:27 k8s-master01 etcd[1799]: {"level":"info","ts":"2023-... |

| Apr 29 15:14:27 k8s-master01 etcd[1799]: {"level":"info","ts":"2023-... |

| Apr 29 15:14:27 k8s-master01 etcd[1799]: {"level":"info","ts":"2023-... |

| Apr 29 15:14:27 k8s-master01 systemd[1]: Started Etcd Service. |

| Apr 29 15:14:28 k8s-master01 etcd[1799]: {"level":"info","ts":"2023-... |

| Apr 29 15:14:28 k8s-master01 etcd[1799]: {"level":"warn","ts":"2023-... |

| Hint: Some lines were ellipsized, use -l to show in full. |

2.3 Check the etcd status

| [root@k8s-master01 ~] |

| |

| +-----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+ |

| | ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS | |

| +-----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+ |

| | 10.0.0.201:2379 | a13792c022d0886d | 3.5.2 | 20 kB | true | false | 2 | 8 | 8 | | |

| | 10.0.0.202:2379 | fee311e0d1995cf9 | 3.5.2 | 20 kB | false | false | 2 | 8 | 8 | | |

| | 10.0.0.203:2379 | 1ff1bd28f41b3b74 | 3.5.2 | 20 kB | false | false | 2 | 8 | 8 | | |

| +-----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+ |

| |

| |

| |
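The command that produced the table above was lost; the layout matches `etcdctl endpoint status --write-out=table`, run with `--endpoints` and the TLS flags `--cacert`, `--cert` and `--key`, though the exact invocation is an assumption. A healthy cluster shows exactly one row with IS LEADER set to true. A minimal sketch that counts leaders in such a table read from stdin; the function name `count_leaders` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: count rows whose IS LEADER column is "true" in the
# table printed by etcdctl. With "|" as separator the first field is empty,
# so IS LEADER is field 6.
count_leaders() {
  awk -F'|' '$6 ~ /true/ { n++ } END { print n + 0 }'
}

printf '%s\n' \
  '| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER |' \
  '| 10.0.0.201:2379 | a13792c022d0886d | 3.5.2 | 20 kB | true | false |' \
  '| 10.0.0.202:2379 | fee311e0d1995cf9 | 3.5.2 | 20 kB | false | false |' \
  '| 10.0.0.203:2379 | 1ff1bd28f41b3b74 | 3.5.2 | 20 kB | false | false |' | count_leaders
```

Any result other than 1 is worth investigating before moving on to the apiserver setup.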

VI. High availability configuration (haproxy+keepalived)

1. Install keepalived and haproxy on all master nodes

| |

| |

| [root@k8s-master01 ~/softwares] |

2. Configure haproxy on all master nodes; the configuration file is identical on every node

2.1 Back up the configuration file

| [root@k8s-master01 ~/softwares] |

2.2 The configuration file content is the same on all nodes

| [root@k8s-master01 ~/softwares] |

| global |

| maxconn 2000 |

| ulimit-n 16384 |

| log 127.0.0.1 local0 err |

| stats timeout 30s |

| |

| defaults |

| log global |

| mode http |

| option httplog |

| timeout connect 5000 |

| timeout client 50000 |

| timeout server 50000 |

| timeout http-request 15s |

| timeout http-keep-alive 15s |

| |

| frontend monitor-in |

| bind *:33305 |

| mode http |

| option httplog |

| monitor-uri /monitor |

| |

| frontend k8s-master |

| bind 0.0.0.0:16443 |

| bind 127.0.0.1:16443 |

| mode tcp |

| option tcplog |

| tcp-request inspect-delay 5s |

| default_backend k8s-master |

| |

| backend k8s-master |

| mode tcp |

| option tcplog |

| option tcp-check |

| balance roundrobin |

| default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100 |

| server k8s-master01 10.0.0.201:6443 check |

| server k8s-master02 10.0.0.202:6443 check |

| server k8s-master03 10.0.0.203:6443 check |

| EOF |
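As a quick sanity check of the backend definition above, the following sketch lists the servers that a given haproxy backend will balance across. The function name `list_backend_servers` is hypothetical, and the parsing is deliberately simple (it assumes one directive per line, as in the configuration above).

```shell
#!/usr/bin/env bash
# Sketch: print "name address" for every `server` line of one backend
# in an haproxy configuration read from stdin.
# list_backend_servers is a hypothetical helper, not part of haproxy.
list_backend_servers() {
  local backend="$1"
  awk -v want="$backend" '
    # Any other section keyword ends the backend we were tracking.
    $1 == "global" || $1 == "defaults" || $1 == "frontend" || $1 == "listen" { in_be = 0 }
    # Start tracking only when the backend name matches.
    $1 == "backend" { in_be = ($2 == want) }
    in_be && $1 == "server" { print $2, $3 }
  '
}
```

Assuming the file was written to the package default path `/etc/haproxy/haproxy.cfg`, it could be used as `list_backend_servers k8s-master < /etc/haproxy/haproxy.cfg`. haproxy itself can also validate the file syntax with `haproxy -c -f /etc/haproxy/haproxy.cfg` before the service is started.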

3. Configure keepalived on all master nodes (the configuration file differs per node)

3.1 Back up the configuration file

| [root@k8s-master01 ~/softwares] |

3.2 Create the configuration file on "k8s-master01"

| [root@k8s-master01 ~/softwares] |

| ! Configuration File for keepalived |

| global_defs { |

| router_id LVS_DEVEL |

| script_user root |

| enable_script_security |

| } |

| vrrp_script chk_apiserver { |

| script "/etc/keepalived/check_apiserver.sh" |

| interval 5 |

| weight -5 |

| fall 2 |

| rise 1 |

| } |

| vrrp_instance VI_1 { |

| state MASTER |

| interface eth0 |

| mcast_src_ip 10.0.0.201 |

| virtual_router_id 51 |

| priority 101 |

| advert_int 2 |

| authentication { |

| auth_type PASS |

| auth_pass K8SHA_KA_AUTH |

| } |

| virtual_ipaddress { |

| 10.0.0.222 |

| } |

| track_script { |

| chk_apiserver |

| } |

| } |

| EOF |

| |

3.3 Create the configuration file on "k8s-master02"

| [root@k8s-master02 ~/softwares] |

| ! Configuration File for keepalived |

| global_defs { |

| router_id LVS_DEVEL |

| script_user root |

| enable_script_security |

| } |

| vrrp_script chk_apiserver { |

| script "/etc/keepalived/check_apiserver.sh" |

| interval 5 |

| weight -5 |

| fall 2 |

| rise 1 |

| } |

| vrrp_instance VI_1 { |

| state MASTER |

| interface eth0 |

| mcast_src_ip 10.0.0.202 |

| virtual_router_id 51 |

| priority 101 |

| advert_int 2 |

| authentication { |

| auth_type PASS |

| auth_pass K8SHA_KA_AUTH |

| } |

| virtual_ipaddress { |

| 10.0.0.222 |

| } |

| track_script { |

| chk_apiserver |

| } |

| } |

| EOF |

3.4 Create the configuration file on "k8s-master03"

| [root@k8s-master03 ~/softwares] |

| ! Configuration File for keepalived |

| global_defs { |

| router_id LVS_DEVEL |

| script_user root |

| enable_script_security |

| } |

| vrrp_script chk_apiserver { |

| script "/etc/keepalived/check_apiserver.sh" |

| interval 5 |

| weight -5 |

| fall 2 |

| rise 1 |

| } |

| vrrp_instance VI_1 { |

| state MASTER |

| interface eth0 |

| mcast_src_ip 10.0.0.203 |

| virtual_router_id 51 |

| priority 101 |

| advert_int 2 |

| authentication { |

| auth_type PASS |

| auth_pass K8SHA_KA_AUTH |

| } |

| virtual_ipaddress { |

| 10.0.0.222 |

| } |

| track_script { |

| chk_apiserver |

| } |

| } |

| EOF |
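In the three files above only `mcast_src_ip` differs; every node declares `state MASTER` with `priority 101`. With equal priorities VRRP breaks the tie in favor of the higher primary IP address, which matches the VIP landing on k8s-master03 in step 4.3. A commonly used variant keeps MASTER/101 on one node and gives the others BACKUP with a lower priority. The sketch below renders the per-node block from parameters; `render_vrrp_instance` is a hypothetical helper, and the BACKUP/100 values in the example are an assumption, not the configuration listed above.

```shell
#!/usr/bin/env bash
# Sketch: render the per-node vrrp_instance block for keepalived.
# render_vrrp_instance is a hypothetical helper; BACKUP/100 below is an
# assumed variant, not the values used in the files listed above.
render_vrrp_instance() {
  local node_ip="$1" state="$2" priority="$3"
  cat <<EOF
vrrp_instance VI_1 {
    state ${state}
    interface eth0
    mcast_src_ip ${node_ip}
    virtual_router_id 51
    priority ${priority}
    advert_int 2
    authentication {
        auth_type PASS
        auth_pass K8SHA_KA_AUTH
    }
    virtual_ipaddress {
        10.0.0.222
    }
    track_script {
        chk_apiserver
    }
}
EOF
}

# Example: master01 as MASTER, the other two masters as BACKUP.
render_vrrp_instance 10.0.0.201 MASTER 101
render_vrrp_instance 10.0.0.202 BACKUP 100
render_vrrp_instance 10.0.0.203 BACKUP 100
```

The `global_defs` and `vrrp_script` blocks are identical on every node, so only this block needs to be generated per host.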

3.5 Configure the keepalived health-check script on all master nodes

3.5.1 Create the check script

| [root@k8s-master01 ~/softwares] |

| |

| |

| err=0 |

| for k in $(seq 1 3) |

| do |

| check_code=$(pgrep haproxy) |

| if [[ $check_code == "" ]]; then |

| err=$(expr $err + 1) |

| sleep 1 |

| continue |

| else |

| err=0 |

| break |

| fi |

| done |

| |

| if [[ $err != "0" ]]; then |

| echo "systemctl stop keepalived" |

| /usr/bin/systemctl stop keepalived |

| exit 1 |

| else |

| exit 0 |

| fi |

| EOF |

| |

3.5.2 Make the script executable

| [root@k8s-master01 ~/softwares] |

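Notes:

(1) keepalived provides a virtual IP (VIP) that is assigned to one of the master nodes. haproxy listens on port 16443 and reverse-proxies to the kube-apiserver on all three master nodes, so the API server can be reached at the VIP on port 16443.

(2) The health check looks for a running haproxy process. After three failed attempts it stops keepalived on that node, and the VIP moves to another master node.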
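The retry pattern described in note (2) can be isolated into a small function, which makes it easier to test on its own. This is only a sketch: `retry_probe` and the `RETRY_SLEEP` variable are hypothetical names, and unlike the check script above it only reports a status and does not stop keepalived.

```shell
#!/usr/bin/env bash
# Sketch of the retry pattern used by the health check: run a probe up to
# N times and succeed as soon as one attempt passes.
# retry_probe and RETRY_SLEEP are hypothetical names for illustration.
retry_probe() {
  local attempts="$1"; shift
  local i
  for ((i = 1; i <= attempts; i++)); do
    if "$@" >/dev/null 2>&1; then
      return 0            # probe passed, stop retrying
    fi
    sleep "${RETRY_SLEEP:-1}"
  done
  return 1                # every attempt failed
}
```

For example, `retry_probe 3 pgrep haproxy` mirrors the probe in the script above: it returns 0 if a haproxy process is found within three attempts and 1 otherwise.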

4. Start the services on all master nodes

4.1 Start haproxy

| [root@k8s-master01 ~/softwares] |

| [root@k8s-master01 ~/softwares] |

| Created symlink from /etc/systemd/system/multi-user.target.wants/haproxy.service to /usr/lib/systemd/system/haproxy.service. |

4.2 Start keepalived

| [root@k8s-master01 ~/softwares] |

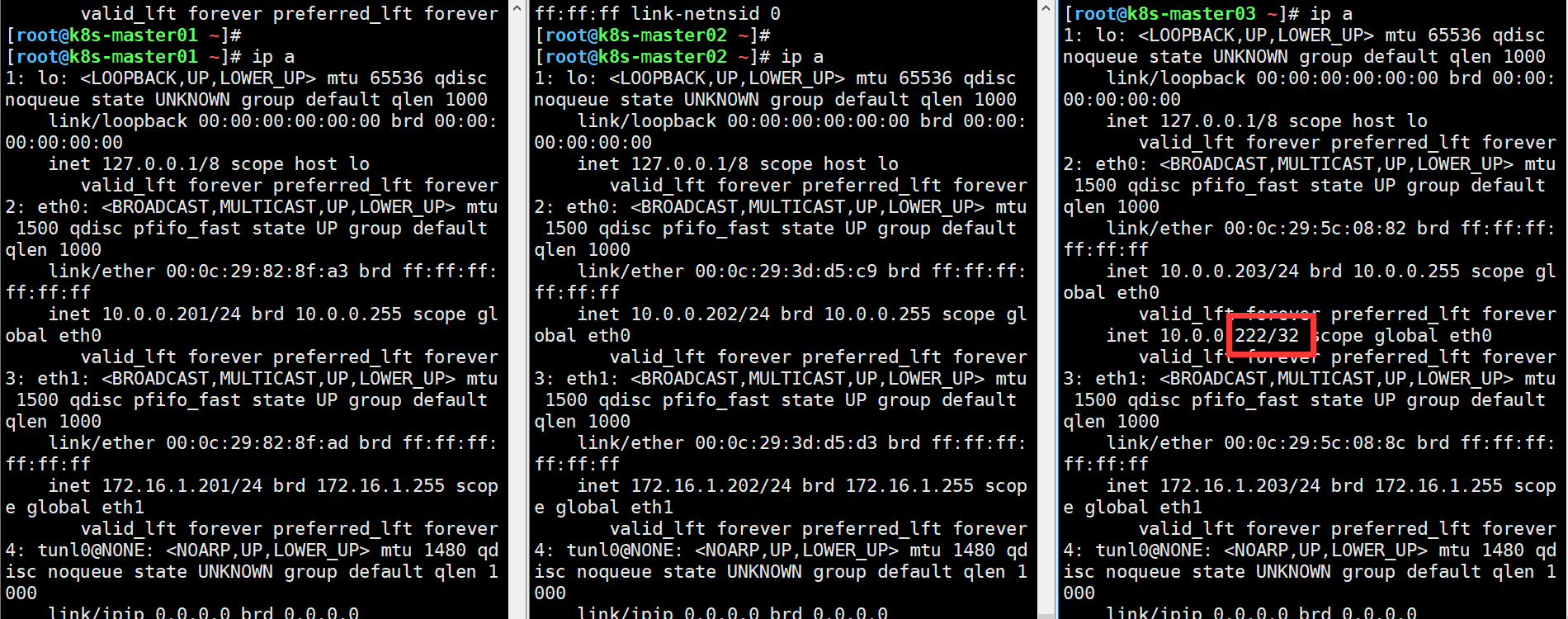

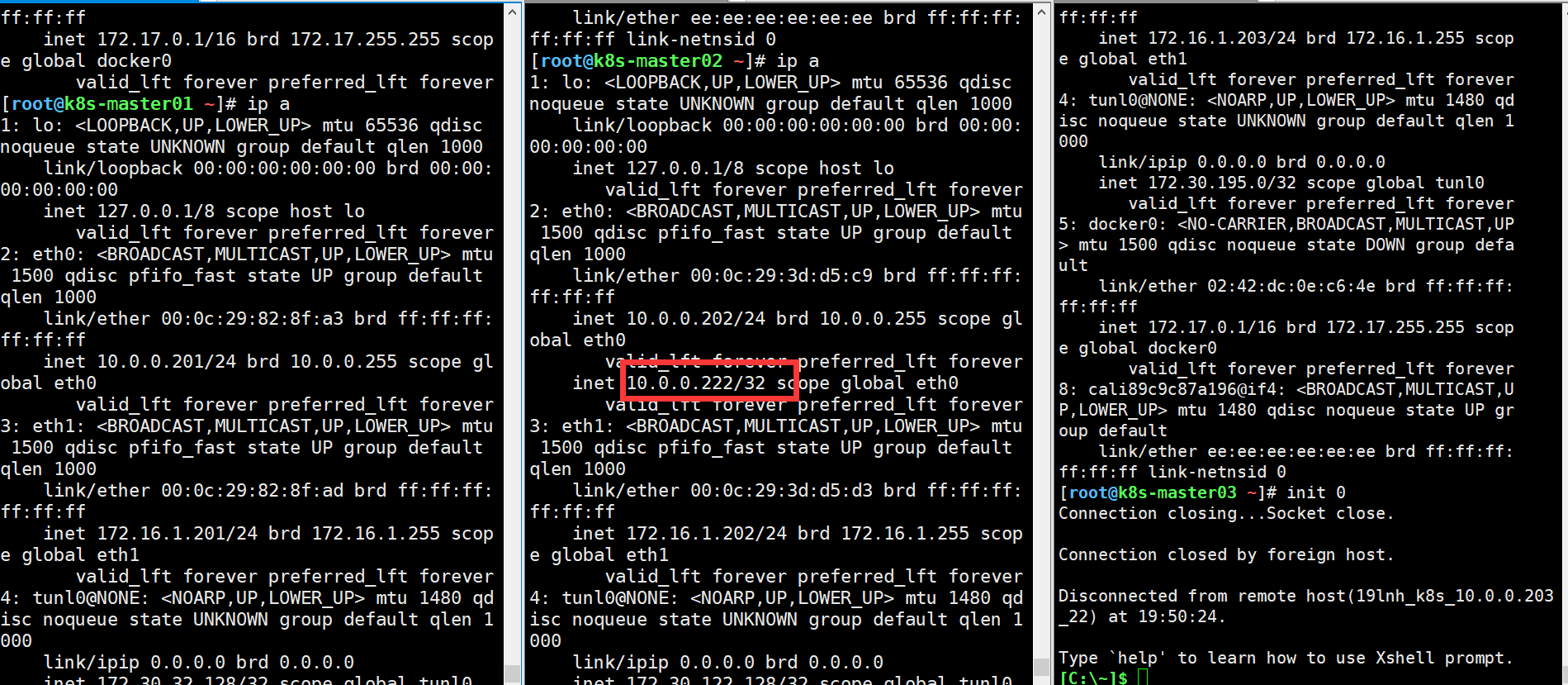

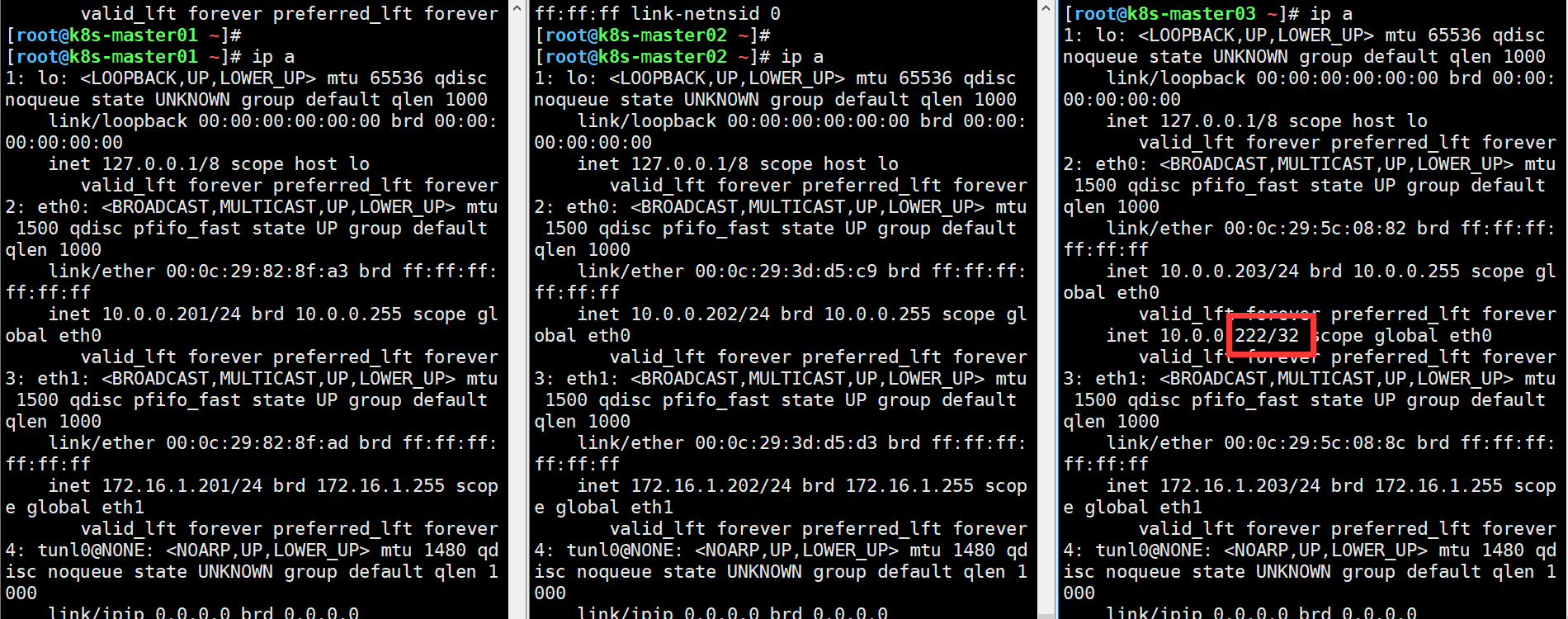

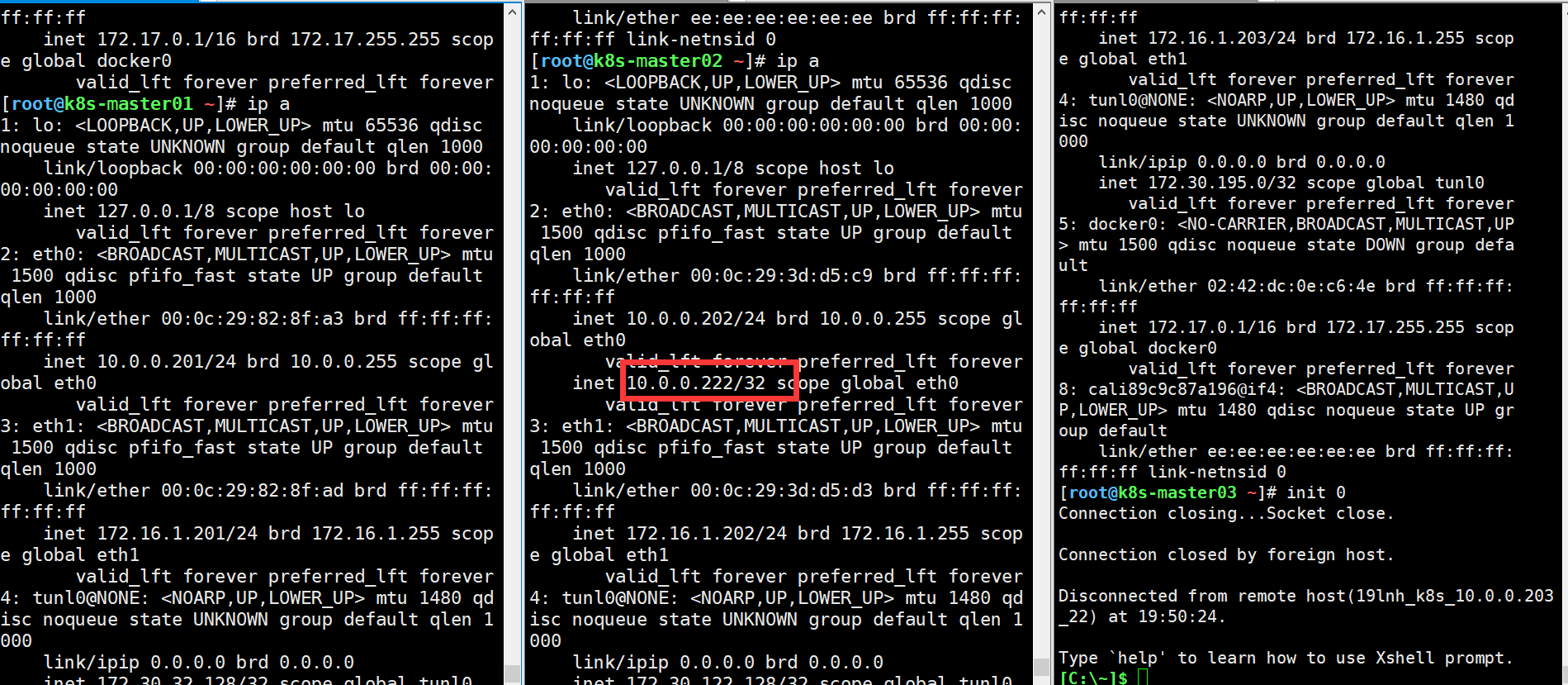

4.3 Check the VIP

| [root@k8s-master03 ~/softwares] |

| 1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000 |

| link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00 |

| inet 127.0.0.1/8 scope host lo |

| valid_lft forever preferred_lft forever |

| 2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000 |

| link/ether 00:0c:29:5c:08:82 brd ff:ff:ff:ff:ff:ff |

| inet 10.0.0.203/24 brd 10.0.0.255 scope global eth0 |

| valid_lft forever preferred_lft forever |

| inet 10.0.0.222/32 scope global eth0 |

| valid_lft forever preferred_lft forever |

| 3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000 |

| link/ether 00:0c:29:5c:08:8c brd ff:ff:ff:ff:ff:ff |

| inet 172.16.1.203/24 brd 172.16.1.255 scope global eth1 |

| valid_lft forever preferred_lft forever |

| 4: tunl0@NONE: <NOARP> mtu 1480 qdisc noop state DOWN group default qlen 1000 |

| link/ipip 0.0.0.0 brd 0.0.0.0 |

| 5: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default |

| link/ether 02:42:fe:03:de:61 brd ff:ff:ff:ff:ff:ff |

| inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0 |

| valid_lft forever preferred_lft forever |

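VII. Configure the K8s master components (binary deployment)

1. Start the kube-apiserver service on all master nodes

1.1 Create the working directories on all master nodes

1.2 Create the service unit file on k8s-master01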

| [root@k8s-master01 ~] |

| [Unit] |

| Description=Kubernetes API Server |

| Documentation=https://github.com/kubernetes/kubernetes |

| After=network.target |

| |

| [Service] |

| ExecStart=/usr/local/bin/kube-apiserver \ |

| --v=2 \ |

| --logtostderr=true \ |

| --allow-privileged=true \ |

| --bind-address=0.0.0.0 \ |

| --secure-port=6443 \ |

| --insecure-port=0 \ |

| --advertise-address=10.0.0.201 \ |

| --service-cluster-ip-range=10.96.0.0/12 \ |

| --service-node-port-range=3000-50000 \ |

| --etcd-servers=https://10.0.0.201:2379,https://10.0.0.202:2379,https://10.0.0.203:2379 \ |

| --etcd-cafile=/etc/etcd/ssl/etcd-ca.pem \ |

| --etcd-certfile=/etc/etcd/ssl/etcd.pem \ |

| --etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \ |

| --client-ca-file=/etc/kubernetes/pki/ca.pem \ |

| --tls-cert-file=/etc/kubernetes/pki/apiserver.pem \ |

| --tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \ |

| --kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \ |

| --kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \ |

| --service-account-key-file=/etc/kubernetes/pki/sa.pub \ |

| --service-account-signing-key-file=/etc/kubernetes/pki/sa.key \ |

| --service-account-issuer=https://kubernetes.default.svc.cluster.local \ |

| --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \ |

| --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \ |

| --authorization-mode=Node,RBAC \ |

| --enable-bootstrap-token-auth=true \ |

| --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \ |

| --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \ |

| --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \ |

| --requestheader-allowed-names=aggregator \ |

| --requestheader-group-headers=X-Remote-Group \ |

| --requestheader-extra-headers-prefix=X-Remote-Extra- \ |

| --requestheader-username-headers=X-Remote-User |

| |

| |

| Restart=on-failure |

| RestartSec=10s |

| LimitNOFILE=65535 |

| |

| [Install] |

| WantedBy=multi-user.target |

| EOF |

1.3 Create the service unit file on k8s-master02

| [root@k8s-master02 ~] |

| [Unit] |

| Description=Kubernetes API Server |

| Documentation=https://github.com/kubernetes/kubernetes |

| After=network.target |

| |

| [Service] |

| ExecStart=/usr/local/bin/kube-apiserver \ |

| --v=2 \ |

| --logtostderr=true \ |

| --allow-privileged=true \ |

| --bind-address=0.0.0.0 \ |

| --secure-port=6443 \ |

| --insecure-port=0 \ |

| --advertise-address=10.0.0.202 \ |

| --service-cluster-ip-range=10.96.0.0/12 \ |

| --service-node-port-range=3000-50000 \ |

| --etcd-servers=https://10.0.0.201:2379,https://10.0.0.202:2379,https://10.0.0.203:2379 \ |

| --etcd-cafile=/etc/etcd/ssl/etcd-ca.pem \ |

| --etcd-certfile=/etc/etcd/ssl/etcd.pem \ |

| --etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \ |

| --client-ca-file=/etc/kubernetes/pki/ca.pem \ |

| --tls-cert-file=/etc/kubernetes/pki/apiserver.pem \ |

| --tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \ |

| --kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \ |

| --kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \ |

| --service-account-key-file=/etc/kubernetes/pki/sa.pub \ |

| --service-account-signing-key-file=/etc/kubernetes/pki/sa.key \ |

| --service-account-issuer=https://kubernetes.default.svc.cluster.local \ |

| --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \ |

| --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \ |

| --authorization-mode=Node,RBAC \ |

| --enable-bootstrap-token-auth=true \ |

| --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \ |

| --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \ |

| --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \ |

| --requestheader-allowed-names=aggregator \ |

| --requestheader-group-headers=X-Remote-Group \ |

| --requestheader-extra-headers-prefix=X-Remote-Extra- \ |

| --requestheader-username-headers=X-Remote-User |

| |

| |

| Restart=on-failure |

| RestartSec=10s |

| LimitNOFILE=65535 |

| |

| [Install] |

| WantedBy=multi-user.target |

| EOF |

1.4 Create the service unit file on k8s-master03

| [root@k8s-master03 ~] |

| [Unit] |

| Description=Kubernetes API Server |

| Documentation=https://github.com/kubernetes/kubernetes |

| After=network.target |

| |

| [Service] |

| ExecStart=/usr/local/bin/kube-apiserver \ |

| --v=2 \ |

| --logtostderr=true \ |

| --allow-privileged=true \ |

| --bind-address=0.0.0.0 \ |

| --secure-port=6443 \ |

| --insecure-port=0 \ |

| --advertise-address=10.0.0.203 \ |

| --service-cluster-ip-range=10.96.0.0/12 \ |

| --service-node-port-range=3000-50000 \ |

| --etcd-servers=https://10.0.0.201:2379,https://10.0.0.202:2379,https://10.0.0.203:2379 \ |

| --etcd-cafile=/etc/etcd/ssl/etcd-ca.pem \ |

| --etcd-certfile=/etc/etcd/ssl/etcd.pem \ |

| --etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \ |

| --client-ca-file=/etc/kubernetes/pki/ca.pem \ |

| --tls-cert-file=/etc/kubernetes/pki/apiserver.pem \ |

| --tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \ |

| --kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \ |

| --kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \ |

| --service-account-key-file=/etc/kubernetes/pki/sa.pub \ |

| --service-account-signing-key-file=/etc/kubernetes/pki/sa.key \ |

| --service-account-issuer=https://kubernetes.default.svc.cluster.local \ |

| --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \ |

| --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \ |

| --authorization-mode=Node,RBAC \ |

| --enable-bootstrap-token-auth=true \ |

| --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \ |

| --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \ |

| --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \ |

| --requestheader-allowed-names=aggregator \ |

| --requestheader-group-headers=X-Remote-Group \ |

| --requestheader-extra-headers-prefix=X-Remote-Extra- \ |

| --requestheader-username-headers=X-Remote-User |

| |

| |

| Restart=on-failure |

| RestartSec=10s |

| LimitNOFILE=65535 |

| |

| [Install] |

| WantedBy=multi-user.target |

| EOF |

1.5 Start the service on all master nodes

2. Start the kube-controller-manager service on all master nodes

2.1 Create the service unit file

| [root@k8s-master01 ~] |

| [Unit] |

| Description=Kubernetes Controller Manager |

| Documentation=https://github.com/kubernetes/kubernetes |

| After=network.target |

| |

| [Service] |

| ExecStart=/usr/local/bin/kube-controller-manager \ |

| --v=2 \ |

| --logtostderr=true \ |

| --address=127.0.0.1 \ |

| --root-ca-file=/etc/kubernetes/pki/ca.pem \ |

| --cluster-signing-cert-file=/etc/kubernetes/pki/ca.pem \ |

| --cluster-signing-key-file=/etc/kubernetes/pki/ca-key.pem \ |

| --service-account-private-key-file=/etc/kubernetes/pki/sa.key \ |

| --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig \ |

| --leader-elect=true \ |

| --use-service-account-credentials=true \ |

| --node-monitor-grace-period=40s \ |

| --node-monitor-period=5s \ |

| --pod-eviction-timeout=2m0s \ |

| --controllers=*,bootstrapsigner,tokencleaner \ |

| --allocate-node-cidrs=true \ |

| --cluster-cidr=172.16.0.0/12 \ |

| --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \ |

| --node-cidr-mask-size=24 |

| |

| Restart=always |

| RestartSec=10s |

| |

| [Install] |

| WantedBy=multi-user.target |

| EOF |

2.2 Start the service

| [root@k8s-master01 ~] |

| [root@k8s-master01 ~] |

| [root@k8s-master01 ~] |

| ● kube-controller-manager.service - Kubernetes Controller Manager |

| Loaded: loaded (/usr/lib/systemd/system/kube-controller-manager.service; enabled; vendor preset: disabled) |

| Active: active (running) since Sat 2023-04-29 15:41:32 CST; 1s ago |

| Docs: https://github.com/kubernetes/kubernetes |

| Main PID: 2100 (kube-controller) |

| Tasks: 1 |

| Memory: 33.0M |

| CGroup: /system.slice/kube-controller-manager.service |

| └─2100 /usr/local/bin/kube-controller-manager --v=2 --log... |

| |

| Apr 29 15:41:32 k8s-master01 systemd[1]: Started Kubernetes Controll... |

| Hint: Some lines were ellipsized, use -l to show in full. |

| |

3. Start the kube-scheduler service on all master nodes

3.1 Create the service unit file

| [root@k8s-master01 ~] |

| [Unit] |

| Description=Kubernetes Scheduler |

| Documentation=https://github.com/kubernetes/kubernetes |

| After=network.target |

| |

| [Service] |

| ExecStart=/usr/local/bin/kube-scheduler \ |

| --v=2 \ |

| --logtostderr=true \ |

| --address=127.0.0.1 \ |

| --leader-elect=true \ |

| --kubeconfig=/etc/kubernetes/scheduler.kubeconfig |

| |

| Restart=always |

| RestartSec=10s |

| |

| [Install] |

| WantedBy=multi-user.target |

| EOF |

3.2 Start the service and check its status

| [root@k8s-master01 ~] |

| [root@k8s-master01 ~] |

| [root@k8s-master01 ~] |

| ● kube-scheduler.service - Kubernetes Scheduler |

| Loaded: loaded (/usr/lib/systemd/system/kube-scheduler.service; enabled; vendor preset: disabled) |

| Active: active (running) since Sat 2023-04-29 15:42:55 CST; 3s ago |

| Docs: https://github.com/kubernetes/kubernetes |

| Main PID: 2216 (kube-scheduler) |

| Tasks: 4 |

| Memory: 49.6M |

| CGroup: /system.slice/kube-scheduler.service |

| └─2216 /usr/local/bin/kube-scheduler --v=2 --logtostderr=... |

| |

| Apr 29 15:42:55 k8s-master01 systemd[1]: Started Kubernetes Scheduler. |

| Hint: Some lines were ellipsized, use -l to show in full. |

| |

3.3 Check that the components are healthy

| [root@k8s-master01 ~]# kubectl get cs --kubeconfig=/etc/kubernetes/admin.kubeconfig |

| Warning: v1 ComponentStatus is deprecated in v1.19+ |

| NAME STATUS MESSAGE ERROR |

| controller-manager Healthy ok |

| scheduler Healthy ok |

| etcd-1 Healthy {"health":"true","reason":""} |

| etcd-2 Healthy {"health":"true","reason":""} |

| etcd-0 Healthy {"health":"true","reason":""} |
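When this check is scripted, it helps to reduce the table above to just the components that are not healthy. The sketch below does that with awk; `unhealthy_components` is a hypothetical helper name, and it assumes the column layout shown above (NAME first, STATUS second).

```shell
#!/usr/bin/env bash
# Sketch: read `kubectl get cs` output on stdin and print every component
# whose STATUS column is not "Healthy".
# unhealthy_components is a hypothetical helper name.
unhealthy_components() {
  awk '
    /^Warning:/ { next }        # skip the deprecation warning, if present
    $1 == "NAME" { next }       # skip the table header
    NF >= 2 && $2 != "Healthy" { print $1 }
  '
}
```

Piping the command output through it, for example `kubectl get cs | unhealthy_components`, prints nothing when every component is healthy.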

VIII. Set up TLS bootstrapping for automatic certificate issuance

1. Create the bootstrap-kubelet.kubeconfig file on k8s-master01

| [root@k8s-master01 ~/softwares] |

| [root@k8s-master01 ~/softwares] |

| kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/pki/ca.pem --embed-certs=true --server=https://10.0.0.222:6443 --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig |

| kubectl config set-credentials tls-bootstrap-token-user --token=c8ad9c.2e4d610cf3e7426e --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig |

| kubectl config set-context tls-bootstrap-token-user@kubernetes --cluster=kubernetes --user=tls-bootstrap-token-user --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig |

| kubectl config use-context tls-bootstrap-token-user@kubernetes --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig |

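| Note: |

| "bootstrap-kubelet.kubeconfig" is the file the kubelet uses to request a certificate from the apiserver. If you change token-id and token-secret in bootstrap.secret.yaml, keep the same format and length as c8ad9c.2e4d610cf3e7426e (a 6-character ID, a dot, then a 16-character secret), and make sure the value passed to --token in the command above matches your new token exactly. |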
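Because a mistyped token only shows up later as a failed kubelet registration, it can be worth checking the token shape before writing it into the kubeconfig. A minimal sketch, assuming the standard bootstrap token format of a 6-character ID and a 16-character secret made of lowercase letters and digits; `is_bootstrap_token` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Sketch: check that a string has the bootstrap-token shape
# "<6 chars>.<16 chars>", each part lowercase letters or digits.
# is_bootstrap_token is a hypothetical helper name.
is_bootstrap_token() {
  local LC_ALL=C   # keep [a-z] limited to ASCII lowercase
  [[ "$1" =~ ^[a-z0-9]{6}\.[a-z0-9]{16}$ ]]
}
```

For example, `is_bootstrap_token c8ad9c.2e4d610cf3e7426e && echo ok` accepts the token used in this guide. The check covers the format only; it cannot tell whether the token matches the one stored in the cluster.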

2. Copy the admin certificate on all master nodes

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/bootstrap] |

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/bootstrap] |

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/bootstrap] |

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/bootstrap] |

3. Create the bootstrap resources on master01

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/bootstrap] |

IX. Deploy the worker nodes

1. Copy the certificates from master01

| [root@k8s-master01 /etc/kubernetes] |

| [root@k8s-master01 /etc/kubernetes] |

| ssh $NODE mkdir -p /etc/kubernetes/pki /etc/etcd/ssl |

| for FILE in etcd-ca.pem etcd.pem etcd-key.pem; do |

| scp /etc/etcd/ssl/$FILE $NODE:/etc/etcd/ssl/ |

| done |

| for FILE in pki/ca.pem pki/ca-key.pem pki/front-proxy-ca.pem bootstrap-kubelet.kubeconfig; do |

| scp /etc/kubernetes/$FILE $NODE:/etc/kubernetes/${FILE} |

| done |

| done |

| Note: |

| Worker nodes obtain their certificates through automatic issuance (TLS bootstrapping). |
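Before copying key material to other machines, it can be useful to print the commands first and review them. The sketch below is a dry run of the copy loop above: it only echoes the `ssh` and `scp` commands. `print_copy_plan` is a hypothetical helper, and the node list in the example is an assumption based on the host table at the top of this guide.

```shell
#!/usr/bin/env bash
# Sketch: print (not run) the copy commands for each target node so the
# plan can be reviewed first. print_copy_plan is a hypothetical helper.
print_copy_plan() {
  local node file
  for node in "$@"; do
    echo "ssh ${node} mkdir -p /etc/kubernetes/pki /etc/etcd/ssl"
    for file in etcd-ca.pem etcd.pem etcd-key.pem; do
      echo "scp /etc/etcd/ssl/${file} ${node}:/etc/etcd/ssl/"
    done
    for file in pki/ca.pem pki/ca-key.pem pki/front-proxy-ca.pem bootstrap-kubelet.kubeconfig; do
      echo "scp /etc/kubernetes/${file} ${node}:/etc/kubernetes/${file}"
    done
  done
}

# Example with an assumed node list (the two workers from the host table).
print_copy_plan k8s-node01 k8s-node02
```

Once the printed commands look right, the same loop can be run for real by removing the `echo` wrappers.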

2. Configure the kubelet

2.1 Create the working directories on all nodes

| [root@k8s-master01 /etc/kubernetes] |

2.2 Create the kubelet service unit on all nodes

| [root@k8s-master01 /etc/kubernetes] |

| [Unit] |

| Description=Kubernetes Kubelet |

| Documentation=https://github.com/kubernetes/kubernetes |

| After=docker.service |

| Requires=docker.service |

| |

| [Service] |

| ExecStart=/usr/local/bin/kubelet |

| Restart=always |

| StartLimitInterval=0 |

| RestartSec=10 |

| |

| [Install] |

| WantedBy=multi-user.target |

| EOF |

2.3 Create the kubelet service drop-in configuration on all nodes

| [root@k8s-master01 /etc/kubernetes] |

| [Service] |

| Environment="KUBELET_KUBECONFIG_ARGS=--bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig --kubeconfig=/etc/kubernetes/kubelet.kubeconfig" |

| Environment="KUBELET_SYSTEM_ARGS=--network-plugin=cni --cni-conf-dir=/etc/cni/net.d --cni-bin-dir=/opt/cni/bin" |

| Environment="KUBELET_CONFIG_ARGS=--config=/etc/kubernetes/kubelet-conf.yml --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google_containers/pause-amd64:3.2" |

| Environment="KUBELET_EXTRA_ARGS=--node-labels=node.kubernetes.io/node='' " |

| ExecStart= |

| ExecStart=/usr/local/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_CONFIG_ARGS $KUBELET_SYSTEM_ARGS $KUBELET_EXTRA_ARGS |

| EOF |

2.4 Create the kubelet configuration file on all nodes

| [root@k8s-master01 /etc/kubernetes] |

| apiVersion: kubelet.config.k8s.io/v1beta1 |

| kind: KubeletConfiguration |

| address: 0.0.0.0 |

| port: 10250 |

| readOnlyPort: 10255 |

| authentication: |

| anonymous: |

| enabled: false |

| webhook: |

| cacheTTL: 2m0s |

| enabled: true |

| x509: |

| clientCAFile: /etc/kubernetes/pki/ca.pem |

| authorization: |

| mode: Webhook |

| webhook: |

| cacheAuthorizedTTL: 5m0s |

| cacheUnauthorizedTTL: 30s |

| cgroupDriver: systemd |

| cgroupsPerQOS: true |

| clusterDNS: |

| - 10.96.0.10 |

| clusterDomain: yuanlinux.com |

| containerLogMaxFiles: 5 |

| containerLogMaxSize: 10Mi |

| contentType: application/vnd.kubernetes.protobuf |

| cpuCFSQuota: true |

| cpuManagerPolicy: none |

| cpuManagerReconcilePeriod: 10s |

| enableControllerAttachDetach: true |

| enableDebuggingHandlers: true |

| enforceNodeAllocatable: |

| - pods |

| eventBurst: 10 |

| eventRecordQPS: 5 |

| evictionHard: |

| imagefs.available: 15% |

| memory.available: 100Mi |

| nodefs.available: 10% |

| nodefs.inodesFree: 5% |

| evictionPressureTransitionPeriod: 5m0s |

| failSwapOn: true |

| fileCheckFrequency: 20s |

| hairpinMode: promiscuous-bridge |

| healthzBindAddress: 127.0.0.1 |

| healthzPort: 10248 |

| httpCheckFrequency: 20s |

| imageGCHighThresholdPercent: 85 |

| imageGCLowThresholdPercent: 80 |

| imageMinimumGCAge: 2m0s |

| iptablesDropBit: 15 |

| iptablesMasqueradeBit: 14 |

| kubeAPIBurst: 10 |

| kubeAPIQPS: 5 |

| makeIPTablesUtilChains: true |

| maxOpenFiles: 1000000 |

| maxPods: 110 |

| nodeStatusUpdateFrequency: 10s |

| oomScoreAdj: -999 |

| podPidsLimit: -1 |

| registryBurst: 10 |

| registryPullQPS: 5 |

| resolvConf: /etc/resolv.conf |

| rotateCertificates: true |

| runtimeRequestTimeout: 2m0s |

| serializeImagePulls: true |

| staticPodPath: /etc/kubernetes/manifests |

| streamingConnectionIdleTimeout: 4h0m0s |

| syncFrequency: 1m0s |

| volumeStatsAggPeriod: 1m0s |

| EOF |

2.5 Start the kubelet on all nodes

| [root@k8s-master01 /etc/kubernetes] |

| [root@k8s-master01 /etc/kubernetes] |

| Created symlink from /etc/systemd/system/multi-user.target.wants/kubelet.service to /usr/lib/systemd/system/kubelet.service. |

| [root@k8s-master01 /etc/kubernetes] |

| ● kubelet.service - Kubernetes Kubelet |

| Loaded: loaded (/usr/lib/systemd/system/kubelet.service; enabled; vendor preset: disabled) |

| Drop-In: /etc/systemd/system/kubelet.service.d |

| └─10-kubelet.conf |

| Active: active (running) since Sat 2023-04-29 16:21:26 CST; 7s ago |

| Docs: https://github.com/kubernetes/kubernetes |

| Main PID: 2784 (kubelet) |

| Tasks: 1 |

| Memory: 10.2M |

| CGroup: /system.slice/kubelet.service |

| └─2784 /usr/local/bin/kubelet --bootstrap-kubeconfig=/e... |

| |

| Apr 29 16:21:26 k8s-master01 systemd[1]: Started Kubernetes Kubelet. |

| Hint: Some lines were ellipsized, use -l to show in full. |

| |

2.6 Check the node list from k8s-master01

| [root@k8s-master01 /etc/kubernetes] |

| NAME STATUS ROLES AGE VERSION |

| k8s-master01 NotReady <none> 59m v1.23.15 |

| k8s-master02 NotReady <none> 58m v1.23.15 |

| k8s-master03 NotReady <none> 58m v1.23.15 |

| k8s-node01 NotReady <none> 38s v1.23.15 |

| k8s-node02 NotReady <none> 23s v1.23.15 |

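Pitfall: after starting the kubelet, it failed to come up on the worker nodes because a required directory was missing. If you follow the same steps, check that every required file and directory exists on each node. The NotReady status at this stage is expected, since no CNI network plugin has been deployed yet.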

3. Configure kube-proxy

3.1 Generate the "/etc/kubernetes/kube-proxy.kubeconfig" file on "k8s-master01"

| [root@k8s-master01 ~/softwares] |

| [root@k8s-master01 ~/softwares/07-k8s-ha-install] |

| kubectl create clusterrolebinding system:kube-proxy --clusterrole system:node-proxier --serviceaccount kube-system:kube-proxy |

| SECRET=$(kubectl -n kube-system get sa/kube-proxy \ |

| --output=jsonpath='{.secrets[0].name}') |

| JWT_TOKEN=$(kubectl -n kube-system get secret/$SECRET \ |

| --output=jsonpath='{.data.token}' | base64 -d) |

| PKI_DIR=/etc/kubernetes/pki |

| K8S_DIR=/etc/kubernetes |

| kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/pki/ca.pem --embed-certs=true --server=https://10.0.0.222:6443 --kubeconfig=${K8S_DIR}/kube-proxy.kubeconfig |

| kubectl config set-credentials kubernetes --token=${JWT_TOKEN} --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig |

| kubectl config set-context kubernetes --cluster=kubernetes --user=kubernetes --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig |

| kubectl config use-context kubernetes --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig |

3.2 Copy the kube-proxy kubeconfig file from "k8s-master01" to the other nodes

| [root@k8s-master01 ~/softwares/07-k8s-ha-install] |

| scp /etc/kubernetes/kube-proxy.kubeconfig $NODE:/etc/kubernetes/kube-proxy.kubeconfig |

| done |

3.3 Create the kube-proxy.conf and kube-proxy-config.yml configuration files on all nodes

| [root@k8s-master01 ~] |

| KUBE_PROXY_OPTS="--logtostderr=false \\ |

| --v=2 \\ |

| --log-dir=/var/log/kubernetes/ \\ |

| --config=/etc/kubernetes/kube-proxy-config.yml" |

| EOF |

| |

| |

| |

| |

| [root@k8s-master01 ~] |

| kind: KubeProxyConfiguration |

| apiVersion: kubeproxy.config.k8s.io/v1alpha1 |

| bindAddress: 0.0.0.0 |

| metricsBindAddress: 0.0.0.0:10249 |

| clientConnection: |

| kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig |

| hostnameOverride: k8s-master01 |

| clusterCIDR: 172.30.0.0/16 |

| EOF |

| |

| [root@k8s-master02 ~] |

| kind: KubeProxyConfiguration |

| apiVersion: kubeproxy.config.k8s.io/v1alpha1 |

| bindAddress: 0.0.0.0 |

| metricsBindAddress: 0.0.0.0:10249 |

| clientConnection: |

| kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig |

| hostnameOverride: k8s-master02 |

| clusterCIDR: 172.30.0.0/16 |

| EOF |

| |

| [root@k8s-master03 ~] |

| kind: KubeProxyConfiguration |

| apiVersion: kubeproxy.config.k8s.io/v1alpha1 |

| bindAddress: 0.0.0.0 |

| metricsBindAddress: 0.0.0.0:10249 |

| clientConnection: |

| kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig |

| hostnameOverride: k8s-master03 |

| clusterCIDR: 172.30.0.0/16 |

| EOF |

| |

| [root@k8s-node01 ~] |

| kind: KubeProxyConfiguration |

| apiVersion: kubeproxy.config.k8s.io/v1alpha1 |

| bindAddress: 0.0.0.0 |

| metricsBindAddress: 0.0.0.0:10249 |

| clientConnection: |

| kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig |

| hostnameOverride: k8s-node01 |

| clusterCIDR: 172.30.0.0/16 |

| EOF |

| |

| [root@k8s-node02 ~] |

| kind: KubeProxyConfiguration |

| apiVersion: kubeproxy.config.k8s.io/v1alpha1 |

| bindAddress: 0.0.0.0 |

| metricsBindAddress: 0.0.0.0:10249 |

| clientConnection: |

| kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig |

| hostnameOverride: k8s-node02 |

| clusterCIDR: 172.30.0.0/16 |

| EOF |
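The five kube-proxy-config.yml files above differ only in `hostnameOverride`, so a single template can serve every node. The following is a sketch under that assumption; `render_kube_proxy_config` is a hypothetical helper, and the rest of the content is copied from the files above.

```shell
#!/usr/bin/env bash
# Sketch: render kube-proxy-config.yml from a node name and a Pod CIDR.
# render_kube_proxy_config is a hypothetical helper name.
render_kube_proxy_config() {
  local node_name="$1" cluster_cidr="$2"
  cat <<EOF
kind: KubeProxyConfiguration
apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 0.0.0.0
metricsBindAddress: 0.0.0.0:10249
clientConnection:
  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig
hostnameOverride: ${node_name}
clusterCIDR: ${cluster_cidr}
EOF
}

# Example: print the configuration for one node with this guide's Pod CIDR.
render_kube_proxy_config k8s-node01 172.30.0.0/16
```

On a node whose hostname matches its Kubernetes node name, the output could be redirected to the file, for example `render_kube_proxy_config "$(hostname)" 172.30.0.0/16 > /etc/kubernetes/kube-proxy-config.yml`.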

3.4 Manage kube-proxy with systemd on all nodes

| [root@k8s-master01 ~] |

| [Unit] |

| Description=Kubernetes Proxy |

| After=network.target |

| |

| [Service] |

| EnvironmentFile=/etc/kubernetes/kube-proxy.conf |

| ExecStart=/usr/local/bin/kube-proxy \$KUBE_PROXY_OPTS |

| Restart=on-failure |

| LimitNOFILE=65536 |

| |

| [Install] |

| WantedBy=multi-user.target |

| EOF |

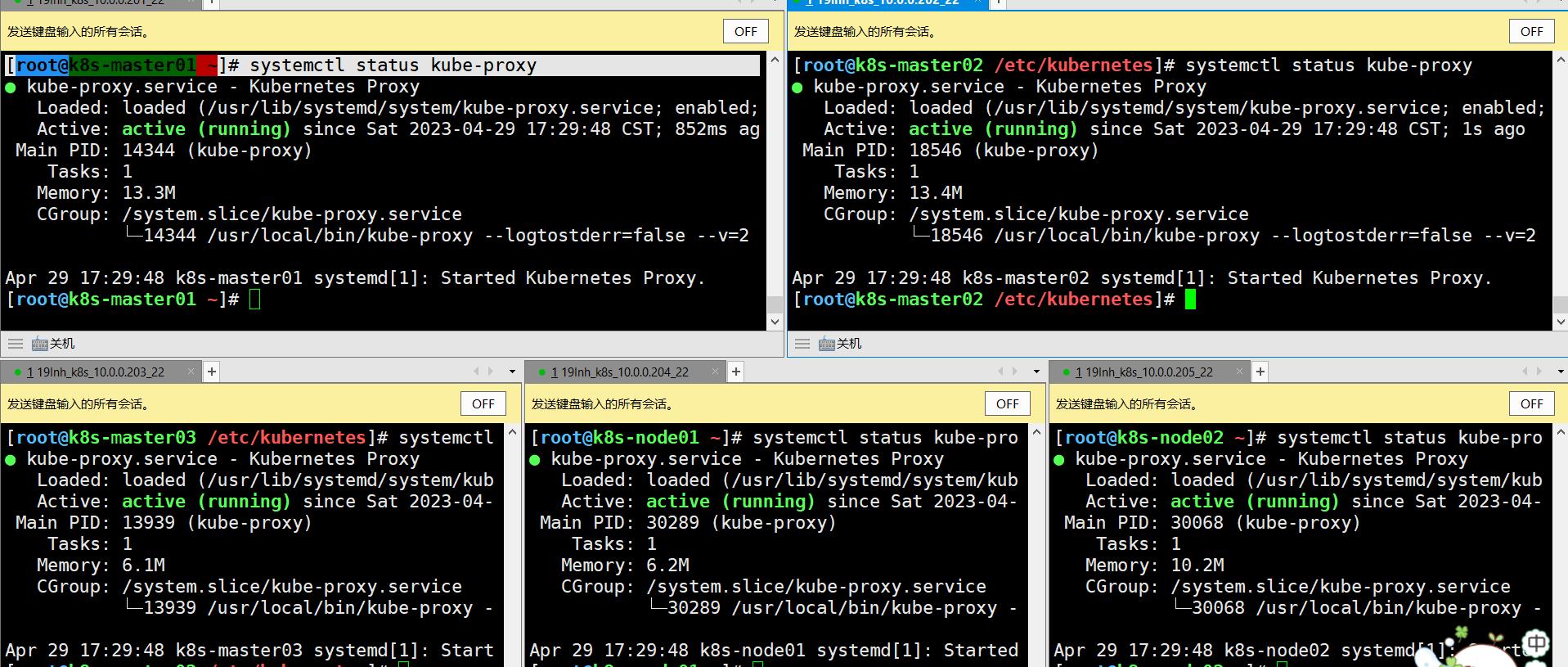

3.5 Start kube-proxy on all nodes

| [root@k8s-master01 ~] |

| [root@k8s-master01 ~] |

| Created symlink from /etc/systemd/system/multi-user.target.wants/kube-proxy.service to /usr/lib/systemd/system/kube-proxy.service. |

| [root@k8s-master01 ~] |

Note:

If you change the cluster's Pod network range, update the clusterCIDR parameter in kube-proxy-config.yml to match. The example above uses the custom range "172.30.0.0/16".

X. Deploy the network plugin from master01

1. Prepare the environment

| |

| [root@k8s-master01 ~] |

| 10.0.0.250 harbor.yuanlinux.com |

| |

| [root@k8s-master01 ~] |

| |

| |

| [root@harbor.yuanlinux.com ~] |

| [root@harbor.yuanlinux.com ~] |

| [root@harbor.yuanlinux.com ~] |

| [root@harbor.yuanlinux.com ~] |

| [root@harbor.yuanlinux.com ~] |

| |

| |

2. Deploy the calico network plugin

| [root@k8s-master01 ~/softwares] |

| |

| |

| |

| 17 etcd-key: value shown in the steps below |

| 18 etcd-cert: value shown in the steps below |

| 19 etcd-ca: value shown in the steps below |

| 31 etcd_endpoints: "https://10.0.0.201:2379,https://10.0.0.202:2379,https://10.0.0.203:2379" |

| 32 |

| 33 |

| 34 etcd_ca: "/calico-secrets/etcd-ca" |

| 35 etcd_cert: "/calico-secrets/etcd-cert" |

| 36 etcd_key: "/calico-secrets/etcd-key" |

| 366 - name: CALICO_IPV4POOL_CIDR |

| 367 value: "172.30.0.0/16" |

| |

| |

| 235 - name: install-cni |

| 236 image: harbor.yuanlinux.com/k8s/cni:v3.22.0 |

| 279 - name: flexvol-driver |

| 280 image: harbor.yuanlinux.com/k8s/pod2daemon-flexvol:v3.22.0 |

| 290 - name: calico-node |

| 291 image: harbor.yuanlinux.com/k8s/node:v3.22.0 |

| 532 - name: calico-kube-controllers |

| 533 image: harbor.yuanlinux.com/k8s/kube-controllers:v3.22.0 |

| |

| |

| |

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/calico] |

| LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQpNSUlFcFFJQkFBS0NBUUVBdVdZSVg2b2xXS3dMUEtJSzRZcHB4ZG5qNnBIb3N2c3BVUFhsLytpcngvVGJHc0h5ClQ5eEtvRTR1SWlCek00dzB6Z0NkTWVVYVZaTzBUVVVHV0hzMlR6c0FlV3hzbVRqUC9qNGxzQ0hsOXdOMTg0aFoKV0wvYytreU4xUEdxWTMxV3AweFN6L0tsa0RtcmxManMyQkVBekJYU2JlR1RJemFnTFRXZEE1NlJGK1p6TVJmZApIRjRzT293eTl4dy8vZk04bENXMzQ0Sk1yNmxHZ2hsUnVTa2NJRklYLzNNemo4V0NLNyt2VFE2b2k0SllzWS9NCkZVZHM2WHRacHZPcGxUcUo2Zk05Q2VaR25UYnlOTGJPNk5VbS84eDRjdC9mYmJ3Ui9oTTBxcnlnUkU4eWpxQW8KbzdFMjhvTkxGUkRiTmxzZ3VObWNyQmo0eUJ2SjJSaFEyejEyR1FJREFRQUJBb0lCQUZ5dndlZnhyQklVa05tVApPVlZnV1Zqc2dhRTNxTm94N29ubkpVRTNGUW8yUTRPeENtOGFkc1NGMFZLR1hwR2F1cHR5ZXlRQ29aTys4QmpoCk5UYnZBa3ZCOTQ2OHdkNG9KUE82SmlWVURSL2N2dzh0VDEyckxkS0Vpek8yVGJUSGFKYmk5Vk80djBUSFVCeGUKQnlwTjlkUVI1TTNDUkVrS2VqR2Y2QXR6THA1L1lOamxIQXdpUFUvaEllUVRBS1JodTVZekw5NFZFQTVQSjVxegp5OVNHYjlUZ3lQUmNSem5xOFpmL3lhTmlPYXU3bVJ4U094UlM3QzdWdEZISVpiOGg1WXQ0eEJGNG9VeVpWSGdMCnp4VjlBZ3psZ0JvaGprTGhJeDRhOTB0TlJ1cHF1bDR3b1lnc24va0c5RE4vZUYrWGlNTWx1WWtVL1dvNGFUeHAKTFY5UFZNRUNnWUVBN1paR2NmRlBJbVg4VmFjVHB1YzBLN0ZPRnJTbUMzTWY4dDFsYkhhZi9WQ1JFSW51RmgrMgppdVdXNGJ1cjZ5SVFIRS9LSGhmUm9jT3BhK2JRWjlmc01aMFZ0b3V1d3dqc0JsYnI0Znl2aTVtSmsxNkZNQXFICjJ3L0VhTDl0S3pXWGxMejQzV3FHemxLM0x3TzJvUkkwUVNybTBqaS81bmkzZUxKcThmY2dhODBDZ1lFQXg4UlcKelhPa3U1M2hSRkRXMWhuaWFnM0RiR3FzUTNYY3NtdnpVaTFMN0MrS3lobFB4SmpqNXVmREZ4MVhZdkt6L0wveAo4Skp1Y2VlRU5RTmo3TE9Xd1RWb0s3N29JNDdWZmcrYjdUR1BsNkpWcTZ0TUZpV0xmYW9JZnV4ZVdSWjBraWR6CitpMEZ0eTd5dXRGbzR0dE15QWZab0V4cXpkN1ZKY090YzFQSEgzMENnWUVBdDN2bitaVkg3U1BnSlhIN3psa2UKUkdRUkQ1NEI0alBOeDYxTjU5OFJIZnY3bkU4NWJTS2V3bFFmRzBQcHVKUzg1bkNFZ29zWW5acFRISDdNRW5hQgo5YXNBR3ROemF6Slh2V21oa0F5cXNlQW9qSVJoemNGRVBGekg3YkZ3cVA4aGlvQUtua3pud1MzR1JPdlVQajZsCjFuSkFncmZMRkQzRVM5VlduSG1qTXowQ2dZRUFwb1lWc2Nndnp6SUp3Vy85MXBYWE5uN29vK3k4VXJQaVdGMUMKaFFNN1lkUXp4c3FZd3hLTUVFU3NUUTFwZGhOSlZHMFJHbkNHWHE4V2R6YXZTblplT2dyeUhsMVNsNm1PY0RwRQp5ZEhobUE1N2lkSU9aL3UrTHUvWml5d3diZVVaSVdoLzlsRW5qWTgyU2VNY290Y2FSemk4QWpNUmFUSFN6bHN5CnNJdHExdVVDZ1lFQTI0dXc3aFIydE9qOEtXck5VMkZTamppR1
hjM2VDTmxPN1diUjRHL3hhUnJMcUxxN3R3L0kKWTRNNFVuTHpnVzd2VW1RUEVhKy9lU3lCQjFCaUZmcm5xMWtWMEVDdVlQb1JoYW1hT2pBS0trcUZONm5sTk9DMwp6MXRCMUtWdThIcjhXVXBuQ2hjWkcxVS9xZUVVNWZuaUpEWGJBMkpaVUwyWXNyK2tnTXNBUDJrPQotLS0tLUVORCBSU0EgUFJJVkFURSBLRVktLS0tLQo= |

| |

| |

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/calico] |

| LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUVMRENDQXhTZ0F3SUJBZ0lVZG1WN3VWZE5YSkxiSDJ0Tm50Nk9KeStNekk4d0RRWUpLb1pJaHZjTkFRRUwKQlFBd1p6RUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeERUQUxCZ05WQkFvVEJHVjBZMlF4RmpBVUJnTlZCQXNURFVWMFkyUWdVMlZqZFhKcGRIa3hEVEFMCkJnTlZCQU1UQkdWMFkyUXdJQmNOTWpNd05ESTNNVFF6TmpBd1doZ1BNakV5TXpBME1ETXhORE0yTURCYU1HY3gKQ3pBSkJnTlZCQVlUQWtOT01SQXdEZ1lEVlFRSUV3ZENaV2xxYVc1bk1SQXdEZ1lEVlFRSEV3ZENaV2xxYVc1bgpNUTB3Q3dZRFZRUUtFd1JsZEdOa01SWXdGQVlEVlFRTEV3MUZkR05rSUZObFkzVnlhWFI1TVEwd0N3WURWUVFECkV3UmxkR05rTUlJQklqQU5CZ2txaGtpRzl3MEJBUUVGQUFPQ0FROEFNSUlCQ2dLQ0FRRUF1V1lJWDZvbFdLd0wKUEtJSzRZcHB4ZG5qNnBIb3N2c3BVUFhsLytpcngvVGJHc0h5VDl4S29FNHVJaUJ6TTR3MHpnQ2RNZVVhVlpPMApUVVVHV0hzMlR6c0FlV3hzbVRqUC9qNGxzQ0hsOXdOMTg0aFpXTC9jK2t5TjFQR3FZMzFXcDB4U3ovS2xrRG1yCmxManMyQkVBekJYU2JlR1RJemFnTFRXZEE1NlJGK1p6TVJmZEhGNHNPb3d5OXh3Ly9mTThsQ1czNDRKTXI2bEcKZ2hsUnVTa2NJRklYLzNNemo4V0NLNyt2VFE2b2k0SllzWS9NRlVkczZYdFpwdk9wbFRxSjZmTTlDZVpHblRieQpOTGJPNk5VbS84eDRjdC9mYmJ3Ui9oTTBxcnlnUkU4eWpxQW9vN0UyOG9OTEZSRGJObHNndU5tY3JCajR5QnZKCjJSaFEyejEyR1FJREFRQUJvNEhOTUlIS01BNEdBMVVkRHdFQi93UUVBd0lGb0RBZEJnTlZIU1VFRmpBVUJnZ3IKQmdFRkJRY0RBUVlJS3dZQkJRVUhBd0l3REFZRFZSMFRBUUgvQkFJd0FEQWRCZ05WSFE0RUZnUVViaWRhZU51aAp2cERnRkYxSE1mdldXa1liV0tVd0h3WURWUjBqQkJnd0ZvQVVPWFJyMEc2MXhtOVNDR05NOVd0Mmd0MkNIaXd3ClN3WURWUjBSQkVRd1FvSU1hemh6TFcxaGMzUmxjakF4Z2d4ck9ITXRiV0Z6ZEdWeU1ES0NER3M0Y3kxdFlYTjAKWlhJd000Y0Vmd0FBQVljRUNnQUF5WWNFQ2dBQXlvY0VDZ0FBeXpBTkJna3Foa2lHOXcwQkFRc0ZBQU9DQVFFQQpiaWJWMmR6QzZuWWJuRXg1MlhhMjNRbkI2cWFqbS9MSUl1ci9ZcjV3MnZWUkRUdUJ6Sk8vUHowT1BzU3JtY0FRCkEydFZNT3g1RU5GS3B6dzZ3Z2M2MllVZDNYYlpiOCtQbjZoTndQRFpwYUd1aUlwU3lQaE95OUl4ZmZLOWFQZysKQlBOMU1TeTN3NGFOcGgzY2N2UlUzMzVzUzdoREF1T1dwMmU1M09NdmdnVGIyN1ZPNXI4dkxWUFJRTzF6a3REcApLTE9ldkl5aGN3TVB2eXljT2NqUmR6QmFwT1EraENkV1pwUjdvUXZVTUNzTmR5clpGeUxTZkVwR2pHOHJXU24rCldKcFROaDlyVnpFdWp3WTU2UVBDak00N3NtQnVJcy9BNGN0dnh0T0VoRWpsc01zSEJTS2l1VTkxb0VJRnAyVmwKZ2g4Y0JjN21sVGE2eldqQUhoaFZGQT09Ci0tLS0tRU5EIENFUlRJRk
lDQVRFLS0tLS0K |

| |

| |

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/calico] |

| LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUR4RENDQXF5Z0F3SUJBZ0lVVldPZm01S2dIS21HdlFqeWU2R2hvVkVrYUw0d0RRWUpLb1pJaHZjTkFRRUwKQlFBd1p6RUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeERUQUxCZ05WQkFvVEJHVjBZMlF4RmpBVUJnTlZCQXNURFVWMFkyUWdVMlZqZFhKcGRIa3hEVEFMCkJnTlZCQU1UQkdWMFkyUXdJQmNOTWpNd05ESTNNVFF6TmpBd1doZ1BNakV5TXpBME1ETXhORE0yTURCYU1HY3gKQ3pBSkJnTlZCQVlUQWtOT01SQXdEZ1lEVlFRSUV3ZENaV2xxYVc1bk1SQXdEZ1lEVlFRSEV3ZENaV2xxYVc1bgpNUTB3Q3dZRFZRUUtFd1JsZEdOa01SWXdGQVlEVlFRTEV3MUZkR05rSUZObFkzVnlhWFI1TVEwd0N3WURWUVFECkV3UmxkR05rTUlJQklqQU5CZ2txaGtpRzl3MEJBUUVGQUFPQ0FROEFNSUlCQ2dLQ0FRRUEyUURLQWdGUTlkMmoKQVZGRElnTTB6TzNFcE1EZk5wc3FLdnRlNDZtVS82TWJlSE1GQzNQNHNjNXFEYk04N0Q4QUZvNUs4VkwwcDRUWgpWK0JqU2lBSHI0UDZDL2pqalZJZmRLanJlc0UyVVpjSDIvRmRieXhpejVBTEJhNlpMUFhETjlxVmlhWkorYlVvCnRzZXJpZUllVWtxdElPTFRXNVBXbTR0eTFBa081c042NjZ4eTBKTTNBZnIwSk43SHozaytoTEplNkdnRHRJWFAKTnpPbXRDdEl4aVgvQ3JrT1d4YkEwd0V1bFRyaHRjZHRWWXBhVTd6amVHa0xmZHdNRmdEUWtOSERDajAzR3Y4dApDbXhGOWhXem5EYkM4V0dMZDNPUi9ZUlJsb2w5eEN0bm1Vc3JISDNXT0krTW9jU1YrRXd4Q2JzelVVOEtLd01wCjRVUUNFQ1YrRlFJREFRQUJvMll3WkRBT0JnTlZIUThCQWY4RUJBTUNBUVl3RWdZRFZSMFRBUUgvQkFnd0JnRUIKL3dJQkFqQWRCZ05WSFE0RUZnUVVPWFJyMEc2MXhtOVNDR05NOVd0Mmd0MkNIaXd3SHdZRFZSMGpCQmd3Rm9BVQpPWFJyMEc2MXhtOVNDR05NOVd0Mmd0MkNIaXd3RFFZSktvWklodmNOQVFFTEJRQURnZ0VCQUtTOXZwT1lEQVR4Cjh2VWZDOHZDQzAzUUh1T3NMT3pEa0xMemtsR2pTNWN0d0hXMk1jRGV6bXZIMVZRNEloQ0UrMEo0M1p4MUNpRXcKUEFNU1gyS0pCOFRGTVJhVkRzUGFteHVNZGhnc1Fsa1RvYWNvZ3FGYWZhY1U4eFlRT3BRNjFYWXovcHkxd0UwYwowVDlJaGU3aHp5UmVvWVVTZklKYUw3TVJGZmR3QzFKL2hiajAxR3FNSVpZYTdOK3QxVVQ0T09PLy95NkoyazVHClhncFpia1NYVGVIOXJMRXU1RTdXeFlZL1BESW12UFRRQmhqMG81RUdIbEVOdzNDWHZHRnMzUTJLYmFQRGxHZTQKWUVXS0srTlhsc1daYVliZkZRMVJIczFOZW44WHAxR2NQZExTb2VpRGY1OVJIbDJLanE0akpNMlhUMGQwVVllZgpEd0pNSWx5TnZlMD0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo= |

| |

| |

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/calico] |

| secret/calico-etcd-secrets created |

| configmap/calico-config created |

| clusterrole.rbac.authorization.k8s.io/calico-kube-controllers created |

| clusterrolebinding.rbac.authorization.k8s.io/calico-kube-controllers created |

| clusterrole.rbac.authorization.k8s.io/calico-node created |

| clusterrolebinding.rbac.authorization.k8s.io/calico-node created |

| daemonset.apps/calico-node created |

| serviceaccount/calico-node created |

| deployment.apps/calico-kube-controllers created |

| serviceaccount/calico-kube-controllers created |

| Warning: policy/v1beta1 PodDisruptionBudget is deprecated in v1.21+, unavailable in v1.25+; use policy/v1 PodDisruptionBudget |

| poddisruptionbudget.policy/calico-kube-controllers created |

| |

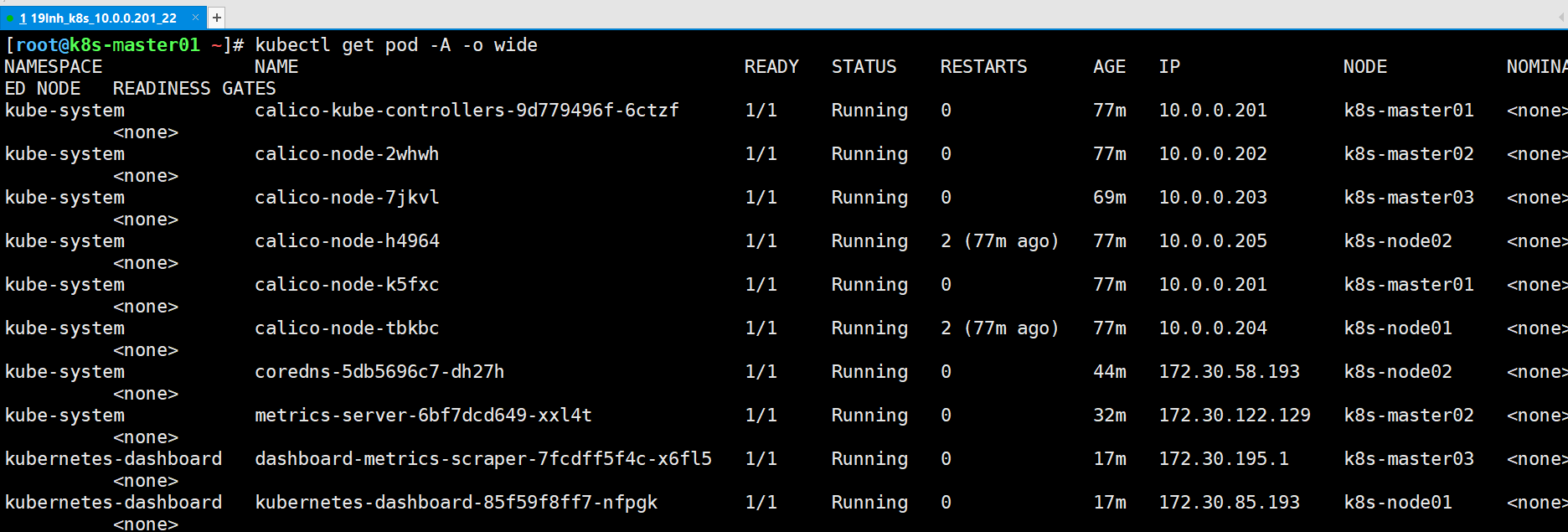

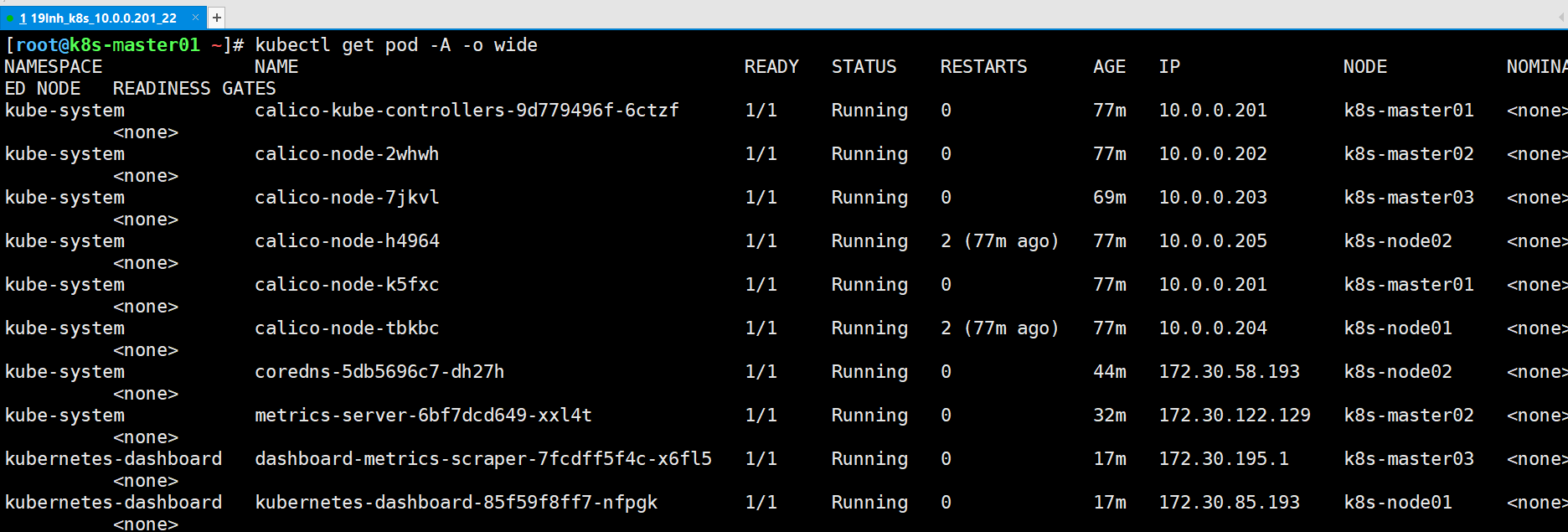
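The apply output above warns that `policy/v1beta1` PodDisruptionBudget is unavailable from v1.25, and this cluster (v1.23.15) already serves `policy/v1`. A minimal sketch of updating the manifest before a later upgrade follows. The YAML fragment is a stand-in written for illustration, not the real `calico-etcd.yaml`, and the sketch assumes the PodDisruptionBudget is the only `policy/v1beta1` object in the file.

```shell
set -eu

# Stand-in fragment (assumption): only the fields needed to show the rewrite.
manifest=$(mktemp)
cat > "$manifest" <<'EOF'
apiVersion: policy/v1beta1
kind: PodDisruptionBudget
metadata:
  name: calico-kube-controllers
  namespace: kube-system
EOF

# Rewrite only lines that are exactly the deprecated group/version.
# The result goes to a new file so it can be diffed against the original.
sed 's#^apiVersion: policy/v1beta1$#apiVersion: policy/v1#' "$manifest" > "$manifest.v1"

# Show which apiVersion lines the rewritten file now contains.
grep -n '^apiVersion:' "$manifest.v1"
```

Writing to a separate file rather than using `sed -i` keeps the original manifest available for comparison before it is re-applied.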

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/calico] |

| NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES |

| pod/calico-kube-controllers-9d779496f-6ctzf 1/1 Running 0 28m 10.0.0.201 k8s-master01 <none> <none> |

| pod/calico-node-2whwh 1/1 Running 0 28m 10.0.0.202 k8s-master02 <none> <none> |

| pod/calico-node-7jkvl 1/1 Running 0 20m 10.0.0.203 k8s-master03 <none> <none> |

| pod/calico-node-h4964 1/1 Running 2 (27m ago) 28m 10.0.0.205 k8s-node02 <none> <none> |

| pod/calico-node-k5fxc 1/1 Running 0 28m 10.0.0.201 k8s-master01 <none> <none> |

| pod/calico-node-tbkbc 1/1 Running 2 (27m ago) 28m 10.0.0.204 k8s-node01 <none> <none> |

| |

| NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME |

| node/k8s-master01 Ready <none> 159m v1.23.15 10.0.0.201 <none> CentOS Linux 7 (Core) 4.19.12-1.el7.elrepo.x86_64 docker://19.3.15 |

| node/k8s-master02 Ready <none> 159m v1.23.15 10.0.0.202 <none> CentOS Linux 7 (Core) 4.19.12-1.el7.elrepo.x86_64 docker://19.3.15 |

| node/k8s-master03 Ready <none> 159m v1.23.15 10.0.0.203 <none> CentOS Linux 7 (Core) 4.19.12-1.el7.elrepo.x86_64 docker://19.3.15 |

| node/k8s-node01 Ready <none> 100m v1.23.15 10.0.0.204 <none> CentOS Linux 7 (Core) 4.19.12-1.el7.elrepo.x86_64 docker://19.3.15 |

| node/k8s-node02 Ready <none> 100m v1.23.15 10.0.0.205 <none> CentOS Linux 7 (Core) 4.19.12-1.el7.elrepo.x86_64 docker://19.3.15 |

| |

| sed -i 's#etcd_endpoints: "http://<ETCD_IP>:<ETCD_PORT>"#etcd_endpoints: "https://10.0.0.201:2379,https://10.0.0.202:2379,https://10.0.0.203:2379"#g' calico-etcd.yaml |

| |

| ETCD_CA=`cat /etc/kubernetes/pki/etcd/etcd-ca.pem | base64 | tr -d '\n'` |

| ETCD_CERT=`cat /etc/kubernetes/pki/etcd/etcd.pem | base64 | tr -d '\n'` |

| ETCD_KEY=`cat /etc/kubernetes/pki/etcd/etcd-key.pem | base64 | tr -d '\n'` |

| |

| sed -i "s@# etcd-key: null@etcd-key: ${ETCD_KEY}@g; s@# etcd-cert: null@etcd-cert: ${ETCD_CERT}@g; s@# etcd-ca: null@etcd-ca: ${ETCD_CA}@g" calico-etcd.yaml |

| |

| sed -i 's#etcd_ca: ""#etcd_ca: "/calico-secrets/etcd-ca"#g; s#etcd_cert: ""#etcd_cert: "/calico-secrets/etcd-cert"#g; s#etcd_key: "" #etcd_key: "/calico-secrets/etcd-key" #g' calico-etcd.yaml |

| |

| |

| POD_SUBNET="172.30.110.0/24" |

| |

| |

| sed -i 's@# - name: CALICO_IPV4POOL_CIDR@- name: CALICO_IPV4POOL_CIDR@g; s@# value: "192.168.0.0/16"@ value: '"${POD_SUBNET}"'@g' calico-etcd.yaml |

| |

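| Note: |

| None of the steps above can be skipped; omitting any of them causes errors. Fill in the certificate contents of your own cluster; the values shown here will not work for you. The "etcd-key", "etcd-cert" and "etcd-ca" fields must be set manually. |

| - etcd certificate file path: |

| /etc/kubernetes/pki/etcd/ |

| |

| - Base64-encode the file contents: |

| cat <file> | base64 -w 0 |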
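The substitution and encoding steps above can be checked before the manifest is applied. The following is a minimal sketch under stated assumptions: the `.pem` file and the YAML fragment are dummy stand-ins created in a temporary directory, and only the `etcd-ca` field is shown. It encodes the file, fills the placeholder, then decodes the value back out of the manifest and compares it with the source file.

```shell
set -eu

# Stand-in inputs (assumptions): a dummy "certificate" and a manifest fragment.
workdir=$(mktemp -d)
printf 'dummy-ca\n' > "$workdir/etcd-ca.pem"   # real file: /etc/kubernetes/pki/etcd/etcd-ca.pem
cat > "$workdir/calico-etcd.yaml" <<'EOF'
data:
  # etcd-ca: null
EOF

# Encode on a single line; piping through tr gives the same result as GNU `base64 -w 0`.
ETCD_CA=$(base64 < "$workdir/etcd-ca.pem" | tr -d '\n')

# '@' is used as the sed delimiter because base64 output can contain '/' but never '@'.
sed "s@# etcd-ca: null@etcd-ca: ${ETCD_CA}@" \
  "$workdir/calico-etcd.yaml" > "$workdir/calico-etcd.filled.yaml"

# Round trip: the value stored in the manifest must decode to the original file.
filled_value=$(awk '$1 == "etcd-ca:" {print $2}' "$workdir/calico-etcd.filled.yaml")
printf '%s' "$filled_value" | base64 -d | cmp -s - "$workdir/etcd-ca.pem" \
  && roundtrip=ok || roundtrip=mismatch
echo "etcd-ca round trip: $roundtrip"
```

The same check would apply to `etcd-cert` and `etcd-key`. A mismatch usually means the value was copied from another cluster or was wrapped across lines.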

Troubleshooting: one pod stays in Pending

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/calico] |

| Events: |

| Type Reason Age From Message |

| ---- ------ ---- ---- ------- |

| Normal Scheduled 10m default-scheduler Successfully assigned kube-system/calico-node-7jkvl to k8s-master03 |

| |

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/calico] |

| NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES |

| pod/calico-kube-controllers-9d779496f-6ctzf 1/1 Running 0 21m 10.0.0.201 k8s-master01 <none> <none> |

| pod/calico-node-2whwh 1/1 Running 0 21m 10.0.0.202 k8s-master02 <none> <none> |

| pod/calico-node-7jkvl 0/1 Pending 0 13m <none> k8s-master03 <none> <none> |

| pod/calico-node-h4964 1/1 Running 2 (20m ago) 21m 10.0.0.205 k8s-node02 <none> <none> |

| pod/calico-node-k5fxc 1/1 Running 0 21m 10.0.0.201 k8s-master01 <none> <none> |

| pod/calico-node-tbkbc 1/1 Running 2 (20m ago) 21m 10.0.0.204 k8s-node01 <none> <none> |

| |

| NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME |

| node/k8s-master01 Ready <none> 151m v1.23.15 10.0.0.201 <none> CentOS Linux 7 (Core) 4.19.12-1.el7.elrepo.x86_64 docker://19.3.15 |

| node/k8s-master02 Ready <none> 151m v1.23.15 10.0.0.202 <none> CentOS Linux 7 (Core) 4.19.12-1.el7.elrepo.x86_64 docker://19.3.15 |

| node/k8s-master03 NotReady <none> 151m v1.23.15 10.0.0.203 <none> CentOS Linux 7 (Core) 4.19.12-1.el7.elrepo.x86_64 docker://19.3.15 |

| node/k8s-node01 Ready <none> 93m v1.23.15 10.0.0.204 <none> CentOS Linux 7 (Core) 4.19.12-1.el7.elrepo.x86_64 docker://19.3.15 |

| node/k8s-node02 Ready <none> 93m v1.23.15 10.0.0.205 <none> CentOS Linux 7 (Core) 4.19.12-1.el7.elrepo.x86_64 docker://19.3.15 |

| |

| |

| Rebooted this server (k8s-master03, the NotReady node) |

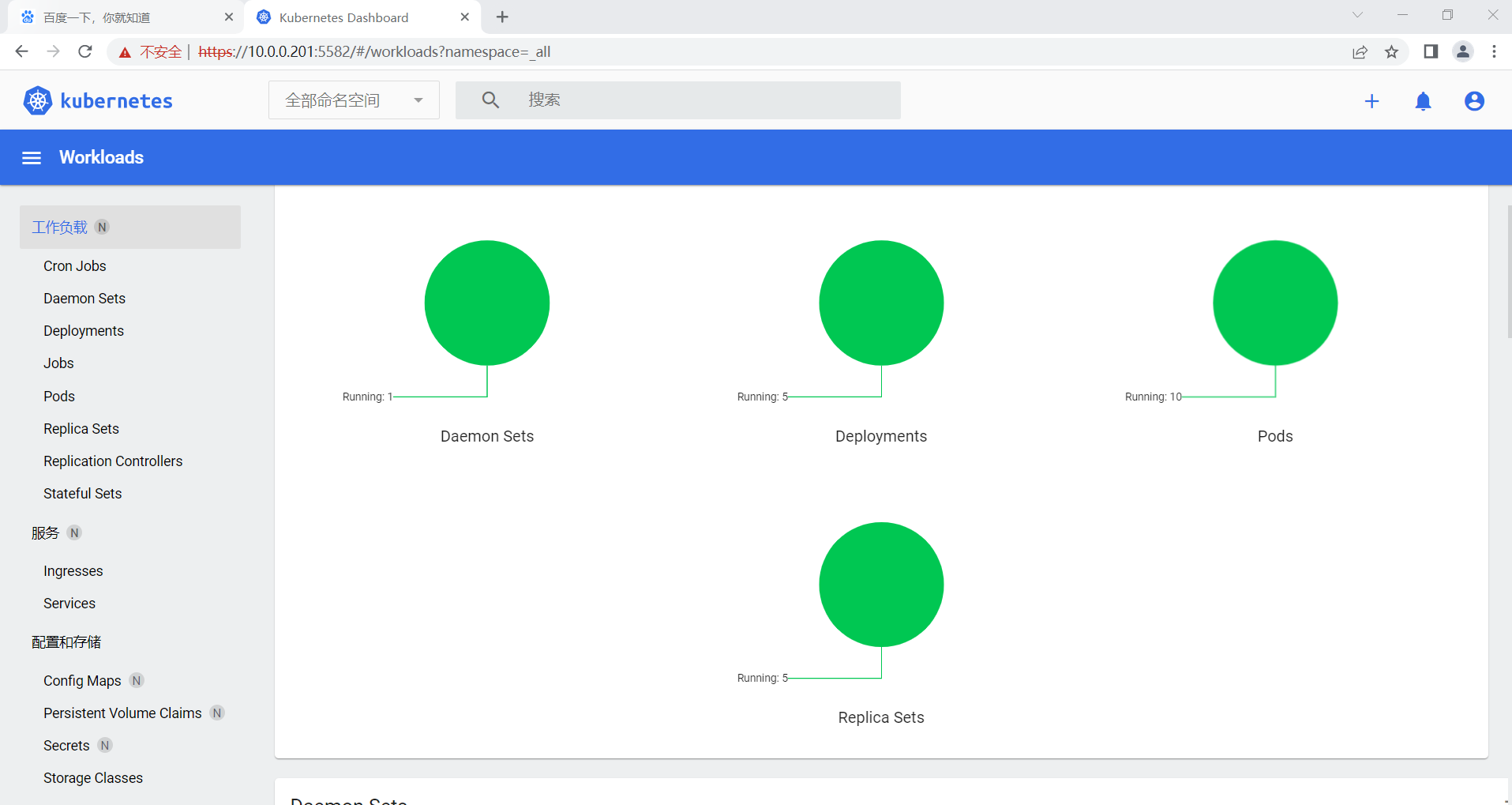
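In the listing above, the Pending pod was scheduled onto the node reported as NotReady. Before rebooting, it may help to confirm which nodes are affected and look at the kubelet on that host. A minimal sketch follows. The helper name `not_ready_nodes` is hypothetical, and the sample text is a trimmed stand-in for live `kubectl get nodes --no-headers` output.

```shell
# Print the names of nodes whose STATUS column does not start with "Ready".
# Expects `kubectl get nodes --no-headers` style input: NAME STATUS ROLES AGE VERSION ...
not_ready_nodes() {
  awk '$2 !~ /^Ready/ {print $1}'
}

# Sample input modeled on the node listing above (stand-in for live output).
sample='k8s-master02 Ready <none> 151m v1.23.15
k8s-master03 NotReady <none> 151m v1.23.15
k8s-node01 Ready <none> 93m v1.23.15'

bad_nodes=$(printf '%s\n' "$sample" | not_ready_nodes)
echo "NotReady nodes: $bad_nodes"

# On a live cluster (not executed in this sketch):
#   kubectl get nodes --no-headers | not_ready_nodes
#   kubectl describe node k8s-master03          # inspect Conditions and Events
#   systemctl status kubelet docker             # run on the affected node
#   journalctl -u kubelet --since "30 min ago"  # recent kubelet logs on that node
```

If the kubelet logs point at a specific cause, restarting only the affected service may be enough; a full reboot, as done here, is the blunter option.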

XI. Deploying add-on components

1. Deploy CoreDNS

| (1) Deploy CoreDNS |

| [root@k8s-master01 ~/softwares] |

| [root@k8s-master01 ~/softwares/07-k8s-ha-install/CoreDNS] |

| |

| |

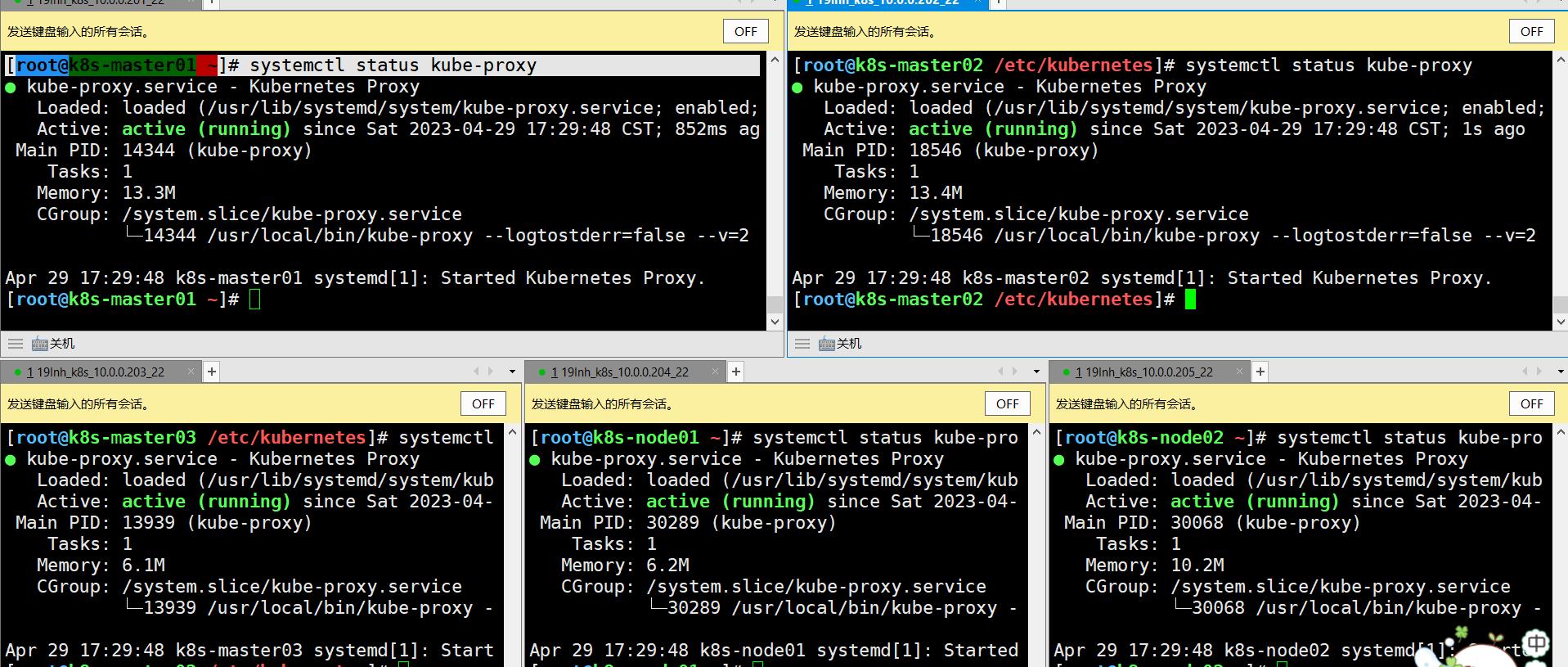

| |