ELK Distributed Logging in Practice

I. Introduction to ELK Distributed Logging

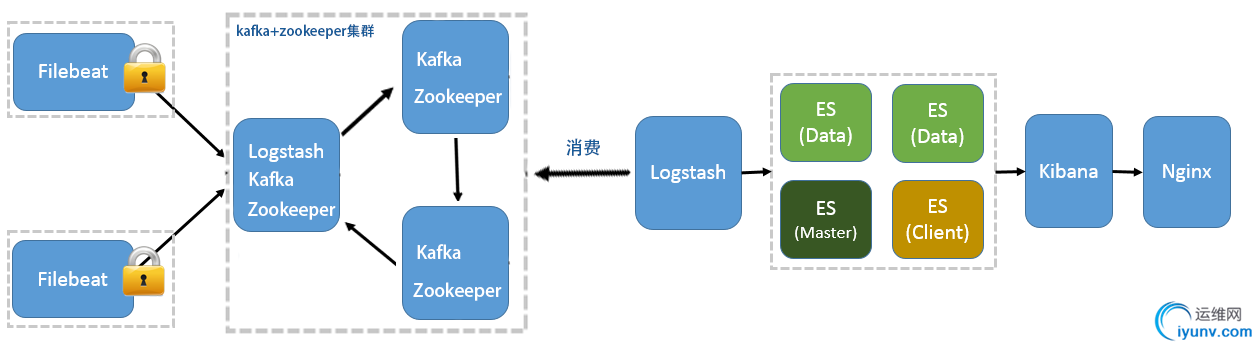

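This walkthrough is based on ELK 5.5.2. The distributed logging components are installed, deployed, and configured following the layout in the architecture diagram.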

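When ELK needs to monitor application logs, install the Filebeat log shipper on each server where the application is deployed. Filebeat is configured to read the local log files and send the log messages to the Kafka cluster.

A separate intermediate log server runs Logstash, which consumes the log messages directly from Kafka and pushes the log data into Elasticsearch, where Kibana is used to display it.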

II. Elasticsearch Configuration

1.Elasticsearch、Kibana安装配置,可见本人另一篇博文

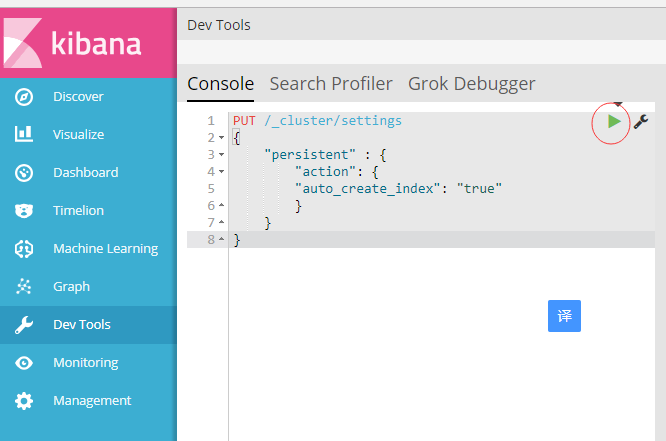

https://www.cnblogs.com/woodylau/p/9474848.html 2.创建logstash日志前,需先设置自动创建索引(根据第一步Elasticsearch、Kibana安装成功后,点击Kibana Devtools菜单项,输入下文代码执行)

    PUT /_cluster/settings
    {
      "persistent": {
        "action": {
          "auto_create_index": "true"
        }
      }
    }
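For readers who would rather script this setting than paste it into Dev Tools, the same request can be built with Python's standard library. This is only a sketch: the base URL `http://localhost:9200` and the helper name `build_auto_create_request` are assumptions for illustration, and a cluster secured with X-Pack would also need credentials.

```python
import json
import urllib.request


def build_auto_create_request(base_url="http://localhost:9200"):
    """Build (but do not send) the PUT /_cluster/settings request.

    base_url is an assumed address; point it at one of your Elasticsearch nodes.
    """
    body = {"persistent": {"action": {"auto_create_index": "true"}}}
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/_cluster/settings",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="PUT",
    )


request = build_auto_create_request()
print(request.get_method(), request.full_url)
# To actually apply the setting against a running cluster:
#     with urllib.request.urlopen(request) as response:
#         print(response.read().decode("utf-8"))
```

The request is deliberately built without being sent, so the snippet can be inspected safely before it touches a cluster.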

III. Filebeat Installation and Configuration

1. Download Filebeat 5.5.2

    wget https://artifacts.elastic.co/downloads/beats/filebeat/filebeat-5.5.2-linux-x86_64.tar.gz

2. Extract filebeat-5.5.2-linux-x86_64.tar.gz into /tools/elk/

    tar -zxvf filebeat-5.5.2-linux-x86_64.tar.gz -C /tools/elk/
    cd /tools/elk/
    mv filebeat-5.5.2-linux-x86_64 filebeat-5.5.2

3. Edit the filebeat.yml file

    cd /tools/elk/filebeat-5.5.2
    vi filebeat.yml

4. Replace the contents of filebeat.yml with the following

    filebeat.prospectors:
    - input_type: log
      paths:
        # application info log
        - /data/applog/app.info.log
      encoding: utf-8
      document_type: app-info
      # extra field, used by Logstash to route events to different indices
      fields:
        type: app-info
      # must be true for Logstash to read the extra field at the top level
      fields_under_root: true
      scan_frequency: 10s
      harvester_buffer_size: 16384
      max_bytes: 10485760
      tail_files: true

    - input_type: log
      paths:
        # application error log
        - /data/applog/app.error.log
      encoding: utf-8
      document_type: app-error
      fields:
        type: app-error
      fields_under_root: true
      scan_frequency: 10s
      harvester_buffer_size: 16384
      max_bytes: 10485760
      tail_files: true

    # Filebeat ships the log data it reads to the Kafka cluster
    output.kafka:
      enabled: true
      hosts: ["192.168.20.21:9092","192.168.20.22:9092","192.168.20.23:9092"]
      topic: elk-%{[type]}
      worker: 2
      max_retries: 3
      bulk_max_size: 2048
      timeout: 30s
      broker_timeout: 10s
      channel_buffer_size: 256
      keep_alive: 60
      compression: gzip
      max_message_bytes: 1000000
      required_acks: 1
      client_id: beats
      partition.hash:
        reachable_only: true
    logging.to_files: true
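To make the `topic: elk-%{[type]}` setting concrete, here is a rough Python model of how such a format string could be expanded per event. It is not Filebeat's implementation; `resolve_topic` and the sample events are made up for illustration. Because `fields_under_root: true` places `type` at the top level of each event, the two prospectors end up on separate topics, both of which match the `elk-.*` pattern that Logstash subscribes to later in this post.

```python
import re

# Matches Beats-style field references such as %{[type]}
FIELD_REF = re.compile(r"%\{\[([^\]]+)\]\}")


def resolve_topic(template, event):
    """Replace each %{[field]} reference with the event's top-level field value."""
    return FIELD_REF.sub(lambda m: str(event[m.group(1)]), template)


# Hypothetical events, shaped like what the two prospectors would emit
info_event = {"type": "app-info", "message": "order created"}
error_event = {"type": "app-error", "message": "payment failed"}

for event in (info_event, error_event):
    topic = resolve_topic("elk-%{[type]}", event)
    # Kafka pattern subscriptions match against the whole topic name
    subscribed = re.fullmatch(r"elk-.*", topic) is not None
    print(topic, subscribed)
```

Keeping one topic per log type means a consumer can later subscribe to a single type without changing anything on the Filebeat side.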

5. Start Filebeat

    cd /tools/elk/filebeat-5.5.2
    # start Filebeat in the background, using the filebeat.yml edited in steps 3 and 4
    nohup ./filebeat -c ./filebeat.yml &

IV. Logstash Installation and Configuration

1. Download Logstash 5.5.2

    wget https://artifacts.elastic.co/downloads/logstash/logstash-5.5.2.tar.gz

2. Extract logstash-5.5.2.tar.gz into /tools/elk/. The archive unpacks to logstash-5.5.2, so no rename is needed.

    tar -zxvf logstash-5.5.2.tar.gz -C /tools/elk/
    cd /tools/elk/

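3. Install the X-Pack monitoring plugin (optional; if X-Pack is installed on Elasticsearch, it must be installed on Logstash as well)

    cd /tools/elk/logstash-5.5.2/bin
    ./logstash-plugin install x-pack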

4. Create and edit the logstash_kafka.conf file

    cd /tools/elk/logstash-5.5.2/config
    vi logstash_kafka.conf

5. Put the following configuration in logstash_kafka.conf

    input {
      kafka {
        codec => "json"
        topics_pattern => "elk-.*"
        bootstrap_servers => "192.168.20.21:9092,192.168.20.22:9092,192.168.20.23:9092"
        auto_offset_reset => "latest"
        group_id => "logstash-g1"
      }
    }

    filter {
      # Drop non-business events, i.e. those with no traceId
      if ([message] =~ "traceId=null") {
        drop {}
      }
    }

    output {
      elasticsearch {
        # Logstash output to Elasticsearch
        hosts => ["192.168.20.21:9200","192.168.20.22:9200","192.168.20.23:9200"]
        # type is the value of the extra field set in Filebeat
        index => "logstash-%{type}-%{+YYYY.MM.dd}"
        document_type => "%{type}"
        flush_size => 20000
        idle_flush_time => 10
        sniffing => true
        template_overwrite => false
        # If X-Pack is installed on Elasticsearch, a username and password are required
        user => "elastic"
        password => "elastic"
      }
    }
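This pipeline can be read as three steps: decode the JSON message from Kafka, drop events whose message contains `traceId=null`, and derive the index name from `type` and the event timestamp. The Python sketch below mimics that flow for a single message. It is an illustration only, not how Logstash is implemented; the function name `route` and the sample messages are assumptions, and real `@timestamp` handling in Logstash is richer than this.

```python
import json
import re
from datetime import datetime


def route(raw_message):
    """Return the target index for one Kafka message, or None if it is dropped."""
    event = json.loads(raw_message)  # mirrors: codec => "json"

    # mirrors: if [message] =~ "traceId=null" { drop {} }
    if re.search("traceId=null", event.get("message", "")):
        return None

    # mirrors: index => "logstash-%{type}-%{+YYYY.MM.dd}"
    # The date part comes from @timestamp, which is expressed in UTC.
    stamp = datetime.strptime(event["@timestamp"], "%Y-%m-%dT%H:%M:%S.%fZ")
    return "logstash-{}-{}".format(event["type"], stamp.strftime("%Y.%m.%d"))


# Hypothetical messages in the JSON shape that arrives from Kafka
kept = json.dumps({
    "@timestamp": "2018-08-15T02:30:00.000Z",
    "type": "app-info",
    "message": "traceId=abc123 order created",
})
dropped = json.dumps({
    "@timestamp": "2018-08-15T02:30:01.000Z",
    "type": "app-info",
    "message": "traceId=null heartbeat",
})

print(route(kept))
print(route(dropped))
```

Since the index name includes both the type and the day, each log type gets its own daily index, which is what the `logstash-*` pattern in Kibana later picks up.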

6. Start Logstash

    cd /tools/elk/logstash-5.5.2
    # start Logstash in the background
    nohup /tools/elk/logstash-5.5.2/bin/logstash -f /tools/elk/logstash-5.5.2/config/logstash_kafka.conf &

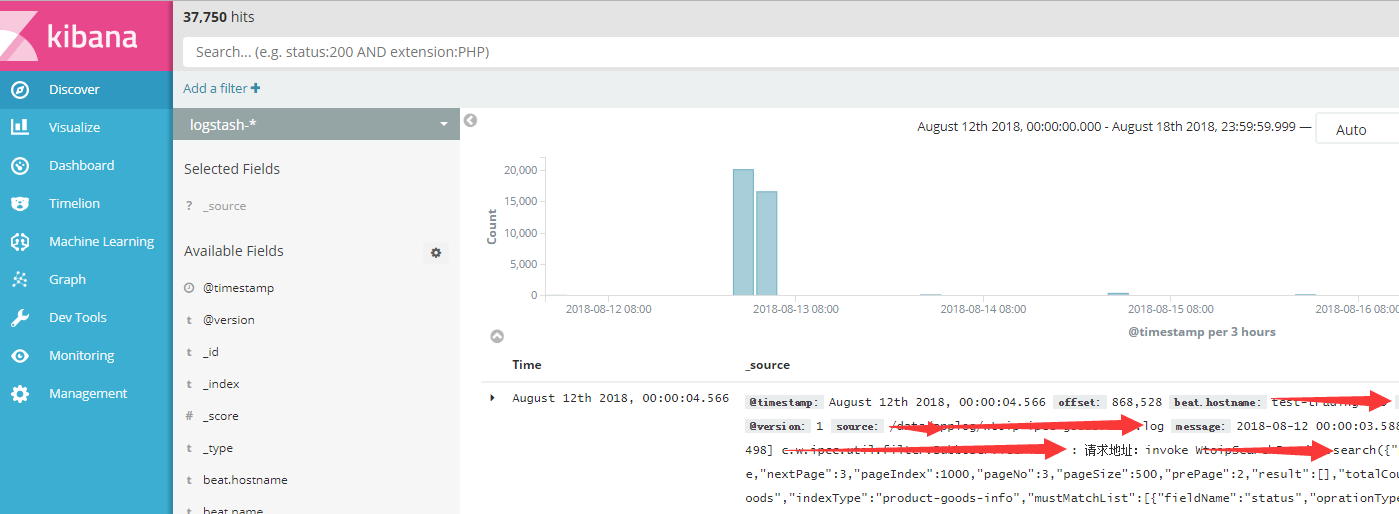

V. Verifying the ELK Setup

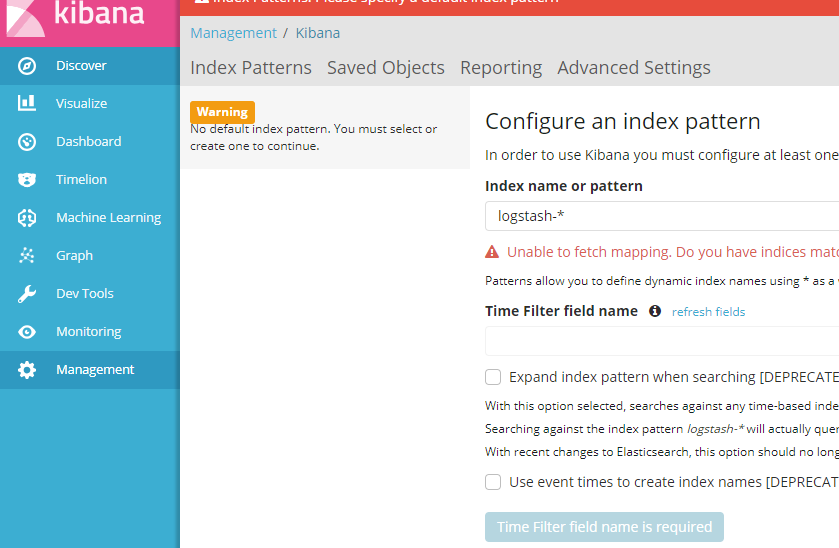

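1. Open Kibana at http://localhost:5601 and click the Discover menu. Configure the index pattern by entering logstash-*, then click the blue button shown in the screenshot to create it. The application logs collected by Logstash can then be browsed in Discover.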
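As a quick illustration of what the `logstash-*` index pattern covers, the sketch below applies the same wildcard to a few index names using Python's `fnmatch` module. The index names are hypothetical examples that follow the `logstash-%{type}-%{+YYYY.MM.dd}` naming from the Logstash output; Kibana does its own matching against Elasticsearch, so this only models the wildcard.

```python
from fnmatch import fnmatchcase

# Hypothetical index names; the first two follow the Logstash output naming
indices = [
    "logstash-app-info-2018.08.15",
    "logstash-app-error-2018.08.15",
    ".kibana",
]

# Keep only the names that the wildcard pattern covers
matched = [name for name in indices if fnmatchcase(name, "logstash-*")]
print(matched)
```

A narrower pattern such as `logstash-app-error-*` would restrict Discover to the error logs only.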