A Complete Deployment Guide for Kubernetes 1.13

Preface:

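This is a detailed, end-to-end guide to deploying K8s. Kubernetes versions are very sensitive to operating-system versions, so check the version compatibility information before you deploy.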

Releases and changelogs: https://github.com/kubernetes/kubernetes/releases

1. Preparing the lab environment

Preparing the server VMs

| IP address | 192.168.1.11 | 192.168.1.12 |
|---|---|---|
| Node role | master and etcd | worker |
| CPU | >= 2 cores | >= 2 cores |
| Memory | >= 2 GB | >= 2 GB |
| Hostname | master | node |
| Node count | 1 | 1 |

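For this lab I created the VMs with VMware Workstation. Each VM has 4 CPU cores, 4 GB of memory and a 100 GB disk. Both nodes must be able to reach the internet. Size your own VMs to fit your resources, but stay at or above the minimums recommended above.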

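Note: the hostname must not contain uppercase letters. For example, Master is not allowed. kubeadm uses the hostname as the Kubernetes node name, and node names must be lowercase DNS names.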

2. Software versions

These are the software versions used in this guide:

Operating system: CentOS 7.1804

Kubernetes: v1.13

Docker: 18.06.1-ce

kubeadm: v1.13

kubectl: v1.13

kubelet: v1.13

Note: please match these software versions as closely as you can. With open-source software, versions matter a great deal.

3. Basic environment configuration

3.1 Configure the node hostnames

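$ hostnamectl set-hostname master    # on the master (192.168.1.11)

$ hostnamectl set-hostname node      # on the worker (192.168.1.12)

Note: set the matching hostname on each machine. Again, do not use uppercase letters.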

3.2 Configure /etc/hosts on each node

Note: the hosts file is very important. Run the following on every node:

$ vi /etc/hosts

192.168.1.11 master

192.168.1.12 node
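The sketch below does the same edit without opening an editor, so it is easy to repeat on every node. add_host_entry is a hypothetical helper, not part of any tool. It appends an entry only when the hostname does not already appear on an uncommented line, so running it twice does not create duplicates.

```shell
# Append "IP HOSTNAME" to a hosts file unless HOSTNAME is already listed on an
# uncommented line, so the step can be repeated safely on every node.
add_host_entry() {
    ip=$1
    name=$2
    file=${3:-/etc/hosts}   # defaults to /etc/hosts; pass a temp file to try it out
    if awk -v n="$name" '
        $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$file" 2>/dev/null
    then
        return 0            # hostname already present: nothing to do
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}
```

As root on each node, run: add_host_entry 192.168.1.11 master; add_host_entry 192.168.1.12 node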

3.3 Disable the firewall, SELinux and swap

Stop the firewall

$ systemctl stop firewalld

$ systemctl disable firewalld

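Disable SELinux. setenforce 0 switches to permissive mode immediately. The config-file change takes full effect after a reboot.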

$ setenforce 0

$ sed -i "s/^SELINUX=enforcing/SELINUX=disabled/g" /etc/sysconfig/selinux

$ sed -i "s/^SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config

$ sed -i "s/^SELINUX=permissive/SELINUX=disabled/g" /etc/sysconfig/selinux

$ sed -i "s/^SELINUX=permissive/SELINUX=disabled/g" /etc/selinux/config

Disable swap. By default the kubelet refuses to start while swap is enabled.

$ swapoff -a

$ sed -i 's/.*swap.*/#&/' /etc/fstab

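Load the br_netfilter kernel module. The net.bridge.* sysctl settings below exist only after this module is loaded. modprobe does not persist across reboots; to load the module at boot, list it in a file under /etc/modules-load.d/.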

$ modprobe br_netfilter

3.4 Configure kernel parameters

Configure the sysctl kernel parameters

$ cat > /etc/sysctl.d/k8s.conf <<EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

Apply the file

$ sysctl -p /etc/sysctl.d/k8s.conf
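It helps to confirm that the file really sets both bridge keys to 1. Below is a small sketch. sysctl_file_has_ones is a hypothetical helper name. It only parses simple key = value lines, and when a key appears more than once the last occurrence wins, because sysctl -p also applies the lines in order.

```shell
# Return 0 only if every given sysctl key is set to 1 in the given file.
# Reads "key = value" lines; spaces around "=" are optional.
sysctl_file_has_ones() {
    file=$1
    shift
    for key in "$@"; do
        val=$(awk -F '=' -v k="$key" '
            { gsub(/[[:space:]]/, "", $1); gsub(/[[:space:]]/, "", $2) }
            $1 == k { v = $2 }          # keep the last value seen for the key
            END { print v }' "$file")
        [ "$val" = "1" ] || return 1
    done
    return 0
}
```

For example: sysctl_file_has_ones /etc/sysctl.d/k8s.conf net.bridge.bridge-nf-call-iptables net.bridge.bridge-nf-call-ip6tables && echo ok. To read the running value, use sysctl -n net.bridge.bridge-nf-call-iptables; it should print 1.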

Raise the Linux resource limits. Increase the ulimit maximums for open files and processes, and the default limits for services managed by systemd.

$ echo "* soft nofile 655360" >> /etc/security/limits.conf

$ echo "* hard nofile 655360" >> /etc/security/limits.conf

$ echo "* soft nproc 655360" >> /etc/security/limits.conf

$ echo "* hard nproc 655360" >> /etc/security/limits.conf

$ echo "* soft memlock unlimited" >> /etc/security/limits.conf

$ echo "* hard memlock unlimited" >> /etc/security/limits.conf

$ echo "DefaultLimitNOFILE=1024000" >> /etc/systemd/system.conf

$ echo "DefaultLimitNPROC=1024000" >> /etc/systemd/system.conf
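Each time the echo ... >> commands above run, they append another copy of every line. Below is a minimal idempotent variant that checks for an exact line match first. append_once is a hypothetical helper name.

```shell
# Append a line to a file only if an identical line is not already present.
append_once() {
    line=$1
    file=$2
    # -x: whole-line match; -F: fixed string, so "*" is not treated as a regex
    grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}
```

For example: append_once '* soft nofile 655360' /etc/security/limits.conf. The defaults in /etc/systemd/system.conf take effect only after systemctl daemon-reexec or a reboot. The reboot in step 8 covers this.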

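4. Configure the CentOS YUM repositories

Configure mirrors inside China for the Tencent base repository, the EPEL repository and the Kubernetes repository. The Aliyun base repo is fetched with curl first only so that wget can be installed.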

$ rm -rf /etc/yum.repos.d/*

$ curl -o /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

$ yum install -y wget

$ wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.cloud.tencent.com/repo/centos7_base.repo

$ wget -O /etc/yum.repos.d/epel.repo http://mirrors.cloud.tencent.com/repo/epel-7.repo

$ yum clean all && yum makecache

Configure a Kubernetes repository mirror inside China

$ vi /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
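You can also write the same repository definition with a here-document instead of editing it in vi. This is a sketch: write_k8s_repo is a hypothetical function name, and the contents are copied from the file above.

```shell
# Write the Kubernetes yum repo definition non-interactively.
# The target path is a parameter so the function can be tried on a temp file.
write_k8s_repo() {
    target=${1:-/etc/yum.repos.d/kubernetes.repo}
    cat > "$target" <<'EOF'
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=1
repo_gpgcheck=1
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF
}
```

Run write_k8s_repo as root, then run yum makecache to load the new repository.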

5. Install dependency packages

Install a few dependency packages now so they are available when needed later.

$ yum install -y conntrack ipvsadm ipset jq sysstat curl iptables libseccomp bash-completion yum-utils device-mapper-persistent-data lvm2 net-tools conntrack-tools vim libtool-ltdl

6. Time synchronization

Kubernetes is distributed, so the system clocks of all nodes must stay in sync.

$ yum install -y chrony

$ systemctl enable chronyd.service && systemctl start chronyd.service && systemctl status chronyd.service

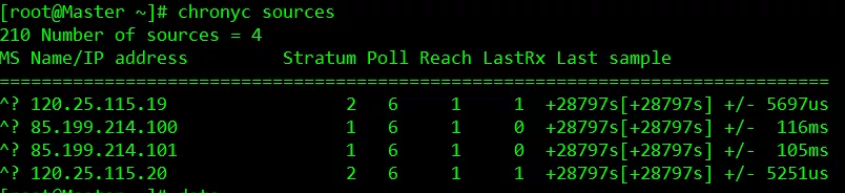

$ chronyc sources

Run date to check the system time. It is synchronized after a short while.
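To compare clocks numerically instead of by eye, the sketch below checks the difference between two Unix timestamps against a tolerance. clock_skew_ok and its default tolerance of 1 second are assumptions for illustration. They are not values Kubernetes requires.

```shell
# Succeed if two Unix timestamps (seconds) differ by at most MAX seconds.
clock_skew_ok() {
    a=$1
    b=$2
    max=${3:-1}            # tolerated skew in seconds (assumed default)
    diff=$((a - b))
    if [ "$diff" -lt 0 ]; then
        diff=$((0 - diff)) # absolute value
    fi
    [ "$diff" -le "$max" ]
}
```

Once SSH trust (step 7) is configured, run: clock_skew_ok "$(date +%s)" "$(ssh node date +%s)" 2 && echo in-sync. The SSH round trip adds a little delay, so allow a small tolerance.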

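7. Configure passwordless SSH between nodes

With SSH trust configured, the nodes can log in to each other without passwords, which makes later automated deployment easier.

$ ssh-keygen # run on every machine and press Enter at each prompt

$ ssh-copy-id node # on the master, copy the public key to the other node; type yes and the password when prompted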
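With more than one worker, you can loop the ssh-copy-id call over the hostnames in /etc/hosts. This sketch assumes all cluster nodes share the 192.168.1. prefix used in this guide. cluster_hosts is a hypothetical helper that skips comment lines and other addresses such as localhost.

```shell
# Print the hostnames in a hosts-format file whose IP starts with PREFIX.
cluster_hosts() {
    file=$1
    prefix=$2
    awk -v p="$prefix" '$1 !~ /^#/ && index($1, p) == 1 { print $2 }' "$file"
}
```

On the master, run: for h in $(cluster_hosts /etc/hosts 192.168.1.); do ssh-copy-id "$h"; done. The loop also includes the master itself, which is harmless.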

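8. Check the initialized environment

- Reboot: after completing all of the steps above, it is best to reboot once

- ping each node's hostname to check connectivity

- ssh to the other node's hostname to check that passwordless login works

- run date to check that each node's time is correct

- run ulimit -Hn to check that the maximum number of open files is 655360

- run cat /etc/sysconfig/selinux | grep disabled to check that SELinux is disabled on every node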
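Two of the checks above can also be run against the configuration files, which gives the same answer before and after the reboot. Below is a minimal sketch. selinux_disabled_in and fstab_swap_off are hypothetical helper names.

```shell
# SELinux: the config file must contain SELINUX=disabled.
selinux_disabled_in() {
    grep -q '^SELINUX=disabled$' "$1"
}

# Swap: no uncommented fstab line may still have filesystem type "swap".
fstab_swap_off() {
    ! awk '$1 !~ /^#/ && $3 == "swap"' "$1" | grep -q .
}
```

For example: selinux_disabled_in /etc/selinux/config && fstab_swap_off /etc/fstab && [ "$(ulimit -Hn)" -eq 655360 ] && echo ok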

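II. Installing Docker

Kubernetes is a PaaS platform for container scheduling and orchestration, so Docker is indispensable. The newest Docker version validated with Kubernetes 1.13 is 18.06.1, so we install docker-ce 18.06.1.

See the Kubernetes changelog (the v1.12.3 section of the 1.12 changelog): https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG-1.12.md#v1123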

1. Remove old Docker versions

$ yum remove -y docker docker-ce docker-common docker-selinux docker-engine

2. Add the Docker yum repository

$ yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

3. List the available Docker versions

$ yum list docker-ce --showduplicates | sort -r

4. Install Docker 18.06.1

$ yum install -y docker-ce-18.06.1.ce-3.el7

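5. Configure a registry mirror (accelerator). The address below is the author's own Aliyun accelerator; you can replace it with your own. The directory /etc/docker may not exist before Docker's first start, so create it first. Docker is restarted in step 6.

$ mkdir -p /etc/docker

$ vi /etc/docker/daemon.json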

{

"registry-mirrors": ["https://q2hy3fzi.mirror.aliyuncs.com"]

}
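You can also write the same daemon.json without an editor. In this sketch write_daemon_json is a hypothetical helper. The mirror URL is a parameter because each Aliyun account gets its own accelerator address. The function also creates the parent directory if it is missing.

```shell
# Write daemon.json with a single registry mirror.
write_daemon_json() {
    mirror=$1
    target=${2:-/etc/docker/daemon.json}
    mkdir -p "$(dirname "$target")"
    cat > "$target" <<EOF
{
  "registry-mirrors": ["$mirror"]
}
EOF
}
```

For example, run write_daemon_json https://<your-id>.mirror.aliyuncs.com, then restart Docker as in step 6. docker info shows the configured address under Registry Mirrors.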

6. Start Docker

$ systemctl daemon-reload && systemctl restart docker && systemctl enable docker && systemctl status docker

Check the Docker version

$ docker --version

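III. Installing kubeadm, kubelet and kubectl

Install these on every node, including the worker node.

1. Tool overview

• kubeadm: the command used to bootstrap the cluster

• kubelet: the component that runs on every machine in the cluster and manages the lifecycle of pods and containers

• kubectl: the command-line tool for managing the cluster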

2. Install with yum

Install the tools

$ yum install -y kubelet-1.13.2 kubeadm-1.13.2 kubectl-1.13.2 kubernetes-cni-0.6.0

Start kubelet

$ systemctl enable kubelet && systemctl start kubelet

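Note: for now the kubelet service keeps failing to start. Ignore it. It restarts in a loop until kubeadm init (or kubeadm join) generates its configuration.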
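Versions matter a lot here, so a quick consistency check on the three tools is worthwhile. The sketch below reduces each version string to major.minor and compares them. minor_of and same_minor are hypothetical helper names.

```shell
# Reduce "Kubernetes v1.13.2", "Client Version: v1.13.2" or "v1.13.2" to "1.13".
minor_of() {
    printf '%s\n' "$1" | grep -oE '[0-9]+\.[0-9]+\.[0-9]+' | head -n 1 | cut -d. -f1,2
}

# Succeed only if every argument has the same major.minor as the first one.
same_minor() {
    want=$(minor_of "$1")
    [ -n "$want" ] || return 1
    for v in "$@"; do
        [ "$(minor_of "$v")" = "$want" ] || return 1
    done
    return 0
}
```

For example: same_minor "$(kubeadm version -o short)" "$(kubelet --version)" "$(kubectl version --client --short)" && echo consistent. kubelet --version prints a string such as Kubernetes v1.13.2, and the helper accepts that format.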

IV. Preparing the images

1. Get the list of images to download

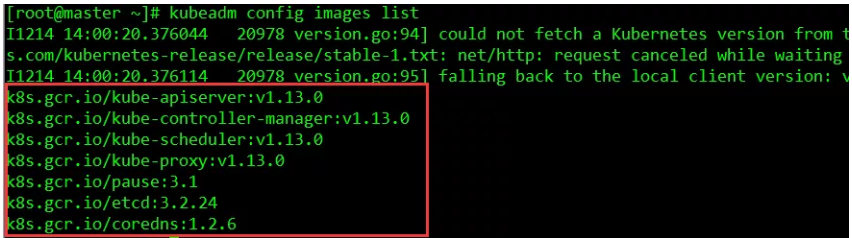

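Before kubeadm can set up Kubernetes, the base images it runs on must be downloaded, for example kube-proxy, kube-apiserver and kube-controller-manager. How do we find out which images are needed? Starting with kubeadm v1.11 there is a kubeadm config print-default command, deprecated in v1.13 in favor of kubeadm config print init-defaults, which is used below. It writes kubeadm's default configuration to a file, and that file contains the base configuration for the matching Kubernetes version. We can also run kubeadm config images list to see the list of required images.

Note: starting with Kubernetes v1.12, the image names in this list no longer carry an amd64, arm, arm64 or ppc64le suffix.

1.1 Generate a default kubeadm.conf file

This command generates a kubeadm.conf file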

$ kubeadm config print init-defaults > kubeadm.conf

1.2 Pulling images from behind the firewall (read carefully; the same approach works for later versions)

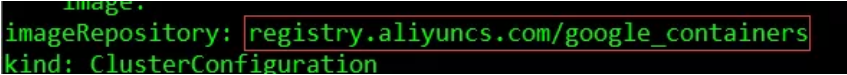

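Note that by default this configuration pulls images from Google's registry, k8s.gcr.io. So we change the address to a mirror inside China, for example Aliyun's. The new value contains /, so # is used as the sed delimiter:

$ sed -i "s#imageRepository: .*#imageRepository: registry.aliyuncs.com/google_containers#g" kubeadm.conf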

1.4 Download the required images

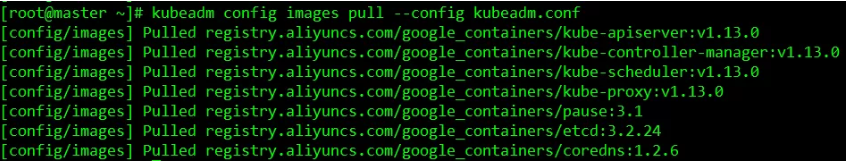

With kubeadm.conf modified, the following command automatically pulls the required images from the mirror inside China:

$ kubeadm config images pull --config kubeadm.conf

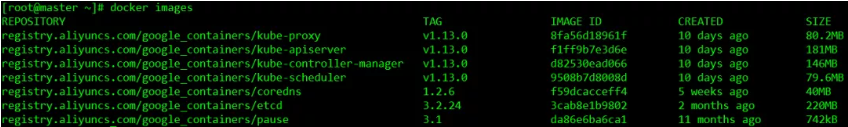

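This downloads the images that v1.13 needs. Run docker images to see the list of downloaded images.

Note: besides this method, you can set up your own image registry. You would first need to download the images for the right version, push them to your registry and then pull them from there. The method above is simpler.

1.5 Retag the images with docker tag

After the download, the images must be retagged so they all carry the k8s.gcr.io prefix. The images we pulled are named under registry.aliyuncs.com/google_containers. If they are not retagged to k8s.gcr.io, the later kubeadm installation runs into problems. The kubeadm init command used below does not pass kubeadm.conf, so kubeadm looks for images under the default k8s.gcr.io repository. Run the following commands to retag them: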

$ docker tag registry.aliyuncs.com/google_containers/kube-apiserver:v1.13.0 k8s.gcr.io/kube-apiserver:v1.13.0

$ docker tag registry.aliyuncs.com/google_containers/kube-controller-manager:v1.13.0 k8s.gcr.io/kube-controller-manager:v1.13.0

$ docker tag registry.aliyuncs.com/google_containers/kube-scheduler:v1.13.0 k8s.gcr.io/kube-scheduler:v1.13.0

$ docker tag registry.aliyuncs.com/google_containers/kube-proxy:v1.13.0 k8s.gcr.io/kube-proxy:v1.13.0

$ docker tag registry.aliyuncs.com/google_containers/pause:3.1 k8s.gcr.io/pause:3.1

$ docker tag registry.aliyuncs.com/google_containers/etcd:3.2.24 k8s.gcr.io/etcd:3.2.24

$ docker tag registry.aliyuncs.com/google_containers/coredns:1.2.6 k8s.gcr.io/coredns:1.2.6

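1.6 Remove the Aliyun-named tags with docker rmi

After retagging, remove the tags with the registry.aliyuncs.com prefix. The images are still referenced by their k8s.gcr.io tags, so docker rmi only removes these extra names and does not delete the image data: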

$ docker rmi registry.aliyuncs.com/google_containers/kube-apiserver:v1.13.0

$ docker rmi registry.aliyuncs.com/google_containers/kube-controller-manager:v1.13.0

$ docker rmi registry.aliyuncs.com/google_containers/kube-scheduler:v1.13.0

$ docker rmi registry.aliyuncs.com/google_containers/kube-proxy:v1.13.0

$ docker rmi registry.aliyuncs.com/google_containers/pause:3.1

$ docker rmi registry.aliyuncs.com/google_containers/etcd:3.2.24

$ docker rmi registry.aliyuncs.com/google_containers/coredns:1.2.6

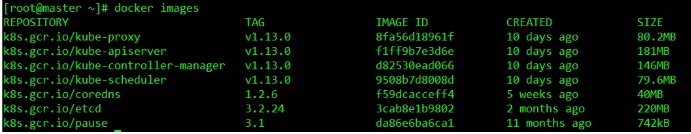

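1.7 View the list of downloaded images

Run docker images to view them. After processing, your list should contain the seven k8s.gcr.io images tagged above.

Note: you can put the operations above into a script and run them automatically. Repeat the same download and retag steps on the worker node, which needs at least the kube-proxy and pause images.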

[root@master ~]# cat docker.sh

#!/bin/bash

docker tag registry.aliyuncs.com/google_containers/kube-apiserver:v1.13.0 k8s.gcr.io/kube-apiserver:v1.13.0

docker tag registry.aliyuncs.com/google_containers/kube-controller-manager:v1.13.0 k8s.gcr.io/kube-controller-manager:v1.13.0

docker tag registry.aliyuncs.com/google_containers/kube-scheduler:v1.13.0 k8s.gcr.io/kube-scheduler:v1.13.0

docker tag registry.aliyuncs.com/google_containers/kube-proxy:v1.13.0 k8s.gcr.io/kube-proxy:v1.13.0

docker tag registry.aliyuncs.com/google_containers/pause:3.1 k8s.gcr.io/pause:3.1

docker tag registry.aliyuncs.com/google_containers/etcd:3.2.24 k8s.gcr.io/etcd:3.2.24

docker tag registry.aliyuncs.com/google_containers/coredns:1.2.6 k8s.gcr.io/coredns:1.2.6

docker rmi registry.aliyuncs.com/google_containers/kube-apiserver:v1.13.0

docker rmi registry.aliyuncs.com/google_containers/kube-controller-manager:v1.13.0

docker rmi registry.aliyuncs.com/google_containers/kube-scheduler:v1.13.0

docker rmi registry.aliyuncs.com/google_containers/kube-proxy:v1.13.0

docker rmi registry.aliyuncs.com/google_containers/pause:3.1

docker rmi registry.aliyuncs.com/google_containers/etcd:3.2.24

docker rmi registry.aliyuncs.com/google_containers/coredns:1.2.6
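The script repeats the same two commands for every image, so a list can drive it instead. In this sketch retag_commands (a hypothetical name) only prints the docker tag and docker rmi commands. That lets you review them before piping them to sh. MIRROR and TARGET default to the two registries used above.

```shell
# Print "docker tag" and "docker rmi" commands for each "name:tag" read from stdin.
MIRROR=${MIRROR:-registry.aliyuncs.com/google_containers}
TARGET=${TARGET:-k8s.gcr.io}

retag_commands() {
    while read -r image; do
        [ -n "$image" ] || continue     # skip blank lines
        echo "docker tag $MIRROR/$image $TARGET/$image"
        echo "docker rmi $MIRROR/$image"
    done
}
```

For example: printf '%s\n' kube-apiserver:v1.13.0 kube-controller-manager:v1.13.0 kube-scheduler:v1.13.0 kube-proxy:v1.13.0 pause:3.1 etcd:3.2.24 coredns:1.2.6 | retag_commands | sh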

V. Deploying the master node

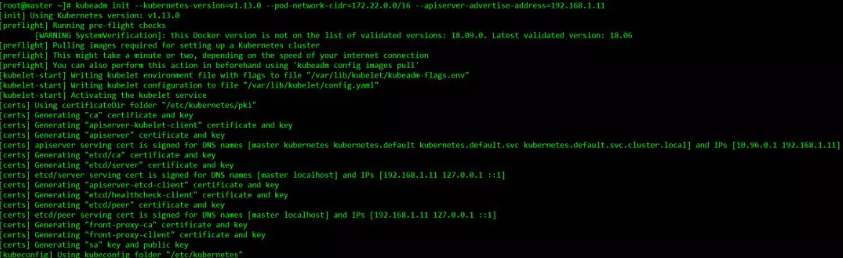

1. Initialize the master node with kubeadm init

$ kubeadm init --kubernetes-version=v1.13.0 --pod-network-cidr=172.22.0.0/16 --apiserver-advertise-address=192.168.1.11

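This sets the pod network CIDR to 172.22.0.0/16 and the API server advertise address to the master's own IP address. The pod CIDR must match the Network value in the flannel configuration deployed in section VI. The upstream flannel manifest defaults to 10.244.0.0/16.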

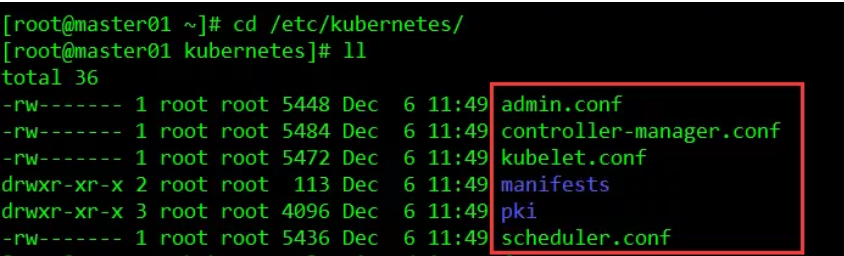

2. After a successful initialization, the following files are generated under /etc/kubernetes/

3. The output also ends with a line like this:

kubeadm join 192.168.1.11:6443 --token zs4s82.r9svwuj78jc3px43 --discovery-token-ca-cert-hash sha256:45063078d23b3e8d33ff1d81e903fac16fe6c8096189600c709e3bf0ce051ae8

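Record this command, because you need it to add the node. The bootstrap token it contains expires after 24 hours by default. If it has expired, run kubeadm token create --print-join-command on the master to get a new join command.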
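If you saved the kubeadm init output to a file, you can extract the join command from it later. This sketch assumes the output was captured with something like kubeadm init ... | tee kubeadm-init.log, where the log file name is hypothetical. It also assumes the join command is printed on one line, as shown above. extract_join_cmd is a hypothetical helper.

```shell
# Print the first "kubeadm join ..." line from saved kubeadm init output,
# with leading whitespace removed.
extract_join_cmd() {
    grep -m 1 -E '^[[:space:]]*kubeadm join ' "$1" | sed 's/^[[:space:]]*//'
}
```

For example, run extract_join_cmd kubeadm-init.log > join.sh, then copy join.sh (another hypothetical name) to the node and run it there.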

4. Verify

Configure kubectl

$ mkdir -p /root/.kube

$ cp /etc/kubernetes/admin.conf /root/.kube/config

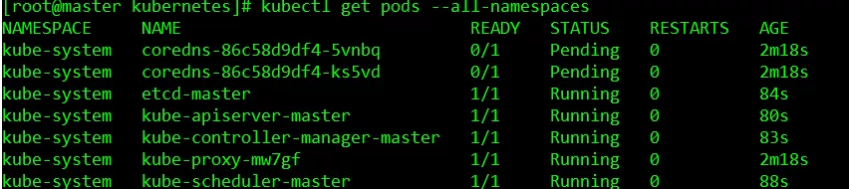

List the pods and check their status

$ kubectl get pods --all-namespaces

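The coredns pods are in Pending state; ignore this for now. They stay Pending until a pod network add-on is installed, which happens in the next section with flannel.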

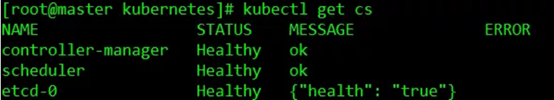

We can also run kubectl get cs to check the health of the cluster components:
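To see which pods are not running yet without reading the whole table, you can filter the output of kubectl get pods. This sketch assumes the default column layout of kubectl get pods --all-namespaces --no-headers (NAMESPACE NAME READY STATUS ...). pods_not_running is a hypothetical helper.

```shell
# From "kubectl get pods --all-namespaces --no-headers" on stdin, print
# namespace/name and STATUS (4th column) for pods not Running or Completed.
pods_not_running() {
    awk '$4 != "Running" && $4 != "Completed" { print $1 "/" $2 " " $4 }'
}
```

For example: kubectl get pods --all-namespaces --no-headers | pods_not_running. Right after kubeadm init, expect only the coredns pods, in Pending.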

VI. Deploying the flannel network (run the following on all nodes)

[root@master ~]$ docker pull docker.io/dockerofwj/flannel

[root@master ~]$ docker tag docker.io/dockerofwj/flannel quay.io/coreos/flannel:v0.10.0-amd64

[root@master ~]$ docker image rm docker.io/dockerofwj/flannel

Run the following on the master node

[root@master ~]$ echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> /etc/profile

[root@master ~]$ source /etc/profile

[root@master ~]$ echo $KUBECONFIG

[root@master ~]$ sysctl net.bridge.bridge-nf-call-iptables=1

[root@master ~]$ vi kube-flannel.yaml

[File contents: https://files-cdn.cnblogs.com/files/wenyang321/kube-flannel.yaml.sh]

Note: the yaml file is too long to paste here, so I uploaded it to my cnblogs file storage. Use the contents at that link, and save the file as "kube-flannel.yaml", not "kube-flannel.yaml.sh".

[root@master ~]$ kubectl apply -f kube-flannel.yaml

[root@master ~]$ kubectl get pods --all-namespaces

Add the node to the cluster

Adding a worker node is simple. Run the following on the node:

$ kubeadm join 192.168.1.11:6443 --token zs4s82.r9svwuj78jc3px43 --discovery-token-ca-cert-hash sha256:45063078d23b3e8d33ff1d81e903fac16fe6c8096189600c709e3bf0ce051ae8

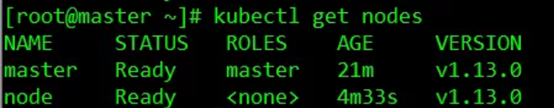

Every worker node runs exactly the same command. When it finishes, run kubectl get nodes on the master to check that the node is healthy (STATUS Ready):

[root@master ~]$ kubectl get nodes
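In the same way, you can filter kubectl get nodes --no-headers for nodes whose STATUS column is not Ready. nodes_not_ready is a hypothetical helper. A cordoned node shows Ready,SchedulingDisabled, so it is reported too.

```shell
# From "kubectl get nodes --no-headers" on stdin, print the name and STATUS
# (2nd column) of every node that is not exactly "Ready".
nodes_not_ready() {
    awk '$2 != "Ready" { print $1 " " $2 }'
}
```

Once both nodes are Ready, kubectl get nodes --no-headers | nodes_not_ready prints nothing.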

VII. Deploying the dashboard

Before deploying the dashboard, we need to generate a certificate. Otherwise, logging in over HTTPS will not work later.

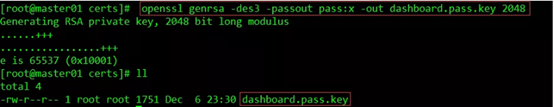

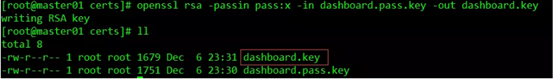

1. Generate a private key and a certificate signing request

$ mkdir -p /var/share/certs

$ cd /var/share/certs

$ openssl genrsa -des3 -passout pass:x -out dashboard.pass.key 2048

$ openssl rsa -passin pass:x -in dashboard.pass.key -out dashboard.key

Delete the dashboard.pass.key generated above. dashboard.key is the same key without the passphrase.

$ rm -rf dashboard.pass.key

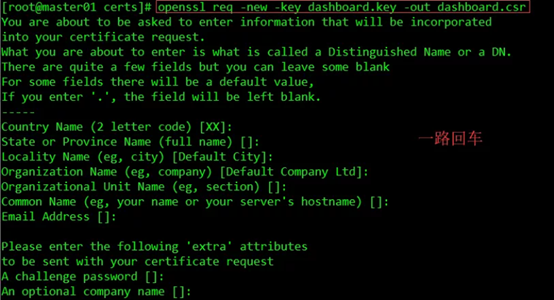

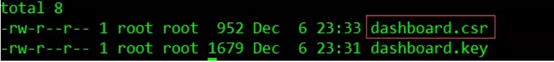

$ openssl req -new -key dashboard.key -out dashboard.csr

This produces dashboard.csr

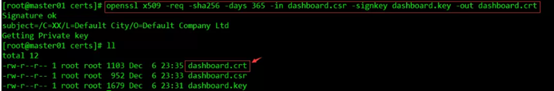

Generate the SSL certificate

$ openssl x509 -req -sha256 -days 365 -in dashboard.csr -signkey dashboard.key -out dashboard.crt

dashboard.crt is the dashboard's self-signed certificate, signed with the dashboard.key private key.

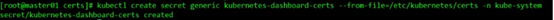
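You can also collapse the four openssl steps above into one non-interactive command that produces an unencrypted key and a self-signed certificate. This is an alternative sketch, not the author's procedure. gen_dashboard_cert is a hypothetical helper, and the CN kubernetes-dashboard is an assumption. It does not produce dashboard.csr, but the dashboard only needs the .key and .crt files.

```shell
# Create an unencrypted 2048-bit RSA key and a self-signed SHA-256
# certificate valid for 365 days, without interactive prompts.
gen_dashboard_cert() {
    dir=${1:-/var/share/certs}
    mkdir -p "$dir"
    openssl req -x509 -newkey rsa:2048 -nodes -sha256 -days 365 \
        -subj "/CN=kubernetes-dashboard" \
        -keyout "$dir/dashboard.key" -out "$dir/dashboard.crt"
}
```

Run gen_dashboard_cert /var/share/certs, then create the secret as shown below.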

Create the secret

$ kubectl create secret generic kubernetes-dashboard-certs --from-file=/var/share/certs -n kube-system

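Note: /var/share/certs is the directory where the crt, csr and key files were created above.

2. Download and retag the dashboard image (on all nodes)

Note: the Deployment manifest in step 3 sets image to docker.io/mirrorgooglecontainers/kubernetes-dashboard-amd64:v1.10.1 and pins the pod to the master with nodeName: master. The image retagged here is used only if you change that image field to k8s.gcr.io/kubernetes-dashboard:v1.10.0.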

$ docker pull registry.cn-hangzhou.aliyuncs.com/kubernete/kubernetes-dashboard-amd64:v1.10.0

$ docker tag registry.cn-hangzhou.aliyuncs.com/kubernete/kubernetes-dashboard-amd64:v1.10.0 k8s.gcr.io/kubernetes-dashboard:v1.10.0

$ docker rmi registry.cn-hangzhou.aliyuncs.com/kubernete/kubernetes-dashboard-amd64:v1.10.0

3. Create the dashboard pod

[root@master ~]$ vi kubernetes-dashboard.yaml

# ------------------- Dashboard Service Account ------------------- #

apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kube-system

---
# ------------------- Dashboard Role & Role Binding ------------------- #

kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: kubernetes-dashboard-minimal
  namespace: kube-system
rules:
  # Allow Dashboard to create 'kubernetes-dashboard-key-holder' secret.
- apiGroups: [""]
  resources: ["secrets"]
  verbs: ["create"]
  # Allow Dashboard to create 'kubernetes-dashboard-settings' config map.
- apiGroups: [""]
  resources: ["configmaps"]
  verbs: ["create"]
  # Allow Dashboard to get, update and delete Dashboard exclusive secrets.
- apiGroups: [""]
  resources: ["secrets"]
  resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs"]
  verbs: ["get", "update", "delete"]
  # Allow Dashboard to get and update 'kubernetes-dashboard-settings' config map.
- apiGroups: [""]
  resources: ["configmaps"]
  resourceNames: ["kubernetes-dashboard-settings"]
  verbs: ["get", "update"]
  # Allow Dashboard to get metrics from heapster.
- apiGroups: [""]
  resources: ["services"]
  resourceNames: ["heapster"]
  verbs: ["proxy"]
- apiGroups: [""]
  resources: ["services/proxy"]
  resourceNames: ["heapster", "http:heapster:", "https:heapster:"]
  verbs: ["get"]

---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: kubernetes-dashboard-minimal
  namespace: kube-system
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: kubernetes-dashboard-minimal
subjects:
- kind: ServiceAccount
  name: kubernetes-dashboard
  namespace: kube-system

---
# ------------------- Dashboard Deployment ------------------- #

kind: Deployment
apiVersion: apps/v1beta2
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kube-system
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
    spec:
      nodeName: master
      containers:
      - name: kubernetes-dashboard
        image: docker.io/mirrorgooglecontainers/kubernetes-dashboard-amd64:v1.10.1
        ports:
        - containerPort: 8443
          protocol: TCP
        args:
          - --auto-generate-certificates
          - --token-ttl=5400
          # Uncomment the following line to manually specify Kubernetes API server Host
          # If not specified, Dashboard will attempt to auto discover the API server and connect
          # to it. Uncomment only if the default does not work.
          # - --apiserver-host=http://my-address:port
        volumeMounts:
        - name: kubernetes-dashboard-certs
          mountPath: /certs
          # Create on-disk volume to store exec logs
        - mountPath: /tmp
          name: tmp-volume
        livenessProbe:
          httpGet:
            scheme: HTTPS
            path: /
            port: 8443
          initialDelaySeconds: 30
          timeoutSeconds: 30
      volumes:
      - name: kubernetes-dashboard-certs
        hostPath:
          path: /var/share/certs
          type: Directory
      - name: tmp-volume
        emptyDir: {}
      serviceAccountName: kubernetes-dashboard
      # Comment the following tolerations if Dashboard must not be deployed on master
      # tolerations:
      # - key: node-role.kubernetes.io/master
      #   effect: NoSchedule

---
# ------------------- Dashboard Service ------------------- #

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kube-system
spec:
  ports:
    - port: 443
      targetPort: 8443
      nodePort: 31234
  selector:
    k8s-app: kubernetes-dashboard
  type: NodePort

$ kubectl create -f kubernetes-dashboard.yaml

4. Check that the service is running

$ kubectl get deployment kubernetes-dashboard -n kube-system

$ kubectl --namespace kube-system get pods -o wide

$ kubectl get services kubernetes-dashboard -n kube-system

$ netstat -ntlp|grep 31234

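5. Configure dashboard-user-role.yaml

Run the following on the master node. It creates an admin ServiceAccount in kube-system and binds it to the cluster-admin ClusterRole, so this account's token has full access to the cluster.

$ vi dashboard-user-role.yaml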

kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: admin
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
roleRef:
  kind: ClusterRole
  name: cluster-admin
  apiGroup: rbac.authorization.k8s.io
subjects:
- kind: ServiceAccount
  name: admin
  namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin
  namespace: kube-system
  labels:
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile

$ kubectl create -f dashboard-user-role.yaml

$ kubectl describe secret/$(kubectl get secret -n kube-system | grep admin-token | awk '{print $1}') -n kube-system

Copy the token

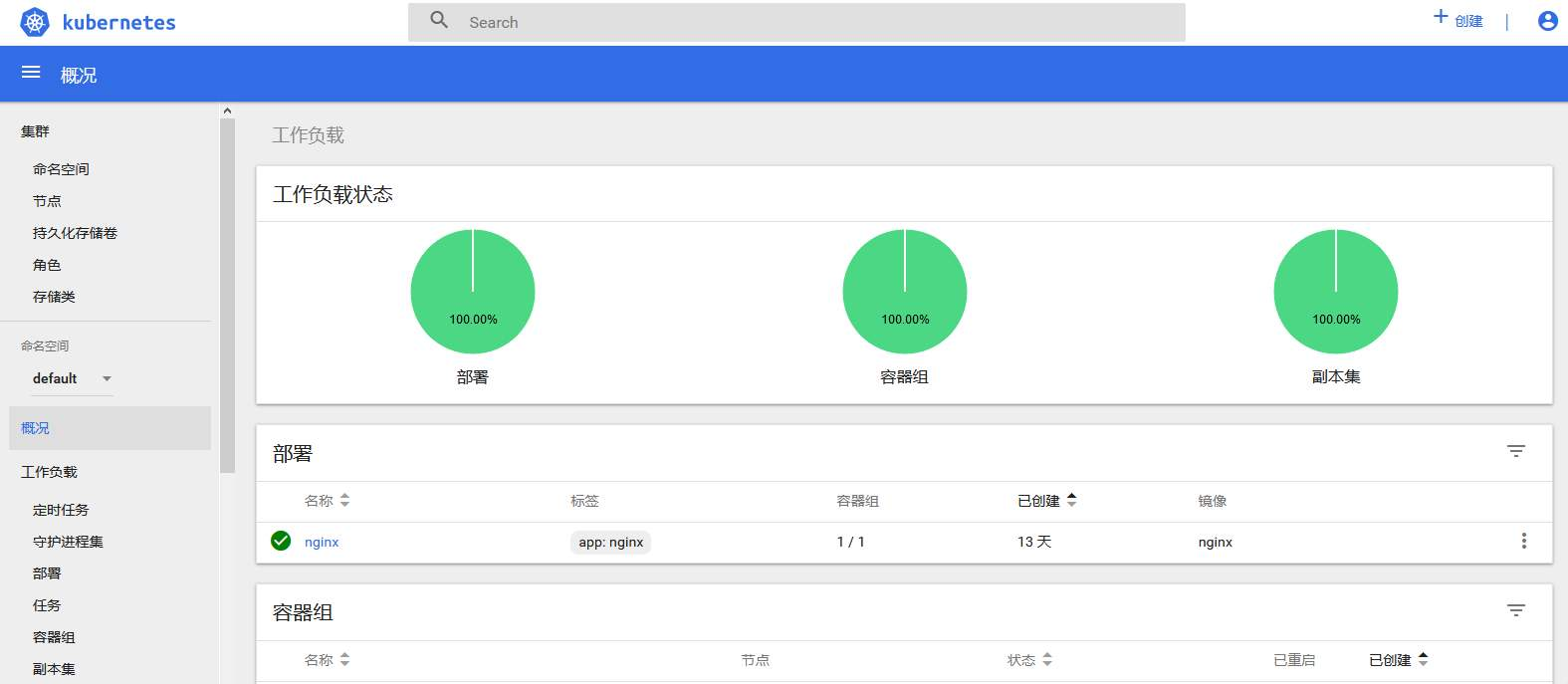
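If you prefer not to copy the token out of the describe output by hand, awk can extract it. token_from_describe is a hypothetical helper. It assumes the token appears on a line that starts with token:, which is how kubectl describe secret prints it.

```shell
# From "kubectl describe secret" output on stdin, print the value on the
# first line whose first field is "token:".
token_from_describe() {
    awk '$1 == "token:" { print $2; exit }'
}
```

For example: kubectl -n kube-system describe secret "$(kubectl -n kube-system get secret | awk '/^admin-token/ {print $1}')" | token_from_describe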

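6. Open the dashboard UI and log in

Browse to https://192.168.1.11:31234 and log in with the token. The certificate is self-signed, so the browser shows a security warning that you need to accept.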

浙公网安备 33010602011771号
