Installing a Kubernetes 1.13.1 Cluster with kubeadm (Part 3)

Environment:

master: 192.168.3.100

node01: 192.168.3.101

node02: 192.168.3.102

Disable the firewall and SELinux on all hosts.

Set up passwordless SSH trust between the hosts.

master:

1. Set up the docker and kubernetes repo files (Aliyun mirror):

cd /etc/yum.repos.d/

wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

vim kubernetes.repo

[kubernetes]

name=Kubernetes Repo

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg

enabled=1

yum repolist #verify the repos

scp docker-ce.repo kubernetes.repo node01:/etc/yum.repos.d/

scp docker-ce.repo kubernetes.repo node02:/etc/yum.repos.d/
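
The repo file above can also be written without opening an editor. A minimal sketch, assuming a helper named write_k8s_repo (a hypothetical name, not part of any tool) that takes the target directory as its only argument; the file content is the one shown in this step:

```shell
#!/bin/bash
# write_k8s_repo is a hypothetical helper: it writes kubernetes.repo
# into the directory passed as the first argument.
write_k8s_repo() {
    local repo_dir="$1"
    # The quoted 'EOF' keeps the heredoc body literal (no variable expansion).
    cat > "${repo_dir}/kubernetes.repo" <<'EOF'
[kubernetes]
name=Kubernetes Repo
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
gpgcheck=1
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
enabled=1
EOF
}
```

On master this would be called as write_k8s_repo /etc/yum.repos.d, followed by yum repolist to verify.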

2. Install docker, kubelet, kubeadm, and kubectl

[root@master ~]# yum install epel-release -y

[root@master ~]# cd

[root@master ~]# wget https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg

[root@master ~]# rpm --import yum-key.gpg

[root@master ~]# wget https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

[root@master ~]# rpm --import rpm-package-key.gpg

[root@master ~]# yum install docker-ce kubelet kubeadm kubectl

3. Startup

(1)

[root@master ~]# modprobe br_netfilter #load the module first; the /proc/sys/net/bridge/ entries do not exist until it is loaded

[root@master ~]# echo "1" >/proc/sys/net/bridge/bridge-nf-call-ip6tables

[root@master ~]# echo "1" >/proc/sys/net/bridge/bridge-nf-call-iptables

Create /etc/sysctl.d/k8s.conf with the following content:

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

Apply the settings:

sysctl -p /etc/sysctl.d/k8s.conf

[root@master ~]# systemctl daemon-reload

[root@master ~]# systemctl restart docker
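
The net.bridge.* keys only exist while the br_netfilter module is loaded, so the sysctl file alone may not take effect after a reboot. A sketch of one way to persist both, assuming a hypothetical helper persist_bridge_settings that takes an optional root prefix (empty for the real system); /etc/modules-load.d/ is the standard systemd location for modules to load at boot:

```shell
#!/bin/bash
# persist_bridge_settings is a hypothetical helper.
# $1 is an optional root prefix (leave empty to write to the real /etc).
persist_bridge_settings() {
    local root="${1:-}"
    mkdir -p "${root}/etc/modules-load.d" "${root}/etc/sysctl.d"
    # systemd-modules-load reads this directory at boot.
    echo "br_netfilter" > "${root}/etc/modules-load.d/br_netfilter.conf"
    # Same three settings as in the article.
    cat > "${root}/etc/sysctl.d/k8s.conf" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF
}
```

Called with no argument as root, it writes the two files; modprobe br_netfilter and sysctl -p /etc/sysctl.d/k8s.conf are still needed to apply the settings to the running system.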

(2)

IPVS (I did not use ipvs, so I skipped this step):

Prerequisites for enabling ipvs in kube-proxy:

Run the following script on every node that runs kubelet:

cat > /etc/sysconfig/modules/ipvs.modules <<EOF

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

EOF

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack_ipv4

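The script above creates /etc/sysconfig/modules/ipvs.modules so that the required modules are loaded automatically after a node reboots.

Use lsmod | grep -e ip_vs -e nf_conntrack_ipv4 to check that the required kernel modules have been loaded.

Next, make sure the ipset package is installed on every node: yum install ipset

To make the ipvs proxy rules easier to inspect, it is also worth installing the management tool ipvsadm: yum install ipvsadm

If these prerequisites are not met, kube-proxy falls back to iptables mode even when its configuration enables ipvs mode.

In the kubelet configuration file /etc/sysconfig/kubelet, enable ipvs: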

KUBE_PROXY_MODE=ipvs

(3) Prepare the images

[root@master ~]# rpm -ql kubelet

/etc/kubernetes/manifests

/etc/sysconfig/kubelet

/etc/systemd/system/kubelet.service

/usr/bin/kubelet

[root@master ~]# systemctl enable kubelet #enable kubelet at boot, but do not start it yet

[root@master ~]# systemctl enable docker

[root@master ~]# kubeadm init --help

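[root@master ~]# kubeadm version #show the kubeadm version

[root@master ~]# kubeadm config images list #list the image versions kubeadm needs, then download the matching images

Google's registry (k8s.gcr.io) is not reachable from mainland China, so pull the images from mirror repositories and retag them afterwards: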

docker pull mirrorgooglecontainers/kube-apiserver:v1.13.1

docker pull mirrorgooglecontainers/kube-controller-manager:v1.13.1

docker pull mirrorgooglecontainers/kube-scheduler:v1.13.1

docker pull mirrorgooglecontainers/kube-proxy:v1.13.1

docker pull mirrorgooglecontainers/pause:3.1

docker pull mirrorgooglecontainers/etcd:3.2.24

docker pull coredns/coredns:1.2.6

docker pull registry.cn-shenzhen.aliyuncs.com/cp_m/flannel:v0.10.0-amd64

Retag the downloaded images with the names kubeadm expects:

docker tag mirrorgooglecontainers/kube-apiserver:v1.13.1 k8s.gcr.io/kube-apiserver:v1.13.1

docker tag mirrorgooglecontainers/kube-controller-manager:v1.13.1 k8s.gcr.io/kube-controller-manager:v1.13.1

docker tag mirrorgooglecontainers/kube-scheduler:v1.13.1 k8s.gcr.io/kube-scheduler:v1.13.1

docker tag mirrorgooglecontainers/kube-proxy:v1.13.1 k8s.gcr.io/kube-proxy:v1.13.1

docker tag mirrorgooglecontainers/pause:3.1 k8s.gcr.io/pause:3.1

docker tag mirrorgooglecontainers/etcd:3.2.24 k8s.gcr.io/etcd:3.2.24

docker tag coredns/coredns:1.2.6 k8s.gcr.io/coredns:1.2.6

docker tag registry.cn-shenzhen.aliyuncs.com/cp_m/flannel:v0.10.0-amd64 quay.io/coreos/flannel:v0.10.0-amd64

docker rmi mirrorgooglecontainers/kube-apiserver:v1.13.1

docker rmi mirrorgooglecontainers/kube-controller-manager:v1.13.1

docker rmi mirrorgooglecontainers/kube-scheduler:v1.13.1

docker rmi mirrorgooglecontainers/kube-proxy:v1.13.1

docker rmi mirrorgooglecontainers/pause:3.1

docker rmi mirrorgooglecontainers/etcd:3.2.24

docker rmi coredns/coredns:1.2.6

docker rmi registry.cn-shenzhen.aliyuncs.com/cp_m/flannel:v0.10.0-amd64
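
The pull, tag, and rmi steps for the six control-plane images follow one pattern, so they can be generated with a loop. A sketch, assuming a hypothetical function retag_commands that only prints the docker commands (nothing runs until the output is piped to bash); coredns and flannel come from different repositories and are left as the explicit commands above:

```shell
#!/bin/bash
# Print the pull / tag / rmi commands for the control-plane images.
# Nothing is executed here; pipe the output to bash to run it.
MIRROR="mirrorgooglecontainers"   # mirror namespace used in this article
TARGET="k8s.gcr.io"               # registry name kubeadm expects

retag_commands() {
    local image
    for image in \
        kube-apiserver:v1.13.1 \
        kube-controller-manager:v1.13.1 \
        kube-scheduler:v1.13.1 \
        kube-proxy:v1.13.1 \
        pause:3.1 \
        etcd:3.2.24
    do
        echo "docker pull ${MIRROR}/${image}"
        echo "docker tag ${MIRROR}/${image} ${TARGET}/${image}"
        echo "docker rmi ${MIRROR}/${image}"
    done
}
```

Running retag_commands on its own shows what would be executed; retag_commands | bash runs it.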

(4)

[root@master ~]# vim /etc/sysconfig/kubelet #do not fail at startup when swap is enabled

KUBELET_EXTRA_ARGS="--fail-swap-on=false"

Initialize the cluster:

[root@master ~]# kubeadm init --kubernetes-version=v1.13.1 --pod-network-cidr=10.244.0.0/16 --service-cidr=10.96.0.0/12 --ignore-preflight-errors=Swap

To start using the cluster, run the following commands as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config #not needed when running as root

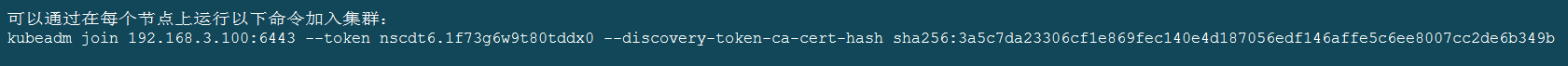

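Each node can join the cluster by running the following command, but do not add the nodes yet:

kubeadm join 192.168.3.100:6443 --token nscdt6.1f73g6w9t80tddx0 --discovery-token-ca-cert-hash sha256:3a5c7da23306cf1e869fec140e4d187056edf146affe5c6ee8007cc2de6b349b #the token and hash are generated when the cluster is initialized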
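
If the init output is lost, the hash can be recomputed from the cluster CA certificate. A sketch using the openssl pipeline from the kubeadm documentation, wrapped in a hypothetical helper ca_cert_hash that takes the certificate path; it assumes an RSA CA key, which is what kubeadm generates by default:

```shell
#!/bin/bash
# ca_cert_hash is a hypothetical helper name.
# It prints the sha256 hash of the public key in the given CA certificate.
ca_cert_hash() {
    local ca_cert="$1"
    openssl x509 -pubkey -noout -in "${ca_cert}" \
        | openssl rsa -pubin -outform der 2>/dev/null \
        | openssl dgst -sha256 -hex \
        | sed 's/^.* //'
}
```

On master, ca_cert_hash /etc/kubernetes/pki/ca.crt prints the value that goes after sha256: in the join command. Bootstrap tokens expire (24 hours by default), so for a later join it is usually simpler to run kubeadm token create --print-join-command, which prints a complete join command with a fresh token.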

4. kubectl commands

[root@master ~]# kubectl get cs #check the health of the control-plane components

[root@master ~]# kubectl get nodes #list the nodes

[root@master ~]# kubectl get ns #list all namespaces

[root@master ~]# kubectl describe node node01 #show the details of one node

[root@master ~]# kubectl cluster-info #show cluster information

5. Deploy flannel

[root@master ~]# kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

[root@master ~]# kubectl get pods -n kube-system #list the pods in the kube-system namespace; -n selects the namespace

node01:

1.

[root@master ~]# scp rpm-package-key.gpg node01:/root/

2.

[root@node01 ~]# yum install docker-ce kubelet kubeadm

[root@master ~]# scp /usr/lib/systemd/system/docker.service node01:/usr/lib/systemd/system/docker.service

[root@master ~]# scp /etc/sysconfig/kubelet node01:/etc/sysconfig/

[root@node01 ~]# systemctl daemon-reload

[root@node01 ~]# systemctl start docker

[root@node01 ~]# systemctl enable docker

[root@node01 ~]# systemctl enable kubelet

[root@node01 ~]# echo "1" >/proc/sys/net/bridge/bridge-nf-call-ip6tables

[root@node01 ~]# echo "1" >/proc/sys/net/bridge/bridge-nf-call-iptables

3.

[root@master ~]# scp myimages.gz node01:/root/ #pack the images on master and copy them to node01

[root@node01 ~]# docker load -i myimages.gz

[root@node01 ~]# docker tag fdb321fd30a0 k8s.gcr.io/kube-proxy:v1.13.1

[root@node01 ~]# docker tag 40a63db91ef8 k8s.gcr.io/kube-apiserver:v1.13.1

[root@node01 ~]# docker tag ab81d7360408 k8s.gcr.io/kube-scheduler:v1.13.1

[root@node01 ~]# docker tag 26e6f1db2a52 k8s.gcr.io/kube-controller-manager:v1.13.1

[root@node01 ~]# docker tag f59dcacceff4 k8s.gcr.io/coredns:1.2.6

[root@node01 ~]# docker tag 3cab8e1b9802 k8s.gcr.io/etcd:3.2.24

[root@node01 ~]# docker tag f0fad859c909 quay.io/coreos/flannel:v0.10.0-amd64

[root@node01 ~]# docker tag da86e6ba6ca1 k8s.gcr.io/pause:3.1

[root@node01 ~]# kubeadm join 192.168.3.100:6443 --token nscdt6.1f73g6w9t80tddx0 --discovery-token-ca-cert-hash sha256:3a5c7da23306cf1e869fec140e4d187056edf146affe5c6ee8007cc2de6b349b --ignore-preflight-errors=Swap
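
The myimages.gz archive copied to node01 in step 3 is not created anywhere in this article. One way to produce it on master, sketched with a hypothetical helper save_images (archive path first, then the image names); docker save and gzip are the real tools doing the work:

```shell
#!/bin/bash
# save_images is a hypothetical helper.
# $1 is the archive to write; the remaining arguments are image names (name:tag).
save_images() {
    local archive="$1"
    shift
    # docker save writes a tar stream to stdout; gzip compresses it.
    docker save "$@" | gzip > "${archive}"
}
```

For example: save_images myimages.gz k8s.gcr.io/kube-proxy:v1.13.1 k8s.gcr.io/pause:3.1 quay.io/coreos/flannel:v0.10.0-amd64 (plus any other images the node needs). docker load -i reads gzip-compressed archives directly. When images are saved by name:tag like this, docker load restores the tags, so retagging by image ID on the node should not be needed; the docker tag commands above are required when the archive was saved by image ID.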

node02:

1.

[root@master ~]# scp rpm-package-key.gpg node02:/root/

[root@node02 ~]# yum install docker-ce kubelet kubeadm

[root@master ~]# scp /usr/lib/systemd/system/docker.service node02:/usr/lib/systemd/system/docker.service

[root@master ~]# scp /etc/sysconfig/kubelet node02:/etc/sysconfig/

[root@node02 ~]# systemctl daemon-reload

[root@node02 ~]# systemctl start docker

[root@node02 ~]# systemctl enable docker

[root@node02 ~]# systemctl enable kubelet

[root@node02 ~]# echo "1" >/proc/sys/net/bridge/bridge-nf-call-ip6tables

[root@node02 ~]# echo "1" >/proc/sys/net/bridge/bridge-nf-call-iptables

2.

[root@master ~]# scp myimages.gz node02:/root/ #copy the images from master to node02

[root@node02 ~]# docker load -i myimages.gz

[root@node02 ~]# docker tag fdb321fd30a0 k8s.gcr.io/kube-proxy:v1.13.1

[root@node02 ~]# docker tag 40a63db91ef8 k8s.gcr.io/kube-apiserver:v1.13.1

[root@node02 ~]# docker tag ab81d7360408 k8s.gcr.io/kube-scheduler:v1.13.1

[root@node02 ~]# docker tag 26e6f1db2a52 k8s.gcr.io/kube-controller-manager:v1.13.1

[root@node02 ~]# docker tag f59dcacceff4 k8s.gcr.io/coredns:1.2.6

[root@node02 ~]# docker tag 3cab8e1b9802 k8s.gcr.io/etcd:3.2.24

[root@node02 ~]# docker tag f0fad859c909 quay.io/coreos/flannel:v0.10.0-amd64

[root@node02 ~]# docker tag da86e6ba6ca1 k8s.gcr.io/pause:3.1

[root@node02 ~]# kubeadm join 192.168.3.100:6443 --token nscdt6.1f73g6w9t80tddx0 --discovery-token-ca-cert-hash sha256:3a5c7da23306cf1e869fec140e4d187056edf146affe5c6ee8007cc2de6b349b --ignore-preflight-errors=Swap
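
The master-side copy steps are identical for node01 and node02, so they can be looped. A sketch, assuming a hypothetical helper copy_to_nodes run from /root on master (where rpm-package-key.gpg and myimages.gz are located) with the SSH trust configured at the start:

```shell
#!/bin/bash
# copy_to_nodes is a hypothetical helper: it copies the files used in this
# article from master to every node named on the command line.
copy_to_nodes() {
    local node
    for node in "$@"; do
        scp rpm-package-key.gpg myimages.gz "${node}:/root/"
        scp /usr/lib/systemd/system/docker.service "${node}:/usr/lib/systemd/system/docker.service"
        scp /etc/sysconfig/kubelet "${node}:/etc/sysconfig/"
    done
}
```

Usage: copy_to_nodes node01 node02. The commands that run on the nodes themselves (yum install, docker load, kubeadm join) still have to be executed on each node.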

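Finally, check the cluster status:

[root@master ~]# kubectl get cs #check the health of the control-plane components

[root@master ~]# kubectl get nodes #list the nodes

[root@master ~]# kubectl get ns #list all namespaces

[root@master ~]# kubectl describe node node01 #show the details of one node

[root@master ~]# kubectl cluster-info #show cluster information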
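
To spot nodes that have not come up yet, the output of kubectl get nodes can be filtered. A small sketch, assuming a hypothetical filter not_ready_nodes that reads the default table output on stdin and relies on STATUS being the second column:

```shell
#!/bin/bash
# not_ready_nodes is a hypothetical helper.
# It reads `kubectl get nodes` output on stdin, skips the header line, and
# prints the name of every node whose STATUS is not exactly "Ready".
not_ready_nodes() {
    awk 'NR > 1 && $2 != "Ready" { print $1 }'
}
```

Usage: kubectl get nodes | not_ready_nodes. Empty output means every node reports Ready. A cordoned node (STATUS Ready,SchedulingDisabled) is also listed, because the comparison is exact. Nodes typically stay NotReady until the flannel pods are running on them.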