MapReduce Data Cleaning

Fields in the Result file:

Ip: 106.39.41.166 (city)

Date: 10/Nov/2016:00:01:02 +0800 (date)

Day: 10 (day)

Traffic: 54 (traffic)

Type: video (type: video or article)

Id: 8701 (id of the video or article)
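To make the field layout concrete, here is a minimal sketch of a parser for one line of the Result file. It assumes each line holds the six fields above in that order, separated by commas, which matches the `split(",")` calls and the indices used in the jobs in this post. The class name `LogRecord` and its `parse` method are hypothetical helpers, not part of the original code.

```java
/** One parsed line of the Result file (hypothetical helper for illustration). */
final class LogRecord {
    final String ip;
    final String date;
    final int day;
    final int traffic;
    final String type;
    final String id;

    private LogRecord(String ip, String date, int day, int traffic, String type, String id) {
        this.ip = ip;
        this.date = date;
        this.day = day;
        this.traffic = traffic;
        this.type = type;
        this.id = id;
    }

    /**
     * Parses a line assumed to look like "ip,date,day,traffic,type,id".
     * Returns null when the field count is wrong or a number does not parse.
     */
    static LogRecord parse(String line) {
        String[] f = line.split(",");
        if (f.length != 6) {
            return null;
        }
        try {
            return new LogRecord(
                    f[0].trim(),
                    f[1].trim(),
                    Integer.parseInt(f[2].trim()),
                    Integer.parseInt(f[3].trim()),
                    f[4].trim(),
                    f[5].trim());
        } catch (NumberFormatException e) {
            return null;
        }
    }
}
```

Returning null for malformed lines lets a mapper skip bad records instead of failing the whole task; whether that is the right policy depends on how clean the input is.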

Test requirements:

2. Data processing:

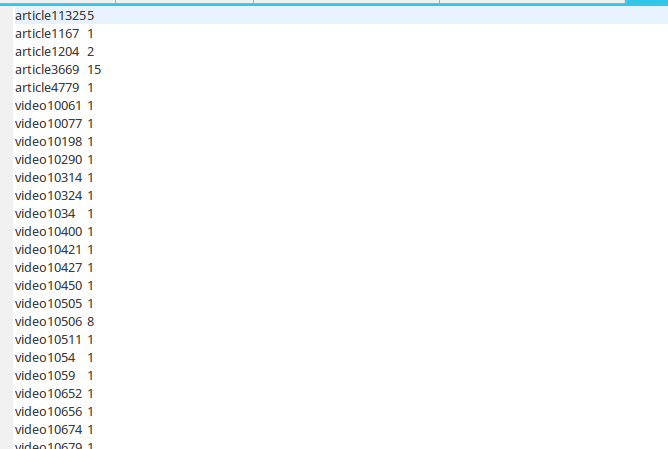

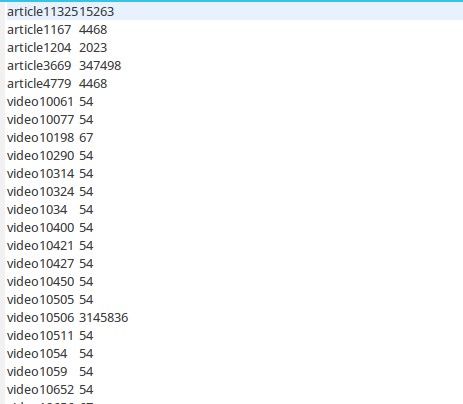

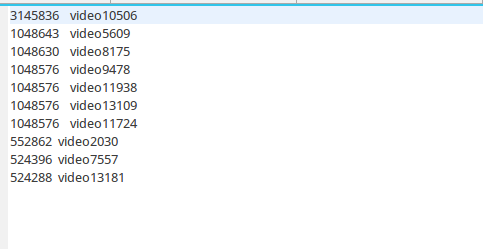

· Top 10 most popular videos/articles by visit count (video/article)

· Top 10 most popular courses by city (ip)

· Top 10 most popular courses by traffic (traffic)
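The three tasks differ only in which fields form the key and in whether each record contributes 1 or its traffic value. The sketch below shows that idea in plain Java, without Hadoop, so it can be checked on a small sample. It assumes comma-separated lines with the fields in the order listed earlier; the class `Aggregations` and its `aggregate` method are hypothetical names, and unlike the jobs in this post the sketch trims each field before building the key.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

final class Aggregations {
    /**
     * Groups lines by the concatenation of the chosen fields and adds up
     * either 1 per record (a visit count) or the traffic field (index 3).
     * Lines that do not have exactly six fields are skipped.
     */
    static Map<String, Integer> aggregate(List<String> lines, int[] keyFields, boolean sumTraffic) {
        Map<String, Integer> totals = new HashMap<>();
        for (String line : lines) {
            String[] f = line.split(",");
            if (f.length != 6) {
                continue;
            }
            StringBuilder key = new StringBuilder();
            for (int idx : keyFields) {
                key.append(f[idx].trim());
            }
            int value = sumTraffic ? Integer.parseInt(f[3].trim()) : 1;
            totals.merge(key.toString(), value, Integer::sum);
        }
        return totals;
    }
}
```

With this helper, `aggregate(lines, new int[] {4, 5}, false)` corresponds to the first task, `aggregate(lines, new int[] {0}, false)` to the second, and `aggregate(lines, new int[] {4, 5}, true)` to the third.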

1. Top 10 videos/articles by visit count

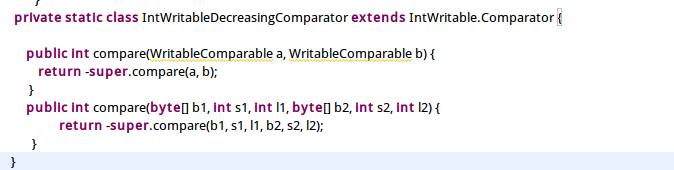

    package test4;

    import java.io.IOException;

    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.io.WritableComparable;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
    import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

    public class quchong {

        public static void main(String[] args)
                throws IOException, ClassNotFoundException, InterruptedException {
            // Job 1: count visits per video/article.
            Job job = Job.getInstance();
            job.setJobName("paixu");
            job.setJarByClass(quchong.class);
            job.setMapperClass(doMapper.class);
            job.setReducerClass(doReducer.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);
            Path in = new Path("hdfs://localhost:9000/test/in/result");
            Path out = new Path("hdfs://localhost:9000/test/stage3/out1");
            FileInputFormat.addInputPath(job, in);
            FileOutputFormat.setOutputPath(job, out);

            if (job.waitForCompletion(true)) {
                // Job 2: sort by count in descending order and keep the Top 10.
                Job job2 = Job.getInstance();
                job2.setJobName("paixu");
                job2.setJarByClass(quchong.class);
                job2.setMapperClass(doMapper2.class);
                job2.setReducerClass(doReduce2.class);
                job2.setOutputKeyClass(IntWritable.class);
                job2.setOutputValueClass(Text.class);
                job2.setSortComparatorClass(IntWritableDecreasingComparator.class);
                job2.setInputFormatClass(TextInputFormat.class);
                job2.setOutputFormatClass(TextOutputFormat.class);
                Path in2 = new Path("hdfs://localhost:9000/test/stage3/out1/part-r-00000");
                Path out2 = new Path("hdfs://localhost:9000/test/stage3/out2");
                FileInputFormat.addInputPath(job2, in2);
                FileOutputFormat.setOutputPath(job2, out2);
                System.exit(job2.waitForCompletion(true) ? 0 : 1);
            }
        }

        /** Emits (type + id, 1) for every input record. */
        public static class doMapper extends Mapper<Object, Text, Text, IntWritable> {
            public static final IntWritable one = new IntWritable(1);
            public static Text word = new Text();

            @Override
            protected void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                String[] strNlist = value.toString().split(",");
                // Fields 4 and 5 are the type and the id.
                String str2 = strNlist[4] + strNlist[5];
                word.set(str2);
                context.write(word, one);
            }
        }

        /** Sums the counts for each key. */
        public static class doReducer extends Reducer<Text, IntWritable, Text, IntWritable> {
            private IntWritable result = new IntWritable();

            @Override
            protected void reduce(Text key, Iterable<IntWritable> values, Context context)
                    throws IOException, InterruptedException {
                int sum = 0;
                for (IntWritable value : values) {
                    sum += value.get();
                }
                result.set(sum);
                context.write(key, result);
            }
        }

        /** Swaps key and value so that job 2 sorts by count. */
        public static class doMapper2 extends Mapper<Object, Text, IntWritable, Text> {
            private static Text goods = new Text();
            private static IntWritable num = new IntWritable();

            @Override
            protected void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                String line = value.toString();
                // TextOutputFormat separates key and value with a tab by default.
                String[] arr = line.split("\t");
                num.set(Integer.parseInt(arr[1]));
                goods.set(arr[0]);
                context.write(num, goods);
            }
        }

        /** Writes only the first 10 records; this relies on a single reducer. */
        public static class doReduce2 extends Reducer<IntWritable, Text, IntWritable, Text> {
            int i = 0;

            @Override
            public void reduce(IntWritable key, Iterable<Text> values, Context context)
                    throws IOException, InterruptedException {
                for (Text val : values) {
                    if (i < 10) {
                        context.write(key, val);
                        i++;
                    }
                }
            }
        }

        /** Reverses the default ascending order of IntWritable keys. */
        public static class IntWritableDecreasingComparator extends IntWritable.Comparator {
            @Override
            public int compare(WritableComparable a, WritableComparable b) {
                return -super.compare(a, b);
            }

            @Override
            public int compare(byte[] b1, int s1, int l1, byte[] b2, int s2, int l2) {
                return -super.compare(b1, s1, l1, b2, s2, l2);
            }
        }
    }

(Deduplicate and output the visit counts)

(Sort and output the Top 10)

2. Top 10 by city (ip)

    package test3;

    import java.io.IOException;

    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.io.WritableComparable;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
    import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

    public class quchong {

        public static void main(String[] args)
                throws IOException, ClassNotFoundException, InterruptedException {
            // Job 1: count visits per IP.
            Job job = Job.getInstance();
            job.setJobName("paixu");
            job.setJarByClass(quchong.class);
            job.setMapperClass(doMapper.class);
            job.setReducerClass(doReducer.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);
            Path in = new Path("hdfs://localhost:9000/test/in/result");
            Path out = new Path("hdfs://localhost:9000/test/stage2/out1");
            FileInputFormat.addInputPath(job, in);
            FileOutputFormat.setOutputPath(job, out);

            if (job.waitForCompletion(true)) {
                // Job 2: sort by count in descending order and keep the Top 10.
                Job job2 = Job.getInstance();
                job2.setJobName("paixu");
                job2.setJarByClass(quchong.class);
                job2.setMapperClass(doMapper2.class);
                job2.setReducerClass(doReduce2.class);
                job2.setOutputKeyClass(IntWritable.class);
                job2.setOutputValueClass(Text.class);
                job2.setSortComparatorClass(IntWritableDecreasingComparator.class);
                job2.setInputFormatClass(TextInputFormat.class);
                job2.setOutputFormatClass(TextOutputFormat.class);
                Path in2 = new Path("hdfs://localhost:9000/test/stage2/out1/part-r-00000");
                Path out2 = new Path("hdfs://localhost:9000/test/stage2/out2");
                FileInputFormat.addInputPath(job2, in2);
                FileOutputFormat.setOutputPath(job2, out2);
                System.exit(job2.waitForCompletion(true) ? 0 : 1);
            }
        }

        /** Emits (ip, 1) for every input record. */
        public static class doMapper extends Mapper<Object, Text, Text, IntWritable> {
            public static final IntWritable one = new IntWritable(1);
            public static Text word = new Text();

            @Override
            protected void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                String[] strNlist = value.toString().split(",");
                // Field 0 is the IP address.
                String str2 = strNlist[0];
                word.set(str2);
                context.write(word, one);
            }
        }

        /** Sums the counts for each key. */
        public static class doReducer extends Reducer<Text, IntWritable, Text, IntWritable> {
            private IntWritable result = new IntWritable();

            @Override
            protected void reduce(Text key, Iterable<IntWritable> values, Context context)
                    throws IOException, InterruptedException {
                int sum = 0;
                for (IntWritable value : values) {
                    sum += value.get();
                }
                result.set(sum);
                context.write(key, result);
            }
        }

        /** Swaps key and value so that job 2 sorts by count. */
        public static class doMapper2 extends Mapper<Object, Text, IntWritable, Text> {
            private static Text goods = new Text();
            private static IntWritable num = new IntWritable();

            @Override
            protected void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                String line = value.toString();
                // TextOutputFormat separates key and value with a tab by default.
                String[] arr = line.split("\t");
                num.set(Integer.parseInt(arr[1]));
                goods.set(arr[0]);
                context.write(num, goods);
            }
        }

        /** Writes only the first 10 records; this relies on a single reducer. */
        public static class doReduce2 extends Reducer<IntWritable, Text, IntWritable, Text> {
            int i = 0;

            @Override
            public void reduce(IntWritable key, Iterable<Text> values, Context context)
                    throws IOException, InterruptedException {
                for (Text val : values) {
                    if (i < 10) {
                        context.write(key, val);
                        i++;
                    }
                }
            }
        }

        /** Reverses the default ascending order of IntWritable keys. */
        public static class IntWritableDecreasingComparator extends IntWritable.Comparator {
            @Override
            public int compare(WritableComparable a, WritableComparable b) {
                return -super.compare(a, b);
            }

            @Override
            public int compare(byte[] b1, int s1, int l1, byte[] b2, int s2, int l2) {
                return -super.compare(b1, s1, l1, b2, s2, l2);
            }
        }
    }

(Deduplicate and show the visit count per IP)

(Sort and output the Top 10)

3. Top 10 by traffic

    package test2;

    import java.io.IOException;

    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.io.WritableComparable;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
    import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

    public class paixu {

        public static void main(String[] args)
                throws IOException, ClassNotFoundException, InterruptedException {
            // Job 1: sum the traffic per video/article.
            Job job = Job.getInstance();
            job.setJobName("paixu");
            job.setJarByClass(paixu.class);
            job.setMapperClass(doMapper.class);
            job.setReducerClass(doReducer.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);
            Path in = new Path("hdfs://localhost:9000/test/in/result");
            Path out = new Path("hdfs://localhost:9000/test/stage1/out1");
            FileInputFormat.addInputPath(job, in);
            FileOutputFormat.setOutputPath(job, out);

            if (job.waitForCompletion(true)) {
                // Job 2: sort by total traffic in descending order and keep the Top 10.
                Job job2 = Job.getInstance();
                job2.setJobName("paixu");
                // Use this class rather than test3.quchong to locate the jar.
                job2.setJarByClass(paixu.class);
                job2.setMapperClass(doMapper2.class);
                job2.setReducerClass(doReduce2.class);
                job2.setOutputKeyClass(IntWritable.class);
                job2.setOutputValueClass(Text.class);
                job2.setSortComparatorClass(IntWritableDecreasingComparator.class);
                job2.setInputFormatClass(TextInputFormat.class);
                job2.setOutputFormatClass(TextOutputFormat.class);
                Path in2 = new Path("hdfs://localhost:9000/test/stage1/out1/part-r-00000");
                Path out2 = new Path("hdfs://localhost:9000/test/stage1/out2");
                FileInputFormat.addInputPath(job2, in2);
                FileOutputFormat.setOutputPath(job2, out2);
                System.exit(job2.waitForCompletion(true) ? 0 : 1);
            }
        }

        /** Emits (type + id, traffic) for every input record. */
        public static class doMapper extends Mapper<Object, Text, Text, IntWritable> {
            public static Text word = new Text();

            @Override
            protected void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                String[] strNlist = value.toString().split(",");
                // Field 3 is the traffic; fields 4 and 5 are the type and the id.
                String str = strNlist[3].trim();
                String str2 = strNlist[4] + strNlist[5];
                Integer temp = Integer.valueOf(str);
                word.set(str2);
                IntWritable abc = new IntWritable(temp);
                context.write(word, abc);
            }
        }

        /** Sums the traffic for each key. */
        public static class doReducer extends Reducer<Text, IntWritable, Text, IntWritable> {
            private IntWritable result = new IntWritable();

            @Override
            protected void reduce(Text key, Iterable<IntWritable> values, Context context)
                    throws IOException, InterruptedException {
                int sum = 0;
                for (IntWritable value : values) {
                    sum += value.get();
                }
                result.set(sum);
                context.write(key, result);
            }
        }

        /** Swaps key and value so that job 2 sorts by total traffic. */
        public static class doMapper2 extends Mapper<Object, Text, IntWritable, Text> {
            private static Text goods = new Text();
            private static IntWritable num = new IntWritable();

            @Override
            protected void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                String line = value.toString();
                // TextOutputFormat separates key and value with a tab by default.
                String[] arr = line.split("\t");
                num.set(Integer.parseInt(arr[1]));
                goods.set(arr[0]);
                context.write(num, goods);
            }
        }

        /** Writes only the first 10 records; this relies on a single reducer. */
        public static class doReduce2 extends Reducer<IntWritable, Text, IntWritable, Text> {
            int i = 0;

            @Override
            public void reduce(IntWritable key, Iterable<Text> values, Context context)
                    throws IOException, InterruptedException {
                for (Text val : values) {
                    if (i < 10) {
                        context.write(key, val);
                        i++;
                    }
                }
            }
        }

        /** Reverses the default ascending order of IntWritable keys. */
        public static class IntWritableDecreasingComparator extends IntWritable.Comparator {
            @Override
            public int compare(WritableComparable a, WritableComparable b) {
                return -super.compare(a, b);
            }

            @Override
            public int compare(byte[] b1, int s1, int l1, byte[] b2, int s2, int l2) {
                return -super.compare(b1, s1, l1, b2, s2, l2);
            }
        }
    }

(Deduplicate and show the total traffic)

(Sort and output the Top 10)

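Summary:

At first I implemented this with two classes. Later I found that a single class can run several jobs, so I defined two: job1 deduplicates the keys and outputs the total for each, and job2 sorts those totals and outputs the Top 10.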
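The handoff between the two jobs can be sketched in plain Java, without Hadoop. Stage 1 below produces text lines of the form key, tab, count, which is how `TextOutputFormat` writes a key/value pair by default, and stage 2 parses those lines, orders them by count in descending order, and keeps the first `n`. The class `TwoStageSketch` and its method names are hypothetical; this is only an in-memory illustration of the data flow, not how the cluster executes it.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

final class TwoStageSketch {
    /** Stage 1: count each key and render "key<TAB>count" lines, keys ascending. */
    static List<String> stage1(List<String> keys) {
        Map<String, Integer> counts = new TreeMap<>();
        for (String k : keys) {
            counts.merge(k, 1, Integer::sum);
        }
        List<String> out = new ArrayList<>();
        for (Map.Entry<String, Integer> e : counts.entrySet()) {
            out.add(e.getKey() + "\t" + e.getValue());
        }
        return out;
    }

    /** Stage 2: parse stage 1 lines, sort by count descending, keep the first n. */
    static List<String> stage2(List<String> lines, int n) {
        List<String[]> rows = new ArrayList<>();
        for (String line : lines) {
            rows.add(line.split("\t"));
        }
        // List.sort is stable, so rows with equal counts keep their input order.
        rows.sort((a, b) -> Integer.compare(Integer.parseInt(b[1]), Integer.parseInt(a[1])));
        List<String> top = new ArrayList<>();
        for (int i = 0; i < rows.size() && i < n; i++) {
            top.add(rows.get(i)[1] + "\t" + rows.get(i)[0]);
        }
        return top;
    }
}
```

Calling `stage2(stage1(keys), 10)` mirrors running job2 on the output of job1. Splitting on the tab matters: a key that contains spaces would be cut in the wrong place if the line were split on a space.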

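By default the reducer receives its keys in ascending order, so the `for (Text val : values)` loop in doReduce2 would see the smallest counts first. The result we need is in descending order, so a comparator has to be introduced here: IntWritableDecreasingComparator, registered with job2.setSortComparatorClass.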
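The effect of the comparator can be seen in a small plain-Java analogy. A `TreeMap` keeps its keys sorted the way the shuffle sorts reducer keys, and passing a reversed comparator flips the order in which the groups are visited. The class `DescendingKeys` and its `walk` method are hypothetical names used only for this sketch.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

final class DescendingKeys {
    /**
     * Groups names by their count, then visits the groups in the order
     * defined by keyOrder and returns "count<TAB>name" lines.
     */
    static List<String> walk(Map<String, Integer> counts, Comparator<Integer> keyOrder) {
        TreeMap<Integer, List<String>> groups = new TreeMap<>(keyOrder);
        for (Map.Entry<String, Integer> e : counts.entrySet()) {
            groups.computeIfAbsent(e.getValue(), k -> new ArrayList<>()).add(e.getKey());
        }
        List<String> out = new ArrayList<>();
        for (Map.Entry<Integer, List<String>> g : groups.entrySet()) {
            for (String name : g.getValue()) {
                out.add(g.getKey() + "\t" + name);
            }
        }
        return out;
    }
}
```

With `Comparator.naturalOrder()` the smallest count comes first, which corresponds to the default behaviour; with `Comparator.reverseOrder()` the largest count comes first, which is what the Top 10 reducer needs.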