Python Celery: a distributed task queue

http://www.cnblogs.com/alex3714/p/6351797.html

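1 Introduction to Celery and basic usage

Celery is a distributed asynchronous task queue written in Python. It makes it easy to process tasks asynchronously, so if your application needs asynchronous tasks, Celery is worth considering. Two typical scenarios:

- You want to run a batch command on 100 machines, which may take a long time. Rather than having your program wait for the results, you get a task ID back immediately, and some time later you use that ID to fetch the result. While the task is running, you are free to do other work.

- You want a scheduled task, for example checking all of your customers' records every day and sending a text message to anyone whose birthday is today.

Celery relies on a message broker to send and receive task messages and to store task results. RabbitMQ or Redis is the usual choice; both are covered below.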

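1.1 Advantages of Celery

- Simple: once you are familiar with Celery's workflow, configuring and using it is fairly easy.

- Highly available: workers and clients automatically retry when a connection is lost or fails. Retrying a task that itself failed has to be enabled on the task.

- Fast: according to the Celery documentation, a single Celery process can handle millions of tasks per minute when the broker and settings are tuned for it.

- Flexible: almost every component of Celery can be extended or customized.

Celery basic workflow diagram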

1.2 Installing and using Celery

Celery's default broker is RabbitMQ, and a single line of configuration is enough:

broker_url = 'amqp://guest:guest@localhost:5672//'

If RabbitMQ is not installed yet, install it first; see http://docs.celeryproject.org/en/latest/getting-started/brokers/rabbitmq.html#id3

1.2.1 Using Redis as the broker

Install Celery together with its Redis dependencies:

pip install -U "celery[redis]"

Configuration

Configuration is easy, just configure the location of your Redis database:

app.conf.broker_url = 'redis://localhost:6379/0'

Where the URL is in the format of:

redis://:password@hostname:port/db_number

all fields after the scheme are optional, and will default to localhost on port 6379, using database 0.
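To make the URL format and its defaults concrete, here is a minimal stdlib-only sketch. The helper name parse_redis_url and the host cache.example.com are made up for illustration, and this is not how Celery parses the URL internally; it only shows which field maps to which part of the URL and which default applies when a field is omitted.

```python
from urllib.parse import urlsplit

def parse_redis_url(url):
    """Split a redis:// URL and fill in the defaults described above."""
    parts = urlsplit(url)
    if parts.scheme != "redis":
        raise ValueError("expected a redis:// URL, got %r" % url)
    db_text = parts.path.lstrip("/")
    return {
        "host": parts.hostname or "localhost",
        "port": parts.port or 6379,
        "password": parts.password,  # None when the URL carries no password
        "db": int(db_text) if db_text else 0,
    }

minimal = parse_redis_url("redis://")
full = parse_redis_url("redis://:secret@cache.example.com:6380/2")
print(minimal)
print(full)
```

The first call falls back to localhost, port 6379 and database 0; the second picks every field out of the URL.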

If you also want to retrieve the result of each task, you need to configure where results are stored. To keep the state and return values of tasks in Redis, set:

app.conf.result_backend = 'redis://localhost:6379/0'

1.2.2 Getting started with Celery

Install the celery module:

pip install celery

Create a Celery application, which is used to define your task list.

Create a task file and call it tasks.py:

from celery import Celery

app = Celery('tasks',
             broker='redis://localhost',
             backend='redis://localhost')

@app.task
def add(x, y):
    print("running...", x, y)
    return x + y

Start a Celery worker to listen for and execute tasks:

celery -A tasks worker --loglevel=info

Calling the task

Open another terminal, start an interactive Python shell, and call the task:

>>> from tasks import add
>>> add.delay(4, 4)

The worker terminal shows that a task was received. To look at the result, assign the return value of the call to a variable:

>>> result = add.delay(4, 4)

The ready() method returns whether the task has finished processing or not:

>>> result.ready()
False

You can wait for the result to complete, but this is rarely used since it turns the asynchronous call into a synchronous one:

>>> result.get(timeout=1)
8

In case the task raised an exception, get() will re-raise the exception, but you can override this by specifying the propagate argument:

>>> result.get(propagate=False)

If the task raised an exception you can also gain access to the original traceback:

>>> result.traceback
…
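The same call pattern can be tried without a broker by using the standard library's concurrent.futures as a stand-in. This is only an analogy, not Celery: a Future plays the role of the result object, done() corresponds roughly to ready(), result(timeout=...) to get(timeout=...), and exception() returns the error instead of raising it, much like get(propagate=False). The release event and the fail function are made up for the demo.

```python
import threading
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout

release = threading.Event()  # lets the demo decide when the "task" may finish

def add(x, y):
    release.wait()  # stand-in for slow work
    return x + y

def fail():
    raise ValueError("boom")

with ThreadPoolExecutor(max_workers=2) as pool:
    result = pool.submit(add, 4, 4)      # plays the role of add.delay(4, 4)
    ready_early = result.done()          # like result.ready(); the task is still blocked
    try:
        result.result(timeout=0.1)       # like result.get(timeout=...)
        timed_out = False
    except FutureTimeout:
        timed_out = True
    release.set()                        # let the task finish
    value = result.result(timeout=5)

    failed = pool.submit(fail)
    error = failed.exception(timeout=5)  # returned instead of raised

print(ready_early, timed_out, value, type(error).__name__)
```

Waiting with a timeout while the task is still running fails, which is why blocking on a result right after submitting it defeats the purpose of running the task asynchronously.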

1.3 Using Celery in a project

Celery can be set up as an application inside a project package.

The directory layout is:

proj/__init__.py
    /celery.py
    /tasks.py

proj/celery.py

from __future__ import absolute_import, unicode_literals
from celery import Celery

# The module paths in `include` must match the package name (proj here).
app = Celery('proj',
             broker='redis://localhost',
             backend='redis://localhost',
             include=['proj.tasks'])

# Optional configuration, see the application user guide.
app.conf.update(
    result_expires=3600,
)

if __name__ == '__main__':
    app.start()

proj/tasks.py

from __future__ import absolute_import, unicode_literals
from .celery import app

@app.task
def add(x, y):
    return x + y

@app.task
def mul(x, y):
    return x * y

@app.task
def xsum(numbers):
    return sum(numbers)

Start the worker:

celery -A proj worker -l info

Output:

-------------- celery@Alexs-MacBook-Pro.local v4.0.2 (latentcall)

---- **** -----

--- * *** * -- Darwin-15.6.0-x86_64-i386-64bit 2017-01-26 21:50:24

-- * - **** ---

- ** ---------- [config]

- ** ---------- .> app: proj:0x103a020f0

- ** ---------- .> transport: redis://localhost:6379//

- ** ---------- .> results: redis://localhost/

- *** --- * --- .> concurrency: 8 (prefork)

-- ******* ---- .> task events: OFF (enable -E to monitor tasks in this worker)

--- ***** -----

-------------- [queues]

.> celery exchange=celery(direct) key=celery

Running the worker in the background

In production you'll want to run the worker in the background; this is described in detail in the daemonization tutorial.

The daemonization scripts use the celery multi command to start one or more workers in the background:

$ celery multi start w1 -A proj -l info

celery multi v4.0.0 (latentcall)

> Starting nodes...

> w1.halcyon.local: OK

You can restart it too:

$ celery multi restart w1 -A proj -l info

celery multi v4.0.0 (latentcall)

> Stopping nodes...

> w1.halcyon.local: TERM -> 64024

> Waiting for 1 node.....

> w1.halcyon.local: OK

> Restarting node w1.halcyon.local: OK

celery multi v4.0.0 (latentcall)

> Stopping nodes...

> w1.halcyon.local: TERM -> 64052

or stop it:

$ celery multi stop w1 -A proj -l info

The stop command is asynchronous, so it won't wait for the worker to shut down. You'll probably want to use the stopwait command instead, which ensures that all currently executing tasks are completed before exiting:

$ celery multi stopwait w1 -A proj -l info

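Periodic tasks

Celery supports periodic tasks: once you define when a task should run, Celery runs it on schedule automatically. The component that does this is called celery beat.

Write a script called periodic_task.py and put it inside the project package: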

from __future__ import absolute_import, unicode_literals
from celery.schedules import crontab
from .celery import app

@app.on_after_configure.connect
def setup_periodic_tasks(sender, **kwargs):
    # Calls test('hello') every 10 seconds.
    sender.add_periodic_task(10.0, test.s('hello'), name='add every 10')

    # Calls test('world') every 30 seconds.
    sender.add_periodic_task(30.0, test.s('world'), expires=10)

    # Executes every Monday morning at 7:30 a.m.
    sender.add_periodic_task(
        crontab(hour=7, minute=30, day_of_week=1),
        test.s('Happy Mondays!'),
    )

@app.task
def test(arg):
    print(arg)

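Each call to add_periodic_task registers one periodic task.

The example above adds periodic tasks by calling a function. They can also be declared in the style of a configuration file; the following entry runs a task every 30 seconds: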

app.conf.beat_schedule = {
    'add-every-30-seconds': {
        'task': 'tasks.add',
        'schedule': 30.0,
        'args': (16, 16)
    },
}

app.conf.timezone = 'UTC'

celery.py configuration

from __future__ import absolute_import, unicode_literals
from celery import Celery

app = Celery('proj',
             broker='redis://localhost',
             backend='redis://localhost',
             include=['proj.tasks', 'proj.periodic_task'])

# Optional configuration, see the application user guide.
app.conf.update(
    result_expires=3600,
)

app.conf.beat_schedule = {
    'add-every-30-seconds': {
        # Task names include the package name, so tasks.py inside proj
        # registers this task as proj.tasks.add.
        'task': 'proj.tasks.add',
        'schedule': 30.0,
        'args': (16, 16)
    },
}

app.conf.timezone = 'UTC'

if __name__ == '__main__':
    app.start()

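Restart beat

With the tasks defined, Celery needs a separate process that triggers them on schedule. Note that this process only dispatches tasks; it does not execute them. It keeps checking the schedule, and whenever a task is due it sends a task message for a Celery worker to execute.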

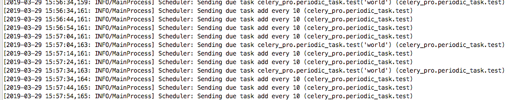

celery -A proj.periodic_task beat -l info

The output looks like this:

celery beat v4.0.2 (latentcall) is starting.

__ - ... __ - _

LocalTime -> 2017-02-08 18:39:31

Configuration ->

. broker -> redis://localhost:6379//

. loader -> celery.loaders.app.AppLoader

. scheduler -> celery.beat.PersistentScheduler

. db -> celerybeat-schedule

. logfile -> [stderr]@%WARNING

. maxinterval -> 5.00 minutes (300s)

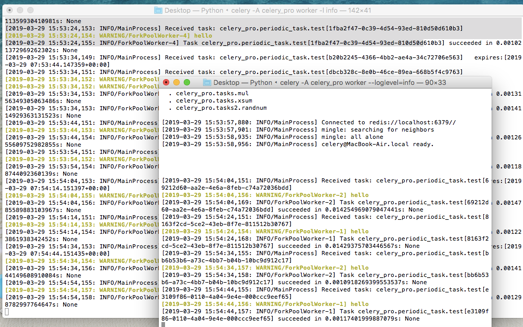

One more step remains: start a worker to execute the tasks that celery beat dispatches.

Start a Celery worker to execute the tasks:

celery -A proj worker --loglevel=info
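The division of labor between beat and the worker can be sketched with the standard library alone. The following is a toy model, not Celery's implementation: a queue.Queue stands in for the broker, TASKS is a hypothetical registry of plain functions, SCHEDULE copies the shape of beat_schedule, and the clock is simulated so that nothing actually sleeps.

```python
import queue

def add(x, y):
    return x + y

# Hypothetical task registry and schedule, shaped like beat_schedule.
TASKS = {"tasks.add": add}
SCHEDULE = {
    "add-every-30-seconds": {"task": "tasks.add", "schedule": 30.0, "args": (16, 16)},
}

def beat_tick(now, last_run, broker):
    """Publish a message for every entry that is due; never run the task here."""
    for name, entry in SCHEDULE.items():
        if now - last_run.get(name, 0.0) >= entry["schedule"]:
            broker.put((entry["task"], entry["args"]))
            last_run[name] = now

def worker_drain(broker):
    """Execute every queued message and return the results."""
    results = []
    while not broker.empty():
        task_name, args = broker.get()
        results.append(TASKS[task_name](*args))
    return results

broker = queue.Queue()  # stand-in for Redis or RabbitMQ
last_run = {}
for now in (30.0, 45.0, 60.0, 95.0):  # simulated clock, in seconds
    beat_tick(now, last_run, broker)

queued = broker.qsize()          # messages waiting because no worker has run yet
results = worker_drain(broker)
print(queued, results)
```

Until worker_drain runs, the messages simply pile up in the queue, which mirrors what happens when beat is running but no worker has been started.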

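More complex schedules

The periodic tasks above are simple: they just run a task every N seconds. But what if you want an email sent at 8 a.m. every Monday, Wednesday, and Friday? That is easy too: use crontab schedules, which work like the crontab facility built into Linux and let you define exactly when a task runs.

Linux crontab reference: http://www.cnblogs.com/peida/archive/2013/01/08/2850483.html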

from celery.schedules import crontab

app.conf.beat_schedule = {
    # Executes every Monday morning at 7:30 a.m.
    'add-every-monday-morning': {
        'task': 'tasks.add',
        'schedule': crontab(hour=7, minute=30, day_of_week=1),
        'args': (16, 16),
    },
}
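For the Monday, Wednesday, and Friday at 8 a.m. case mentioned earlier, the Celery schedule would presumably be crontab(hour=8, minute=0, day_of_week='mon,wed,fri'). What such a rule means can be checked with a small stdlib-only sketch. The function cron_matches is hypothetical and handles only fixed hour, minute, and weekday values, not the full crontab syntax; it assumes cron's weekday numbering, where 0 is Sunday and 1 is Monday.

```python
from datetime import datetime

def cron_matches(moment, hour, minute, days_of_week):
    """Return True when `moment` falls on one of the scheduled minutes.

    days_of_week uses cron numbering: 0 = Sunday, 1 = Monday, ... 6 = Saturday.
    """
    cron_day = moment.isoweekday() % 7  # isoweekday(): Monday=1 ... Sunday=7
    return (moment.hour == hour and moment.minute == minute
            and cron_day in days_of_week)

MON_WED_FRI = {1, 3, 5}
on_wednesday = cron_matches(datetime(2017, 2, 8, 8, 0), 8, 0, MON_WED_FRI)
on_thursday = cron_matches(datetime(2017, 2, 9, 8, 0), 8, 0, MON_WED_FRI)
monday_0730 = cron_matches(datetime(2017, 1, 30, 7, 30), 7, 30, {1})
print(on_wednesday, on_thursday, monday_0730)
```

The last call corresponds to the crontab(hour=7, minute=30, day_of_week=1) entry: 30 January 2017 was a Monday, so the rule matches.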

Using Celery with Django

Django integrates easily with Celery for asynchronous tasks; only a little configuration is needed.

If you have a modern Django project layout like:

- proj/
  - proj/__init__.py
  - proj/settings.py
  - proj/urls.py
- manage.py
- app1/
  - tasks.py
  - models.py
- app2/
  - tasks.py
  - models.py

settings.py

CELERY_BROKER_URL = 'redis://localhost:6379'
CELERY_RESULT_BACKEND = 'redis://localhost:6379'

file: proj/proj/celery.py

from __future__ import absolute_import, unicode_literals
import os
from celery import Celery

# set the default Django settings module for the 'celery' program.
os.environ.setdefault('DJANGO_SETTINGS_MODULE', 'proj.settings')

app = Celery('app01',
             # broker='redis://localhost',
             # backend='redis://localhost',
             )

# Using a string here means the worker doesn't have to serialize
# the configuration object to child processes.
# - namespace='CELERY' means all celery-related configuration keys
#   should have a `CELERY_` prefix.
app.config_from_object('django.conf:settings', namespace='CELERY')

# Load task modules from all registered Django app configs.
app.autodiscover_tasks()

@app.task(bind=True)
def debug_task(self):
    print('Request: {0!r}'.format(self.request))
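The effect of namespace='CELERY' can be pictured with a simplified stdlib-only model. The helper celery_settings below is hypothetical and is not Celery's own loader; it only illustrates the naming rule that CELERY_BROKER_URL in settings.py corresponds to the broker_url option, while settings without the prefix are ignored.

```python
def celery_settings(django_settings, namespace="CELERY"):
    """Pick out NAMESPACE_-prefixed keys and return them as lowercase option names."""
    prefix = namespace + "_"
    return {
        key[len(prefix):].lower(): value
        for key, value in django_settings.items()
        if key.startswith(prefix)
    }

settings = {
    "DEBUG": True,  # unrelated Django setting, ignored
    "CELERY_BROKER_URL": "redis://localhost:6379",
    "CELERY_RESULT_BACKEND": "redis://localhost:6379",
}
options = celery_settings(settings)
print(options)
```

Keeping every Celery option under one prefix makes it easy to tell Celery settings apart from Django's own in a shared settings.py.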

proj/proj/__init__.py:

from __future__ import absolute_import, unicode_literals

# This will make sure the app is always imported when
# Django starts so that shared_task will use this app.
from .celery import app as celery_app

__all__ = ['celery_app']

urls.py

url('^celery_call/', views.celery_call),
url('^celery_ret/', views.celery_ret),

Then write your tasks in tasks.py inside each app:

# Create your tasks here
from __future__ import absolute_import, unicode_literals
from celery import shared_task

@shared_task
def add(x, y):
    return x + y

@shared_task
def mul(x, y):
    return x * y

@shared_task
def xsum(numbers):
    return sum(numbers)

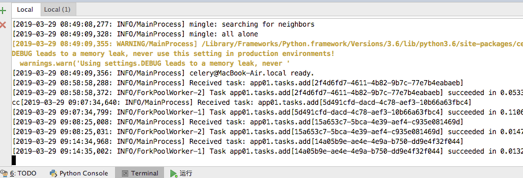

Call the Celery task from your Django views:

from django.core.mail import send_mail
from django.shortcuts import render, HttpResponse
from app01 import tasks
from celery.result import AsyncResult
import random

def celery_call(request):
    # Start the task and return only its id to the client.
    randnum = random.randint(1, 10000)
    t = tasks.add.delay(randnum, 6)
    print("randnum", randnum)
    return HttpResponse(t.id)

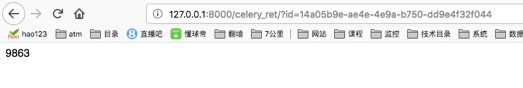

def celery_ret(request):
    # Look the task up by the id passed as ?id=... and return its result if ready.
    task_id = request.GET.get('id')
    res = AsyncResult(id=task_id)
    if res.ready():
        return HttpResponse(res.get())
    else:
        return HttpResponse(res.ready())
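The two views implement a "hand out an id now, fetch the result later" flow. The sketch below mimics that flow with the standard library only, with no Django or Celery involved. The names call, ret, and pending are made up for illustration, and the in-memory dict stands in for the state that Celery keeps in its result backend.

```python
import uuid
from concurrent.futures import ThreadPoolExecutor

pool = ThreadPoolExecutor(max_workers=2)
pending = {}  # task id -> Future; Celery keeps this state in the result backend

def call(x, y):
    """Like celery_call: start the work and hand back only an id."""
    task_id = str(uuid.uuid4())
    pending[task_id] = pool.submit(lambda: x + y)
    return task_id

def ret(task_id):
    """Like celery_ret: return the result if it is ready, otherwise False."""
    future = pending[task_id]
    return future.result() if future.done() else False

task_id = call(20, 6)
pending[task_id].result(timeout=5)  # demo only: wait so the lookup below is deterministic
answer = ret(task_id)
print(task_id, answer)
pool.shutdown()
```

In the real views, the id travels to the client in the response of celery_call and comes back as the id query parameter of celery_ret.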

Testing

Periodic tasks with Celery and Django

Install the plugin:

pip install django-celery-beat

settings.py

INSTALLED_APPS = [
    'django.contrib.admin',
    'django.contrib.auth',
    'django.contrib.contenttypes',
    'django.contrib.sessions',
    'django.contrib.messages',
    'django.contrib.staticfiles',
    'app01',
    'app02',
    'django_celery_beat',
]

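Create the database tables and start beat:

python3 manage.py migrate
celery -A proj beat -l info -S django

Now start celery beat and a worker. You will see that every 2 minutes beat sends a task message that makes the worker run the scp_task task.

Note: in testing, celery beat had to be restarted after every added or modified task; otherwise the beat process did not pick up the new configuration.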
