cephadm RGW Service

1 Working directory

root@cephadm-deploy:~# cephadm shell

Inferring fsid 0888a64c-57e6-11ec-ad21-fbe9db6e2e74

Using recent ceph image quay.io/ceph/ceph@sha256:bb6a71f7f481985f6d3b358e3b9ef64c6755b3db5aa53198e0aac38be5c8ae54

root@cephadm-deploy:/#

2 Deploy RGW

Reference documentation:

https://docs.ceph.com/en/pacific/cephadm/services/rgw/

https://docs.ceph.com/en/pacific/radosgw/multisite/#multisite

https://access.redhat.com/documentation/en-us/red_hat_ceph_storage/3/html/ceph_object_gateway_for_production/index

2.1 Check the current RGW service status

root@cephadm-deploy:/# ceph orch ls

NAME PORTS RUNNING REFRESHED AGE PLACEMENT

alertmanager ?:9093,9094 1/1 8m ago 21h count:1

crash 5/5 8m ago 21h *

grafana ?:3000 1/1 8m ago 21h count:1

mds.wgs_cephfs 3/3 8m ago 13h count:3

mgr 2/2 8m ago 21h count:2

mon 5/5 8m ago 21h count:5

node-exporter ?:9100 5/5 8m ago 21h *

osd 1 64s ago - <unmanaged>

osd.all-available-devices 14 8m ago 19h *

prometheus ?:9095 1/1 8m ago 21h count:1

root@cephadm-deploy:/# ceph orch ls |grep rgw

2.2 Label the RGW hosts

root@cephadm-deploy:/# ceph orch host label add ceph-node01 rgw

Added label rgw to host ceph-node01

root@cephadm-deploy:/# ceph orch host label add ceph-node02 rgw

Added label rgw to host ceph-node02

root@cephadm-deploy:/# ceph orch host label add ceph-node03 rgw

Added label rgw to host ceph-node03

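2.3 Create the RGW service

On each host labeled rgw, create two RGW daemons listening on ports 8000 and 8001, for a total of six RGW instances across the three hosts.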

root@cephadm-deploy:/# ceph orch apply rgw wgs_rgw '--placement=label:rgw count-per-host:2' --port=8000

Scheduled rgw.wgs_rgw update...
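
The port layout described above can be sketched as simple arithmetic. The helper below is a hypothetical illustration (`rgw_ports` is not a Ceph command). It assumes, as the port check in section 2.5 shows for this cluster, that each additional daemon on a host takes the next port after the one passed to `--port`.

```shell
# Hypothetical helper, not part of Ceph: print the ports expected on one host
# when `count` daemons are started from a base port.
rgw_ports() {
  base=$1
  count=$2
  i=0
  while [ "$i" -lt "$count" ]; do
    echo $((base + i))
    i=$((i + 1))
  done
}

hosts=3      # hosts labeled rgw in this walkthrough
per_host=2   # count-per-host:2
rgw_ports 8000 "$per_host"
echo "expected daemons: $((hosts * per_host))"
```

With the values used here, the sketch lists ports 8000 and 8001 and an expected total of six daemons, which matches the `ceph orch ls` output in section 2.4.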

2.4 View the RGW service

root@cephadm-deploy:/# ceph orch ls

NAME PORTS RUNNING REFRESHED AGE PLACEMENT

alertmanager ?:9093,9094 1/1 7m ago 21h count:1

crash 5/5 7m ago 21h *

grafana ?:3000 1/1 7m ago 21h count:1

mds.wgs_cephfs 3/3 7m ago 13h count:3

mgr 2/2 7m ago 21h count:2

mon 5/5 7m ago 21h count:5

node-exporter ?:9100 5/5 7m ago 21h *

osd 1 4s ago - <unmanaged>

osd.all-available-devices 14 7m ago 19h *

prometheus ?:9095 1/1 7m ago 21h count:1

rgw.wgs_rgw ?:8000 6/6 10s ago 25s count-per-host:2;label:rgw

2.5 Verify the RGW ports

Node ceph-node01

root@ceph-node01:~# ss -tnlp |grep radosgw

LISTEN 0 128 0.0.0.0:8000 0.0.0.0:* users:(("radosgw",pid=27630,fd=73))

LISTEN 0 128 0.0.0.0:8001 0.0.0.0:* users:(("radosgw",pid=28497,fd=73))

LISTEN 0 128 [::]:8000 [::]:* users:(("radosgw",pid=27630,fd=74))

LISTEN 0 128 [::]:8001 [::]:* users:(("radosgw",pid=28497,fd=74))

Node ceph-node02

root@ceph-node02:~# ss -tnlp |grep radosgw

LISTEN 0 128 0.0.0.0:8000 0.0.0.0:* users:(("radosgw",pid=26033,fd=73))

LISTEN 0 128 0.0.0.0:8001 0.0.0.0:* users:(("radosgw",pid=27250,fd=73))

LISTEN 0 128 [::]:8000 [::]:* users:(("radosgw",pid=26033,fd=74))

LISTEN 0 128 [::]:8001 [::]:* users:(("radosgw",pid=27250,fd=74))

Node ceph-node03

root@ceph-node03:~# ss -tnlp |grep radosgw

LISTEN 0 128 0.0.0.0:8000 0.0.0.0:* users:(("radosgw",pid=26696,fd=73))

LISTEN 0 128 0.0.0.0:8001 0.0.0.0:* users:(("radosgw",pid=27591,fd=72))

LISTEN 0 128 [::]:8000 [::]:* users:(("radosgw",pid=26696,fd=74))

LISTEN 0 128 [::]:8001 [::]:* users:(("radosgw",pid=27591,fd=73))

2.6 View the resulting RGW processes

Node ceph-node01

root@ceph-node01:~# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

9c1fb036fabf quay.io/ceph/ceph "/usr/bin/radosgw -n…" 3 minutes ago Up 3 minutes ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-rgw-wgs_rgw-ceph-node01-vjsfbz

6e9f4ed438ea quay.io/ceph/ceph "/usr/bin/radosgw -n…" 4 minutes ago Up 4 minutes ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-rgw-wgs_rgw-ceph-node01-hbpdrz

e9a882b32dbd quay.io/ceph/ceph "/usr/bin/ceph-osd -…" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-osd-8

ef581f0ea76c quay.io/ceph/ceph "/usr/bin/ceph-osd -…" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-osd-0

54b706002316 quay.io/ceph/ceph "/usr/bin/ceph-osd -…" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-osd-4

dab354338956 quay.io/ceph/ceph "/usr/bin/ceph-mds -…" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-mds-wgs_cephfs-ceph-node01-ellktv

f4eb5396a980 quay.io/ceph/ceph "/usr/bin/ceph-crash…" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-crash-ceph-node01

0a7ff497f2ac quay.io/ceph/ceph "/usr/bin/ceph-mgr -…" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-mgr-ceph-node01-anwvfy

043e5e69b1fc quay.io/prometheus/node-exporter:v0.18.1 "/bin/node_exporter …" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-node-exporter-ceph-node01

44c21d274db9 quay.io/ceph/ceph "/usr/bin/ceph-mon -…" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-mon-ceph-node01

Node ceph-node02

root@ceph-node02:~# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

ce3aabe63039 quay.io/ceph/ceph "/usr/bin/radosgw -n…" 4 minutes ago Up 4 minutes ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-rgw-wgs_rgw-ceph-node02-qihdse

c63e0a4236ed quay.io/ceph/ceph "/usr/bin/radosgw -n…" 4 minutes ago Up 4 minutes ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-rgw-wgs_rgw-ceph-node02-lwedrm

d68453d87f8e quay.io/ceph/ceph "/usr/bin/ceph-osd -…" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-osd-1

c25392c35740 quay.io/ceph/ceph "/usr/bin/ceph-osd -…" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-osd-10

fe35e32cfc16 quay.io/ceph/ceph "/usr/bin/ceph-osd -…" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-osd-6

e0e7c52ff221 quay.io/ceph/ceph "/usr/bin/ceph-crash…" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-crash-ceph-node02

b797e26afd47 quay.io/prometheus/node-exporter:v0.18.1 "/bin/node_exporter …" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-node-exporter-ceph-node02

7b8a7e66cb2c quay.io/ceph/ceph "/usr/bin/ceph-mds -…" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-mds-wgs_cephfs-ceph-node02-zpdphv

046689b3eb42 quay.io/ceph/ceph "/usr/bin/ceph-mon -…" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-mon-ceph-node02

Node ceph-node03

root@ceph-node03:~# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

5dccd6175f77 quay.io/ceph/ceph "/usr/bin/radosgw -n…" 3 minutes ago Up 3 minutes ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-rgw-wgs_rgw-ceph-node03-ispkoa

4a9b6094a278 quay.io/ceph/ceph "/usr/bin/radosgw -n…" 3 minutes ago Up 3 minutes ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-rgw-wgs_rgw-ceph-node03-xeqeho

828719b2a597 quay.io/ceph/ceph "/usr/bin/ceph-osd -…" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-osd-11

98e9de663b94 quay.io/ceph/ceph "/usr/bin/ceph-osd -…" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-osd-7

e043180af4f3 quay.io/ceph/ceph "/usr/bin/ceph-osd -…" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-osd-2

194c9fbbb9e4 quay.io/ceph/ceph "/usr/bin/ceph-crash…" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-crash-ceph-node03

dc732195ee1c quay.io/prometheus/node-exporter:v0.18.1 "/bin/node_exporter …" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-node-exporter-ceph-node03

2b3ea53cdaf1 quay.io/ceph/ceph "/usr/bin/ceph-mon -…" About an hour ago Up About an hour ceph-0888a64c-57e6-11ec-ad21-fbe9db6e2e74-mon-ceph-node03
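
To pick out only the RGW containers from listings like the ones above, the output can be filtered on the container name. This is a minimal sketch, assuming the names keep the `-rgw-<service>-<host>-<suffix>` pattern seen here. `rgw_containers` is an illustrative name.

```shell
# Read `docker ps` output on stdin and print the name (last column) of every
# container whose name contains "-rgw-".
rgw_containers() {
  awk '$NF ~ /-rgw-/ { print $NF }'
}

# Assumed usage on a node:
#   docker ps | rgw_containers
```

On each of the three nodes this should print two names, one per RGW daemon.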

2.7 View the RGW pools

root@cephadm-deploy:/# ceph osd pool ls

device_health_metrics

cephfs.wgs_cephfs.meta

cephfs.wgs_cephfs.data

.rgw.root

default.rgw.log

default.rgw.control

default.rgw.meta

2.8 View the RGW zone information

root@cephadm-deploy:/# radosgw-admin zone get --rgw-zone=default

{

"id": "31beee56-fd78-467a-b0ee-ce21cfb0f4fb",

"name": "default",

"domain_root": "default.rgw.meta:root",

"control_pool": "default.rgw.control",

"gc_pool": "default.rgw.log:gc",

"lc_pool": "default.rgw.log:lc",

"log_pool": "default.rgw.log",

"intent_log_pool": "default.rgw.log:intent",

"usage_log_pool": "default.rgw.log:usage",

"roles_pool": "default.rgw.meta:roles",

"reshard_pool": "default.rgw.log:reshard",

"user_keys_pool": "default.rgw.meta:users.keys",

"user_email_pool": "default.rgw.meta:users.email",

"user_swift_pool": "default.rgw.meta:users.swift",

"user_uid_pool": "default.rgw.meta:users.uid",

"otp_pool": "default.rgw.otp",

"system_key": {

"access_key": "",

"secret_key": ""

},

"placement_pools": [

{

"key": "default-placement",

"val": {

"index_pool": "default.rgw.buckets.index",

"storage_classes": {

"STANDARD": {

"data_pool": "default.rgw.buckets.data"

}

},

"data_extra_pool": "default.rgw.buckets.non-ec",

"index_type": 0

}

}

],

"realm_id": "",

"notif_pool": "default.rgw.log:notif"

}

2.9 Access the RGW service

root@cephadm-deploy:~# curl http://192.168.174.103:8000/

<?xml version="1.0" encoding="UTF-8"?><ListAllMyBucketsResult xmlns="http://s3.amazonaws.com/doc/2006-03-01/"><Owner><ID>anonymous</ID><DisplayName></DisplayName></Owner><Buckets></Buckets></ListAllMyBucketsResult>

root@cephadm-deploy:~# curl http://192.168.174.103:8001

<?xml version="1.0" encoding="UTF-8"?><ListAllMyBucketsResult xmlns="http://s3.amazonaws.com/doc/2006-03-01/"><Owner><ID>anonymous</ID><DisplayName></DisplayName></Owner><Buckets></Buckets></ListAllMyBucketsResult>
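
Both ports can be probed in one loop instead of one `curl` call each. The sketch below assumes that the anonymous `ListAllMyBucketsResult` reply shown above is what a healthy gateway returns. The function names are illustrative, and the address is the one used in this walkthrough.

```shell
# Succeeds if stdin contains the XML root element that RGW returned above.
looks_like_rgw() {
  grep -q '<ListAllMyBucketsResult'
}

# Probe each port on a host and print one status line per port.
# Only defined here, not called, because it needs network access to the gateway.
check_rgw_ports() {
  host=$1
  shift
  for port in "$@"; do
    if curl -s --max-time 5 "http://$host:$port/" | looks_like_rgw; then
      echo "$host:$port ok"
    else
      echo "$host:$port FAILED"
    fi
  done
}

# Assumed usage:
#   check_rgw_ports 192.168.174.103 8000 8001
```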

2.10 Check the Ceph cluster status

root@cephadm-deploy:/# ceph -s

cluster:

id: 0888a64c-57e6-11ec-ad21-fbe9db6e2e74

health: HEALTH_OK

services:

mon: 5 daemons, quorum cephadm-deploy,ceph-node01,ceph-node02,ceph-node03,ceph-node04 (age 89m)

mgr: ceph-node01.anwvfy(active, since 93m), standbys: cephadm-deploy.jgiulj

mds: 1/1 daemons up, 2 standby

osd: 15 osds: 15 up (since 89m), 15 in (since 14h)

rgw: 6 daemons active (3 hosts, 1 zones)

data:

volumes: 1/1 healthy

pools: 7 pools, 169 pgs

objects: 250 objects, 29 KiB

usage: 365 MiB used, 300 GiB / 300 GiB avail

pgs: 169 active+clean

3 Create an RGW user

root@cephadm-deploy:/# radosgw-admin user create --uid="wgs" --display-name="wgs"

{

"user_id": "wgs",

"display_name": "wgs",

"email": "",

"suspended": 0,

"max_buckets": 1000,

"subusers": [],

"keys": [

{

"user": "wgs",

"access_key": "DFGP6UPZ4KWPUTBEXVNY", # needed by s3cmd

"secret_key": "MvIKieIA9L09VLG5A5Osc7XCs5zYmHXBQdPmk8zi" # needed by s3cmd

}

],

"swift_keys": [],

"caps": [],

"op_mask": "read, write, delete",

"default_placement": "",

"default_storage_class": "",

"placement_tags": [],

"bucket_quota": {

"enabled": false,

"check_on_raw": false,

"max_size": -1,

"max_size_kb": 0,

"max_objects": -1

},

"user_quota": {

"enabled": false,

"check_on_raw": false,

"max_size": -1,

"max_size_kb": 0,

"max_objects": -1

},

"temp_url_keys": [],

"type": "rgw",

"mfa_ids": []

}

4 Configure the s3cmd client

4.1 Install the s3cmd client

root@ceph-client01:~# apt -y install s3cmd

4.2 Set up name resolution for RGW

root@ceph-client01:~# cat /etc/hosts

127.0.0.1 localhost

127.0.1.1 wgs

192.168.174.103 rgw.wgs.com # internal name resolution
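
The entry above can also be added with a small idempotent helper instead of editing the file by hand. This is a sketch only: `add_host_entry` is a hypothetical name, and the match is a simple whole-word search for the host name, which is enough for a hosts file like the one shown here.

```shell
# Append "IP NAME" to a hosts file only if NAME is not already present.
# The third argument defaults to /etc/hosts; pass another path to try it safely.
add_host_entry() {
  ip=$1
  name=$2
  file=${3:-/etc/hosts}
  if ! grep -qwF -- "$name" "$file"; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# Assumed usage (as root):
#   add_host_entry 192.168.174.103 rgw.wgs.com
```

Running it twice leaves a single entry, so it is safe to include in a provisioning script.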

4.3 Initialize s3cmd

root@ceph-client01:~# s3cmd --configure

Enter new values or accept defaults in brackets with Enter.

Refer to user manual for detailed description of all options.

Access key and Secret key are your identifiers for Amazon S3. Leave them empty for using the env variables.

Access Key: DFGP6UPZ4KWPUTBEXVNY

Secret Key: MvIKieIA9L09VLG5A5Osc7XCs5zYmHXBQdPmk8zi

Default Region [US]:

Use "s3.amazonaws.com" for S3 Endpoint and not modify it to the target Amazon S3.

S3 Endpoint [s3.amazonaws.com]: rgw.wgs.com:8000

Use "%(bucket)s.s3.amazonaws.com" to the target Amazon S3. "%(bucket)s" and "%(location)s" vars can be used

if the target S3 system supports dns based buckets.

DNS-style bucket+hostname:port template for accessing a bucket [%(bucket)s.s3.amazonaws.com]:

Encryption password is used to protect your files from reading

by unauthorized persons while in transfer to S3

Encryption password:

Path to GPG program [/usr/bin/gpg]:

When using secure HTTPS protocol all communication with Amazon S3

servers is protected from 3rd party eavesdropping. This method is

slower than plain HTTP, and can only be proxied with Python 2.7 or newer

Use HTTPS protocol [Yes]: No

On some networks all internet access must go through a HTTP proxy.

Try setting it here if you can't connect to S3 directly

HTTP Proxy server name:

New settings:

Access Key: DFGP6UPZ4KWPUTBEXVNY

Secret Key: MvIKieIA9L09VLG5A5Osc7XCs5zYmHXBQdPmk8zi

Default Region: US

S3 Endpoint: rgw.wgs.com:8000

DNS-style bucket+hostname:port template for accessing a bucket: %(bucket)s.s3.amazonaws.com

Encryption password:

Path to GPG program: /usr/bin/gpg

Use HTTPS protocol: False

HTTP Proxy server name:

HTTP Proxy server port: 0

Test access with supplied credentials? [Y/n] Y

Please wait, attempting to list all buckets...

Success. Your access key and secret key worked fine :-)

Now verifying that encryption works...

Not configured. Never mind.

Save settings? [y/N] y

Configuration saved to '/root/.s3cfg'

4.4 Verify the s3cmd configuration

root@ceph-client01:~# cat /root/.s3cfg

[default]

access_key = BKRXWW1XBXZI04MDEM09 # access_key generated when the user was created

access_token =

add_encoding_exts =

add_headers =

bucket_location = US

ca_certs_file =

cache_file =

check_ssl_certificate = True

check_ssl_hostname = True

cloudfront_host = cloudfront.amazonaws.com

content_disposition =

content_type =

default_mime_type = binary/octet-stream

delay_updates = False

delete_after = False

delete_after_fetch = False

delete_removed = False

dry_run = False

enable_multipart = True

encoding = UTF-8

encrypt = False

expiry_date =

expiry_days =

expiry_prefix =

follow_symlinks = False

force = False

get_continue = False

gpg_command = /usr/bin/gpg

gpg_decrypt = %(gpg_command)s -d --verbose --no-use-agent --batch --yes --passphrase-fd %(passphrase_fd)s -o %(output_file)s %(input_file)s

gpg_encrypt = %(gpg_command)s -c --verbose --no-use-agent --batch --yes --passphrase-fd %(passphrase_fd)s -o %(output_file)s %(input_file)s

gpg_passphrase =

guess_mime_type = True

host_base = rgw.wgs.com:8000 # changed to the RGW service address

host_bucket = rgw.wgs.com:8000/%(bucket) # changed to the RGW service address

human_readable_sizes = False

invalidate_default_index_on_cf = False

invalidate_default_index_root_on_cf = True

invalidate_on_cf = False

kms_key =

limit = -1

limitrate = 0

list_md5 = False

log_target_prefix =

long_listing = False

max_delete = -1

mime_type =

multipart_chunk_size_mb = 15

multipart_max_chunks = 10000

preserve_attrs = True

progress_meter = True

proxy_host =

proxy_port = 0

put_continue = False

recursive = False

recv_chunk = 65536

reduced_redundancy = False

requester_pays = False

restore_days = 1

restore_priority = Standard

secret_key = pbkRtYmG5fABMe4wFuV7VPENIKcXbj0bUiYtxnCB # secret_key generated when the user was created

send_chunk = 65536

server_side_encryption = False

signature_v2 = False

signurl_use_https = False

simpledb_host = sdb.amazonaws.com

skip_existing = False

socket_timeout = 300

stats = False

stop_on_error = False

storage_class =

throttle_max = 100

upload_id =

urlencoding_mode = normal

use_http_expect = False

use_https = False

use_mime_magic = True

verbosity = WARNING

website_endpoint = http://%(bucket)s.s3-website-%(location)s.amazonaws.com/

website_error =

website_index = index.html

5 Object storage test

5.1 Create a bucket

root@ceph-client01:~# s3cmd mb s3://wgsbucket

Bucket 's3://wgsbucket/' created
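
Creating a bucket mainly proves that the credentials work. An upload and download round trip exercises the data path as well. The following is a sketch, assuming `s3cmd` is configured as in section 4. The function name, the object name `probe.txt`, and the temporary paths are illustrative.

```shell
# Upload a small file to the given bucket, download it again, and compare.
# Returns 0 when the downloaded copy is identical to the original.
s3_roundtrip() {
  bucket=$1
  workdir=$(mktemp -d)
  echo "hello rgw" > "$workdir/probe.txt"
  s3cmd put "$workdir/probe.txt" "s3://$bucket/probe.txt" &&
    s3cmd get "s3://$bucket/probe.txt" "$workdir/probe.back" &&
    cmp -s "$workdir/probe.txt" "$workdir/probe.back"
  status=$?
  rm -rf "$workdir"
  return "$status"
}

# Assumed usage:
#   s3_roundtrip wgsbucket && echo "round trip ok"
```

The probe object stays in the bucket afterwards. It can be removed with `s3cmd del s3://wgsbucket/probe.txt`.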

5.2 Verify the bucket from the client

root@ceph-client01:~# s3cmd ls s3://

2021-12-09 05:40 s3://wgsbucket

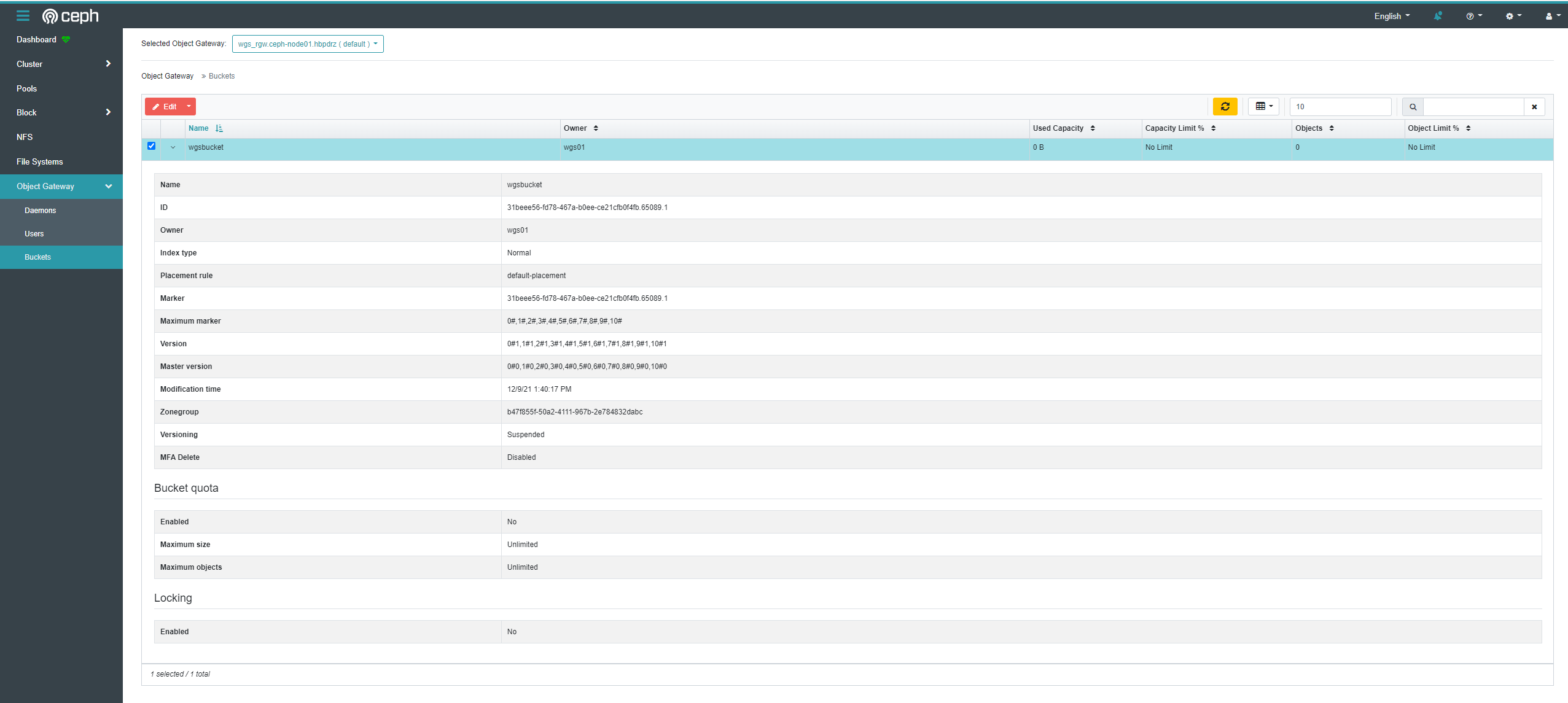

5.3 ceph web验证

5.4 Verify from the command line

root@cephadm-deploy:/# radosgw-admin bucket list

[

"wgsbucket"

]