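1. Deploying a Kubernetes cluster from binaries (Part 1)

1. Lab architecture

1.1. Hardware environment

Prepare five VMs with 2 vCPU / 2 GB RAM / 50 GB disk each, on the 10.4.7.0/24 network. //Java services will later be delivered directly to k8s, so the compute nodes need 4 vCPU / 8 GB RAM. If you are not delivering services, 2C/2G is enough for every machine.

1.2. Software environment

Operating system: CentOS 7.6, preinstalled. //Docker depends on kernel features, so every machine runs CentOS 7.x (kernel 3.8 or later is required).

Apply the basic system tuning:

Disable SELinux and the firewalld service

Time synchronization (chronyd)

Configure the Base and EPEL repositories

Kernel tuning (file-descriptor limits, IP forwarding, etc.)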

1.3. IP and architecture plan

| Hostname | Role | IP | Deployed services and components | Hardware |
|---|---|---|---|---|
| hdss7-11.host.com | k8s proxy (primary) | 10.4.7.11 | bind9, nginx (L4), keepalived, supervisor | 2C 2G 50G |
| hdss7-12.host.com | k8s proxy (backup) | 10.4.7.12 | etcd, nginx (L4), keepalived, supervisor | 2C 2G 50G |
| hdss7-21.host.com | k8s compute node 1 | 10.4.7.21 | etcd, kube-apiserver, kube-controller-manager, kube-scheduler, kubelet, kube-proxy, supervisor | 4C 8G 50G |
| hdss7-22.host.com | k8s compute node 2 | 10.4.7.22 | etcd, kube-apiserver, kube-controller-manager, kube-scheduler, kubelet, kube-proxy, supervisor | 4C 8G 50G |
| hdss7-200.host.com | k8s ops node, Docker registry | 10.4.7.200 | private Docker registry, resource manifest repository, shared storage (NFS), certificate signing | 2C 2G 50G |

2. Base environment preparation and network setup

2.1. Network setup

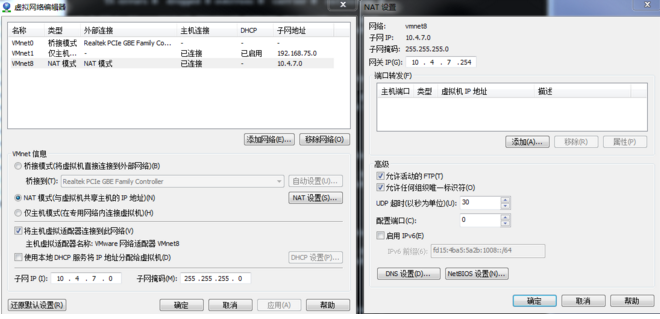

2.1.1. VMware Virtual Network Editor settings

2.1.2. Windows network adapter settings

2.2. VM operating system settings

2.2.1. Set the hostname

~]# hostnamectl set-hostname hdss7-xx.host.com

2.2.2. Disable the firewall and SELinux

~]# systemctl stop firewalld

~]# systemctl disable firewalld

~]# setenforce 0

~]# sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config
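`setenforce 0` only switches the running system to permissive mode; the edit to /etc/selinux/config is what takes effect after the next reboot. The `sed` line above only rewrites `SELINUX=enforcing`. A slightly more defensive sketch follows, assuming GNU sed (as shipped with CentOS 7): it also handles a host that is already `permissive`, and it takes the file path as an argument so it can be tried on a copy first. The function name `disable_selinux_config` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set the SELINUX= line of an SELinux config file to "disabled".
# Assumes GNU sed (-i edits in place, -E enables extended regular expressions).
disable_selinux_config() {
    local cfg="${1:-/etc/selinux/config}"
    # Only the SELINUX= line is rewritten; SELINUXTYPE= is left untouched.
    sed -i -E 's/^SELINUX=(enforcing|permissive)$/SELINUX=disabled/' "$cfg"
    # Show the resulting line so the change can be checked.
    grep '^SELINUX=' "$cfg"
}

# Try it on a scratch copy rather than the real file:
tmp_cfg=$(mktemp)
printf 'SELINUX=enforcing\nSELINUXTYPE=targeted\n' > "$tmp_cfg"
disable_selinux_config "$tmp_cfg"
```

Once the output looks right, the same function can be pointed at /etc/selinux/config (its default).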

2.2.3. Configure the network interface

~]# cat /etc/sysconfig/network-scripts/ifcfg-eth0

TYPE=Ethernet

BOOTPROTO=none

NAME=eth0

DEVICE=eth0

ONBOOT=yes

IPADDR=10.4.7.xx

NETMASK=255.255.255.0

GATEWAY=10.4.7.254

DNS1=10.4.7.xx
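Only the host-specific values (IPADDR and the DNS1 placeholder) change between the five hosts. As a sketch, assuming the 10.4.7.0/24 network and the 10.4.7.254 gateway from this lab, a hypothetical helper `render_ifcfg` can print the file for a given last octet so it can be reviewed before being copied to /etc/sysconfig/network-scripts/ifcfg-eth0:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print an ifcfg-eth0 file for host 10.4.7.<octet>.
# Assumes the 10.4.7.0/24 network and 10.4.7.254 gateway used in this lab;
# the DNS server address is passed in as the second argument.
render_ifcfg() {
    local octet="$1" dns="$2"
    cat <<EOF
TYPE=Ethernet
BOOTPROTO=none
NAME=eth0
DEVICE=eth0
ONBOOT=yes
IPADDR=10.4.7.${octet}
NETMASK=255.255.255.0
GATEWAY=10.4.7.254
DNS1=${dns}
EOF
}

# Example: the file for hdss7-21, printed to stdout for review.
render_ifcfg 21 10.4.7.11
```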

2.2.4. Configure the yum repositories

~]# yum install epel-release

Or:

~]# wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

~]# wget -O /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo

~]# yum clean all

~]# yum makecache

2.2.5. Install common tools

~]# yum install wget net-tools telnet tree nmap sysstat lrzsz dos2unix bind-utils -y

2.3. Install the BIND service

On the primary proxy node hdss7-11.host.com:

2.3.1. Install BIND 9

~]# yum install bind -y

2.3.2. Configure BIND 9

//Main configuration file

~]# vi /etc/named.conf

listen-on port 53 { 10.4.7.11; };

allow-query { any; };

forwarders { 10.4.7.254; };

recursion yes;

dnssec-enable no;

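dnssec-validation no;

##########Parameter notes#########

* dnssec-enable yes; ----> dnssec-enable no; # switch for DNSSEC support. PS: DNSSEC provides 1. origin authentication of DNS data, 2. data integrity verification, 3. authenticated denial of existence for queries

* dnssec-validation yes; ----> dnssec-validation no; # switch for DNSSEC validation

* forwarders { 10.4.7.254; }; # upstream DNS server, usually the gateway, so that the server can resolve public names

* listen-on-v6 port 53 { ::1; }; # can be deleted if IPv6 is not used

* recursion yes; # allow recursive queries

#########################

~]# named-checkconf

//Zone configuration file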

~]# vi /etc/named.rfc1912.zones

zone "host.com" IN {

type master;

file "host.com.zone";

allow-update { 10.4.7.11; };

};

zone "od.com" IN {

type master;

file "od.com.zone";

allow-update { 10.4.7.11; };

};

//Zone data files

1. Host domain (host.com) zone data file

~]# vi /var/named/host.com.zone

$ORIGIN host.com.

$TTL 600 ; 10 minutes

@ IN SOA dns.host.com. dnsadmin.host.com. (

2019111001 ; serial

10800 ; refresh (3 hours)

900 ; retry (15 minutes)

604800 ; expire (1 week)

86400 ; minimum (1 day)

)

@ IN NS dns.host.com.

$TTL 60 ; 1 minute

dns A 10.4.7.11

HDSS7-11 A 10.4.7.11

HDSS7-12 A 10.4.7.12

HDSS7-21 A 10.4.7.21

HDSS7-22 A 10.4.7.22

HDSS7-200 A 10.4.7.200

2. Business domain (od.com) zone data file

~]# vi /var/named/od.com.zone

$ORIGIN od.com.

$TTL 600 ; 10 minutes

@ IN SOA dns.od.com. dnsadmin.od.com. (

2019111001 ; serial

10800 ; refresh (3 hours)

900 ; retry (15 minutes)

604800 ; expire (1 week)

86400 ; minimum (1 day)

)

@ IN NS dns.od.com.

$TTL 60 ; 1 minute

dns A 10.4.7.11
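The A records in host.com.zone mirror the IP plan table: each host gets `HDSS7-<last octet> A <IP>`. As a sketch (the function name `gen_host_records` is hypothetical), these lines can be printed from a list of IPs, which makes it harder for the zone file and the plan table to drift apart when hosts are added:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print host.com A records in the HDSS7-<last octet> style.
gen_host_records() {
    local ip
    for ip in "$@"; do
        # ${ip##*.} strips everything up to the last dot, leaving the last octet.
        printf 'HDSS7-%s A %s\n' "${ip##*.}" "$ip"
    done
}

gen_host_records 10.4.7.11 10.4.7.12 10.4.7.21 10.4.7.22 10.4.7.200
```

The five lines it prints match the records in host.com.zone above.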

2.3.3. Check the configuration and start BIND 9

~]# named-checkconf

~]# systemctl start named

~]# netstat -nltup|grep 53

tcp 0 0 10.4.7.11:53 0.0.0.0:* LISTEN 18279/named

tcp 0 0 127.0.0.1:953 0.0.0.0:* LISTEN 18279/named

tcp6 0 0 :::53 :::* LISTEN 18279/named

tcp6 0 0 ::1:953 :::* LISTEN 18279/named

udp 0 0 10.4.7.11:53 0.0.0.0:* 18279/named

udp6 0 0 :::53 :::* 18279/named

2.3.4. Verify

[root@hdss7-11 ~]# dig -t A hdss7-21.host.com @10.4.7.11 +short

10.4.7.21

[root@hdss7-11 ~]# dig -t A hdss7-200.host.com @10.4.7.11 +short

10.4.7.200

[root@hdss7-11 ~]# dig -t A hdss7-11.host.com @10.4.7.11 +short

10.4.7.11

[root@hdss7-11 ~]# dig -t A dns.od.com @10.4.7.11 +short

10.4.7.11

2.3.5. Configure the DNS clients

On all Linux hosts:

[root@hdss7-11 ~]# vi /etc/resolv.conf

# Generated by NetworkManager

search host.com

nameserver 10.4.7.11

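~]# systemctl restart network   //set this on all five hosts, then restart the network

Windows host:

Change adapter settings --> Properties --> IPv4 --> change the DNS server

Set the interface metric to 10 (instead of automatic) so that traffic goes out through VMnet8 first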

2.3.6. Verify

~]# ping hdss7-200.host.com

~]# ping dns.od.com

##If Windows cannot ping these names, set the local adapter's DNS server to 10.4.7.11

2.4. Prepare the certificate-signing environment

On the ops host hdss7-200.host.com:

2.4.1. Install CFSSL

Certificate signing tool: CFSSL R1.2

On HDSS7-200:

~]# wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -O /usr/bin/cfssl //cfssl download URL

~]# wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -O /usr/bin/cfssl-json //cfssljson download URL, saved as cfssl-json

~]# wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -O /usr/bin/cfssl-certinfo //cfssl-certinfo download URL

~]# chmod +x /usr/bin/cfssl*

2.4.2. Create the JSON config for the CA certificate signing request (CSR)

~]# mkdir -p /opt/certs

~]# vi /opt/certs/ca-csr.json

{

"CN": "OldboyEdu",

"hosts": [

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

],

"ca": {

"expiry": "175200h"

}

}

##############Field notes###########

CN: Common Name. Browsers use this field to check whether a website is legitimate; it is usually a domain name and is important.

C: Country

ST: State or province

L: Locality (city)

O: Organization Name (company)

OU: Organization Unit Name (department)
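The `"expiry": "175200h"` value in ca-csr.json is the CA certificate's lifetime in hours. A quick shell check, using 365-day years and ignoring leap days, shows what that means in practice; `expiry_to_years` is a hypothetical helper name:

```shell
#!/usr/bin/env bash
# Convert a cfssl expiry string such as "175200h" into whole days and years.
# Assumes an integer number of hours ending in "h"; uses 365-day years.
expiry_to_years() {
    local hours="${1%h}"            # drop the trailing "h"
    local days=$(( hours / 24 ))
    local years=$(( days / 365 ))
    printf '%s = %d days = %d years\n' "$1" "$days" "$years"
}

expiry_to_years 175200h
```

For the value used here it prints `175200h = 7300 days = 20 years`, so the CA (and, later, the certificates signed with the same expiry in ca-config.json) should not need renewing during the life of this lab.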

2.4.3. Generate the CA certificate and private key

mkdir -p /opt/certs

cd /opt/certs

certs]# cfssl gencert -initca ca-csr.json | cfssl-json -bare ca

certs]# ll

ca.csr

ca-csr.json

ca-key.pem //private key of the root (CA) certificate

ca.pem //root (CA) certificate


2.5. Deploy the Docker environment

On hdss7-200.host.com, hdss7-21.host.com and hdss7-22.host.com:

2.5.1. Install

[root@hdss7-21 ~]# curl -fsSL https://get.docker.com | bash -s docker --mirror Aliyun

# Executing docker install script, commit: f45d7c11389849ff46a6b4d94e0dd1ffebca32c1

+ sh -c 'yum install -y -q yum-utils'

+ sh -c 'yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo'

Loaded plugins: fastestmirror

adding repo from: https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

grabbing file https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo to /etc/yum.repos.d/docker-ce.repo

repo saved to /etc/yum.repos.d/docker-ce.repo

+ '[' stable '!=' stable ']'

+ sh -c 'yum makecache'

Loaded plugins: fastestmirror

Loading mirror speeds from cached hostfile

* base: mirrors.aliyun.com

* extras: mirrors.aliyun.com

* updates: mirrors.aliyun.com

base | 3.6 kB 00:00:00

docker-ce-stable | 3.5 kB 00:00:00

epel | 5.4 kB 00:00:00

extras | 2.9 kB 00:00:00

updates | 2.9 kB 00:00:00

(1/14): docker-ce-stable/x86_64/updateinfo | 55 B 00:00:00

(2/14): docker-ce-stable/x86_64/filelists_db | 18 kB 00:00:00

(3/14): docker-ce-stable/x86_64/primary_db | 37 kB 00:00:00

(4/14): docker-ce-stable/x86_64/other_db | 111 kB 00:00:00

(5/14): epel/x86_64/prestodelta | 4.0 kB 00:00:00

(6/14): base/7/x86_64/other_db | 2.6 MB 00:00:01

(7/14): epel/x86_64/other_db | 3.3 MB 00:00:02

(8/14): extras/7/x86_64/other_db | 100 kB 00:00:00

(9/14): extras/7/x86_64/filelists_db | 207 kB 00:00:00

(10/14): updates/7/x86_64/other_db | 243 kB 00:00:00

(11/14): epel/x86_64/updateinfo_zck | 1.5 MB 00:00:01

(12/14): updates/7/x86_64/filelists_db | 2.1 MB 00:00:01

(13/14): base/7/x86_64/filelists_db | 7.3 MB 00:00:04

(14/14): epel/x86_64/filelists_db | 12 MB 00:00:05

Metadata Cache Created

+ '[' -n '' ']'

+ sh -c 'yum install -y -q docker-ce'

warning: /var/cache/yum/x86_64/7/docker-ce-stable/packages/docker-ce-19.03.5-3.el7.x86_64.rpm: Header V4 RSA/SHA512 Signature, key ID 621e9f35: NOKEY

Public key for docker-ce-19.03.5-3.el7.x86_64.rpm is not installed

Importing GPG key 0x621E9F35:

Userid : "Docker Release (CE rpm) <docker@docker.com>"

Fingerprint: 060a 61c5 1b55 8a7f 742b 77aa c52f eb6b 621e 9f35

From : https://mirrors.aliyun.com/docker-ce/linux/centos/gpg

setsebool: SELinux is disabled.

If you would like to use Docker as a non-root user, you should now consider

adding your user to the "docker" group with something like:

sudo usermod -aG docker your-user

Remember that you will have to log out and back in for this to take effect!

WARNING: Adding a user to the "docker" group will grant the ability to run

containers which can be used to obtain root privileges on the

docker host.

Refer to https://docs.docker.com/engine/security/security/#docker-daemon-attack-surface

for more information.

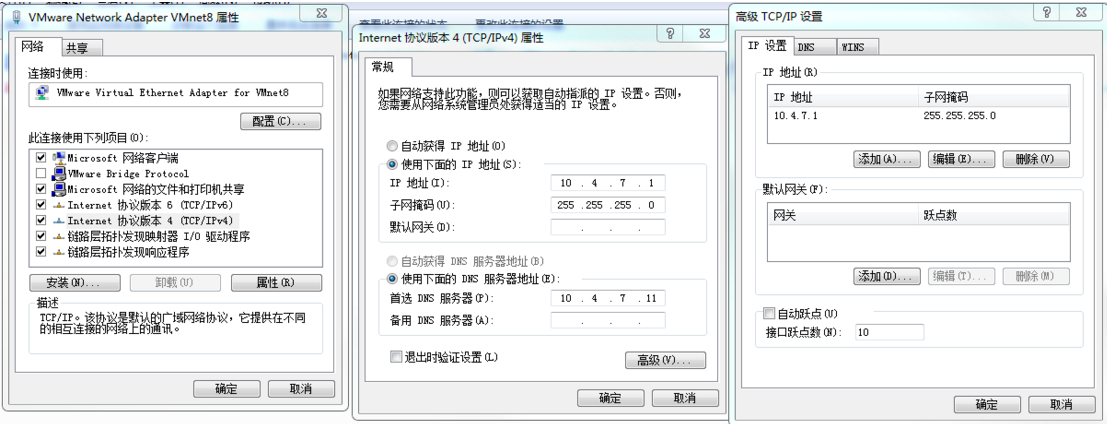

Error (screenshot above):

Fix:

rm -f /etc/yum.repos.d/local.repo   //then run the installation again

2.5.2. Configure

mkdir -p /etc/docker

vi /etc/docker/daemon.json

{

"graph": "/data/docker",

"storage-driver": "overlay2",

"insecure-registries": ["registry.access.redhat.com","quay.io","harbor.od.com"],

"registry-mirrors": ["https://q2gr04ke.mirror.aliyuncs.com"],

"bip": "172.7.21.1/24",

"exec-opts": ["native.cgroupdriver=systemd"],

"live-restore": true

}

############Notes###############

bip must be set according to each host's IP:

Note: hdss7-21.host.com bip 172.7.21.1/24

hdss7-22.host.com bip 172.7.22.1/24

hdss7-200.host.com bip 172.7.200.1/24

#################################
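Only the `bip` value differs between the three daemon.json files: the third octet of the docker0 subnet mirrors the host's last IP octet, so a container IP shows which host it lives on. A sketch of a hypothetical helper `bip_for_ip` that derives the value from the host IP, assuming the 10.4.7.N to 172.7.N.1/24 convention listed above:

```shell
#!/usr/bin/env bash
# Hypothetical helper: derive the docker0 "bip" value for a host in 10.4.7.0/24,
# following the convention above (10.4.7.N gets 172.7.N.1/24).
bip_for_ip() {
    local octet="${1##*.}"    # last octet of the host IP
    printf '172.7.%s.1/24\n' "$octet"
}

for ip in 10.4.7.21 10.4.7.22 10.4.7.200; do
    printf '%s -> "bip": "%s"\n' "$ip" "$(bip_for_ip "$ip")"
done
```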

2.5.3. Start

docker]# mkdir -p /data/docker

docker]# systemctl start docker

docker]# systemctl enable docker

docker]# docker --version

Docker version 19.03.4, build 9013bf583a

[root@hdss7-200 docker]# docker version

Client: Docker Engine - Community

Version: 19.03.4

API version: 1.40

Go version: go1.12.10

Git commit: 9013bf583a

Built: Fri Oct 18 15:52:22 2019

OS/Arch: linux/amd64

Experimental: false

Server: Docker Engine - Community

Engine:

Version: 19.03.5

API version: 1.40 (minimum version 1.12)

Go version: go1.12.12

Git commit: 633a0ea

Built: Wed Nov 13 07:24:18 2019

OS/Arch: linux/amd64

Experimental: false

containerd:

Version: 1.2.10

GitCommit: b34a5c8af56e510852c35414db4c1f4fa6172339

runc:

Version: 1.0.0-rc8+dev

GitCommit: 3e425f80a8c931f88e6d94a8c831b9d5aa481657

docker-init:

Version: 0.18.0

GitCommit: fec3683

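2.6. Deploy Harbor, the private Docker image registry

On the ops host hdss7-200.host.com:

2.6.1. Download the binary package and extract it

Version 1.7.5 or later is strongly recommended.

Official Harbor GitHub repository: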

https://github.com/goharbor/harbor

src]# tar xf harbor-offline-installer-v1.8.3.tgz -C /opt/

opt]# mv harbor/ harbor-v1.8.3

opt]# ln -s /opt/harbor-v1.8.3/ /opt/harbor

2.6.2. Configure

[root@hdss7-200 opt]# vi /opt/harbor/harbor.yml

hostname: harbor.od.com

http:

  port: 180

harbor_admin_password: Harbor12345

data_volume: /data/harbor

log:

  level: info

  rotate_count: 50

  rotate_size: 200M

  location: /data/harbor/logs

[root@hdss7-200 opt]# mkdir -p /data/harbor/logs

2.6.3. Install docker-compose

[root@hdss7-200 opt]# yum install docker-compose -y

Loaded plugins: fastestmirror

Loading mirror speeds from cached hostfile

* base: mirrors.aliyun.com

* extras: mirrors.aliyun.com

* updates: mirrors.aliyun.com

Package docker-compose-1.18.0-4.el7.noarch already installed and latest version

Nothing to do

2.6.4. Install Harbor

[root@hdss7-200 harbor]# ./install.sh

[Step 0]: checking installation environment ...

Note: docker version: 19.03.4

Note: docker-compose version: 1.18.0

................

Creating harbor-portal ... done

Creating nginx ... done

Creating registry ...

Creating registryctl ...

Creating harbor-db ...

Creating redis ...

Creating harbor-core ...

Creating harbor-portal ...

Creating harbor-jobservice ...

Creating nginx ...

----Harbor has been installed and started successfully.----

Now you should be able to visit the admin portal at http://harbor.od.com.

For more details, please visit https://github.com/goharbor/harbor .

2.6.5. Check that Harbor started

[root@hdss7-200 harbor]# docker-compose ps   //docker-compose started a set of containers (single-host orchestration)

Name Command State Ports

--------------------------------------------------------------------------------------

harbor-core /harbor/start.sh Up

harbor-db /entrypoint.sh postgres Up 5432/tcp

harbor-jobservice /harbor/start.sh Up

harbor-log /bin/sh -c /usr/local/bin/ ... Up 127.0.0.1:1514->10514/tcp

harbor-portal nginx -g daemon off; Up 80/tcp

nginx nginx -g daemon off; Up 0.0.0.0:180->80/tcp

redis docker-entrypoint.sh redis ... Up 6379/tcp

registry /entrypoint.sh /etc/regist ... Up 5000/tcp

[root@hdss7-200 harbor]# docker ps -a   //host port 180 is mapped to port 80 of the nginx container

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

d6880cca7143 goharbor/nginx-photon:v1.8.3 "nginx -g 'daemon of…" 3 minutes ago Up 3 minutes (healthy) 0.0.0.0:180->80/tcp nginx

a5d166ffb68e goharbor/harbor-jobservice:v1.8.3 "/harbor/start.sh" 3 minutes ago Up 3 minutes harbor-jobservice

e0ab65359cc4 goharbor/harbor-portal:v1.8.3 "nginx -g 'daemon of…" 3 minutes ago Up 3 minutes (healthy) 80/tcp harbor-portal

ef032bf896c0 goharbor/harbor-core:v1.8.3 "/harbor/start.sh" 3 minutes ago Up 3 minutes (healthy) harbor-core

6c334c42ac9d goharbor/redis-photon:v1.8.3 "docker-entrypoint.s…" 3 minutes ago Up 3 minutes 6379/tcp redis

257f4c4ef4d7 goharbor/harbor-db:v1.8.3 "/entrypoint.sh post…" 3 minutes ago Up 3 minutes (healthy) 5432/tcp harbor-db

7e741324bb39 goharbor/registry-photon:v2.7.1-patch-2819-v1.8.3 "/entrypoint.sh /etc…" 3 minutes ago Up 3 minutes (healthy) 5000/tcp registry

a468bf625584 goharbor/harbor-registryctl:v1.8.3 "/harbor/start.sh" 3 minutes ago Up 3 minutes (healthy) registryctl

41a1e79fc5d1 goharbor/harbor-log:v1.8.3 "/bin/sh -c /usr/loc…" 3 minutes ago Up 3 minutes (healthy) 127.0.0.1:1514->10514/tcp harbor-log
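With nine containers it is easy to miss one that is not running. A small filter sketch (hypothetical name `not_up_services`), assuming the docker-compose 1.x `ps` layout shown above with a header line and a dashed line: it prints the name of every service whose line has no `Up` field. Because the Command column can contain spaces, the state is searched for across all fields rather than read from a fixed column. The sample input below is made up for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical filter: read `docker-compose ps` output on stdin and print the
# names of services that are not in the "Up" state.
# Assumes the docker-compose 1.x table layout (a header line plus a dashed line).
not_up_services() {
    awk 'NR > 2 && NF > 0 {
        up = 0
        # The Command column may contain spaces, so look for "Up" in any field.
        for (i = 2; i <= NF; i++) if ($i == "Up") up = 1
        if (!up) print $1
    }'
}

# Made-up sample input: one running service and one that has exited.
sample='Name Command State Ports
---------------------------------------------
harbor-core /harbor/start.sh Up
registry /entrypoint.sh /etc/regist ... Exit 1'
printf '%s\n' "$sample" | not_up_services
```

On hdss7-200 it would be run from /opt/harbor as `docker-compose ps | not_up_services`; empty output means every service is up.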

2.6.6. Configure internal DNS resolution for Harbor

[root@hdss7-11 ~]# vi /var/named/od.com.zone

2019111002 ; serial

harbor A 10.4.7.200

//Note: roll the serial forward by one (2019111001 -> 2019111002)

[root@hdss7-11 ~]# systemctl restart named

[root@hdss7-11 ~]# dig -t A harbor.od.com +short

10.4.7.200
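Forgetting to bump the serial is a common slip; secondary servers use it to decide whether the zone needs to be transferred again. A minimal sketch of a hypothetical helper `bump_zone_serial`, assuming the serial sits on a line carrying a `; serial` comment as in the zone files above, increments it by one in place (it does not enforce the YYYYMMDDNN date format):

```shell
#!/usr/bin/env bash
# Hypothetical helper: increment the SOA serial of a BIND zone file by one.
# Assumes the serial is the first number on the line with a "; serial" comment.
bump_zone_serial() {
    local zone="$1" tmp
    tmp=$(mktemp) || return 1
    # Write back with cat (not mv) so the zone file keeps its owner, group and mode.
    awk '
        /; *serial/ && !bumped {
            match($0, /[0-9]+/)                # first run of digits = the serial
            serial = sprintf("%d", substr($0, RSTART, RLENGTH) + 1)
            $0 = substr($0, 1, RSTART - 1) serial substr($0, RSTART + RLENGTH)
            bumped = 1
        }
        { print }
    ' "$zone" > "$tmp" && cat "$tmp" > "$zone"
    rm -f "$tmp"
    grep '; *serial' "$zone"    # show the new serial line
}

# Demo on a scratch copy of the start of od.com.zone:
demo_zone=$(mktemp)
printf '%s\n' '$ORIGIN od.com.' '@ IN SOA dns.od.com. dnsadmin.od.com. (' \
    ' 2019111001 ; serial' ' 10800 ; refresh (3 hours)' ')' > "$demo_zone"
bump_zone_serial "$demo_zone"
```

On hdss7-11 it would be run as `bump_zone_serial /var/named/od.com.zone` after adding the record, followed by the `systemctl restart named` shown above.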

2.6.7. Install and configure nginx

[root@hdss7-200 harbor]# yum install nginx

[root@hdss7-200 harbor]# vi /etc/nginx/conf.d/harbor.od.com.conf

server {

listen 80;

server_name harbor.od.com;

client_max_body_size 1000m;

location / {

proxy_pass http://127.0.0.1:180;

}

}

[root@hdss7-200 harbor]# systemctl start nginx

[root@hdss7-200 harbor]# systemctl enable nginx

Created symlink from /etc/systemd/system/multi-user.target.wants/nginx.service to /usr/lib/systemd/system/nginx.service.

2.6.8. Open http://harbor.od.com in a browser

[root@hdss7-11 ~]# curl harbor.od.com

<!doctype html>

<html>

<head>

<meta charset="utf-8">

<title>Harbor</title>

<base href="/">

<meta name="viewport" content="width=device-width, initial-scale=1">

<link rel="icon" type="image/x-icon" href="favicon.ico?v=2">

<link rel="stylesheet" href="styles.c1fdd265f24063370a49.css"></head>

<body>

<harbor-app>

<div class="spinner spinner-lg app-loading">

Loading...

</div>

</harbor-app>

<script type="text/javascript" src="runtime.26209474bfa8dc87a77c.js"></script><script type="text/javascript" src="scripts.c04548c4e6d1db502313.js"></script><script type="text/javascript" src="main.144e8ccd474a28572e81.js"></script></body>

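1. In the browser, open harbor.od.com and log in with username admin, password Harbor12345

2. Create a new project (public, which is used below)

3. Pull a test image and tag it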

[root@hdss7-200 harbor]# docker pull nginx:1.7.9

1.7.9: Pulling from library/nginx

Image docker.io/library/nginx:1.7.9 uses outdated schema1 manifest format. Please upgrade to a schema2 image for better future compatibility. More information at https://docs.docker.com/registry/spec/deprecated-schema-v1/

a3ed95caeb02: Pull complete

6f5424ebd796: Pull complete

d15444df170a: Pull complete

e83f073daa67: Pull complete

a4d93e421023: Pull complete

084adbca2647: Pull complete

c9cec474c523: Pull complete

Digest: sha256:e3456c851a152494c3e4ff5fcc26f240206abac0c9d794affb40e0714846c451

Status: Downloaded newer image for nginx:1.7.9

docker.io/library/nginx:1.7.9

[root@hdss7-200 harbor]# docker images |grep 1.7.9

nginx 1.7.9 84581e99d807 4 years ago 91.7MB

[root@hdss7-200 harbor]# docker tag 84581e99d807 harbor.od.com/public/nginx:v1.7.9

4. Log in to Harbor and push the image to the registry

[root@hdss7-200 harbor]# docker login harbor.od.com

Username: admin

Password:

WARNING! Your password will be stored unencrypted in /root/.docker/config.json.

Configure a credential helper to remove this warning. See

https://docs.docker.com/engine/reference/commandline/login/#credentials-store

Login Succeeded

[root@hdss7-200 harbor]# docker push harbor.od.com/public/nginx:v1.7.9

The push refers to repository [harbor.od.com/public/nginx]

5f70bf18a086: Pushed

4b26ab29a475: Pushed

ccb1d68e3fb7: Pushed

e387107e2065: Pushed

63bf84221cce: Pushed

e02dce553481: Pushed

dea2e4984e29: Pushed

v1.7.9: digest: sha256:b1f5935eb2e9e2ae89c0b3e2e148c19068d91ca502e857052f14db230443e4c2 size: 3012

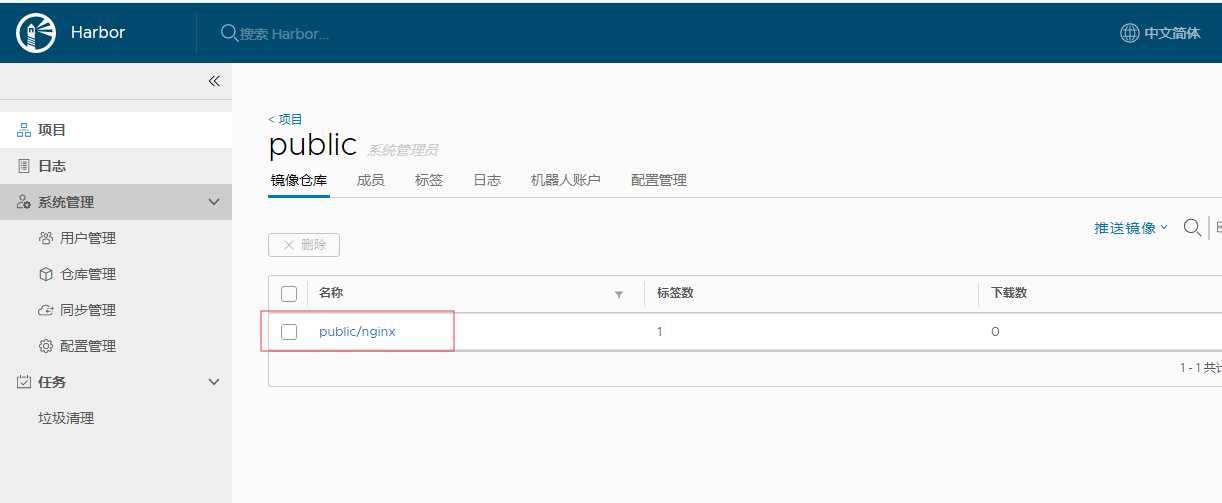

2.6.9. Verify

The nginx image now appears under the public project.
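The tag in step 3 follows the pattern `<registry>/<project>/<repository>:<tag>`, with the upstream tag prefixed by `v`. A small sketch (hypothetical helper `harbor_ref`, assuming the source reference carries an explicit tag and no registry host) that builds the target name and prints the tag/push commands as a dry run instead of executing them:

```shell
#!/usr/bin/env bash
# Hypothetical helper: build a Harbor target reference for an upstream image,
# following the <registry>/<project>/<repository>:v<tag> pattern used above.
# Assumes the source reference has an explicit tag and no registry host/port.
harbor_ref() {
    local src="$1" registry="${2:-harbor.od.com}" project="${3:-public}"
    local repo="${src%%:*}"   # text before the first ":" (e.g. nginx)
    local tag="${src#*:}"     # text after it (e.g. 1.7.9)
    repo="${repo##*/}"        # drop a namespace prefix such as "library/"
    printf '%s/%s/%s:v%s\n' "$registry" "$project" "$repo" "$tag"
}

# Dry run: print the tag/push commands instead of executing them.
src=nginx:1.7.9
dst=$(harbor_ref "$src")
echo "docker tag $src $dst && docker push $dst"
```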

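3. Deploy the master node services

3.1. Deploy the etcd cluster

3.1.1. Cluster plan

| Hostname | Role | IP address |
|---|---|---|
| hdss7-12.host.com | etcd leader | 10.4.7.12 |
| hdss7-21.host.com | etcd follower | 10.4.7.21 |
| hdss7-22.host.com | etcd follower | 10.4.7.22 |

Note: this document uses hdss7-12.host.com as the example; the other two hosts are deployed the same way. The leader/follower roles are only the plan: etcd elects its leader at runtime.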

3.1.2. Create the signing config file for the root CA

Create it on hdss7-200:

[root@hdss7-200 ~]# vi /opt/certs/ca-config.json

{

"signing": {

"default": {

"expiry": "175200h"

},

"profiles": {

"server": {

"expiry": "175200h",

"usages": [

"signing",

"key encipherment",

"server auth"

]

},

"client": {

"expiry": "175200h",

"usages": [

"signing",

"key encipherment",

"client auth"

]

},

"peer": {

"expiry": "175200h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

3.1.3. Create the JSON config for the etcd certificate signing request (CSR)

[root@hdss7-200 ~]# vi /opt/certs/etcd-peer-csr.json

{

"CN": "k8s-etcd",

"hosts": [

"10.4.7.11",

"10.4.7.12",

"10.4.7.21",

"10.4.7.22"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

]

}

3.1.4. Generate the etcd certificate and private key

[root@hdss7-200 ~]# cd /opt/certs/

[root@hdss7-200 certs]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=peer etcd-peer-csr.json |cfssl-json -bare etcd-peer

2019/11/16 14:03:17 [INFO] generate received request

2019/11/16 14:03:17 [INFO] received CSR

2019/11/16 14:03:17 [INFO] generating key: rsa-2048

2019/11/16 14:03:17 [INFO] encoded CSR

2019/11/16 14:03:17 [INFO] signed certificate with serial number 319260464385476820097369736582871670367101389147

2019/11/16 14:03:17 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

3.1.5. Check the generated certificate and private key

[root@hdss7-200 certs]# ll

total 36

-rw-r--r-- 1 root root 836 Nov 16 13:52 ca-config.json

-rw-r--r-- 1 root root 993 Nov 11 00:30 ca.csr

-rw-r--r-- 1 root root 328 Nov 11 00:29 ca-csr.json

-rw------- 1 root root 1679 Nov 11 00:30 ca-key.pem

-rw-r--r-- 1 root root 1346 Nov 11 00:30 ca.pem

-rw-r--r-- 1 root root 1062 Nov 16 14:03 etcd-peer.csr

-rw-r--r-- 1 root root 363 Nov 16 13:59 etcd-peer-csr.json

-rw------- 1 root root 1679 Nov 16 14:03 etcd-peer-key.pem

-rw-r--r-- 1 root root 1428 Nov 16 14:03 etcd-peer.pem

3.1.6. Create the etcd user

On hdss7-12:

[root@hdss7-12 opt]# useradd -s /sbin/nologin -M etcd

[root@hdss7-12 opt]# id etcd

uid=1000(etcd) gid=1000(etcd) groups=1000(etcd)

3.1.7. Download, extract and create a symlink

https://github.com/etcd-io/etcd/tags //version 3.1.20 is used here; versions above 3.3 are not recommended for now

[root@hdss7-12 src]# tar xf etcd-v3.1.20-linux-amd64.tar.gz -C /opt/

[root@hdss7-12 src]# cd ..

[root@hdss7-12 opt]# mv etcd-v3.1.20-linux-amd64/ etcd-v3.1.20

[root@hdss7-12 opt]# ln -s /opt/etcd-v3.1.20/ /opt/etcd

[root@hdss7-12 opt]# ll

total 0

lrwxrwxrwx 1 root root 18 Nov 16 14:22 etcd -> /opt/etcd-v3.1.20/

drwxr-xr-x 3 478493 89939 123 Oct 11 2018 etcd-v3.1.20

drwxr-xr-x 2 root root 45 Nov 16 14:20 src

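3.1.8. Create directories and copy the certificate and private key

Create the certificate, data and log directories:

[root@hdss7-12 opt]# mkdir -p /opt/etcd/certs /data/etcd /data/logs/etcd-server

Copy the CA certificate and the etcd certificate/key generated in 3.1.4 into /opt/etcd/certs:

[root@hdss7-12 opt]# cd /opt/etcd/certs

[root@hdss7-12 certs]# scp hdss7-200:/opt/certs/ca.pem .

[root@hdss7-12 certs]# scp hdss7-200:/opt/certs/etcd-peer.pem .

[root@hdss7-12 certs]# scp hdss7-200:/opt/certs/etcd-peer-key.pem .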

[root@hdss7-12 certs]# ll

total 12

-rw-r--r-- 1 root root 1346 Nov 16 14:31 ca.pem

-rw------- 1 root root 1679 Nov 16 14:34 etcd-peer-key.pem

-rw-r--r-- 1 root root 1428 Nov 16 14:33 etcd-peer.pem

3.1.9. Create the etcd startup script

The startup configuration differs slightly on each etcd host; adjust it when configuring the other nodes.

[root@hdss7-12 ~]# vi /opt/etcd/etcd-server-startup.sh

#!/bin/sh

./etcd --name etcd-server-7-12 \

--data-dir /data/etcd/etcd-server \

--listen-peer-urls https://10.4.7.12:2380 \

--listen-client-urls https://10.4.7.12:2379,http://127.0.0.1:2379 \

--quota-backend-bytes 8000000000 \

--initial-advertise-peer-urls https://10.4.7.12:2380 \

--advertise-client-urls https://10.4.7.12:2379,http://127.0.0.1:2379 \

--initial-cluster etcd-server-7-12=https://10.4.7.12:2380,etcd-server-7-21=https://10.4.7.21:2380,etcd-server-7-22=https://10.4.7.22:2380 \

--ca-file ./certs/ca.pem \

--cert-file ./certs/etcd-peer.pem \

--key-file ./certs/etcd-peer-key.pem \

--client-cert-auth \

--trusted-ca-file ./certs/ca.pem \

--peer-ca-file ./certs/ca.pem \

--peer-cert-file ./certs/etcd-peer.pem \

--peer-key-file ./certs/etcd-peer-key.pem \

--peer-client-cert-auth \

--peer-trusted-ca-file ./certs/ca.pem \

--log-output stdout

#############Notes#########

--listen-peer-urls https://10.4.7.12:2380   //port 2380 is used for peer (cluster-internal) communication

--listen-client-urls https://10.4.7.12:2379,http://127.0.0.1:2379   //port 2379 is used for client communication

--quota-backend-bytes 8000000000   //backend storage quota, in bytes
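Every member must be started with the same `--initial-cluster` value, and each entry's name must match that member's `--name`. A sketch with the hypothetical helper `initial_cluster` that builds the value from the member IPs, using the `etcd-server-7-<last octet>` naming and peer port 2380 from the script above:

```shell
#!/usr/bin/env bash
# Hypothetical helper: build the --initial-cluster value from member IPs.
# Each entry is etcd-server-7-<last octet>=https://<ip>:2380, joined with commas.
initial_cluster() {
    local out="" ip
    for ip in "$@"; do
        # ${out:+$out,} expands to "$out," only when out is already non-empty.
        out="${out:+$out,}etcd-server-7-${ip##*.}=https://${ip}:2380"
    done
    printf '%s\n' "$out"
}

initial_cluster 10.4.7.12 10.4.7.21 10.4.7.22
```

For the three planned members it prints exactly the `--initial-cluster` string used in the startup script.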

[root@hdss7-12 ~]# chmod +x /opt/etcd/etcd-server-startup.sh

[root@hdss7-12 ~]# ll /opt/etcd/etcd-server-startup.sh

-rwxr-xr-x 1 root root 981 Nov 16 14:39 /opt/etcd/etcd-server-startup.sh

3.1.10. Adjust directory ownership

[root@hdss7-12 ~]# chown -R etcd.etcd /opt/etcd-v3.1.20/ /data/etcd/ /data/logs/etcd-server/

3.1.11. Install supervisor

supervisor is a tool for managing background processes: if the etcd process dies, supervisor starts it again automatically.

[root@hdss7-12 ~]# yum install supervisor -y

[root@hdss7-12 ~]# systemctl start supervisord

[root@hdss7-12 ~]# systemctl enable supervisord

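3.1.12. Create the supervisor config for etcd-server

The startup configuration differs slightly on each etcd host; adjust it when configuring the other nodes.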

[root@hdss7-12 ~]# vi /etc/supervisord.d/etcd-server.ini

[program:etcd-server-7-12]

command=/opt/etcd/etcd-server-startup.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/etcd ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=etcd ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/etcd-server/etcd.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

3.1.13. Start the etcd service and verify

[root@hdss7-12 ~]# supervisorctl update

etcd-server-7-12: added process group

[root@hdss7-12 ~]# supervisorctl status

etcd-server-7-12 STARTING

[root@hdss7-12 ~]# supervisorctl status

etcd-server-7-12 RUNNING pid 20350, uptime 0:01:14

[root@hdss7-12 ~]# netstat -luntp|grep etcd

tcp 0 0 10.4.7.12:2379 0.0.0.0:* LISTEN 22657/./etcd

tcp 0 0 127.0.0.1:2379 0.0.0.0:* LISTEN 22657/./etcd

tcp 0 0 10.4.7.12:2380 0.0.0.0:* LISTEN 22657/./etcd

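3.1.14. Deploy, start and verify the other cluster members

The steps are the same as above; the main differences are in two configuration files:

1. /opt/etcd/etcd-server-startup.sh: the member name (--name) and the IP addresses

2. /etc/supervisord.d/etcd-server.ini

//Changing the [program:...] label in the supervisord ini file only makes the hosts easier to tell apart (a production naming convention, and a treat for perfectionists); leaving it unchanged does not cause a startup failure.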
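Everything host-specific in those two files comes down to the IP and the `etcd-server-7-NN` name, while the `--initial-cluster` line must stay identical on all members. A sketch of a hypothetical helper `derive_for_node`, assuming GNU sed, that prints an hdss7-12 file rewritten for another member and leaves the `--initial-cluster` line alone; the demo uses a shortened, made-up template:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a copy of an hdss7-12 etcd config file adapted for
# another member. Assumes GNU sed and the etcd-server-7-<last octet> naming above.
# The --initial-cluster line is skipped because it must be identical on every member.
derive_for_node() {
    local file="$1" old_ip="$2" new_ip="$3"
    local old_n="${old_ip##*.}" new_n="${new_ip##*.}"
    local old_re
    old_re=$(printf '%s' "$old_ip" | sed 's/\./\\./g')   # escape dots for use in a regex
    sed -e '/--initial-cluster /!{' \
        -e "s/${old_re}/${new_ip}/g" \
        -e "s/etcd-server-7-${old_n}/etcd-server-7-${new_n}/g" \
        -e '}' "$file"
}

# Shortened sample: two script lines, the cluster line and the supervisor label.
tpl=$(mktemp)
printf '%s\n' \
    './etcd --name etcd-server-7-12 \' \
    '--listen-peer-urls https://10.4.7.12:2380 \' \
    '--initial-cluster etcd-server-7-12=https://10.4.7.12:2380,etcd-server-7-21=https://10.4.7.21:2380 \' \
    '[program:etcd-server-7-12]' > "$tpl"
derive_for_node "$tpl" 10.4.7.12 10.4.7.21
```

On hdss7-21 the same call would be applied to copies of the hdss7-12 startup script and ini file (file names are up to you), with the output reviewed before it replaces anything.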

3.1.15. Check the cluster status

Run on any node:

[root@hdss7-21 etcd]# ./etcdctl cluster-health

member 988139385f78284 is healthy: got healthy result from http://127.0.0.1:2379

member 5a0ef2a004fc4349 is healthy: got healthy result from http://127.0.0.1:2379

member f4a0cb0a765574a8 is healthy: got healthy result from http://127.0.0.1:2379

cluster is healthy

[root@hdss7-21 etcd]# ./etcdctl member list

988139385f78284: name=etcd-server-7-22 peerURLs=https://10.4.7.22:2380 clientURLs=http://127.0.0.1:2379,https://10.4.7.22:2379 isLeader=false

5a0ef2a004fc4349: name=etcd-server-7-21 peerURLs=https://10.4.7.21:2380 clientURLs=http://127.0.0.1:2379,https://10.4.7.21:2379 isLeader=false

f4a0cb0a765574a8: name=etcd-server-7-12 peerURLs=https://10.4.7.12:2380 clientURLs=http://127.0.0.1:2379,https://10.4.7.12:2379 isLeader=true
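When the health check is scripted (for example after a restart), a one-line summary is easier to act on than the raw listing. A sketch of a hypothetical filter `summarise_health` that reads `etcdctl cluster-health` output on stdin, counts members reported healthy, counts any other member lines, and keeps the final `cluster is ...` line; the demo feeds it the output shown above:

```shell
#!/usr/bin/env bash
# Hypothetical filter: summarise `etcdctl cluster-health` output read from stdin.
summarise_health() {
    awk '
        /^member / && / is healthy:/ { healthy++; next }   # healthy member lines
        /^member /                   { other++ }           # any other member line
        /^cluster is /               { verdict = $0 }      # final verdict line
        END { printf "healthy=%d other=%d verdict=%s\n", healthy, other, verdict }
    '
}

printf '%s\n' \
    'member 988139385f78284 is healthy: got healthy result from http://127.0.0.1:2379' \
    'member 5a0ef2a004fc4349 is healthy: got healthy result from http://127.0.0.1:2379' \
    'member f4a0cb0a765574a8 is healthy: got healthy result from http://127.0.0.1:2379' \
    'cluster is healthy' | summarise_health
```

On a node it would be run from /opt/etcd as `./etcdctl cluster-health | summarise_health`.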

3.2. Deploy the kube-apiserver cluster

3.2.1. Cluster plan

| Hostname | Role | IP | VIP |
|---|---|---|---|
| hdss7-21.host.com | kube-apiserver | 10.4.7.21 | none |
| hdss7-22.host.com | kube-apiserver | 10.4.7.22 | none |
| hdss7-11.host.com | L4 proxy | 10.4.7.11 | 10.4.7.10 |
| hdss7-12.host.com | L4 proxy | 10.4.7.12 | 10.4.7.10 |

Note: hdss7-11 and hdss7-12 run nginx as a layer-4 load balancer, with keepalived holding the VIP 10.4.7.10; together they proxy the two kube-apiservers for high availability.

hdss7-21 is used as the example here; the other compute node is deployed the same way.

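3.2.2. Download, extract and create a symlink

On hdss7-21.host.com:

Official GitHub release page: https://github.com/kubernetes/kubernetes/releases/tag/v1.15.2 //v1.15.2 is used here

How to download: click the version number --> CHANGELOG-1.15.md --> Downloads --> Server Binaries --> find kubernetes-server-linux-amd64.tar.gz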

[root@hdss7-21 src]# tar xf kubernetes-server-linux-amd64-v1.15.2.tar.gz -C /opt

[root@hdss7-21 opt]# mv kubernetes/ kubernetes-v1.15.2

[root@hdss7-21 opt]# ln -s /opt/kubernetes-v1.15.2/ /opt/kubernetes

[root@hdss7-21 opt]# ll

total 0

drwx--x--x 4 root root 28 Nov 16 03:06 containerd

lrwxrwxrwx 1 root root 18 Nov 16 15:44 etcd -> /opt/etcd-v3.1.20/

drwxr-xr-x 4 etcd etcd 166 Nov 17 00:39 etcd-v3.1.20

lrwxrwxrwx 1 root root 24 Nov 17 01:35 kubernetes -> /opt/kubernetes-v1.15.2/

drwxr-xr-x 4 root root 79 Aug 5 18:01 kubernetes-v1.15.2

drwxr-xr-x 2 root root 306 Nov 16 15:41 src

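//Delete the unneeded source tarball, plus the *.tar and *_tag files under server/bin; these are only needed for a kubeadm-style deployment, which is not used here, so they can be removed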

[root@hdss7-21 opt]# cd kubernetes

[root@hdss7-21 kubernetes]# ls

addons kubernetes-src.tar.gz LICENSES server

[root@hdss7-21 kubernetes]# rm -rf kubernetes-src.tar.gz

[root@hdss7-21 kubernetes]# ll

total 1180

drwxr-xr-x 2 root root 6 Aug 5 18:01 addons

-rw-r--r-- 1 root root 1205293 Aug 5 18:01 LICENSES

drwxr-xr-x 3 root root 17 Aug 5 17:57 server

[root@hdss7-21 kubernetes]# cd server/bin

[root@hdss7-21 bin]# rm -f *.tar

[root@hdss7-21 bin]# rm -f *_tag

[root@hdss7-21 bin]# ll

total 884636

-rwxr-xr-x 1 root root 43534816 Aug 5 18:01 apiextensions-apiserver

-rwxr-xr-x 1 root root 100548640 Aug 5 18:01 cloud-controller-manager

-rwxr-xr-x 1 root root 200648416 Aug 5 18:01 hyperkube

-rwxr-xr-x 1 root root 40182208 Aug 5 18:01 kubeadm

-rwxr-xr-x 1 root root 164501920 Aug 5 18:01 kube-apiserver

-rwxr-xr-x 1 root root 116397088 Aug 5 18:01 kube-controller-manager

-rwxr-xr-x 1 root root 42985504 Aug 5 18:01 kubectl

-rwxr-xr-x 1 root root 119616640 Aug 5 18:01 kubelet

-rwxr-xr-x 1 root root 36987488 Aug 5 18:01 kube-proxy

-rwxr-xr-x 1 root root 38786144 Aug 5 18:01 kube-scheduler

-rwxr-xr-x 1 root root 1648224 Aug 5 18:01 mounter

3.2.3. Issue the client certificate

On the ops host hdss7-200:

3.2.3.1. Create the JSON config for the client certificate signing request (CSR)

[root@hdss7-200 certs]# vi /opt/certs/client-csr.json

{

"CN": "k8s-node",

"hosts": [

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

]

}

3.2.3.2. Generate the client certificate and private key

[root@hdss7-200 certs]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=client client-csr.json |cfssl-json -bare client

3.2.3.3. Check the generated certificate and private key

[root@hdss7-200 certs]# ll

total 52

-rw-r--r-- 1 root root 836 Nov 16 13:52 ca-config.json

-rw-r--r-- 1 root root 993 Nov 11 00:30 ca.csr

-rw-r--r-- 1 root root 328 Nov 11 00:29 ca-csr.json

-rw------- 1 root root 1679 Nov 11 00:30 ca-key.pem

-rw-r--r-- 1 root root 1346 Nov 11 00:30 ca.pem

-rw-r--r-- 1 root root 993 Nov 16 17:47 client.csr

-rw-r--r-- 1 root root 280 Nov 16 17:45 client-csr.json

-rw------- 1 root root 1679 Nov 16 17:47 client-key.pem

-rw-r--r-- 1 root root 1363 Nov 16 17:47 client.pem

-rw-r--r-- 1 root root 1062 Nov 16 14:03 etcd-peer.csr

-rw-r--r-- 1 root root 363 Nov 16 13:59 etcd-peer-csr.json

-rw------- 1 root root 1679 Nov 16 14:03 etcd-peer-key.pem

-rw-r--r-- 1 root root 1428 Nov 16 14:03 etcd-peer.pem

3.2.4. Issue the kube-apiserver certificate

On the ops host hdss7-200:

3.2.4.1. Create the JSON config for the kube-apiserver certificate signing request (CSR)

[root@hdss7-200 certs]# vi /opt/certs/apiserver-csr.json

3.2.4.2. Generate the kube-apiserver certificate and private key

[root@hdss7-200 certs]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=server apiserver-csr.json |cfssl-json -bare apiserver

3.2.4.3. Check the generated certificate and private key

[root@hdss7-200 certs]# ll

total 68

-rw-r--r-- 1 root root 1249 Nov 16 17:55 apiserver.csr

-rw-r--r-- 1 root root 567 Nov 16 17:55 apiserver-csr.json

-rw------- 1 root root 1679 Nov 16 17:55 apiserver-key.pem

-rw-r--r-- 1 root root 1598 Nov 16 17:55 apiserver.pem

-rw-r--r-- 1 root root 836 Nov 16 13:52 ca-config.json

-rw-r--r-- 1 root root 993 Nov 11 00:30 ca.csr

-rw-r--r-- 1 root root 328 Nov 11 00:29 ca-csr.json

-rw------- 1 root root 1679 Nov 11 00:30 ca-key.pem

-rw-r--r-- 1 root root 1346 Nov 11 00:30 ca.pem

-rw-r--r-- 1 root root 993 Nov 16 17:47 client.csr

-rw-r--r-- 1 root root 280 Nov 16 17:45 client-csr.json

-rw------- 1 root root 1679 Nov 16 17:47 client-key.pem

-rw-r--r-- 1 root root 1363 Nov 16 17:47 client.pem

-rw-r--r-- 1 root root 1062 Nov 16 14:03 etcd-peer.csr

-rw-r--r-- 1 root root 363 Nov 16 13:59 etcd-peer-csr.json

-rw------- 1 root root 1679 Nov 16 14:03 etcd-peer-key.pem

-rw-r--r-- 1 root root 1428 Nov 16 14:03 etcd-peer.pem

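3.2.5. Copy the certificates to each compute node and create the configuration

3.2.5.1. Copy the three certificate/key pairs to the .../bin/cert directory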

[root@hdss7-21 cert]# scp hdss7-200:/opt/certs/ca.pem .

[root@hdss7-21 cert]# scp hdss7-200:/opt/certs/ca-key.pem .

[root@hdss7-21 cert]# scp hdss7-200:/opt/certs/client.pem .

[root@hdss7-21 cert]# scp hdss7-200:/opt/certs/client-key.pem .

[root@hdss7-21 cert]# scp hdss7-200:/opt/certs/apiserver.pem .

[root@hdss7-21 cert]# scp hdss7-200:/opt/certs/apiserver-key.pem .

[root@hdss7-21 cert]# ll

total 24

-rw------- 1 root root 1679 Nov 17 02:11 apiserver-key.pem

-rw-r--r-- 1 root root 1598 Nov 17 02:08 apiserver.pem

-rw------- 1 root root 1679 Nov 17 02:07 ca-key.pem

-rw-r--r-- 1 root root 1346 Nov 17 02:06 ca.pem

-rw------- 1 root root 1679 Nov 17 02:08 client-key.pem

-rw-r--r-- 1 root root 1363 Nov 17 02:07 client.pem
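Before moving on to the configuration, it is worth confirming that all six files (three certificate/key pairs) actually arrived. A sketch of a hypothetical helper `check_cert_dir` that reports missing files and returns their count; the demo runs it against a scratch directory:

```shell
#!/usr/bin/env bash
# Hypothetical helper: list which of the six files copied above are missing
# from a certificate directory. Returns the number of missing files (0 = all present).
check_cert_dir() {
    local dir="$1" f missing=0
    for f in ca.pem ca-key.pem client.pem client-key.pem apiserver.pem apiserver-key.pem; do
        if [ ! -f "$dir/$f" ]; then
            echo "missing: $f"
            missing=$((missing + 1))
        fi
    done
    return "$missing"
}

# Demo on a scratch directory that only holds half of the files.
demo_dir=$(mktemp -d)
touch "$demo_dir/ca.pem" "$demo_dir/ca-key.pem" "$demo_dir/client.pem"
check_cert_dir "$demo_dir" || echo "some certificate files are missing"
```

On a compute node it would be run from the cert directory as `check_cert_dir .`.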

3.2.5.2. Create the configuration

[root@hdss7-21 bin]# mkdir conf

[root@hdss7-21 conf]# vi audit.yaml

apiVersion: audit.k8s.io/v1beta1 # This is required.

kind: Policy

# Don't generate audit events for all requests in RequestReceived stage.

omitStages:

  - "RequestReceived"

rules:

  # Log pod changes at RequestResponse level

  - level: RequestResponse

    resources:

    - group: ""

      # Resource "pods" doesn't match requests to any subresource of pods,

      # which is consistent with the RBAC policy.

      resources: ["pods"]

  # Log "pods/log", "pods/status" at Metadata level

  - level: Metadata

    resources:

    - group: ""

      resources: ["pods/log", "pods/status"]

  # Don't log requests to a configmap called "controller-leader"

  - level: None

    resources:

    - group: ""

      resources: ["configmaps"]

      resourceNames: ["controller-leader"]

  # Don't log watch requests by the "system:kube-proxy" on endpoints or services

  - level: None

    users: ["system:kube-proxy"]

    verbs: ["watch"]

    resources:

    - group: "" # core API group

      resources: ["endpoints", "services"]

  # Don't log authenticated requests to certain non-resource URL paths.

  - level: None

    userGroups: ["system:authenticated"]

    nonResourceURLs:

    - "/api*" # Wildcard matching.

    - "/version"

  # Log the request body of configmap changes in kube-system.

  - level: Request

    resources:

    - group: "" # core API group

      resources: ["configmaps"]

    # This rule only applies to resources in the "kube-system" namespace.

    # The empty string "" can be used to select non-namespaced resources.

    namespaces: ["kube-system"]

  # Log configmap and secret changes in all other namespaces at the Metadata level.

  - level: Metadata

    resources:

    - group: "" # core API group

      resources: ["secrets", "configmaps"]

  # Log all other resources in core and extensions at the Request level.

  - level: Request

    resources:

    - group: "" # core API group

    - group: "extensions" # Version of group should NOT be included.

  # A catch-all rule to log all other requests at the Metadata level.

  - level: Metadata

    # Long-running requests like watches that fall under this rule will not

    # generate an audit event in RequestReceived.

    omitStages:

      - "RequestReceived"

3.2.6. Create the apiserver startup script

[root@hdss7-21 bin]# vi /opt/kubernetes/server/bin/kube-apiserver.sh

#!/bin/bash
./kube-apiserver \
  --apiserver-count 2 \
  --audit-log-path /data/logs/kubernetes/kube-apiserver/audit-log \
  --audit-policy-file ./conf/audit.yaml \
  --authorization-mode RBAC \
  --client-ca-file ./cert/ca.pem \
  --requestheader-client-ca-file ./cert/ca.pem \
  --enable-admission-plugins NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,MutatingAdmissionWebhook,ValidatingAdmissionWebhook,ResourceQuota \
  --etcd-cafile ./cert/ca.pem \
  --etcd-certfile ./cert/client.pem \
  --etcd-keyfile ./cert/client-key.pem \
  --etcd-servers https://10.4.7.12:2379,https://10.4.7.21:2379,https://10.4.7.22:2379 \
  --service-account-key-file ./cert/ca-key.pem \
  --service-cluster-ip-range 192.168.0.0/16 \
  --service-node-port-range 3000-29999 \
  --target-ram-mb=1024 \
  --kubelet-client-certificate ./cert/client.pem \
  --kubelet-client-key ./cert/client-key.pem \
  --log-dir /data/logs/kubernetes/kube-apiserver \
  --tls-cert-file ./cert/apiserver.pem \
  --tls-private-key-file ./cert/apiserver-key.pem \
  --v 2

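################# Parameter notes ############

--apiserver-count 2 \  // number of apiservers in the cluster; use 3 if resources allow
--audit-log-path /data/logs/kubernetes/kube-apiserver/audit-log \  // audit log file path
--audit-policy-file ./conf/audit.yaml \  // audit policy rules
--authorization-mode RBAC \  // RBAC: role-based access control
--client-ca-file ./cert/ca.pem \  // CA used to verify client certificates
--requestheader-client-ca-file ./cert/ca.pem \  // CA used to verify client certificates of proxies that pass the user in request headers
--enable-admission-plugins NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,MutatingAdmissionWebhook,ValidatingAdmissionWebhook,ResourceQuota \  // admission plugins to enable
--etcd-cafile ./cert/ca.pem \  // CA used to verify the etcd servers
--etcd-certfile ./cert/client.pem \  // client certificate presented to etcd
--etcd-keyfile ./cert/client-key.pem \  // private key for that client certificate
--etcd-servers https://10.4.7.12:2379,https://10.4.7.21:2379,https://10.4.7.22:2379 \  // etcd cluster endpoints
--service-account-key-file ./cert/ca-key.pem \  // key used to verify ServiceAccount tokens
--service-cluster-ip-range 192.168.0.0/16 \  // address range for Service ClusterIPs
--service-node-port-range 3000-29999 \  // port range for NodePort Services
--target-ram-mb=1024 \  // memory hint used to size the apiserver's caches
--kubelet-client-certificate ./cert/client.pem \  // client certificate the apiserver presents to kubelets
--kubelet-client-key ./cert/client-key.pem \  // private key for that client certificate
--log-dir /data/logs/kubernetes/kube-apiserver \  // log directory
--tls-cert-file ./cert/apiserver.pem \  // HTTPS serving certificate for port 6443
--tls-private-key-file ./cert/apiserver-key.pem \  // private key for the serving certificate
--v 2  // log verbosity level 2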

For more details, check the built-in help, for example:

[root@hdss7-21 bin]# ./kube-apiserver --help|grep -A 5 target-ram-mb

--target-ram-mb int Memory limit for apiserver in MB (used to configure sizes of caches, etc.)

Etcd flags:

--default-watch-cache-size int Default watch cache size. If zero, watch cache will be disabled for resources that do not have a default watch size set. (default 100)

--delete-collection-workers int Number of workers spawned for DeleteCollection call. These are used to speed up namespace cleanup. (default 1)
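Two address ranges in this script depend on each other. The apiserver assigns the built-in kubernetes Service the first address of --service-cluster-ip-range, which is 192.168.0.1; ipvsadm and kubectl get svc show this address later. The kubelet's --cluster-dns 192.168.0.2 must also fall inside that range. The bash sketch below works through that arithmetic. The helper names ip2int, int2ip, prefix_mask, cidr_first_ip, and ip_in_cidr are hypothetical and are not part of any Kubernetes tool. Only IPv4 is handled.

```bash
#!/bin/bash
# Hypothetical helpers for IPv4 CIDR arithmetic (not part of Kubernetes).

# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip2int() {
    local IFS=.
    local a b c d
    read -r a b c d <<< "$1"
    echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Convert a 32-bit integer back to dotted-quad form.
int2ip() {
    local n=$1
    echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}

# Netmask for a prefix length, e.g. 16 -> 0xFFFF0000.
prefix_mask() {
    echo $(( (0xFFFFFFFF << (32 - $1)) & 0xFFFFFFFF ))
}

# First address after the network address, e.g. 192.168.0.0/16 -> 192.168.0.1.
cidr_first_ip() {
    local net=${1%/*} len=${1#*/}
    local mask
    mask=$(prefix_mask "$len")
    int2ip $(( ($(ip2int "$net") & mask) + 1 ))
}

# Succeed (exit status 0) if IP $1 lies inside CIDR $2.
ip_in_cidr() {
    local ip=$1 net=${2%/*} len=${2#*/}
    local mask
    mask=$(prefix_mask "$len")
    (( ($(ip2int "$ip") & mask) == ($(ip2int "$net") & mask) ))
}
```

For example, cidr_first_ip 192.168.0.0/16 prints 192.168.0.1, and ip_in_cidr 192.168.0.2 192.168.0.0/16 succeeds. ip_in_cidr 172.7.21.2 192.168.0.0/16 fails, which matches the intent that the pod network 172.7.0.0/16 stays separate from the Service range.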

3.2.7. Adjust permissions and create directories

[root@hdss7-21 bin]# chmod +x kube-apiserver.sh

[root@hdss7-21 bin]# mkdir -p /data/logs/kubernetes/kube-apiserver

3.2.8. Create the supervisor configuration

[root@hdss7-21 bin]# vi /etc/supervisord.d/kube-apiserver.ini

[program:kube-apiserver-7-21]

command=/opt/kubernetes/server/bin/kube-apiserver.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart whenever the process exits (default: unexpected)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-apiserver/apiserver.stdout.log ; stdout log path (stderr is merged in), NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

3.2.9. Start the service and check it

[root@hdss7-21 bin]# supervisorctl update

[root@hdss7-21 bin]# supervisorctl status

etcd-server-7-21 RUNNING pid 1716, uptime 2:01:19

kube-apiserver-7-21 RUNNING pid 2032, uptime 0:01:33

[root@hdss7-21 bin]# netstat -nltup|grep kube-api

tcp 0 0 127.0.0.1:8080 0.0.0.0:* LISTEN 2033/./kube-apiserv

tcp6 0 0 :::6443 :::* LISTEN 2033/./kube-apiserv

3.2.10. Install, deploy, start, and check on all cluster hosts

hdss7-22 is set up essentially the same way as above.

In /etc/supervisord.d/kube-apiserver.ini

change the label to [program:kube-apiserver-7-22]
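Each component's .ini differs between hosts only in the [program:...-7-21] label. One option is to copy the file from hdss7-21 and rewrite the label with sed. The sketch below does this. The function name retarget_ini is hypothetical, and it assumes labels end in -7-<N> as they do throughout this document. The same approach could be reused for the controller-manager, scheduler, kubelet, and kube-proxy .ini files.

```bash
#!/bin/bash
# Hypothetical helper: rewrite the supervisor program label suffix for another host.
# $1 source .ini, $2 destination .ini, $3 new suffix such as 7-22.
# Only a line of the form [program:<name>-7-<N>] is changed; all other lines are copied verbatim.
retarget_ini() {
    local src=$1 dst=$2 suffix=$3
    sed -E "s/^\[program:(.+)-7-[0-9]+]\$/[program:\1-${suffix}]/" "$src" > "$dst"
}
```

Usage on hdss7-22, for example: scp hdss7-21:/etc/supervisord.d/kube-apiserver.ini /tmp/ && retarget_ini /tmp/kube-apiserver.ini /etc/supervisord.d/kube-apiserver.ini 7-22. This covers only the label. Host-specific flags inside the startup scripts, such as --hostname-override, still need their own edit.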

3.2.11. Configure the layer-4 reverse proxy

The VIP 10.4.7.10:7443 proxies port 6443 on both apiservers; keepalived is used to manage the VIP.

3.2.11.1. Install nginx and keepalived

Install nginx and keepalived on both hdss7-11 and hdss7-12

[root@hdss7-12 etcd]# yum install nginx keepalived -y

Configure nginx.conf on hdss7-11 and hdss7-12

[root@hdss7-11 conf.d]# vi /etc/nginx/nginx.conf   // append at the end of the file, at the top level (outside the http {} block)

stream {
    upstream kube-apiserver {
        server 10.4.7.21:6443    max_fails=3 fail_timeout=30s;
        server 10.4.7.22:6443    max_fails=3 fail_timeout=30s;
    }
    server {
        listen 7443;
        proxy_connect_timeout 2s;
        proxy_timeout 900s;
        proxy_pass kube-apiserver;
    }
}

[root@hdss7-12 etcd]# nginx -t

nginx: the configuration file /etc/nginx/nginx.conf syntax is ok

nginx: configuration file /etc/nginx/nginx.conf test is successful

Configure keepalived on hdss7-11 and hdss7-12

Port check script

[root@hdss7-11 ~]# vi /etc/keepalived/check_port.sh

#!/bin/bash
# keepalived port-check script
# Usage, in the keepalived configuration file:
# vrrp_script check_port {      # define a vrrp_script check
#     script "/etc/keepalived/check_port.sh 6379"    # port to check
#     interval 2                # how often to run the check, in seconds
# }
CHK_PORT=$1
if [ -n "$CHK_PORT" ]; then
    # Match the port exactly at the end of the "Local Address:Port" column,
    # so that checking 7443 does not also match e.g. 17443.
    PORT_PROCESS=$(ss -lnt | awk -v p=":${CHK_PORT}\$" '$4 ~ p' | wc -l)
    if [ "$PORT_PROCESS" -eq 0 ]; then
        echo "Port $CHK_PORT Is Not Used,End."
        exit 1
    fi
else
    echo "Check Port Can't Be Empty!"
    exit 1
fi

[root@hdss7-11 ~]# chmod +x /etc/keepalived/check_port.sh

Configuration files

keepalived master:

[root@hdss7-11 conf.d]# vi /etc/keepalived/keepalived.conf

! Configuration File for keepalived
global_defs {
    router_id 10.4.7.11
}
vrrp_script chk_nginx {
    script "/etc/keepalived/check_port.sh 7443"
    interval 2
    weight -20
}
vrrp_instance VI_1 {
    state MASTER
    interface eth0
    virtual_router_id 251
    priority 100
    advert_int 1
    mcast_src_ip 10.4.7.11
    nopreempt
    authentication {
        auth_type PASS
        auth_pass 11111111
    }
    track_script {
        chk_nginx
    }
    virtual_ipaddress {
        10.4.7.10
    }
}

keepalived backup:

[root@hdss7-12 conf.d]# vi /etc/keepalived/keepalived.conf

! Configuration File for keepalived
global_defs {
    router_id 10.4.7.12
}
vrrp_script chk_nginx {
    script "/etc/keepalived/check_port.sh 7443"
    interval 2
    weight -20
}
vrrp_instance VI_1 {
    state BACKUP
    interface eth0
    virtual_router_id 251
    mcast_src_ip 10.4.7.12
    priority 90
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 11111111
    }
    track_script {
        chk_nginx
    }
    virtual_ipaddress {
        10.4.7.10
    }
}
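The weight -20 in vrrp_script is what makes failover possible. While check_port.sh exits non-zero, keepalived lowers the instance priority by 20. hdss7-11 then drops from 100 to 80, below hdss7-12's 90, and the backup takes over the VIP. The bash sketch below models that arithmetic. It assumes keepalived's documented weight semantics: a negative weight is subtracted while the script fails, and a positive weight is added while it succeeds. The function names are hypothetical.

```bash
#!/bin/bash
# Hypothetical helpers modelling keepalived's vrrp_script weight adjustment.

# Print the effective priority given: base priority, script weight,
# and whether the check passes (1) or fails (0).
effective_priority() {
    local base=$1 weight=$2 check_ok=$3
    if (( weight < 0 )); then
        # Negative weight: subtracted only while the check fails.
        if (( check_ok )); then echo "$base"; else echo $(( base + weight )); fi
    else
        # Positive weight: added only while the check passes.
        if (( check_ok )); then echo $(( base + weight )); else echo "$base"; fi
    fi
}

# Succeed if the backup's effective priority beats the master's, i.e. the VIP should move.
# Arguments: master base, master weight, master check, backup base, backup weight, backup check.
backup_takes_over() {
    local master backup
    master=$(effective_priority "$1" "$2" "$3")
    backup=$(effective_priority "$4" "$5" "$6")
    (( backup > master ))
}
```

With the values above, effective_priority 100 -20 0 prints 80, and backup_takes_over 100 -20 0 90 -20 1 succeeds. This shows the design constraint: the weight's magnitude must exceed the priority gap (100 - 90 = 10). With weight -5 the master would stay at 95 and the VIP would not move.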

3.2.12. Start the proxy and check it

// start nginx on hdss7-11 and hdss7-12

~]# systemctl start nginx

~]# systemctl enable nginx

Created symlink from /etc/systemd/system/multi-user.target.wants/nginx.service to /usr/lib/systemd/system/nginx.service.

~]# netstat -nltup|grep nginx

tcp 0 0 0.0.0.0:80 0.0.0.0:* LISTEN 21520/nginx: master

tcp 0 0 0.0.0.0:7443 0.0.0.0:* LISTEN 21520/nginx: master

tcp6 0 0 :::80 :::* LISTEN 21520/nginx: master

// start keepalived on hdss7-11 and hdss7-12

~]# systemctl start keepalived.service

~]# systemctl enable keepalived

Created symlink from /etc/systemd/system/multi-user.target.wants/keepalived.service to /usr/lib/systemd/system/keepalived.service.

// check

[root@hdss7-11 ~]# ip addr

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:0c:29:4c:f4:9e brd ff:ff:ff:ff:ff:ff

inet 10.4.7.11/24 brd 10.4.7.255 scope global noprefixroute eth0

valid_lft forever preferred_lft forever

inet 10.4.7.10/32 scope global eth0

valid_lft forever preferred_lft forever

// VIP failover test

Test by running nginx -s stop; details omitted.
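One way to observe the failover is a three-step check. First, stop nginx on hdss7-11. Second, confirm with ip addr that 10.4.7.10 now appears on hdss7-12's eth0. Third, probe the VIP port from another host while this happens. The minimal probe below uses bash's /dev/tcp redirection and coreutils timeout. The function names port_open and watch_port are hypothetical.

```bash
#!/bin/bash
# Hypothetical TCP reachability probe using bash's /dev/tcp pseudo-device.
# Succeeds if a TCP connection to host $1, port $2 opens within $3 seconds (default 2).
port_open() {
    local host=$1 port=$2 secs=${3:-2}
    timeout "$secs" bash -c "exec 3<>/dev/tcp/${host}/${port}" 2>/dev/null
}

# Probe host:port once per second for up to N attempts (default 10) and print each result.
watch_port() {
    local host=$1 port=$2 tries=${3:-10} i
    for (( i = 1; i <= tries; i++ )); do
        if port_open "$host" "$port" 1; then
            echo "$(date +%T) ${host}:${port} open"
        else
            echo "$(date +%T) ${host}:${port} closed"
        fi
        sleep 1
    done
}
```

For example, run watch_port 10.4.7.10 7443 30 on hdss7-21 while nginx is stopped on hdss7-11. If failover works, the output should show at most a brief gap of "closed" lines before the port answers again from hdss7-12.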

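Note: keepalived on hdss7-11 is configured with nopreempt, i.e. non-preemptive mode.

Reason: in preemptive mode, a brief network glitch in production could make check_port.sh fail. The VIP could then move, and move back again once the check recovers, triggering alerts each time. In production a VIP failover counts as a major incident that requires a written incident report, so this is unacceptable. The keepalived documentation states that nopreempt only takes effect when the instance's initial state is BACKUP. With state MASTER as configured here it may be ignored, so verify the behavior on your keepalived version.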

3.3. Deploy controller-manager

3.3.1. Cluster plan

| Hostname | Role | IP |
|---|---|---|
| hdss7-21.host.com | controller-manager | 10.4.7.21 |
| hdss7-22.host.com | controller-manager | 10.4.7.22 |

Note: this document uses hdss7-21 as the example; the other host is similar.

3.3.2. Create the startup script

On hdss7-21:

[root@hdss7-21 bin]# vi /opt/kubernetes/server/bin/kube-controller-manager.sh

#!/bin/sh
./kube-controller-manager \
  --cluster-cidr 172.7.0.0/16 \
  --leader-elect true \
  --log-dir /data/logs/kubernetes/kube-controller-manager \
  --master http://127.0.0.1:8080 \
  --service-account-private-key-file ./cert/ca-key.pem \
  --service-cluster-ip-range 192.168.0.0/16 \
  --root-ca-file ./cert/ca.pem \
  --v 2

3.3.3. Adjust file permissions and create directories

[root@hdss7-21 bin]# chmod +x /opt/kubernetes/server/bin/kube-controller-manager.sh

[root@hdss7-21 bin]# mkdir -p /data/logs/kubernetes/kube-controller-manager

3.3.4. Create the supervisor configuration

[root@hdss7-21 bin]# vi /etc/supervisord.d/kube-conntroller-manager.ini

[program:kube-controller-manager-7-21]

command=/opt/kubernetes/server/bin/kube-controller-manager.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart whenever the process exits (default: unexpected)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-controller-manager/controller.stdout.log ; stdout log path (stderr is merged in), NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

3.3.5. Start the service and check it

[root@hdss7-21 bin]# supervisorctl update

kube-controller-manager-7-21: added process group

[root@hdss7-21 bin]# supervisorctl status

etcd-server-7-21 RUNNING pid 1716, uptime 3:44:21

kube-apiserver-7-21 RUNNING pid 2032, uptime 1:44:35

kube-controller-manager-7-21 RUNNING pid 2196, uptime 0:00:50

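3.3.6. Install, deploy, start, and check on all planned cluster hosts

hdss7-22 is set up essentially the same way as above.

In /etc/supervisord.d/kube-conntroller-manager.ini

change the label to [program:kube-controller-manager-7-22]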

3.4. Deploy kube-scheduler

3.4.1. Cluster plan

| Hostname | Role | IP |
|---|---|---|
| hdss7-21.host.com | kube-scheduler | 10.4.7.21 |
| hdss7-22.host.com | kube-scheduler | 10.4.7.22 |

Note: this document uses hdss7-21 as the example; the other compute node is similar.

3.4.2. Create the startup script

On hdss7-21:

[root@hdss7-21 bin]# vi /opt/kubernetes/server/bin/kube-scheduler.sh

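#!/bin/sh
./kube-scheduler \
  --leader-elect \
  --log-dir /data/logs/kubernetes/kube-scheduler \
  --master http://127.0.0.1:8080 \
  --v 2

// If the control-plane components ran on different hosts, certificate authentication would be required. In this lab they run on the same host as the apiserver and connect to its local insecure port 127.0.0.1:8080, so no certificate is needed here.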

3.4.3. Adjust file permissions and create directories

[root@hdss7-21 bin]# chmod +x /opt/kubernetes/server/bin/kube-scheduler.sh

[root@hdss7-21 bin]# mkdir -p /data/logs/kubernetes/kube-scheduler

3.4.4. Create the supervisor configuration

[root@hdss7-21 bin]# vi /etc/supervisord.d/kube-scheduler.ini

[program:kube-scheduler-7-21]

command=/opt/kubernetes/server/bin/kube-scheduler.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart whenever the process exits (default: unexpected)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-scheduler/scheduler.stdout.log ; stdout log path (stderr is merged in), NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

3.4.5. Start the service and check it

[root@hdss7-21 bin]# supervisorctl update

kube-scheduler-7-21: added process group

[root@hdss7-21 bin]# supervisorctl status

etcd-server-7-21 RUNNING pid 1716, uptime 4:11:22

kube-apiserver-7-21 RUNNING pid 2032, uptime 2:11:36

kube-controller-manager-7-21 RUNNING pid 2196, uptime 0:27:51

kube-scheduler-7-21 RUNNING pid 2284, uptime 0:00:33

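3.4.6. Install, deploy, start, and check on all planned cluster hosts

hdss7-22 is set up essentially the same way as above.

In /etc/supervisord.d/kube-scheduler.ini

change the label to [program:kube-scheduler-7-22]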

3.5. Check the control-plane nodes

3.5.1. Create a symlink for kubectl

[root@hdss7-22 bin]# ln -s /opt/kubernetes/server/bin/kubectl /usr/bin/kubectl

3.5.2. Check the control-plane nodes

Check component health with cs (short for componentstatuses):

[root@hdss7-21 bin]# kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-2 Healthy {"health": "true"}

etcd-0 Healthy {"health": "true"}

etcd-1 Healthy {"health": "true"}

4. Deploy the node services

4.1. Deploy kubelet

4.1.1. Cluster plan

| Hostname | Role | IP |
|---|---|---|
| hdss7-21.host.com | kubelet | 10.4.7.21 |
| hdss7-22.host.com | kubelet | 10.4.7.22 |

Note: this document uses hdss7-21 as the example; the other compute node is installed the same way.

4.1.2. Issue the kubelet certificate

On the ops host hdss7-200:

4.1.2.1. Create the JSON config file for the certificate signing request (CSR)

[root@hdss7-200 certs]# vi kubelet-csr.json

{

"CN": "k8s-kubelet",

"hosts": [

"127.0.0.1",

"10.4.7.10",

"10.4.7.21",

"10.4.7.22",

"10.4.7.23",

"10.4.7.24",

"10.4.7.25",

"10.4.7.26",

"10.4.7.27",

"10.4.7.28"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

]

}

4.1.2.2. Generate the kubelet certificate and private key

[root@hdss7-200 certs]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=server kubelet-csr.json | cfssl-json -bare kubelet

4.1.2.3. Check the generated certificate and private key

[root@hdss7-200 certs]# ll

total 84

-rw-r--r-- 1 root root 1115 Nov 16 22:03 kubelet.csr

-rw-r--r-- 1 root root 452 Nov 16 21:59 kubelet-csr.json

-rw------- 1 root root 1679 Nov 16 22:03 kubelet-key.pem

-rw-r--r-- 1 root root 1468 Nov 16 22:03 kubelet.pem

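4.1.3. Copy the certificates to each compute node and create the configuration

On hdss7-21:

4.1.3.1. Copy the certificate and private key; the private key file should have mode 600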

[root@hdss7-21 cert]# scp hdss7-200:/opt/certs/kubelet.pem .

[root@hdss7-21 cert]# scp hdss7-200:/opt/certs/kubelet-key.pem .

[root@hdss7-21 cert]# ll

total 32

-rw------- 1 root root 1679 Nov 17 02:11 apiserver-key.pem

-rw-r--r-- 1 root root 1598 Nov 17 02:08 apiserver.pem

-rw------- 1 root root 1679 Nov 17 02:07 ca-key.pem

-rw-r--r-- 1 root root 1346 Nov 17 02:06 ca.pem

-rw------- 1 root root 1679 Nov 17 02:08 client-key.pem

-rw-r--r-- 1 root root 1363 Nov 17 02:07 client.pem

-rw------- 1 root root 1679 Nov 17 06:12 kubelet-key.pem

-rw-r--r-- 1 root root 1468 Nov 17 06:11 kubelet.pem

4.1.3.2. Create the configuration (four steps)

Run all of them in the conf directory.

4.1.3.2.1.set-cluster

[root@hdss7-21 conf]# kubectl config set-cluster myk8s \
  --certificate-authority=/opt/kubernetes/server/bin/cert/ca.pem \
  --embed-certs=true \
  --server=https://10.4.7.10:7443 \
  --kubeconfig=kubelet.kubeconfig

Output:

Cluster "myk8s" set.

4.1.3.2.2.set-credentials

[root@hdss7-21 conf]# kubectl config set-credentials k8s-node \
  --client-certificate=/opt/kubernetes/server/bin/cert/client.pem \
  --client-key=/opt/kubernetes/server/bin/cert/client-key.pem \
  --embed-certs=true \
  --kubeconfig=kubelet.kubeconfig

Output:

User "k8s-node" set.

4.1.3.2.3.set-context

[root@hdss7-21 conf]# kubectl config set-context myk8s-context \
  --cluster=myk8s \
  --user=k8s-node \
  --kubeconfig=kubelet.kubeconfig

Output:

Context "myk8s-context" created.

4.1.3.2.4.use-context

[root@hdss7-21 conf]# kubectl config use-context myk8s-context --kubeconfig=kubelet.kubeconfig

Output:

Switched to context "myk8s-context".

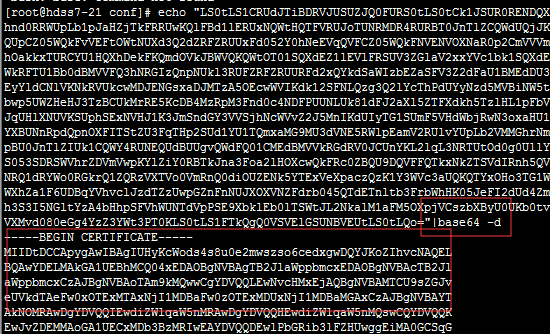

4.1.3.2.5. View the generated kubelet.kubeconfig

[root@hdss7-21 conf]# ll

total 12

-rw-r--r-- 1 root root 2223 Nov 17 02:18 audit.yaml

-rw------- 1 root root 6199 Nov 17 06:32 kubelet.kubeconfig

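If you cat kubelet.kubeconfig, the long certificate-authority-data value is simply ca.pem encoded in base64. Because --embed-certs=true was used, client-certificate-data and client-key-data likewise hold client.pem and client-key.pem.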
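To confirm this, decode the embedded field and compare it with the original file. The minimal bash sketch below assumes the single-cluster layout written by kubectl config set-cluster --embed-certs=true, where the base64 value sits on the same line as the certificate-authority-data: key. The function names are hypothetical. kubectl config view --raw with a jsonpath output should also print the raw field, if you prefer the official tool.

```bash
#!/bin/bash
# Hypothetical helpers: check that a kubeconfig's embedded CA equals a PEM file.

# Print the decoded certificate-authority-data of the first cluster entry in kubeconfig $1.
kubeconfig_ca() {
    awk '$1 == "certificate-authority-data:" { print $2; exit }' "$1" | base64 -d
}

# Succeed if the embedded CA in kubeconfig $1 is byte-for-byte identical to PEM file $2.
kubeconfig_ca_matches() {
    cmp -s <(kubeconfig_ca "$1") "$2"
}
```

Example, run in the conf directory: kubeconfig_ca_matches kubelet.kubeconfig ../cert/ca.pem && echo "embedded CA matches ca.pem".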

4.1.3.2.6.k8s-node.yaml

1. Create the manifest

[root@hdss7-21 conf]# vi k8s-node.yaml

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: k8s-node
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:node
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: User
  name: k8s-node

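2. Create the ClusterRoleBinding from the manifest (it binds the k8s-node user to the system:node cluster role)

[root@hdss7-21 conf]# kubectl create -f k8s-node.yaml    // -f specifies the manifest file

clusterrolebinding.rbac.authorization.k8s.io/k8s-node created    // once created, the object is stored in etcd

3. Query the cluster role binding and view its details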

[root@hdss7-21 conf]# kubectl get clusterrolebinding k8s-node

NAME AGE

k8s-node 7m54s

[root@hdss7-21 conf]# kubectl get clusterrolebinding k8s-node -o yaml

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding    // defines a cluster role binding resource; in k8s, everything is a resource
metadata:
  creationTimestamp: "2019-11-16T22:53:06Z"
  name: k8s-node    // the resource is named k8s-node
  resourceVersion: "11262"
  selfLink: /apis/rbac.authorization.k8s.io/v1/clusterrolebindings/k8s-node
  uid: 2b87d554-de37-494d-b6dd-7c5d0ecdd5d6
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:node    // binds the cluster role system:node to the user k8s-node below, giving it the permissions of a compute node in this cluster
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: User
  name: k8s-node

4. Copy kubelet.kubeconfig to hdss7-22

[root@hdss7-22 conf]# scp hdss7-21:/opt/kubernetes/server/bin/conf/kubelet.kubeconfig .

[root@hdss7-22 conf]# ll

total 12

-rw-r--r-- 1 root root 2223 Nov 16 18:48 audit.yaml

-rw------- 1 root root 6199 Nov 16 23:14 kubelet.kubeconfig

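4.1.4. Prepare the pause base image

On the ops host hdss7-200

Why is the pause base image needed?

Reason: the kubelet uses the small pause image to bring up each pod on the node. The kubelet is the component that does the actual work by driving the Docker engine. In a sidecar-like pattern, it starts this small container before the application containers and lets it set up the UTS, network (NET), and IPC namespaces. Because the pause container already holds those namespaces, the pod's IP is allocated before the application containers have started.

4.1.4.1. Pull the pause image

On the ops host hdss7-200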

[root@hdss7-200 ~]# docker pull kubernetes/pause

Using default tag: latest

latest: Pulling from kubernetes/pause

4f4fb700ef54: Pull complete

b9c8ec465f6b: Pull complete

Digest: sha256:b31bfb4d0213f254d361e0079deaaebefa4f82ba7aa76ef82e90b4935ad5b105

Status: Downloaded newer image for kubernetes/pause:latest

docker.io/kubernetes/pause:latest

4.1.4.2. Push it to the private Docker registry (harbor)

4.1.4.2.1. Tag the image

[root@hdss7-200 ~]# docker tag f9d5de079539 harbor.od.com/public/pause:latest

[root@hdss7-200 ~]# docker images -a

REPOSITORY TAG IMAGE ID CREATED SIZE

...

kubernetes/pause latest f9d5de079539 5 years ago 240kB

harbor.od.com/public/pause latest f9d5de079539 5 years ago 240kB

4.1.4.2.2. Push to harbor

[root@hdss7-200 ~]# docker push harbor.od.com/public/pause:latest

The push refers to repository [harbor.od.com/public/pause]

5f70bf18a086: Mounted from public/nginx

e16a89738269: Pushed

latest: digest: sha256:b31bfb4d0213f254d361e0079deaaebefa4f82ba7aa76ef82e90b4935ad5b105 size: 938

4.1.5. Create the kubelet startup script

On hdss7-21:

[root@hdss7-21 conf]# vi /opt/kubernetes/server/bin/kubelet.sh

#!/bin/sh
./kubelet \
  --anonymous-auth=false \
  --cgroup-driver systemd \
  --cluster-dns 192.168.0.2 \
  --cluster-domain cluster.local \
  --runtime-cgroups=/systemd/system.slice \
  --kubelet-cgroups=/systemd/system.slice \
  --fail-swap-on="false" \
  --client-ca-file ./cert/ca.pem \
  --tls-cert-file ./cert/kubelet.pem \
  --tls-private-key-file ./cert/kubelet-key.pem \
  --hostname-override hdss7-21.host.com \
  --image-gc-high-threshold 20 \
  --image-gc-low-threshold 10 \
  --kubeconfig ./conf/kubelet.kubeconfig \
  --log-dir /data/logs/kubernetes/kube-kubelet \
  --pod-infra-container-image harbor.od.com/public/pause:latest \
  --root-dir /data/kubelet

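################### Parameter notes ############

--anonymous-auth=false \  // anonymous access is not allowed
--cgroup-driver systemd \  // must match the cgroup driver in docker's daemon.json
--cluster-dns 192.168.0.2 \  // cluster DNS Service IP; must lie inside the apiserver's --service-cluster-ip-range
--cluster-domain cluster.local \  // cluster DNS domain
--runtime-cgroups=/systemd/system.slice \  // cgroup the container runtime runs in
--kubelet-cgroups=/systemd/system.slice \  // cgroup the kubelet runs in
--fail-swap-on="false" \  // swap is normally turned off; it was left on here, and with this flag set to false the kubelet still starts without error
--client-ca-file ./cert/ca.pem \  // CA used to verify client certificates sent to the kubelet
--tls-cert-file ./cert/kubelet.pem \  // kubelet HTTPS serving certificate
--tls-private-key-file ./cert/kubelet-key.pem \  // private key for the serving certificate
--hostname-override hdss7-21.host.com \  // node name to register; differs per host
--image-gc-high-threshold 20 \  // disk usage percent at which image garbage collection starts
--image-gc-low-threshold 10 \  // disk usage percent that image garbage collection frees down to
--kubeconfig ./conf/kubelet.kubeconfig \  // kubeconfig used to reach the apiserver
--log-dir /data/logs/kubernetes/kube-kubelet \  // log directory
--pod-infra-container-image harbor.od.com/public/pause:latest \  // pause image used for each pod's infrastructure container
--root-dir /data/kubelet  // kubelet data directory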

Note: the kubelet startup script differs slightly on each host; on the other nodes, change --hostname-override.
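The two image GC flags are disk-usage percentages for the filesystem that holds images. Once usage reaches --image-gc-high-threshold (20%), the kubelet deletes unused images until usage falls to --image-gc-low-threshold (10%). The bash sketch below estimates how much would be freed. It assumes that reading of the kubelet's rule and uses whole GiB for simplicity. The function name is hypothetical.

```bash
#!/bin/bash
# Hypothetical model of kubelet image GC thresholds (integer GiB, integer percents).
# $1 capacity, $2 used, $3 high threshold %, $4 low threshold %.
# Prints how much image GC would aim to free: 0 below the high threshold,
# otherwise enough to bring usage back down to the low threshold.
image_gc_to_free() {
    local capacity=$1 used=$2 high=$3 low=$4
    # Usage percent derived from available space, rounding usage up.
    local usage_pct=$(( 100 - (capacity - used) * 100 / capacity ))
    if (( usage_pct < high )); then
        echo 0
    else
        echo $(( used - capacity * low / 100 ))
    fi
}
```

On the 50G disks planned for these nodes, image_gc_to_free 50 12 20 10 prints 7. GC starts at about 10 GiB used and trims back to about 5 GiB. These low values keep lab disks small, but they likely cause images to be removed and re-pulled fairly often.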

4.1.6. Check the configuration and permissions, and create the directories

On hdss7-21:

[root@hdss7-21 conf]# ls -l|grep kubelet

-rw------- 1 root root 6199 Nov 17 06:32 kubelet.kubeconfig

[root@hdss7-21 conf]# chmod +x /opt/kubernetes/server/bin/kubelet.sh

[root@hdss7-21 conf]# mkdir -p /data/logs/kubernetes/kube-kubelet /data/kubelet

4.1.7. Create the supervisor configuration

[root@hdss7-21 conf]# vi /etc/supervisord.d/kube-kubelet.ini

[program:kube-kubelet-7-21]

command=/opt/kubernetes/server/bin/kubelet.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart whenever the process exits (default: unexpected)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-kubelet/kubelet.stdout.log ; stdout log path (stderr is merged in), NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

Note: when deploying on other hosts, change the program label accordingly.

4.1.8. Start the service and check it

[root@hdss7-21 conf]# supervisorctl update

kube-kubelet-7-21: added process group

[root@hdss7-21 bin]# supervisorctl status

etcd-server-7-21 RUNNING pid 3270, uptime 0:00:46

kube-apiserver-7-21 RUNNING pid 3273, uptime 0:00:46

kube-controller-manager-7-21 RUNNING pid 3267, uptime 0:00:46

kube-kubelet-7-21 RUNNING pid 3268, uptime 0:00:46

kube-scheduler-7-21 RUNNING pid 3269, uptime 0:00:46

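I ran into a problem: the kubelet did not start.

The log showed that the docker service was not running. Start it and enable it at boot: systemctl start docker; systemctl enable docker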
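A quick way to spot a program that did not come up, as with the kubelet here, is to filter supervisorctl status for anything not in the RUNNING state. The sketch below reads the status text on stdin, so it also works on saved output. The function name not_running is hypothetical.

```bash
#!/bin/bash
# Hypothetical filter: print the names of supervisor programs that are not RUNNING.
# Reads `supervisorctl status` output on stdin (column 1 = program name, column 2 = state).
not_running() {
    awk 'NF >= 2 && $2 != "RUNNING" { print $1 }'
}
```

Usage: supervisorctl status | not_running. Empty output means every program is running. Otherwise, look at that program's stdout log under /data/logs/kubernetes/.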

4.1.9. Check the compute node

[root@hdss7-21 bin]# tailf /data/logs/kubernetes/kube-kubelet/kubelet.stdout.log

I1117 08:24:38.074479 3272 kubelet_node_status.go:72] Attempting to register node hdss7-21.host.com

E1117 08:24:38.088198 3272 kubelet.go:2248] node "hdss7-21.host.com" not found

I1117 08:24:38.088590 3272 kubelet_node_status.go:75] Successfully registered node hdss7-21.host.com

I1117 08:24:38.119351 3272 cpu_manager.go:155] [cpumanager] starting with none policy

I1117 08:24:38.119363 3272 cpu_manager.go:156] [cpumanager] reconciling every 10s

I1117 08:24:38.119382 3272 policy_none.go:42] [cpumanager] none policy: Start

I1117 08:24:38.155413 3272 kubelet.go:1822] skipping pod synchronization - container runtime status check may not have completed yet

W1117 08:24:38.374787 3272 manager.go:546] Failed to retrieve checkpoint for "kubelet_internal_checkpoint": checkpoint is not found

I1117 08:24:38.375126 3272 plugin_manager.go:116] Starting Kubelet Plugin Manager

I1117 08:24:38.690595 3272 reconciler.go:150] Reconciler: start to sync state

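4.1.10. Install, deploy, start, and check on all planned cluster hosts

Other nodes are set up the same way; the following files need small per-host adjustments, as noted above: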

/opt/kubernetes/server/bin/conf/kubelet.kubeconfig

/opt/kubernetes/server/bin/kubelet.sh

/etc/supervisord.d/kube-kubelet.ini

4.1.11. Check all compute nodes

[root@hdss7-21 bin]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

hdss7-21.host.com Ready <none> 8h v1.15.2

hdss7-22.host.com Ready <none> 8h v1.15.2

// ROLES shows <none>, so add labels; labels are one of Kubernetes' distinctive management features

[root@hdss7-21 bin]# kubectl label node hdss7-21.host.com node-role.kubernetes.io/master=

node/hdss7-21.host.com labeled

[root@hdss7-21 bin]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

hdss7-21.host.com Ready master 8h v1.15.2

hdss7-22.host.com Ready <none> 8h v1.15.2

[root@hdss7-21 bin]# kubectl label node hdss7-21.host.com node-role.kubernetes.io/node=

node/hdss7-21.host.com labeled

[root@hdss7-21 bin]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

hdss7-21.host.com Ready master,node 8h v1.15.2

hdss7-22.host.com Ready <none> 8h v1.15.2

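4.2. Deploy kube-proxy

kube-proxy connects the pod network with the cluster (Service) network.

4.2.1. Cluster plan

| Hostname | Role | IP |
|---|---|---|
| hdss7-21.host.com | kube-proxy | 10.4.7.21 |
| hdss7-22.host.com | kube-proxy | 10.4.7.22 |

Note: this document uses hdss7-21 as the example; the other compute nodes are similar.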

4.2.2. Issue the kube-proxy certificate

On the ops host hdss7-200:

4.2.2.1. Create the JSON config file for the certificate signing request (CSR)

[root@hdss7-200 certs]# vi kube-proxy-csr.json

{

"CN": "system:kube-proxy",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

]

}

4.2.2.2. Generate the kube-proxy certificate and private key

[root@hdss7-200 certs]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=client kube-proxy-csr.json |cfssl-json -bare kube-proxy-client

2019/11/17 00:58:31 [INFO] generate received request

2019/11/17 00:58:31 [INFO] received CSR

2019/11/17 00:58:31 [INFO] generating key: rsa-2048

2019/11/17 00:58:31 [INFO] encoded CSR

2019/11/17 00:58:31 [INFO] signed certificate with serial number 490532965938220149616881213802710440162344647640

2019/11/17 00:58:31 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

4.2.2.3. Check the generated certificate and private key

[root@hdss7-200 certs]# ll

-rw-r--r-- 1 root root 1005 Nov 17 00:58 kube-proxy-client.csr

-rw------- 1 root root 1675 Nov 17 00:58 kube-proxy-client-key.pem

-rw-r--r-- 1 root root 1375 Nov 17 00:58 kube-proxy-client.pem

-rw-r--r-- 1 root root 267 Nov 17 00:57 kube-proxy-csr.json

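4.2.3. Copy the certificates to each compute node and create the configuration

On hdss7-21:

4.2.3.1. Copy the certificate and private key; the private key file should have mode 600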

[root@hdss7-21 cert]# scp hdss7-200:/opt/certs/kube-proxy-client.pem .

[root@hdss7-21 cert]# scp hdss7-200:/opt/certs/kube-proxy-client-key.pem .

[root@hdss7-21 cert]# ll

total 40

-rw------- 1 root root 1675 Nov 17 09:10 kube-proxy-client-key.pem

-rw-r--r-- 1 root root 1375 Nov 17 09:10 kube-proxy-client.pem

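4.2.3.2. Create the configuration

Note: these commands must be run in the conf directory. --kubeconfig is a relative path, so the file is written to the current directory, and kube-proxy.sh later expects ./conf/kube-proxy.kubeconfig; otherwise startup fails with an error.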

4.2.3.2.1.set-cluster

[root@hdss7-21 conf]# kubectl config set-cluster myk8s \
  --certificate-authority=/opt/kubernetes/server/bin/cert/ca.pem \
  --embed-certs=true \
  --server=https://10.4.7.10:7443 \
  --kubeconfig=kube-proxy.kubeconfig

Output:

Cluster "myk8s" set.

4.2.3.2.2.set-credentials

[root@hdss7-21 conf]# kubectl config set-credentials kube-proxy \
  --client-certificate=/opt/kubernetes/server/bin/cert/kube-proxy-client.pem \
  --client-key=/opt/kubernetes/server/bin/cert/kube-proxy-client-key.pem \
  --embed-certs=true \
  --kubeconfig=kube-proxy.kubeconfig

Output:

User "kube-proxy" set.

4.2.3.2.3.set-context

[root@hdss7-21 conf]# kubectl config set-context myk8s-context \
  --cluster=myk8s \
  --user=kube-proxy \
  --kubeconfig=kube-proxy.kubeconfig

Output:

Context "myk8s-context" created.

4.2.3.2.4.use-context

[root@hdss7-21 conf]# kubectl config use-context myk8s-context --kubeconfig=kube-proxy.kubeconfig

Output:

Switched to context "myk8s-context".

4.2.3.2.5. Copy kube-proxy.kubeconfig to the conf directory on hdss7-22

[root@hdss7-22 conf]# scp hdss7-21:/opt/kubernetes/server/bin/conf/kube-proxy.kubeconfig .

4.2.4. Create the kube-proxy startup script

On hdss7-21:

4.2.4.1. Load the ipvs kernel modules

[root@hdss7-21 bin]# lsmod |grep ip_vs

[root@hdss7-21 bin]# vi /root/ipvs.sh

#!/bin/bash
ipvs_mods_dir="/usr/lib/modules/$(uname -r)/kernel/net/netfilter/ipvs"
for i in $(ls $ipvs_mods_dir | grep -o "^[^.]*")
do
    /sbin/modinfo -F filename $i &>/dev/null
    if [ $? -eq 0 ]; then
        /sbin/modprobe $i
    fi
done

[root@hdss7-21 bin]# chmod +x /root/ipvs.sh

[root@hdss7-21 bin]# sh /root/ipvs.sh

[root@hdss7-21 bin]# lsmod |grep ip_vs

ip_vs_wrr 12697 0

ip_vs_wlc 12519 0

ip_vs_sh 12688 0

ip_vs_sed 12519 0

......
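kube-proxy.sh below uses --proxy-mode=ipvs with --ipvs-scheduler=nq, so it is worth checking that two modules actually loaded: the core ip_vs module and the matching scheduler module, ip_vs_nq. The sketch below reads lsmod output on stdin. The function name and the default module list are assumptions for illustration. Extend the list if your kube-proxy version needs more, such as a conntrack module.

```bash
#!/bin/bash
# Hypothetical check: print each required module missing from `lsmod` output on stdin.
# With no arguments, the required list defaults to ip_vs and ip_vs_nq
# (ip_vs_nq backs the nq scheduler passed to kube-proxy).
missing_modules() {
    local required=("$@")
    (( ${#required[@]} )) || required=(ip_vs ip_vs_nq)
    local loaded mod
    # Skip the lsmod header line and keep only the module names.
    loaded=$(awk 'NR > 1 { print $1 }')
    for mod in "${required[@]}"; do
        grep -qx "$mod" <<< "$loaded" || echo "$mod"
    done
}
```

Usage: lsmod | missing_modules. Empty output means the modules are loaded. Otherwise rerun /root/ipvs.sh or load the missing module with modprobe.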

4.2.4.2. Create the startup script

[root@hdss7-21 bin]# vi /opt/kubernetes/server/bin/kube-proxy.sh

#!/bin/sh
./kube-proxy \
  --cluster-cidr 172.7.0.0/16 \
  --hostname-override hdss7-21.host.com \
  --proxy-mode=ipvs \
  --ipvs-scheduler=nq \
  --kubeconfig ./conf/kube-proxy.kubeconfig

4.2.5. Check the configuration and permissions, and create the log directory

[root@hdss7-22 bin]# ls -l /opt/kubernetes/server/bin/conf/|grep kube-proxy

-rw------- 1 root root 6215 Nov 17 01:22 kube-proxy.kubeconfig

[root@hdss7-22 bin]# chmod +x /opt/kubernetes/server/bin/kube-proxy.sh

[root@hdss7-22 bin]# mkdir -p /data/logs/kubernetes/kube-proxy

4.2.6. Create the supervisor configuration

[root@hdss7-21 bin]# vi /etc/supervisord.d/kube-proxy.ini

[program:kube-proxy-7-21]

command=/opt/kubernetes/server/bin/kube-proxy.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart whenever the process exits (default: unexpected)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-proxy/proxy.stdout.log ; stdout log path (stderr is merged in), NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

4.2.7. Start the service and check it

[root@hdss7-21 bin]# supervisorctl update

[root@hdss7-21 bin]# supervisorctl status

etcd-server-7-21 RUNNING pid 3270, uptime 1:22:21

kube-apiserver-7-21 RUNNING pid 3273, uptime 1:22:21

kube-controller-manager-7-21 RUNNING pid 3267, uptime 1:22:21

kube-kubelet-7-21 RUNNING pid 3268, uptime 1:22:21

kube-proxy-7-21 RUNNING pid 19580, uptime 0:00:31

kube-scheduler-7-21 RUNNING pid 3269, uptime 1:22:21
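With `startsecs=30` in the ini above, supervisor reports the program as STARTING for its first 30 seconds. A RUNNING state, like kube-proxy-7-21 at uptime 0:00:31, therefore means it stayed up past that window. The following minimal sketch pulls out the state of one program from the status listing so that the check can be scripted. The function name `program_state` is hypothetical. `supervisorctl status <name>` can also be used to narrow the output directly.

```shell
#!/bin/sh
# Hypothetical helper: print the state (RUNNING, STARTING, FATAL, ...) of one
# program from `supervisorctl status` output read on stdin, or NOT_FOUND.
program_state() {
  awk -v name="$1" '
    $1 == name { print $2; found = 1 }
    END { if (!found) print "NOT_FOUND" }
  '
}

# Usage (program name as defined in /etc/supervisord.d/kube-proxy.ini):
#   supervisorctl status | program_state kube-proxy-7-21
```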

[root@hdss7-21 bin]# yum install ipvsadm -y

[root@hdss7-21 bin]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 192.168.0.1:443 nq

-> 10.4.7.21:6443 Masq 1 0 0

-> 10.4.7.22:6443 Masq 1 0 0

[root@hdss7-21 bin]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 192.168.0.1 <none> 443/TCP 7h11m
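The ipvsadm output shows the `kubernetes` Service cluster IP from `kubectl get svc` (192.168.0.1:443) load-balanced with the nq scheduler across both kube-apiservers on port 6443. The minimal sketch below lists the real servers behind one virtual service, so you can confirm that both apiservers are present. The function name `ipvs_backends` is hypothetical. The parsing assumes the standard `ipvsadm -Ln` layout shown above, with service lines starting with the protocol and backend lines starting with `->`.

```shell
#!/bin/sh
# Hypothetical helper: list the real servers of one IPVS virtual service from
# `ipvsadm -Ln` output on stdin. $1 = protocol (e.g. TCP), $2 = VIP:PORT.
ipvs_backends() {
  awk -v proto="$1" -v vip="$2" '
    # A service line starts a new block; remember whether it is the one we want.
    /^(TCP|UDP|SCTP|FWM) / { in_svc = ($1 == proto && $2 == vip); next }
    # Backend lines ("-> addr:port ...") belong to the most recent service.
    in_svc && $1 == "->" { print $2 }
  '
}

# Usage on hdss7-21 (expects 10.4.7.21:6443 and 10.4.7.22:6443):
#   ipvsadm -Ln | ipvs_backends TCP 192.168.0.1:443
```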

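4.2.8. Install, deploy, start and check kube-proxy on every planned cluster host

The steps on hdss7-22 are essentially the same as above, with two host-specific changes:

In /etc/supervisord.d/kube-proxy.ini

change the program name to [program:kube-proxy-7-22]

In /opt/kubernetes/server/bin/kube-proxy.sh

change the flag to --hostname-override hdss7-22.host.com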
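If the two files are copied over from hdss7-21, the two host-specific edits can be made with `sed` instead of by hand. The sketch below assumes the only host-specific strings are `hdss7-21.host.com` in kube-proxy.sh and the `kube-proxy-7-21` program name in kube-proxy.ini. It relies on GNU `sed -i` as shipped with CentOS 7. The function name `retarget_kube_proxy_file` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: rewrite a copy of an hdss7-21 kube-proxy file for
# another host, in place. $1 = file, $2 = host id (e.g. 22 for hdss7-22).
retarget_kube_proxy_file() {
  file=$1
  host_id=$2
  sed -i \
    -e "s/hdss7-21\.host\.com/hdss7-${host_id}.host.com/g" \
    -e "s/kube-proxy-7-21/kube-proxy-7-${host_id}/g" \
    "$file"
}

# Usage on hdss7-22, after copying both files from hdss7-21:
#   retarget_kube_proxy_file /opt/kubernetes/server/bin/kube-proxy.sh 22
#   retarget_kube_proxy_file /etc/supervisord.d/kube-proxy.ini 22
```

The log path `/data/logs/kubernetes/kube-proxy/` contains `kube-proxy/`, not `kube-proxy-7-21`, so it is left unchanged, which matches the tutorial's layout.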

5. Verify the Kubernetes cluster

5.1. Create a resource manifest on any compute node

hdss7-21 is used here.

[root@hdss7-21 ~]# vi /root/nginx-ds.yaml

apiVersion: extensions/v1beta1
kind: DaemonSet
metadata:
  name: nginx-ds
spec:
  template:
    metadata:
      labels:
        app: nginx-ds
    spec:
      containers:
      - name: my-nginx
        image: harbor.od.com/public/nginx:v1.7.9
        ports:
        - containerPort: 80
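The manifest above uses `extensions/v1beta1`, which the v1.15.2 cluster in this tutorial still serves. That is why the create command below reports `daemonset.extensions/nginx-ds created`. DaemonSet was removed from that API group in Kubernetes 1.16. On newer clusters, write the same DaemonSet against `apps/v1`, which also requires an explicit `spec.selector` that matches the pod template labels. The sketch below shows that variant wrapped in a hypothetical shell function so that it can be piped to kubectl. The image and names are unchanged from the original manifest.

```shell
#!/bin/sh
# Hypothetical helper: print an apps/v1 version of /root/nginx-ds.yaml.
# apps/v1 requires spec.selector, and it must match the template labels.
nginx_ds_manifest() {
  cat <<'EOF'
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: nginx-ds
spec:
  selector:
    matchLabels:
      app: nginx-ds
  template:
    metadata:
      labels:
        app: nginx-ds
    spec:
      containers:
      - name: my-nginx
        image: harbor.od.com/public/nginx:v1.7.9
        ports:
        - containerPort: 80
EOF
}

# Usage on Kubernetes 1.16 or later:
#   nginx_ds_manifest | kubectl create -f -
```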

5.2. Apply the manifest and check the result

5.2.1. On hdss7-21

[root@hdss7-21 bin]# kubectl create -f /root/nginx-ds.yaml

Output:

daemonset.extensions/nginx-ds created

[root@hdss7-21 bin]# kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-ds-mcvxt 1/1 Running 0 8h

nginx-ds-zsnz9 1/1 Running 0 8h

[root@hdss7-21 bin]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-ds-mcvxt 1/1 Running 0 8h 172.7.21.2 hdss7-21.host.com <none> <none>

nginx-ds-zsnz9 1/1 Running 0 8h 172.7.22.2 hdss7-22.host.com <none> <none>

[root@hdss7-21 bin]# curl 172.7.21.2

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

[root@hdss7-21 bin]# curl 172.7.22.2

^C

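// curl cannot reach the pod on the other host?

This is because Docker containers on different hosts cannot yet communicate with each other. The next part fixes this with flannel.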
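Because a plain `curl` against an unreachable pod IP simply hangs until Ctrl-C, a timeout makes this cross-host check quicker to repeat, for example after flannel is installed. The following minimal sketch reads the pod IPs from `kubectl get pods -o wide`, using the IP column (6th), and probes each one with `curl -m 3`. The 3-second timeout and the function names `pod_ips` and `probe_pods` are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: extract pod IPs (6th column) from
# `kubectl get pods -o wide` output on stdin, skipping the header and
# pods that have no IP yet.
pod_ips() {
  awk 'NR > 1 && $6 != "<none>" { print $6 }'
}

# Probe every pod IP over HTTP with a 3-second limit instead of hanging.
probe_pods() {
  for ip in $(kubectl get pods -o wide | pod_ips); do
    if curl -s -o /dev/null -m 3 "http://$ip"; then
      echo "$ip reachable"
    else
      echo "$ip unreachable"
    fi
  done
}

# Usage on hdss7-21 or hdss7-22 (before flannel, only the local pod answers):
#   probe_pods
```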

5.2.2. On hdss7-22

[root@hdss7-22 bin]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-ds-mcvxt 1/1 Running 0 6m45s 172.7.21.2 hdss7-21.host.com <none> <none>

nginx-ds-zsnz9 1/1 Running 0 6m45s 172.7.22.2 hdss7-22.host.com <none> <none>

[root@hdss7-22 bin]# curl 172.7.22.2

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

[root@hdss7-22 bin]# curl 172.7.21.2

^C

// curl cannot reach the other host's pod here either; see the explanation above

5.2.3. Check that the first part of the Kubernetes cluster is set up correctly

[root@hdss7-21 bin]# kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-1 Healthy {"health": "true"}

etcd-2 Healthy {"health": "true"}

etcd-0 Healthy {"health": "true"}

[root@hdss7-21 bin]# kubectl get node

NAME STATUS ROLES AGE VERSION

hdss7-21.host.com Ready master,node 9h v1.15.2

hdss7-22.host.com Ready master,node 10h v1.15.2

[root@hdss7-21 bin]# kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-ds-mcvxt 1/1 Running 0 8h

nginx-ds-zsnz9 1/1 Running 0 8h
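The `kubectl get cs` and `kubectl get node` checks both come down to "is the STATUS column (2nd) what we expect on every row". The following minimal sketch turns that into a scripted check that prints the NAME of any row that is not in the expected state. The function name `rows_not_in_state` is hypothetical. A node that shows a compound status such as `Ready,SchedulingDisabled` would also be reported, which is usually worth a look anyway.

```shell
#!/bin/sh
# Hypothetical helper: from kubectl table output on stdin, print the NAME of
# every row (header skipped) whose 2nd column differs from the expected state $1.
rows_not_in_state() {
  awk -v want="$1" 'NR > 1 && $2 != want { print $1 }'
}

# Usage: both commands print nothing when the cluster looks healthy.
#   kubectl get cs   | rows_not_in_state Healthy
#   kubectl get node | rows_not_in_state Ready
```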

5.2.4. FAQ

5.2.4.1. After kube-proxy starts, ipvs mode does not work, and the log shows the following errors:

E1111 12:27:38.031263 23086 server_others.go:259] can't determine whether to use ipvs proxy, error: error getting ipset version, error: executable file not found in $PATH

W1111 12:27:38.049355 23086 node.go:113] Failed to retrieve node info: Get https://10.4.7.10:7443/api/v1/nodes/hdss7-21.host.com: EOF

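Install ipset with yum install ipset -y, then restart the service through supervisor (e.g. supervisorctl restart kube-proxy-7-21). This resolves the problem.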
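The error above is kube-proxy failing to find the `ipset` executable in `$PATH`. A quick check for required binaries before starting kube-proxy can catch this earlier. The following minimal sketch uses the POSIX `command -v` built-in. The function name `missing_commands` is hypothetical, and the list of commands to check is your choice.

```shell
#!/bin/sh
# Hypothetical pre-flight check: print each named command that is not found in $PATH.
missing_commands() {
  for c in "$@"; do
    command -v "$c" >/dev/null 2>&1 || echo "$c"
  done
}

# Usage before starting kube-proxy in ipvs mode (ipset is what the error above
# complains about; ipvsadm is only needed for the manual checks in 4.2.7):
#   missing_commands ipset ipvsadm
```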

