KubeSphere notes 1


[root@zxk ~]# cd /root/kubesphere/
[root@zxk kubesphere]# ll
total 23100
-rwxrwxrwx 1 root root 11963040 Sep 22 01:56 kk
drwxr-xr-x 3 root root     4096 Sep 22 01:55 kubekey
-rw-r--r-- 1 root root 11634102 Sep 22 02:14 kubekey-v1.2.0-linux-amd64.tar.gz
-rw-r--r-- 1 root root    24235 Sep 22 02:14 README.md
-rw-r--r-- 1 root root    24164 Sep 22 02:14 README_zh-CN.md

[root@zxk kubesphere]# ./kk create cluster --with-kubernetes v1.20.10 --with-kubesphere v3.2.0
+------+------+------+---------+----------+-------+-------+-----------+---------+------------+-------------+------------------+--------------+
| name | sudo | curl | openssl | ebtables | socat | ipset | conntrack | docker  | nfs client | ceph client | glusterfs client | time         |
+------+------+------+---------+----------+-------+-------+-----------+---------+------------+-------------+------------------+--------------+
| zxk  | y    | y    | y       | y        | y     | y     | y         | 20.10.9 | y          |             |                  | CST 02:21:23 |
+------+------+------+---------+----------+-------+-------+-----------+---------+------------+-------------+------------------+--------------+

This is a simple check of your environment.
Before installation, you should ensure that your machines meet all requirements specified at
https://github.com/kubesphere/kubekey#requirements-and-recommendations

Continue this installation? [yes/no]: yes
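In the precheck table, `y` marks a tool KubeKey found on the node; the empty `ceph client` and `glusterfs client` cells appear to mean those clients were not detected, which only matters if you plan to use Ceph or GlusterFS storage. To run a similar check by hand before answering `yes`, here is a minimal bash sketch. The helper `missing_tools` and the command list in the usage line are my own assumptions, not KubeKey code, and KubeKey's own check may look for different binaries.

```shell
#!/usr/bin/env bash
# Sketch (not KubeKey code): report which commands are missing on this host.

# missing_tools: print every argument that is not found on PATH, one per line,
# and return the number of missing tools (0 means everything is present).
missing_tools() {
  local tool missing=0
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      echo "$tool"
      missing=$((missing + 1))
    fi
  done
  return "$missing"
}

# Usage on the target node (tool list assumed from the table columns):
#   missing_tools sudo curl openssl ebtables socat ipset conntrack docker
```

An empty output means every listed command was found; anything printed still needs installing through your distribution's package manager.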

INFO[02:21:25 CST] Downloading Installation Files
INFO[02:21:25 CST] Downloading kubeadm ...
INFO[02:21:26 CST] Downloading kubelet ...
INFO[02:21:26 CST] Downloading kubectl ...
INFO[02:21:26 CST] Downloading helm ...
INFO[02:21:27 CST] Downloading kubecni ...
INFO[02:21:27 CST] Downloading etcd ...
INFO[02:21:27 CST] Downloading docker ...
INFO[02:21:27 CST] Downloading crictl ...
INFO[02:21:27 CST] Configuring operating system ...
[zxk 172.16.188.135] MSG:
vm.swappiness = 1
kernel.sysrq = 1
net.ipv4.neigh.default.gc_stale_time = 120
net.ipv4.conf.all.rp_filter = 0
net.ipv4.conf.default.rp_filter = 0
net.ipv4.conf.default.arp_announce = 2
net.ipv4.conf.lo.arp_announce = 2
net.ipv4.conf.all.arp_announce = 2
net.ipv4.tcp_max_tw_buckets = 5000
net.ipv4.tcp_syncookies = 1
net.ipv4.tcp_max_syn_backlog = 1024
net.ipv4.tcp_synack_retries = 2
net.ipv4.tcp_slow_start_after_idle = 0
net.ipv4.ip_forward = 1
net.bridge.bridge-nf-call-arptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_local_reserved_ports = 30000-32767
vm.max_map_count = 262144
fs.inotify.max_user_instances = 524288
no crontab for root
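KubeKey applies these kernel parameters on the node (the log does not show which file it writes them to). To confirm they are actually live, here is a minimal bash sketch: feed it the `key = value` lines above and it compares each one with the value under `/proc/sys`. The helper names are hypothetical, and the dot-to-slash mapping assumes keys without embedded dots, which holds for every key in this log.

```shell
#!/usr/bin/env bash
# Sketch (not KubeKey code): compare "key = value" sysctl lines read on stdin
# with the live values under /proc/sys.

# sysctl_path: map a dotted key to its /proc/sys file.
# Assumes the key has no literal dots of its own (e.g. no VLAN interface names).
sysctl_path() {
  printf '/proc/sys/%s\n' "$(printf '%s' "$1" | tr '.' '/')"
}

# normalize: squeeze whitespace runs (tabs included) to one space, trim both ends.
normalize() {
  tr -s '[:space:]' ' ' | sed 's/^ //; s/ $//'
}

# check_sysctl: print one line per key that is unreadable or differs and
# return 1 if any problem was found. Lines without "=" are skipped, so the
# "[zxk ...] MSG:" header and "no crontab for root" can be pasted in too.
check_sysctl() {
  local line key want have path rc=0
  while IFS= read -r line; do
    case "$line" in *=*) ;; *) continue ;; esac
    key="$(printf '%s' "${line%%=*}" | normalize)"
    want="$(printf '%s' "${line#*=}" | normalize)"
    path="$(sysctl_path "$key")"
    if [ ! -r "$path" ]; then
      echo "unreadable: $key"
      rc=1
      continue
    fi
    have="$(normalize < "$path")"
    if [ "$have" != "$want" ]; then
      echo "mismatch: $key want '$want' have '$have'"
      rc=1
    fi
  done
  return "$rc"
}
```

With the block above pasted into `check_sysctl <<'EOF' ... EOF`, no output means every value matches. The `net.bridge.*` keys only exist once the `br_netfilter` kernel module is loaded, so an `unreadable` result for them points there first.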

INFO[02:21:29 CST] Get cluster status
INFO[02:21:29 CST] Installing Container Runtime ...
INFO[02:21:29 CST] Start to download images on all nodes
[zxk] Downloading image: kubesphere/pause:3.2
[zxk] Downloading image: kubesphere/kube-apiserver:v1.20.10
[zxk] Downloading image: kubesphere/kube-controller-manager:v1.20.10
[zxk] Downloading image: kubesphere/kube-scheduler:v1.20.10
[zxk] Downloading image: kubesphere/kube-proxy:v1.20.10
[zxk] Downloading image: coredns/coredns:1.6.9
[zxk] Downloading image: kubesphere/k8s-dns-node-cache:1.15.12
[zxk] Downloading image: calico/kube-controllers:v3.20.0
[zxk] Downloading image: calico/cni:v3.20.0
[zxk] Downloading image: calico/node:v3.20.0
[zxk] Downloading image: calico/pod2daemon-flexvol:v3.20.0
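These are the images KubeKey pulled for Kubernetes v1.20.10 and Calico v3.20.0; this step took a little over two minutes here. If pulls are slow or fail, the same images can be fetched ahead of time. A bash sketch, assuming Docker is the container runtime as in this log; `prepull_images`, `K8S_IMAGES` and the `DRY_RUN` switch are illustrative names, not KubeKey options.

```shell
#!/usr/bin/env bash
# Sketch: pre-pull the images from the log above with Docker.
# The list is copied from the log; helper names are illustrative only.

K8S_IMAGES=(
  kubesphere/pause:3.2
  kubesphere/kube-apiserver:v1.20.10
  kubesphere/kube-controller-manager:v1.20.10
  kubesphere/kube-scheduler:v1.20.10
  kubesphere/kube-proxy:v1.20.10
  coredns/coredns:1.6.9
  kubesphere/k8s-dns-node-cache:1.15.12
  calico/kube-controllers:v3.20.0
  calico/cni:v3.20.0
  calico/node:v3.20.0
  calico/pod2daemon-flexvol:v3.20.0
)

# prepull_images: pull every image given as an argument. With DRY_RUN=1 it
# only prints the docker commands. Returns 1 if any pull failed.
prepull_images() {
  local img rc=0
  for img in "$@"; do
    if [ "${DRY_RUN:-0}" = 1 ]; then
      echo "docker pull $img"
    elif ! docker pull "$img"; then
      echo "pull failed: $img" >&2
      rc=1
    fi
  done
  return "$rc"
}

# Usage on a node: prepull_images "${K8S_IMAGES[@]}"
```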

INFO[02:23:44 CST] Getting etcd status
[zxk 172.16.188.135] MSG:
Configuration file will be created
INFO[02:23:44 CST] Generating etcd certs
INFO[02:23:45 CST] Synchronizing etcd certs
INFO[02:23:45 CST] Creating etcd service
Push /root/kubesphere/kubekey/v1.20.10/amd64/etcd-v3.4.13-linux-amd64.tar.gz to 172.16.188.135:/tmp/kubekey/etcd-v3.4.13-linux-amd64.tar.gz Done
INFO[02:23:46 CST] Starting etcd cluster
INFO[02:23:46 CST] Refreshing etcd configuration
[zxk 172.16.188.135] MSG:
Created symlink from /etc/systemd/system/multi-user.target.wants/etcd.service to /etc/systemd/system/etcd.service.
INFO[02:23:51 CST] Backup etcd data regularly
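KubeKey runs etcd as a separate systemd service (which is why kubeadm later reports `External etcd mode`) and sets up a regular backup. To take a one-off snapshot by hand, here is a bash sketch around `etcdctl snapshot save`. The certificate directory `/etc/ssl/etcd/ssl` and the `admin-<node>.pem` file naming are assumptions about KubeKey's layout, as is `etcdctl` being on `PATH`; check them on your node (or set `ETCD_SSL_DIR`) before relying on this.

```shell
#!/usr/bin/env bash
# Sketch: one-off etcd v3 snapshot of the single-node etcd started above.
# ASSUMPTIONS: etcdctl is on PATH; client certs live in /etc/ssl/etcd/ssl as
# ca.pem, admin-<node>.pem and admin-<node>-key.pem (override ETCD_SSL_DIR).
# DRY_RUN=1 only prints the command instead of running it.

etcd_snapshot() {
  local node="${1:?usage: etcd_snapshot NODE ENDPOINT OUTFILE}"
  local endpoint="${2:?missing endpoint, e.g. https://172.16.188.135:2379}"
  local out="${3:?missing output file}"
  local ssl_dir="${ETCD_SSL_DIR:-/etc/ssl/etcd/ssl}"
  local cmd=(etcdctl "--endpoints=$endpoint"
    "--cacert=$ssl_dir/ca.pem"
    "--cert=$ssl_dir/admin-$node.pem"
    "--key=$ssl_dir/admin-$node-key.pem"
    snapshot save "$out")
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "ETCDCTL_API=3 ${cmd[*]}"
  else
    ETCDCTL_API=3 "${cmd[@]}"
  fi
}

# Usage: etcd_snapshot zxk https://172.16.188.135:2379 /var/backups/etcd-$(date +%F).db
```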

INFO[02:23:58 CST] Installing kube binaries
Push /root/kubesphere/kubekey/v1.20.10/amd64/kubeadm to 172.16.188.135:/tmp/kubekey/kubeadm Done
Push /root/kubesphere/kubekey/v1.20.10/amd64/kubelet to 172.16.188.135:/tmp/kubekey/kubelet Done
Push /root/kubesphere/kubekey/v1.20.10/amd64/kubectl to 172.16.188.135:/tmp/kubekey/kubectl Done
Push /root/kubesphere/kubekey/v1.20.10/amd64/helm to 172.16.188.135:/tmp/kubekey/helm Done
Push /root/kubesphere/kubekey/v1.20.10/amd64/cni-plugins-linux-amd64-v0.9.1.tgz to 172.16.188.135:/tmp/kubekey/cni-plugins-linux-amd64-v0.9.1.tgz Done
INFO[02:24:02 CST] Initializing kubernetes cluster
[zxk 172.16.188.135] MSG:
W0922 02:24:02.970938 14550 utils.go:69] The recommended value for "clusterDNS" in "KubeletConfiguration" is: [10.233.0.10]; the provided value is: [169.254.25.10]
[init] Using Kubernetes version: v1.20.10
[preflight] Running pre-flight checks
[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.9. Latest validated version: 19.03
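kubeadm for v1.20 has only validated Docker up to the 19.03 line, so with Docker 20.10.9 it prints this preflight warning but keeps going; the control plane still initializes further down. A bash sketch to tell in advance whether a Docker version is newer than that validated line. It uses `sort -V` for version ordering, and `version_ge` and `newer_than_validated` are hypothetical helpers.

```shell
#!/usr/bin/env bash
# Sketch: is a Docker version newer than the newest line kubeadm validated?
# 19.03 is taken from the warning above; helper names are hypothetical.

# version_ge A B: succeed if A >= B in version order (needs `sort -V`).
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# newer_than_validated VERSION [VALIDATED]: compare only major.minor,
# assuming kubeadm validates whole release lines such as 19.03.x.
newer_than_validated() {
  local validated="${2:-19.03}" mm
  mm="$(printf '%s\n' "$1" | cut -d. -f1,2)"
  [ "$mm" != "$validated" ] && version_ge "$mm" "$validated"
}

# Usage:
#   v="$(docker version --format '{{.Server.Version}}')"
#   newer_than_validated "$v" && echo "Docker $v is newer than 19.03: expect this warning"
```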

[preflight] Pulling images required for setting up a Kubernetes cluster
[preflight] This might take a minute or two, depending on the speed of your internet connection
[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'
[certs] Using certificateDir folder "/etc/kubernetes/pki"
[certs] Generating "ca" certificate and key
[certs] Generating "apiserver" certificate and key
[certs] apiserver serving cert is signed for DNS names [kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local lb.kubesphere.local localhost zxk zxk.cluster.local] and IPs [10.233.0.1 172.16.188.135 127.0.0.1]
[certs] Generating "apiserver-kubelet-client" certificate and key
[certs] Generating "front-proxy-ca" certificate and key
[certs] Generating "front-proxy-client" certificate and key
[certs] External etcd mode: Skipping etcd/ca certificate authority generation
[certs] External etcd mode: Skipping etcd/server certificate generation
[certs] External etcd mode: Skipping etcd/peer certificate generation
[certs] External etcd mode: Skipping etcd/healthcheck-client certificate generation
[certs] External etcd mode: Skipping apiserver-etcd-client certificate generation
[certs] Generating "sa" key and public key
[kubeconfig] Using kubeconfig folder "/etc/kubernetes"
[kubeconfig] Writing "admin.conf" kubeconfig file
[kubeconfig] Writing "kubelet.conf" kubeconfig file
[kubeconfig] Writing "controller-manager.conf" kubeconfig file
[kubeconfig] Writing "scheduler.conf" kubeconfig file
[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
[kubelet-start] Starting the kubelet
[control-plane] Using manifest folder "/etc/kubernetes/manifests"
[control-plane] Creating static Pod manifest for "kube-apiserver"
[control-plane] Creating static Pod manifest for "kube-controller-manager"
[control-plane] Creating static Pod manifest for "kube-scheduler"
[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s
[apiclient] All control plane components are healthy after 19.502989 seconds
[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace
[kubelet] Creating a ConfigMap "kubelet-config-1.20" in namespace kube-system with the configuration for the kubelets in the cluster
[upload-certs] Skipping phase. Please see --upload-certs
[mark-control-plane] Marking the node zxk as control-plane by adding the labels "node-role.kubernetes.io/master=''" and "node-role.kubernetes.io/control-plane='' (deprecated)"
[mark-control-plane] Marking the node zxk as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]
[bootstrap-token] Using token: qtkjt2.vvl7fq5ldhm83xa2
[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles
[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes
[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials
[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token
[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster
[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace
[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key
[addons] Applied essential addon: CoreDNS
[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf
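The three regular-user commands above can be wrapped in one function so they are easy to rerun. A bash sketch; `setup_kubeconfig` is a hypothetical name, and the `sudo` branch is only taken when the current user cannot read `admin.conf` (kubeadm normally writes it readable by root only). The `chmod 600` is my own addition, since the file carries cluster-admin credentials. As root, the `export KUBECONFIG=...` line is enough.

```shell
#!/usr/bin/env bash
# Sketch of the kubeconfig steps above as one function (hypothetical name).
# SRC defaults to kubeadm's admin kubeconfig.

setup_kubeconfig() {
  local src="${1:-/etc/kubernetes/admin.conf}"
  local dest="$HOME/.kube/config"
  mkdir -p "$HOME/.kube"
  if [ -r "$src" ]; then
    cp -i "$src" "$dest"
  else
    # admin.conf is not readable by this user: copy with sudo, then take ownership
    sudo cp -i "$src" "$dest"
    sudo chown "$(id -u):$(id -g)" "$dest"
  fi
  chmod 600 "$dest"   # keep the admin credentials private to this user
}

# Usage (as a regular user): setup_kubeconfig && kubectl get nodes
```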

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of control-plane nodes by copying certificate authorities
and service account keys on each node and then running the following as root:

kubeadm join lb.kubesphere.local:6443 --token qtkjt2.vvl7fq5ldhm83xa2 \
    --discovery-token-ca-cert-hash sha256:091e762bbe17d40bff8eead561419ecc7e3f802d3b86ebb96b5d9ed2ce4f0e61 \
    --control-plane

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join lb.kubesphere.local:6443 --token qtkjt2.vvl7fq5ldhm83xa2 \
    --discovery-token-ca-cert-hash sha256:091e762bbe17d40bff8eead561419ecc7e3f802d3b86ebb96b5d9ed2ce4f0e61
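The bootstrap token in these commands expires (kubeadm's default token TTL is 24 hours), so after a day they no longer work. On the control-plane node, `kubeadm token create --print-join-command` prints a fresh worker join command, and the CA hash can be recomputed with the `openssl` pipeline from the kubeadm documentation. A bash sketch of both; `ca_cert_hash` and `join_cmd` are hypothetical helpers. For a KubeKey-managed cluster, adding nodes normally goes through KubeKey itself (`./kk add nodes -f <config>`) so its configuration stays in sync; the raw kubeadm route is shown only because the log prints it.

```shell
#!/usr/bin/env bash
# Sketch: rebuild the join commands above once the bootstrap token has expired.
# Helper names are hypothetical; run on the control-plane node.

# ca_cert_hash: sha256 of the cluster CA public key, via the openssl pipeline
# from the kubeadm docs (assumes an RSA CA key, kubeadm's default in v1.20).
ca_cert_hash() {
  local ca="${1:-/etc/kubernetes/pki/ca.crt}"
  printf 'sha256:%s\n' "$(openssl x509 -pubkey -in "$ca" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex | sed 's/^.* //')"
}

# join_cmd ENDPOINT TOKEN HASH [worker|control-plane]: print a kubeadm join command.
join_cmd() {
  local endpoint="$1" token="$2" hash="$3" role="${4:-worker}"
  local cmd="kubeadm join $endpoint --token $token --discovery-token-ca-cert-hash $hash"
  if [ "$role" = control-plane ]; then
    cmd="$cmd --control-plane"
  fi
  echo "$cmd"
}

# Usage:
#   token="$(kubeadm token create)"    # new token, 24h TTL by default
#   join_cmd lb.kubesphere.local:6443 "$token" "$(ca_cert_hash)"
```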

[zxk 172.16.188.135] MSG:
node/zxk untainted
[zxk 172.16.188.135] MSG:
node/zxk labeled
[zxk 172.16.188.135] MSG:
service "kube-dns" deleted
[zxk 172.16.188.135] MSG:
service/coredns created
Warning: resource clusterroles/system:coredns is missing the kubectl.kubernetes.io/last-applied-configuration annotation which is required by kubectl apply. kubectl apply should only be used on resources created declaratively by either kubectl create --save-config or kubectl apply. The missing annotation will be patched automatically.
clusterrole.rbac.authorization.k8s.io/system:coredns configured
[zxk 172.16.188.135] MSG:
serviceaccount/nodelocaldns created
daemonset.apps/nodelocaldns created
[zxk 172.16.188.135] MSG:
configmap/nodelocaldns created

INFO[02:24:50 CST] Get cluster status
INFO[02:24:51 CST] Joining nodes to cluster
INFO[02:24:51 CST] Deploying network plugin ...
[zxk 172.16.188.135] MSG:
configmap/calico-config created
customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/blockaffinities.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/ipamblocks.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/ipamconfigs.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/ipamhandles.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/kubecontrollersconfigurations.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/networksets.crd.projectcalico.org created
clusterrole.rbac.authorization.k8s.io/calico-kube-controllers created
clusterrolebinding.rbac.authorization.k8s.io/calico-kube-controllers created
clusterrole.rbac.authorization.k8s.io/calico-node created
clusterrolebinding.rbac.authorization.k8s.io/calico-node created
daemonset.apps/calico-node created
serviceaccount/calico-node created
deployment.apps/calico-kube-controllers created
serviceaccount/calico-kube-controllers created
poddisruptionbudget.policy/calico-kube-controllers created
[zxk 172.16.188.135] MSG:
storageclass.storage.k8s.io/local created
serviceaccount/openebs-maya-operator created
clusterrole.rbac.authorization.k8s.io/openebs-maya-operator created
clusterrolebinding.rbac.authorization.k8s.io/openebs-maya-operator created
deployment.apps/openebs-localpv-provisioner created

INFO[02:24:52 CST] Deploying KubeSphere ...
v3.2.0
[zxk 172.16.188.135] MSG:
namespace/kubesphere-system created
namespace/kubesphere-monitoring-system created
[zxk 172.16.188.135] MSG:
secret/kube-etcd-client-certs created
[zxk 172.16.188.135] MSG:
namespace/kubesphere-system unchanged
serviceaccount/ks-installer unchanged
customresourcedefinition.apiextensions.k8s.io/clusterconfigurations.installer.kubesphere.io unchanged
clusterrole.rbac.authorization.k8s.io/ks-installer unchanged
clusterrolebinding.rbac.authorization.k8s.io/ks-installer unchanged
deployment.apps/ks-installer unchanged
clusterconfiguration.installer.kubesphere.io/ks-installer created

#####################################################

### Welcome to KubeSphere! ###

#####################################################

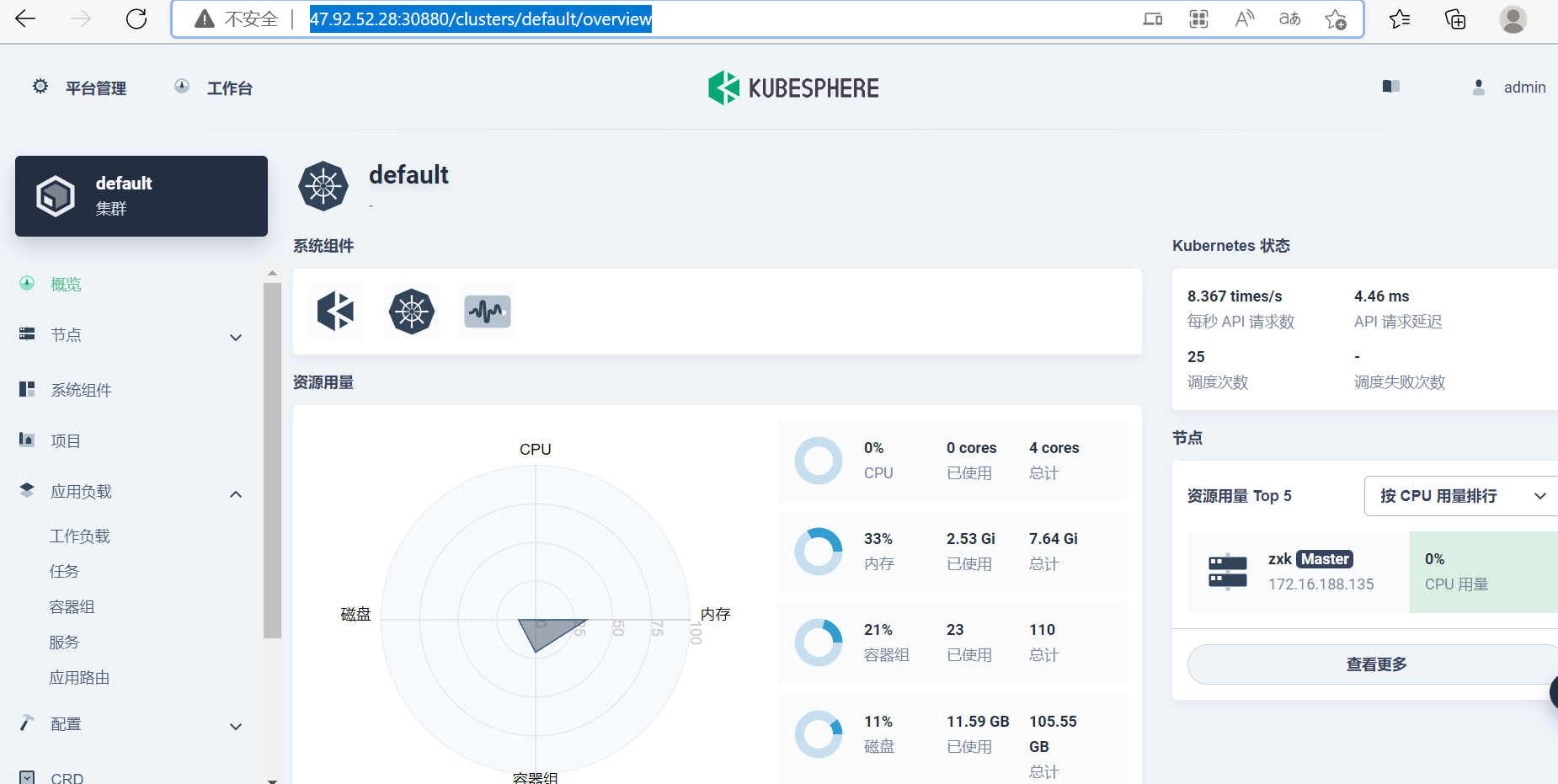

Console: http://172.16.188.135:30880

Account: admin

Password: P@88w0rd

NOTES:

1. After you log into the console, please check the

monitoring status of service components in

"Cluster Management". If any service is not

ready, please wait patiently until all components

are up and running.

2. Please change the default password after login.

#####################################################

https://kubesphere.io 2022-09-22 02:30:23

#####################################################

INFO[02:30:26 CST] Installation is complete.

Please check the result using the command:
kubectl logs -n kubesphere-system $(kubectl get pod -n kubesphere-system -l app=ks-install -o jsonpath='{.items[0].metadata.name}') -f
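Besides following the ks-installer log, it helps to see which pods are still not ready, since the NOTES say to wait until all components are up. A bash/awk sketch that filters `kubectl get pods -A --no-headers` output; it assumes the default column order of that table (NAMESPACE, NAME, READY, STATUS, ...), and `not_ready_pods` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Sketch: list pods that are not ready yet, reading
# `kubectl get pods -A --no-headers` output on stdin.
# Assumed columns: NAMESPACE NAME READY STATUS RESTARTS AGE.

not_ready_pods() {
  awk '{
    split($3, ready, "/")                  # READY column, e.g. "0/1"
    if ($4 == "Completed") next            # finished Job pods are fine
    if ($4 != "Running" || ready[1] != ready[2])
      print $1 "/" $2 " " $4 " " $3
  }'
}

# Usage: block until every pod is ready
#   until [ -z "$(kubectl get pods -A --no-headers | not_ready_pods)" ]; do sleep 15; done
```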
