Installing Kafka with Docker

1. Prepare the zookeeper/kafka/kafka-console images

Omitted.

2. Configure the docker-compose.yml file

```yaml
version: '3.9'

services:
  zookeeper:
    image: bitnami/zookeeper:3.9
    container_name: zookeeper
    ports:
      - "2181:2181"
      - "2888:2888"
      - "3888:3888"
    environment:
      ZOO_MY_ID: 1
      ZOO_SERVERS: zookeeper:2888:3888
      ZOO_ENABLE_AUTH: "yes"
      ZOO_SERVER_AUTH_SCHEME: "digest"
      ZOO_DIGEST_USERNAME: "kafka-zk"
      ZOO_DIGEST_PASSWORD: "password.zk"
    volumes:
      - /container/mnt/zookeeper/data:/data
      - /container/mnt/zookeeper/datalog:/datalog
    networks:
      - kafka-zk-network
    restart: always

  kafka:
    image: bitnami/kafka:3.6.0
    container_name: kafka
    ports:
      # container port 9092 is exposed externally as 24001
      - "24001:9092"
    environment:
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://<external-IP>:24001
      KAFKA_LISTENER_SECURITY_PROTOCOL_MAP: PLAINTEXT:PLAINTEXT,PLAINTEXT_HOST:PLAINTEXT
      KAFKA_ZOOKEEPER_CONNECT: zookeeper:2181
      KAFKA_ZOOKEEPER_SET_ACL: "yes"
      KAFKA_ZOOKEEPER_ACL: "kafka-zk:password.zk:rwcda"
      KAFKA_BROKER_ID: 1
    volumes:
      - /container/mnt/kafka/data:/var/lib/kafka/data
    networks:
      - kafka-zk-network
    restart: always

  kafka-console:
    container_name: kafka-console
    image: redpandadata/console:latest
    ports:
      - "24040:8080"
    environment:
      KAFKA_BROKERS: "<external-IP>:24001"
    depends_on:
      - "kafka"
    deploy:
      placement:
        constraints:
          - node.labels.kafka.replica==1
    networks:
      - kafka-zk-network
    restart: always

networks:
  kafka-zk-network:
    driver: bridge
```

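Note: KAFKA_ADVERTISED_LISTENERS is the address the broker advertises to clients, so it must use the externally reachable IP and port (on Alibaba Cloud, for example, the instance's public IP); otherwise clients cannot connect. Here the container's port 9092 is exposed externally as 24001.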

3. Start

```shell
docker compose up -d
```

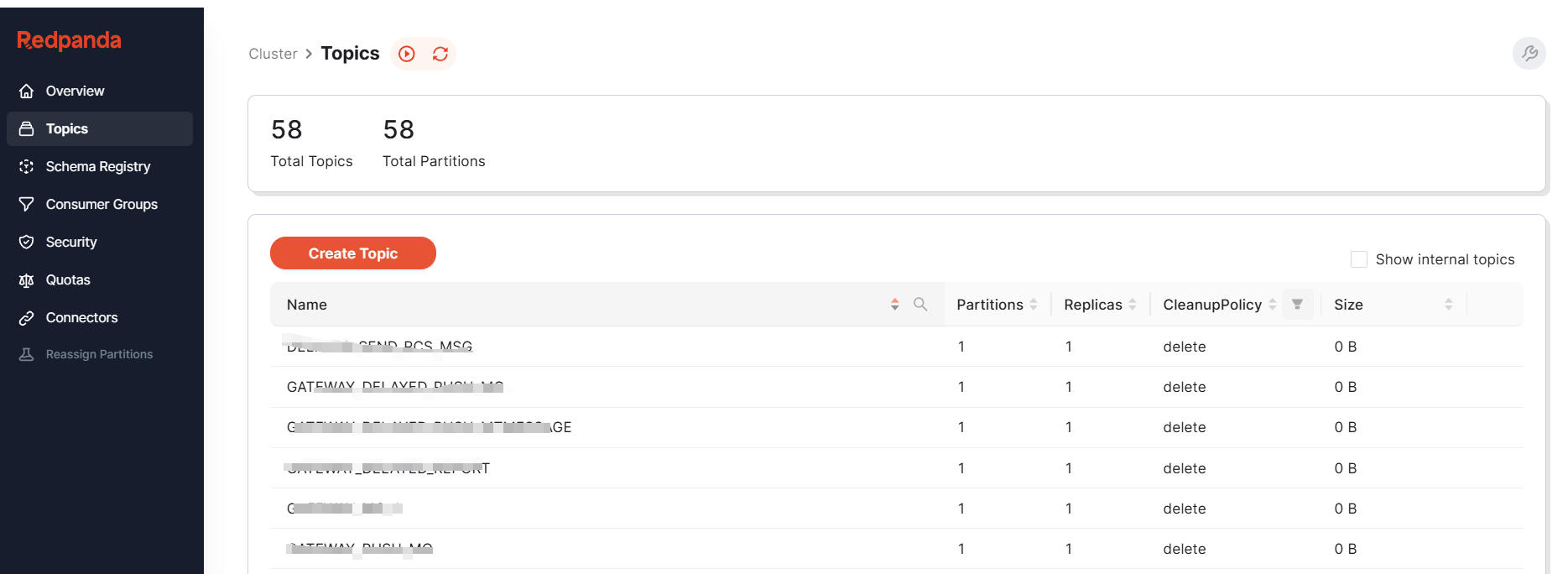
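Once the containers are up, it can help to confirm from another machine that the mapped port 24001 actually gets through the firewall or cloud security group. Below is a minimal sketch using only the JDK; the class `PortProbe` and method `isReachable` are hypothetical names. It only checks that a TCP connection can be opened and does not speak the Kafka protocol, so a successful result does not prove that KAFKA_ADVERTISED_LISTENERS is correct.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

/**
 * Hypothetical helper: checks whether a TCP connection to host:port can be opened.
 * A "true" result only means the port mapping and firewall allow the connection;
 * it says nothing about the broker's advertised listener.
 */
final class PortProbe {

    private PortProbe() {
    }

    /** Returns true if a TCP connection to host:port succeeds within timeoutMs milliseconds. */
    public static boolean isReachable(String host, int port, int timeoutMs) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMs);
            return true;
        } catch (IOException e) {
            // Connection refused, timed out, or the host name could not be resolved
            return false;
        }
    }
}
```

For this setup it would be called as `PortProbe.isReachable("<external-IP>", 24001, 3000)`, with `<external-IP>` replaced by the public IP.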

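4. View Kafka through kafka-console

Open http://<external-IP>:24040 in a browser (docker-compose.yml maps host port 24040 to the console's port 8080).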

5. Configure Kafka in Spring Cloud

```yaml
spring:
  kafka:
    bootstrap-servers: <external-IP>:24001 # address of the Kafka broker
    producer: # producer settings
      retries: 0 # number of retries
      acks: 1 # ack level: how many partition replicas must have the record before the producer receives an ack (0, 1, or all/-1)
      batch-size: 100000 # maximum batch size, in bytes
      buffer-memory: 33554432 # producer-side buffer size, in bytes (32 MB)
      key-serializer: org.apache.kafka.common.serialization.StringSerializer
      value-serializer: org.apache.kafka.common.serialization.StringSerializer
    consumer:
      group-id: test-consumer-group # consumer group id
      enable-auto-commit: false # whether offsets are committed automatically
      #auto-commit-interval: 100 # interval between automatic offset commits (ms); only used when enable-auto-commit is true
      # earliest: if a partition has a committed offset, consume from it; otherwise consume from the beginning
      # latest: if a partition has a committed offset, consume from it; otherwise consume only newly produced records
      # none: if every partition has a committed offset, consume from those offsets; if any partition lacks one, throw an exception
      auto-offset-reset: latest # latest = newest position, earliest = oldest position
      max-poll-records: 10 # maximum number of records returned per poll (i.e. per batch)
      properties:
        max.poll.interval.ms: 86400000 # 24 h
      key-deserializer: org.apache.kafka.common.serialization.StringDeserializer # key deserializer
      value-deserializer: org.apache.kafka.common.serialization.StringDeserializer # value deserializer
    listener:
      ack-mode: manual_immediate
      # if a topic the consumer listens to does not exist, the application reports an error; set to false to avoid this
      missing-topics-fatal: false
      type: batch
      concurrency: 1
```
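The earliest/latest/none comments in the consumer configuration describe how `auto-offset-reset` picks the starting position. The following Java sketch models that decision for a single partition. `OffsetResetDemo`, `ResetPolicy` and `startOffset` are hypothetical names, not part of the Kafka client. The real client throws its own exception types, such as `NoOffsetForPartitionException`, instead of the `IllegalStateException` used here as a stand-in.

```java
import java.util.OptionalLong;

/**
 * Hypothetical model of auto.offset.reset for one partition.
 * The reset policy applies when there is no committed offset, or when the
 * committed offset falls outside the range still available in the log.
 */
final class OffsetResetDemo {

    public enum ResetPolicy { EARLIEST, LATEST, NONE }

    private OffsetResetDemo() {
    }

    /**
     * @param committed committed offset for this group and partition, if any
     * @param logStart  earliest offset still stored in the partition
     * @param logEnd    offset that the next produced record will receive
     * @param policy    the configured reset policy
     * @return the offset the consumer starts reading from
     */
    public static long startOffset(OptionalLong committed, long logStart, long logEnd, ResetPolicy policy) {
        if (committed.isPresent()) {
            long c = committed.getAsLong();
            if (c >= logStart && c <= logEnd) {
                return c; // a valid committed offset always wins: resume from it
            }
        }
        switch (policy) {
            case EARLIEST:
                return logStart; // consume from the beginning of what is retained
            case LATEST:
                return logEnd; // skip existing records, read only new ones
            default:
                throw new IllegalStateException("no valid committed offset and auto.offset.reset=none");
        }
    }
}
```

With `auto-offset-reset: latest` as configured above, a brand-new consumer group starts at `logEnd` and therefore ignores messages sent before it first connects.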
