Binary High-Availability Installation of a Kubernetes Cluster (Part 2)

1. Node Configuration

1.1 kubelet

On Master01, copy the certificates to the other nodes:

cd /etc/kubernetes/

for NODE in k8s-master02 k8s-master03 k8s-node01 k8s-node02; do
    ssh $NODE mkdir -p /etc/kubernetes/pki
    for FILE in pki/ca.pem pki/ca-key.pem pki/front-proxy-ca.pem bootstrap-kubelet.kubeconfig; do
        scp /etc/kubernetes/$FILE $NODE:/etc/kubernetes/${FILE}
    done
done

Create the required directories on all nodes (kubelet is also configured on the master nodes):

mkdir -p /var/lib/kubelet /var/log/kubernetes /etc/systemd/system/kubelet.service.d /etc/kubernetes/manifests/

Configure the kubelet service on all nodes:

[root@k8s-master01 bootstrap]# vim /usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/kubernetes/kubernetes

[Service]

ExecStart=/usr/local/bin/kubelet

Restart=always

StartLimitInterval=0

RestartSec=10

[Install]

WantedBy=multi-user.target

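If the runtime is Containerd, use the following kubelet configuration.

The configuration below points kubelet at a separate config file, /etc/kubernetes/kubelet-conf.yml.

On all nodes, create the kubelet service drop-in file (these settings can also be written directly into kubelet.service):

# Runtime is Containerd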

# vim /etc/systemd/system/kubelet.service.d/10-kubelet.conf

[Service]

Environment="KUBELET_KUBECONFIG_ARGS=--bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig --kubeconfig=/etc/kubernetes/kubelet.kubeconfig"

Environment="KUBELET_SYSTEM_ARGS=--network-plugin=cni --cni-conf-dir=/etc/cni/net.d --cni-bin-dir=/opt/cni/bin --container-runtime=remote --runtime-request-timeout=15m --container-runtime-endpoint=unix:///run/containerd/containerd.sock --cgroup-driver=systemd"

Environment="KUBELET_CONFIG_ARGS=--config=/etc/kubernetes/kubelet-conf.yml"

Environment="KUBELET_EXTRA_ARGS=--node-labels=node.kubernetes.io/node='' "

ExecStart=

ExecStart=/usr/local/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_CONFIG_ARGS $KUBELET_SYSTEM_ARGS $KUBELET_EXTRA_ARGS

If the runtime is Docker, use the following kubelet configuration:

# Runtime is Docker

# vim /etc/systemd/system/kubelet.service.d/10-kubelet.conf

[Service]

Environment="KUBELET_KUBECONFIG_ARGS=--bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig --kubeconfig=/etc/kubernetes/kubelet.kubeconfig"

Environment="KUBELET_SYSTEM_ARGS=--network-plugin=cni --cni-conf-dir=/etc/cni/net.d --cni-bin-dir=/opt/cni/bin"

Environment="KUBELET_CONFIG_ARGS=--config=/etc/kubernetes/kubelet-conf.yml --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.5"

Environment="KUBELET_EXTRA_ARGS=--node-labels=node.kubernetes.io/node='' "

ExecStart=

ExecStart=/usr/local/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_CONFIG_ARGS $KUBELET_SYSTEM_ARGS $KUBELET_EXTRA_ARGS

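Create the kubelet configuration file. To change kubelet settings later, edit this file.

Note: if you changed the Kubernetes Service CIDR, update clusterDNS in kubelet-conf.yml to the tenth address of that Service CIDR, for example 192.168.0.10.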
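The "tenth address of the Service CIDR" rule can be computed rather than worked out by hand. The following is a minimal sketch, not part of the original install scripts: `nth_ip_in_cidr` is a hypothetical helper name, and it only handles IPv4.

```shell
#!/bin/sh
# Hypothetical helper (not from the original guide): print the Nth address
# of an IPv4 CIDR block, e.g. the 10th address used for clusterDNS.
nth_ip_in_cidr() {
    cidr=$1
    n=$2
    base=${cidr%/*}      # network part, e.g. 192.168.0.0
    prefix=${cidr#*/}    # prefix length, e.g. 16

    # Split the dotted quad into four positional parameters.
    old_ifs=$IFS
    IFS=.
    set -- $base
    IFS=$old_ifs

    # Convert to an integer, mask down to the network address, add the offset.
    ip=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
    mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
    addr=$(( (ip & mask) + n ))

    printf '%d.%d.%d.%d\n' \
        $(( (addr >> 24) & 255 )) $(( (addr >> 16) & 255 )) \
        $(( (addr >> 8) & 255 )) $(( addr & 255 ))
}
```

For example, `nth_ip_in_cidr 192.168.0.0/16 10` yields the value to put under clusterDNS when the Service CIDR is 192.168.0.0/16.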

[root@k8s-master01 bootstrap]# vim /etc/kubernetes/kubelet-conf.yml

apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
address: 0.0.0.0
port: 10250
readOnlyPort: 10255
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 2m0s
    enabled: true
  x509:
    clientCAFile: /etc/kubernetes/pki/ca.pem
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 5m0s
    cacheUnauthorizedTTL: 30s
cgroupDriver: systemd
cgroupsPerQOS: true
clusterDNS:
- 192.168.0.10
clusterDomain: cluster.local
containerLogMaxFiles: 5
containerLogMaxSize: 10Mi
contentType: application/vnd.kubernetes.protobuf
cpuCFSQuota: true
cpuManagerPolicy: none
cpuManagerReconcilePeriod: 10s
enableControllerAttachDetach: true
enableDebuggingHandlers: true
enforceNodeAllocatable:
- pods
eventBurst: 10
eventRecordQPS: 5
evictionHard:
  imagefs.available: 15%
  memory.available: 100Mi
  nodefs.available: 10%
  nodefs.inodesFree: 5%
evictionPressureTransitionPeriod: 5m0s
failSwapOn: true
fileCheckFrequency: 20s
hairpinMode: promiscuous-bridge
healthzBindAddress: 127.0.0.1
healthzPort: 10248
httpCheckFrequency: 20s
imageGCHighThresholdPercent: 85
imageGCLowThresholdPercent: 80
imageMinimumGCAge: 2m0s
iptablesDropBit: 15
iptablesMasqueradeBit: 14
kubeAPIBurst: 10
kubeAPIQPS: 5
makeIPTablesUtilChains: true
maxOpenFiles: 1000000
maxPods: 110
nodeStatusUpdateFrequency: 10s
oomScoreAdj: -999
podPidsLimit: -1
registryBurst: 10
registryPullQPS: 5
resolvConf: /etc/resolv.conf
rotateCertificates: true
runtimeRequestTimeout: 2m0s
serializeImagePulls: true
staticPodPath: /etc/kubernetes/manifests
streamingConnectionIdleTimeout: 4h0m0s
syncFrequency: 1m0s
volumeStatsAggPeriod: 1m0s

Start kubelet on all nodes:

systemctl daemon-reload

systemctl enable --now kubelet

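At this point, the system log /var/log/messages should show only messages like the one below, and it should not be printing errors in a loop. The message below means Calico is not installed yet; it goes away once Calico is installed.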

Unable to update cni config: no networks found in /etc/cni/net.d

If there are many error lines, or a flood of errors you cannot make sense of, the kubelet configuration is wrong and needs to be checked.
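To separate the expected CNI message from anything else, the log can be filtered. This is a small sketch under the assumption that kubelet lines are available on standard input; `unexpected_kubelet_lines` is a hypothetical name, not something the original guide provides.

```shell
#!/bin/sh
# Hypothetical filter: read log lines on stdin and print only the lines that
# are NOT the expected "CNI not installed yet" message quoted above.
unexpected_kubelet_lines() {
    # grep -v exits non-zero when nothing is left; treat that as success.
    grep -v 'no networks found in /etc/cni/net.d' || true
}
```

One possible use is `grep kubelet /var/log/messages | unexpected_kubelet_lines | tail -n 20`; if that prints nothing, only the benign Calico-related message is present.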

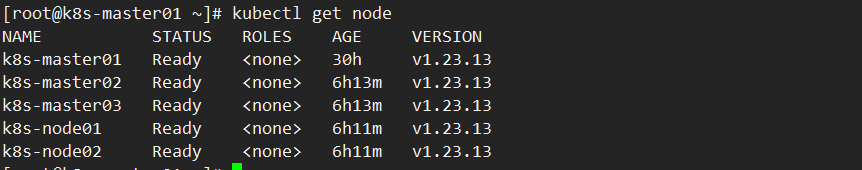

Check the cluster status (both Ready and NotReady are normal at this stage):

[root@k8s-master01 bootstrap]# kubectl get node

1.2 kube-proxy

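Note: if this is not a high-availability cluster, replace 10.103.236.236:8443 with the master01 address and the apiserver port; the default apiserver port is 6443.

Run the following on Master01 only: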

cd /root/k8s-ha-install

kubectl -n kube-system create serviceaccount kube-proxy

kubectl create clusterrolebinding system:kube-proxy --clusterrole system:node-proxier --serviceaccount kube-system:kube-proxy

SECRET=$(kubectl -n kube-system get sa/kube-proxy \
    --output=jsonpath='{.secrets[0].name}')

JWT_TOKEN=$(kubectl -n kube-system get secret/$SECRET \
    --output=jsonpath='{.data.token}' | base64 -d)

PKI_DIR=/etc/kubernetes/pki

K8S_DIR=/etc/kubernetes

kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/pki/ca.pem --embed-certs=true --server=https://10.103.236.236:8443 --kubeconfig=${K8S_DIR}/kube-proxy.kubeconfig

kubectl config set-credentials kubernetes --token=${JWT_TOKEN} --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

kubectl config set-context kubernetes --cluster=kubernetes --user=kubernetes --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

kubectl config use-context kubernetes --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig
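The note about non-HA clusters comes down to choosing the right value for --server. A minimal sketch of that choice follows; `apiserver_url` is a hypothetical helper, and the only fact it encodes is the default port 6443 mentioned above.

```shell
#!/bin/sh
# Hypothetical helper: build the value passed to
# `kubectl config set-cluster --server=...`.
# The port falls back to 6443, the apiserver default.
apiserver_url() {
    host=$1
    port=${2:-6443}
    printf 'https://%s:%s\n' "$host" "$port"
}
```

For the HA setup in this guide, `apiserver_url 10.103.236.236 8443` gives the VIP endpoint; for a single-master cluster, passing only the master01 address gives an endpoint on port 6443.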

Send the kubeconfig to the other nodes:

for NODE in k8s-master02 k8s-master03; do

scp /etc/kubernetes/kube-proxy.kubeconfig $NODE:/etc/kubernetes/kube-proxy.kubeconfig

done

for NODE in k8s-node01 k8s-node02; do

scp /etc/kubernetes/kube-proxy.kubeconfig $NODE:/etc/kubernetes/kube-proxy.kubeconfig

done
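The two loops above do the same thing for masters and workers, so they can be folded into one function. The sketch below is an illustration only; `distribute_file` and the `DRY_RUN` variable are hypothetical names introduced here.

```shell
#!/bin/sh
# Hypothetical helper: copy one file to the same path on several nodes.
# With DRY_RUN=1 the scp commands are printed instead of executed.
distribute_file() {
    file=$1
    shift
    for node in "$@"; do
        if [ "${DRY_RUN:-0}" = "1" ]; then
            echo "scp $file $node:$file"
        else
            # Report a failed copy but keep going with the remaining nodes.
            scp "$file" "$node:$file" || echo "copy to $node failed" >&2
        fi
    done
}
```

Running `DRY_RUN=1 distribute_file /etc/kubernetes/kube-proxy.kubeconfig k8s-master02 k8s-master03 k8s-node01 k8s-node02` first shows what would be copied before anything is sent.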

On all nodes, add the kube-proxy configuration and service files:

vim /usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kubernetes Kube Proxy

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-proxy \
  --config=/etc/kubernetes/kube-proxy.yaml \
  --v=2

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

If you changed the cluster Pod CIDR, set clusterCIDR in kube-proxy.yaml to your own Pod CIDR, and make sure mode is ipvs:

vim /etc/kubernetes/kube-proxy.yaml

apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 0.0.0.0
clientConnection:
  acceptContentTypes: ""
  burst: 10
  contentType: application/vnd.kubernetes.protobuf
  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig
  qps: 5
clusterCIDR: 172.16.0.0/12
configSyncPeriod: 15m0s
conntrack:
  max: null
  maxPerCore: 32768
  min: 131072
  tcpCloseWaitTimeout: 1h0m0s
  tcpEstablishedTimeout: 24h0m0s
enableProfiling: false
healthzBindAddress: 0.0.0.0:10256
hostnameOverride: ""
iptables:
  masqueradeAll: false
  masqueradeBit: 14
  minSyncPeriod: 0s
  syncPeriod: 30s
ipvs:
  masqueradeAll: true
  minSyncPeriod: 5s
  scheduler: "rr"
  syncPeriod: 30s
kind: KubeProxyConfiguration
metricsBindAddress: 127.0.0.1:10249
mode: "ipvs"
nodePortAddresses: null
oomScoreAdj: -999
portRange: ""
udpIdleTimeout: 250ms
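Before starting kube-proxy it can help to confirm that clusterCIDR and mode in the file really hold the intended values. The following sketch reads a top-level key with awk; `yaml_top_level_value` is a hypothetical helper and only handles simple `key: value` lines, not general YAML.

```shell
#!/bin/sh
# Hypothetical helper: print the value of a top-level (unindented) key from a
# simple YAML file, with double quotes stripped. Not a general YAML parser.
yaml_top_level_value() {
    key=$1
    file=$2
    awk -v k="$key" '
        index($0, k ":") == 1 {   # line starts with "key:" at column 1
            v = $2
            gsub(/"/, "", v)
            print v
            exit
        }' "$file"
}
```

For instance, `yaml_top_level_value mode /etc/kubernetes/kube-proxy.yaml` is expected to print ipvs for the file above, and `yaml_top_level_value clusterCIDR /etc/kubernetes/kube-proxy.yaml` should print the Pod CIDR you configured.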

Start kube-proxy on all nodes:

systemctl daemon-reload

systemctl enable --now kube-proxy

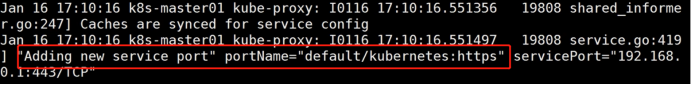

Check the logs; output like the following means kube-proxy is running normally:

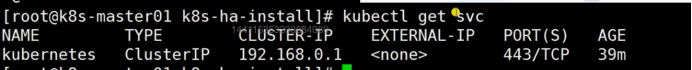

Check the Services (kubectl get svc): Pods communicate with the apiserver through the ClusterIP of the kubernetes Service.

2. Calico Installation

Installing the officially recommended version is advised.

Run the following steps on master01 only:

cd /root/k8s-ha-install/calico/

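Change the Calico network range: replace the POD_CIDR placeholder (highlighted in red in the original screenshot) with your own Pod CIDR.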

cp calico.yaml calico.yaml.bak

sed -i "s#POD_CIDR#172.16.0.0/12#g" calico.yaml

# Check

grep "IPV4POOL_CIDR" calico.yaml -A 1

kubectl apply -f calico.yaml
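The backup, substitute, and check steps above can be combined so that a manifest without the placeholder is caught before it is applied. This is a sketch only: `replace_placeholder` is a hypothetical helper, and it relies on GNU `sed -i` as the commands above already do.

```shell
#!/bin/sh
# Hypothetical helper: replace PLACEHOLDER with VALUE in FILE, keeping a
# FILE.bak copy. Fails if the placeholder is not present to begin with.
replace_placeholder() {
    file=$1
    placeholder=$2
    value=$3
    if ! grep -q -- "$placeholder" "$file"; then
        echo "no $placeholder found in $file; nothing to replace" >&2
        return 1
    fi
    cp "$file" "$file.bak" || return 1
    sed -i "s#${placeholder}#${value}#g" "$file" || return 1
    # Show the lines that now carry the new value (fixed-string match).
    grep -nF -- "$value" "$file"
}
```

With the files from this guide, the call would be `replace_placeholder calico.yaml POD_CIDR 172.16.0.0/12`, followed by `kubectl apply -f calico.yaml` only if it succeeds.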

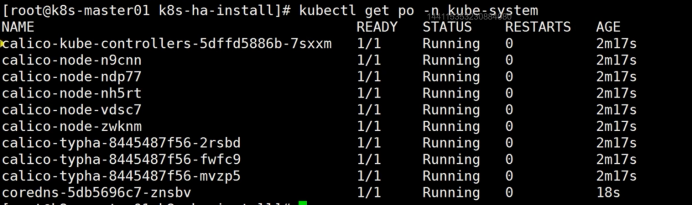

# Check the container status

kubectl get po -n kube-system

3. CoreDNS Installation

Install the officially recommended version (recommended)

cd /root/k8s-ha-install/

If you changed the Kubernetes Service CIDR, change the CoreDNS Service IP to the tenth IP of that Service CIDR.

It must match the clusterDNS value in /etc/kubernetes/kubelet-conf.yml.

COREDNS_SERVICE_IP=`kubectl get svc | grep kubernetes | awk '{print $3}'`0

sed -i "s#KUBEDNS_SERVICE_IP#${COREDNS_SERVICE_IP}#g" CoreDNS/coredns.yaml
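Since the CoreDNS Service IP has to agree with the kubelet configuration, the clusterDNS entry can be read back and compared. A minimal sketch, assuming the kubelet config keeps clusterDNS as a top-level list as shown earlier; `first_cluster_dns` is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: print the first entry of the top-level clusterDNS list
# in a kubelet configuration file.
first_cluster_dns() {
    awk '
        /^clusterDNS:/ { in_list = 1; next }
        in_list && /^[ \t]*-/ {
            sub(/^[ \t]*-[ \t]*/, "")   # drop the leading "- "
            print
            exit
        }
        in_list { exit }                # list ended without an entry
    ' "$1"
}

# Possible use on a node (commented out because it needs the real files):
# KUBELET_DNS=$(first_cluster_dns /etc/kubernetes/kubelet-conf.yml)
# [ "$KUBELET_DNS" = "$COREDNS_SERVICE_IP" ] || echo "clusterDNS mismatch" >&2
```

If the two values differ, Pods would be pointed at a DNS address that the kube-dns Service does not own, so it is worth checking before creating the CoreDNS resources.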

Install CoreDNS:

kubectl create -f CoreDNS/coredns.yaml

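Install the latest CoreDNS (not recommended)

The version recommended for your Kubernetes release is sufficient; there is no need to chase the latest version.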

COREDNS_SERVICE_IP=`kubectl get svc | grep kubernetes | awk '{print $3}'`0

git clone https://github.com/coredns/deployment.git

cd deployment/kubernetes

# ./deploy.sh -s -i ${COREDNS_SERVICE_IP} | kubectl apply -f -

Check the status:

# kubectl get po -n kube-system -l k8s-app=kube-dns

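4. Metrics Server Installation

In recent Kubernetes versions, system resource metrics are collected by Metrics-server, which reports CPU and memory usage for nodes and Pods.

The k8s-ha-install directory contains two directories, metrics-server and kubeadm-metrics-server. Compare them to see the difference: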

vimdiff kubeadm-metrics-server/comp.yaml metrics-server/comp.yaml

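The only difference is the certificate location: certificate files generated automatically by kubeadm end in .crt, while those generated with cfssl for the binary installation end in .pem. The aggregation certificate generated earlier is used here.

Install metrics server: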

cd /root/k8s-ha-install/metrics-server

kubectl create -f .

Wait for metrics server to start, then check the status:

# kubectl top node

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

k8s-master01 231m 5% 1620Mi 42%

k8s-master02 274m 6% 1203Mi 31%

k8s-master03 202m 5% 1251Mi 32%

k8s-node01 69m 1% 667Mi 17%

k8s-node02 73m 1% 650Mi 16%
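Waiting for metrics server to come up can be scripted with a small retry loop instead of re-running the command by hand. The sketch below is generic; `retry` is a hypothetical helper, and the attempt count and delay in the usage note are arbitrary choices.

```shell
#!/bin/sh
# Hypothetical helper: run a command until it succeeds, at most ATTEMPTS
# times, sleeping DELAY seconds between attempts.
retry() {
    attempts=$1
    delay=$2
    shift 2
    i=1
    while [ "$i" -le "$attempts" ]; do
        if "$@"; then
            return 0
        fi
        i=$((i + 1))
        # Sleep only if another attempt is coming.
        if [ "$i" -le "$attempts" ]; then
            sleep "$delay"
        fi
    done
    return 1
}
```

For example, `retry 30 10 kubectl top node` would keep trying for roughly five minutes and stop as soon as metrics are available.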

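5. Dashboard Installation

Dashboard displays the various resources in the cluster. It can also be used to view Pod logs in real time and to run commands inside containers.

Install a pinned Dashboard version (recommended)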

cd /root/k8s-ha-install/dashboard/

kubectl create -f .

Check the status:

[root@k8s-master01 dashboard]# kubectl get po -n kubernetes-dashboard

NAME READY STATUS RESTARTS AGE

dashboard-metrics-scraper-7fcdff5f4c-kbvx8 1/1 Running 0 59s

kubernetes-dashboard-85f59f8ff7-25zrp 1/1 Running 0 59s

Check the exposed port number:

[root@k8s-master01 dashboard]# kubectl get svc -n kubernetes-dashboard

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

dashboard-metrics-scraper ClusterIP 192.168.208.237 <none> 8000/TCP 98s

kubernetes-dashboard NodePort 192.168.166.30 <none> 443:30812/TCP 98s

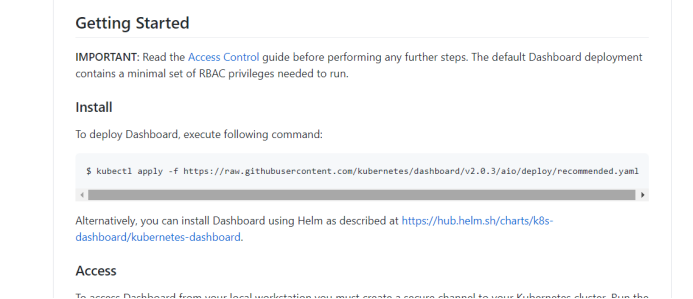

Install the latest version (worth exploring)

Official GitHub repository: https://github.com/kubernetes/dashboard

The latest Dashboard release is listed on the official Dashboard page.

kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.3/aio/deploy/recommended.yaml

# Change the URL to the latest one shown on that page

Create an administrator user:

vim admin.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin-user
  namespace: kube-system

# Apply the YAML file

kubectl apply -f admin.yaml -n kube-system

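For logging in to the Dashboard, refer to the kubeadm notes; with Google Chrome, the browser's launch properties need to be modified.

Change the dashboard svc type to NodePort so the Dashboard can be reached through a host IP plus port: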

kubectl edit svc kubernetes-dashboard -n kubernetes-dashboard

29   selector:
30     k8s-app: kubernetes-dashboard
31   sessionAffinity: None
32   type: NodePort # change the value on line 32 to NodePort

Check the port number:

[root@k8s-master01 dashboard]# kubectl get svc -n kubernetes-dashboard

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

dashboard-metrics-scraper ClusterIP 192.168.208.237 <none> 8000/TCP 98s

kubernetes-dashboard NodePort 192.168.166.30 <none> 443:30812/TCP 98s

Access the Dashboard at https://10.103.236.201:30812
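The port in that URL is the NodePort from the PORT(S) column, which changes between installations. A small sketch for pulling it out of the `kubectl get svc` output follows; `node_port_of` is a hypothetical helper that assumes the default column layout shown above.

```shell
#!/bin/sh
# Hypothetical helper: read `kubectl get svc` output on stdin and print the
# NodePort of the named Service (the number after the colon in PORT(S)).
node_port_of() {
    awk -v svc="$1" '
        $1 == svc && $2 == "NodePort" {
            split($5, parts, "[:/]")   # "443:30812/TCP" -> 443, 30812, TCP
            print parts[2]
            exit
        }'
}
```

A possible use is `kubectl get svc -n kubernetes-dashboard | node_port_of kubernetes-dashboard`. Querying the field directly with `kubectl get svc kubernetes-dashboard -n kubernetes-dashboard -o jsonpath='{.spec.ports[0].nodePort}'` avoids parsing the table at all.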

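For how to obtain the token, see the kubeadm notes.

6. Cluster Verification

Note: a binary installation does not configure taints on the master nodes, so Pods can be scheduled on them.

A working cluster meets the following conditions: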

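- Pods must be able to resolve Services

- Pods must be able to resolve Services in other namespaces

- Every node must be able to reach port 443 of the kubernetes Service and port 53 of the kube-dns Service

- Pods must be able to communicate with each other

  - within the same namespace

  - across namespaces

  - across machines

The steps below verify these one by one.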

# Create a busybox Pod

cat<<EOF | kubectl apply -f -
apiVersion: v1
kind: Pod
metadata:
  name: busybox
  namespace: default
spec:
  containers:
  - name: busybox
    image: busybox:1.28
    command:
    - sleep
    - "3600"
    imagePullPolicy: IfNotPresent
  restartPolicy: Always
EOF

kubectl get pod

Check whether the Pod can resolve a Service:

[root@k8s-master01 dashboard]# kubectl exec busybox -n default -- nslookup kubernetes

Server: 192.168.0.10

Address 1: 192.168.0.10 kube-dns.kube-system.svc.cluster.local

Name: kubernetes

Address 1: 192.168.0.1 kubernetes.default.svc.cluster.local

Check whether the Pod can resolve a Service in another namespace:

[root@k8s-master01 dashboard]# kubectl exec busybox -n default -- nslookup kube-dns.kube-system

Server: 192.168.0.10

Address 1: 192.168.0.10 kube-dns.kube-system.svc.cluster.local

Name: kube-dns.kube-system

Address 1: 192.168.0.10 kube-dns.kube-system.svc.cluster.local

On all nodes, test whether ports 443 and 53 are reachable:

yum -y install telnet

[root@k8s-master01 dashboard]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 192.168.0.1 <none> 443/TCP 32h

telnet 192.168.0.1 443

# Look up kube-dns

[root@k8s-master01 dashboard]# kubectl get svc -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

calico-typha ClusterIP 192.168.229.37 <none> 5473/TCP 29h

kube-dns ClusterIP 192.168.0.10 <none> 53/UDP,53/TCP,9153/TCP 29h

metrics-server ClusterIP 192.168.48.97 <none> 443/TCP 12m

[root@k8s-master01 dashboard]# telnet 192.168.0.10 53

[root@k8s-master01 dashboard]# curl 192.168.0.10:53

curl: (52) Empty reply from server # this message means the port is reachable
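The telnet and curl checks are interactive; a scripted TCP check is easier to run on every node. The following is a sketch, not part of the original guide: `tcp_port_open` is a hypothetical helper that assumes bash (for /dev/tcp) and coreutils `timeout` are installed, and it covers TCP only, so DNS over UDP is not tested by it.

```shell
#!/bin/sh
# Hypothetical helper: succeed if a TCP connection to HOST:PORT can be opened
# within TIMEOUT seconds (default 3). Uses bash's /dev/tcp pseudo-device.
tcp_port_open() {
    host=$1
    port=$2
    secs=${3:-3}
    # Inside bash -c, $0 is the host and $1 is the port.
    timeout "$secs" bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$host" "$port" 2>/dev/null
}
```

With the addresses shown above, `tcp_port_open 192.168.0.1 443 && tcp_port_open 192.168.0.10 53 && echo ok` would be one way to run both checks on a node.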

# Test communication between Pods

[root@k8s-master01 dashboard]# kubectl get po -n kube-system -owide

... (output omitted) ...

[root@k8s-master01 dashboard]# kubectl get po -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

busybox 1/1 Running 0 5m24s 172.27.14.193 k8s-node02 <none> <none>

# Enter the container. Some images do not ship bash, so failing to enter with bash is normal; use sh, or try the busybox Pod instead.

kubectl exec -it busybox -- sh # or

kubectl exec -it busybox -- bash

# Note: to enter a system component container, add the namespace, for example

kubectl exec -it calico-kube-coxxxx -n kube-system -- sh

kubectl exec -it calico-kube-coxxxx -n kube-system -- bash

ping the other nodes

Other tests

# Create a Pod

kubectl run nginx --image=nginx

# Create a Deployment with 3 replicas

kubectl create deploy nginx --image=nginx --replicas=3

kubectl get deploy

kubectl get po -owide # see which nodes the 3 Pods were scheduled on

# Delete

kubectl delete deploy nginx

kubectl delete po busybox nginx
