nginx Load Balancing

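Introduction to nginx Load Balancing

Load balancing is one of nginx's main use cases. Under heavy traffic, a load balancer spreads requests across several servers, so the load that one server would otherwise carry is shared among many and overall throughput rises. In addition, if one server goes down, the others keep serving requests, which improves the system's scalability and reliability.

The figure below shows an example: a user's request first reaches the load balancer, which then forwards it to one of the web servers according to the configured rules.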

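Reverse Proxy and Load Balancing

nginx is commonly used as a reverse proxy in front of backend servers, which makes it easy to separate static from dynamic content and to balance load, greatly increasing the capacity of the service.

Static/dynamic separation simply means that, while reverse proxying, nginx serves static resources directly from its own published path instead of fetching them from the backend servers.

Note that in this setup the files served by the front end and the backend must be kept consistent. You can use Rsync for automatic server-side synchronization, or shared storage such as NFS or MFS.

The HTTP proxy module offers many directives; the most commonly used are proxy_pass and proxy_cache.

proxy_cache itself is built in. If you also need to purge the cache for specific URLs, integrate the third-party ngx_cache_purge module. This has to be done when nginx is built, for example: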

./configure --add-module=../ngx_cache_purge-1.0 ......

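nginx implements simple load balancing through the upstream module. An upstream block must be defined inside the http block.

Inside the upstream block you define a list of servers. The default method is round robin. To have requests from the same visitor always handled by the same backend server, set ip_hash, for example: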

upstream idfsoft.com {

ip_hash;

server 127.0.0.1:9080 weight=5;

server 127.0.0.1:8080 weight=5;

server 127.0.0.1:1111;

}

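Note: ip_hash does not rotate through the servers. It picks the server by hashing the client address (the first three octets for IPv4, the whole address for IPv6). Because a client's IP can change (dynamic IPs, proxies, VPNs and so on), ip_hash cannot fully guarantee that the same client is always handled by the same server.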
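To make the hashing key concrete, here is a minimal bash sketch. For IPv4, ip_hash uses the first three octets of the client address as the key, and the sketch shows only that idea. The `ip_hash_key` and `pick_backend` names are made up for this illustration, and `cksum` is a stand-in: it is not nginx's actual hash function.

```shell
#!/bin/bash
# Hypothetical illustration: map a client IPv4 address to a backend index.
# Only the first three octets are used as the key, as ip_hash does for IPv4.
# cksum is a stand-in here; nginx uses its own internal hash.
ip_hash_key() {
    local ip=$1
    echo "${ip%.*}"    # strip the last octet: 10.1.2.3 -> 10.1.2
}

pick_backend() {
    local ip=$1 count=$2 key sum
    key=$(ip_hash_key "$ip")
    sum=$(printf '%s' "$key" | cksum | cut -d' ' -f1)
    echo $(( sum % count ))
}

# Two clients in the same /24 share a key, so they map to the same index.
pick_backend 192.168.222.10 3
pick_backend 192.168.222.99 3
```

Because the key ignores the last octet, all clients in one /24 network land on the same backend, which is worth remembering when most traffic comes from a single subnet.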

After defining the upstream, add the following inside the server block:

server {

location / {

proxy_pass http://idfsoft.com;

}

}

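nginx Load Balancing Configuration

Environment

| OS | IP | Role | Service |
|---|---|---|---|
| centos8 | 192.168.222.250 | nginx load balancer | nginx |
| centos8 | 192.168.222.137 | Web1 server | apache |
| centos8 | 192.168.222.138 | Web2 server | nginx |

On the load balancer, nginx is installed from source. On the two web servers, apache (Web1) and nginx (Web2) are installed with yum.

For installing nginx from source, see my blog post on nginx, which covers the source installation in detail.

Modify the default home page of the web servers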

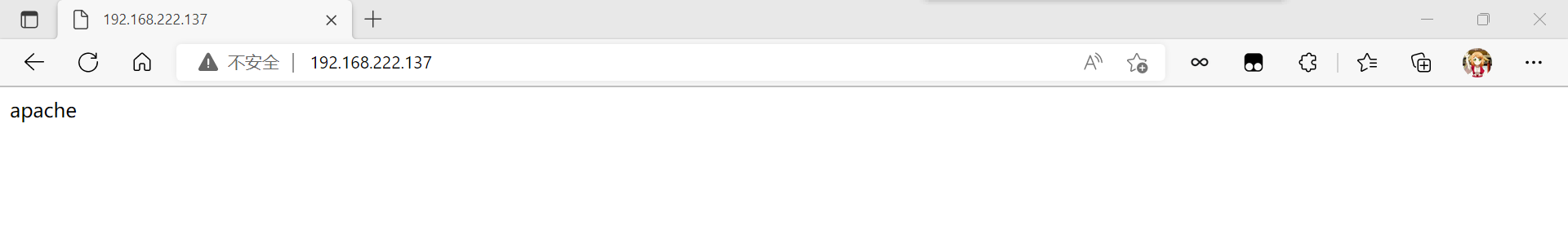

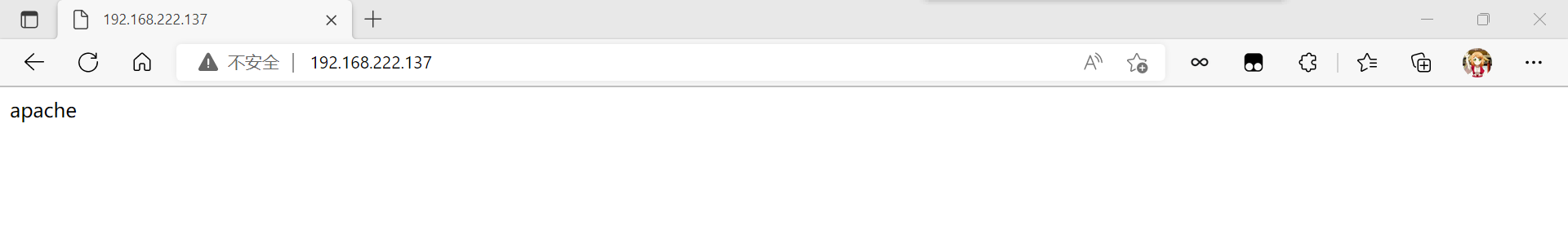

Web1:

[root@Web1 ~]# yum -y install httpd # install the service

[root@Web1 ~]# systemctl stop firewalld.service # stop the firewall

[root@Web1 ~]# vim /etc/selinux/config

SELINUX=disabled

[root@Web1 ~]# setenforce 0

[root@Web1 ~]# systemctl disable --now firewalld.service

Removed /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@Web1 ~]# cd /var/www/html/

[root@Web1 html]# ls

[root@Web1 html]# echo "apache" > index.html # write the page content

[root@Web1 html]# cat index.html

apache

[root@Web1 html]# systemctl enable --now httpd

Created symlink /etc/systemd/system/multi-user.target.wants/httpd.service → /usr/lib/systemd/system/httpd.service.

[root@Web1 html]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 [::]:22 [::]:*

LISTEN 0 128 *:80 *:*

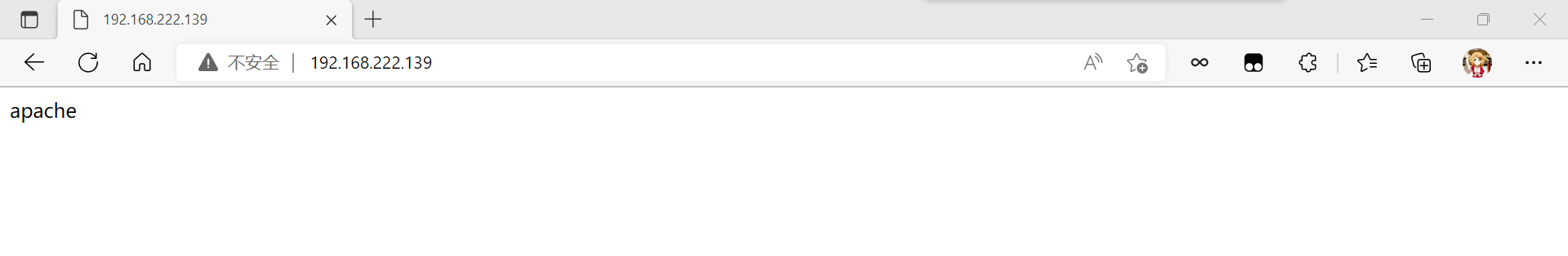

Access:

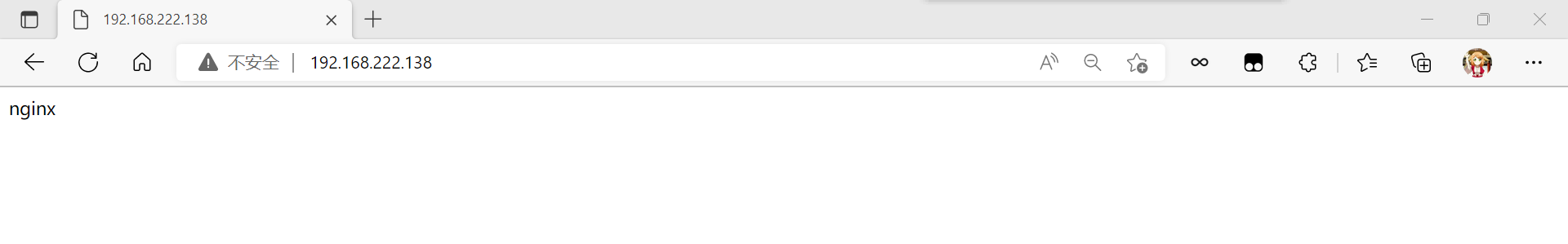

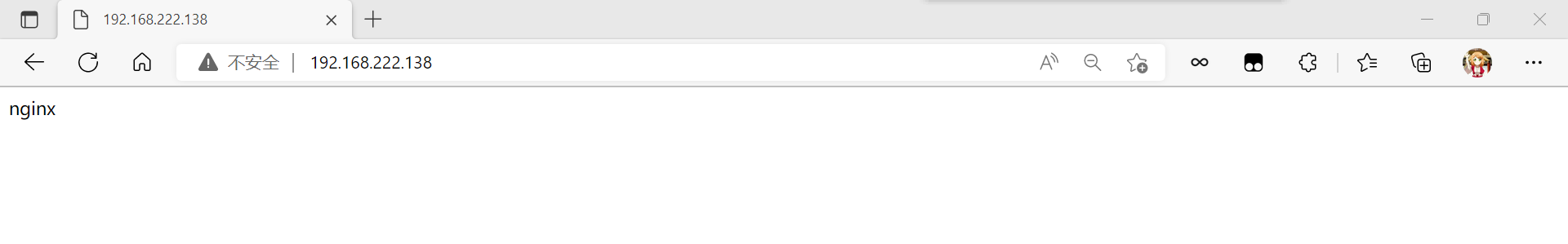

Web2:

[root@Web2 ~]# yum -y install nginx # install the service

[root@Web2 ~]# systemctl stop firewalld.service # stop the firewall

[root@Web2 ~]# vim /etc/selinux/config

SELINUX=disabled

[root@Web2 ~]# setenforce 0

[root@Web2 ~]# systemctl disable --now firewalld.service

Removed /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@Web2 ~]# cd /usr/share/nginx/html/

[root@Web2 html]# ls

404.html 50x.html index.html nginx-logo.png poweredby.png

[root@Web2 html]# echo "nginx" > index.html # write the page content

[root@Web2 html]# cat index.html

nginx

[root@Web2 html]# systemctl enable --now nginx.service

Created symlink /etc/systemd/system/multi-user.target.wants/nginx.service → /usr/lib/systemd/system/nginx.service.

[root@Web2 html]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 128 0.0.0.0:111 0.0.0.0:*

LISTEN 0 128 0.0.0.0:80 0.0.0.0:*

LISTEN 0 32 192.168.122.1:53 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 [::]:111 [::]:*

LISTEN 0 128 [::]:80 [::]:*

LISTEN 0 128 [::]:22 [::]:*

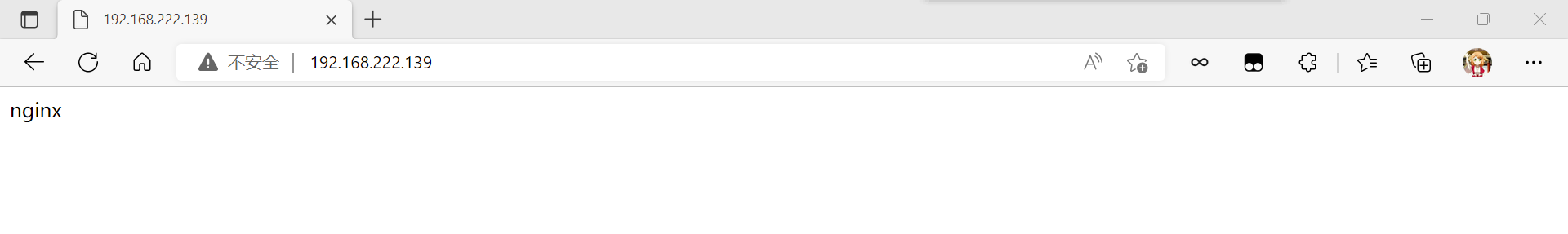

Access:

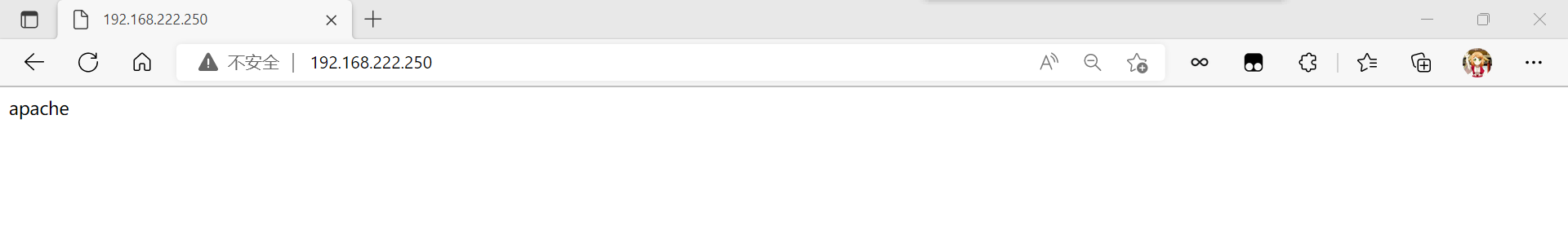

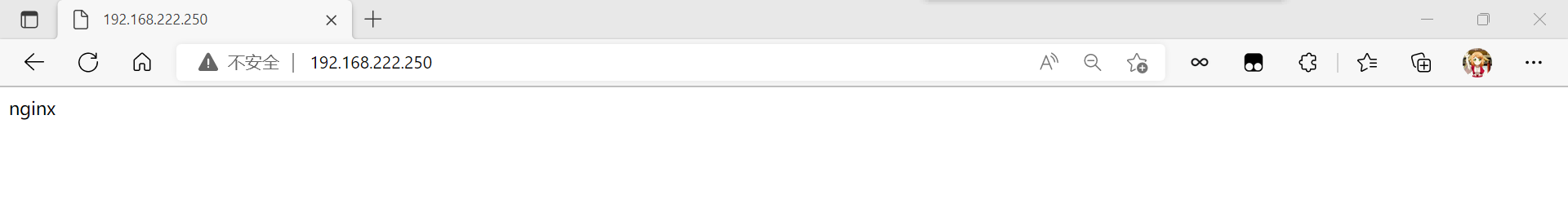

Enable nginx load balancing and reverse proxying

[root@nginx ~]# vim /usr/local/nginx/conf/nginx.conf

...

upstream webserver { # add inside the http block

server 192.168.222.137;

server 192.168.222.138;

}

...

location / { # modify inside the server block

root html;

proxy_pass http://webserver;

}

[root@nginx ~]# systemctl reload nginx.service

# reload the configuration

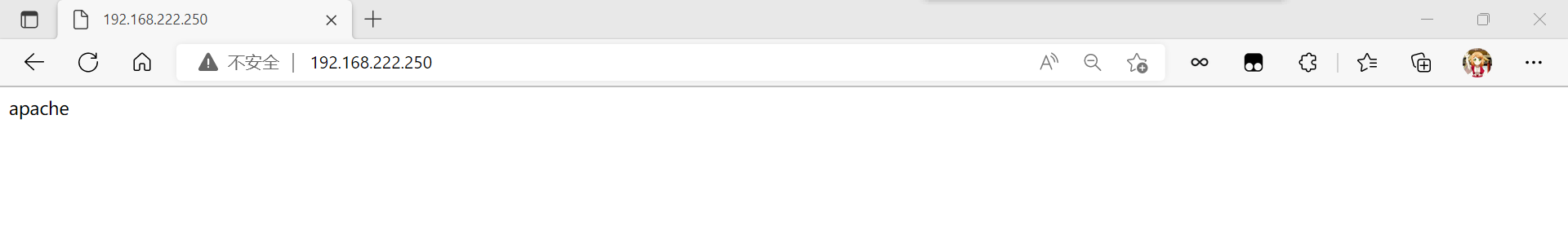

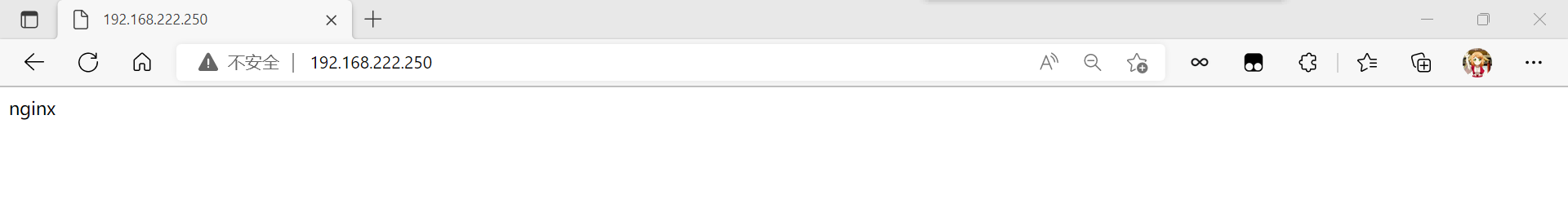

Test:

Enter the IP address of the nginx load balancer in a browser.

Edit the nginx configuration file on the load balancer

[root@nginx ~]# vim /usr/local/nginx/conf/nginx.conf

upstream webserver { # modify inside the http block

server 192.168.222.137 weight=3;

server 192.168.222.138;

}

[root@nginx ~]# systemctl reload nginx.service

# reload the configuration

[root@nginx ~]# curl 192.168.222.250

apache

[root@nginx ~]# curl 192.168.222.250

apache

[root@nginx ~]# curl 192.168.222.250

apache

[root@nginx ~]# curl 192.168.222.250

nginx

[root@nginx ~]# curl 192.168.222.250

apache

[root@nginx ~]# curl 192.168.222.250

apache

[root@nginx ~]# curl 192.168.222.250

apache

[root@nginx ~]# curl 192.168.222.250

nginx

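# apache answers three requests for every one answered by nginx. weight=3 gives that server three times the share of a server with the default weight of 1. Give the more capable servers a higher weight so that older or lower-spec servers in the cluster receive fewer requests and carry less load.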
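The 3:1 ratio can be reproduced with a small simulation. The sketch below implements smooth weighted round robin, which is the scheme nginx's default balancer is understood to use. Treat it as an illustration under that assumption, not as nginx's source. The `swrr` function and its `name:weight` argument format are made up for this example.

```shell
#!/bin/bash
# Smooth weighted round robin over named servers (illustrative sketch).
# Usage: swrr <rounds> <name:weight>...
swrr() {
    local rounds=$1; shift
    local -a names weights current
    local spec i best total=0 n=$#
    for spec in "$@"; do
        names+=("${spec%%:*}")
        weights+=("${spec##*:}")
        current+=(0)
        total=$(( total + ${spec##*:} ))
    done
    while (( rounds-- > 0 )); do
        best=0
        for (( i = 0; i < n; i++ )); do
            # Every server gains its own weight each round.
            current[i]=$(( current[i] + weights[i] ))
            if (( current[i] > current[best] )); then
                best=$i
            fi
        done
        # The winner pays back the total weight, which spreads its turns out.
        current[best]=$(( current[best] - total ))
        echo "${names[best]}"
    done
}

swrr 8 apache:3 nginx:1
```

Over any four consecutive picks, apache is chosen three times and nginx once. The position of the nginx pick inside the cycle may differ from the curl transcript above, but the ratio is the same.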

[root@nginx ~]# vim /usr/local/nginx/conf/nginx.conf

upstream webserver { # modify inside the http block

ip_hash;

server 192.168.222.137 weight=3;

server 192.168.222.138;

}

[root@nginx ~]# systemctl reload nginx.service

# reload the configuration

[root@nginx ~]# curl 192.168.222.250

nginx

[root@nginx ~]# curl 192.168.222.250

nginx

[root@nginx ~]# curl 192.168.222.250

nginx

[root@nginx ~]# curl 192.168.222.250

nginx

[root@nginx ~]# curl 192.168.222.250

nginx

[root@nginx ~]# curl 192.168.222.250

nginx

[root@nginx ~]# curl 192.168.222.250

nginx

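# Every request now reaches nginx (Web2). With ip_hash the backend is chosen from a hash of the client address, so once a client is mapped to a server, that server keeps answering it. As noted earlier, this is not an absolute guarantee: if the client's IP changes, its requests may go to a different backend.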
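Counting responses by eye gets tedious with more requests. A small helper such as the one below (the `tally` name is chosen for this example) summarizes how often each backend answered. It reads one response per line from standard input, so it works with canned input as well as with a curl loop.

```shell
#!/bin/bash
# Summarize responses: prints "<count> <response>" for each distinct line.
tally() {
    sort | uniq -c | awk '{ print $1, $2 }'
}

# Example with canned input. Against the real balancer one might run:
#   for i in $(seq 1 8); do curl -s 192.168.222.250; done | tally
printf '%s\n' apache apache apache nginx | tally
```

With ip_hash enabled and a single client, the summary should show only one backend; with plain or weighted round robin, the counts should follow the configured weights.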

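High Availability for the nginx Load Balancer with Keepalived

Lab environment

| OS | Role | Service | IP |
|---|---|---|---|
| centos8 | nginx load balancer, master | nginx, keepalived | 192.168.222.250 |
| centos8 | nginx load balancer, backup | nginx, keepalived | 192.168.222.139 |
| centos8 | Web1 server | apache | 192.168.222.137 |
| centos8 | Web2 server | nginx | 192.168.222.138 |

For installing nginx from source, see my blog post on nginx, which covers the source installation in detail.

The VIP is 192.168.222.133.

Modify the default home page of the web servers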

Web1:

[root@Web1 ~]# yum -y install httpd # install the service

[root@Web1 ~]# systemctl stop firewalld.service # stop the firewall

[root@Web1 ~]# vim /etc/selinux/config

SELINUX=disabled

[root@Web1 ~]# setenforce 0

[root@Web1 ~]# systemctl disable --now firewalld.service

Removed /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@Web1 ~]# cd /var/www/html/

[root@Web1 html]# ls

[root@Web1 html]# echo "apache" > index.html # write the page content

[root@Web1 html]# cat index.html

apache

[root@Web1 html]# systemctl enable --now httpd

Created symlink /etc/systemd/system/multi-user.target.wants/httpd.service → /usr/lib/systemd/system/httpd.service.

[root@Web1 html]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 [::]:22 [::]:*

LISTEN 0 128 *:80 *:*

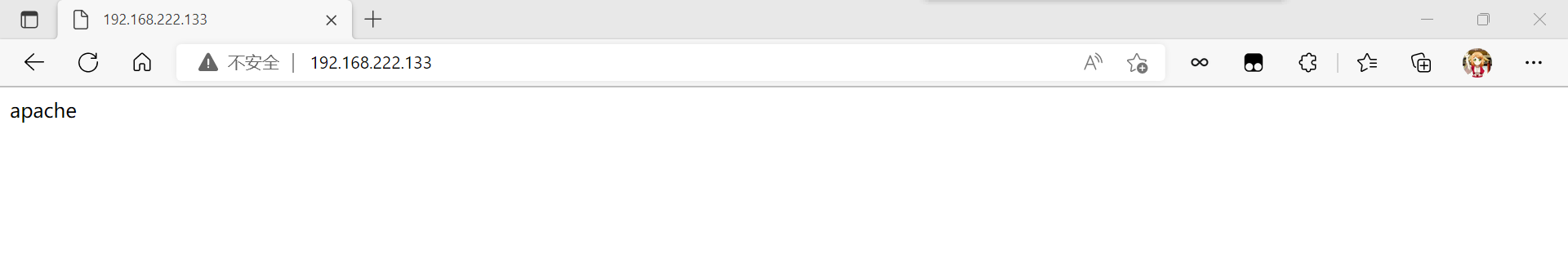

Access:

Web2:

[root@Web2 ~]# yum -y install nginx # install the service

[root@Web2 ~]# systemctl stop firewalld.service # stop the firewall

[root@Web2 ~]# vim /etc/selinux/config

SELINUX=disabled

[root@Web2 ~]# setenforce 0

[root@Web2 ~]# systemctl disable --now firewalld.service

Removed /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@Web2 ~]# cd /usr/share/nginx/html/

[root@Web2 html]# ls

404.html 50x.html index.html nginx-logo.png poweredby.png

[root@Web2 html]# echo "nginx" > index.html # write the page content

[root@Web2 html]# cat index.html

nginx

[root@Web2 html]# systemctl enable --now nginx.service

Created symlink /etc/systemd/system/multi-user.target.wants/nginx.service → /usr/lib/systemd/system/nginx.service.

[root@Web2 html]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 128 0.0.0.0:111 0.0.0.0:*

LISTEN 0 128 0.0.0.0:80 0.0.0.0:*

LISTEN 0 32 192.168.122.1:53 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 [::]:111 [::]:*

LISTEN 0 128 [::]:80 [::]:*

LISTEN 0 128 [::]:22 [::]:*

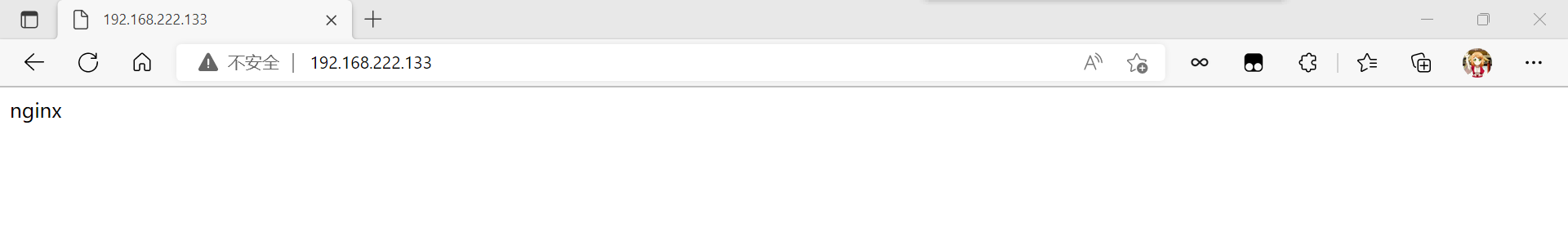

Access:

Enable nginx load balancing and reverse proxying

On the Keepalived master node, nginx must be enabled to start at boot.

master:

[root@master ~]# systemctl status nginx.service

● nginx.service - nginx server daemon

Loaded: loaded (/usr/lib/systemd/system/nginx.service; enabled; vendor preset: disabled)

Active: active (running) since Tue 2022-10-18 21:27:54 CST; 1h 1min ago

Process: 46768 ExecStart=/usr/local/nginx/sbin/nginx (code=exited, status=0/SUCCESS)

Main PID: 46769 (nginx)

Tasks: 2 (limit: 12221)

Memory: 2.6M

CGroup: /system.slice/nginx.service

├─46769 nginx: master process /usr/local/nginx/sbin/nginx

└─46770 nginx: worker process

Oct 18 21:27:54 nginx systemd[1]: Starting nginx server daemon...

Oct 18 21:27:54 nginx systemd[1]: Started nginx server daemon.

[root@master ~]# vim /usr/local/nginx/conf/nginx.conf

...

upstream webserver { # add inside the http block

server 192.168.222.137;

server 192.168.222.138;

}

...

location / { # modify inside the server block

root html;

proxy_pass http://webserver;

}

[root@master ~]# systemctl reload nginx.service

# reload the configuration

Test:

Enter the IP address of the nginx load balancer in a browser.

backup:

On the Keepalived backup node, nginx is not enabled at boot. If it is enabled, requests to the VIP may later fail. Start it manually when you need to test.

[root@backup ~]# systemctl status nginx.service

● nginx.service - nginx server daemon

Loaded: loaded (/usr/lib/systemd/system/nginx.service; disabled; vendor preset: disabled)

Active: active (running) since Tue 2022-10-18 22:25:31 CST; 1s ago

Process: 73641 ExecStart=/usr/local/nginx/sbin/nginx (code=exited, status=0/SUCCESS)

Main PID: 73642 (nginx)

Tasks: 2 (limit: 12221)

Memory: 2.7M

CGroup: /system.slice/nginx.service

├─73642 nginx: master process /usr/local/nginx/sbin/nginx

└─73643 nginx: worker process

Oct 18 22:25:31 backup systemd[1]: Starting nginx server daemon...

Oct 18 22:25:31 backup systemd[1]: Started nginx server daemon.

[root@backup ~]# vim /usr/local/nginx/conf/nginx.conf

...

upstream webserver { # add inside the http block

server 192.168.222.137;

server 192.168.222.138;

}

...

location / { # modify inside the server block

root html;

proxy_pass http://webserver;

}

[root@backup ~]# systemctl reload nginx.service

# reload the configuration

Access:

Enter the IP address of the nginx load balancer in a browser.

Install Keepalived

master:

[root@master ~]# dnf list all |grep keepalived # check whether the package is available

Failed to set locale, defaulting to C.UTF-8

keepalived.x86_64 2.1.5-6.el8 AppStream

[root@master ~]# dnf -y install keepalived

backup:

[root@backup ~]# dnf list all |grep keepalived # check whether the package is available

Failed to set locale, defaulting to C.UTF-8

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

Module yaml error: Unexpected key in data: static_context [line 9 col 3]

keepalived.x86_64 2.1.5-6.el8 AppStream

[root@backup ~]# dnf -y install keepalived

Configure Keepalived

master

[root@master ~]# cd /etc/keepalived/

[root@master keepalived]# ls

keepalived.conf

[root@master keepalived]# mv keepalived.conf{,-bak} # back up the configuration file

[root@master keepalived]# ls

keepalived.conf-bak

[root@master keepalived]# vim keepalived.conf # write a new configuration file

[root@master keepalived]# cat keepalived.conf

! Configuration File for keepalived

global_defs {

router_id lb01

}

vrrp_instance VI_1 { # must be the same on master and backup

state BACKUP

interface ens33 # network interface

virtual_router_id 51

priority 100 # higher than on the backup node

advert_int 1

authentication {

auth_type PASS

auth_pass tushanbu # password (can be randomly generated)

}

virtual_ipaddress {

192.168.222.133 # high-availability virtual IP (VIP) address

}

}

virtual_server 192.168.222.133 80 {

delay_loop 6

lb_algo rr

lb_kind DR

persistence_timeout 50

protocol TCP

real_server 192.168.222.250 80 { # master node IP

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

real_server 192.168.222.139 80 { # backup node IP

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

}

[root@master keepalived]# systemctl enable --now keepalived.service

Created symlink /etc/systemd/system/multi-user.target.wants/keepalived.service → /usr/lib/systemd/system/keepalived.service.

backup:

[root@backup ~]# cd /etc/keepalived/

[root@backup keepalived]# ls

keepalived.conf

[root@backup keepalived]# mv keepalived.conf{,-bak} # back up the configuration file

[root@backup keepalived]# ls

keepalived.conf-bak

[root@backup keepalived]# vim keepalived.conf # write a new configuration file

[root@backup keepalived]# cat keepalived.conf

! Configuration File for keepalived

global_defs {

router_id lb02

}

vrrp_instance VI_1 { # must be the same on master and backup

state BACKUP

interface ens33 # network interface

virtual_router_id 51

priority 90 # lower than on the master node

advert_int 1

authentication {

auth_type PASS

auth_pass tushanbu # password (can be randomly generated)

}

virtual_ipaddress {

192.168.222.133 # high-availability virtual IP (VIP) address

}

}

virtual_server 192.168.222.133 80 {

delay_loop 6

lb_algo rr

lb_kind DR

persistence_timeout 50

protocol TCP

real_server 192.168.222.250 80 { # master node IP

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

real_server 192.168.222.139 80 { # backup node IP

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

}

[root@backup keepalived]# systemctl enable --now keepalived.service

Created symlink /etc/systemd/system/multi-user.target.wants/keepalived.service → /usr/lib/systemd/system/keepalived.service.

[root@backup keepalived]# systemctl start nginx

# nginx can be started now for testing

Check the VIP

master:

[root@master keepalived]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:05:f4:28 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.250/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.222.133/32 scope global ens33

valid_lft forever preferred_lft forever

backup:

[root@backup keepalived]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:31:af:f9 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.139/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe31:aff9/64 scope link

valid_lft forever preferred_lft forever

# The VIP is on the master because its priority in the Keepalived configuration is higher than the backup's, so this is expected.

Access:
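Scanning `ip a` output for the VIP is easy to get wrong when a node has many addresses. A small helper like the following can do the check. The `has_vip` name is made up for this sketch, and it assumes the usual `inet ADDRESS/PREFIX` layout that `ip a` and `ip -o -4 addr show` print.

```shell
#!/bin/bash
# Succeed if the given IPv4 address appears in `ip a`-style output
# read from standard input (fixed-string match on "inet <addr>/").
has_vip() {
    local vip=$1
    grep -qF "inet ${vip}/"
}

# On a real node one might run:  ip -o -4 addr show | has_vip 192.168.222.133
# Canned sample so the example runs anywhere:
sample='2: ens33    inet 192.168.222.250/24 brd 192.168.222.255 scope global noprefixroute ens33
2: ens33    inet 192.168.222.133/32 scope global ens33'

if printf '%s\n' "$sample" | has_vip 192.168.222.133; then
    echo "VIP present"
else
    echo "VIP absent"
fi
```

Running the same check on both nodes before and after a failover shows at a glance which one currently holds the VIP.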

master:

[root@master keepalived]# curl 192.168.222.133

apache

[root@master keepalived]# curl 192.168.222.133

nginx

Now stop nginx and keepalived on the master

[root@master keepalived]# systemctl stop nginx.service

[root@master keepalived]# systemctl stop keepalived.service

[root@master keepalived]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:05:f4:28 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.250/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

# the master no longer holds the VIP

backup:

[root@backup keepalived]# systemctl enable --now keepalived

[root@backup keepalived]# systemctl start nginx.service

[root@backup keepalived]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:31:af:f9 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.139/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.222.133/32 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe31:aff9/64 scope link

valid_lft forever preferred_lft forever

# the VIP now appears on the backup, which has become the master

[root@backup keepalived]# curl 192.168.222.133

apache

[root@backup keepalived]# curl 192.168.222.133

nginx

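Access:

As you can see, even with one nginx load balancer down, access is unaffected. This is the high-availability setup for nginx load balancing.

Restart nginx and keepalived on the master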

[root@master keepalived]# systemctl enable --now keepalived

[root@master keepalived]# systemctl enable --now nginx

[root@master keepalived]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:05:f4:28 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.250/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.222.133/32 scope global ens33

valid_lft forever preferred_lft forever

# the VIP is back on the master node

Write scripts to monitor the state of Keepalived and nginx

master:

[root@master keepalived]# cd

[root@master ~]# mkdir /scripts

[root@master ~]# cd /scripts/

[root@master scripts]# vim check_nginx.sh

[root@master scripts]# cat check_nginx.sh

#!/bin/bash

nginx_status=$(ps -ef|grep -Ev "grep|$0"|grep '\bnginx\b'|wc -l)

if [ $nginx_status -lt 1 ];then

systemctl stop keepalived

fi

[root@master scripts]# chmod +x check_nginx.sh

[root@master scripts]# ll

total 4

-rwxr-xr-x. 1 root root 151 Oct 19 00:32 check_nginx.sh

[root@master scripts]# vim notify.sh

[root@master scripts]# cat notify.sh

#!/bin/bash

case "$1" in

master)

nginx_status=$(ps -ef|grep -Ev "grep|$0"|grep '\bnginx\b'|wc -l)

if [ $nginx_status -lt 1 ];then

systemctl start nginx

fi

;;

backup)

nginx_status=$(ps -ef|grep -Ev "grep|$0"|grep '\bnginx\b'|wc -l)

if [ $nginx_status -gt 0 ];then

systemctl stop nginx

fi

;;

*)

echo "Usage:$0 master|backup VIP"

;;

esac

[root@master scripts]# chmod +x notify.sh

[root@master scripts]# ll

total 8

-rwxr-xr-x. 1 root root 151 Oct 19 00:32 check_nginx.sh

-rwxr-xr-x. 1 root root 399 Oct 19 00:35 notify.sh

backup:

Create the directory for the scripts in advance

[root@backup keepalived]# cd

[root@backup ~]# mkdir /scripts

[root@backup ~]# cd /scripts/

Copy the script from the master node into the directory created on the backup node

[root@master scripts]# scp notify.sh 192.168.222.139:/scripts/

root@192.168.222.139's password:

notify.sh 100% 399 216.0KB/s 00:00

[root@backup scripts]# ls

notify.sh

[root@backup scripts]# cat notify.sh

#!/bin/bash

case "$1" in

master)

nginx_status=$(ps -ef|grep -Ev "grep|$0"|grep '\bnginx\b'|wc -l)

if [ $nginx_status -lt 1 ];then

systemctl start nginx

fi

;;

backup)

nginx_status=$(ps -ef|grep -Ev "grep|$0"|grep '\bnginx\b'|wc -l)

if [ $nginx_status -gt 0 ];then

systemctl stop nginx

fi

;;

*)

echo "Usage:$0 master|backup VIP"

;;

esac

Add the monitoring scripts to the keepalived configuration

master:

[root@master scripts]# cd

[root@master ~]# vim /etc/keepalived/keepalived.conf

[root@master ~]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id lb01

}

vrrp_script nginx_check { # added

script "/scripts/check_nginx.sh" # added

interval 1 # added

weight -20 # added

} # added

vrrp_instance VI_1 {

state MASTER

interface ens33

virtual_router_id 51

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass tushanbu

}

virtual_ipaddress {

192.168.222.133

}

track_script { # added

nginx_check # added

} # added

notify_master "/scripts/notify.sh master" # added

}

virtual_server 192.168.222.133 80 {

delay_loop 6

lb_algo rr

lb_kind DR

persistence_timeout 50

protocol TCP

real_server 192.168.222.250 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

real_server 192.168.222.139 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

}

[root@master ~]# systemctl restart keepalived.service
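A note on `weight -20` in the master configuration: keepalived applies a negative weight only while the tracked script exits with a non-zero status. The check_nginx.sh shown earlier normally exits with status 0 and stops keepalived itself, so in this setup the failover comes from keepalived stopping, not from the weight. The sketch below works through the arithmetic that would apply if the script reported failure through its exit status instead. The `effective_priority` function and its arguments are made up for this illustration.

```shell
#!/bin/bash
# Effective VRRP priority for a tracked script with a negative weight:
# the weight is added to the base priority only while the script is failing.
# (Illustrative arithmetic; positive weights are not modelled here.)
effective_priority() {
    local base=$1 weight=$2 script_exit=$3
    if (( weight < 0 && script_exit != 0 )); then
        echo $(( base + weight ))
    else
        echo "$base"
    fi
}

master=$(effective_priority 100 -20 1)   # check script failing on the master
backup=90                                # backup priority from its config
if (( master < backup )); then
    echo "backup takes over (master=$master, backup=$backup)"
else
    echo "master keeps the VIP (master=$master, backup=$backup)"
fi
```

With the values from this article, a failing check would drop the master from 100 to 80, which is below the backup's 90, so the backup would win the election.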

backup:

The backup does not need to check whether nginx is healthy. It starts nginx when promoted to MASTER and stops it when demoted to BACKUP.

[root@backup scripts]# cd

[root@backup ~]# vim /etc/keepalived/keepalived.conf

[root@backup ~]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id lb02

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

virtual_router_id 51

priority 90

advert_int 1

authentication {

auth_type PASS

auth_pass tushanbu

}

virtual_ipaddress {

192.168.222.133

}

notify_master "/scripts/notify.sh master" # added

notify_backup "/scripts/notify.sh backup" # added

}

virtual_server 192.168.222.133 80 {

delay_loop 6

lb_algo rr

lb_kind DR

persistence_timeout 50

protocol TCP

real_server 192.168.222.250 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

real_server 192.168.222.139 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

}

[root@backup ~]# systemctl restart keepalived.service

Test

Check the state during normal operation

[root@master ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:f6:83:57 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.250/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.222.133/32 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fef6:8357/64 scope link

valid_lft forever preferred_lft forever

[root@master]# curl 192.168.222.133

apache

[root@master]# curl 192.168.222.133

nginx

# the VIP is on the master node

Stop nginx on the master

[root@master ~]# systemctl stop nginx.service

[root@master ~]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 [::]:22 [::]:*

[root@master ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:05:f4:28 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.250/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

# no VIP

backup:

[root@backup ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:31:af:f9 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.139/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.222.133/32 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe31:aff9/64 scope link

valid_lft forever preferred_lft forever

[root@backup ~]# curl 192.168.222.133

apache

[root@backup ~]# curl 192.168.222.133

nginx

# the backup node has become the master

Restart nginx on the master

[root@master ~]# systemctl restart keepalived.service

[root@master ~]# systemctl restart nginx.service

[root@master ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:f6:83:57 brd ff:ff:ff:ff:ff:ff

inet 192.168.222.250/24 brd 192.168.222.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.222.133/32 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fef6:8357/64 scope link

valid_lft forever preferred_lft forever

[root@master]# curl 192.168.222.133

apache

[root@master]# curl 192.168.222.133

nginx

# the VIP is back on the master