A small web crawler written in Python

I wrote this crawler while following an online video tutorial. It scrapes data from a few simple pages and runs on Python 3.

Entry file: spider_main.py

    # coding=utf-8
    import htm_download, urls_manager, htm_output, htm_parser


    class SpiderMain(object):
        def __init__(self):
            self.urls = urls_manager.UrlManager()
            self.download = htm_download.HtmlDownload()
            self.parser = htm_parser.HtmlParser()
            self.output = htm_output.HtmlOutput()

        def craw(self, root_url):
            self.urls.add_new_url(root_url)
            count = 1
            # Start the crawl loop
            while self.urls.has_new_url():
                try:
                    new_url = self.urls.get_new_url()
                    print('craw %d : %s' % (count, new_url))
                    html_cont = self.download.download(new_url)
                    new_urls, new_data = self.parser.parser(new_url, html_cont)
                    self.urls.add_new_urls(new_urls)
                    self.output.collect_data(new_data)
                    # Stop after 10 pages
                    if count == 10:
                        break
                    count = count + 1
                except Exception as e:
                    # Network and parsing errors are not ValueError, so catch broadly
                    print('craw failed: %s' % e)
            self.output.output_html()


    if __name__ == '__main__':
        root_url = 'https://baike.baidu.com/item/php/9337'
        spider = SpiderMain()
        spider.craw(root_url)

URL manager file: urls_manager.py

    # coding=utf-8
    class UrlManager(object):
        def __init__(self):
            self.new_urls = set()  # URLs waiting to be crawled
            self.old_urls = set()  # URLs already crawled

        def add_new_url(self, root_url):
            if root_url is None:
                return
            if root_url not in self.new_urls and root_url not in self.old_urls:
                self.new_urls.add(root_url)

        def has_new_url(self):
            return len(self.new_urls) != 0

        def get_new_url(self):
            url = self.new_urls.pop()
            self.old_urls.add(url)
            return url

        def add_new_urls(self, new_urls):
            # Check for None first; len(None) would raise TypeError
            if new_urls is None or len(new_urls) == 0:
                return
            for url in new_urls:
                self.add_new_url(url)

        def return_old_url(self):
            return self.old_urls

        def return_new_url(self):
            return self.new_urls
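
To make the deduplication easier to see, here is a minimal stand-alone sketch of the same two-set bookkeeping. The function names `add_url` and `next_url` and the `example.com` URL are made up for illustration and are not part of the crawler itself.

```python
# Hypothetical stand-alone sketch of the two-set bookkeeping used by UrlManager.
new_urls = set()   # URLs waiting to be crawled
old_urls = set()   # URLs already handed out


def add_url(url):
    """Queue a URL only if it has never been seen before."""
    if url is not None and url not in new_urls and url not in old_urls:
        new_urls.add(url)


def next_url():
    """Take one pending URL and remember it as visited."""
    url = new_urls.pop()
    old_urls.add(url)
    return url


add_url('https://example.com/a')
add_url('https://example.com/a')   # duplicate, ignored
first = next_url()
add_url(first)                     # already visited, ignored
print(len(new_urls), len(old_urls))
```

Note that `set.pop()` removes an arbitrary element, so the order in which pages are crawled is not guaranteed.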

Downloading the page data for a URL: htm_download.py

    # coding=utf-8
    import string
    from urllib import request
    from urllib.parse import quote


    class HtmlDownload(object):
        def download(self, new_url):
            if new_url is None:
                return None
            # Percent-encode non-ASCII characters (e.g. Chinese) in the URL
            s = quote(new_url, safe=string.printable)
            resp = request.urlopen(s)
            if resp.status != 200:
                return None
            return resp.read().decode('utf-8')
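
The `quote(new_url, safe=string.printable)` call is the least obvious line in the downloader, so here is a small sketch of what it does on its own. The helper name `encode_url` and the sample URL are assumptions made for this example only.

```python
import string
from urllib.parse import quote


def encode_url(url):
    """Percent-encode only the characters outside printable ASCII."""
    return quote(url, safe=string.printable)


# Hypothetical URL containing Chinese characters, used only for illustration.
encoded = encode_url('https://baike.baidu.com/item/编程')
print(encoded)
```

Because every printable ASCII character is marked as safe, the scheme, slashes and query separators are left alone and only the non-ASCII part is rewritten as UTF-8 percent escapes.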

Parsing the downloaded data: htm_parser.py

    # coding=utf-8
    import re
    from urllib import parse

    from bs4 import BeautifulSoup


    class HtmlParser(object):
        def parser(self, new_url, html_cont):
            if new_url is None or html_cont is None:
                # Return an empty pair so the caller can still unpack the result
                return set(), None
            resp = BeautifulSoup(html_cont, 'html.parser')
            new_urls = self._get_new_urls(new_url, resp)
            new_data = self._get_new_data(new_url, resp)
            return new_urls, new_data

        def _get_new_urls(self, new_url, resp):
            # Collect every link whose href contains "/view"
            links = resp.find_all('a', href=re.compile(r"/view"))
            new_urls = set()
            for link in links:
                # Resolve relative links against the current page URL
                new_full_url = parse.urljoin(new_url, link.get('href'))
                new_urls.add(new_full_url)
            return new_urls

        def _get_new_data(self, new_url, resp):
            res_data = {}
            res_data['url'] = new_url
            # Title
            title_node = resp.find('dd', {'class': 'lemmaWgt-lemmaTitle-title'})
            if title_node is None:
                return None
            res_data['title'] = title_node.find('h1').get_text()
            # Summary; equivalent to class_="lemma-summary"
            summary = resp.find('div', {'class': 'lemma-summary'})
            # Guard against pages that have no summary block
            res_data['content'] = summary.get_text() if summary is not None else ''
            return res_data
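
The link handling in `_get_new_urls` comes down to two steps: filter the `href` values with a regular expression, then resolve each one against the current page with `urljoin`. Below is a rough sketch of just those two steps using only the standard library. The `hrefs` list is invented for illustration and stands in for whatever `find_all('a')` would return on a real page.

```python
import re
from urllib.parse import urljoin

page_url = 'https://baike.baidu.com/item/php/9337'
# Hypothetical href values, standing in for links found on the page.
hrefs = ['/view/123.htm', '/item/Python', 'https://example.com/view/9']

pattern = re.compile(r"/view")
# Keep only hrefs that contain "/view", then make them absolute.
new_urls = {urljoin(page_url, h) for h in hrefs if pattern.search(h)}
print(sorted(new_urls))
```

In this sample the `/item/Python` link is dropped because it does not match the pattern, and the absolute `example.com` link is returned unchanged by `urljoin`.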

Outputting the parsed data: htm_output.py

    # coding=utf-8
    class HtmlOutput(object):
        def __init__(self):
            self.datas = []

        def collect_data(self, new_data):
            if new_data is None:
                return
            self.datas.append(new_data)

        def output_html(self):
            # The with block closes the file even if a write fails
            with open('output.html', 'w', encoding='utf-8') as fout:
                fout.write("<html>")
                fout.write("<body>")
                fout.write("<table>")
                for data in self.datas:
                    fout.write("<tr>")
                    fout.write("<td>%s</td>" % data['url'])
                    fout.write("<td>%s</td>" % data['title'])
                    fout.write("<td>%s</td>" % data['content'])
                    fout.write("</tr>")
                fout.write("</table>")
                fout.write("</body>")
                fout.write("</html>")
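
The output code writes the scraped text straight into the table cells. If a page title or summary happens to contain characters such as `<` or `&`, the resulting HTML could break. One possible variation, not part of the original tutorial code, is to escape each field with `html.escape` first. The helper `render_row` and the sample record are hypothetical.

```python
from html import escape


def render_row(data):
    """Build one table row, escaping each field so it is shown as text."""
    cells = ''.join('<td>%s</td>' % escape(str(data[key]))
                    for key in ('url', 'title', 'content'))
    return '<tr>%s</tr>' % cells


# Hypothetical record, shaped like the dicts returned by _get_new_data.
row = render_row({'url': 'https://example.com/?a=1&b=2',
                  'title': 'PHP <script>',
                  'content': 'plain text'})
print(row)
```

A row built this way could be passed to `fout.write` in place of the separate `<td>` writes.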

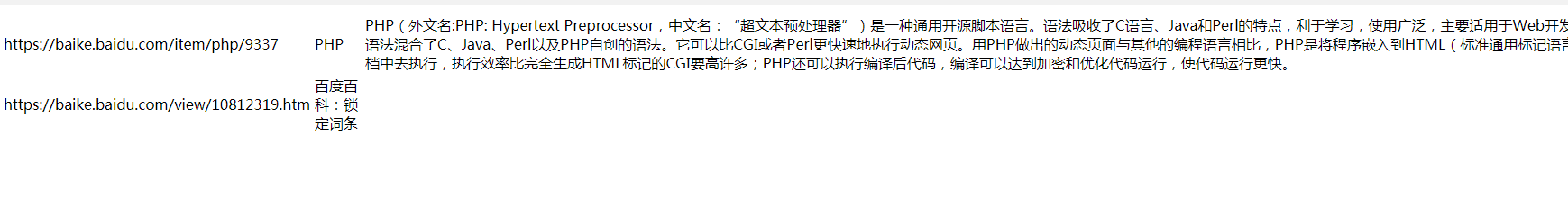

运行spider_main.py文件后的效果如下图