K8S从入门到放弃系列-(15)Kubernetes集群Ingress部署

Ingress是kubernetes集群对外提供服务的一种方式.ingress部署相对比较简单,官方把相关资源配置文件,都已经集合到一个yml文件中(mandatory.yaml),镜像地址也修改为quay.io。

1、部署

官方地址:https://github.com/kubernetes/ingress-nginx

1.1 下载部署文件:

## mandatory.yaml为ingress所有资源yml文件的集合

### 若是单独部署,需要分别下载configmap.yaml、namespace.yaml、rbac.yaml、service-nodeport.yaml、with-rbac.yaml

[root@k8s-master01 ingress-master]# wget https://raw.githubusercontent.com/kubernetes/ingress-nginx/master/deploy/static/mandatory.yaml

### service-nodeport.yaml为ingress通过nodeport对外提供服务,注意默认nodeport暴露端口为随机,可以编辑该文件自定义端口

[root@k8s-master01 ingress-master]# wget https://raw.githubusercontent.com/kubernetes/ingress-nginx/master/deploy/static/provider/baremetal/service-nodeport.yaml

1.2 应用yml文件创建ingress资源

[root@k8s-master01 ingress-master]# kubectl apply -f mandatory.yaml

namespace/ingress-nginx created

configmap/nginx-configuration created

configmap/tcp-services created

configmap/udp-services created

serviceaccount/nginx-ingress-serviceaccount created

clusterrole.rbac.authorization.k8s.io/nginx-ingress-clusterrole created

role.rbac.authorization.k8s.io/nginx-ingress-role created

rolebinding.rbac.authorization.k8s.io/nginx-ingress-role-nisa-binding created

clusterrolebinding.rbac.authorization.k8s.io/nginx-ingress-clusterrole-nisa-binding created

deployment.apps/nginx-ingress-controller created

[root@k8s-master01 ingress-master]# kubectl apply -f service-nodeport.yaml

service/ingress-nginx created

1.3 查看资源创建

[root@k8s-master01 ingress-master]# kubectl get pods -n ingress-nginx -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-ingress-controller-86449c74bb-cbkgp 1/1 Running 0 19s 10.254.88.48 k8s-node02 <none> <none>

### 通过创建的svc可以看到已经把ingress-nginx service在主机映射的端口为33848(http),45891(https)

[root@k8s-master01 ingress-master]# kubectl get svc -n ingress-nginx

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

ingress-nginx NodePort 10.254.102.184 <none> 80:33848/TCP,443:45891/TCP 43s

说明:

Ingress Contronler 通过与 Kubernetes API 交互,动态的去感知集群中 Ingress 规则变化,然后读取它,按照自定义的规则,规则就是写明了哪个域名对应哪个service,生成一段 Nginx 配置,再写到 Nginx-ingress-control的 Pod 里,这个 Ingress Contronler 的pod里面运行着一个nginx服务,控制器会把生成的nginx配置写入/etc/nginx.conf文件中,然后 reload 一下 使用配置生效。以此来达到域名分配置及动态更新的问题。

2、验证

2.1 创建svc及后端deployment

[root@k8s-master01 ingress-master]# cat test-ingress-pods.yml apiVersion: v1 kind: Service metadata: name: myapp-svc namespace: default spec: selector: app: myapp env: test ports: - name: http port: 80 targetPort: 80 --- apiVersion: apps/v1 kind: Deployment metadata: name: myapp-test spec: replicas: 2 selector: matchLabels: app: myapp env: test template: metadata: labels: app: myapp env: test spec: containers: - name: myapp image: nginx:1.15-alpine ports: - name: httpd containerPort: 80

## 查看pod资源部署

[root@k8s-master01 ingress-master]# kubectl get pods

NAME READY STATUS RESTARTS AGE

myapp-test-66cf5bf7d5-5cnjv 1/1 Running 0 3m39s

myapp-test-66cf5bf7d5-vdkml 1/1 Running 0 3m39s

## 查看svc

[root@k8s-master01 ingress-master]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

myapp-svc ClusterIP 10.254.155.238 <none> 80/TCP 4m40s

2.2 创建ingress规则

## ingress规则中,要指定需要绑定暴露的svc名称

[root@k8s-master01 ingress-master]# cat test-ingress-myapp.yml apiVersion: extensions/v1beta1 kind: Ingress metadata: name: ingress-myapp namespace: default annotations: kubernetes.io/ingress.class: "nginx" spec: rules: - host: www.tchua.top http: paths: - path: backend: serviceName: myapp-svc servicePort: 80

[root@k8s-master01 ingress-master]# kubectl apply -f test-ingress-myapp.yml

[root@k8s-master01 ingress-master]# kubectl get ingress

NAME HOSTS ADDRESS PORTS AGE

ingress-myapp www.tchua.top 80 13s

2.3 在win主机配置hosts域名解析

## 这里随机解析任一台节点主机都可以

172.16.11.123 www.tchua.top

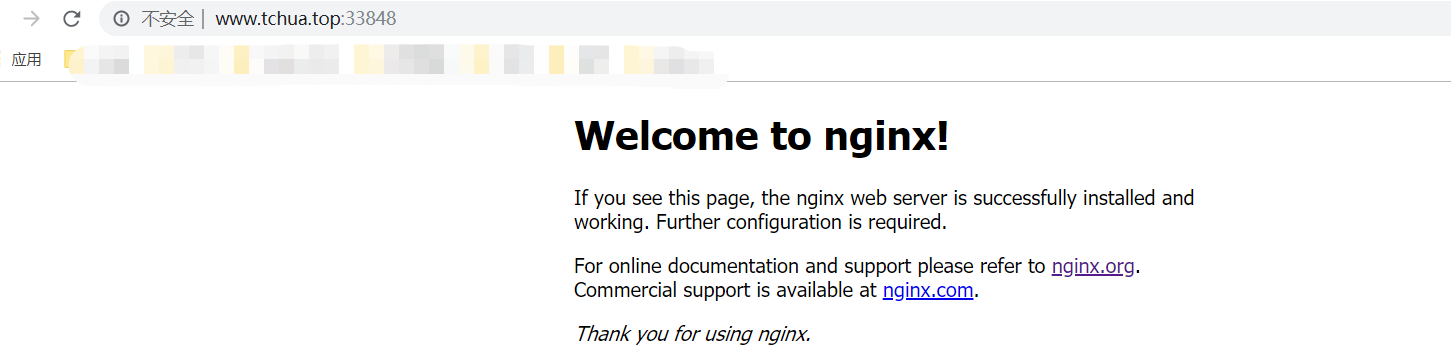

然后主机浏览器访问http://www.tchua.top:33848,这里访问时需要加上svc映射到主机时随机产生的nodePort端口号。

总结:

1、上面我们创建一个针对于nginx的deployment资源,pod为2个;

2、为nginx的pod暴露service服务,名称为myapp-svc

3、通过ingress把nginx暴露出去

这里对于nginx创建的svc服务,其实在实际调度过程中,流量是直接通过ingress然后调度到后端的pod,而没有经过svc服务,svc只是提供一个收集pod服务的作用。

3、Ingress高可用

上面我们只是解决了集群对外提供服务的功能,并没有对ingress进行高可用的部署,Ingress高可用,我们可以通过修改deployment的副本数来实现高可用,但是由于ingress承载着整个集群流量的接入,所以生产环境中,建议把ingress通过DaemonSet的方式部署集群中,而且该节点打上污点不允许业务pod进行调度,以避免业务应用与Ingress服务发生资源争抢。然后通过SLB把ingress节点主机添为后端服务器,进行流量转发。

##修改mandatory.yaml

### 主要修改pod相关

apiVersion: extensions/v1beta1

kind: DaemonSet

metadata:

name: nginx-ingress-controller

namespace: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

spec:

selector:

matchLabels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

template:

metadata:

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

annotations:

prometheus.io/port: "10254"

prometheus.io/scrape: "true"

spec:

serviceAccountName: nginx-ingress-serviceaccount

hostNetwork: true

dnsPolicy: ClusterFirstWithHostNet

nodeSelector:

vanje/ingress-controller-ready: "true"

tolerations:

- key: "node-role.kubernetes.io/master"

operator: "Equal"

value: ""

effect: "NoSchedule"

containers:

- name: nginx-ingress-controller

image: quay.io/kubernetes-ingress-controller/nginx-ingress-controller:0.25.0

args:

- /nginx-ingress-controller

- --configmap=$(POD_NAMESPACE)/nginx-configuration

- --tcp-services-configmap=$(POD_NAMESPACE)/tcp-services

- --udp-services-configmap=$(POD_NAMESPACE)/udp-services

- --publish-service=$(POD_NAMESPACE)/ingress-nginx

- --annotations-prefix=nginx.ingress.kubernetes.io

securityContext:

allowPrivilegeEscalation: true

capabilities:

drop:

- ALL

add:

- NET_BIND_SERVICE

# www-data -> 33

runAsUser: 33

env:

- name: POD_NAME

valueFrom:

fieldRef:

fieldPath: metadata.name

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

ports:

- name: http

containerPort: 80

- name: https

containerPort: 443

livenessProbe:

failureThreshold: 3

httpGet:

path: /healthz

port: 10254

scheme: HTTP

initialDelaySeconds: 10

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 10

readinessProbe:

failureThreshold: 3

httpGet:

path: /healthz

port: 10254

scheme: HTTP

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 10

修改参数如下:

kind: Deployment #修改为DaemonSet

replicas: 1 #注销此行,DaemonSet不需要此参数

hostNetwork: true #添加该字段让docker使用物理机网络,在物理机暴露服务端口(80),注意物理机80端口提前不能被占用

dnsPolicy: ClusterFirstWithHostNet #使用hostNetwork后容器会使用物理机网络包括DNS,会无法解析内部service,使用此参数让容器使用K8S的DNS

nodeSelector:vanje/ingress-controller-ready: "true" #添加节点标签

tolerations: 添加对指定节点容忍度

这里我在2台master节点部署(生产环境不要使用master节点,应该部署在独立的节点上),因为我们采用DaemonSet的方式,所以我们需要对2个节点打标签以及容忍度。

## 给节点打标签

[root@k8s-master01 ingress-master]# kubectl label nodes k8s-master02 vanje/ingress-controller-ready=true [root@k8s-master01 ingress-master]# kubectl label nodes k8s-master03 vanje/ingress-controller-ready=true

## 节点打污点

### master节点我之前已经打过污点,如果你没有打污点,执行下面2条命令。此污点名称需要与yaml文件中pod的容忍污点对应

[root@k8s-master02 ~]# kubectl taint nodes k8s-master02 node-role.kubernetes.io/master=:NoSchedule

[root@k8s-master03 ~]# kubectl taint nodes k8s-master03 node-role.kubernetes.io/master=:NoSchedule

3.2)创建资源

[root@k8s-master01 ingress-master]# kubectl apply -f mandatory.yaml

## 查看资源分布情况

### 可以看到两个ingress-controller已经根据我们选择,部署在2个master节点上

[root@k8s-master01 ingress-master]# kubectl get pod -n ingress-nginx -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-ingress-controller-298dq 1/1 Running 0 134m 172.16.11.122 k8s-master03 <none> <none>

nginx-ingress-controller-sh9h2 1/1 Running 0 134m 172.16.11.121 k8s-master02 <none> <none>

3.3)测试

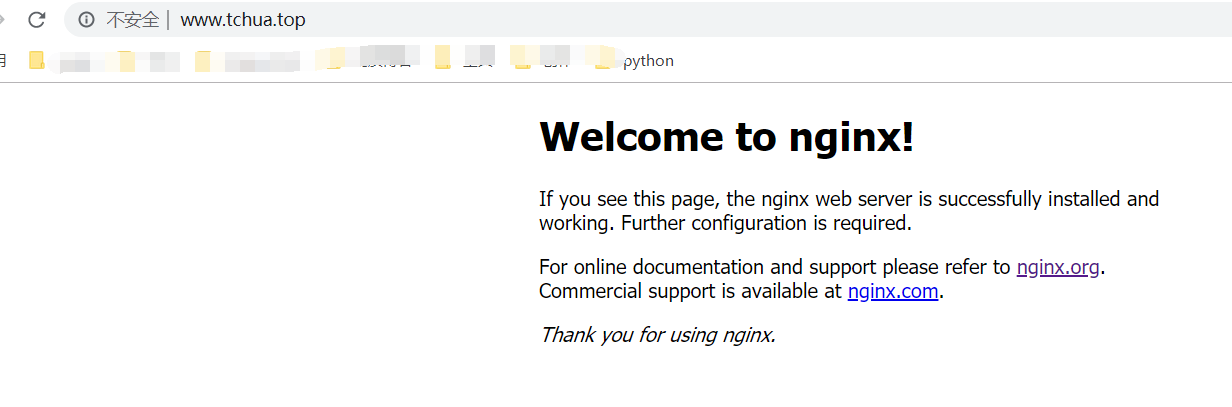

这里直接使用上面创建的pod及对应svc测试即可,另外注意一点,因为我们创建的ingress-controller采用的时hostnetwork模式,所以无需在创建ingress-svc服务来把端口映射到节点主机上。

## 创建pod及svc

[root@k8s-master01 ingress-master]# kubectl test-ingress-pods.yml

## 创建ingress规则 [root@k8s-master01 ingress-master]# kubectl test-ingress-myapp.yml

在win主机上直接解析,IP地址为k8s-master03/k8s-master02 任意节点ip即可,访问的时候也无需再加端口

浙公网安备 33010602011771号

浙公网安备 33010602011771号