Redis Cluster (distributed, sharded Redis cluster)

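High availability:

When the cluster is built, the master node of every shard is paired with a replica (slave) node, which gives the same replication as slaveof. If a master goes down, the cluster fails over automatically, much like Sentinel does.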

Redis Cluster setup [6 nodes]:

1. Install Ruby support

yum install ruby rubygems -y

2. Switch to a domestic (China) gem mirror

Use the domestic mirror:

gem sources -l

gem sources -a http://mirrors.aliyun.com/rubygems/

gem sources --remove https://rubygems.org/

gem install redis -v 3.3.3

gem sources -l

Add the mirror and remove the default source in a single command:

gem sources -a http://mirrors.aliyun.com/rubygems/ --remove https://rubygems.org/

After adding it, verify with:

[root@k8s-master1 26380]# gem sources -l

*** CURRENT SOURCES ***

http://mirrors.aliyun.com/rubygems/ #the default source has been removed and the Aliyun mirror added

3. Install the Redis Cluster plugin (the redis gem that redis-trib.rb depends on)

[root@k8s-master1 26380]# gem install redis -v 3.3.3

Fetching: redis-3.3.3.gem (100%)

Successfully installed redis-3.3.3

Parsing documentation for redis-3.3.3

Installing ri documentation for redis-3.3.3

1 gem installed

4. Prepare the cluster nodes

mkdir /nosql/700{0..5} -p

vim /nosql/7000/redis.conf

#--------------------------------------

port 7000

daemonize yes

pidfile /nosql/7000/redis.pid

loglevel notice

logfile "/nosql/7000/redis.log"

dbfilename dump.rdb

dir /nosql/7000

protected-mode no

cluster-enabled yes

cluster-config-file nodes.conf

cluster-node-timeout 5000

appendonly yes

#--------------------------------------

vim /nosql/7001/redis.conf

#--------------------------------------

port 7001

daemonize yes

pidfile /nosql/7001/redis.pid

loglevel notice

logfile "/nosql/7001/redis.log"

dbfilename dump.rdb

dir /nosql/7001

protected-mode no

cluster-enabled yes

cluster-config-file nodes.conf

cluster-node-timeout 5000

appendonly yes

#--------------------------------------

vim /nosql/7002/redis.conf

#--------------------------------------

port 7002

daemonize yes

pidfile /nosql/7002/redis.pid

loglevel notice

logfile "/nosql/7002/redis.log"

dbfilename dump.rdb

dir /nosql/7002

protected-mode no

cluster-enabled yes

cluster-config-file nodes.conf

cluster-node-timeout 5000

appendonly yes

#--------------------------------------

vim /nosql/7003/redis.conf

#--------------------------------------

port 7003

daemonize yes

pidfile /nosql/7003/redis.pid

loglevel notice

logfile "/nosql/7003/redis.log"

dbfilename dump.rdb

dir /nosql/7003

protected-mode no

cluster-enabled yes

cluster-config-file nodes.conf

cluster-node-timeout 5000

appendonly yes

#--------------------------------------

vim /nosql/7004/redis.conf

#--------------------------------------

port 7004

daemonize yes

pidfile /nosql/7004/redis.pid

loglevel notice

logfile "/nosql/7004/redis.log"

dbfilename dump.rdb

dir /nosql/7004

protected-mode no

cluster-enabled yes

cluster-config-file nodes.conf

cluster-node-timeout 5000

appendonly yes

#--------------------------------------

vim /nosql/7005/redis.conf

#--------------------------------------

port 7005

daemonize yes

pidfile /nosql/7005/redis.pid

loglevel notice

logfile "/nosql/7005/redis.log"

dbfilename dump.rdb

dir /nosql/7005

protected-mode no

cluster-enabled yes

cluster-config-file nodes.conf

cluster-node-timeout 5000

appendonly yes

#--------------------------------------
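The six configuration files above differ only in the port number, so they can also be generated in a loop. The following is a minimal sketch, not part of the original procedure: gen_redis_conf and BASE_DIR are hypothetical names, and the demo writes to a scratch directory unless BASE_DIR is set (for this article it would be /nosql).

```shell
# gen_redis_conf is a hypothetical helper: it writes <base_dir>/<port>/redis.conf
# with the same settings used in this article.
gen_redis_conf() {
    local base_dir="$1" port="$2"
    mkdir -p "${base_dir}/${port}"
    cat > "${base_dir}/${port}/redis.conf" <<EOF
port ${port}
daemonize yes
pidfile ${base_dir}/${port}/redis.pid
loglevel notice
logfile "${base_dir}/${port}/redis.log"
dbfilename dump.rdb
dir ${base_dir}/${port}
protected-mode no
cluster-enabled yes
cluster-config-file nodes.conf
cluster-node-timeout 5000
appendonly yes
EOF
}

# Assumption: BASE_DIR is a scratch directory by default; export BASE_DIR=/nosql
# to reproduce the layout used in this article.
BASE_DIR="${BASE_DIR:-$(mktemp -d)}"
for port in 7000 7001 7002 7003 7004 7005; do
    gen_redis_conf "$BASE_DIR" "$port"
done
echo "configs written under $BASE_DIR"
```

Generating the files from one template avoids copy-and-paste slips such as a log path that still points at another node's directory.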

Once the configuration files are in place, start the nodes:

redis-server /nosql/7000/redis.conf

redis-server /nosql/7001/redis.conf

redis-server /nosql/7002/redis.conf

redis-server /nosql/7003/redis.conf

redis-server /nosql/7004/redis.conf

redis-server /nosql/7005/redis.conf

Check:

[root@k8s-master1 26380]# ps -ef |grep redis

root 1513 1 0 10:59 ? 00:00:00 redis-server *:7000 [cluster]

root 1515 1 0 10:59 ? 00:00:00 redis-server *:7001 [cluster]

root 1517 1 0 10:59 ? 00:00:00 redis-server *:7002 [cluster]

root 1523 1 0 10:59 ? 00:00:00 redis-server *:7003 [cluster]

root 1527 1 0 10:59 ? 00:00:00 redis-server *:7004 [cluster]

root 1529 1 0 10:59 ? 00:00:00 redis-server *:7005 [cluster]

root 1859 1261 0 11:00 pts/0 00:00:00 grep --color=auto redis

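#Create the cluster from the six nodes:

redis-trib.rb create --replicas 1 127.0.0.1:7000 127.0.0.1:7001 \
127.0.0.1:7002 127.0.0.1:7003 127.0.0.1:7004 127.0.0.1:7005

You will be asked to confirm the proposed layout:

Can I set the above configuration? (type 'yes' to accept): yes #type yes to accept

Note: here the first three nodes become masters and the last three become replicas (slaves).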

#Query the cluster status:

Master node status:

redis-cli -p 7000 cluster nodes | grep master

[root@k8s-master1 26380]# redis-cli -p 7000 cluster nodes |grep master

f61f106f3cf969673a39fa0e0a795b7033166d0b 127.0.0.1:7001 master - 0 1600484746297 2 connected 5461-10922

21d16b3ccb51b444e188a2e4f344761ed5a8420f 127.0.0.1:7000 myself,master - 0 0 1 connected 0-5460

da1ede6d05d4c754c90690ab8613e0bc80b002ef 127.0.0.1:7002 master - 0 1600484746800 3 connected 10923-16383

The output confirms that the first three nodes are the masters.

Replica node status:

redis-cli -p 7000 cluster nodes | grep slave

[root@k8s-master1 26380]# redis-cli -p 7000 cluster nodes | grep slave

ccada0a70487b609f60e904260115610b7161714 127.0.0.1:7005 slave da1ede6d05d4c754c90690ab8613e0bc80b002ef 0 1600484813136 6 connected

da4be9425c3f8fda1ff7a54f9e63d346e99e9135 127.0.0.1:7003 slave 21d16b3ccb51b444e188a2e4f344761ed5a8420f 0 1600484812129 4 connected

e67d94be0dbbdd8ffcc02fcba05d0b058a349675 127.0.0.1:7004 slave f61f106f3cf969673a39fa0e0a795b7033166d0b 0 1600484814142 5 connected
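In the replica listing, the fourth column is the ID of the master each replica follows, so the pairing has to be looked up by hand. As a rough sketch, the lookup can be done with awk; replica_map is a hypothetical helper name, and the column layout is assumed to match the output shown in this article.

```shell
# replica_map is a hypothetical helper: it reads `cluster nodes` text on stdin
# and prints "replica-address -> master-address", one pair per line.
replica_map() {
    awk '
        { addr[$1] = $2 }                     # column 1: node ID, column 2: host:port
        $3 ~ /slave/ { master_of[$2] = $4 }   # column 4 of a replica line: its master ID
        END { for (r in master_of) print r " -> " addr[master_of[r]] }
    ' | sort
}

# Sample input copied from the output above. On a live cluster use:
#   redis-cli -p 7000 cluster nodes | replica_map
replica_map <<'EOF'
f61f106f3cf969673a39fa0e0a795b7033166d0b 127.0.0.1:7001 master - 0 1600484746297 2 connected 5461-10922
21d16b3ccb51b444e188a2e4f344761ed5a8420f 127.0.0.1:7000 myself,master - 0 0 1 connected 0-5460
da1ede6d05d4c754c90690ab8613e0bc80b002ef 127.0.0.1:7002 master - 0 1600484746800 3 connected 10923-16383
ccada0a70487b609f60e904260115610b7161714 127.0.0.1:7005 slave da1ede6d05d4c754c90690ab8613e0bc80b002ef 0 1600484813136 6 connected
da4be9425c3f8fda1ff7a54f9e63d346e99e9135 127.0.0.1:7003 slave 21d16b3ccb51b444e188a2e4f344761ed5a8420f 0 1600484812129 4 connected
e67d94be0dbbdd8ffcc02fcba05d0b058a349675 127.0.0.1:7004 slave f61f106f3cf969673a39fa0e0a795b7033166d0b 0 1600484814142 5 connected
EOF
```

For the sample data this pairs 7003 with 7000, 7004 with 7001, and 7005 with 7002.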

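Scaling out Redis Cluster with new nodes

Hash slots are a bit like the drive bays of a server: when the cluster is created, every slot is already assigned. A newly added node therefore does no work; it has only joined the cluster.

To put the new node to work, the slots have to be redistributed.

Scale-out walkthrough:

Add two instances to form one additional master/replica pair: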

mkdir /nosql/7006 -p

mkdir /nosql/7007 -p

cp /nosql/7000/redis.conf /nosql/7006/redis.conf

sed -i "s#7000#7006#g" /nosql/7006/redis.conf

cat /nosql/7006/redis.conf

#----------------------------

port 7006

daemonize yes

pidfile /nosql/7006/redis.pid

loglevel notice

logfile "/nosql/7006/redis.log"

dbfilename dump.rdb

dir /nosql/7006

protected-mode no

cluster-enabled yes

cluster-config-file nodes.conf

cluster-node-timeout 5000

appendonly yes

#----------------------------

cp /nosql/7000/redis.conf /nosql/7007/redis.conf

sed -i "s#7000#7007#g" /nosql/7007/redis.conf

cat /nosql/7007/redis.conf

#----------------------------

port 7007

daemonize yes

pidfile /nosql/7007/redis.pid

loglevel notice

logfile "/nosql/7007/redis.log"

dbfilename dump.rdb

dir /nosql/7007

protected-mode no

cluster-enabled yes

cluster-config-file nodes.conf

cluster-node-timeout 5000

appendonly yes

#----------------------------
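The cp and sed steps above can be wrapped in a small function when more than one node is added. This is only a sketch: clone_redis_conf and DEMO_DIR are hypothetical names, and the demo uses a two-line stand-in for the 7000 configuration in a scratch directory instead of touching /nosql.

```shell
# clone_redis_conf is a hypothetical helper: it copies the config of src_port
# and rewrites every occurrence of that port number to dst_port.
clone_redis_conf() {
    local base_dir="$1" src_port="$2" dst_port="$3"
    mkdir -p "${base_dir}/${dst_port}"
    sed "s#${src_port}#${dst_port}#g" "${base_dir}/${src_port}/redis.conf" \
        > "${base_dir}/${dst_port}/redis.conf"
}

# Demo in a scratch directory with a shortened stand-in for the 7000 config.
DEMO_DIR="$(mktemp -d)"
mkdir -p "$DEMO_DIR/7000"
printf 'port 7000\ndir /nosql/7000\n' > "$DEMO_DIR/7000/redis.conf"
for port in 7006 7007; do
    clone_redis_conf "$DEMO_DIR" 7000 "$port"
done
cat "$DEMO_DIR/7007/redis.conf"
```

Because the substitution rewrites every occurrence of the source port, it only works as intended while that number appears nowhere else in the file.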

Once both nodes are configured, start them:

redis-server /nosql/7006/redis.conf

redis-server /nosql/7007/redis.conf

Check the processes:

[root@k8s-master1 26380]# ps -ef|grep redis

root 1513 1 0 10:59 ? 00:00:02 redis-server *:7000 [cluster]

root 1515 1 0 10:59 ? 00:00:02 redis-server *:7001 [cluster]

root 1517 1 0 10:59 ? 00:00:02 redis-server *:7002 [cluster]

root 1523 1 0 10:59 ? 00:00:02 redis-server *:7003 [cluster]

root 1527 1 0 10:59 ? 00:00:02 redis-server *:7004 [cluster]

root 1529 1 0 10:59 ? 00:00:02 redis-server *:7005 [cluster]

root 8835 1 0 11:30 ? 00:00:00 redis-server *:7006 [cluster] #<------- new node started successfully

root 9102 1 0 11:31 ? 00:00:00 redis-server *:7007 [cluster] #<------- new node started successfully

root 9356 1261 0 11:32 pts/0 00:00:00 grep --color=auto redis

root 65882 1 0 Sep18 ? 00:01:17 redis-server *:6381

root 65893 1 0 Sep18 ? 00:01:17 redis-server *:6382

root 66500 1261 0 Sep18 pts/0 00:02:01 redis-sentinel *:26379 [sentinel]

root 67226 1261 0 Sep18 pts/0 00:02:03 redis-sentinel *:26380 [sentinel]

root 68930 1 0 Sep18 ? 00:01:16 redis-server *:6380

After startup, add the new nodes to the cluster and put them to work:

1. Add 7006 to the cluster as a new master node

redis-trib.rb add-node 127.0.0.1:7006 127.0.0.1:7000

2. Check the status

[root@k8s-master1 26380]# redis-cli -p 7000 cluster nodes|grep master

f61f106f3cf969673a39fa0e0a795b7033166d0b 127.0.0.1:7001 master - 0 1600486595271 2 connected 5461-10922

21d16b3ccb51b444e188a2e4f344761ed5a8420f 127.0.0.1:7000 myself,master - 0 0 1 connected 0-5460

f410ac95f665687533957933f9ddbc9d1665899a 127.0.0.1:7006 master - 0 1600486594769 0 connected <---- joined the cluster, but no hash slots assigned yet

da1ede6d05d4c754c90690ab8613e0bc80b002ef 127.0.0.1:7002 master - 0 1600486595773 3 connected 10923-16383

The slots are numbered 0 to 16383, so there are 16384 in total. There used to be 3 masters and now there are 4, so the slots have to be redistributed evenly: 16384/4.

[root@k8s-master1 ~]# echo 16384/4|bc

4096

So each master should end up with 4096 slots.
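The same calculation can be done with shell arithmetic instead of bc. A small sketch follows; slots_per_master is a hypothetical helper name, and integer division truncates when the total does not divide evenly.

```shell
# Redis Cluster has 16384 hash slots, numbered 0 to 16383.
TOTAL_SLOTS=16384

# slots_per_master is a hypothetical helper: even share of slots for N masters.
slots_per_master() {
    local masters="$1"
    echo $(( TOTAL_SLOTS / masters ))
}

slots_per_master 4   # four masters after adding 7006: prints 4096
slots_per_master 3   # the original three masters: prints 5461 (truncated)
```

With three masters the split is not exact, which is why the original ranges are 5461, 5462, and 5461 slots wide.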

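Each existing master now has to hand part of its slots over to the new master.

3. Reshard [reshard outside peak business hours to avoid data loss]

[root@k8s-master1 26380]# redis-trib.rb reshard 127.0.0.1:7000 #run the reshard

How many slots do you want to move (from 1 to 16384) 4096 <--- #enter the number of slots to move: 4096

What is the receiving node ID? f410ac95f665687533957933f9ddbc9d1665899a #the node that receives the slots; the new node is 7006, so enter the ID of 7006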

Please enter all the source node IDs.

Type 'all' to use all the nodes as source nodes for the hash slots.

Type 'done' once you entered all the source nodes IDs.

Source node #1: all #<-------------- all takes slots from every existing master

...

...

Moving slot 12287 from da1ede6d05d4c754c90690ab8613e0bc80b002ef

Do you want to proceed with the proposed reshard plan (yes/no)? yes #accept the proposed reshard plan? Just answer yes

Resharding is now complete. Check the status again:

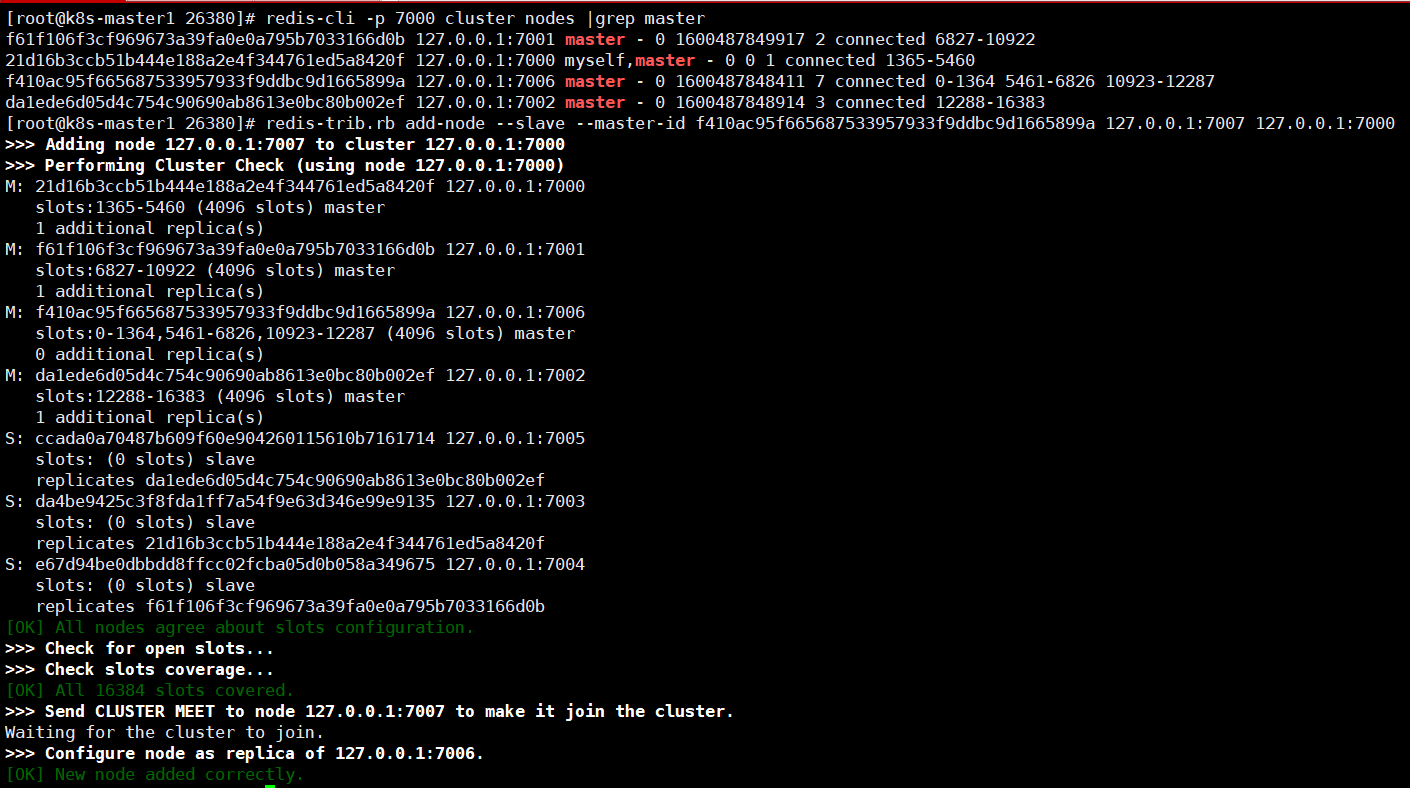

[root@k8s-master1 26380]# redis-cli -p 7000 cluster nodes |grep master

f61f106f3cf969673a39fa0e0a795b7033166d0b 127.0.0.1:7001 master - 0 1600487849917 2 connected 6827-10922

21d16b3ccb51b444e188a2e4f344761ed5a8420f 127.0.0.1:7000 myself,master - 0 0 1 connected 1365-5460

f410ac95f665687533957933f9ddbc9d1665899a 127.0.0.1:7006 master - 0 1600487848411 7 connected 0-1364 5461-6826 10923-12287

da1ede6d05d4c754c90690ab8613e0bc80b002ef 127.0.0.1:7002 master - 0 1600487848914 3 connected 12288-16383

127.0.0.1:7006 now holds the slots handed over by the other masters: connected 0-1364 5461-6826 10923-12287

This shows that resharding succeeded.
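The interactive answers given during the reshard can also be passed as options, which is convenient for scripting. The sketch below only prints the command instead of running it. It assumes the redis-trib.rb shipped with your Redis version accepts --from, --to, --slots and --yes for reshard (check redis-trib.rb help first); reshard_cmd is a hypothetical helper name.

```shell
# reshard_cmd is a hypothetical helper: it prints a non-interactive reshard
# command so it can be reviewed before being executed.
# Arguments: entry node (host:port), source ID or "all", receiving ID, slot count.
reshard_cmd() {
    local entry="$1" from="$2" to="$3" slots="$4"
    echo "redis-trib.rb reshard --from ${from} --to ${to} --slots ${slots} --yes ${entry}"
}

# Same choices as the interactive session: 4096 slots from all masters to 7006.
reshard_cmd 127.0.0.1:7000 all f410ac95f665687533957933f9ddbc9d1665899a 4096
```

Printing the command first gives a chance to double-check the receiving node ID, since a wrong ID moves slots to the wrong master.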

The node holding 0-1364 5461-6826 10923-12287 has the ID f410ac95f665687533957933f9ddbc9d1665899a; the replica added next is attached to this master.

4. The master has been added and resharded; now add its replica:

redis-trib.rb add-node --slave --master-id f410ac95f665687533957933f9ddbc9d1665899a 127.0.0.1:7007 127.0.0.1:7000

127.0.0.1:7007 is the address of the newly added node.

[root@k8s-master1]# redis-trib.rb add-node --slave --master-id f410ac95f665687533957933f9ddbc9d1665899a 127.0.0.1:7007 127.0.0.1:7000

>>> Adding node 127.0.0.1:7007 to cluster 127.0.0.1:7000

>>> Performing Cluster Check (using node 127.0.0.1:7000)

M: 21d16b3ccb51b444e188a2e4f344761ed5a8420f 127.0.0.1:7000

slots:1365-5460 (4096 slots) master

1 additional replica(s)

M: f61f106f3cf969673a39fa0e0a795b7033166d0b 127.0.0.1:7001

slots:6827-10922 (4096 slots) master

1 additional replica(s)

M: f410ac95f665687533957933f9ddbc9d1665899a 127.0.0.1:7006

slots:0-1364,5461-6826,10923-12287 (4096 slots) master

0 additional replica(s)

M: da1ede6d05d4c754c90690ab8613e0bc80b002ef 127.0.0.1:7002

slots:12288-16383 (4096 slots) master

1 additional replica(s)

S: ccada0a70487b609f60e904260115610b7161714 127.0.0.1:7005

slots: (0 slots) slave

replicates da1ede6d05d4c754c90690ab8613e0bc80b002ef

S: da4be9425c3f8fda1ff7a54f9e63d346e99e9135 127.0.0.1:7003

slots: (0 slots) slave

replicates 21d16b3ccb51b444e188a2e4f344761ed5a8420f

S: e67d94be0dbbdd8ffcc02fcba05d0b058a349675 127.0.0.1:7004

slots: (0 slots) slave

replicates f61f106f3cf969673a39fa0e0a795b7033166d0b

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

>>> Check slots coverage...

[OK] All 16384 slots covered.

>>> Send CLUSTER MEET to node 127.0.0.1:7007 to make it join the cluster.

Waiting for the cluster to join.

>>> Configure node as replica of 127.0.0.1:7006.

[OK] New node added correctly.

Removing nodes from Redis Cluster

#1. Move the slots off the node that is going to be removed

redis-trib.rb reshard 127.0.0.1:7000

#2. Reshard session for emptying one node

[root@k8s-master1 26380]# redis-trib.rb reshard 127.0.0.1:7000

>>> Performing Cluster Check (using node 127.0.0.1:7000)

M: 21d16b3ccb51b444e188a2e4f344761ed5a8420f 127.0.0.1:7000

slots:1365-5460 (4096 slots) master

1 additional replica(s)

S: dbb275031f92a1b3bd67b0e264076e584b8ab2f1 127.0.0.1:7007

slots: (0 slots) slave

replicates f410ac95f665687533957933f9ddbc9d1665899a

M: f61f106f3cf969673a39fa0e0a795b7033166d0b 127.0.0.1:7001

slots:6827-10922 (4096 slots) master

1 additional replica(s)

M: f410ac95f665687533957933f9ddbc9d1665899a 127.0.0.1:7006

slots:0-1364,5461-6826,10923-12287 (4096 slots) master

1 additional replica(s)

M: da1ede6d05d4c754c90690ab8613e0bc80b002ef 127.0.0.1:7002

slots:12288-16383 (4096 slots) master

1 additional replica(s)

S: ccada0a70487b609f60e904260115610b7161714 127.0.0.1:7005

slots: (0 slots) slave

replicates da1ede6d05d4c754c90690ab8613e0bc80b002ef

S: da4be9425c3f8fda1ff7a54f9e63d346e99e9135 127.0.0.1:7003

slots: (0 slots) slave

replicates 21d16b3ccb51b444e188a2e4f344761ed5a8420f

S: e67d94be0dbbdd8ffcc02fcba05d0b058a349675 127.0.0.1:7004

slots: (0 slots) slave

replicates f61f106f3cf969673a39fa0e0a795b7033166d0b

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

>>> Check slots coverage...

[OK] All 16384 slots covered.

How many slots do you want to move (from 1 to 16384)? 4096 #number of slots to move off the node; it received 4096 when it was added, so move 4096 away

What is the receiving node ID? 21d16b3ccb51b444e188a2e4f344761ed5a8420f #use the ID of node 7000 here

Source node #1: f410ac95f665687533957933f9ddbc9d1665899a # ID of the node being removed; to remove 7006, enter the ID of 7006

Source node #2:done # type done to finish the list of source nodes

Do you want to proceed with the proposed reshard plan (yes/no)? yes #type yes; this moves all of 7006's slots to 7000, leaving 7006 empty so that it can be removed
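A master should own no slots by the time it is removed, so it is worth counting its slots first. The sketch below sums the slot ranges on a node's line of cluster nodes output; slot_count is a hypothetical helper name, and the column layout is assumed to match the output shown in this article (slot ranges start in column 9).

```shell
# slot_count is a hypothetical helper: it reads `cluster nodes` text on stdin
# and prints how many slots the node with the given ID owns.
# It prints nothing if the ID is not found.
slot_count() {
    local node_id="$1"
    awk -v id="$node_id" '
        $1 == id {
            n = 0
            for (i = 9; i <= NF; i++) {
                if ($i ~ /^\[/) continue     # skip markers for slots being migrated
                split($i, r, "-")            # "a-b" is a range, "a" is a single slot
                n += (r[2] == "" ? 1 : r[2] - r[1] + 1)
            }
            print n
        }
    '
}

# 7006 after the scale-out reshard (line copied from the output above): prints 4096
slot_count f410ac95f665687533957933f9ddbc9d1665899a <<'EOF'
f410ac95f665687533957933f9ddbc9d1665899a 127.0.0.1:7006 master - 0 1600487848411 7 connected 0-1364 5461-6826 10923-12287
EOF

# 7006 right after add-node, before any slots were assigned: prints 0
slot_count f410ac95f665687533957933f9ddbc9d1665899a <<'EOF'
f410ac95f665687533957933f9ddbc9d1665899a 127.0.0.1:7006 master - 0 1600486594769 0 connected
EOF
```

On a live cluster the input would come from redis-cli -p 7000 cluster nodes; a result of 0 indicates the node has been emptied.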

#3. Remove the node from the cluster

[root@k8s-master1 26380]# redis-trib.rb del-node 127.0.0.1:7006 f410ac95f665687533957933f9ddbc9d1665899a

>>> Removing node f410ac95f665687533957933f9ddbc9d1665899a from cluster 127.0.0.1:7006

>>> Sending CLUSTER FORGET messages to the cluster...

>>> SHUTDOWN the node.

Note: node 7006 has now shut down, as the ps output shows:

[root@k8s-master1 26380]# ps -ef|grep redis

root 1513 1 0 10:59 ? 00:00:12 redis-server *:7000 [cluster]

root 1515 1 0 10:59 ? 00:00:10 redis-server *:7001 [cluster]

root 1517 1 0 10:59 ? 00:00:10 redis-server *:7002 [cluster]

root 1523 1 0 10:59 ? 00:00:06 redis-server *:7003 [cluster]

root 1527 1 0 10:59 ? 00:00:07 redis-server *:7004 [cluster]

root 1529 1 0 10:59 ? 00:00:06 redis-server *:7005 [cluster]

root 9102 1 0 11:31 ? 00:00:03 redis-server *:7007 [cluster]

The master has been removed; its replica still has to be removed:

[root@k8s-master1 26380]# redis-cli -p 7000 cluster nodes |grep slave

dbb275031f92a1b3bd67b0e264076e584b8ab2f1 127.0.0.1:7007 slave 21d16b3ccb51b444e188a2e4f344761ed5a8420f 0 1600490142525 8 connected

ccada0a70487b609f60e904260115610b7161714 127.0.0.1:7005 slave da1ede6d05d4c754c90690ab8613e0bc80b002ef 0 1600490143027 6 connected

da4be9425c3f8fda1ff7a54f9e63d346e99e9135 127.0.0.1:7003 slave 21d16b3ccb51b444e188a2e4f344761ed5a8420f 0 1600490142021 8 connected

e67d94be0dbbdd8ffcc02fcba05d0b058a349675 127.0.0.1:7004 slave f61f106f3cf969673a39fa0e0a795b7033166d0b 0 1600490143531 5 connected

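7007 is a replica and has to be removed as well [the node ID is in the first column; do not use the ID in the fourth column, which belongs to the master]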

[root@k8s-master1 26380]# redis-trib.rb del-node 127.0.0.1:7007 dbb275031f92a1b3bd67b0e264076e584b8ab2f1

>>> Removing node dbb275031f92a1b3bd67b0e264076e584b8ab2f1 from cluster 127.0.0.1:7007

>>> Sending CLUSTER FORGET messages to the cluster...

>>> SHUTDOWN the node.

Check:

1. Confirm that no stale entry is left among the replicas

[root@k8s-master1 26380]# redis-cli -p 7000 cluster nodes |grep slave

ccada0a70487b609f60e904260115610b7161714 127.0.0.1:7005 slave da1ede6d05d4c754c90690ab8613e0bc80b002ef 0 1600490541551 6 connected

da4be9425c3f8fda1ff7a54f9e63d346e99e9135 127.0.0.1:7003 slave 21d16b3ccb51b444e188a2e4f344761ed5a8420f 0 1600490540548 8 connected

e67d94be0dbbdd8ffcc02fcba05d0b058a349675 127.0.0.1:7004 slave f61f106f3cf969673a39fa0e0a795b7033166d0b 0 1600490541551 5 connected

2. Confirm that no stale entry is left among the masters

[root@k8s-master1 26380]# redis-cli -p 7000 cluster nodes |grep master

f61f106f3cf969673a39fa0e0a795b7033166d0b 127.0.0.1:7001 master - 0 1600490554614 2 connected 6827-10922

21d16b3ccb51b444e188a2e4f344761ed5a8420f 127.0.0.1:7000 myself,master - 0 0 8 connected 0-6826 10923-12287

da1ede6d05d4c754c90690ab8613e0bc80b002ef 127.0.0.1:7002 master - 0 1600490553609 3 connected 12288-16383

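Node 7006 and its replica 7007 have now both been removed from the cluster.

Summary of the node removal process:

1. redis-trib.rb reshard 127.0.0.1:7000 #start a reshard to empty the node

2. How many slots do you want to move (from 1 to 16384)? 4096 #number of slots to move off the node; it received 4096 when it was added, so move 4096 away

3. What is the receiving node ID? #ID of the node that receives the slots

4. Source node #1: #ID of the node being removed; to remove 7006, enter the ID of 7006

5. Source node #2: #type done to finish the list of source nodes

6. Do you want to proceed with the proposed reshard plan (yes/no)? yes #confirm the reshard plan

7. redis-trib.rb del-node 127.0.0.1:7006 f410ac95f665687533957933f9ddbc9d1665899a #remove the emptied master, then run del-node again for its replica (7007)

Note: both adding and removing Redis Cluster nodes require redistributing the slots.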
