Kubernetes Part 4: Deploying a K8S Cluster with kubeadm

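Kubernetes components:

(1) kube-apiserver: The Kubernetes API server validates and configures data for API objects, including pods, services, replication controllers, and others. It serves REST operations and is the front end to the cluster's shared state; all other components interact through it.

(2) kube-scheduler: The scheduler is a policy-rich, topology-aware, workload-specific component that significantly affects cluster availability, performance, and capacity. It has to take into account individual and collective resource requirements, quality-of-service requirements, hardware/software/policy constraints, affinity and anti-affinity specifications, data locality, inter-workload interference, deadlines, and so on. Workload-specific requirements can be exposed through the API where necessary.

(3) kube-controller-manager: The Controller Manager is the cluster's internal management and control center. It manages Nodes, Pod replicas, service endpoints (Endpoint), namespaces (Namespace), service accounts (ServiceAccount), and resource quotas (ResourceQuota). When a Node goes down unexpectedly, the Controller Manager detects it promptly and runs an automated repair process so that the cluster stays in its desired state.

(4) kube-proxy: The Kubernetes network proxy runs on each node. It reflects the Services defined in the Kubernetes API on that node and can do simple TCP and UDP stream forwarding, or round-robin TCP and UDP forwarding, across a set of backends. A Service must be created through the apiserver API to configure the proxy. In short, kube-proxy implements access to Kubernetes Services by maintaining network rules on the host and forwarding connections.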

Official documentation: https://k8smeetup.github.io/docs/admin/kube-proxy

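(5) kubelet: The primary node agent. It watches the pods assigned to its node and does the following:

- Reports the node's status to the master

- Accepts instructions and creates Docker containers in Pods

- Prepares the data volumes a Pod needs

- Returns the running status of pods

- Runs container health checks on the node

Official documentation: https://k8smeetup.github.io/docs/admin/kubelet/

(6) etcd: The default storage system used by Kubernetes. It holds all cluster data, so a backup plan for the etcd data is required.

Official documentation: https://github.com/etcd-io/etcd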

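Deploying a k8s cluster with kubeadm

Deployment architecture diagram

Environment preparation

Notes on the environment:

A test Kubernetes cluster can consist of one master host and one or more (preferably at least two) node hosts. These can be physical servers, virtual machines on a virtualization platform such as VMware, VirtualBox, or KVM, or even VPS hosts in a public cloud.

This test environment consists of three separate hosts, master, node1, and node2, each with 4 CPU cores and 4 GB of memory, all running CentOS 7.5 (1804). Each host also needs the following system setup:

(1) Keep the time on all nodes accurately synchronized using NTP;

(2) Resolve the hostnames of all nodes through DNS; with only a few hosts in a test environment, the hosts file works too;

(3) Stop the iptables or firewalld service on every node and make sure it is disabled at boot;

(4) Disable SELinux on every node;

(5) Disable all swap devices on every node;

(6) To use the ipvs proxy mode, also load the ipvs kernel modules on every node.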
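Item (6) does not show how the modules get loaded. A minimal sketch follows, assuming the module set commonly used for ipvs-mode kube-proxy on the CentOS 7 kernel; the module list, the function name, and the DRY_RUN switch are assumptions for illustration and are not part of the original setup. By default it only prints the commands; set DRY_RUN=0 and run as root to execute them.

```shell
#!/bin/sh
# Assumed module set for ipvs-mode kube-proxy on a CentOS 7 (3.10) kernel.
IPVS_MODULES="ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4"

# Print each modprobe command; run it for real only when DRY_RUN=0.
load_ipvs_modules() {
    for mod in $IPVS_MODULES; do
        if [ "${DRY_RUN:-1}" = "0" ]; then
            modprobe "$mod"
        else
            echo "modprobe $mod"
        fi
    done
}

load_ipvs_modules
```

After a real run, `lsmod | grep ip_vs` should list the loaded modules.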

master:192.168.7.100

node1:192.168.7.101

node2:192.168.7.102

Components to install

docker-ce kubeadm kubelet kubectl

Note: disable swap, SELinux, and the firewall

# setenforce 0 # takes effect immediately; to make it permanent, set SELINUX=disabled in /etc/sysconfig/selinux and reboot

# systemctl stop firewalld

# systemctl disable firewalld

# swapoff -a

Configure the hosts file on all three hosts

# vim /etc/hosts

192.168.7.100 master

192.168.7.101 node1

192.168.7.102 node2
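Editing the hosts file by hand on three machines is easy to get wrong, so the same entries can also be added with a small helper. This is only a sketch: the function name `add_host_entry` is made up for illustration, and it appends an entry only when the hostname is not already present, so it can be re-run safely.

```shell
#!/bin/sh
# Append "IP NAME" to a hosts file unless NAME is already present.
# Usage: add_host_entry FILE IP NAME
add_host_entry() {
    # Look for NAME as a whole field (column 2 or later) on a non-comment line.
    if ! awk -v name="$3" '$1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == name) found = 1 } END { exit !found }' "$1"; then
        printf '%s %s\n' "$2" "$3" >> "$1"
    fi
}

# Example (run as root on each host):
#   add_host_entry /etc/hosts 192.168.7.100 master
#   add_host_entry /etc/hosts 192.168.7.101 node1
#   add_host_entry /etc/hosts 192.168.7.102 node2
```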

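1. If swap is enabled, kubelet fails to start (this can be bypassed by setting --fail-swap-on to false), so turn swap off on every machine:

$ sudo swapoff -a

2. To keep the swap partition from being mounted again at boot, comment out the corresponding entry in /etc/fstab: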

$ sudo sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab
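The sed expression above only matches entries where `swap` is surrounded by plain spaces, and running it a second time adds another `#`. A slightly more defensive variant is sketched below; the function name `comment_swap_entries` is hypothetical, and it writes to stdout so the result can be reviewed before /etc/fstab is touched.

```shell
#!/bin/sh
# Print FILE with every active swap entry commented out.
# Lines that already start with '#' are left alone, so re-running is harmless.
comment_swap_entries() {
    sed -e '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$1"
}

# Example: review the change first, then apply it.
#   comment_swap_entries /etc/fstab | diff /etc/fstab -
#   comment_swap_entries /etc/fstab > /tmp/fstab.new && sudo cp /tmp/fstab.new /etc/fstab
```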

3. Synchronize the time

$ sudo yum -y install ntpdate

$ sudo ntpdate cn.pool.ntp.org

4. Enable netfilter processing of bridged traffic. This is usually on by default; enable it if it is not

[root@master ~]# vim /etc/sysctl.conf # edit the kernel parameters

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

[root@master ~]# sysctl -p # apply the kernel parameters

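Procedure

1. Install kubelet, docker, and kubeadm on the master and the nodes

2. Run kubeadm init on the master node

3. Verify the master

4. Join the nodes to the k8s master with kubeadm

5. Verify the nodes

6. Start containers and test access

About kubeadm

Official site: https://kubernetes.io/zh/docs/reference/setup-tools/kubeadm/kubeadm/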

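1. Install docker-ce on all three hosts

Repository file (Aliyun mirror): https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

1. Change to /etc/yum.repos.d and download the docker-ce yum repository file

[root@k8s-master ~]# cd /etc/yum.repos.d/

[root@k8s-master yum.repos.d]# wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

2. Install docker-ce

[root@k8s-master yum.repos.d]# yum install docker-ce -y

Since version 1.13, docker sets the default policy of the iptables FORWARD chain to DROP, which can break the packet forwarding that a Kubernetes cluster depends on. The FORWARD policy therefore has to be reset to ACCEPT after the docker service starts. To do this, edit /usr/lib/systemd/system/docker.service and add an ExecStartPost line to the [Service] section, next to the "ExecStart=/usr/bin/dockerd" line, as follows: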

[Service]

Type=notify

# the default is not to use systemd for cgroups because the delegate issues still

# exists and systemd currently does not support the cgroup feature set required

# for containers run by docker

# reset the default policy of the FORWARD chain to ACCEPT (systemd does not allow trailing comments, so this stays on its own line)

ExecStartPost=/usr/sbin/iptables -P FORWARD ACCEPT

ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock

ExecReload=/bin/kill -s HUP $MAINPID

TimeoutSec=0

RestartSec=2

Restart=always
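Changes made directly to the unit file under /usr/lib/systemd/system can be overwritten when the docker-ce package is upgraded. One alternative, offered here as a sketch and not as the method used in this walkthrough, is a systemd drop-in file. The function name and the drop-in file name `10-forward-accept.conf` are assumptions for illustration.

```shell
#!/bin/sh
# Write a drop-in that resets the FORWARD policy after dockerd has started.
# Usage: write_forward_dropin [DIR]
# DIR defaults to the drop-in directory systemd reads for docker.service.
write_forward_dropin() {
    dir="${1:-/etc/systemd/system/docker.service.d}"
    mkdir -p "$dir"
    cat > "$dir/10-forward-accept.conf" <<'EOF'
[Service]
ExecStartPost=/usr/sbin/iptables -P FORWARD ACCEPT
EOF
}

# Example (as root):
#   write_forward_dropin
#   systemctl daemon-reload && systemctl restart docker
```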

3. Start the docker service

# systemctl daemon-reload

# systemctl start docker

# systemctl enable docker

4. Configure a docker registry mirror, reload docker, and enable it at boot

[root@master ~]# mkdir -p /etc/docker

sudo tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": ["https://kganztio.mirror.aliyuncs.com"]

}

EOF

[root@master ~]# systemctl daemon-reload

[root@master ~]# systemctl restart docker

[root@master ~]# systemctl enable docker.service

2. Configure the Kubernetes yum repository on all three hosts and install the components

Aliyun mirror: https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

1. Configure the Kubernetes yum repository on all three hosts

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

2. Install kubelet, kubeadm, and kubectl on the master and on node1 and node2. A specific version is pinned here so that a kubeadm upgrade can be practiced later; in production you would normally install the latest version.

[root@master ~]# yum list all |grep "^kube" # list the available Kubernetes packages

kubeadm.x86_64 1.18.6-0 @kubernetes

kubectl.x86_64 1.18.6-0 @kubernetes

kubelet.x86_64 1.18.6-0 @kubernetes

kubernetes-cni.x86_64 0.8.6-0 @kubernetes

kubernetes.x86_64 1.5.2-0.7.git269f928.el7 extras

kubernetes-client.x86_64 1.5.2-0.7.git269f928.el7 extras

kubernetes-master.x86_64 1.5.2-0.7.git269f928.el7 extras

kubernetes-node.x86_64 1.5.2-0.7.git269f928.el7 extras

Install the pinned Kubernetes version

[root@k8s-master yum.repos.d]# yum -y install kubeadm-1.14.1 kubelet-1.14.1 kubectl-1.14.1

After installation, check the installed version

[root@master ~]# kubeadm version

kubeadm version: &version.Info{Major:"1", Minor:"14", GitVersion:"v1.14.1", GitCommit:"b7394102d6ef778017f2ca4046abbaa23b88c290", GitTreeState:"clean", BuildDate:"2019-04-08T17:08:49Z", GoVersion:"go1.12.1", Compiler:"gc", Platform:"linux/amd64"}

3. Edit the kubelet configuration file

[root@master ~]# vim /etc/sysconfig/kubelet

KUBELET_EXTRA_ARGS="--fail-swap-on=false"

KUBE_PROXY_MODE=ipvs

Enable kubelet at boot

[root@master ~]# systemctl enable kubelet.service

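kubeadm init options in detail

[root@master ~]# kubeadm init --help

--apiserver-advertise-address string # IP address the API Server advertises it is listening on

--apiserver-bind-port int32 # port the API Server binds to, default 6443

--apiserver-cert-extra-sans stringSlice # optional extra Subject Alternative Names (SANs) for the API Server serving certificate; can be IP addresses or DNS names

--cert-dir string # where certificates are stored, default /etc/kubernetes/pki

--config string # path to a kubeadm configuration file

--ignore-preflight-errors strings # preflight checks whose errors are downgraded to warnings, for example swap; "all" ignores every check

--image-repository string # container registry to pull the control-plane images from, default k8s.gcr.io

--kubernetes-version string # Kubernetes version to deploy, default stable-1

--node-name string # name of the node

--pod-network-cidr # IP address range for the pod network

--service-cidr # IP address range for Services

--service-dns-domain string # DNS domain for Services, default cluster.local

--skip-certificate-key-print # do not print the key used to encrypt the control-plane certificates

--skip-phases strings # phases to skip

--skip-token-print # do not print the bootstrap token

--token # bootstrap token to use

--token-ttl # token lifetime, default 24 hours; 0 means it never expires

--upload-certs # upload the control-plane certificates to the kubeadm-certs Secret

Global options

--log-file string # log file path

--log-file-max-size uint # maximum size of a log file in megabytes, default 1800; 0 means no limit

--rootfs # path to the real root filesystem of the host (an absolute path)

--skip-headers # if true, omit header prefixes in log messages

--skip-log-headers # if true, omit headers when opening log files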

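3. Initialize the cluster with kubeadm on the master

The required images can be pulled ahead of time with docker or pulled during initialization; in this walkthrough they are pulled during initialization. The following command lists the images you need.

[root@master ~]# kubeadm config images list --kubernetes-version v1.14.1

k8s.gcr.io/kube-apiserver:v1.14.1

k8s.gcr.io/kube-controller-manager:v1.14.1

k8s.gcr.io/kube-scheduler:v1.14.1

k8s.gcr.io/kube-proxy:v1.14.1

k8s.gcr.io/pause:3.1

k8s.gcr.io/etcd:3.3.10

k8s.gcr.io/coredns:1.3.1

These images are hosted outside China and download slowly there. Pulling the same images from the Aliyun mirror and retagging them is considerably faster.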

# docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.14.1

# docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.14.1

# docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.14.1

# docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.14.1

# docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1

# docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.3.10

# docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.3.1

# docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.14.1 k8s.gcr.io/kube-apiserver:v1.14.1

# docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.14.1 k8s.gcr.io/kube-scheduler:v1.14.1

# docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.14.1 k8s.gcr.io/kube-controller-manager:v1.14.1

# docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.14.1 k8s.gcr.io/kube-proxy:v1.14.1

# docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.3.10 k8s.gcr.io/etcd:3.3.10

# docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1 k8s.gcr.io/pause:3.1

# docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.3.1 k8s.gcr.io/coredns:1.3.1
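The fourteen pull and tag commands follow one pattern, so they can be generated from the image list. The sketch below prints the commands so they can be reviewed first; the variable and function names are made up for illustration, and the image list is copied from the `kubeadm config images list` output shown earlier.

```shell
#!/bin/sh
MIRROR="registry.cn-hangzhou.aliyuncs.com/google_containers"
TARGET="k8s.gcr.io"
# Image list as printed by `kubeadm config images list --kubernetes-version v1.14.1`.
IMAGES="kube-apiserver:v1.14.1 kube-controller-manager:v1.14.1 kube-scheduler:v1.14.1 kube-proxy:v1.14.1 pause:3.1 etcd:3.3.10 coredns:1.3.1"

# Print one pull command and one tag command per image.
mirror_commands() {
    for image in $IMAGES; do
        echo "docker pull $MIRROR/$image"
        echo "docker tag $MIRROR/$image $TARGET/$image"
    done
}

# Prints the commands only; use `mirror_commands | sh` to run them.
mirror_commands
```

When a different Kubernetes version is installed, only the `IMAGES` line needs to change.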

1. Run the initialization on the master host. Specify the version that matches the installed kubeadm and ignore the swap check; the images are downloaded automatically during initialization.

kubeadm init --kubernetes-version=v1.14.1 \
--pod-network-cidr=10.244.0.0/16 --service-cidr=10.96.0.0/12 \
--image-repository=registry.cn-hangzhou.aliyuncs.com/google_containers \
--ignore-preflight-errors=swap

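The options in the command are briefly explained below:

- --kubernetes-version specifies the version of Kubernetes to deploy; it has to match a version supported by the installed kubeadm;

- --pod-network-cidr specifies the network address range allocated to Pods. It should normally match the default of the network plugin you deploy (flannel, calico, and so on); 10.244.0.0/16 is the network flannel uses by default;

- --service-cidr specifies the network address range allocated to Services. It is managed by Kubernetes and defaults to 10.96.0.0/12;

- the last option, "--ignore-preflight-errors=swap", should only be used when swap has not been disabled.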
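The same settings can also be kept in a configuration file and passed with --config, which is easier to review and version than a long command line. The sketch below assumes the kubeadm.k8s.io/v1beta1 config API that goes with kubeadm 1.14 and uses the flannel default pod network described in the notes above; the function name and output file name are hypothetical.

```shell
#!/bin/sh
# Print a kubeadm ClusterConfiguration carrying the version, image repository,
# and network ranges discussed above (assumes the v1beta1 config API).
render_kubeadm_config() {
    cat <<'EOF'
apiVersion: kubeadm.k8s.io/v1beta1
kind: ClusterConfiguration
kubernetesVersion: v1.14.1
imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers
networking:
  podSubnet: 10.244.0.0/16
  serviceSubnet: 10.96.0.0/12
EOF
}

# Example:
#   render_kubeadm_config > kubeadm-config.yaml
#   kubeadm init --config kubeadm-config.yaml --ignore-preflight-errors=swap
render_kubeadm_config
```

Running `kubeadm config print init-defaults` shows the full set of fields for the installed kubeadm version, which is a safer starting point if the API version differs.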

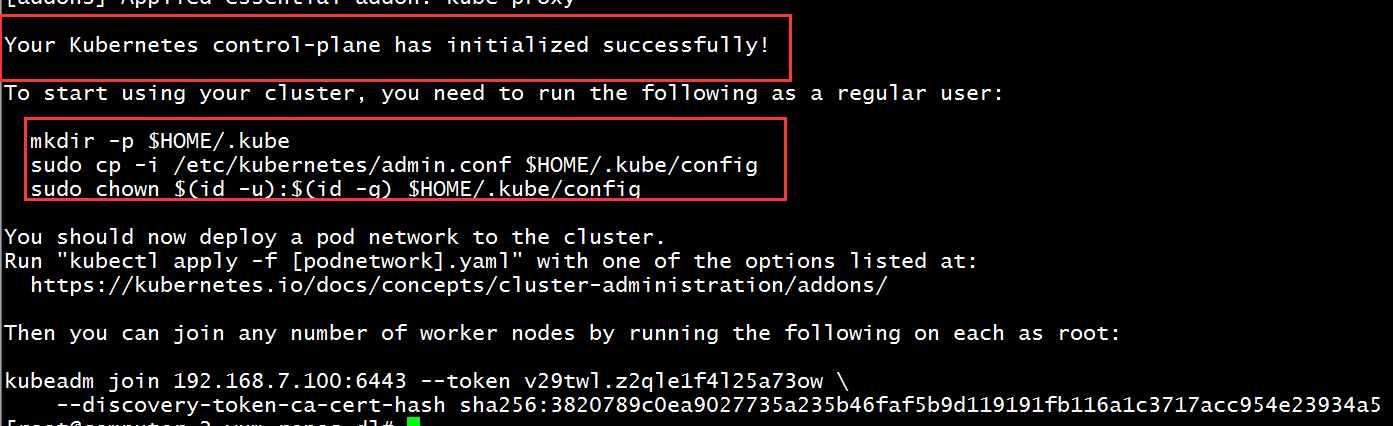

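2. Initialization has succeeded only when output like the following appears. Remember to turn swap off, otherwise initialization fails.

3. Set up the kubectl credentials on the master host: create the hidden directory, copy the admin kubeconfig into it, and fix its ownership. After that, kubectl commands can be run on the master node.

[root@computer-2 yum.repos.d]# mkdir -p $HOME/.kube

[root@computer-2 yum.repos.d]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@computer-2 yum.repos.d]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

4. On node1 and node2, run the join command printed in the last two lines of the kubeadm init output to add both nodes to the master. Note that the sample transcript below uses different parameters (v1.18.3, advertise address 192.168.7.101) from the command above; the kubeadm join command at its end is the part to copy.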

[root@master ~]# kubeadm init --apiserver-advertise-address=192.168.7.101 \

> --apiserver-bind-port=6443 --kubernetes-version=v1.18.3 \

> --pod-network-cidr=10.10.0.0/16 --service-cidr=10.20.0.0/16 \

> --service-dns-domain=linux36.local --image-repository=registry.cn-hangzhou.aliyuncs.com/google_containers \

> --ignore-preflight-errors=swap

W0720 22:37:02.504667 8243 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]

[init] Using Kubernetes version: v1.18.3

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.linux36.local] and IPs [10.20.0.1 192.168.7.101]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [master localhost] and IPs [192.168.7.101 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [master localhost] and IPs [192.168.7.101 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

W0720 22:37:17.024784 8243 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"

[control-plane] Creating static Pod manifest for "kube-scheduler"

W0720 22:37:17.028093 8243 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 26.505881 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.18" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node master as control-plane by adding the label "node-role.kubernetes.io/master=''"

[mark-control-plane] Marking the node master as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: 4a5y0s.jex4tzdhzj60t1ro

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

# Run these final two lines of the init output on node1 and node2 to add both nodes to the master and form the cluster

kubeadm join 192.168.7.101:6443 --token 4a5y0s.jex4tzdhzj60t1ro \
--discovery-token-ca-cert-hash sha256:5d5e7c28f5fba3cb42426e113f192d122e72514e5f7df05e1c2e1f082833b496
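If the init output was saved to a file, the join command can be pulled back out of it instead of being copied by hand. A minimal sketch, assuming the two-line layout shown in the transcript above; the function name `extract_join_command` and the log file name are hypothetical.

```shell
#!/bin/sh
# Print the `kubeadm join` command found in saved `kubeadm init` output,
# joined into a single line.
# Usage: extract_join_command FILE
extract_join_command() {
    grep -A 1 'kubeadm join ' "$1" |
        sed -e 's/[[:space:]]*\\$//' -e 's/^[[:space:]]*//' |
        tr '\n' ' ' |
        sed -e 's/[[:space:]]*$//'
}

# Example:
#   kubeadm init ... | tee kubeadm-init.log
#   extract_join_command kubeadm-init.log
```

The bootstrap token expires after 24 hours by default. Once it has expired, running `kubeadm token create --print-join-command` on the master prints a fresh join command.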

5. On the master, check the status of the master and the worker nodes. They are NotReady at this point because flannel has not been deployed yet

[root@computer-2 yum.repos.d]# kubectl get node

NAME STATUS ROLES AGE VERSION

computer-2 NotReady master 10m v1.14.1

node1 NotReady <none> 7s v1.14.1

node2 NotReady <none> 7s v1.14.1

4. Deploy flannel on the master

Project page: https://github.com/coreos/flannel/

If flannel cannot be downloaded, see: https://www.cnblogs.com/struggle-1216/p/13370413.html

# kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

List all docker images downloaded so far

[root@master ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy v1.14.1 20a2d7035165 9 months ago 82.1MB

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver v1.14.1 cfaa4ad74c37 9 months ago 210MB

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler v1.14.1 8931473d5bdb 9 months ago 81.6MB

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager v1.14.1 efb3887b411d 9 months ago 158MB

quay.io/coreos/flannel v0.11.0-amd64 ff281650a721 11 months ago 52.6MB

registry.cn-hangzhou.aliyuncs.com/google_containers/coredns 1.3.1 eb516548c180 12 months ago 40.3MB

registry.cn-hangzhou.aliyuncs.com/google_containers/etcd 3.3.10 2c4adeb21b4f 13 months ago 258MB

registry.cn-hangzhou.aliyuncs.com/google_containers/pause 3.1 da86e6ba6ca1 2 years ago 742kB

If the flannel image above was not pulled, look up the image name in the manifest at https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml and pull it manually

[root@master ~]# docker pull quay.io/coreos/flannel:v0.11.0-amd64

5. Pull the coredns:1.3.1 image on node1 and node2

A custom DNS domain was set when the master was initialized, and node1 and node2 do not have the coredns image, so it has to be pulled manually onto both nodes

[root@node1 yum.repos.d]# docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.3.1

[root@node2 yum.repos.d]# docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.3.1

Check the images on the two nodes; both nodes now have the same set

[root@node1 yum.repos.d]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy v1.14.1 20a2d7035165 9 months ago 82.1MB

quay.io/coreos/flannel v0.11.0-amd64 ff281650a721 11 months ago 52.6MB

registry.cn-hangzhou.aliyuncs.com/google_containers/coredns 1.3.1 eb516548c180 12 months ago 40.3MB

registry.cn-hangzhou.aliyuncs.com/google_containers/pause 3.1 da86e6ba6ca1 2 years ago 742kB

6. Verify the cluster from the master node

(1) All three nodes are now in the Ready state

[root@master ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

computer-2 Ready master 109m v1.14.1

node1 Ready <none> 54m v1.14.1

node2 Ready <none> 19m v1.14.1
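Nodes can take a little while to move from NotReady to Ready after the network plugin is deployed. Instead of re-running the command by hand, the table can be filtered with a small helper. This is a sketch; the function name `not_ready_nodes` is hypothetical, and it relies only on the column layout shown above.

```shell
#!/bin/sh
# Read `kubectl get node` output on stdin and print the names of nodes
# whose STATUS column is not exactly "Ready".
not_ready_nodes() {
    awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Example: poll until every node reports Ready.
#   while [ -n "$(kubectl get node | not_ready_nodes)" ]; do sleep 5; done
```

A cordoned node shows a status such as "Ready,SchedulingDisabled" and is reported by this filter as well, which is usually what you want before scheduling test pods.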

(2) Check the health of the control-plane components

[root@master ~]# kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy {"health":"true"}

(3) List the pods in all namespaces; all of them are in the Running state

[root@master ~]# kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-d5947d4b-8l45w 1/1 Running 1 110m

kube-system coredns-d5947d4b-lnk5x 1/1 Running 1 110m

kube-system etcd-computer-2 1/1 Running 0 109m

kube-system kube-apiserver-computer-2 1/1 Running 1 109m

kube-system kube-controller-manager-computer-2 1/1 Running 1 110m

kube-system kube-flannel-ds-amd64-r9x8c 1/1 Running 0 21m

kube-system kube-flannel-ds-amd64-smw4z 1/1 Running 0 50m

kube-system kube-flannel-ds-amd64-svb24 1/1 Running 0 50m

kube-system kube-proxy-dgjlx 1/1 Running 0 110m

kube-system kube-proxy-hkpdn 1/1 Running 0 56m

kube-system kube-proxy-mvddn 1/1 Running 0 21m

kube-system kube-scheduler-computer-2 1/1 Running 1 110m

(4) List the system pods running on the two worker nodes; all of them are in the Running state

[root@master ~]# kubectl get pods -n kube-system -o wide |grep node

kube-flannel-ds-amd64-r9x8c 1/1 Running 0 21m 192.168.7.102 node2 <none> <none>

kube-flannel-ds-amd64-svb24 1/1 Running 0 50m 192.168.7.101 node1 <none> <none>

kube-proxy-hkpdn 1/1 Running 0 56m 192.168.7.101 node1 <none> <none>

kube-proxy-mvddn 1/1 Running 0 21m 192.168.7.102 node2 <none> <none>

(5) Show detailed information for every system pod, including its IP and node

[root@master ~]# kubectl get pods -o wide -n kube-system

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

coredns-d5947d4b-8l45w 1/1 Running 1 125m 10.10.0.2 computer-2 <none> <none>

coredns-d5947d4b-lnk5x 1/1 Running 1 125m 10.10.0.3 computer-2 <none> <none>

etcd-computer-2 1/1 Running 0 124m 192.168.7.100 computer-2 <none> <none>

kube-apiserver-computer-2 1/1 Running 1 124m 192.168.7.100 computer-2 <none> <none>

kube-controller-manager-computer-2 1/1 Running 2 124m 192.168.7.100 computer-2 <none> <none>

kube-flannel-ds-amd64-r9x8c 1/1 Running 0 35m 192.168.7.102 node2 <none> <none>

kube-flannel-ds-amd64-smw4z 1/1 Running 0 64m 192.168.7.100 computer-2 <none> <none>

kube-flannel-ds-amd64-svb24 1/1 Running 0 64m 192.168.7.101 node1 <none> <none>

kube-proxy-dgjlx 1/1 Running 0 125m 192.168.7.100 computer-2 <none> <none>

kube-proxy-hkpdn 1/1 Running 0 70m 192.168.7.101 node1 <none> <none>

kube-proxy-mvddn 1/1 Running 0 35m 192.168.7.102 node2 <none> <none>

kube-scheduler-computer-2 1/1 Running 2 124m 192.168.7.100 computer-2 <none> <none>

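7. Create containers to test the cluster

Create four pods, then enter one of them to test. Pods on different subnets can ping each other across hosts

[root@master ~]# kubectl run net-test1 --image=alpine --replicas=4 sleep 360000 # --replicas=4 creates four pods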

[root@master ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

net-test1-7c9c4b94d6-46mvg 1/1 Running 0 79s 10.10.2.2 node2 <none> <none>

net-test1-7c9c4b94d6-jl7kv 1/1 Running 0 79s 10.10.1.3 node1 <none> <none>

net-test1-7c9c4b94d6-lq45j 1/1 Running 0 79s 10.10.2.3 node2 <none> <none>

net-test1-7c9c4b94d6-x7qmd 1/1 Running 0 79s 10.10.1.2 node1 <none> <none>

[root@master ~]# kubectl exec -it net-test1-7c9c4b94d6-46mvg sh

/ # ip a # show this pod's IP addresses

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

3: eth0@if6: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1450 qdisc noqueue state UP

link/ether a2:b1:a4:32:6c:5d brd ff:ff:ff:ff:ff:ff

inet 10.10.2.2/24 scope global eth0 # the IP address is 10.10.2.2

valid_lft forever preferred_lft forever

/ # ping 10.10.1.3 # ping an address on a different subnet; it is reachable

PING 10.10.1.3 (10.10.1.3): 56 data bytes

64 bytes from 10.10.1.3: seq=0 ttl=62 time=32.475 ms

64 bytes from 10.10.1.3: seq=1 ttl=62 time=81.510 ms

^C

--- 10.10.1.3 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 32.475/56.992/81.510 ms

8. Create and delete a namespace

[root@master ~]# kubectl get ns # ns is short for namespace

NAME STATUS AGE

default Active 11h

kube-node-lease Active 11h

kube-public Active 11h

kube-system Active 11h

[root@master ~]# kubectl create namespace develop

namespace/develop created

[root@master ~]# kubectl get ns

NAME STATUS AGE

default Active 11h

develop Active 4s

kube-node-lease Active 11h

kube-public Active 11h

kube-system Active 11h

[root@master ~]# kubectl delete namespace develop

namespace "develop" deleted

[root@master ~]# kubectl get ns

NAME STATUS AGE

default Active 11h

kube-node-lease Active 11h

kube-public Active 11h

kube-system Active 11h

9. Query an object in YAML and JSON format

[root@master ~]# kubectl get ns/default -o yaml # output in YAML format

apiVersion: v1

kind: Namespace

metadata:

  creationTimestamp: "2020-07-20T14:37:43Z"

  managedFields:

  - apiVersion: v1

    fieldsType: FieldsV1

    fieldsV1:

      f:status:

        f:phase: {}

    manager: kube-apiserver

    operation: Update

    time: "2020-07-20T14:37:43Z"

  name: default

  resourceVersion: "152"

  selfLink: /api/v1/namespaces/default

  uid: 95da281c-b699-41c5-9fb3-01b38b2fdbe9

spec:

  finalizers:

  - kubernetes

status:

  phase: Active

[root@master ~]# kubectl get ns/default -o json # output in JSON format

{

"apiVersion": "v1",

"kind": "Namespace",

"metadata": {

"creationTimestamp": "2020-07-20T14:37:43Z",

"managedFields": [

{

"apiVersion": "v1",

"fieldsType": "FieldsV1",

"fieldsV1": {

"f:status": {

"f:phase": {}

}

},

"manager": "kube-apiserver",

"operation": "Update",

"time": "2020-07-20T14:37:43Z"

}

],

"name": "default",

"resourceVersion": "152",

"selfLink": "/api/v1/namespaces/default",

"uid": "95da281c-b699-41c5-9fb3-01b38b2fdbe9"

},

"spec": {

"finalizers": [

"kubernetes"

]

},

"status": {

"phase": "Active"

}

}

At this point the Kubernetes cluster is installed and running; kubeadm handled all of the underlying work for us.