Implementing softmax and cross-entropy in Python and PyTorch

The softmax activation function

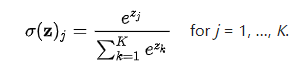

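The softmax activation function maps a vector of K elements to values in the interval (0, 1) that sum to 1, so the outputs can be interpreted as probabilities.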

Python:

    import numpy as np

    def softmax(x):
        return np.exp(x) / np.sum(np.exp(x), axis=0)

    x = np.array([0.1, 0.9, 3.0])
    output = softmax(x)
    print('Softmax in Python :', output)
    # Softmax in Python : [0.04672966 0.10399876 0.84927158]

PyTorch:

    import torch

    x = torch.tensor([0.1, 0.9, 3.0], dtype=torch.float64)
    output = torch.softmax(x, dim=0)
    print(output)
    # tensor([0.0467, 0.1040, 0.8493], dtype=torch.float64)
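Exponentiating large inputs directly can overflow in floating point. A common remedy, sketched here on the assumption that inputs may be batched and normalized along the last axis, is to subtract the maximum before exponentiating. The result is unchanged because softmax is invariant to adding a constant to every element. The name `stable_softmax` and its `axis` parameter are illustrative and do not come from the original post.

```python
import numpy as np

def stable_softmax(x, axis=-1):
    """Softmax that subtracts the max along `axis` to avoid overflow."""
    x = np.asarray(x, dtype=np.float64)
    shifted = x - np.max(x, axis=axis, keepdims=True)  # largest entry becomes 0
    exp = np.exp(shifted)
    return exp / np.sum(exp, axis=axis, keepdims=True)

probs = stable_softmax([0.1, 0.9, 3.0])
print(probs)  # three probabilities that sum to 1

# Inputs this large would overflow if passed to np.exp directly.
big = stable_softmax([1000.0, 1001.0, 1002.0])
print(big)    # finite values, identical to stable_softmax([0.0, 1.0, 2.0])
```

Shifting by the maximum means the largest exponent is always `exp(0) = 1`, so the denominator can never overflow.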

cross-entropy

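Cross-entropy is a common loss for classification tasks. It measures the difference between two probability distributions, and it usually takes the true distribution and the predicted distribution as inputs.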
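For reference, one standard way to write this loss for a batch is given here. It assumes a one-hot true distribution $y$ and predicted probabilities $\hat{y}$ over $K$ classes for $N$ samples; the index names $i$ and $k$ are chosen for illustration.

$$
CE(y, \hat{y}) = -\frac{1}{N} \sum_{i=1}^{N} \sum_{k=1}^{K} y_{ik} \log \hat{y}_{ik}
$$

With a one-hot $y$, only the term for the true class survives in the inner sum, so the loss for a single sample reduces to the negative log of the probability assigned to the correct class.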


    import numpy as np

    # Cross-entropy loss for a batch: y and y_pre have shape (N, K)
    def cross_entropy(y, y_pre):
        N = y_pre.shape[0]  # number of samples
        ce = -np.sum(y * np.log(y_pre)) / N
        return ce

    y = np.array([[0, 0, 1]])  # one sample, true class index 2
    y_pre_good = np.array([[0.1, 0.1, 0.8]])
    y_pre_bad = np.array([[0.8, 0.1, 0.1]])
    l1 = cross_entropy(y, y_pre_good)
    l2 = cross_entropy(y, y_pre_bad)
    print(f'Loss 1: {l1:.4f}')  # Loss 1: 0.2231
    print(f'Loss 2: {l2:.4f}')  # Loss 2: 2.3026

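PyTorch:

    import torch
    import torch.nn as nn

    # nn.CrossEntropyLoss expects raw logits and integer class indices
    loss = nn.CrossEntropyLoss()
    y = torch.tensor([2])
    y_pre_good = torch.tensor([[1.0, 1.1, 2.5]])
    y_pre_bad = torch.tensor([[3.2, 0.2, 0.9]])
    l1 = loss(y_pre_good, y)
    l2 = loss(y_pre_bad, y)
    print(l1.item())  # approx. 0.3851
    print(l2.item())  # approx. 2.4398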
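With its default settings, `nn.CrossEntropyLoss` takes raw logits, applies a log-softmax, and averages the negative log-likelihood of the target class over the batch. The NumPy sketch below reproduces that computation for the two PyTorch examples as a sanity check. `cross_entropy_from_logits` is a hypothetical helper name, and options such as class weights, `ignore_index` and label smoothing are not handled.

```python
import numpy as np

def cross_entropy_from_logits(logits, targets):
    """Mean negative log-likelihood of integer class `targets` given raw `logits`."""
    logits = np.asarray(logits, dtype=np.float64)
    targets = np.asarray(targets)
    # Stable log-softmax: subtract the row max, then subtract the log-sum-exp.
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    # Select the log-probability of the true class in each row, then average.
    picked = log_probs[np.arange(len(targets)), targets]
    return -picked.mean()

l1 = cross_entropy_from_logits([[1.0, 1.1, 2.5]], [2])
l2 = cross_entropy_from_logits([[3.2, 0.2, 0.9]], [2])
print(f"{l1:.4f} {l2:.4f}")  # 0.3851 2.4398
```

Because the softmax is applied inside the loss, the model's outputs should be passed in as logits rather than as already-normalized probabilities.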

Reference: https://androidkt.com/implement-softmax-and-cross-entropy-in-python-and-pytorch/

