Debugging "Error Domain=AVFoundationErrorDomain Code=-11841"


Background

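While refactoring the video output module recently, I kept hitting the same failure during debugging: calling startReading on the AVAssetReader always returned NO. The code: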

```objc
if ([reader startReading] == NO)
{
    NSLog(@"Error reading from file at URL: %@", self.url);
    return;
}
```

The reader is created as shown below. The code is based mostly on GPUImageMovieComposition.

```objc
- (AVAssetReader *)createAssetReader
{
    NSError *error = nil;
    AVAssetReader *assetReader = [AVAssetReader assetReaderWithAsset:self.compositon error:&error];
    if (!assetReader) {
        NSLog(@"Failed to create asset reader: %@", error);
        return nil;   // startReading on nil returns NO, so the caller's check still fires
    }

    // Video: render the composition's video tracks through self.videoComposition.
    // An invalid videoComposition is not reported here; it only surfaces when startReading fails.
    NSDictionary *outputSettings = @{(id)kCVPixelBufferPixelFormatTypeKey: @(kCVPixelFormatType_420YpCbCr8BiPlanarFullRange)};
    AVAssetReaderVideoCompositionOutput *readerVideoOutput =
        [AVAssetReaderVideoCompositionOutput assetReaderVideoCompositionOutputWithVideoTracks:[_compositon tracksWithMediaType:AVMediaTypeVideo]
                                                                                videoSettings:outputSettings];
    readerVideoOutput.videoComposition = self.videoComposition;
    readerVideoOutput.alwaysCopiesSampleData = NO;
    if ([assetReader canAddOutput:readerVideoOutput]) {
        [assetReader addOutput:readerVideoOutput];
    }

    // Audio: mix all audio tracks down to 16-bit little-endian integer PCM
    NSArray *audioTracks = [_compositon tracksWithMediaType:AVMediaTypeAudio];
    BOOL shouldRecordAudioTrack = ([audioTracks count] > 0) && (self.audioEncodingTarget != nil);
    if (shouldRecordAudioTrack) {
        [self.audioEncodingTarget setShouldInvalidateAudioSampleWhenDone:YES];
        NSDictionary *audioReaderSetting = @{AVFormatIDKey: @(kAudioFormatLinearPCM),
                                             AVLinearPCMIsBigEndianKey: @(NO),
                                             AVLinearPCMIsFloatKey: @(NO),
                                             AVLinearPCMBitDepthKey: @(16)};
        AVAssetReaderAudioMixOutput *readerAudioOutput =
            [AVAssetReaderAudioMixOutput assetReaderAudioMixOutputWithAudioTracks:audioTracks audioSettings:audioReaderSetting];
        readerAudioOutput.audioMix = self.audioMix;
        readerAudioOutput.alwaysCopiesSampleData = NO;
        if ([assetReader canAddOutput:readerAudioOutput]) {
            [assetReader addOutput:readerAudioOutput];
        }
    }

    return assetReader;
}
```

I set a breakpoint at that check and inspected the reader's status and error, which showed Error Domain=AVFoundationErrorDomain Code=-11841. The documentation describes this code as follows:

AVErrorInvalidVideoComposition = -11841

You attempted to perform a video composition operation that is not supported.

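So the culprit had to be the videoComposition assigned in readerVideoOutput.videoComposition = self.videoComposition;. AVMutableVideoComposition plays a central role in video editing: it describes how the video tracks in an AVComposition are combined. My small demo blends two videos, so it needs one. Configuring this class is a little involved and easy to get wrong, especially the timeRange property of AVMutableVideoCompositionInstruction, and that property was exactly what caused this error.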

Tracking it down

I immediately called the following validation method on the AVMutableVideoComposition:

```objc
BOOL isValid = [self.videoComposition isValidForAsset:self.compositon
                                            timeRange:CMTimeRangeMake(kCMTimeZero, self.compositon.duration)
                                   validationDelegate:self];
```

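isValid came back NO, which confirmed that the videoComposition was at fault. I then implemented the four methods of the AVVideoCompositionValidationHandling protocol to log what the validator reports. This protocol is very considerate; Apple presumably provided it because this is such an error-prone spot. The code: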

```objc
// Each callback logs what it found and returns YES so validation keeps going
- (BOOL)videoComposition:(AVVideoComposition *)videoComposition shouldContinueValidatingAfterFindingInvalidValueForKey:(NSString *)key
{
    NSLog(@"%s===%@", __func__, key);
    return YES;
}

- (BOOL)videoComposition:(AVVideoComposition *)videoComposition shouldContinueValidatingAfterFindingEmptyTimeRange:(CMTimeRange)timeRange
{
    NSLog(@"%s===%@", __func__, CFBridgingRelease(CMTimeRangeCopyDescription(kCFAllocatorDefault, timeRange)));
    return YES;
}

- (BOOL)videoComposition:(AVVideoComposition *)videoComposition shouldContinueValidatingAfterFindingInvalidTimeRangeInInstruction:(id<AVVideoCompositionInstruction>)videoCompositionInstruction
{
    NSLog(@"%s===%@", __func__, videoCompositionInstruction);
    return YES;
}

- (BOOL)videoComposition:(AVVideoComposition *)videoComposition shouldContinueValidatingAfterFindingInvalidTrackIDInInstruction:(id<AVVideoCompositionInstruction>)videoCompositionInstruction layerInstruction:(AVVideoCompositionLayerInstruction *)layerInstruction asset:(AVAsset *)asset
{
    NSLog(@"%s===%@===%@", __func__, layerInstruction, asset);
    return YES;
}
```

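After rerunning, only the third method printed anything, so the problem was an instruction's timeRange. I went back over how the two instructions were set up, and they still looked correct. The code: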

```objc
- (void)buildCompositionWithAssets:(NSArray *)assetsArray
{
    for (AVURLAsset *asset in assetsArray) {
        AVAssetTrack *videoTrack = [asset tracksWithMediaType:AVMediaTypeVideo].firstObject;
        AVAssetTrack *audioTrack = [asset tracksWithMediaType:AVMediaTypeAudio].firstObject;
        NSError *error = nil;

        // One composition video track per clip, inserted at the running offset
        AVMutableCompositionTrack *videoT = [self.avcompostions.mutableComps addMutableTrackWithMediaType:AVMediaTypeVideo preferredTrackID:kCMPersistentTrackID_Invalid];
        [videoT insertTimeRange:videoTrack.timeRange ofTrack:videoTrack atTime:self.offsetTime error:&error];
        NSAssert(!error, @"insert error = %@", error);

        // Audio is optional; indexing audioTracks[0] would crash on a silent clip
        if (audioTrack) {
            AVMutableCompositionTrack *audioT = [self.avcompostions.mutableComps addMutableTrackWithMediaType:AVMediaTypeAudio preferredTrackID:kCMPersistentTrackID_Invalid];
            [audioT insertTimeRange:audioTrack.timeRange ofTrack:audioTrack atTime:self.offsetTime error:nil];
        }

        // One instruction per clip, showing only that clip's composition track
        AVMutableVideoCompositionInstruction *instruction = [AVMutableVideoCompositionInstruction videoCompositionInstruction];
        AVMutableVideoCompositionLayerInstruction *layerInstruction = [AVMutableVideoCompositionLayerInstruction videoCompositionLayerInstructionWithAssetTrack:videoT];
        instruction.layerInstructions = @[layerInstruction];
        instruction.timeRange = CMTimeRangeMake(self.offsetTime, asset.duration);
        [self.instrucionArray addObject:instruction];

        self.offsetTime = CMTimeAdd(self.offsetTime, asset.duration);
    }
}
```

The array holds two fully loaded AVAsset objects. The assets are loaded like this:

```objc
- (void)loadAssetFromPath:(NSArray *)paths
{
    NSMutableArray *assetsArray = [NSMutableArray arrayWithCapacity:paths.count];
    dispatch_group_t dispatchGroup = dispatch_group_create();
    for (NSString *path in paths) {
        // First look in the cache
        AVAsset *asset = [self.assetsCache objectForKey:path];
        if (!asset) {
            // Request precise duration and timing (may take longer to load)
            NSDictionary *inputOptions = @{AVURLAssetPreferPreciseDurationAndTimingKey: @YES};
            asset = [AVURLAsset URLAssetWithURL:[NSURL fileURLWithPath:path] options:inputOptions];
            NSAssert(asset != nil, @"Can't create asset from path %@", path);
            NSArray *loadKeys = @[@"tracks", @"duration", @"composable"];
            [self loadAsset:asset withKeys:loadKeys usingDispatchGroup:dispatchGroup];
            // Cache the asset
            [self.assetsCache setObject:asset forKey:path];
        }
        [assetsArray addObject:asset];
    }
    // Runs on the main queue once every pending load has left the group
    dispatch_group_notify(dispatchGroup, dispatch_get_main_queue(), ^{
        if (self.assetLoadBlock) {
            self.assetLoadBlock(assetsArray);
        }
    });
}

- (void)loadAsset:(AVAsset *)asset withKeys:(NSArray *)assetKeysToLoad usingDispatchGroup:(dispatch_group_t)dispatchGroup
{
    dispatch_group_enter(dispatchGroup);
    [asset loadValuesAsynchronouslyForKeys:assetKeysToLoad completionHandler:^{
        for (NSString *key in assetKeysToLoad) {
            NSError *error = nil;
            if ([asset statusOfValueForKey:key error:&error] == AVKeyValueStatusFailed) {
                NSLog(@"Key value loading failed for key:%@ with error: %@", key, error);
                goto bail;
            }
        }
        if (![asset isComposable]) {
            NSLog(@"Asset is not composable");
            goto bail;
        }
    bail:
        // Always balance dispatch_group_enter, success or failure
        dispatch_group_leave(dispatchGroup);
    }];
}
```

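In principle nothing should have gone wrong, but it did. When I printed the two AVMutableVideoCompositionInstruction objects, their timeRanges did in fact overlap. The timeRange of each AVMutableVideoCompositionInstruction must correspond to the timeRange of the segment inserted into the AVMutableCompositionTrack. The developer documentation says the third delegate method is invoked: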

to report a video composition instruction with a timeRange that's invalid, that overlaps with the timeRange of a prior instruction, or that contains times earlier than the timeRange of a prior instruction
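The rule in that quote can be checked mechanically. Below is a minimal C sketch of the check. It is not AVFoundation's actual validator, and several choices here are assumptions. Times are integer ticks in one shared timescale, whereas a real CMTime carries its own timescale. Treating a negative start or a non-positive duration as "invalid" is also an assumption. The function name first_invalid_instruction is hypothetical. The sketch does not check for gaps; the validator reports those separately through the shouldContinueValidatingAfterFindingEmptyTimeRange: callback.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical stand-in for CMTimeRange: all times are integer ticks
   of one shared timescale (e.g. 1000 ticks per second). */
typedef struct {
    int64_t start;     /* first tick covered by the instruction */
    int64_t duration;  /* number of ticks covered */
} TickRange;

/* Returns the index of the first instruction range that breaks the quoted
   rule (invalid, overlapping a prior range, or containing times earlier
   than a prior range), or -1 if every range passes. */
long first_invalid_instruction(const TickRange *ranges, size_t count) {
    assert(ranges != NULL || count == 0);
    int64_t prior_end = INT64_MIN;  /* end tick of the previous instruction */
    for (size_t i = 0; i < count; i++) {
        const TickRange r = ranges[i];
        if (r.start < 0 || r.duration <= 0) {
            return (long)i;  /* assumed "invalid" range */
        }
        if (r.start < prior_end) {
            return (long)i;  /* overlaps, or starts before, the prior range */
        }
        prior_end = r.start + r.duration;
    }
    return -1;
}
```

For example, the ranges {0, 59867} followed by {59867, 59867} pass. A hypothetical first range of 59885 ticks followed by one starting at tick 59867 is flagged at index 1.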

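When inserting, I used videoTrack.timeRange. When setting the instruction with instruction.timeRange = CMTimeRangeMake(self.offsetTime, asset.duration); I used asset.duration instead. Surprisingly, the two are not the same: asset.duration is {59885/1000 = 59.885}, while videoTrack.timeRange is {{0/1000 = 0.000}, {59867/1000 = 59.867}}. The video track is 18 ms shorter.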
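To see concretely how far the two numbers drift apart, the sketch below replays the bookkeeping of buildCompositionWithAssets: in plain C. It uses integer ticks at 1000 per second, the mvhd timescale. Both clips are hypothetically assumed to have the durations measured above, since only one clip's numbers are known. The struct and function names are illustrative and not part of any API.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical per-clip durations, in ticks of a shared 1000/s timescale. */
typedef struct {
    int64_t asset_duration;        /* asset.duration (from mvhd) */
    int64_t video_track_duration;  /* videoTrack.timeRange.duration (from tkhd) */
} ClipDurations;

/* Mirrors the Objective-C loop: each clip's video is inserted at the running
   offset for video_track_duration ticks. Its instruction spans asset_duration
   ticks, and the offset then advances by asset_duration. Returns the total
   number of ticks during which an instruction is active but its own inserted
   video segment has already ended. */
int64_t ticks_without_video(const ClipDurations *clips, size_t count) {
    assert(clips != NULL || count == 0);
    int64_t offset = 0;
    int64_t missing = 0;
    for (size_t i = 0; i < count; i++) {
        int64_t segment_end = offset + clips[i].video_track_duration;
        int64_t instruction_end = offset + clips[i].asset_duration;
        if (instruction_end > segment_end) {
            missing += instruction_end - segment_end;
        }
        offset = instruction_end;  /* same as CMTimeAdd(offsetTime, asset.duration) */
    }
    return missing;
}
```

With one such clip the result is 18 ticks (18 ms). With two, it is 36. In other words, each instruction's range runs 18 ms past the end of the video segment it was meant to describe.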

The two durations differ only slightly, so which one is more accurate?

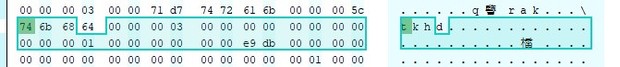

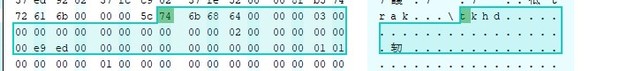

How the duration of a video file is computed

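The demo uses MP4 files. An MP4 file is made up of boxes of various types; think of a box as a container that holds data. The moov box holds the playback metadata, which includes the duration information: a timescale and a duration, where duration / timescale = playable length in seconds. The mvhd (movie header) box gives a timescale of 0x03e8 and a duration of 0xe9ed, i.e. 59885 / 1000 = 59.885 s. Next comes the tkhd (track header) box, which holds the information for a single track; its contents are shown in the screenshots above.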
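To make the box arithmetic concrete, here is a minimal C sketch that reads the timescale and duration from an mvhd box body and the duration from a tkhd box body. Being plain C, it also compiles inside an Objective-C project. It assumes the boxes have already been located and that you pass the bytes after the size/type header. The function names are hypothetical. The field offsets follow the ISO base media file format layout for version 0 (32-bit times) and version 1 (64-bit times). Note that a tkhd duration is expressed in the mvhd timescale, which is why 0xe9db in tkhd also means 59867 / 1000.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* MP4 box fields are stored big-endian. */
static uint32_t read_be32(const uint8_t *p) {
    return ((uint32_t)p[0] << 24) | ((uint32_t)p[1] << 16) |
           ((uint32_t)p[2] << 8) | (uint32_t)p[3];
}

static uint64_t read_be64(const uint8_t *p) {
    return ((uint64_t)read_be32(p) << 32) | read_be32(p + 4);
}

/* body points just past the mvhd size/type header. Returns false if too short. */
bool parse_mvhd(const uint8_t *body, size_t len, uint32_t *timescale, uint64_t *duration) {
    assert(body != NULL && timescale != NULL && duration != NULL);
    if (len < 4) return false;              /* version (1 byte) + flags (3 bytes) */
    if (body[0] == 1) {                     /* version 1: 64-bit times */
        if (len < 32) return false;
        *timescale = read_be32(body + 20);  /* after creation + modification times (8 bytes each) */
        *duration = read_be64(body + 24);
    } else {                                /* version 0: 32-bit times */
        if (len < 20) return false;
        *timescale = read_be32(body + 12);  /* after creation + modification times (4 bytes each) */
        *duration = read_be32(body + 16);
    }
    return true;
}

/* body points just past the tkhd size/type header. Duration is in mvhd timescale units. */
bool parse_tkhd_duration(const uint8_t *body, size_t len, uint64_t *duration) {
    assert(body != NULL && duration != NULL);
    if (len < 4) return false;
    if (body[0] == 1) {
        if (len < 36) return false;
        *duration = read_be64(body + 28);   /* skips 2 x 8-byte times, track_ID, reserved */
    } else {
        if (len < 24) return false;
        *duration = read_be32(body + 20);   /* skips 2 x 4-byte times, track_ID, reserved */
    }
    return true;
}
```

Fed the version 0 values from the screenshots, parse_mvhd yields timescale 1000 and duration 59885. For the video track, parse_tkhd_duration yields 59867.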

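The screenshots show that the video track and the audio track have different durations. The video track's tkhd duration is 0xe9db, i.e. 59.867 s, and the audio track's is 0xe9ed, i.e. 59.885 s. The movie-level duration in mvhd (59.885 s, the same value asset.duration reported) therefore matches the audio track, not the video track. So when computing timeRanges, it is best to consistently use the relatively more precise video track duration rather than the AVAsset's duration. That avoids timing errors, and fine-grained video editing is sensitive to discrepancies like these.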
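One way to apply that advice is to derive the insertion offset and the instruction timeRange from the same video-track duration. Here is a minimal C model of that bookkeeping, using integer ticks in a shared timescale. The function layout_clips is hypothetical, and this is a sketch under those assumptions, not the demo's actual code.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical stand-in for CMTimeRange, in ticks of a shared timescale. */
typedef struct {
    int64_t start;
    int64_t duration;
} TickRange;

/* Lays clips end to end using only each clip's video-track duration.
   out_ranges[i] is both where clip i's video is inserted (atTime:) and its
   instruction timeRange, so the two can never disagree.
   Returns the total length of the laid-out video in ticks. */
int64_t layout_clips(const int64_t *video_track_durations, size_t count, TickRange *out_ranges) {
    assert((video_track_durations != NULL && out_ranges != NULL) || count == 0);
    int64_t offset = 0;
    for (size_t i = 0; i < count; i++) {
        out_ranges[i].start = offset;
        out_ranges[i].duration = video_track_durations[i];
        offset += video_track_durations[i];  /* advance by the same duration */
    }
    return offset;
}
```

Translated back to the Objective-C loop, this would mean using videoTrack.timeRange.duration both in CMTimeRangeMake for instruction.timeRange and in the CMTimeAdd that advances self.offsetTime. Each instruction then starts exactly where the previous one ends.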