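k8s single/multi-Master cluster deployment

1) Base environment

The virtual machines are created with VirtualBox, each with two network adapters:

Adapter 1: custom NAT network
Adapter 2: network address translation (NAT) with port forwarding, so the host can manage the VMs over SSH

Host resources:

Linux operating system: CentOS 7 Minimal
kubernetes 1.12
Docker-ce 18.xx-ce
Etcd 3.x
Flannel 0.10 (the steps in 8.3 download v0.11.0)

On every machine, stop the firewall, disable SELinux, and synchronize the clock:

    systemctl stop firewalld
    systemctl disable firewalld
    # disable SELinux as well

    yum -y install ntpdate
    ntpdate cn.pool.ntp.org
    ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime
    # re-sync the clock at boot (on CentOS 7, /etc/rc.d/rc.local must be executable for this to run)
    cat >>/etc/rc.local <<EOF
    ntpdate cn.pool.ntp.org
    EOF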

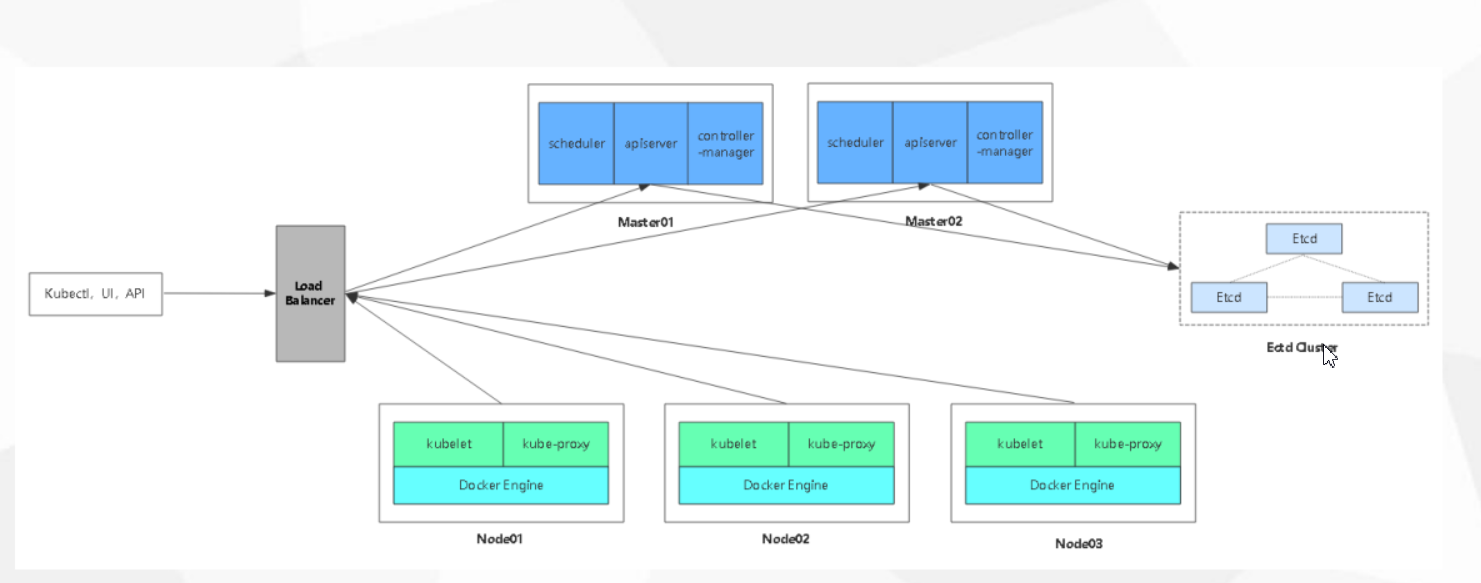

2) Machine role assignment:

    vm3-192.168.200.3 etcd-1 master-1 (kube-apiserver, kube-scheduler, kube-controller-manager)
    vm4-192.168.200.4 etcd-2 master-2 (kube-apiserver, kube-scheduler, kube-controller-manager) --> added when scaling out
    vm5-192.168.200.5 etcd-3
    vm6-192.168.200.6 node-1 (flanneld, kubelet, kube-proxy)
    vm7-192.168.200.7 node-2 (flanneld, kubelet, kube-proxy)
    vm8-192.168.200.8 nginx (L4), keepalived
    vm9-192.168.200.9 nginx (L4), keepalived

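3) Architecture diagram:

4) Deployment method:

Binary deployment

5) Deployment plan:

Deploy a single-master cluster first, then extend the master to multiple nodes.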

6) etcd cluster deployment

6-1> Install the SSL tools

    sh install_cfssl.sh

The script contains:

    curl -L https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -o /usr/local/bin/cfssl
    curl -L https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -o /usr/local/bin/cfssljson
    curl -L https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -o /usr/local/bin/cfssl-certinfo
    chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson /usr/local/bin/cfssl-certinfo

6-2> Issue the etcd certificates

    [root@vm3 etcd-cert]# pwd
    /data/tools/etcd-cert
    [root@vm3 etcd-cert]# cat etcd-cert.sh
    # CA signing policy
    cat > ca-config.json <<EOF
    {
      "signing": {
        "default": {
          "expiry": "876000h"
        },
        "profiles": {
          "www": {
            "expiry": "876000h",
            "usages": [
              "signing",
              "key encipherment",
              "server auth",
              "client auth"
            ]
          }
        }
      }
    }
    EOF

    # CA certificate signing request
    cat > ca-csr.json <<EOF
    {
      "CN": "etcd CA",
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "Beijing",
          "ST": "Beijing"
        }
      ]
    }
    EOF

    cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

    #-----------------------

    # etcd server certificate; "hosts" lists every etcd node IP
    cat > server-csr.json <<EOF
    {
      "CN": "etcd",
      "hosts": [
        "192.168.200.3",
        "192.168.200.4",
        "192.168.200.5"
      ],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "BeiJing",
          "ST": "BeiJing"
        }
      ]
    }
    EOF

    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server
    [root@vm3 etcd-cert]# sh etcd-cert.sh

Note: the hosts field in server-csr.json must list the IPs of the etcd machines; the certificate is trusted only for those addresses.

6-3> Deploy the etcd component

etcd is installed under /opt/etcd/.

    [root@vm3 ~]# mkdir -p /data/tools/ && cd /data/tools/
    [root@vm3 tools]# rz etcd-v3.3.10-linux-amd64.tar.gz
    [root@vm3 tools]# tar xf etcd-v3.3.10-linux-amd64.tar.gz
    [root@vm3 tools]# mkdir /opt/etcd/{cfg,bin,ssl} -p
    [root@vm3 tools]# mv etcd-v3.3.10-linux-amd64/{etcd,etcdctl} /opt/etcd/bin/
    [root@vm3 etcd-cert]# cp ca-key.pem ca.pem server-key.pem server.pem /opt/etcd/ssl/
    [root@vm3 tools]# cat etcd.sh
    #!/bin/bash
    # example: ./etcd.sh etcd01 192.168.1.10 etcd02=https://192.168.1.11:2380,etcd03=https://192.168.1.12:2380

    ETCD_NAME=$1
    ETCD_IP=$2
    ETCD_CLUSTER=$3

    WORK_DIR=/opt/etcd

    # etcd environment file
    cat <<EOF >$WORK_DIR/cfg/etcd
    #[Member]
    ETCD_NAME="${ETCD_NAME}"
    ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
    ETCD_LISTEN_PEER_URLS="https://${ETCD_IP}:2380"
    ETCD_LISTEN_CLIENT_URLS="https://${ETCD_IP}:2379"

    #[Clustering]
    ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ETCD_IP}:2380"
    ETCD_ADVERTISE_CLIENT_URLS="https://${ETCD_IP}:2379"
    ETCD_INITIAL_CLUSTER="${ETCD_NAME}=https://${ETCD_IP}:2380,${ETCD_CLUSTER}"
    ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
    ETCD_INITIAL_CLUSTER_STATE="new"
    EOF

    # systemd unit; \${...} is left for systemd to expand from the environment file
    cat <<EOF >/usr/lib/systemd/system/etcd.service
    [Unit]
    Description=Etcd Server
    After=network.target
    After=network-online.target
    Wants=network-online.target

    [Service]
    Type=notify
    EnvironmentFile=${WORK_DIR}/cfg/etcd
    ExecStart=${WORK_DIR}/bin/etcd \
    --name=\${ETCD_NAME} \
    --data-dir=\${ETCD_DATA_DIR} \
    --listen-peer-urls=\${ETCD_LISTEN_PEER_URLS} \
    --listen-client-urls=\${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \
    --advertise-client-urls=\${ETCD_ADVERTISE_CLIENT_URLS} \
    --initial-advertise-peer-urls=\${ETCD_INITIAL_ADVERTISE_PEER_URLS} \
    --initial-cluster=\${ETCD_INITIAL_CLUSTER} \
    --initial-cluster-token=\${ETCD_INITIAL_CLUSTER_TOKEN} \
    --initial-cluster-state=new \
    --cert-file=${WORK_DIR}/ssl/server.pem \
    --key-file=${WORK_DIR}/ssl/server-key.pem \
    --peer-cert-file=${WORK_DIR}/ssl/server.pem \
    --peer-key-file=${WORK_DIR}/ssl/server-key.pem \
    --trusted-ca-file=${WORK_DIR}/ssl/ca.pem \
    --peer-trusted-ca-file=${WORK_DIR}/ssl/ca.pem
    Restart=on-failure
    LimitNOFILE=65536

    [Install]
    WantedBy=multi-user.target
    EOF

    systemctl daemon-reload
    systemctl enable etcd
    systemctl restart etcd
    [root@vm3 tools]# ./etcd.sh etcd01 192.168.200.3 etcd02=https://192.168.200.4:2380,etcd03=https://192.168.200.5:2380

2379: client (data) port

2380: peer (cluster) port

Deploy etcd on the other two nodes

    scp /usr/lib/systemd/system/etcd.service root@192.168.200.4:/usr/lib/systemd/system/
    scp /usr/lib/systemd/system/etcd.service root@192.168.200.5:/usr/lib/systemd/system/
    scp -r /opt/etcd root@192.168.200.4:/opt/
    scp -r /opt/etcd root@192.168.200.5:/opt/

On vm4 and vm5, edit /opt/etcd/cfg/etcd and change the following fields to that node's own name and IP (the values copied over from vm3 are shown here):

    ETCD_NAME="etcd01"
    ETCD_LISTEN_PEER_URLS="https://192.168.200.3:2380"
    ETCD_LISTEN_CLIENT_URLS="https://192.168.200.3:2379"
    ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.200.3:2380"
    ETCD_ADVERTISE_CLIENT_URLS="https://192.168.200.3:2379"
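The per-node edit can also be scripted. The following is a minimal sketch, not part of the original scripts: `retarget_etcd_cfg` is a hypothetical helper name, and it assumes the config file has exactly the layout written by etcd.sh.

```shell
#!/bin/bash
# Sketch: rewrite the node-specific fields of an etcd config copied from vm3.
# retarget_etcd_cfg is a hypothetical helper, not part of the original scripts.
retarget_etcd_cfg() {
  local cfg=$1 name=$2 ip=$3
  # Only these five keys differ per node. ETCD_INITIAL_CLUSTER lists every
  # member and stays identical on all nodes, so it is left untouched.
  sed -i.bak -E \
    -e "s|^(ETCD_NAME=).*|\1\"${name}\"|" \
    -e "s#^(ETCD_(LISTEN_PEER|INITIAL_ADVERTISE_PEER)_URLS=).*#\1\"https://${ip}:2380\"#" \
    -e "s#^(ETCD_(LISTEN_CLIENT|ADVERTISE_CLIENT)_URLS=).*#\1\"https://${ip}:2379\"#" \
    "$cfg"
}

# Usage on vm4 (not executed here):
#   retarget_etcd_cfg /opt/etcd/cfg/etcd etcd02 192.168.200.4
#   systemctl daemon-reload && systemctl enable etcd && systemctl restart etcd
```

A backup of the untouched file is kept next to it with a `.bak` suffix, so a wrong name or IP can be reverted before etcd is started.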

Check the health of the etcd cluster:

    [root@vm3 cfg]# /opt/etcd/bin/etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.200.4:2379,https://192.168.200.5:2379" cluster-health
    member 4a8537d90d14a19b is healthy: got healthy result from https://192.168.200.3:2379
    member 7129c86b0966982b is healthy: got healthy result from https://192.168.200.4:2379
    member b3095f3c8af680ac is healthy: got healthy result from https://192.168.200.5:2379
    cluster is healthy

The etcd cluster is now deployed. Adding /opt/etcd/bin to PATH lets us call etcd and etcdctl directly:

    vim .bash_profile
    export PATH=$PATH:/opt/etcd/bin

7> Install Docker-ce on the Node machines

    # install docker-ce and configure a registry mirror
    yum install -y yum-utils device-mapper-persistent-data lvm2
    yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo
    yum install docker-ce -y
    curl -sSL https://get.daocloud.io/daotools/set_mirror.sh | sh -s http://bc437cce.m.daocloud.io
    systemctl start docker
    systemctl enable docker

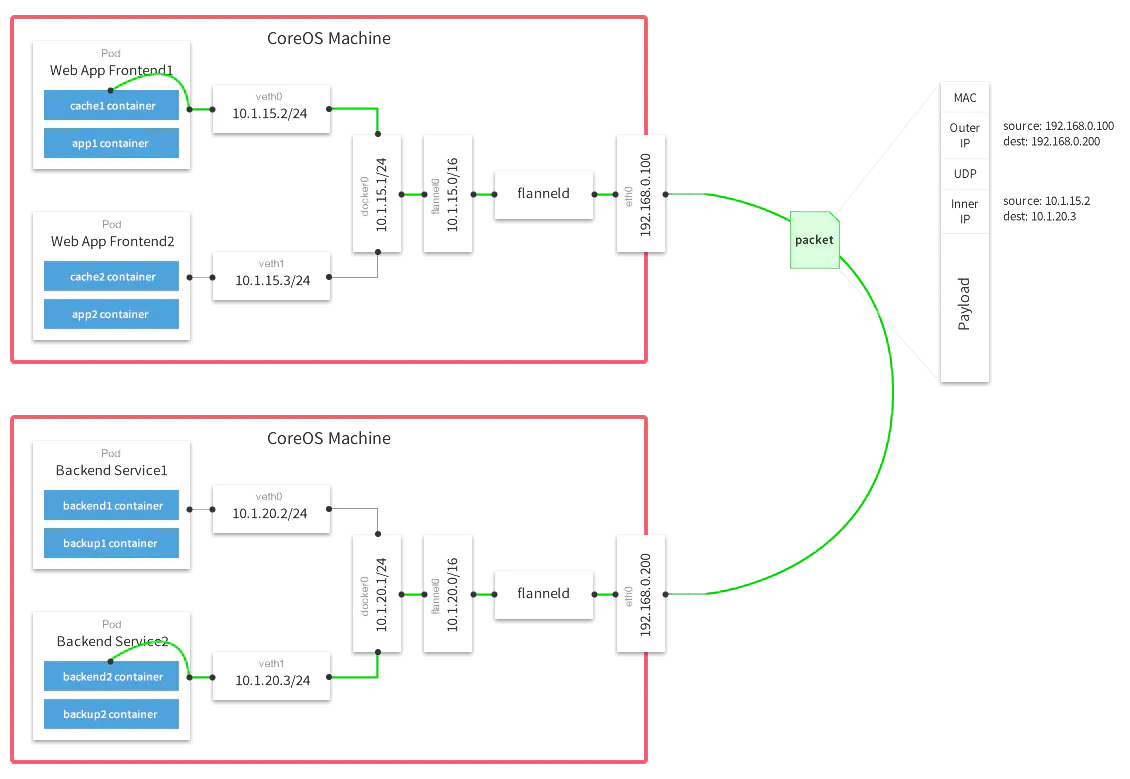

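8-> Deploying Flannel, the container cluster network plugin

Overlay Network: a virtual network layered on top of the underlying network, in which hosts are connected through virtual links.

VXLAN: encapsulates the original packet in UDP, uses the IP/MAC of the underlying network as the outer header, and sends it over Ethernet. At the destination, the tunnel endpoint decapsulates the packet and delivers the data to the target address.

Flannel: one kind of overlay network. It likewise wraps the original packet inside another network packet for routing and forwarding. It currently supports UDP, VXLAN, host-gw, AWS VPC, and GCE routes as forwarding backends, and relies on etcd (the kubernetes API is also supported) to track subnet allocation.

8.1 How the Flannel network works (https://www.jianshu.com/p/82864baeacac)

On the control plane, the flanneld process on each host synchronizes the subnet information of the local host and of the other hosts from the remote etcd cluster and assigns IP addresses to pods. On the data plane, flannel implements an L3 overlay through a backend (UDP encapsulation, for example), using either an ordinary TUN device or a VXLAN device.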

8.2 Write the allocated subnet range to the etcd cluster for flannel to use

    [root@vm3 ~]# etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.200.3:2379,https://192.168.200.4:2379,https://192.168.200.5:2379" set /coreos.com/network/config '{"Network":"172.17.0.0/16","Backend":{"Type":"vxlan"}}'
    {"Network":"172.17.0.0/16","Backend":{"Type":"vxlan"}}
    # read the subnet configuration back
    [root@vm3 ~]# etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.200.3:2379,https://192.168.200.4:2379,https://192.168.200.5:2379" get /coreos.com/network/config
    {"Network":"172.17.0.0/16","Backend":{"Type":"vxlan"}}
    [root@vm3 ~]#

8.3 Deploy the flannel plugin on the node machines

Run the following on vm6 and vm7:

    [root@vm6 tools]# mkdir -p /data/tools/
    [root@vm6 tools]# wget https://github.com/coreos/flannel/releases/download/v0.11.0/flannel-v0.11.0-linux-amd64.tar.gz
    [root@vm6 tools]# tar -xf flannel-v0.11.0-linux-amd64.tar.gz
    [root@vm6 tools]# ll
    total 43780
    -rwxr-xr-x. 1 root root 35249016 Jan 28 2019 flanneld
    -rw-r--r--. 1 root root  9565743 Jan 28 2019 flannel-v0.11.0-linux-amd64.tar.gz
    -rwxr-xr-x. 1 root root     2139 Oct 22 2018 mk-docker-opts.sh

The archive contains two executables:

flanneld: the flannel binary
mk-docker-opts.sh: writes the allocated subnet to a file; docker reads that file and starts with the given subnet

    [root@vm6 tools]# mkdir -p /opt/kubernetes/{cfg,ssl,bin}

Because flannel fetches the subnet information from etcd, the etcd certificates must be copied into flannel's ssl directory:

    [root@vm3 ssl]# scp /opt/etcd/ssl/* root@192.168.200.7:/opt/kubernetes/ssl/
    root@192.168.200.7's password:
    ca-key.pem        100% 1675   527.1KB/s   00:00
    ca.pem            100% 1265     1.2MB/s   00:00
    server-key.pem    100% 1675     2.1MB/s   00:00
    server.pem        100% 1342     1.8MB/s   00:00
    [root@vm3 ssl]# scp /opt/etcd/ssl/* root@192.168.200.6:/opt/kubernetes/ssl/
    root@192.168.200.6's password:
    ca-key.pem        100% 1675     1.8MB/s   00:00
    ca.pem            100% 1265     1.3MB/s   00:00
    server-key.pem    100% 1675     1.5MB/s   00:00
    server.pem        100% 1342     1.2MB/s   00:00
    [root@vm3 ssl]#

8.4 The flannel configuration script

    [root@vm6 tools]# cat flannel.sh
    #!/bin/bash

    # etcd endpoints: first argument, defaulting to this cluster's three members
    ETCD_ENDPOINTS=${1:-"https://192.168.200.3:2379,https://192.168.200.4:2379,https://192.168.200.5:2379"}

    # generate the flannel options file
    cat <<EOF >/opt/kubernetes/cfg/flanneld
    FLANNEL_OPTIONS="--etcd-endpoints=${ETCD_ENDPOINTS} \
    --etcd-cafile=/opt/kubernetes/ssl/ca.pem \
    --etcd-certfile=/opt/kubernetes/ssl/server.pem \
    --etcd-keyfile=/opt/kubernetes/ssl/server-key.pem"
    EOF

    cat <<EOF >/usr/lib/systemd/system/flanneld.service
    [Unit]
    Description=Flanneld overlay address etcd agent
    After=network-online.target network.target
    Before=docker.service

    [Service]
    Type=notify
    EnvironmentFile=/opt/kubernetes/cfg/flanneld
    ExecStart=/opt/kubernetes/bin/flanneld --ip-masq \$FLANNEL_OPTIONS
    ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    EOF

    cat <<EOF >/usr/lib/systemd/system/docker.service
    [Unit]
    Description=Docker Application Container Engine
    Documentation=https://docs.docker.com
    After=network-online.target firewalld.service
    Wants=network-online.target

    [Service]
    Type=notify
    # The next two lines make docker use the subnet allocated by flannel
    EnvironmentFile=/run/flannel/subnet.env
    ExecStart=/usr/bin/dockerd \$DOCKER_NETWORK_OPTIONS
    ExecReload=/bin/kill -s HUP \$MAINPID
    LimitNOFILE=infinity
    LimitNPROC=infinity
    LimitCORE=infinity
    TimeoutStartSec=0
    Delegate=yes
    KillMode=process
    Restart=on-failure
    StartLimitBurst=3
    StartLimitInterval=60s

    [Install]
    WantedBy=multi-user.target
    EOF

    systemctl daemon-reload
    systemctl enable flanneld
    systemctl restart flanneld
    [root@vm6 tools]# pwd
    /data/tools
    [root@vm6 tools]# cp flanneld mk-docker-opts.sh /opt/kubernetes/bin/
    [root@vm6 tools]# sh flannel.sh https://192.168.200.3:2379,https://192.168.200.4:2379,https://192.168.200.5:2379

After flannel has started, docker needs to be restarted:

    [root@vm6 tools]# systemctl restart docker

Repeat the same steps on vm7.

Now look at the content of /run/flannel/subnet.env (the file differs between vm6 and vm7):
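As a rough way to confirm which bridge address docker was handed, the file can be read from a script. This is only a sketch: `flannel_bip` is a hypothetical helper, and it assumes that mk-docker-opts.sh, invoked with `-k DOCKER_NETWORK_OPTIONS` as in flannel.sh, wrote a `DOCKER_NETWORK_OPTIONS="... --bip=<addr> ..."` line into the file.

```shell
#!/bin/bash
# Sketch: print the --bip value stored in subnet.env.
# flannel_bip is a hypothetical helper; the file layout is an assumption
# based on how flannel.sh calls mk-docker-opts.sh.
flannel_bip() {
  local env_file=${1:-/run/flannel/subnet.env}
  # Source the file in a subshell so its variables do not leak into the caller.
  (
    . "$env_file"
    for opt in $DOCKER_NETWORK_OPTIONS; do
      case $opt in
        --bip=*) echo "${opt#--bip=}" ;;
      esac
    done
  )
}

# Usage on a node (not executed here):
#   flannel_bip                 # address docker0 should carry
#   ip addr show docker0        # compare with the actual bridge address
```

If the two addresses differ, docker was most likely started before flanneld wrote the file and needs the restart described above.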

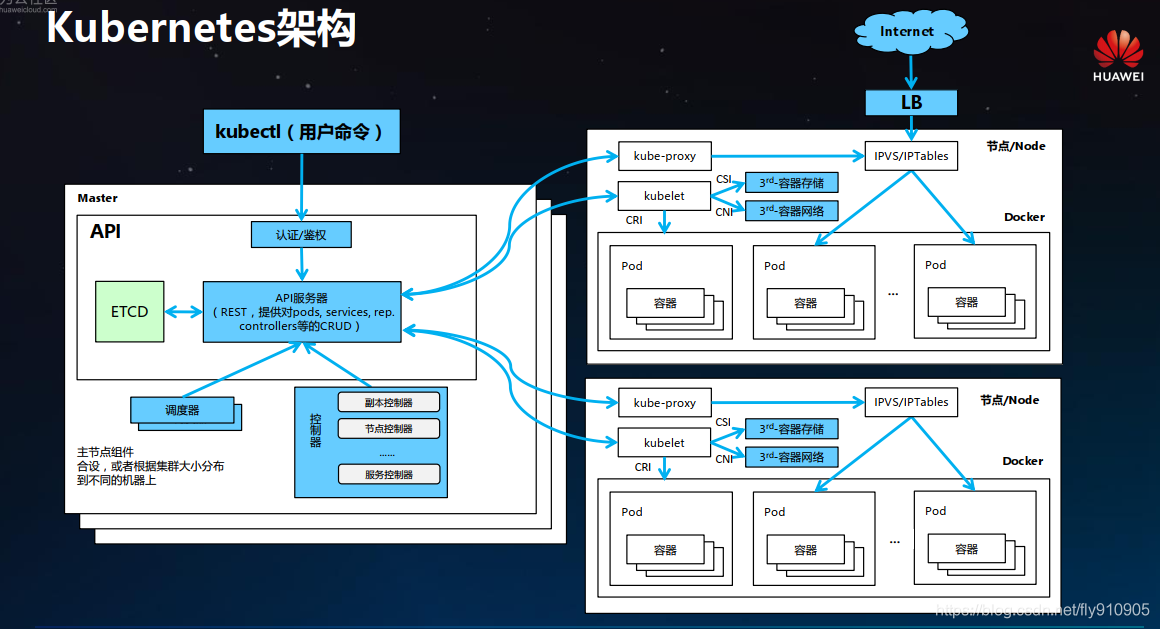

9 > Master component deployment

Components:

apiserver (must be deployed first; the other two can be deployed in any order)

scheduler

controller-manager

9.1 > Deploy apiserver

    [root@vm3 master]# cat k8s-cert.sh
    # generate the CA certificate
    cat > ca-config.json <<EOF
    {
      "signing": {
        "default": {
          "expiry": "87600h"
        },
        "profiles": {
          "kubernetes": {
            "expiry": "87600h",
            "usages": [
              "signing",
              "key encipherment",
              "server auth",
              "client auth"
            ]
          }
        }
      }
    }
    EOF

    cat > ca-csr.json <<EOF
    {
      "CN": "kubernetes",
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "Beijing",
          "ST": "Beijing",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF

    cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

    #-----------------------

    # apiserver certificate; "hosts" must include the master IPs, the LB IPs, and the VIP
    cat > server-csr.json <<EOF
    {
      "CN": "kubernetes",
      "hosts": [
        "10.0.0.1",
        "127.0.0.1",
        "192.168.200.3",
        "192.168.200.4",
        "192.168.200.5",
        "192.168.200.100",
        "192.168.200.200",
        "10.206.176.19",
        "10.206.240.188",
        "10.206.240.189",
        "kubernetes",
        "kubernetes.default",
        "kubernetes.default.svc",
        "kubernetes.default.svc.cluster",
        "kubernetes.default.svc.cluster.local"
      ],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "BeiJing",
          "ST": "BeiJing",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF

    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

    #-----------------------

    # admin client certificate
    cat > admin-csr.json <<EOF
    {
      "CN": "admin",
      "hosts": [],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "BeiJing",
          "ST": "BeiJing",
          "O": "system:masters",
          "OU": "System"
        }
      ]
    }
    EOF

    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

    #-----------------------

    # kube-proxy client certificate
    cat > kube-proxy-csr.json <<EOF
    {
      "CN": "system:kube-proxy",
      "hosts": [],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "BeiJing",
          "ST": "BeiJing",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF

    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

    [root@vm3 master]# mkdir /opt/kubernetes/{cfg,bin,ssl} -p
    [root@vm3 master]# sh k8s-cert.sh
    [root@vm3 master]# cp ca*pem server*pem /opt/kubernetes/ssl/
    [root@vm3 master]# tar -xf kubernetes-server-linux-amd64.tar.gz
    [root@vm3 bin]# pwd
    /root/k8s/master/kubernetes/server/bin
    [root@vm3 bin]# cp kube-apiserver kube-scheduler kube-controller-manager kubectl /opt/kubernetes/bin/

Generate the token file:

    [root@vm3 master]# cat token.sh
    # random 32-character hex token
    BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')
    cat > /opt/kubernetes/cfg/token.csv <<EOF
    ${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,"system:kubelet-bootstrap"
    EOF
    [root@vm3 master]# sh token.sh

Deploy the API service:

    [root@vm3 master]# cat apiserver.sh
    #!/bin/bash

    MASTER_ADDRESS=$1
    ETCD_SERVERS=$2

    # apiserver options file
    cat <<EOF >/opt/kubernetes/cfg/kube-apiserver
    KUBE_APISERVER_OPTS="--logtostderr=true \\
    --v=4 \\
    --etcd-servers=${ETCD_SERVERS} \\
    --bind-address=${MASTER_ADDRESS} \\
    --secure-port=6443 \\
    --advertise-address=${MASTER_ADDRESS} \\
    --allow-privileged=true \\
    --service-cluster-ip-range=10.0.0.0/24 \\
    --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \\
    --authorization-mode=RBAC,Node \\
    --kubelet-https=true \\
    --enable-bootstrap-token-auth \\
    --token-auth-file=/opt/kubernetes/cfg/token.csv \\
    --service-node-port-range=30000-50000 \\
    --tls-cert-file=/opt/kubernetes/ssl/server.pem \\
    --tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \\
    --client-ca-file=/opt/kubernetes/ssl/ca.pem \\
    --service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \\
    --etcd-cafile=/opt/etcd/ssl/ca.pem \\
    --etcd-certfile=/opt/etcd/ssl/server.pem \\
    --etcd-keyfile=/opt/etcd/ssl/server-key.pem"
    EOF

    cat <<EOF >/usr/lib/systemd/system/kube-apiserver.service
    [Unit]
    Description=Kubernetes API Server
    Documentation=https://github.com/kubernetes/kubernetes

    [Service]
    EnvironmentFile=-/opt/kubernetes/cfg/kube-apiserver
    ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    EOF

    # start kube-apiserver
    systemctl daemon-reload
    systemctl enable kube-apiserver
    systemctl restart kube-apiserver
    [root@vm3 master]# sh apiserver.sh 192.168.200.3 https://192.168.200.3:2379,https://192.168.200.4:2379,https://192.168.200.5:2379

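Parameters:

--logtostderr  write logs to stderr

--v  log verbosity level

--etcd-servers  addresses of the etcd cluster

--bind-address  listen address

--secure-port  HTTPS secure port

--advertise-address  address advertised to the rest of the cluster

--allow-privileged  allow containers to run in privileged mode

--service-cluster-ip-range  virtual IP range for Services

--enable-admission-plugins  admission control plugins

--authorization-mode  authorization mode; enables RBAC authorization and Node authorization (node self-management)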

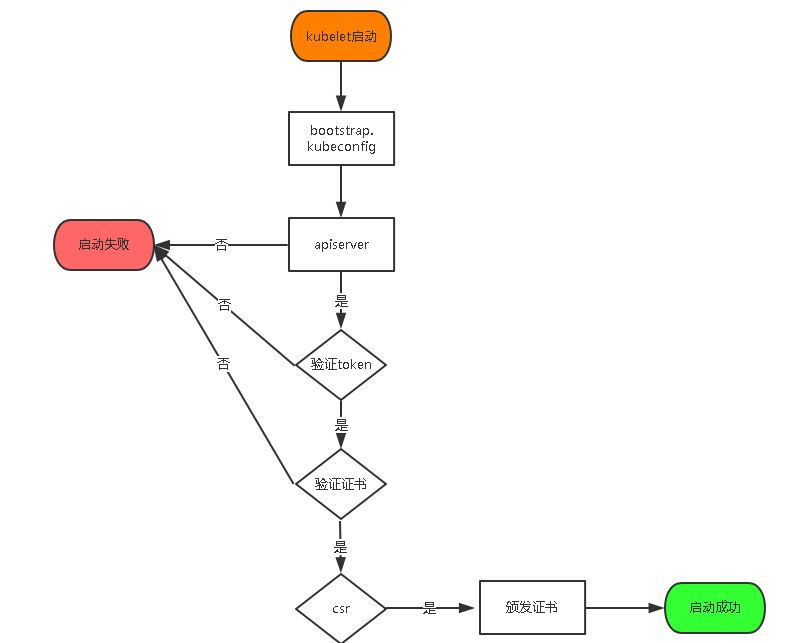

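--enable-bootstrap-token-auth  enables TLS bootstrap authentication, so that k8s can issue certificates to nodes (kubelet) automatically

--token-auth-file  the token file used during TLS bootstrap authentication. The kubelet presents this token to tell the apiserver that it wants to join the cluster. Each line has the form token,user,UID,group: here the user is kubelet-bootstrap, the UID is 10001, and the group is system:kubelet-bootstrap. This identity is granted minimal permissions and is used only to request certificate signing.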
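To make the file layout concrete, here is a small sketch that builds one token.csv line. `new_bootstrap_token` and `token_csv_line` are hypothetical helper names; the token recipe mirrors token.sh but uses byte-wise `od -t x1` output, which is an adjustment of this sketch rather than the original script.

```shell
#!/bin/bash
# Sketch: produce a bootstrap token and the matching token.csv line.
# Both function names are hypothetical helpers for illustration.

# 16 random bytes rendered as 32 lowercase hex characters.
new_bootstrap_token() {
  head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n'
}

# Columns: token, user name, user UID, quoted group name.
token_csv_line() {
  printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$1"
}

# Usage on the master (not executed here):
#   token_csv_line "$(new_bootstrap_token)" > /opt/kubernetes/cfg/token.csv
```

Whatever token ends up in this file is the one that bootstrap.kubeconfig must carry later; regenerating the file without regenerating the kubeconfig breaks kubelet registration.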

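--service-node-port-range  default port range allocated to NodePort Services

The following are the apiserver's own certificates:

--tls-cert-file=/opt/kubernetes/ssl/server.pem \

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem

The following are the certificates used to connect to etcd:

--etcd-cafile=/opt/etcd/ssl/ca.pem \

--etcd-certfile=/opt/etcd/ssl/server.pem \

--etcd-keyfile=/opt/etcd/ssl/server-key.pem

Note:

Next, deploy the scheduler and controller-manager components. When they run on several nodes they elect a leader among themselves (--leader-elect), so they need no separate high-availability setup; only the apiserver needs to be made highly available.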

scheduler deployment

The script:

    [root@vm3 master]# cat scheduler.sh
    #!/bin/bash

    MASTER_ADDRESS=$1

    # scheduler options file
    cat <<EOF >/opt/kubernetes/cfg/kube-scheduler
    KUBE_SCHEDULER_OPTS="--logtostderr=true \\
    --v=4 \\
    --master=${MASTER_ADDRESS}:8080 \\
    --leader-elect"
    EOF

    cat <<EOF >/usr/lib/systemd/system/kube-scheduler.service
    [Unit]
    Description=Kubernetes Scheduler
    Documentation=https://github.com/kubernetes/kubernetes

    [Service]
    EnvironmentFile=-/opt/kubernetes/cfg/kube-scheduler
    ExecStart=/opt/kubernetes/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    EOF

    systemctl daemon-reload
    systemctl enable kube-scheduler
    systemctl restart kube-scheduler

controller-manager deployment

The script:

    [root@vm3 master]# cat controller-manager.sh
    #!/bin/bash

    MASTER_ADDRESS=$1

    # controller-manager options file
    cat <<EOF >/opt/kubernetes/cfg/kube-controller-manager
    KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \\
    --v=4 \\
    --master=${MASTER_ADDRESS}:8080 \\
    --leader-elect=true \\
    --address=127.0.0.1 \\
    --service-cluster-ip-range=10.0.0.0/24 \\
    --cluster-name=kubernetes \\
    --cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \\
    --cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \\
    --root-ca-file=/opt/kubernetes/ssl/ca.pem \\
    --service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \\
    --experimental-cluster-signing-duration=87600h0m0s"
    EOF

    cat <<EOF >/usr/lib/systemd/system/kube-controller-manager.service
    [Unit]
    Description=Kubernetes Controller Manager
    Documentation=https://github.com/kubernetes/kubernetes

    [Service]
    EnvironmentFile=-/opt/kubernetes/cfg/kube-controller-manager
    ExecStart=/opt/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    EOF

    systemctl daemon-reload
    systemctl enable kube-controller-manager
    systemctl restart kube-controller-manager

Run scheduler and controller-manager. The commands below pass 192.168.200.3, but 127.0.0.1 is the recommended argument (see QA item 2):

    [root@vm3 master]# sh scheduler.sh 192.168.200.3
    Created symlink from /etc/systemd/system/multi-user.target.wants/kube-scheduler.service to /usr/lib/systemd/system/kube-scheduler.service.
    [root@vm3 master]# ps -ef |grep scheduler
    root 6875 1 2 16:49 ? 00:00:00 /opt/kubernetes/bin/kube-scheduler --logtostderr=true --v=4 --master=192.168.200.3:8080 --leader-elect
    root 6885 6485 0 16:49 pts/0 00:00:00 grep --color=auto scheduler
    [root@vm3 master]# sh controller-manager.sh 192.168.200.3
    Created symlink from /etc/systemd/system/multi-user.target.wants/kube-controller-manager.service to /usr/lib/systemd/system/kube-controller-manager.service.
    [root@vm3 master]# ps -ef | grep controller-manager
    root 6932 1 35 16:49 ? 00:00:03 /opt/kubernetes/bin/kube-controller-manager --logtostderr=true --v=4 --master=192.168.200.3:8080 --leader-elect=true --address=127.0.0.1 --service-cluster-ip-range=10.0.0.0/24 --cluster-name=kubernetes --cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem --cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem --root-ca-file=/opt/kubernetes/ssl/ca.pem --service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem --experimental-cluster-signing-duration=87600h0m0s
    root 6940 6485 0 16:50 pts/0 00:00:00 grep --color=auto controller-manager

Parameters:

--master  connect to the local apiserver
--leader-elect  when several instances of the component are running, elect a leader automatically (HA)

Check the status of the cluster components with kubectl:

    [root@vm3 master]# /opt/kubernetes/bin/kubectl get cs
    NAME                 STATUS    MESSAGE              ERROR
    controller-manager   Healthy   ok
    scheduler            Healthy   ok
    etcd-0               Healthy   {"health":"true"}
    etcd-2               Healthy   {"health":"true"}
    etcd-1               Healthy   {"health":"true"}

Next, add the executables to PATH:

    vim .bash_profile
    export PATH=$PATH:/opt/etcd/bin:/opt/kubernetes/bin

10> Node component deployment

Copy the components the nodes need, kubelet and kube-proxy:

    [root@vm3 bin]# pwd
    /root/k8s/master/kubernetes/server/bin
    [root@vm3 bin]# scp kubelet kube-proxy root@192.168.200.6:/opt/kubernetes/bin/
    root@192.168.200.6's password:
    kubelet       100%  168MB  23.9MB/s   00:07
    kube-proxy    100% 4172KB  82.9MB/s   00:00
    [root@vm3 bin]# scp kubelet kube-proxy root@192.168.200.7:/opt/kubernetes/bin/
    root@192.168.200.7's password:
    kubelet       100%  168MB  95.5MB/s   00:01
    kube-proxy

The script that generates the kubeconfig files must be run on the master node:

    [root@vm3 kubeconfig]# pwd
    /root/k8s/kubeconfig
    [root@vm3 kubeconfig]# cat kubeconfig.sh
    # TLS bootstrapping token: read the one generated by token.sh
    #BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')
    BOOTSTRAP_TOKEN=$(awk -F"," '{print $1}' /opt/kubernetes/cfg/token.csv)

    # apiserver address
    APISERVER=$1
    # directory holding the certificates generated earlier by k8s-cert.sh
    SSL_DIR=$2

    # create the kubelet bootstrapping kubeconfig
    export KUBE_APISERVER="https://$APISERVER:6443"

    # set cluster parameters
    kubectl config set-cluster kubernetes \
      --certificate-authority=$SSL_DIR/ca.pem \
      --embed-certs=true \
      --server=${KUBE_APISERVER} \
      --kubeconfig=bootstrap.kubeconfig

    # set client authentication parameters
    kubectl config set-credentials kubelet-bootstrap \
      --token=${BOOTSTRAP_TOKEN} \
      --kubeconfig=bootstrap.kubeconfig

    # set context parameters
    kubectl config set-context default \
      --cluster=kubernetes \
      --user=kubelet-bootstrap \
      --kubeconfig=bootstrap.kubeconfig

    # set the default context
    kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

    #----------------------

    # create the kube-proxy kubeconfig file
    kubectl config set-cluster kubernetes \
      --certificate-authority=$SSL_DIR/ca.pem \
      --embed-certs=true \
      --server=${KUBE_APISERVER} \
      --kubeconfig=kube-proxy.kubeconfig

    kubectl config set-credentials kube-proxy \
      --client-certificate=$SSL_DIR/kube-proxy.pem \
      --client-key=$SSL_DIR/kube-proxy-key.pem \
      --embed-certs=true \
      --kubeconfig=kube-proxy.kubeconfig

    kubectl config set-context default \
      --cluster=kubernetes \
      --user=kube-proxy \
      --kubeconfig=kube-proxy.kubeconfig

    kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig
    [root@vm3 kubeconfig]# sh kubeconfig.sh 192.168.200.3 /root/k8s/master
    Cluster "kubernetes" set.
    User "kubelet-bootstrap" set.
    Context "default" created.
    Switched to context "default".
    Cluster "kubernetes" set.
    User "kube-proxy" set.
    Context "default" created.
    Switched to context "default".

This produces the bootstrap.kubeconfig and kube-proxy.kubeconfig files, which are then copied to both nodes:

    [root@vm3 kubeconfig]# pwd
    /root/k8s/kubeconfig
    [root@vm3 kubeconfig]# ll
    total 16
    -rw------- 1 root root 2167 Feb 23 00:40 bootstrap.kubeconfig
    -rw-r--r-- 1 root root 1583 Feb 23 00:39 kubeconfig.sh
    -rw------- 1 root root 6269 Feb 23 00:40 kube-proxy.kubeconfig
    [root@vm3 kubeconfig]# scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.200.6:/opt/kubernetes/cfg/
    root@192.168.200.6's password:
    bootstrap.kubeconfig     100% 2167   697.7KB/s   00:00
    kube-proxy.kubeconfig    100% 6269   723.1KB/s   00:00
    [root@vm3 kubeconfig]# scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.200.7:/opt/kubernetes/cfg/
    root@192.168.200.7's password:
    bootstrap.kubeconfig     100% 2167   781.6KB/s   00:00
    kube-proxy.kubeconfig

On the master node, bind the kubelet-bootstrap user to the system cluster role. If this step is skipped, the kubelet cannot start.

    [root@vm3 k8s]# kubectl create clusterrolebinding kubelet-bootstrap \
      --clusterrole=system:node-bootstrapper \
      --user=kubelet-bootstrap

    [root@vm6 node]# cat kubelet.sh
    #!/bin/bash

    NODE_ADDRESS=$1
    DNS_SERVER_IP=${2:-"10.0.0.2"}

    # kubelet command-line options
    cat <<EOF >/opt/kubernetes/cfg/kubelet
    KUBELET_OPTS="--logtostderr=true \\
    --v=4 \\
    --hostname-override=${NODE_ADDRESS} \\
    --kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \\
    --bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \\
    --config=/opt/kubernetes/cfg/kubelet.config \\
    --cert-dir=/opt/kubernetes/ssl/kubelet \\
    --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"
    EOF

    # kubelet configuration file (YAML)
    cat <<EOF >/opt/kubernetes/cfg/kubelet.config
    kind: KubeletConfiguration
    apiVersion: kubelet.config.k8s.io/v1beta1
    address: ${NODE_ADDRESS}
    port: 10250
    readOnlyPort: 10255
    cgroupDriver: cgroupfs
    clusterDNS:
    - ${DNS_SERVER_IP}
    clusterDomain: cluster.local.
    failSwapOn: false
    authentication:
      anonymous:
        enabled: true
    EOF

    cat <<EOF >/usr/lib/systemd/system/kubelet.service
    [Unit]
    Description=Kubernetes Kubelet
    After=docker.service
    Requires=docker.service

    [Service]
    EnvironmentFile=/opt/kubernetes/cfg/kubelet
    ExecStart=/opt/kubernetes/bin/kubelet \$KUBELET_OPTS
    Restart=on-failure
    KillMode=process

    [Install]
    WantedBy=multi-user.target
    EOF

    # start kubelet
    systemctl daemon-reload
    systemctl enable kubelet
    systemctl restart kubelet
    [root@vm6 node]# sh kubelet.sh 192.168.200.6

Run the same steps on vm7.

Script note: --cert-dir=/opt/kubernetes/ssl/kubelet is the directory where the certificates that the apiserver issues to the kubelet are stored.

The kubelet process is now running on vm6. It connects to the apiserver and asks it to issue a certificate. On the master node we can see the requests:

    [root@vm3 kubeconfig]# kubectl get csr
    NAME                                                   AGE   REQUESTOR           CONDITION
    node-csr-FIQxgA9RxLElyEtd011fiGslJOcJxd0t7Mp0O5wstvs   74s   kubelet-bootstrap   Pending
    node-csr-hUnG5TD6op9rqnNXgK-51CG_6VVQ93444UsFTw2akrk   13s   kubelet-bootstrap   Pending

The node must now be approved on the master before it can join the cluster:

    [root@vm3 kubeconfig]# kubectl certificate approve node-csr-FIQxgA9RxLElyEtd011fiGslJOcJxd0t7Mp0O5wstvs
    certificatesigningrequest.certificates.k8s.io/node-csr-FIQxgA9RxLElyEtd011fiGslJOcJxd0t7Mp0O5wstvs approved
    [root@vm3 kubeconfig]# kubectl get csr
    NAME                                                   AGE    REQUESTOR           CONDITION
    node-csr-FIQxgA9RxLElyEtd011fiGslJOcJxd0t7Mp0O5wstvs   5m3s   kubelet-bootstrap   Approved,Issued
    node-csr-hUnG5TD6op9rqnNXgK-51CG_6VVQ93444UsFTw2akrk   4m2s   kubelet-bootstrap   Pending

Checking again shows that this node has been approved to join.

Note: at one point kubectl get csr returned no resources:

    [root@vm3 ~]# kubectl get csr
    No resources found.

The exact cause is unknown. The workaround was to log in to the node, delete the certificate files, and restart the kubelet:

    [root@vm7 kubelet]# pwd
    /opt/kubernetes/ssl/kubelet
    [root@vm7 kubelet]# ll
    total 12
    -rw-------. 1 root root 1261 Oct 19 04:16 kubelet-client-2020-10-19-04-16-56.pem
    lrwxrwxrwx. 1 root root   66 Oct 19 04:16 kubelet-client-current.pem -> /opt/kubernetes/ssl/kubelet/kubelet-client-2020-10-19-04-16-56.pem
    -rw-r--r--. 1 root root 2132 Oct 19 02:29 kubelet.crt
    -rw-------. 1 root root 1675 Oct 19 02:29 kubelet.key
    [root@vm7 kubelet]# rm -f *
    [root@vm7 kubelet]# systemctl restart kubelet

After that, the requests show up again:

    [root@vm3 cfg]# kubectl get csr
    NAME                                                   AGE   REQUESTOR           CONDITION
    node-csr-CW2EbDoZiYnqn9TBouCMNOzcTR0VVi1Gn75p7C1wloY   1s    kubelet-bootstrap   Pending
    node-csr-aWZLSZAE1TT3lHGHUlkZYKRqbuCjmHlHGC0I3ITfanA   60s   kubelet-bootstrap   Pending
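When several nodes register at once, approving requests one by one gets tedious. The sketch below filters the names of pending requests out of `kubectl get csr` output so they can be approved in one go; `pending_csrs` is a hypothetical helper, and it assumes the four-column layout shown in the listings above.

```shell
#!/bin/bash
# Sketch: list the names of CSRs whose CONDITION column is exactly "Pending".
# pending_csrs is a hypothetical helper; it reads `kubectl get csr` output on stdin.
pending_csrs() {
  # Skip the header row, then print column 1 when the last column is "Pending".
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Usage on the master (not executed here; xargs -r is the GNU option that
# skips the command when there is no input):
#   kubectl get csr | pending_csrs | xargs -r kubectl certificate approve
```

Approving everything that is pending is only reasonable in a lab like this one, where every requester is a node you just started yourself.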

kube-proxy deployment:

    [root@vm6 node]# cat proxy.sh
    #!/bin/bash

    NODE_ADDRESS=$1

    # kube-proxy options file
    cat <<EOF >/opt/kubernetes/cfg/kube-proxy
    KUBE_PROXY_OPTS="--logtostderr=true \\
    --v=4 \\
    --hostname-override=${NODE_ADDRESS} \\
    --cluster-cidr=10.0.0.0/24 \\
    --proxy-mode=ipvs \\
    --kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig"
    EOF

    cat <<EOF >/usr/lib/systemd/system/kube-proxy.service
    [Unit]
    Description=Kubernetes Proxy
    After=network.target

    [Service]
    EnvironmentFile=-/opt/kubernetes/cfg/kube-proxy
    ExecStart=/opt/kubernetes/bin/kube-proxy \$KUBE_PROXY_OPTS
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    EOF

    systemctl daemon-reload
    systemctl enable kube-proxy
    systemctl restart kube-proxy
    [root@vm6 node]# sh proxy.sh 192.168.200.6

Repeat the same steps on 192.168.200.7. Finally, kubectl get node on the master shows both nodes:

    [root@vm3 ~]# kubectl get node
    NAME            STATUS   ROLES    AGE     VERSION
    192.168.200.6   Ready    <none>   6h53m   v1.12.3
    192.168.200.7   Ready    <none>   5h3m    v1.12.3

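11> Scaling out the Master

A master node runs three k8s components:

kube-apiserver

kube-scheduler

kube-controller-manager

scheduler and controller-manager have built-in leader election (--leader-elect) and need no extra high-availability setup.

The apiserver is an HTTP service, so it is the only component that has to be made highly available. Until now there was a single apiserver and every node connected to its IP directly. Once the apiserver is highly available, nodes connect to the IP of the load balancer instead.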

Extending vm4 into a master:

    [root@vm3 ~]# scp -r /opt/kubernetes root@192.168.200.4:/opt/
    root@192.168.200.4's password:
    token.csv                   100%   84    36.1KB/s   00:00
    kube-apiserver              100%  929   359.2KB/s   00:00
    kube-controller-manager     100%  483   300.5KB/s   00:00
    kube-scheduler              100%   94    31.8KB/s   00:00
    kube-apiserver              100%  184MB  11.2MB/s   00:16
    kube-scheduler              100%   55MB   5.6MB/s   00:09
    kube-controller-manager     100%  155MB   7.6MB/s   00:20
    kubectl                     100%   55MB   6.8MB/s   00:07
    ca-key.pem                  100% 1675   706.4KB/s   00:00
    ca.pem                      100% 1359   331.5KB/s   00:00
    server-key.pem              100% 1679   231.1KB/s   00:00
    server.pem                  100% 1667   149.8KB/s   00:00
    [root@vm3 ~]# scp /usr/lib/systemd/system/kube-* root@192.168.200.4:/usr/lib/systemd/system/
    root@192.168.200.4's password:
    kube-apiserver.service             100%  282   115.7KB/s   00:00
    kube-controller-manager.service    100%  317    74.6KB/s   00:00
    kube-scheduler.service

On vm4, the only change needed is to set the IP addresses in cfg/kube-apiserver (--bind-address and --advertise-address) to 192.168.200.4:

    [root@vm4 cfg]# systemctl daemon-reload
    [root@vm4 cfg]# systemctl enable kube-apiserver
    Created symlink from /etc/systemd/system/multi-user.target.wants/kube-apiserver.service to /usr/lib/systemd/system/kube-apiserver.service.
    [root@vm4 cfg]# systemctl restart kube-apiserver

QA:

1. Oct 23 05:17:36 vm6 kubelet: I1023 17:17:36.364778 99526 bootstrap.go:235] Failed to connect to apiserver: the server has asked for the client to provide credentials

The cause was that kubeconfig.sh hard-coded the token when generating bootstrap.kubeconfig. The token must be read from the file produced by token.sh instead. Fix kubeconfig.sh and run it again, redistribute bootstrap.kubeconfig and kube-proxy.kubeconfig to the node machines, and restart the kubelet.
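A quick consistency check can catch this mismatch before the files are distributed. The following is a sketch under assumptions: `bootstrap_token_matches` is a hypothetical helper, and it relies on bootstrap.kubeconfig containing a `token: <value>` line, which is how `kubectl config set-credentials --token` stores the credential.

```shell
#!/bin/bash
# Sketch: succeed only if the token in bootstrap.kubeconfig equals the first
# column of token.csv. bootstrap_token_matches is a hypothetical helper.
bootstrap_token_matches() {
  local token_csv=$1 kubeconfig=$2 expected actual
  # First CSV column of the first line is the token the apiserver accepts.
  expected=$(cut -d, -f1 "$token_csv" | head -n 1)
  # The kubeconfig stores the credential as "token: <value>" under the user entry.
  actual=$(awk '$1 == "token:" { print $2; exit }' "$kubeconfig")
  [ -n "$expected" ] && [ "$expected" = "$actual" ]
}

# Usage on the master (not executed here):
#   bootstrap_token_matches /opt/kubernetes/cfg/token.csv bootstrap.kubeconfig \
#     && echo "token OK" || echo "token mismatch: rerun kubeconfig.sh"
```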

2. kubectl get node still returns nothing, and the log keeps reporting:

    Feb 22 13:12:04 vm3 kube-scheduler: E0223 02:12:04.209233 8047 reflector.go:134] k8s.io/client-go/informers/factory.go:131: Failed to list *v1.PersistentVolumeClaim: Get http://192.168.200.3:8080/api/v1/persistentvolumeclaims?limit=500&resourceVersion=0: dial tcp 192.168.200.3:8080: connect: connection refused
    Feb 22 13:12:04 vm3 kube-scheduler: I0223 02:12:04.209556 8047 reflector.go:169] Listing and watching *v1.Service from k8s.io/client-go/informers/factory.go:131
    Feb 22 13:12:04 vm3 kube-scheduler: E0223 02:12:04.209806 8047 reflector.go:134] k8s.io/client-go/informers/factory.go:131: Failed to list *v1.Service: Get http://192.168.200.3:8080/api/v1/services?limit=500&resourceVersion=0: dial tcp 192.168.200.3:8080: connect: connection refused

The apiserver's insecure port 8080 listens only on 127.0.0.1 by default, so connections to 192.168.200.3:8080 are refused. Log in to the master host and edit the kube-controller-manager and kube-scheduler configuration files.

Change the master IP in both files to 127.0.0.1, then restart the two components.
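The edit can be applied to both files with one small function. This is a sketch only: `point_master_to_localhost` is a hypothetical helper, and it assumes the option appears as `--master=<ip>:8080`, as written by scheduler.sh and controller-manager.sh.

```shell
#!/bin/bash
# Sketch: rewrite --master=<ip>:8080 to --master=127.0.0.1:8080 in a config file.
# point_master_to_localhost is a hypothetical helper, not part of the original scripts.
point_master_to_localhost() {
  local cfg=$1
  # Keep a .bak copy so the change can be reverted.
  sed -i.bak -E 's#--master=[^: ]+:8080#--master=127.0.0.1:8080#' "$cfg"
}

# Usage on the master (not executed here):
#   point_master_to_localhost /opt/kubernetes/cfg/kube-scheduler
#   point_master_to_localhost /opt/kubernetes/cfg/kube-controller-manager
#   systemctl restart kube-scheduler kube-controller-manager
```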

3. flanneld reports an error when configured on a node

    Feb 24 03:42:53 vm7 flanneld: timed out
    Feb 24 03:42:53 vm7 flanneld: E0224 03:42:53.786685 4262 main.go:382] Couldn't fetch network config: invalid character '?' looking for beginning of object key string
    Feb 24 03:42:54 vm7 flanneld: timed out
    Feb 24 03:42:54 vm7 flanneld: E0224 03:42:54.792365 4262 main.go:382] Couldn't fetch network config: invalid character '?' looking for beginning of object key string
    Feb 24 03:42:55 vm7 flanneld: timed out
    Feb 24 03:42:55 vm7 flanneld: E0224 03:42:55.796666 4262 main.go:382] Couldn't fetch network config: invalid character '?' looking for beginning of object key string
    Feb 24 03:42:56 vm7 flanneld: timed out
    Feb 24 03:42:56 vm7 flanneld: E0224 03:42:56.801973 4262 main.go:382] Couldn't fetch network config: invalid character '?' looking for beginning of object key string
    Feb 24 03:42:57 vm7 flanneld: timed out
    Feb 24 03:42:57 vm7 flanneld: E0224 03:42:57.806036 4262 main.go:382] Couldn't fetch network config: invalid character '?' looking for beginning of object key string

The cause is that the "Network" key below was written with Chinese (full-width) double quotes, which is not valid JSON. Write the value again with ASCII quotes, as in step 8.2 (writing the allocated subnet range to the etcd cluster for flannel to use).

    [root@vm3 ~]# etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.200.3:2379,https://192.168.200.4:2379,https://192.168.200.5:2379" get /coreos.com/network/config
    {“Network”:"172.17.0.0/16","Backend":{"Type":"vxlan"}}
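Typographic quotes are hard to spot by eye, so a guard before the etcdctl set call can help. The sketch below is not a JSON validator; `is_plain_ascii` is a hypothetical helper that merely rejects any string containing bytes outside printable ASCII, which is enough to catch pasted full-width or curly quotes.

```shell
#!/bin/bash
# Sketch: succeed only when the argument consists solely of printable ASCII.
# is_plain_ascii is a hypothetical helper; it does not check JSON syntax.
is_plain_ascii() {
  local leftover
  # Delete every printable ASCII byte (space through tilde); anything left over
  # is a control character or part of a multi-byte character such as a curly quote.
  leftover=$(printf '%s' "$1" | LC_ALL=C tr -d ' -~')
  [ -z "$leftover" ]
}

# Usage before writing the flannel config (not executed here):
#   cfg='{"Network":"172.17.0.0/16","Backend":{"Type":"vxlan"}}'
#   is_plain_ascii "$cfg" || { echo "non-ASCII character in config" >&2; exit 1; }
```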

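4. After systemctl start kubelet, running kubectl get csr on the master node returned no results.

Solution:

Log in to the node and delete the certificates that the apiserver previously issued to the kubelet, then restart the kubelet.

[root@vm6 kubelet]# pwd

/opt/kubernetes/ssl/kubelet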

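5. Certificates:

1. etcd and flanneld share one set of certificates, the ones generated for etcd (etcd-cert.sh)

2. kube-apiserver, kube-scheduler, kube-controller-manager, kubelet, and kube-proxy use the certificates generated by k8s-cert.sh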
