ML theory is absolutely unhinged!!!!!

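From calculus we know the following.

For a $C^2$ function, the first-order Taylor expansion with Peano remainder reads

$$f(w') = f(w) + \langle \nabla f(w), w' - w \rangle + o(\|w' - w\|)$$

This says that as long as $w'$ is close enough to $w$, we can approximate $f(w')$ by $f(w) + \langle \nabla f(w), w' - w \rangle$.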

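Now we want to quantify this approximation, in order to establish the convergence of gradient descent (GD) and its rate. So consider the Lagrange form of the remainder instead:

$$f(w') = f(w) + \langle \nabla f(w), w' - w \rangle + \frac{g(\xi)}{2}\|w' - w\|^2$$

Let us pin down the properties of $g$.

Gradient descent runs for many rounds, so many different pairs $w, w'$ occur, and the property has to hold at every location.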

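Definition (smoothness assumption). $\exists L$ s.t. $\forall w, w'$, $|g(\xi)| \le L$. In other words,

$$|f(w') - f(w) - \langle \nabla f(w), w' - w \rangle| \le \frac{L}{2}\|w' - w\|^2$$

This assumption is very natural. It is slightly stronger than merely being $C^2$; on a bounded closed set every $C^2$ function satisfies it, because its continuous Hessian is bounded there.

Smoothness says that at every point the function is bounded by a quadratic. From the viewpoint of the gradient, this is equivalent to the gradient being Lipschitz continuous; from the viewpoint of the Hessian, it is equivalent to the norm of the Hessian being bounded.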

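Proposition. The gradient is $L$-Lipschitz continuous if and only if $\|\nabla^2 f(x)\| \le L$ for all $x$, where $\|\nabla^2 f(x)\|$ denotes the Euclidean (spectral) norm of the Hessian $H = \nabla^2 f(x)$, i.e. $\max_{\|v\|=1}\|Hv\| = |\lambda|_{\max}$. Here $L$-Lipschitz continuity of the gradient means

$$\|\nabla f(w) - \nabla f(w')\| \le L\|w - w'\|$$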

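Proof.

$\Leftarrow$:

$$\begin{aligned}
\|\nabla f(w') - \nabla f(w)\| &= \left\|\int_0^1 \nabla^2 f(w + \tau(w' - w))(w' - w)\,d\tau\right\| \\
&= \left\|\int_0^1 \nabla^2 f(w + \tau(w' - w))\,d\tau \cdot (w' - w)\right\| \\
&\le \left\|\int_0^1 \nabla^2 f(w + \tau(w' - w))\,d\tau\right\| \cdot \|w' - w\| \\
&\le \int_0^1 \|\nabla^2 f(w + \tau(w' - w))\|\,d\tau \cdot \|w' - w\| \\
&\le L\|w' - w\|
\end{aligned}$$

$\Rightarrow$: writing $H = \nabla^2 f(w)$,

$$\|\nabla^2 f(w)\| = \max_{\|v\|=1}\|Hv\| = \max_{\|v\|=1}\lim_{\alpha \to 0^+}\frac{\|\nabla f(w + \alpha v) - \nabla f(w)\|}{\alpha} \le L$$

where the limit equals $\|Hv\|$ because $f$ is $C^2$, and each difference quotient is at most $L$ because $\|v\| = 1$.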
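The proposition can be sanity-checked numerically. The following is a minimal sketch, assuming a hypothetical quadratic $f(w) = \frac12 w^\top A w$ with a hand-picked symmetric $2 \times 2$ matrix `A` (not from the original post), for which the Hessian is `A` everywhere. It compares the largest observed gradient difference quotient over sampled unit directions with $|\lambda|_{\max}$.

```python
import math

# Hypothetical symmetric Hessian of the quadratic f(w) = 0.5 * w^T A w.
A = [[2.0, 1.0],
     [1.0, -3.0]]

def grad(w):
    """Gradient of f(w) = 0.5 * w^T A w, which is A @ w."""
    return [A[0][0] * w[0] + A[0][1] * w[1],
            A[1][0] * w[0] + A[1][1] * w[1]]

def norm(v):
    return math.hypot(v[0], v[1])

def spectral_norm_sym2(M):
    """max |eigenvalue| of a symmetric 2x2 matrix, from the closed form."""
    a, b, d = M[0][0], M[0][1], M[1][1]
    mean = (a + d) / 2.0
    radius = math.sqrt(((a - d) / 2.0) ** 2 + b * b)
    return max(abs(mean + radius), abs(mean - radius))

L = spectral_norm_sym2(A)

# Sample unit directions v and measure ||grad(w + v) - grad(w)|| / ||v||.
w = [0.3, -0.7]
g0 = grad(w)
ratios = []
for k in range(360):
    theta = 2.0 * math.pi * k / 360
    v = [math.cos(theta), math.sin(theta)]
    g1 = grad([w[0] + v[0], w[1] + v[1]])
    ratios.append(norm([g1[0] - g0[0], g1[1] - g0[1]]) / norm(v))

max_ratio = max(ratios)
print(f"|lambda|_max = {L:.4f}, largest sampled ratio = {max_ratio:.4f}")
```

The sampled ratio never exceeds $|\lambda|_{\max}$ and gets close to it when a sampled direction nearly aligns with the dominant eigenvector.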

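Proposition. $L$-smoothness is equivalent to the gradient being $L$-Lipschitz continuous.

Proof.

$\Leftarrow$:

$$\begin{aligned}
f(w') &= f(w) + \int_0^1 \langle \nabla f(w + \tau(w' - w)), w' - w \rangle\,d\tau \\
&= f(w) + \langle \nabla f(w), w' - w \rangle + \int_0^1 \langle \nabla f(w + \tau(w' - w)) - \nabla f(w), w' - w \rangle\,d\tau \\
&\le f(w) + \langle \nabla f(w), w' - w \rangle + \int_0^1 \|\nabla f(w + \tau(w' - w)) - \nabla f(w)\| \cdot \|w' - w\|\,d\tau && \text{(Cauchy-Schwarz)} \\
&\le f(w) + \langle \nabla f(w), w' - w \rangle + \int_0^1 L\tau\|w' - w\| \cdot \|w' - w\|\,d\tau \\
&= f(w) + \langle \nabla f(w), w' - w \rangle + \frac{L}{2}\|w' - w\|^2
\end{aligned}$$

The matching lower bound follows in the same way, using $\langle a, b \rangle \ge -\|a\|\|b\|$.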

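$\Rightarrow$: consider the Taylor expansion of $f$ with Lagrange remainder,

$$f(w') = f(w) + \langle \nabla f(w), w' - w \rangle + \frac12 \langle \nabla^2 f(\xi)(w' - w), w' - w \rangle$$

$$|f(w') - f(w) - \langle \nabla f(w), w' - w \rangle| = \frac12 |\langle \nabla^2 f(\xi)(w' - w), w' - w \rangle| \le \frac{L}{2}\|w' - w\|^2$$

Let $w' = w + tv$ with $\|v\| = 1$. Then, for some $\xi_t$ on the segment between $w$ and $w + tv$,

$$|\langle \nabla^2 f(\xi_t)v, v \rangle| \le L$$

Let $t \to 0^+$. Since $f$ is $C^2$ and $\xi_t \to w$, we get

$$|\langle \nabla^2 f(w)v, v \rangle| \le L$$

Note that $\nabla^2 f(w)$ is a self-adjoint matrix, so

$$\max_{\|v\|=1}\|\nabla^2 f(w)v\|_2 = \max_{\|v\|=1}|\langle \nabla^2 f(w)v, v \rangle| = |\lambda|_{\max}$$

By the previous proposition, the claim follows.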
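As a concrete instance of the equivalence, here is a small sketch that checks both characterizations on a grid for the softplus function $f(x) = \log(1 + e^x)$. The choice of function, the grid, and the constant $L = 1/4$ (the maximum of $f''(x) = \sigma(x)(1 - \sigma(x))$) are illustrative assumptions, not part of the original argument.

```python
import math

def f(x):
    """Softplus log(1 + e^x), computed in a numerically stable way."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def df(x):
    """Derivative of softplus, i.e. the sigmoid function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

L = 0.25  # sup of f''(x) = sigmoid(x) * (1 - sigmoid(x)), attained at x = 0

points = [i * 0.5 for i in range(-20, 21)]  # grid on [-10, 10]
worst_gap = 0.0    # max of |Taylor remainder| / ((L/2) * (w' - w)^2)
worst_slope = 0.0  # max of |f'(w') - f'(w)| / |w' - w|
for w in points:
    for w2 in points:
        if w == w2:
            continue
        remainder = f(w2) - f(w) - df(w) * (w2 - w)
        worst_gap = max(worst_gap, abs(remainder) / (0.5 * L * (w2 - w) ** 2))
        worst_slope = max(worst_slope, abs(df(w2) - df(w)) / abs(w2 - w))

print(f"worst remainder ratio = {worst_gap:.4f} (should not exceed 1)")
print(f"worst gradient slope  = {worst_slope:.4f} (should not exceed L = {L})")
```

Both quantities stay below their limits and come closest to them for pairs of points near $x = 0$, where the curvature is largest.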

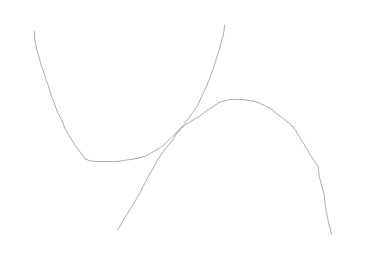

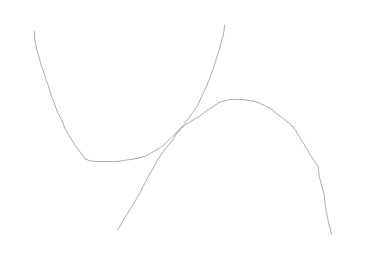

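Back to gradient descent. For a smooth $f$ we have

$$\begin{cases}
f(w') \le f(w) + \langle \nabla f(w), w' - w \rangle + \frac{L}{2}\|w' - w\|^2 \\
f(w') \ge f(w) + \langle \nabla f(w), w' - w \rangle - \frac{L}{2}\|w' - w\|^2
\end{cases}$$

This gives an upper and a lower bound on $f$ when moving from $w$ to some $w'$, roughly as in the rough hand-drawn sketches above (by yy).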

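The lower bound is not important; what we care about is the upper bound. When $w, w'$ are close enough, $f$ always decreases. Quantitatively, suppose gradient descent uses learning rate $\eta$, so that $w' = w - \eta\nabla f(w)$. Then

$$\begin{aligned}
f(w') - f(w) &\le \langle \nabla f(w), w' - w \rangle + \frac{L}{2}\|w' - w\|^2 \\
&= \langle \nabla f(w), -\eta\nabla f(w) \rangle + \frac{L\eta^2}{2}\|\nabla f(w)\|^2 \\
&= -\eta\left(1 - \frac{L\eta}{2}\right)\|\nabla f(w)\|^2
\end{aligned}$$

So whenever $\eta < \frac{2}{L}$ the right-hand side is negative (as long as $\nabla f(w) \ne 0$), which guarantees that every gradient descent step makes progress.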
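The threshold $\eta < 2/L$ can be seen in a toy experiment. This is a minimal sketch on a hypothetical quadratic $f(w) = \frac12(L w_1^2 + w_2^2)$ with $L = 4$; the function, the start point, and the two learning rates are assumptions chosen for illustration. It also checks the per-step decrease guaranteed by the inequality above.

```python
L = 4.0  # largest curvature of the hypothetical quadratic below

def f(w):
    return 0.5 * (L * w[0] ** 2 + 1.0 * w[1] ** 2)

def grad(w):
    return [L * w[0], 1.0 * w[1]]

def run_gd(eta, steps, w0=(1.0, 1.0)):
    """Run GD and report f along the path plus whether the descent bound held."""
    w = list(w0)
    values = [f(w)]
    bound_ok = True
    for _ in range(steps):
        g = grad(w)
        g2 = g[0] ** 2 + g[1] ** 2
        w_next = [w[0] - eta * g[0], w[1] - eta * g[1]]
        # Smoothness bound: f(w') - f(w) <= -eta * (1 - L*eta/2) * ||grad f(w)||^2
        guaranteed = -eta * (1.0 - L * eta / 2.0) * g2
        if f(w_next) - f(w) > guaranteed + 1e-9 * (1.0 + g2):
            bound_ok = False
        w = w_next
        values.append(f(w))
    return values, bound_ok

safe_values, safe_ok = run_gd(eta=0.4, steps=20)      # eta < 2/L = 0.5
unsafe_values, unsafe_ok = run_gd(eta=0.6, steps=20)  # eta > 2/L

print("eta=0.4:", safe_values[0], "->", safe_values[-1])
print("eta=0.6:", unsafe_values[0], "->", unsafe_values[-1])
```

With $\eta = 0.4$ the objective shrinks at every step, while with $\eta = 0.6$ the steep coordinate overshoots and the objective grows.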

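But this assumption alone is still not enough. First, gradient descent may get stuck in a local optimum. Second, although every step makes progress, there is no guarantee on the global convergence speed. Consider $f(x) = \mathrm{sigmoid}(x)$: starting from a very large $x$ and moving toward the middle, the gradient is exponentially small in $x$, so progress is exponentially slow. This calls for stronger global properties of the function.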
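To make the sigmoid example tangible, the sketch below counts how many GD steps it takes to move down by one unit from a starting point `x0`. The learning rate `eta = 1.0` and the starting points are assumptions for illustration; `eta = 1.0` is well inside the safe range, since $|f''| \le 1/(6\sqrt{3}) \approx 0.096$ for the sigmoid.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def dsigmoid(x):
    s = sigmoid(x)
    return s * (1.0 - s)

def steps_to_descend_one_unit(x0, eta=1.0, max_steps=10 ** 6):
    """Count GD steps on f(x) = sigmoid(x) needed to get from x0 down to x0 - 1."""
    x = x0
    for step in range(1, max_steps + 1):
        x -= eta * dsigmoid(x)
        if x <= x0 - 1.0:
            return step
    return max_steps  # cap reached; not expected for the x0 values used here

counts = {x0: steps_to_descend_one_unit(x0) for x0 in (4.0, 6.0, 8.0)}
for x0, n in counts.items():
    print(f"x0 = {x0}: {n} steps to reach x0 - 1")
```

Each increase of `x0` by 2 multiplies the step count by roughly $e^2$, even though every individual step decreases $f$.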

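Definition. A function $f$ is convex if $f(tx_1 + (1 - t)x_2) \le tf(x_1) + (1 - t)f(x_2)$ for all $x_1, x_2$ and all $t \in [0, 1]$.

It has several equivalent definitions, which are covered in calculus.

Proposition. If $f$ is a $C^2$ function, then $f$ is convex if and only if $\nabla^2 f(w)$ is positive semidefinite for every $w$.

In other words, smoothness gives a bound on $|\lambda|_{\max}$, while convexity fixes the sign of $\lambda_{\min}$.

Convexity is what guarantees a convergence rate.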
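The link between the sign of $\lambda_{\min}$ and the defining inequality can be checked on small examples. This is a sketch under assumptions: two hypothetical quadratics $f(w) = \frac12 w^\top M w$, one with a positive semidefinite `M` and one indefinite, tested on a finite grid (which can only find violations, not prove convexity).

```python
import math

def eigenvalues_sym2(M):
    """(lambda_min, lambda_max) of a symmetric 2x2 matrix."""
    a, b, d = M[0][0], M[0][1], M[1][1]
    mean = (a + d) / 2.0
    radius = math.sqrt(((a - d) / 2.0) ** 2 + b * b)
    return mean - radius, mean + radius

def quad(M, w):
    """f(w) = 0.5 * w^T M w, whose Hessian is M everywhere."""
    return 0.5 * (M[0][0] * w[0] ** 2 + 2.0 * M[0][1] * w[0] * w[1] + M[1][1] * w[1] ** 2)

def violates_convexity(M, points, ts):
    """Search the grid for f(t*x1 + (1-t)*x2) > t*f(x1) + (1-t)*f(x2)."""
    for x1 in points:
        for x2 in points:
            for t in ts:
                mid = [t * x1[0] + (1 - t) * x2[0], t * x1[1] + (1 - t) * x2[1]]
                if quad(M, mid) > t * quad(M, x1) + (1 - t) * quad(M, x2) + 1e-12:
                    return True
    return False

grid = (-2.0, -1.0, 0.0, 1.0, 2.0)
points = [[a, b] for a in grid for b in grid]
ts = [0.0, 0.25, 0.5, 0.75, 1.0]

psd = [[2.0, 1.0], [1.0, 1.0]]          # both eigenvalues positive
indefinite = [[2.0, 1.0], [1.0, -3.0]]  # one negative eigenvalue

psd_violation = violates_convexity(psd, points, ts)
indefinite_violation = violates_convexity(indefinite, points, ts)
print(eigenvalues_sym2(psd), psd_violation)
print(eigenvalues_sym2(indefinite), indefinite_violation)
```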

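Proposition. Let $f$ be convex and $L$-smooth, and let $w^* = \arg\min_w f(w)$. After $t$ rounds of gradient descent with learning rate $\eta \le \frac{1}{L}$,

$$f(w_t) \le f(w^*) + \frac{1}{2\eta t}\|w_0 - w^*\|^2$$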

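Proof. We use a telescoping argument.

$$\begin{aligned}
f(w_{i+1}) &\le f(w_i) - \eta\left(1 - \frac{L\eta}{2}\right)\|\nabla f(w_i)\|^2 && \text{(smoothness)} \\
&\le f(w_i) - \frac{\eta}{2}\|\nabla f(w_i)\|^2 \\
&\le f(w^*) + \langle \nabla f(w_i), w_i - w^* \rangle - \frac{\eta}{2}\|\nabla f(w_i)\|^2 && \text{(convexity)} \\
&= f(w^*) - \frac{1}{\eta}\langle w_{i+1} - w_i, w_i - w^* \rangle - \frac{1}{2\eta}\|w_i - w_{i+1}\|^2 && \text{(GD update)} \\
&= f(w^*) + \frac{1}{2\eta}\|w_i - w^*\|^2 - \frac{1}{2\eta}\left(\|w_i - w^*\|^2 - 2\langle w_i - w_{i+1}, w_i - w^* \rangle + \|w_i - w_{i+1}\|^2\right) && \text{(completing the square)} \\
&= f(w^*) + \frac{1}{2\eta}\|w_i - w^*\|^2 - \frac{1}{2\eta}\|(w_i - w_{i+1}) - (w_i - w^*)\|^2 \\
&= f(w^*) + \frac{1}{2\eta}\left(\|w_i - w^*\|^2 - \|w_{i+1} - w^*\|^2\right)
\end{aligned}$$

$$\sum_{i=0}^{t-1}\left(f(w_{i+1}) - f(w^*)\right) \le \frac{1}{2\eta}\left(\|w_0 - w^*\|^2 - \|w_t - w^*\|^2\right) \le \frac{1}{2\eta}\|w_0 - w^*\|^2$$

Since $f(w_i)$ is non-increasing, $f(w_t)$ is no larger than the average of $f(w_1), \dots, f(w_t)$, hence

$$f(w_t) \le f(w^*) + \frac{1}{2\eta t}\|w_0 - w^*\|^2$$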

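Taking $\eta = \frac{1}{L}$ and a total number of training rounds

$$T = \frac{L\|w_0 - w^*\|^2}{2\epsilon}$$

yields $f(w_T) \le f(w^*) + \epsilon$.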
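The bound and the resulting round count can be checked on a toy problem. The sketch below assumes a hypothetical convex quadratic $f(w) = \frac12(L w_1^2 + \mu w_2^2)$ with $L = 4$, $\mu = 0.01$, minimizer $w^* = 0$, and a hand-picked start point; none of these come from the original post.

```python
import math

L = 4.0    # smoothness constant (largest curvature)
MU = 0.01  # a nearly flat direction, so convergence is slow

def f(w):
    return 0.5 * (L * w[0] ** 2 + MU * w[1] ** 2)

def grad(w):
    return [L * w[0], MU * w[1]]

w0 = [1.0, 10.0]
dist2 = w0[0] ** 2 + w0[1] ** 2  # ||w0 - w*||^2 with w* = 0 and f(w*) = 0
eta = 1.0 / L
eps = 0.1
T = math.ceil(L * dist2 / (2.0 * eps))  # round count suggested by the bound

w = list(w0)
bound_holds = True
for t in range(1, T + 1):
    g = grad(w)
    w = [w[0] - eta * g[0], w[1] - eta * g[1]]
    # Check f(w_t) - f(w*) <= ||w0 - w*||^2 / (2 * eta * t) at every round.
    if f(w) > dist2 / (2.0 * eta * t):
        bound_holds = False

final_gap = f(w)
print(f"T = {T}, final gap = {final_gap:.2e}, bound held: {bound_holds}")
```

On this example the bound is loose: the actual gap after $T$ rounds is far below $\epsilon$, which is expected since the proposition is a worst-case guarantee.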

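Next, consider a very common technique, stochastic gradient descent (SGD). If every step computes the gradient from only a small batch of the data, convergence has to be examined again.

$$w_{t+1} = w_t - \eta G_t$$

$$\mathbb{E}[G_t] = \nabla f(w_t)$$

where

$$\nabla f(w, X, Y) = \frac{1}{N}\sum_i \nabla l(w, x_i, y_i)$$

$$G_t = \frac{1}{|S|}\sum_{i \in S} \nabla l(w_t, x_i, y_i)$$

If $S$ is chosen at random, we no longer need to care where $G_t$ comes from and can simply treat it as a random variable.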
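The unbiasedness $\mathbb{E}[G_t] = \nabla f(w_t)$ under uniformly random batches can be verified exactly on a tiny dataset by enumerating every batch. This sketch assumes a hypothetical one-parameter squared loss $l(w, x, y) = \frac12(wx - y)^2$ and made-up data; both are illustrative only.

```python
from itertools import combinations

# Made-up 1-D regression data and a fixed parameter value.
xs = [1.0, 2.0, -1.0, 0.5, 3.0]
ys = [2.0, 3.0, 0.0, 1.0, 5.0]
w = 0.7

def grad_l(w, x, y):
    """Gradient in w of the per-sample loss 0.5 * (w*x - y)^2."""
    return (w * x - y) * x

def batch_grad(w, idx):
    """Average per-sample gradient over the index collection idx."""
    return sum(grad_l(w, xs[i], ys[i]) for i in idx) / len(idx)

N = len(xs)
full_grad = batch_grad(w, range(N))

# Enumerate every batch S of size 2; a uniformly random S picks one of these.
batches = list(combinations(range(N), 2))
batch_grads = [batch_grad(w, S) for S in batches]

mean_G = sum(batch_grads) / len(batch_grads)
var_G = sum((g - full_grad) ** 2 for g in batch_grads) / len(batch_grads)

print(f"full gradient = {full_grad:.6f}")
print(f"mean of G     = {mean_G:.6f}")
print(f"Var(G)        = {var_G:.6f}")
```

The mean over all batches matches the full gradient, while the variance is strictly positive; that variance is the $\sigma^2$ that enters the SGD bound.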

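Proposition. Let $f$ be a convex, $L$-smooth function and $w^* = \arg\min_w f(w)$. After $t$ rounds of stochastic gradient descent with learning rate $\eta \le \frac{1}{L}$ and $\mathrm{Var}(G_t) \le \sigma^2$,

$$\mathbb{E}[f(\overline{w}_t)] \le f(w^*) + \frac{\|w_0 - w^*\|^2}{2t\eta} + \eta\sigma^2$$

where $\overline{w}_t = \frac{1}{t}\sum_{i=1}^{t} w_i$.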

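Proof. The idea is to reduce to a form similar to the GD case. The first-order term is handled by linearity of expectation, the second-order term by the variance identity $\mathrm{Var}(G_i) = \mathbb{E}\|G_i\|^2 - \|\mathbb{E}G_i\|^2 = \mathbb{E}\|G_i\|^2 - \|\nabla f(w_i)\|^2$. We repeatedly convert between $G_i$ and $\nabla f(w_i)$, separating the deterministic part from the random part. The first chain is conditional on $w_i$; taking the total expectation afterwards gives the sum that follows.

$$\begin{aligned}
\mathbb{E}[f(w_{i+1})] &\le f(w_i) + \mathbb{E}\langle \nabla f(w_i), w_{i+1} - w_i \rangle + \frac{L}{2}\mathbb{E}\|w_{i+1} - w_i\|^2 && \text{(smoothness)} \\
&= f(w_i) + \langle \nabla f(w_i), \mathbb{E}(w_{i+1} - w_i) \rangle + \frac{L}{2}\mathbb{E}\|w_{i+1} - w_i\|^2 && \text{(linearity of expectation)} \\
&= f(w_i) - \eta\langle \nabla f(w_i), \nabla f(w_i) \rangle + \frac{L\eta^2}{2}\mathbb{E}\|G_i\|^2 \\
&= f(w_i) - \eta\langle \nabla f(w_i), \nabla f(w_i) \rangle + \frac{L\eta^2}{2}\left(\|\nabla f(w_i)\|^2 + \mathrm{Var}(G_i)\right) && (\mathrm{Var}(G_i) = \mathbb{E}\|G_i\|^2 - \|\nabla f(w_i)\|^2) \\
&\le f(w_i) - \eta\left(1 - \frac{L\eta}{2}\right)\|\nabla f(w_i)\|^2 + \frac{L\eta^2}{2}\sigma^2 \\
&\le f(w_i) - \frac{\eta}{2}\|\nabla f(w_i)\|^2 + \frac{\eta}{2}\sigma^2 && (\eta \le \tfrac{1}{L}) \\
&\le f(w^*) + \langle \nabla f(w_i), w_i - w^* \rangle - \frac{\eta}{2}\|\nabla f(w_i)\|^2 + \frac{\eta}{2}\sigma^2 && \text{(convexity)} \\
&\le f(w^*) - \frac{1}{\eta}\mathbb{E}\langle w_{i+1} - w_i, w_i - w^* \rangle - \frac{\eta}{2}\mathbb{E}\|G_i\|^2 + \eta\sigma^2 && (\|\nabla f(w_i)\|^2 = \mathbb{E}\|G_i\|^2 - \mathrm{Var}(G_i)) \\
&= f(w^*) + \frac{1}{2\eta}\mathbb{E}\left(\|w_i - w^*\|^2 - \|w_{i+1} - w^*\|^2\right) + \eta\sigma^2 && \text{(as in GD)}
\end{aligned}$$

$$\begin{aligned}
\mathbb{E}[f(\overline{w}_t)] - f(w^*) &= \mathbb{E}f\left(\frac{1}{t}\sum_{i=1}^{t} w_i\right) - f(w^*) \\
&\le \frac{1}{t}\mathbb{E}\left(\sum_{i=1}^{t} f(w_i)\right) - f(w^*) && \text{(Jensen's inequality)} \\
&= \frac{1}{t}\sum_{i=0}^{t-1}\left(\mathbb{E}f(w_{i+1}) - f(w^*)\right) \\
&\le \frac{1}{2\eta t}\left(\|w_0 - w^*\|^2 - \mathbb{E}\|w_t - w^*\|^2\right) + \eta\sigma^2 \\
&\le \frac{1}{2\eta t}\|w_0 - w^*\|^2 + \eta\sigma^2
\end{aligned}$$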

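Setting

$$T = \frac{2\|w_0 - w^*\|^2\sigma^2}{\epsilon^2}, \qquad \eta = \frac{\epsilon}{2\sigma^2}$$

yields $\mathbb{E}f(\overline{w}_T) \le f(w^*) + \epsilon$, provided $\epsilon$ is small enough that $\eta \le \frac{1}{L}$.

In other words, the error term $\eta\sigma^2$ does not shrink as $t$ grows, so the only way to reduce it is to lower the learning rate. As a result, where GD achieves a $\frac{1}{T}$ convergence rate, SGD only achieves $\frac{1}{\sqrt{T}}$.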
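A small simulation can illustrate the SGD bound. The sketch below is built on assumptions: the objective is the hypothetical 1-D quadratic $f(w) = \frac12 w^2$ (so $L = 1$, $w^* = 0$), the stochastic gradient is $G = w \pm \sigma$ with a fair coin flip (unbiased, variance $\sigma^2$), and the expectation is estimated by averaging over seeded runs.

```python
import random

def sgd_average_loss(eta, t, sigma, w0, rng):
    """Run t SGD steps on f(w) = 0.5 * w^2 and return f at the averaged iterate."""
    w = w0
    total = 0.0
    for _ in range(t):
        noise = sigma if rng.random() < 0.5 else -sigma
        G = w + noise            # unbiased gradient estimate with variance sigma^2
        w = w - eta * G
        total += w               # accumulates w_1, ..., w_t (w_0 is excluded)
    w_bar = total / t
    return 0.5 * w_bar ** 2

rng = random.Random(0)           # fixed seed so the run is reproducible
eta, t, sigma, w0 = 0.1, 200, 1.0, 5.0
runs = 200

empirical = sum(sgd_average_loss(eta, t, sigma, w0, rng) for _ in range(runs)) / runs
bound = w0 ** 2 / (2 * t * eta) + eta * sigma ** 2

print(f"estimated E[f(w_bar)] - f(w*) = {empirical:.4f}")
print(f"bound from the proposition    = {bound:.4f}")
```

The estimate lands well under the bound here, since a strongly convex quadratic is a much easier case than the worst case the proposition covers. The two terms of `bound` still show the trade-off: raising `eta` shrinks the first term but inflates the noise term `eta * sigma ** 2`.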
