爬虫(六)-在前程无忧投简历

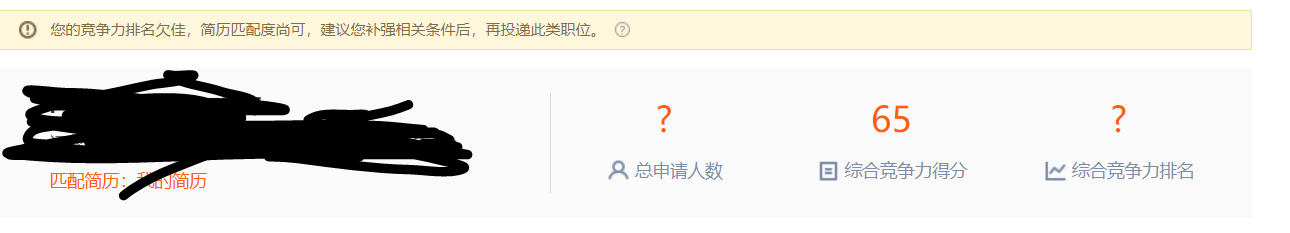

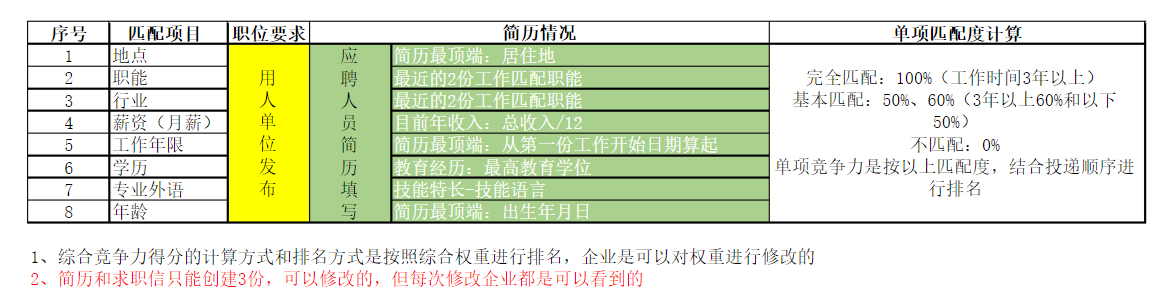

在前程无忧上投简历发现有个竞争力分析,似乎是按简历来的,想要根据评分投简历

抓取关键字搜索结果的综合竞争力得分和评语,筛选投简历

获取cookie

from selenium import webdriver from selenium.webdriver.chrome.options import Options chrome_options = Options() # chrome_options.add_argument('--headless') from time import sleep import re from lxml import etree import requests import os import json driver = webdriver.Chrome(chrome_options=chrome_options,executable_path = 'D:\python\chromedriver.exe') headers={"User-Agent":"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/73.0.3683.86 Safari/537.36"}

def get_cookie():

driver.get("https://login.51job.com/login.php?loginway=1&lang=c&url=")

sleep(2)

phone=input("输入手机号:")

driver.find_element_by_id("loginname").send_keys(phone)

driver.find_element_by_id("btn7").click()

sleep(1)

code=input("输入短信:")

driver.find_element_by_id("phonecode").send_keys(code)

driver.find_element_by_id("login_btn").click()

sleep(2)

cookies = driver.get_cookies()

with open("cookie.json", "w")as f:

f.write(json.dumps(cookies))

driver.close()

用webdriver登陆获取cookie,把cookie写入文件,取消注释可以用无头模式

前程无忧手机短信一天只能发送三条,保存cookie登陆用

#搜索职位获得页码

def get_job():

job = input("输入职位:")

url=f"https://search.51job.com/list/020000,000000,0000,00,1,99,{job},2,1.html?lang=c&stype=1&postchannel=0000&workyear=02&cotype=99°reefrom=99&jobterm=99&companysize=99&lonlat=0%2C0&radius=-1&ord_field=0&confirmdate=9&fromType=5&dibiaoid=0&address=&line=&specialarea=00&from=&welfare="

r=session.get(url,headers=headers)

r.encoding=r.apparent_encoding

tree = etree.HTML(r.text)

x = tree.xpath('//span[@class="td"]/text()')[0]

total_page = int(re.findall("(\d+)", x)[0])

href = []

for i in range(1,total_page+1):

href.append(re.sub("\d.html", f'{i}.html', url))

return href

职位结果的页码

#职位id

def get_job_code(url):

headers={"User-Agent":"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/73.0.3683.86 Safari/537.36"}

r=session.get(url,headers=headers)

tree=etree.HTML(r.text)

divs=tree.xpath('//div[@class="el"]/p/span/a/@href')

job=str(divs)

job_id=re.findall("\/(\d+).html",job)

return job_id

获取职位id

修改竞争力分析页面的id

#匹配的信息

def get_info(job_id):

href=f"https://i.51job.com/userset/bounce_window_redirect.php?jobid={job_id}&redirect_type=2"

r=session.get(href,headers=headers)

r.encoding=r.apparent_encoding

tree=etree.HTML(r.text)

pingjia=tree.xpath('//div[@class="warn w1"]//text()')[0].strip()

gongsi=[]

for i in tree.xpath('//div[@class="lf"]//text()'):

if i.strip():

gongsi.append(i.strip())

fenshu=[]

for i in tree.xpath('//ul[@class="rt"]//text()'):

if i.strip():

fenshu.append(i.strip())

url=f"https://jobs.51job.com/shanghai/{job_id}.html?s=03&t=0"

return {"公司":gongsi[1],"职位":gongsi[0],"匹配度":pingjia,fenshu[3]:fenshu[2],"链接":url,"_id":job_id}

主程序

#用cookie登陆

if not os.path.exists("cookie.json"):

get_cookie()

f=open("cookie.json","r")

cookies=json.loads(f.read())

f.close()

session = requests.Session()

for cookie in cookies:

session.cookies.set(cookie['name'], cookie['value'])

检查cookie,不存就保存

#获取所有职位id

code=[]

for i in get_job():

code=code+get_job_code(i)

职位id添加到列表

#存入Mongodb

import pymongo

client=pymongo.MongoClient("47.102.109.190",27017)

db=client["job_zhu"]

job_info=db["job_info"]

for i in code:

try:

if not job_info.find_one({"_id":i}):

info=get_info(i)

if not job_info.find_one(info):

job_info.insert_one(info)

print(info)

print("插入成功")

except:

print(code)

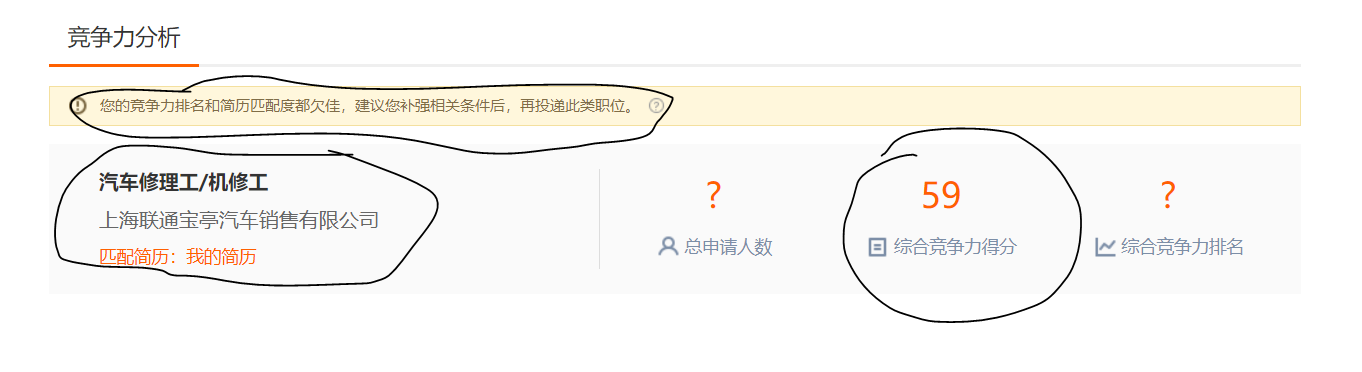

MongDB保存结果,用职位id去重

吃完饭已经有8000个职位了,找到127个匹配度好的投递

投递:

一个登陆状态的点击动作,可以用selenium

for i in job_info.find({"匹配度":{$regex:"排名很好"},"综合竞争力得分":{$gte:"80"}}): print(i) try: driver.get(i) driver.find_element_by_id("app_ck").click() sleep(2) except: pass

用cookie登陆后循环投递,查表筛选条件

from selenium import webdriver

from selenium.webdriver.chrome.options import Options

chrome_options = Options()

chrome_options.add_argument('--headless')

from time import sleep

import re

from lxml import etree

import requests

import os

import json

driver = webdriver.Chrome(chrome_options=chrome_options,executable_path = 'D:\python\chromedriver.exe')

headers={"User-Agent":"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/73.0.3683.86 Safari/537.36"}

driver.get("https://search.51job.com/list/020000,000000,0000,00,1,99,%2B,2,1.html?lang=c&stype=1&postchannel=0000&workyear=01%2C02&cotype=99°reefrom=03%2C04&jobterm=99&companysize=99&lonlat=0%2C0&radius=-1&ord_field=0&confirmdate=9&fromType=5&dibiaoid=0&address=&line=&specialarea=00&from=&welfare=")

#获取cookie

def get_cookie():

driver.get("https://login.51job.com/login.php?loginway=1&lang=c&url=")

sleep(2)

phone=input("输入手机号:")

driver.find_element_by_id("loginname").send_keys(phone)

driver.find_element_by_id("btn7").click()

sleep(1)

code=input("输入短信:")

driver.find_element_by_id("phonecode").send_keys(code)

driver.find_element_by_id("login_btn").click()

sleep(2)

cookies = driver.get_cookies()

with open("cookie.json", "w")as f:

f.write(json.dumps(cookies))

driver.close()

#搜索和页码

def get_job():

job = input("输入职位:")

url=f"https://search.51job.com/list/020000,000000,0000,00,1,99,{job},2,1.html?lang=c&stype=1&postchannel=0000&workyear=02&cotype=99°reefrom=99&jobterm=99&companysize=99&lonlat=0%2C0&radius=-1&ord_field=0&confirmdate=9&fromType=5&dibiaoid=0&address=&line=&specialarea=00&from=&welfare="

r=session.get(url,headers=headers)

r.encoding=r.apparent_encoding

tree = etree.HTML(r.text)

x = tree.xpath('//span[@class="td"]/text()')[0]

total_page = int(re.findall("(\d+)", x)[0])

href = []

for i in range(1,total_page+1):

href.append(re.sub("\d.html", f'{i}.html', url))

return href

#职位id

def get_job_code(url):

headers={"User-Agent":"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/73.0.3683.86 Safari/537.36"}

r=session.get(url,headers=headers)

tree=etree.HTML(r.text)

divs=tree.xpath('//div[@class="el"]/p/span/a/@href')

job=str(divs)

job_id=re.findall("\/(\d+).html",job)

return job_id

#匹配信息

def get_info(job_id):

href=f"https://i.51job.com/userset/bounce_window_redirect.php?jobid={job_id}&redirect_type=2"

r=session.get(href,headers=headers)

r.encoding=r.apparent_encoding

tree=etree.HTML(r.text)

pingjia=tree.xpath('//div[@class="warn w1"]//text()')[0].strip()

gongsi=[]

for i in tree.xpath('//div[@class="lf"]//text()'):

if i.strip():

gongsi.append(i.strip())

fenshu=[]

for i in tree.xpath('//ul[@class="rt"]//text()'):

if i.strip():

fenshu.append(i.strip())

url=f"https://jobs.51job.com/shanghai/{job_id}.html?s=03&t=0"

return {"公司":gongsi[1],"职位":gongsi[0],"匹配度":pingjia,fenshu[3]:fenshu[2],"链接":url,"_id":job_id}

#用cookie登陆

if not os.path.exists("cookie.json"):

get_cookie()

f=open("cookie.json","r")

cookies=json.loads(f.read())

f.close()

session = requests.Session()

for cookie in cookies:

session.cookies.set(cookie['name'], cookie['value'])

#所有职位id

code=[]

for i in get_job():

code=code+get_job_code(i)

#存入Mongo

import pymongo

client=pymongo.MongoClient("47.102.109.190",27017)

db=client["job_zhu"]

job_info=db["job_info"]

for i in code:

try:

if not job_info.find_one({"_id":i}):

info=get_info(i)

if not job_info.find_one(info):

job_info.insert_one(info)

print(info)

print("插入成功")

except:

print(code)

浙公网安备 33010602011771号

浙公网安备 33010602011771号