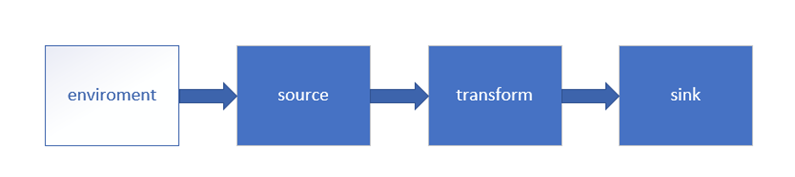

Fink| source| transform| sink

Flink 流处理Api

1. Environment

getExecutionEnvironment

创建一个执行环境,表示当前执行程序的上下文。 如果程序是独立调用的,则此方法返回本地执行环境;如果从命令行客户端调用程序以提交到集群,则此方法返回此集群的执行环境,也就是说,getExecutionEnvironment会根据查询运行的方式决定返回什么样的运行环境,是最常用的一种创建执行环境的方式。

//批处理

ExecutionEnvironment env = ExecutionEnvironment.getExecutionEnvironment();

//流处理

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

如果没有设置并行度,会以flink-conf.yaml中的配置为准,默认是1。

parallelism.default: 1

Flink会自动判断是本地环境还是远程的集群环境。

2. Source

① 从集合读取数据

② 从文件中读取数据

③ 从kafka中读取数据

引入kafka连接器的依赖

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-kafka-0.11_2.12</artifactId>

<version>1.10.1</version>

</dependency>

④ 自定义source,传入SourceFunction

代码如下:

import java.util.Properties import org.apache.flink.api.common.serialization.SimpleStringSchema import org.apache.flink.api.scala._ import org.apache.flink.streaming.api.functions.source.SourceFunction import org.apache.flink.streaming.api.scala.{DataStream, StreamExecutionEnvironment} import org.apache.flink.streaming.connectors.kafka.FlinkKafkaConsumer011 import scala.collection.immutable import scala.util.Random object SourceTest { def main(args: Array[String]): Unit = { val env: StreamExecutionEnvironment = StreamExecutionEnvironment.getExecutionEnvironment env.setParallelism(1) // 1. 从集合中读取数据 温度传感器 id 时间戳 温度 /* val stream01 = env.fromCollection(List( SensorReading("sensor_1", 1547718199, 35.80018327300259), SensorReading("sensor_6", 1547718201, 15.402984393403084), SensorReading("sensor_7", 1547718202, 6.720945201171228), SensorReading("sensor_10", 1547718205, 38.101067604893444) )) stream01.print("stream01")*/ //env.fromElements("flink", 1, 32, 3213, 0.134).print("test_java") //从不同元素中读取 // 2. 从文件中读取数据 /* val dsTream02: DataStream[String] = env.readTextFile("F:\\BI\\code\\sensor.txt") dsTream02.print("stream02")*/ // 3. 从kafka中读取数据 // 创建kafka相关的配置 val properties = new Properties() properties.setProperty("bootstrap.servers", "hadoop101:9092") properties.setProperty("group.id", "consumer-group") properties.setProperty("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer") properties.setProperty("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer") properties.setProperty("auto.offset.reset", "latest") val stream03: DataStream[String] = env.addSource(new FlinkKafkaConsumer011[String]("sensor", new SimpleStringSchema(), properties)) // 4. 自定义数据源 /*val stream04: DataStream[SensorReading] = env.addSource(new SensorSource()) stream04.print("stream04")*/ env.execute("SouceTest Start") } } // 定义数据样例类 温度传感器 case class SensorReading(id: String, timestamp: Long, temperature: Double) class SensorSource() extends SourceFunction[SensorReading]{ //定义一个flag:表示数据源是否还在正常运行 var running: Boolean = true //取消数据生成 override def cancel(): Unit = running = false //正常生成数据 override def run(sourceContext: SourceFunction.SourceContext[SensorReading]): Unit = { // 创建一个随机数发生器 val rand: Random = new Random() // 随机初始换生成10个传感器的温度数据,之后在它基础随机波动生成流数据 var curTemp: immutable.IndexedSeq[(String, Double)] = 1.to(10).map( i => ("sensor_" + i, 60 + rand.nextGaussian() * 20) //nextGaussian标准高斯随机分布--正态曲线 ) // 无限循环生成流数据,除非被cancel while (running){ //更新值的温度 在前一次温度的基础上更新温度值 curTemp = curTemp.map( t => (t._1, t._2 + rand.nextGaussian()) ) // 获取当前的时间戳 val curTime: Long = System.currentTimeMillis() // 包装成SensorReading,输出 curTemp.foreach( t => sourceContext.collect(SensorReading(t._1, curTime, t._2)) ) // 间隔100ms Thread.sleep(100) } } }

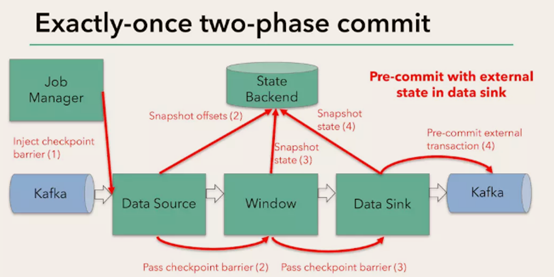

Flink+kafka是如何实现exactly-once语义的

Flink通过checkpoint来保存数据是否处理完成的状态;

有JobManager协调各个TaskManager进行checkpoint存储,checkpoint保存在 StateBackend中,默认StateBackend是内存级的,也可以改为文件级的进行持久化保存。

执行过程实际上是一个两段式提交,每个算子执行完成,会进行“预提交”,直到执行完sink操作,会发起“确认提交”,如果执行失败,预提交会放弃掉。

如果宕机需要通过StateBackend进行恢复,只能恢复所有确认提交的操作。

Spark中要想实现有状态的,需要使用updateBykey或者借助redis;

而Fink是把它记录在State Bachend,只要是经过keyBy等处理之后结果会记录在State Bachend(已处理未提交; 如果是处理完了就是已提交状态;),

它还会记录另外一种状态值:keyState,比如keyBy累积的结果;

StateBachend如果不想存储在内存中,也可以存储在fs文件中或者HDFS中; IDEA的工具只支持memory内存式存储,一旦重启就没了;部署到linux中就支持存储在文件中了;

Kakfa的自动提交:“enable.auto.commit”,比如从kafka出来后到sparkStreaming之后,一进来consumer会帮你自动提交,如果在处理过程中,到最后有一个没有写出去(比如写到redis、ES),虽然处理失败了但kafka的偏移量已经发生改变;所以移偏移量的时机很重要;

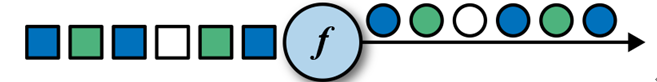

3. Transform 转换算子

map

val streamMap = stream.map { x => x * 2 }

object StartupApp { def main(args: Array[String]): Unit = { val env: StreamExecutionEnvironment = StreamExecutionEnvironment.getExecutionEnvironment val myKafkaConsumer: FlinkKafkaConsumer011[String] = MyKafkaUtil.getConsumer("GMALL_STARTUP") val dstream: DataStream[String] = env.addSource(myKafkaConsumer) //dstream.print().setParallelism(1) 测试从kafka中获得数据是否打通到了flink中 //将json转换成json对象 val startupLogDStream: DataStream[StartupLog] = dstream.map { jsonString => JSON.parseObject(jsonString, classOf[StartupLog]) } //需求一 相同渠道的值进行累加 val sumDStream: DataStream[(String, Int)] = startupLogDStream.map { startuplog => (startuplog.ch, 1) }.keyBy(0)

.reduce { (startuplogCount1, startuplogCount2) => val newCount: Int = startuplogCount1._2 + startuplogCount2._2 (startuplogCount1._1, newCount) } //val sumDStream: DataStream[(String, Int)] = startupLogDStream.map{startuplog => (startuplog.ch,1)}.keyBy(0).sum(1) //sumDStream.print() env.execute() } }

flatMap

flatMap的函数签名:def flatMap[A,B](as: List[A])(f: A ⇒ List[B]): List[B] 例如: flatMap(List(1,2,3))(i ⇒ List(i,i)) 结果是List(1,1,2,2,3,3), 而List("a b", "c d").flatMap(line ⇒ line.split(" ")) 结果是List(a, b, c, d) val streamFlatMap = stream.flatMap{ x => x.split(" ") }

filter

val streamFilter = stream.filter{ x => x == 1 }

KeyBy

DataStream → KeyedStream:输入必须是Tuple类型,逻辑地将一个流拆分成不相交的分区,每个分区包含具有相同key的元素,在内部以hash的形式实现的。

以HashCode来进行分区,可能有些key值不相同也会分到相同区。

val inputStream: DataStream[String] = env.readTextFile("F:\\Input\\sensor.txt")

// 1.transform 先转换成样例类类型 map split

val dataStream: DataStream[SensorReading] = inputStream.map(data => {

val dataArray: Array[String] = data.split(",")

SensorReading(dataArray(0).trim, dataArray(1).trim.toLong, dataArray(2).trim.toDouble)

})

//2. 聚合操作 之前必须先keyBy(可以分区并发去执行), DataStream不能直接聚合;

//val stream01: KeyedStream[SensorReading, String] = dataStream.keyBy(_.id) //不能dataStream.keyBy("id") 或者keyBy(0), scala是强类型语言

val stream01: DataStream[SensorReading] = dataStream.keyBy("id")

.sum("temperature")//KeyBy sum 是安照id进行逐个累加

stream01.print()

依次输入的数据为

sensor_1,1547718199,5.0

sensor_1,1547718199,30.0

sensor_1,1547718199,20.0

--->

SensorReading(sensor_1,1547718199,5.0)

SensorReading(sensor_1,1547718199,35.0) //30 + 5

SensorReading(sensor_1,1547718199,55.0) //20 + 35

滚动聚合算子(Rolling Aggregation)

这些算子可以针对KeyedStream的每一个支流做聚合,必须KeyBy分组之后再sum聚合等。

sum()

min()

max()

minBy()

maxBy()

Reduce

KeyedStream → DataStream:一个分组数据流的聚合操作,合并当前的元素和上次聚合的结果,产生一个新的值,返回的流中包含每一次聚合的结果,而不是只返回最后一次聚合的最终结果。

val inputStream: DataStream[SensorReading] = env.readTextFile("YOUR_PATH\\sensor.txt")

inputStream.map( data => {

val dataArray = data.split(",")

SensorReading(dataArray(0).trim, dataArray(1).trim.toLong, dataArray(2).trim.toDouble)

})

.keyBy("id")

.reduce( (x, y) => SensorReading(x.id, x.timestamp + 1, y.temperature + 10) )

//reduce 是每来一个聚合一个,输出当前传感器最新温度+10,时间戳是上次数据的时间+1;

--->

SensorReading(sensor_1,1,5.0)

SensorReading(sensor_1,2,40.0) //原来的温度是30,30 + 10; 时间是在上一条时间基础上1 + 1

SensorReading(sensor_1,3,30.0) //原来温度是20,20 + 10;

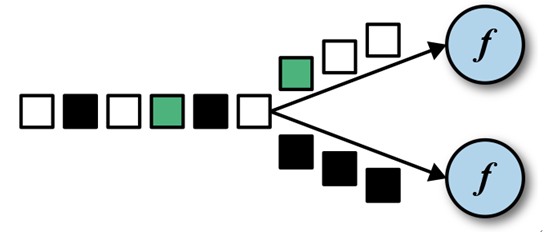

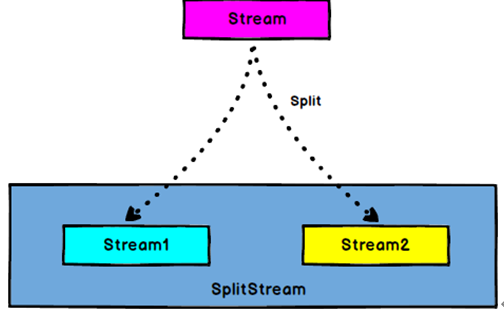

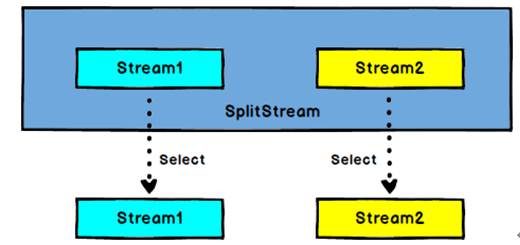

Split 和 Select

DataStream --split---> Split Stream:根据某些特征把一个DataStream拆分成两个或者多个DataStream。

SplitStream--select--> DataStream:从一个SplitStream中获取一个或者多个DataStream。

// 1.transform 先转换成样例类类型 map split

val dataStream: DataStream[SensorReading] = inputStream.map(data => {

val dataArray: Array[String] = data.split(",")

SensorReading(dataArray(0).trim, dataArray(1).trim.toLong, dataArray(2).trim.toDouble)

})

// 2. split和select 分流,根据温度是否大于30度划分

val splitStream: SplitStream[SensorReading] = dataStream.split(

data => {

if (data.temperature > 30) Seq("High") else Seq("Low")

})

val highTempStream: DataStream[SensorReading] = splitStream.select("High")

val lowTempStream: DataStream[SensorReading] = splitStream.select("Low")

val allTempStream: DataStream[SensorReading] = splitStream.select("High", "Low")

highTempStream.print("High")

--->

High> SensorReading(sensor_10,1547718205,38.1)

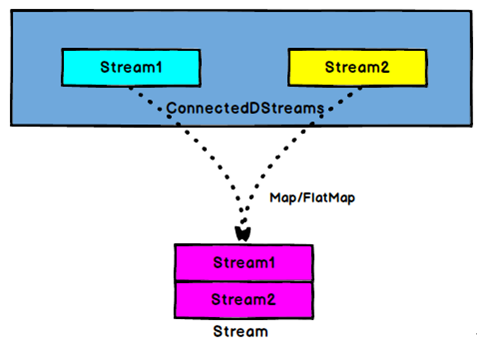

Connect和 CoMap

connecte的两条流数据类型可以不同,但一次操作只能合并2条流;

DataStream, DataStream --connect--> ConnectedStreams:连接两个保持他们类型的数据流,两个数据流被Connect之后,只是被放在了一个同一个流中,内部依然保持各自的数

据和形式不发生任何变化,两个流相互独立。

CoMap,CoFlatMap

ConnectedStreams --map/flatMap--> DataStream

作用于ConnectedStreams上,功能与map和flatMap一样,对ConnectedStreams中的每一个Stream分别进行map和flatMap处理。

// 1.transform 先转换成样例类类型 map split

val dataStream: DataStream[SensorReading] = inputStream.map(data => {

val dataArray: Array[String] = data.split(",")

SensorReading(dataArray(0).trim, dataArray(1).trim.toLong, dataArray(2).trim.toDouble)

})

// 2. split和select 分流,根据温度是否大于30度划分

val splitStream: SplitStream[SensorReading] = dataStream.split(

data => {

if (data.temperature > 30) Seq("High") else Seq("Low")

})

val highTempStream: DataStream[SensorReading] = splitStream.select("High")

val lowTempStream: DataStream[SensorReading] = splitStream.select("Low")

val allTempStream: DataStream[SensorReading] = splitStream.select("High", "Low")

// 3. connect和coMap 合并两条流

val warningStream: DataStream[(String, Double)] = highTempStream.map(

sensorData =>

(sensorData.id, sensorData.temperature)

)

val connectedStreams: ConnectedStreams[(String, Double), SensorReading] = warningStream.connect(lowTempStream)

val coMap: DataStream[(String, Double, String)] = connectedStreams.map(

warningData => (warningData._1, warningData._2, "warning"),

lowData => (lowData.id, lowData.temperature, "healthy")

)

coMap.print()

--->

(sensor_10,38.1,warning)

(sensor_1,20.0,healthy)

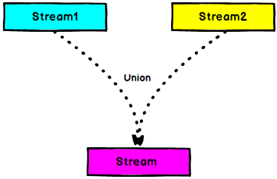

Union

DataStream → DataStream:对两个或者两个以上的DataStream进行union操作,产生一个包含所有DataStream元素的新DataStream。注意:如果你将一个DataStream跟它自己做union操作,在新的DataStream中,你将看到每一个元素都出现两次。

可以合并多条流,但是数据结构必须一样;

//合并流union

val unionDStream: DataStream[StartupLog] = appleStream.union(otherdStream)

unionDStream.print("union").setParallelism(1)

Connect与 Union 区别

1 、 Union之前两个流的类型必须是一样,Connect可以不一样,在之后的coMap中再去调整成为一样的。

2 Connect只能操作两个流,Union可以操作多个

实现UDF函数——更细粒度的控制流

函数类(Function Classes)

Flink暴露了所有udf函数的接口(实现方式为接口或者抽象类)。例如MapFunction, FilterFunction, ProcessFunction等等。

下面例子实现了FilterFunction接口:

val dataStream: DataStream[SensorReading] = inputStream.map(data => {

val dataArray: Array[String] = data.split(",")

SensorReading(dataArray(0).trim, dataArray(1).trim.toLong, dataArray(2).trim.toDouble)

})

.filter(new MyFilter)

dataStream.print()

class MyFilter() extends FilterFunction[SensorReading]{

override def filter(value: SensorReading): Boolean = {

value.id.startsWith("sensor_1")

}

}

匿名函数(Lambda Functions)

DataStream<String> tweets = env.readTextFile("INPUT_FILE");

DataStream<String> flinkTweets = tweets.filter( tweet -> tweet.contains("flink") );

富函数(Rich Functions)

“富函数”是DataStream API提供的一个函数类的接口,所有Flink函数类都有其Rich版本。它与常规函数的不同在于,可以获取运行环境的上下文,并拥有一些生命周期方法,所以可以实现更复杂的功能。

- RichMapFunction

- RichFlatMapFunction

- RichFilterFunction

- …

Rich Function有一个生命周期的概念。典型的生命周期方法有:

- open()方法是rich function的初始化方法,当一个算子例如map或者filter被调用之前open()会被调用。

- close()方法是生命周期中的最后一个调用的方法,做一些清理工作。

- getRuntimeContext()方法提供了函数的RuntimeContext的一些信息,例如函数执行的并行度,任务的名字,以及state状态

class MyMapper extends MapFunction[SensorReading, String]{

override def map(value: SensorReading): String = value.id + " temperature"

}

// 富函数,可以获取到运行时上下文,还有一些生命周期

class MyRichMapper extends RichMapFunction[SensorReading, String]{

override def open(parameters: Configuration): Unit = {

// 做一些初始化操作,比如数据库的连接

// getRuntimeContext

}

override def map(value: SensorReading): String = value.id + " temperature"

override def close(): Unit = {

// 一般做收尾工作,比如关闭连接,或者清空状态

}

}

Sink

Flink没有类似于spark中foreach方法,让用户进行迭代的操作。虽有对外的输出操作都要利用Sink完成。最后通过类似如下方式完成整个任务最终输出操作。

myDstream.addSink(new MySink(xxxx))

官方提供了一部分的框架的sink。除此以外,需要用户自定义实现sink。

Kafka

object MyKafkaUtil { val prop = new Properties() prop.setProperty("bootstrap.servers","hadoop101:9092") prop.setProperty("group.id","gmall") def getConsumer(topic:String ):FlinkKafkaConsumer011[String]= { val myKafkaConsumer:FlinkKafkaConsumer011[String] = new FlinkKafkaConsumer011[String](topic, new SimpleStringSchema(), prop) myKafkaConsumer } def getProducer(topic:String):FlinkKafkaProducer011[String]={ new FlinkKafkaProducer011[String]("hadoop101:9092",topic,new SimpleStringSchema()) } } //sink到kafka unionDStream.map(_.toString).addSink(MyKafkaUtil.getProducer("gmall_union")) ///opt/module/kafka/bin/kafka-console-consumer.sh --zookeeper hadoop101:2181 --topic gmall_union

从kafka到kafka 启动kafka kafka生产者:[kris@hadoop101 kafka]$ bin/kafka-console-producer.sh --broker-list hadoop101:9092 --topic sensor kafka消费者: [kris@hadoop101 kafka]$ bin/kafka-console-consumer.sh --bootstrap-server hadoop101:9092 --topic sinkTest --from-beginning SensorReading(sensor_1,1547718199,35.80018327300259) SensorReading(sensor_6,1547718201,15.402984393403084) SensorReading(sensor_7,1547718202,6.720945201171228) SensorReading(sensor_10,1547718205,38.101067604893444) SensorReading(sensor_1,1547718206,35.1) SensorReading(sensor_1,1547718207,35.6)

Redis

import org.apache.flink.streaming.connectors.redis.RedisSink import org.apache.flink.streaming.connectors.redis.common.config.FlinkJedisPoolConfig import org.apache.flink.streaming.connectors.redis.common.mapper.{RedisCommand, RedisCommandDescription, RedisMapper} object MyRedisUtil { private val config: FlinkJedisPoolConfig = new FlinkJedisPoolConfig.Builder().setHost("hadoop101").setPort(6379).build() def getRedisSink(): RedisSink[(String, String)] = { new RedisSink[(String, String)](config, new MyRedisMapper) } } class MyRedisMapper extends RedisMapper[(String, String)]{ //用何种命令进行保存 override def getCommandDescription: RedisCommandDescription = { new RedisCommandDescription(RedisCommand.HSET, "channel_sum") //hset类型, apple, 111 } //流中的元素哪部分是value override def getKeyFromData(channel_sum: (String, String)): String = channel_sum._2 //流中的元素哪部分是key override def getValueFromData(channel_sum: (String, String)): String = channel_sum._1 } object StartupApp { def main(args: Array[String]): Unit = { val env: StreamExecutionEnvironment = StreamExecutionEnvironment.getExecutionEnvironment val myKafkaConsumer: FlinkKafkaConsumer011[String] = MyKafkaUtil.getConsumer("GMALL_STARTUP") val dstream: DataStream[String] = env.addSource(myKafkaConsumer) //dstream.print().setParallelism(1) 测试从kafka中获得数据是否打通到了flink中 //将json转换成json对象 val startupLogDStream: DataStream[StartupLog] = dstream.map { jsonString => JSON.parseObject(jsonString, classOf[StartupLog]) } //sink到redis //把按渠道的统计值保存到redis中 hash key: channel_sum field ch value: count //按照不同渠道进行累加 val chCountDStream: DataStream[(String, Int)] = startupLogDStream.map(startuplog => (startuplog.ch, 1)).keyBy(0).sum(1) //把上述结果String, Int转换成String, String类型 val channelDStream: DataStream[(String, String)] = chCountDStream.map(chCount => (chCount._1, chCount._2.toString)) channelDStream.addSink(MyRedisUtil.getRedisSink())

ES

object MyEsUtil { val hostList: util.List[HttpHost] = new util.ArrayList[HttpHost]() hostList.add(new HttpHost("hadoop101", 9200, "http")) hostList.add(new HttpHost("hadoop102", 9200, "http")) hostList.add(new HttpHost("hadoop103", 9200, "http")) def getEsSink(indexName: String): ElasticsearchSink[String] = { //new接口---> 要实现一个方法 val esSinkFunc: ElasticsearchSinkFunction[String] = new ElasticsearchSinkFunction[String] { override def process(element: String, ctx: RuntimeContext, indexer: RequestIndexer): Unit = { val jSONObject: JSONObject = JSON.parseObject(element) val indexRequest: IndexRequest = Requests.indexRequest().index(indexName).`type`("_doc").source(jSONObject) indexer.add(indexRequest) } } val esSinkBuilder = new ElasticsearchSink.Builder[String](hostList, esSinkFunc) esSinkBuilder.setBulkFlushMaxActions(10) val esSink: ElasticsearchSink[String] = esSinkBuilder.build() esSink } } object StartupApp { def main(args: Array[String]): Unit = { val env: StreamExecutionEnvironment = StreamExecutionEnvironment.getExecutionEnvironment val myKafkaConsumer: FlinkKafkaConsumer011[String] = MyKafkaUtil.getConsumer("GMALL_STARTUP") val dstream: DataStream[String] = env.addSource(myKafkaConsumer) //sink之三 保存到ES val esSink: ElasticsearchSink[String] = MyEsUtil.getEsSink("gmall_startup") dstream.addSink(esSink) //dstream来自kafka的数据源 GET gmall_startup/_search

Mysql

class MyjdbcSink(sql: String) extends RichSinkFunction[Array[Any]] { val driver = "com.mysql.jdbc.Driver" val url = "jdbc:mysql://hadoop101:3306/gmall?useSSL=false" val username = "root" val password = "123456" val maxActive = "20" var connection: Connection = null // 创建连接 override def open(parameters: Configuration) { val properties = new Properties() properties.put("driverClassName",driver) properties.put("url",url) properties.put("username",username) properties.put("password",password) properties.put("maxActive",maxActive) val dataSource: DataSource = DruidDataSourceFactory.createDataSource(properties) connection = dataSource.getConnection() } // 把每个Array[Any] 作为数据库表的一行记录进行保存 override def invoke(values: Array[Any]): Unit = { val ps: PreparedStatement = connection.prepareStatement(sql) for (i <- 0 to values.length-1) { ps.setObject(i+1, values(i)) } ps.executeUpdate() } override def close(): Unit = { if (connection != null){ connection.close() } } } object StartupApp { def main(args: Array[String]): Unit = { val env: StreamExecutionEnvironment = StreamExecutionEnvironment.getExecutionEnvironment val myKafkaConsumer: FlinkKafkaConsumer011[String] = MyKafkaUtil.getConsumer("GMALL_STARTUP") val dstream: DataStream[String] = env.addSource(myKafkaConsumer) //dstream.print().setParallelism(1) 测试从kafka中获得数据是否打通到了flink中 //将json转换成json对象 val startupLogDStream: DataStream[StartupLog] = dstream.map { jsonString => JSON.parseObject(jsonString, classOf[StartupLog]) } //sink之四 保存到Mysql中 startupLogDStream.map(startuplog => Array(startuplog.mid, startuplog.uid, startuplog.ch, startuplog.area,startuplog.ts)) .addSink(new MyjdbcSink("insert into fink_startup values(?,?,?,?,?)")) env.execute() } }

【推荐】国内首个AI IDE,深度理解中文开发场景,立即下载体验Trae

【推荐】编程新体验,更懂你的AI,立即体验豆包MarsCode编程助手

【推荐】抖音旗下AI助手豆包,你的智能百科全书,全免费不限次数

【推荐】轻量又高性能的 SSH 工具 IShell:AI 加持,快人一步

· AI与.NET技术实操系列:向量存储与相似性搜索在 .NET 中的实现

· 基于Microsoft.Extensions.AI核心库实现RAG应用

· Linux系列:如何用heaptrack跟踪.NET程序的非托管内存泄露

· 开发者必知的日志记录最佳实践

· SQL Server 2025 AI相关能力初探

· winform 绘制太阳,地球,月球 运作规律

· 震惊!C++程序真的从main开始吗?99%的程序员都答错了

· 【硬核科普】Trae如何「偷看」你的代码?零基础破解AI编程运行原理

· AI与.NET技术实操系列(五):向量存储与相似性搜索在 .NET 中的实现

· 超详细:普通电脑也行Windows部署deepseek R1训练数据并当服务器共享给他人

2018-05-13 JavaScript