Using requests in Python 3: user-agent and cookie pools

Official documentation: http://docs.python-requests.org/en/master/

Reference: http://www.cnblogs.com/zhaof/p/6915127.html#undefined

Reference: "Python爬虫实例(三)代理的使用" (Python crawler examples, part 3: using proxies)

I am using Python 3.6, which was the latest version at the time of writing.

Installation

pip3 install requests

Common operations with the requests module

1. GET requests

import requests

url = 'https://www.baidu.com'
r = requests.get(url)
print(r.status_code)
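Query-string parameters are usually passed through `params` instead of being pasted into the URL by hand. The sketch below builds the request with `requests.Request(...).prepare()` without sending it, so you can inspect the final URL offline. The `/s` path and the `wd` key are illustrative assumptions and do not come from the original example.

```python
import requests

# Build (but do not send) a GET request; params is URL-encoded into the query string.
# The "/s" path and "wd" parameter are illustrative assumptions.
req = requests.Request("GET", "https://www.baidu.com/s", params={"wd": "python"})
prepared = req.prepare()
print(prepared.url)
```

To actually send it, call `requests.get(url, params={...})`, or pass the prepared request to `Session.send()`.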

2. POST requests

import requests

url = 'https://www.baidu.com'
data1 = {"name": "zhaofan", "age": 23}
# verify=True (certificate verification) is already the default
r = requests.post(url, data=data1, verify=True)
print(r.status_code)
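`data=` and `json=` encode the same dictionary differently. The sketch below prepares both variants without sending them, so you can compare the body and `Content-Type` that requests would send. The payload is the one from the example above.

```python
import json

import requests

payload = {"name": "zhaofan", "age": 23}

# data= sends the dict as an HTML form body (application/x-www-form-urlencoded)
form_req = requests.Request("POST", "https://www.baidu.com", data=payload).prepare()

# json= serializes the dict as a JSON body (application/json)
json_req = requests.Request("POST", "https://www.baidu.com", json=payload).prepare()

print(form_req.headers["Content-Type"], form_req.body)
print(json_req.headers["Content-Type"], json_req.body)
```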

3. Using a proxy

import requests

url = 'http://docs.python-requests.org/en/master/'
# Map each URL scheme to a proxy URL (written with its own scheme)
proxies = {
    'http': 'http://127.0.0.1:8080',
    'https': 'http://127.0.0.1:8080'
}
r = requests.get(url, proxies=proxies)
print(r.status_code)

If the proxy requires a username and password, change the dictionary as follows:

proxies = {
    "http": "http://user:password@127.0.0.1:9999"
}
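A password that contains characters such as `@` or `:` will break a URL written as `user:password@host`. The sketch below percent-encodes the credentials first. `make_proxy_url` is a hypothetical helper name. `requests.utils.get_auth_from_url` is the function requests itself uses to read the credentials back out of a proxy URL.

```python
from urllib.parse import quote

from requests.utils import get_auth_from_url


def make_proxy_url(user, password, host, port, scheme="http"):
    """Hypothetical helper: build a proxy URL with percent-encoded credentials."""
    return "{}://{}:{}@{}:{}".format(
        scheme, quote(user, safe=""), quote(password, safe=""), host, port
    )


proxy = make_proxy_url("user", "p@ss:word", "127.0.0.1", 9999)
proxies = {"http": proxy, "https": proxy}
print(proxy)
# requests decodes the credentials again when it talks to the proxy
print(get_auth_from_url(proxy))
```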

If your proxy uses SOCKS, first install the SOCKS extra with pip install "requests[socks]", then use:

proxies = {
    "http": "socks5://127.0.0.1:9999",
    "https": "socks5://127.0.0.1:8888"
}
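In keeping with this article's "pool" theme, a proxy pool can be as simple as choosing a random proxy for each request. This is a minimal sketch under assumptions: `PROXY_POOL` holds placeholder addresses and `random_proxies` is a hypothetical helper. With the `socks5h` scheme, host names are resolved by the proxy. With `socks5`, they are resolved locally.

```python
import random

# Hypothetical proxy pool; replace with proxies you actually control.
# "socks5h" lets the SOCKS proxy resolve host names; "socks5" resolves them locally.
PROXY_POOL = [
    "socks5h://127.0.0.1:9999",
    "http://127.0.0.1:8080",
]


def random_proxies(pool):
    """Pick one proxy at random and use it for both http and https URLs."""
    proxy = random.choice(pool)
    return {"http": proxy, "https": proxy}


# Usage (needs a running proxy, plus requests[socks] for the socks5h entry):
# requests.get("https://httpbin.org/ip", proxies=random_proxies(PROXY_POOL), timeout=10)
print(random_proxies(PROXY_POOL))
```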

4. Custom headers and cookies, and reading cookies

import requests

url = 'https://weixin.sogou.com/weixin?type=1&s_from=input&query=python&ie=utf8&_sug_=n&_sug_type_='
headers = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 6.2) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/28.0.1464.0 Safari/537.36',
    # A Cookie header is a list of name=value pairs separated by "; "
    'Cookie': 'JSESSIONID=aaaUrhXY8CzPBgs1eXUFw'
}
r = requests.get(url, headers=headers)
# Cookies set by the server in the response
print(r.cookies)
print(r.status_code)
# print(r.text)
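Instead of hand-writing the `Cookie` header, you can pass a `{name: value}` dict through the `cookies` parameter and let requests build the header. The sketch below prepares the request without sending it, so you can inspect the headers offline. The URL is shortened from the example above.

```python
import requests

url = "https://weixin.sogou.com/weixin"
headers = {
    "User-Agent": "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/28.0.1464.0 Safari/537.36",
}
cookies = {"JSESSIONID": "aaaUrhXY8CzPBgs1eXUFw"}

# Prepare without sending, to inspect the headers requests would send
prepared = requests.Request("GET", url, headers=headers, cookies=cookies).prepare()
print(prepared.headers["Cookie"])
print(prepared.headers["User-Agent"])
```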

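One use of cookies is to simulate a login and keep a session alive. A requests.Session object stores the cookies it receives and sends them with later requests: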

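import requests

s = requests.Session()
# The server sets the cookie number=123456; the session stores it
s.get("http://httpbin.org/cookies/set/number/123456")
# The stored cookie is sent automatically with this request
response = s.get("http://httpbin.org/cookies")
print(response.text)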
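A session also carries default headers, and you can put cookies into its jar directly. The sketch below uses `Session.prepare_request` to show what the session would attach to a request, without going over the network. The `my-crawler/0.1` User-Agent is a hypothetical value.

```python
import requests

s = requests.Session()
# Headers set on the session are sent with every request it makes
s.headers.update({"User-Agent": "my-crawler/0.1"})  # hypothetical UA string
# Cookies can also be placed in the session's jar directly
s.cookies.set("number", "123456", domain="httpbin.org", path="/")

# Prepare (without sending) to see what the session would attach
prepared = s.prepare_request(requests.Request("GET", "http://httpbin.org/cookies"))
print(prepared.headers["Cookie"])
print(requests.utils.dict_from_cookiejar(s.cookies))
```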

5. Test code for a user-agent pool and a cookie pool

import random

import requests


class Test:
    def __init__(self, log_path=None):
        # log_path is unused here; kept from the original signature
        self.log_path = log_path
        # Cookie pool: entries in browser-export format (placeholder values)
        self.mycookies = [
            {'name': 'amet non sint', 'value': 'nulla occaecat dolore', 'domain': 'Excepteur labore est proident', 'path': 'dolor laborum consectetur', 'sameSite': 'amet ea Lorem', 'storeId': 'ex nulla nostrud'},
            {'name': 'velit consectetur in', 'value': None, 'domain': 'sit', 'path': 'nostrud', 'sameSite': 'et laborum', 'storeId': 'labore commodo elit reprehenderit occaecat'},
        ]
        # User-Agent pool
        self.user_agent_list = [
            'Mozilla/5.0 (Windows NT 6.2) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/28.0.1464.0 Safari/537.36',
            'Mozilla/5.0 (Windows NT 5.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/31.0.1650.16 Safari/537.36',
            'Mozilla/5.0 (Windows NT 5.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/35.0.3319.102 Safari/537.36',
            'Mozilla/5.0 (X11; CrOS i686 3912.101.0) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/27.0.1453.116 Safari/537.36',
            'Mozilla/5.0 (Windows NT 6.2; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/27.0.1453.93 Safari/537.36',
            'Mozilla/5.0 (Windows NT 6.2; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/32.0.1667.0 Safari/537.36',
            'Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:17.0) Gecko/20100101 Firefox/17.0.6',
            'Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/28.0.1468.0 Safari/537.36',
            'Mozilla/5.0 (Windows NT 5.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/41.0.2224.3 Safari/537.36',
            'Mozilla/5.0 (X11; CrOS i686 3912.101.0) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/27.0.1453.116 Safari/537.36'
        ]

    # First, fetch the URL of the WeChat official account for a keyword,
    # using a random cookie and a random User-Agent
    def test(self, url):
        try:
            cookie1 = random.choice(self.mycookies)
            print(cookie1)
            # requests expects cookies as {name: value}, so convert the
            # browser-format entry instead of passing it as-is
            cookies = {cookie1['name']: cookie1['value'] or ''}
            user_agent = random.choice(self.user_agent_list)
            header = {'User-Agent': user_agent}
            print(header)
            txt = requests.get(url, cookies=cookies, headers=header).text
            print(txt)
        except requests.RequestException as ex:
            print(ex)
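Browser-exported cookies carry a domain and a path. Loading them into a `RequestsCookieJar` preserves that information, so each cookie is only sent to the site it belongs to. This is a sketch, not the author's method. `browser_cookies_to_jar`, `random_request_kwargs`, and the `SUID`/`SNUID` sample values are hypothetical. Each pool entry stands for one account's exported cookies.

```python
import random

import requests
from requests.cookies import RequestsCookieJar


def browser_cookies_to_jar(entries):
    """Hypothetical helper: convert browser-exported cookie dicts into a jar,
    keeping domain and path. Entries whose value is None are skipped."""
    jar = RequestsCookieJar()
    for entry in entries:
        if entry.get("value") is None:
            continue
        jar.set(entry["name"], entry["value"],
                domain=entry.get("domain", ""), path=entry.get("path", "/"))
    return jar


# Hypothetical cookie pool: one list of exported cookies per account
COOKIE_POOL = [
    [{"name": "SUID", "value": "abc123", "domain": ".sogou.com", "path": "/"}],
    [{"name": "SUID", "value": "def456", "domain": ".sogou.com", "path": "/"},
     {"name": "SNUID", "value": None, "domain": ".sogou.com", "path": "/"}],
]
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/28.0.1468.0 Safari/537.36",
    "Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:17.0) Gecko/20100101 Firefox/17.0.6",
]


def random_request_kwargs():
    """Pick one identity (cookie jar + User-Agent) for the next request."""
    return {
        "cookies": browser_cookies_to_jar(random.choice(COOKIE_POOL)),
        "headers": {"User-Agent": random.choice(USER_AGENTS)},
    }


# Usage: requests.get(url, timeout=10, **random_request_kwargs())
```

Because the jar keeps each cookie's domain, a request to a different site will not carry these cookies.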

6. Certificate verification

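Many websites are now served over HTTPS, so requests verifies the server's certificate.

If verification fails, you can skip it with verify=False. The page will then load, but you get a warning: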

InsecureRequestWarning: Unverified HTTPS request is being made. Adding certificate verification is strongly advised. See: https://urllib3.readthedocs

To suppress the warning:

import requests
import urllib3

# Silence InsecureRequestWarning for the whole process
urllib3.disable_warnings(urllib3.exceptions.InsecureRequestWarning)
response = requests.get("https://www.12306.cn", verify=False)
print(response.status_code)
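`disable_warnings` silences the warning for the entire process. Two narrower options may be preferable. `verify` also accepts the path of a CA bundle, so a private CA can be trusted without turning verification off. The warning can also be silenced only around a single call. This is a sketch. `get_without_verification` is a hypothetical helper, and the CA bundle path in the usage comment is a placeholder.

```python
import warnings

import requests
import urllib3

# Path of the CA bundle requests uses by default (normally shipped by certifi)
DEFAULT_CA_BUNDLE = requests.certs.where()


def get_without_verification(url, **kwargs):
    """Hypothetical helper: GET with verify=False, ignoring
    InsecureRequestWarning only inside this call."""
    with warnings.catch_warnings():
        warnings.simplefilter("ignore", urllib3.exceptions.InsecureRequestWarning)
        return requests.get(url, verify=False, **kwargs)


# Usage:
# requests.get("https://www.12306.cn", verify="/path/to/ca-bundle.pem")  # placeholder path
# get_without_verification("https://www.12306.cn", timeout=10)
print(DEFAULT_CA_BUNDLE)
```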

7. Authentication

For sites that require authentication, use the requests.auth module:

import requests
from requests.auth import HTTPBasicAuth

response = requests.get("http://120.27.34.24:9001/", auth=HTTPBasicAuth("user", "123"))
print(response.status_code)

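There is also a shorthand: pass a (username, password) tuple, which requests treats as HTTP Basic authentication: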

import requests

response = requests.get("http://120.27.34.24:9001/", auth=("user", "123"))
print(response.status_code)
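Both forms produce the same `Authorization` header: `Basic` followed by the Base64 encoding of `user:123`. The sketch below prepares both requests without sending them, so you can check this offline. For Digest authentication, requests provides `requests.auth.HTTPDigestAuth` instead.

```python
import requests
from requests.auth import HTTPBasicAuth

url = "http://120.27.34.24:9001/"

# Prepare both variants without sending them
with_class = requests.Request("GET", url, auth=HTTPBasicAuth("user", "123")).prepare()
with_tuple = requests.Request("GET", url, auth=("user", "123")).prepare()

# Both carry "Basic " + base64("user:123")
print(with_class.headers["Authorization"])
print(with_tuple.headers["Authorization"])
```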

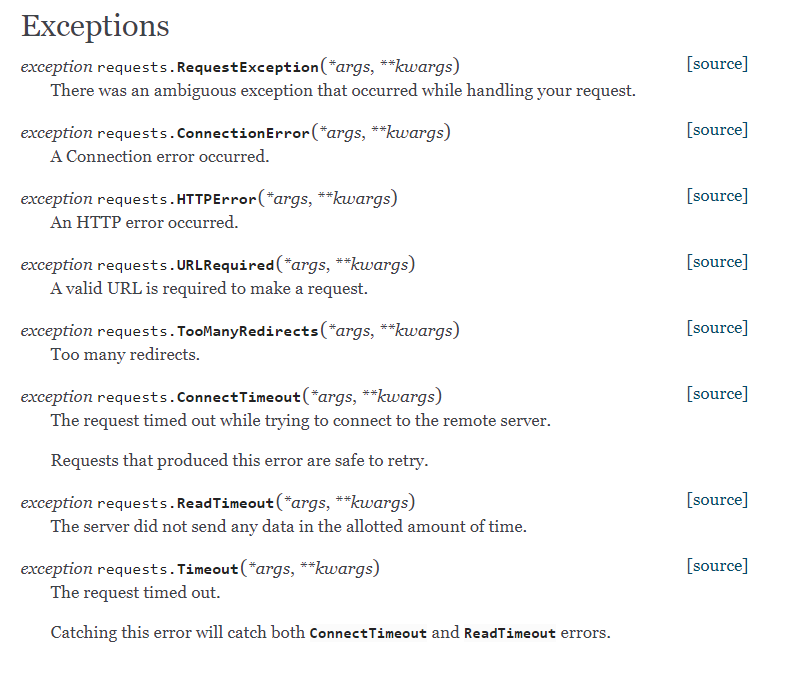

8. Exception handling

The exceptions raised by requests are documented here: http://www.python-requests.org/en/master/api/#exceptions

All of them are defined in requests.exceptions.

The source code shows that RequestException inherits from IOError.

HTTPError, ConnectionError and Timeout inherit from RequestException.

ProxyError and SSLError inherit from ConnectionError.

ReadTimeout inherits from Timeout.

These are some of the commonly used inheritance relationships. For the full hierarchy, see: http://cn.python-requests.org/zh_CN/latest/_modules/requests/exceptions.html#RequestException
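The inheritance relationships above can be checked directly with `issubclass`. The list below also includes `ConnectTimeout`, which subclasses both `ConnectionError` and `Timeout`. In Python 3, `IOError` is an alias of `OSError`, so it prints as `OSError`.

```python
from requests.exceptions import (
    ConnectionError, ConnectTimeout, HTTPError, ProxyError,
    ReadTimeout, RequestException, SSLError, Timeout,
)

# (subclass, base class) pairs described above, plus ConnectTimeout
HIERARCHY = [
    (RequestException, IOError),
    (HTTPError, RequestException),
    (ConnectionError, RequestException),
    (Timeout, RequestException),
    (ProxyError, ConnectionError),
    (SSLError, ConnectionError),
    (ReadTimeout, Timeout),
    (ConnectTimeout, ConnectionError),
    (ConnectTimeout, Timeout),
]

for child, parent in HIERARCHY:
    print("{} -> {}: {}".format(child.__name__, parent.__name__, issubclass(child, parent)))
```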

A simple test

import requests
from requests.exceptions import ReadTimeout, ConnectionError, RequestException

try:
    response = requests.get("http://httpbin.org/get", timeout=0.1)
    print(response.status_code)
except ReadTimeout:
    print("timeout")
except ConnectionError:
    print("connection Error")
except RequestException:
    print("error")

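Running this shows that the timeout is the first exception caught. When the network is disconnected, ConnectionError is caught instead. Anything the earlier clauses miss is still caught by RequestException. Note that a timeout while connecting raises ConnectTimeout, which subclasses ConnectionError and Timeout but not ReadTimeout, so with this clause order it prints "connection Error".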
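Clause order decides which handler wins, because the first matching `except` is used. The sketch below raises exception instances directly, so no network is needed. It compares the order used in the test above with a variant that catches the `Timeout` base class first. Both function names are hypothetical.

```python
from requests.exceptions import (
    ConnectionError, ConnectTimeout, HTTPError, ReadTimeout,
    RequestException, Timeout,
)


def label_original_order(exc):
    """Same except-clause order as the test above."""
    try:
        raise exc
    except ReadTimeout:
        return "timeout"
    except ConnectionError:
        return "connection Error"
    except RequestException:
        return "error"


def label_timeout_first(exc):
    """Catch the Timeout base class first so connect and read timeouts both match."""
    try:
        raise exc
    except Timeout:
        return "timeout"
    except ConnectionError:
        return "connection Error"
    except RequestException:
        return "error"


for exc in (ReadTimeout(), ConnectTimeout(), ConnectionError(), HTTPError()):
    print(type(exc).__name__, label_original_order(exc), label_timeout_first(exc))
```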

9. Status codes

Checking status codes: requests ships with a built-in status-code lookup object, requests.codes, which maps readable names to numeric codes. Its main entries are:

# Informational.
100: ('continue',),
101: ('switching_protocols',),
102: ('processing',),
103: ('checkpoint',),
122: ('uri_too_long', 'request_uri_too_long'),
200: ('ok', 'okay', 'all_ok', 'all_okay', 'all_good', '\\o/', '✓'),
201: ('created',),
202: ('accepted',),
203: ('non_authoritative_info', 'non_authoritative_information'),
204: ('no_content',),
205: ('reset_content', 'reset'),
206: ('partial_content', 'partial'),
207: ('multi_status', 'multiple_status', 'multi_stati', 'multiple_stati'),
208: ('already_reported',),
226: ('im_used',),

# Redirection.
300: ('multiple_choices',),
301: ('moved_permanently', 'moved', '\\o-'),
302: ('found',),
303: ('see_other', 'other'),
304: ('not_modified',),
305: ('use_proxy',),
306: ('switch_proxy',),
307: ('temporary_redirect', 'temporary_moved', 'temporary'),
308: ('permanent_redirect',
      'resume_incomplete', 'resume',),  # These 2 to be removed in 3.0

# Client Error.
400: ('bad_request', 'bad'),
401: ('unauthorized',),
402: ('payment_required', 'payment'),
403: ('forbidden',),
404: ('not_found', '-o-'),
405: ('method_not_allowed', 'not_allowed'),
406: ('not_acceptable',),
407: ('proxy_authentication_required', 'proxy_auth', 'proxy_authentication'),
408: ('request_timeout', 'timeout'),
409: ('conflict',),
410: ('gone',),
411: ('length_required',),
412: ('precondition_failed', 'precondition'),
413: ('request_entity_too_large',),
414: ('request_uri_too_large',),
415: ('unsupported_media_type', 'unsupported_media', 'media_type'),
416: ('requested_range_not_satisfiable', 'requested_range', 'range_not_satisfiable'),
417: ('expectation_failed',),
418: ('im_a_teapot', 'teapot', 'i_am_a_teapot'),
421: ('misdirected_request',),
422: ('unprocessable_entity', 'unprocessable'),
423: ('locked',),
424: ('failed_dependency', 'dependency'),
425: ('unordered_collection', 'unordered'),
426: ('upgrade_required', 'upgrade'),
428: ('precondition_required', 'precondition'),
429: ('too_many_requests', 'too_many'),
431: ('header_fields_too_large', 'fields_too_large'),
444: ('no_response', 'none'),
449: ('retry_with', 'retry'),
450: ('blocked_by_windows_parental_controls', 'parental_controls'),
451: ('unavailable_for_legal_reasons', 'legal_reasons'),
499: ('client_closed_request',),

# Server Error.
500: ('internal_server_error', 'server_error', '/o\\', '✗'),
501: ('not_implemented',),
502: ('bad_gateway',),
503: ('service_unavailable', 'unavailable'),
504: ('gateway_timeout',),
505: ('http_version_not_supported', 'http_version'),
506: ('variant_also_negotiates',),
507: ('insufficient_storage',),
509: ('bandwidth_limit_exceeded', 'bandwidth'),
510: ('not_extended',),
511: ('network_authentication_required', 'network_auth', 'network_authentication'),

You can test it with the following example (though comparing against the numeric status code directly is usually more convenient):

import requests

response = requests.get("http://www.baidu.com")
if response.status_code == requests.codes.ok:
    print("Request succeeded")
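Names in `requests.codes` can be read as attributes, as dictionary keys, or in upper case. `Response.ok` and `raise_for_status()` offer a broader check that treats every 4xx and 5xx code as a failure. In this sketch the `Response` is built by hand only so the example runs offline. In real code it comes back from `requests.get`. The URL is a placeholder.

```python
import requests
from requests.models import Response

print(requests.codes.ok, requests.codes["not_found"], requests.codes.NOT_FOUND, requests.codes.teapot)

# A Response built by hand, only so this example runs without a network
resp = Response()
resp.status_code = 404
resp.reason = "Not Found"
resp.url = "http://www.baidu.com/missing"  # placeholder URL

print(resp.ok)  # False for any 4xx or 5xx status code
try:
    resp.raise_for_status()
except requests.HTTPError as err:
    print("HTTPError:", err)
```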

10. File upload

This works like the other parameters: build a dictionary and pass it through the files parameter.

import requests

# Open the file in binary mode; the with block closes it after the upload
with open("git.jpeg", "rb") as f:
    files = {"files": f}
    response = requests.post("http://httpbin.org/post", files=files)

print(response.text)
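A value in `files` can also be a `(filename, content, content_type)` tuple, which controls the filename and MIME type sent in the multipart body. The sketch below prepares the upload without sending it and uses stand-in bytes instead of a real `git.jpeg`, so you can inspect the encoded body offline.

```python
import requests

# (filename, content, content_type); the bytes stand in for a real JPEG file
files = {"files": ("git.jpeg", b"\xff\xd8\xff fake jpeg bytes", "image/jpeg")}
prepared = requests.Request("POST", "http://httpbin.org/post", files=files).prepare()

print(prepared.headers["Content-Type"])
print(prepared.body[:200])
```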