References

https://www.skcript.com/svr/realtime-object-and-face-detection-in-android-using-tensorflow-object-detection-api/

https://www.cnblogs.com/zongfa/p/9663649.html

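Downloading the TensorFlow models

TensorFlow has moved on to 2.0, and many of the projects under https://github.com/tensorflow/models have been migrated to TensorFlow 2.0. Our company's machine learning platform still runs TensorFlow 1.12, though, and running code written for TensorFlow 2.0 on it fails in all sorts of ways, so I needed an older version of models. It is hard to find one on the web page: every released version has had the Research/tutorial/sample folders removed. In the end the following command downloaded the models I wanted. Here we mainly use the object_detection project under research.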

git clone -b r1.5 https://github.com/tensorflow/models.git

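Environment

Instructions for installing Anaconda and TensorFlow are easy to find, and the company machines already have both preinstalled, so I won't go over them here.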

Data collection and annotation

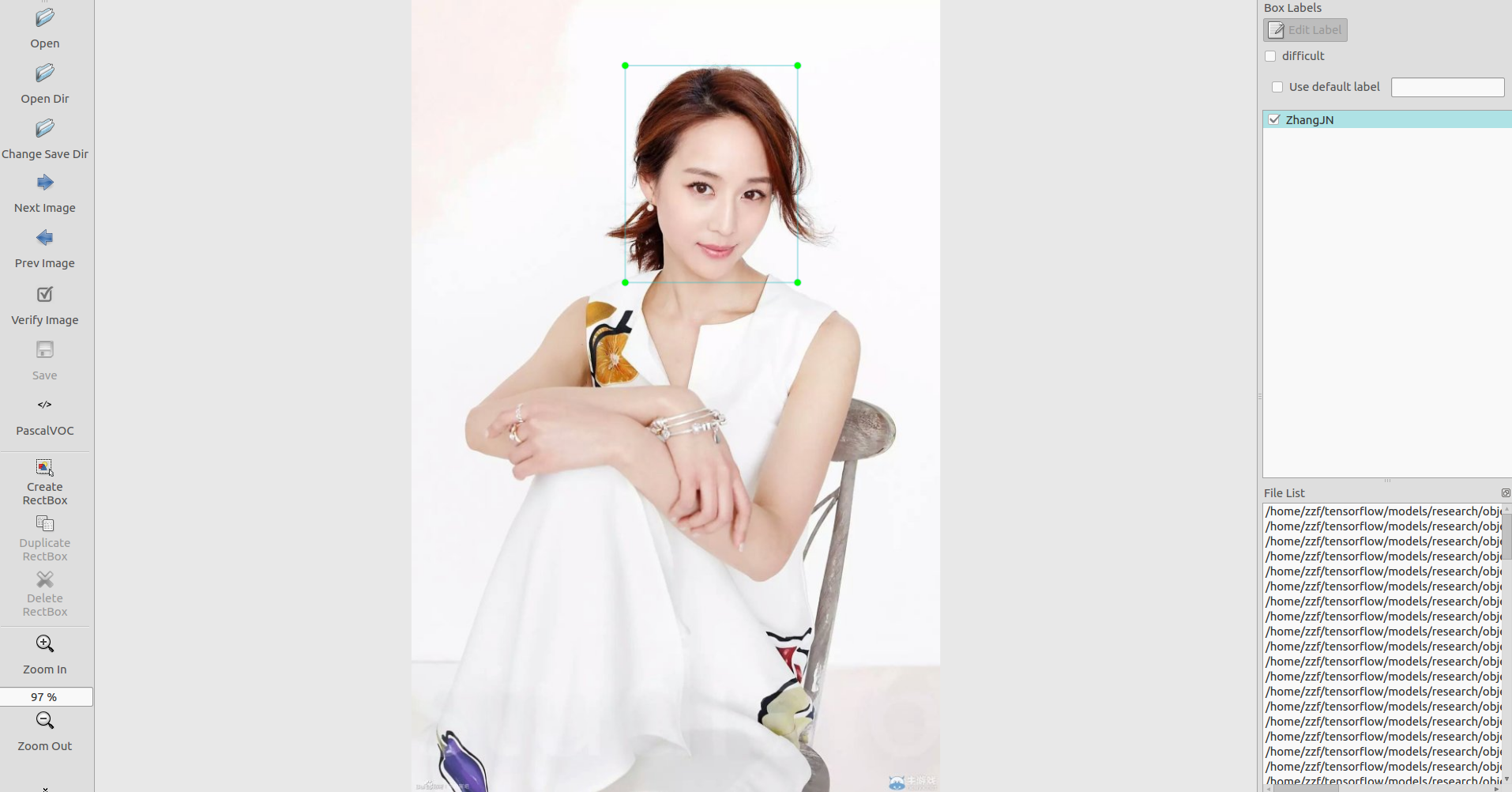

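First I collected the images I needed. Then I used LabelImg, a small annotation tool whose Windows build is available at https://tzutalin.github.io/labelImg/ (a proxy may be needed to reach it), to annotate the images in train and test by hand (the more images the better, if time allows), as shown in the figure.

After annotating an image, save the result as an XML file with the same name, in the same folder as the image. That completes the training set.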
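To make the annotation format concrete, here is a minimal sketch that reads one annotation by tag name. It assumes the Pascal VOC layout that LabelImg writes by default; the sample XML, its values, and the helper name read_boxes are made up for illustration.

```python
import xml.etree.ElementTree as ET

# Hypothetical, trimmed-down annotation in the Pascal VOC layout (values are made up).
SAMPLE_XML = """
<annotation>
  <filename>image3.jpg</filename>
  <size><width>640</width><height>480</height><depth>3</depth></size>
  <object>
    <name>ZhangJN</name>
    <bndbox><xmin>100</xmin><ymin>120</ymin><xmax>300</xmax><ymax>400</ymax></bndbox>
  </object>
</annotation>
"""


def read_boxes(xml_text):
    """Return one (filename, width, height, class, xmin, ymin, xmax, ymax) row per object."""
    root = ET.fromstring(xml_text)
    size = root.find('size')
    width = int(size.find('width').text)
    height = int(size.find('height').text)
    rows = []
    for obj in root.findall('object'):
        box = obj.find('bndbox')
        rows.append((
            root.find('filename').text,
            width,
            height,
            obj.find('name').text,
            int(box.find('xmin').text),
            int(box.find('ymin').text),
            int(box.find('xmax').text),
            int(box.find('ymax').text),
        ))
    return rows


rows = read_boxes(SAMPLE_XML)
```

Looking elements up by name rather than by position keeps the reader working even if the order of the child elements changes.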

Converting to TFRecord format

object_detection expects its input as TFRecord, so the original .jpg images and the .xml files produced by LabelImg must first be converted to .csv and then to .record.

This takes two scripts:

    # xml2csv.py
    import os
    import glob
    import pandas as pd
    import xml.etree.ElementTree as ET

    os.chdir('/home/zzf/tensorflow/models/research/object_detection/images/test')
    path = '/home/zzf/tensorflow/models/research/object_detection/images/test'


    def xml_to_csv(path):
        xml_list = []
        for xml_file in glob.glob(path + '/*.xml'):
            tree = ET.parse(xml_file)
            root = tree.getroot()
            for member in root.findall('object'):
                value = (root.find('filename').text,
                         int(root.find('size')[0].text),
                         int(root.find('size')[1].text),
                         member[0].text,
                         int(member[4][0].text),
                         int(member[4][1].text),
                         int(member[4][2].text),
                         int(member[4][3].text)
                         )
                xml_list.append(value)
        column_name = ['filename', 'width', 'height', 'class', 'xmin', 'ymin', 'xmax', 'ymax']
        xml_df = pd.DataFrame(xml_list, columns=column_name)
        return xml_df


    def main():
        image_path = path
        xml_df = xml_to_csv(image_path)
        xml_df.to_csv('zhangjn_train.csv', index=None)
        print('Successfully converted xml to csv.')


    main()

    # generate_tfrecord.py
    # -*- coding: utf-8 -*-
    """
    Usage:
      # From tensorflow/models/
      # Create train data:
      python generate_tfrecord.py --csv_input=data/tv_vehicle_labels.csv --output_path=train.record
      # Create test data:
      python generate_tfrecord.py --csv_input=data/test_labels.csv --output_path=test.record
    """
    import os
    import io
    import pandas as pd
    import tensorflow as tf

    from PIL import Image
    from object_detection.utils import dataset_util
    from collections import namedtuple, OrderedDict

    os.chdir('/home/zzf/tensorflow/models/research/object_detection')

    flags = tf.app.flags
    flags.DEFINE_string('csv_input', '', 'Path to the CSV input')
    flags.DEFINE_string('output_path', '', 'Path to output TFRecord')
    FLAGS = flags.FLAGS


    # TO-DO replace this with label map
    def class_text_to_int(row_label):
        if row_label == 'ZhangJN':  # change this; add one elif per additional class
            return 1
        else:
            return None


    def split(df, group):
        data = namedtuple('data', ['filename', 'object'])
        gb = df.groupby(group)
        return [data(filename, gb.get_group(x)) for filename, x in zip(gb.groups.keys(), gb.groups)]


    def create_tf_example(group, path):
        with tf.gfile.GFile(os.path.join(path, '{}'.format(group.filename)), 'rb') as fid:
            encoded_jpg = fid.read()
        encoded_jpg_io = io.BytesIO(encoded_jpg)
        image = Image.open(encoded_jpg_io)
        width, height = image.size

        filename = group.filename.encode('utf8')
        image_format = b'jpg'
        xmins = []
        xmaxs = []
        ymins = []
        ymaxs = []
        classes_text = []
        classes = []

        # Box coordinates are stored normalized to the image size.
        for index, row in group.object.iterrows():
            xmins.append(row['xmin'] / width)
            xmaxs.append(row['xmax'] / width)
            ymins.append(row['ymin'] / height)
            ymaxs.append(row['ymax'] / height)
            classes_text.append(row['class'].encode('utf8'))
            classes.append(class_text_to_int(row['class']))

        tf_example = tf.train.Example(features=tf.train.Features(feature={
            'image/height': dataset_util.int64_feature(height),
            'image/width': dataset_util.int64_feature(width),
            'image/filename': dataset_util.bytes_feature(filename),
            'image/source_id': dataset_util.bytes_feature(filename),
            'image/encoded': dataset_util.bytes_feature(encoded_jpg),
            'image/format': dataset_util.bytes_feature(image_format),
            'image/object/bbox/xmin': dataset_util.float_list_feature(xmins),
            'image/object/bbox/xmax': dataset_util.float_list_feature(xmaxs),
            'image/object/bbox/ymin': dataset_util.float_list_feature(ymins),
            'image/object/bbox/ymax': dataset_util.float_list_feature(ymaxs),
            'image/object/class/text': dataset_util.bytes_list_feature(classes_text),
            'image/object/class/label': dataset_util.int64_list_feature(classes),
        }))
        return tf_example


    def main(_):
        writer = tf.python_io.TFRecordWriter(FLAGS.output_path)
        path = os.path.join(os.getcwd(), 'images/test')  # change this to your image folder
        examples = pd.read_csv(FLAGS.csv_input)
        grouped = split(examples, 'filename')
        for group in grouped:
            tf_example = create_tf_example(group, path)
            writer.write(tf_example.SerializeToString())

        writer.close()
        output_path = os.path.join(os.getcwd(), FLAGS.output_path)
        print('Successfully created the TFRecords: {}'.format(output_path))


    if __name__ == '__main__':
        tf.app.run()

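In xml2csv.py, change the os.chdir call and the path variable near the top, as well as the name of the CSV file written by to_csv at the end. generate_tfrecord.py needs the same treatment: change the paths to your own, and update the label lookup in class_text_to_int to use your own labels. I have only one label here.

Run the scripts once for the training set and once for the test set to get train.record and test.record.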
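With more than one class, the if/elif chain in class_text_to_int gets awkward. A minimal sketch of a table-driven alternative follows, together with the box normalization the script performs. The LABEL_TO_ID table, the 'XXX' placeholder class, and the normalize_box helper are assumptions for illustration and are not part of the original script.

```python
# Hypothetical label table; 'XXX' stands in for a second class.
LABEL_TO_ID = {'ZhangJN': 1, 'XXX': 2}


def class_text_to_int(row_label):
    """Map a class name to its integer id, failing loudly on unknown labels."""
    try:
        return LABEL_TO_ID[row_label]
    except KeyError:
        raise ValueError('Unknown label: {!r}'.format(row_label))


def normalize_box(xmin, ymin, xmax, ymax, width, height):
    """Scale pixel coordinates to the 0..1 range written into the TFRecord."""
    return (xmin / float(width), ymin / float(height),
            xmax / float(width), ymax / float(height))
```

Raising on an unknown label is a deliberate choice in this sketch: a silently returned None would otherwise surface much later, when the record is written.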

File locations

For convenience, I moved the CSV and record files for train and test from the images folder into object_detection/data. The object_detection folder then has the following structure:

    Object-Detection
    -data/
    --test_labels.csv
    --test.record
    --train_labels.csv
    --train.record
    -images/
    --test/
    ---testingimages.jpg
    --train/
    ---testingimages.jpg
    --...yourimages.jpg
    -training/   # newly created; used shortly for training the model
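The empty folders in this layout can be created by hand or with a few lines of Python. This is only a sketch: the helper name make_layout is made up, and root should point at your own object_detection folder.

```python
import os
import tempfile


def make_layout(root):
    """Create data/, images/train, images/test and training/ under root."""
    subdirs = (
        'data',
        os.path.join('images', 'train'),
        os.path.join('images', 'test'),
        'training',
    )
    for sub in subdirs:
        path = os.path.join(root, sub)
        if not os.path.isdir(path):
            os.makedirs(path)
    return sorted(os.listdir(root))


# Try it in a throwaway directory first.
created = make_layout(tempfile.mkdtemp())
```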

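Config file and pretrained model

Next, set up the config file. Look under object_detection/samples for the config file that matches the model you want.

A pretrained model can also be downloaded from the official model zoo. We use ssd_mobilenet_v1_coco, so download it first. The matching config file, ssd_mobilenet_v1_coco.config, can be found under configs.

In the object_detection folder, extract ssd_mobilenet_v1_coco_2017_11_17.tar.gz.

Put ssd_mobilenet_v1_coco.config in the training folder, then create another file there, object_label.pbtxt, to define the class labels.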

    item {
      id: 1
      name: 'ZhangJN'
    }
    # If there are more classes, add them below
    item {
      id: 2
      name: 'XXX'
    }
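With many classes it may be easier to generate the label map than to type it. The sketch below assigns ids starting at 1 in list order, since id 0 is reserved for the background class. The helper name build_label_map is made up for illustration.

```python
def build_label_map(class_names):
    """Return label-map text with ids assigned from 1 in list order."""
    items = []
    for idx, name in enumerate(class_names, start=1):
        items.append("item {{\n  id: {}\n  name: '{}'\n}}\n".format(idx, name))
    return '\n'.join(items)


label_map_text = build_label_map(['ZhangJN', 'XXX'])
```

Writing label_map_text to training/object_label.pbtxt would reproduce the two-class file shown above, minus the comment.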

The following parts of ssd_mobilenet_v1_coco.config need to be changed:

    ssd {
      num_classes: 1  # set to your actual number of classes; 1 for a single class
      box_coder {
        faster_rcnn_box_coder {
          y_scale: 10.0
          x_scale: 10.0
          height_scale: 5.0
          width_scale: 5.0
        }
      }
    }

    train_config: {
      batch_size: 15  # Change the batch size
      optimizer {
        rms_prop_optimizer: {
          learning_rate: {
            exponential_decay_learning_rate {
              initial_learning_rate: 0.001
              decay_steps: 800720
              decay_factor: 0.95
            }
          }
          momentum_optimizer_value: 0.9
          decay: 0.9
          epsilon: 1.0
        }
      }
      fine_tune_checkpoint: "ssd_mobilenet_v1_coco_11_06_2017/model.ckpt"  # change to match the actual name of the extracted folder
      from_detection_checkpoint: true
      # Note: The below line limits the training process to 200K steps, which we
      # empirically found to be sufficient enough to train the pets dataset. This
      # effectively bypasses the learning rate schedule (the learning rate will
      # never decay). Remove the below line to train indefinitely.
      num_steps: 300  # Number of steps to train
      data_augmentation_options {
        random_horizontal_flip {
        }
      }
      data_augmentation_options {
        ssd_random_crop {
        }
      }
    }

    train_input_reader: {
      tf_record_input_reader {
        input_path: "data/train.record"  # path of our train record
      }
      label_map_path: "training/object_label.pbtxt"  # location of the label file
    }

    eval_config: {
      num_examples: 2000
      # Note: The below line limits the evaluation process to 10 evaluations.
      # Remove the below line to evaluate indefinitely.
      max_evals: 10
    }

    eval_input_reader: {
      tf_record_input_reader {
        input_path: "data/test.record"  # path of our test record
      }
      label_map_path: "training/object_label.pbtxt"  # location of the label file
      shuffle: false
      num_readers: 1
    }
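A quick back-of-the-envelope check shows what these settings mean in practice. The sketch below computes how many training samples are consumed and what the learning rate would be at a given step. It assumes the decay is applied in whole steps of decay_steps (staircase style); that is my assumption about the schedule, not something stated in the config, and both helper names are made up.

```python
import math


def samples_seen(batch_size, num_steps):
    """Total number of training samples consumed over the run."""
    return batch_size * num_steps


def decayed_learning_rate(initial_lr, decay_factor, decay_steps, step, staircase=True):
    """Exponential decay; staircase=True is an assumption, not read from the config."""
    exponent = step / float(decay_steps)
    if staircase:
        exponent = math.floor(exponent)
    return initial_lr * decay_factor ** exponent


total = samples_seen(15, 300)
lr_at_end = decayed_learning_rate(0.001, 0.95, 800720, 300)
```

With batch_size 15 and num_steps 300 the run consumes 4500 samples, and since 300 is far below decay_steps the learning rate stays at its initial value for the whole run, as the note in the config says.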

All the data is now ready, and training can begin.

Training your own model

Run the following in the research directory:

$ python setup.py install

The directory structure is now:

    -object_detection
    --training
    ---ssd_mobilenet_v1_coco.config
    ---object_label.pbtxt
    --data
    ---train.record
    ---test.record
    --ssd_mobilenet_v1_coco_11_06_2017

Run the following commands, adjusted to your own TensorFlow environment:

    $ cd "PATH TO THE MODELS FOLDER"
    $ sudo apt-get install protobuf-compiler python-pil python-lxml
    $ sudo pip install pillow
    $ sudo pip install lxml
    $ sudo pip install jupyter
    $ sudo pip install matplotlib
    $ protoc object_detection/protos/*.proto --python_out=.
    $ export PYTHONPATH=$PYTHONPATH:`pwd`:`pwd`/slim

Train the model:

$ python train.py --logtostderr --train_dir=training/ --pipeline_config_path=training/ssd_mobilenet_v1_coco.config

I ran into a very annoying error:

ValueError: axis = 0 not in [0, 0)

What finally fixed it was editing the loss section of ssd_mobilenet_v1_coco.config:

    loss {
      classification_loss {
        weighted_sigmoid {
          anchorwise_output: true  # add this
        }
      }
      localization_loss {
        weighted_smooth_l1 {
          anchorwise_output: true  # add this
        }
      }
      hard_example_miner {
        num_hard_examples: 3000
        iou_threshold: 0.99
        loss_type: CLASSIFICATION
        max_negatives_per_positive: 3
        min_negatives_per_image: 0
      }
      classification_weight: 1.0
      localization_weight: 1.0
    }

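After that, the model trained normally.

Saving the trained model

python3 export_inference_graph.py --input_type image_tensor --pipeline_config_path training/ssd_mobilenet_v1_coco.config --trained_checkpoint_prefix training/model.ckpt-3737 --output_directory zhangjn_detction

Here the number in trained_checkpoint_prefix must be changed to the step your own training reached, and output_directory is where the exported model should be stored. I created a new folder, zhangjn_detction, for it. When the command finishes, the zhangjn_detction folder contains several files, including saved_model, checkpoint and frozen_inference_graph.pb.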
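Rather than reading the step number off the file names by hand, a small helper can pick the newest checkpoint in the training folder. This sketch assumes checkpoints are saved as model.ckpt-<step>.index alongside their data and meta files; the helper name latest_checkpoint_prefix is made up.

```python
import os
import re

# Assumption: each checkpoint has an index file named model.ckpt-<step>.index
CKPT_PATTERN = re.compile(r'^model\.ckpt-(\d+)\.index$')


def latest_checkpoint_prefix(train_dir):
    """Return the checkpoint prefix with the highest step, or None if none exist."""
    steps = []
    for name in os.listdir(train_dir):
        match = CKPT_PATTERN.match(name)
        if match:
            steps.append(int(match.group(1)))
    if not steps:
        return None
    return os.path.join(train_dir, 'model.ckpt-{}'.format(max(steps)))
```

Calling latest_checkpoint_prefix('training') from the object_detection folder gives a value that can be passed to the checkpoint prefix flag.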

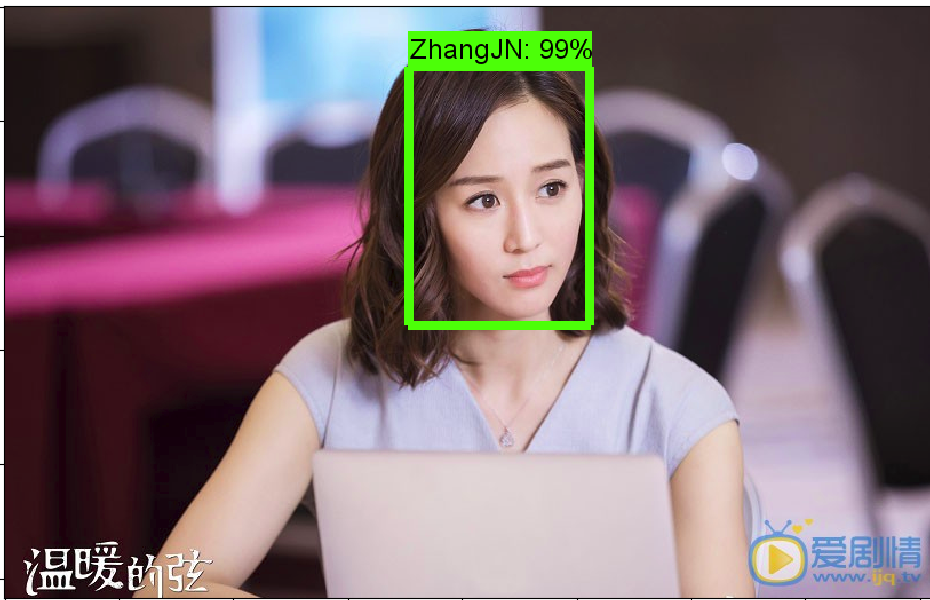

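Testing the model

Open object_detection_tutorial.ipynb in the object_detection directory, or convert it to a Python file, object_detection_tutorial.py (conversion methods are easy to find online). With a few changes it can be used for testing.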

    # coding: utf-8

    # # Object Detection Demo
    # Welcome to the object detection inference walkthrough! This notebook will walk you step by step through the process of using a pre-trained model to detect objects in an image. Make sure to follow the [installation instructions](https://github.com/tensorflow/models/blob/master/research/object_detection/g3doc/installation.md) before you start.

    from distutils.version import StrictVersion
    import numpy as np
    import os
    import six.moves.urllib as urllib
    import sys
    import tarfile
    import tensorflow as tf
    import zipfile

    from collections import defaultdict
    from io import StringIO
    from matplotlib import pyplot as plt
    from PIL import Image

    # This is needed since the notebook is stored in the object_detection folder.
    sys.path.append("..")
    from object_detection.utils import ops as utils_ops

    # if StrictVersion(tf.__version__) < StrictVersion('1.9.0'):
    #     raise ImportError('Please upgrade your TensorFlow installation to v1.9.* or later!')

    # ## Env setup
    # This is needed to display the images.
    # get_ipython().magic(u'matplotlib inline')

    # ## Object detection imports
    # Here are the imports from the object detection module.
    from utils import label_map_util
    from utils import visualization_utils as vis_util

    # # Model preparation
    # ## Variables
    #
    # Any model exported using the `export_inference_graph.py` tool can be loaded here simply by changing `PATH_TO_FROZEN_GRAPH` to point to a new .pb file.
    #
    # By default we use an "SSD with Mobilenet" model here. See the [detection model zoo](https://github.com/tensorflow/models/blob/master/research/object_detection/g3doc/detection_model_zoo.md) for a list of other models that can be run out-of-the-box with varying speeds and accuracies.

    # What model to download.
    MODEL_NAME = 'zhangjn_detction'
    # MODEL_FILE = MODEL_NAME + '.tar.gz'
    # DOWNLOAD_BASE = 'http://download.tensorflow.org/models/object_detection/'

    # Path to frozen detection graph. This is the actual model that is used for the object detection.
    PATH_TO_FROZEN_GRAPH = MODEL_NAME + '/frozen_inference_graph.pb'

    # List of the strings that is used to add correct label for each box.
    # The label map was created in the training folder earlier.
    PATH_TO_LABELS = os.path.join('training', 'object_label.pbtxt')

    NUM_CLASSES = 1

    # ## Download Model
    # opener = urllib.request.URLopener()
    # opener.retrieve(DOWNLOAD_BASE + MODEL_FILE, MODEL_FILE)
    '''
    tar_file = tarfile.open(MODEL_FILE)
    for file in tar_file.getmembers():
        file_name = os.path.basename(file.name)
        if 'frozen_inference_graph.pb' in file_name:
            tar_file.extract(file, os.getcwd())
    '''

    # ## Load a (frozen) Tensorflow model into memory.
    detection_graph = tf.Graph()
    with detection_graph.as_default():
        od_graph_def = tf.GraphDef()
        with tf.gfile.GFile(PATH_TO_FROZEN_GRAPH, 'rb') as fid:
            serialized_graph = fid.read()
            od_graph_def.ParseFromString(serialized_graph)
            tf.import_graph_def(od_graph_def, name='')

    # ## Loading label map
    # Label maps map indices to category names, so that when our convolution network predicts `5`, we know that this corresponds to `airplane`. Here we use internal utility functions, but anything that returns a dictionary mapping integers to appropriate string labels would be fine
    label_map = label_map_util.load_labelmap(PATH_TO_LABELS)
    categories = label_map_util.convert_label_map_to_categories(
        label_map, max_num_classes=NUM_CLASSES, use_display_name=True)
    category_index = label_map_util.create_category_index(categories)


    # ## Helper code
    def load_image_into_numpy_array(image):
        (im_width, im_height) = image.size
        return np.array(image.getdata()).reshape(
            (im_height, im_width, 3)).astype(np.uint8)


    # # Detection
    # For the sake of simplicity we will use only 2 images:
    # image1.jpg
    # image2.jpg
    # If you want to test the code with your images, just add path to the images to the TEST_IMAGE_PATHS.
    PATH_TO_TEST_IMAGES_DIR = 'test_images'
    TEST_IMAGE_PATHS = [os.path.join(PATH_TO_TEST_IMAGES_DIR, 'image{}.jpg'.format(i)) for i in range(3, 8)]

    # Size, in inches, of the output images.
    IMAGE_SIZE = (12, 8)


    def run_inference_for_single_image(image, graph):
        with graph.as_default():
            with tf.Session() as sess:
                # Get handles to input and output tensors
                ops = tf.get_default_graph().get_operations()
                all_tensor_names = {output.name for op in ops for output in op.outputs}
                tensor_dict = {}
                for key in [
                    'num_detections', 'detection_boxes', 'detection_scores',
                    'detection_classes', 'detection_masks'
                ]:
                    tensor_name = key + ':0'
                    if tensor_name in all_tensor_names:
                        tensor_dict[key] = tf.get_default_graph().get_tensor_by_name(
                            tensor_name)
                if 'detection_masks' in tensor_dict:
                    # The following processing is only for single image
                    detection_boxes = tf.squeeze(tensor_dict['detection_boxes'], [0])
                    detection_masks = tf.squeeze(tensor_dict['detection_masks'], [0])
                    # Reframe is required to translate mask from box coordinates to image coordinates and fit the image size.
                    real_num_detection = tf.cast(tensor_dict['num_detections'][0], tf.int32)
                    detection_boxes = tf.slice(detection_boxes, [0, 0], [real_num_detection, -1])
                    detection_masks = tf.slice(detection_masks, [0, 0, 0], [real_num_detection, -1, -1])
                    detection_masks_reframed = utils_ops.reframe_box_masks_to_image_masks(
                        detection_masks, detection_boxes, image.shape[0], image.shape[1])
                    detection_masks_reframed = tf.cast(
                        tf.greater(detection_masks_reframed, 0.5), tf.uint8)
                    # Follow the convention by adding back the batch dimension
                    tensor_dict['detection_masks'] = tf.expand_dims(
                        detection_masks_reframed, 0)
                image_tensor = tf.get_default_graph().get_tensor_by_name('image_tensor:0')

                # Run inference
                output_dict = sess.run(tensor_dict,
                                       feed_dict={image_tensor: np.expand_dims(image, 0)})

                # all outputs are float32 numpy arrays, so convert types as appropriate
                output_dict['num_detections'] = int(output_dict['num_detections'][0])
                output_dict['detection_classes'] = output_dict[
                    'detection_classes'][0].astype(np.uint8)
                output_dict['detection_boxes'] = output_dict['detection_boxes'][0]
                output_dict['detection_scores'] = output_dict['detection_scores'][0]
                if 'detection_masks' in output_dict:
                    output_dict['detection_masks'] = output_dict['detection_masks'][0]
        return output_dict


    for image_path in TEST_IMAGE_PATHS:
        save_path = image_path.split(".")[0] + '_result.jpg'
        image = Image.open(image_path)
        # the array based representation of the image will be used later in order to prepare the
        # result image with boxes and labels on it.
        image_np = load_image_into_numpy_array(image)
        # Expand dimensions since the model expects images to have shape: [1, None, None, 3]
        image_np_expanded = np.expand_dims(image_np, axis=0)
        # Actual detection.
        output_dict = run_inference_for_single_image(image_np, detection_graph)
        # Visualization of the results of a detection.
        vis_util.visualize_boxes_and_labels_on_image_array(
            image_np,
            output_dict['detection_boxes'],
            output_dict['detection_classes'],
            output_dict['detection_scores'],
            category_index,
            instance_masks=output_dict.get('detection_masks'),
            use_normalized_coordinates=True,
            line_thickness=8)
        # plt.figure(figsize=IMAGE_SIZE)
        # plt.imshow(image_np)
        # plt.show()
        plt.imsave(save_path, image_np)

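1. No model needs to be downloaded, so the download-related code can be removed. Change MODEL_NAME, PATH_TO_LABELS and NUM_CLASSES to your own values, and delete the whole Download Model section.

2. For the test images, put a few into the test_images folder and name them image<number>.jpg, so the code hardly needs changing. In the line

    TEST_IMAGE_PATHS = [ os.path.join(PATH_TO_TEST_IMAGES_DIR, 'image{}.jpg'.format(i)) for i in range(3, 8) ]

just change the range to match the numbering of your own images. I use range(3, 8) because my images are numbered 3 through 7.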

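The script runs on a cloud server, which cannot display images, so the results have to be saved to disk instead. Add this at the end of the loop: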

plt.imsave(save_path, image_np)
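One caveat: the script derives save_path with image_path.split(".")[0], which cuts at the first dot anywhere in the path, so a path such as ./test_images/image3.jpg would lose everything but the suffix. A minimal sketch of a sturdier variant using os.path.splitext follows; the helper name result_path is made up for illustration.

```python
import os


def result_path(image_path, suffix='_result'):
    """Insert suffix before the file extension, leaving the directory part intact."""
    stem, ext = os.path.splitext(image_path)
    return stem + suffix + (ext or '.jpg')


fragile = './test_images/image3.jpg'.split('.')[0] + '_result.jpg'
sturdy = result_path('./test_images/image3.jpg')
```

In this example fragile ends up as just '_result.jpg', while sturdy keeps the folder and file name.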

Then run the script:

python3 object_detection_tutorial.py
