Adv: mongo possible faults

from here, quotes:

Please note: our followup analysis of 3.4.0-rc3 revealed additional faults in MongoDB’s replication algorithms which could lead to the loss of acknowledged documents–even with Majority Write Concern, journaling, and fsynced writes.

In May of 2013, we showed that MongoDB 2.4.3 would lose acknowledged writes at all consistency levels. Every write concern less than MAJORITY loses data by design due to rollbacks–but even WriteConcern.MAJORITY lost acknowledged writes, because when the server encountered a network error, it returned a successful, not a failed, response to the client. Happily, that bug was fixed a few releases later.

Since then I’ve improved Jepsen significantly and written a more powerful analyzer for checking whether or not a system is linearizable. I’d like to return to Mongo, now at version 2.6.7, to verify its single-document consistency. (Mongo 3.0 was released during my testing, and I expect they’ll be hammering out single-node data loss bugs for a little while.)

In this post, we’ll see that Mongo’s consistency model is broken by design: not only can “strictly consistent” reads see stale versions of documents, but they can also return garbage data from writes that never should have occurred. The former is (as far as I know) a new result which runs contrary to all of Mongo’s consistency documentation. The latter has been a documented issue in Mongo for some time. We’ll also touch on a result from the previous Jepsen post: almost all write concern levels allow data loss.

This analysis is brought to you by Stripe, where I now work on safety and data integrity–including Jepsen–full time. I’m delighted to work here and excited to talk about new consistency problems!

We’ll start with some background, methods, and an in-depth analysis of an example failure case, but if you’re in a hurry, you can skip ahead to the discussion.

Write consistency

First, we need to understand what MongoDB claims to offer. The Fundamentals FAQ says Mongo offers “atomic writes on a per-document-level”. From the last Jepsen post and the write concern documentation, we know Mongo’s default consistency level (Acknowledged) is unsafe. Why? Because operations are only guaranteed to be durable after they have been acknowledged by a majority of nodes. We must use write concern Majority to ensure that successful operations won’t be discarded by a rollback later.

Why can’t we use a lower write concern? Because rollbacks are only OK if any two versions of the document can be merged associatively, commutatively, and idempotently; e.g. they form a CRDT. Our documents likely don’t have this property.

For instance, consider an increment-only counter, stored as a single integer field in a MongoDB document. If you encounter a rollback and find two copies of the document with the values 5 and 7, the correct value of the counter depends on when they diverged. If the initial value was 0, we could have had five increments on one primary, and seven increments on another: the correct value is 5 + 7 = 12. If, on the other hand, the value on both replicas was 5, and only two inserts occurred on an isolated primary, the correct value should be 7. Or it could be any value in between!

And this assumes your operations (e.g. incrementing) commute! If they’re order-dependent, Mongo will allow flat-out invalid writes to go through, like claiming the same username twice, document ID conflicts, transferring $40 + $30 = $70 out of a $50 account which is never supposed to go negative, and so on.
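The counter arithmetic can be made concrete with a short Clojure sketch. The function merge-counter is a hypothetical helper written for these notes, not part of MongoDB or Jepsen, and it assumes we somehow know the value both copies diverged from, which is exactly the information a rollback does not give us.

```clojure
;; Hypothetical helper: merge two divergent copies of an increment-only
;; counter, given the common ancestor value they both started from.
(defn merge-counter
  [ancestor a b]
  ;; Each side contributed (value - ancestor) increments since the split.
  (+ ancestor (- a ancestor) (- b ancestor)))

(merge-counter 0 5 7) ; => 12, both primaries started from 0
(merge-counter 5 5 7) ; => 7, only one side saw new increments
(merge-counter 3 5 7) ; => 9, one of the values in between
```

The same pair of observed values (5 and 7) produces three different answers, so without the ancestor there is no single correct merge.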

Unless your documents are state-based CRDTs, the following table illustrates which Mongo write concern levels are actually safe:

| Write concern | Also called | Safe? |
| --- | --- | --- |
| Unacknowledged | NORMAL | Unsafe: Doesn’t even bother checking for errors |
| Acknowledged (new default) | SAFE | Unsafe: not even on disk or replicated |
| Journaled | JOURNAL_SAFE | Unsafe: ops could be illegal or just rolled back by another primary |
| Fsynced | FSYNC_SAFE | Unsafe: ditto, constraint violations & rollbacks |
| Replica Acknowledged | REPLICAS_SAFE | Unsafe: ditto, another primary might overrule |
| Majority | MAJORITY | Safe: no rollbacks (but check the fsync/journal fields) |

So: if you use MongoDB, you should almost always be using the Majority write concern. Anything less is asking for data corruption or loss when a primary transition occurs.

Read consistency

The Fundamentals FAQ doesn’t just promise atomic writes, though: it also claims “fully-consistent reads”. The Replication Introduction goes on:

The primary accepts all write operations from clients. Replica set can have only one primary. Because only one member can accept write operations, replica sets provide strict consistency for all reads from the primary.

What does “strict consistency” mean? Mongo’s glossary defines it as

A property of a distributed system requiring that all members always reflect the latest changes to the system. In a database system, this means that any system that can provide data must reflect the latest writes at all times. In MongoDB, reads from a primary have strict consistency; reads from secondary members have eventual consistency.

The Replication docs agree: “By default, in MongoDB, read operations to a replica set return results from the primary and are consistent with the last write operation”, as distinct from “eventual consistency”, where “the secondary member’s state will eventually reflect the primary’s state.”

Read consistency is controlled by the Read Preference, which emphasizes that reads from the primary will see the “latest version”:

By default, an application directs its read operations to the primary member in a replica set. Reading from the primary guarantees that read operations reflect the latest version of a document.

And goes on to warn that:

…. modes other than primary can and will return stale data because the secondary queries will not include the most recent write operations to the replica set’s primary.

All read preference modes except primary may return stale data because secondaries replicate operations from the primary with some delay. Ensure that your application can tolerate stale data if you choose to use a non-primary mode.

So, if we write at write concern Majority, and read with read preference Primary (the default), we should see the most recently written value.

CaS rules everything around me

When writes and reads are concurrent, what does “most recent” mean? Which states are we guaranteed to see? What could we see?

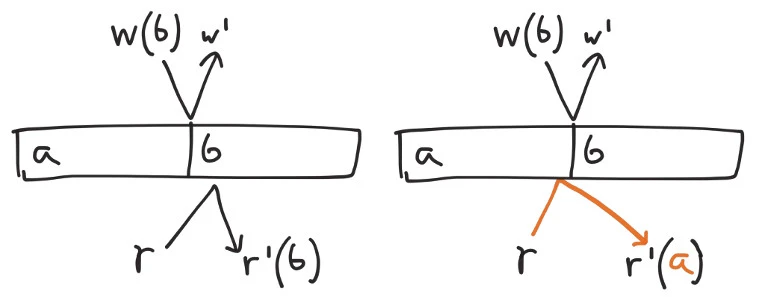

For concurrent operations, the absence of synchronized clocks prevents us from establishing a total order. We must allow each operation to come just before, or just after, any other in-flight writes. If we write a, initiate a write of b, then perform a read, we could see either a or b depending on which operation takes place first.

On the other hand, we obviously shouldn’t interact with a value from the future–we have no way to tell what those operations will be. The latest possible state an operation can see is the one just prior to the response received by the client.

And because we need to see the “most recent write operation”, we should not be able to interact with any state prior to the ops concurrent with the most recently acknowledged write. It should be impossible, for example, to write

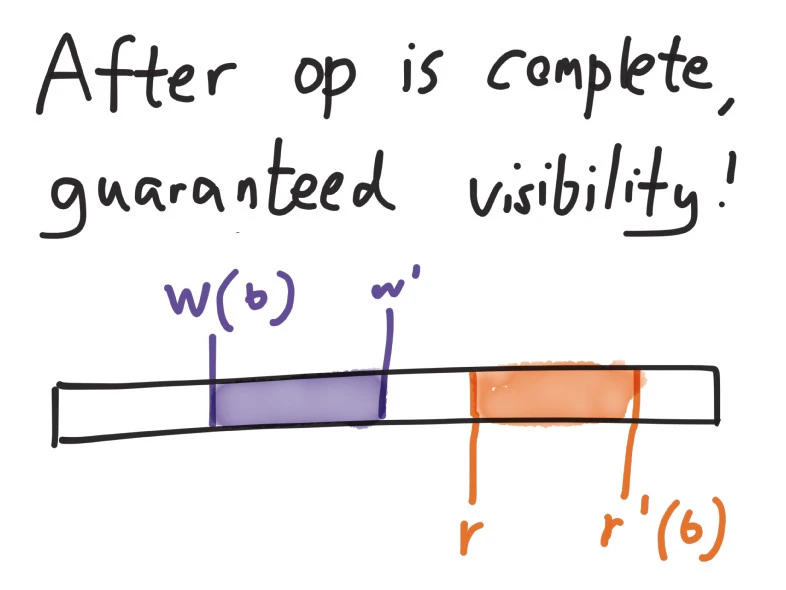

a, writeb, then reada: since the writes ofaandbare not concurrent, the second should always win.This is a common strong consistency model for concurrent data structures called linearizability. In a nutshell, every successful operation must appear to occur atomically at some time between its invocation and response.
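For tiny histories, the rule that each operation takes effect somewhere between its invocation and its response can be checked by brute force. What follows is a minimal sketch and not how Knossos works: the function linearizable?, the op map shape (:f, :value, :start, :end), and the integer timestamps on a single global clock are all assumptions made for illustration.

```clojure
;; Illustrative brute-force check for a read/write register.
;; :start and :end are invocation and response times (assumed, see above).
(defn linearizable?
  "True if some total order of ops respects real-time order and
  register semantics, starting from register value `state`."
  ([ops] (linearizable? nil ops))
  ([state ops]
   (or (empty? ops)
       (boolean
        (some
         (fn [i]
           (let [op     (nth ops i)
                 others (concat (take i ops) (drop (inc i) ops))]
             (and
              ;; op may come next only if no other remaining op
              ;; completed before op was invoked
              (not-any? #(< (:end %) (:start op)) others)
              (case (:f op)
                :write (linearizable? (:value op) others)
                :read  (and (= state (:value op))
                            (linearizable? state others))))))
         (range (count ops)))))))

(def sequential
  [{:f :write :value :a :start 0 :end 1}
   {:f :write :value :b :start 2 :end 3}
   {:f :read  :value :a :start 4 :end 5}])

(def concurrent
  [{:f :write :value :a :start 0 :end 1}
   {:f :write :value :b :start 2 :end 6} ; still in flight during the read
   {:f :read  :value :a :start 3 :end 4}])

(linearizable? sequential) ; => false
(linearizable? concurrent) ; => true
```

In the sequential history the write of b completes before the read begins, so reading a is illegal. In the concurrent history the write of b overlaps the read, so the read may legally land on either side of it.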

So in Jepsen, we’ll model a MongoDB document as a linearizable compare-and-set (CaS) register, supporting three operations:

- write(x'): set the register’s value to x'
- read(x): read the current value x. Only succeeds if the current value is actually x.
- cas(x, x'): if and only if the value is currently x, set it to x'.

We can express this consistency model as a singlethreaded datatype in Clojure. Given a register containing a value, and an operation op, the step function returns the new state of the register–or a special inconsistent value if the operation couldn’t take place.

    (defrecord CASRegister [value]
      Model
      (step [r op]
        (condp = (:f op)
          :write (CASRegister. (:value op))
          :cas   (let [[cur new] (:value op)]
                   (if (= cur value)
                     (CASRegister. new)
                     (inconsistent (str "can't CAS " value " from " cur
                                        " to " new))))
          :read  (if (or (nil? (:value op))
                         (= value (:value op)))
                   r
                   (inconsistent (str "can't read " (:value op)
                                      " from register " value))))))

Then we’ll have Jepsen generate a mix of read, write, and CaS operations, and apply those operations to a five-node Mongo cluster. Over the course of a few minutes we’ll have five clients perform those random read, write, and CaS ops against the cluster, while a special nemesis process creates and resolves network partitions to induce cluster transitions.

Finally, we’ll have Knossos analyze the resulting concurrent history of all clients' operations, in search of a linearizable path through the history.
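To try the register semantics without pulling in Knossos, here is a self-contained sketch that uses plain values instead of the Model protocol. The names step-register and run-history, and the :inconsistent marker keyword, are assumptions of this sketch rather than Knossos API. It only replays a sequential list of operations and does not search concurrent histories.

```clojure
;; Plain-function version of the CaS register model (illustrative only).
(defn step-register
  "Returns the register's next value, or :inconsistent if op is illegal."
  [value op]
  (case (:f op)
    :write (:value op)
    :cas   (let [[cur new-value] (:value op)]
             (if (= cur value) new-value :inconsistent))
    :read  (if (or (nil? (:value op)) (= value (:value op)))
             value
             :inconsistent)))

(defn run-history
  "Folds ops over an initial value, stopping at the first illegal op."
  [init ops]
  (reduce (fn [value op]
            (let [next-value (step-register value op)]
              (if (= :inconsistent next-value)
                (reduced :inconsistent)
                next-value)))
          init
          ops))

(run-history 0 [{:f :write :value 3}
                {:f :cas   :value [3 1]}
                {:f :read  :value 1}])  ; => 1

(run-history 0 [{:f :write :value 3}
                {:f :read  :value 0}])  ; => :inconsistent
```

A linearizability checker is, roughly, a search for some ordering of the concurrent operations under which a fold like this never reaches the inconsistent state.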

Inconsistent reads

Surprise! Even when all writes and CaS ops use the Majority write concern, and all reads use the Primary read preference, operations on a single document in MongoDB are not linearizable. Reads taking place just after the start of a network partition demonstrate impossible behaviors.

In this history, an anomaly appears shortly after the nemesis isolates nodes n1 and n3 from n2, n4, and n5. Each line shows a singlethreaded process (e.g. 2) performing (e.g. :invoke) an operation (e.g. :read), with a value (e.g. 3).

An :invoke indicates the start of an operation. If it completes successfully, the process logs a corresponding :ok. If it fails (by which we mean the operation definitely did not take place) we log :fail instead. If the operation crashes–for instance, if the network drops, a machine crashes, a timeout occurs, etc.–we log an :info message, and that operation remains concurrent with every subsequent op in the history. Crashed operations could take effect at any future time.
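The bookkeeping behind these four event types can be sketched as a small fold. This is an illustration under assumptions, not Jepsen's own code: the function open-ops is hypothetical, ops are assumed to be maps with :process, :type, :f, and :value keys, and each process is assumed to have at most one operation in flight.

```clojure
;; Illustrative: find operations that may still take effect.
(defn open-ops
  "Returns the invocations that never received an :ok or :fail."
  [history]
  (vals
   (reduce (fn [open {:keys [process type] :as op}]
             (case type
               :invoke     (assoc open process op)
               (:ok :fail) (dissoc open process) ; definitely finished
               :info       open))                ; crashed: stays open
           {}
           history)))

(def sample-history
  [{:process 1 :type :invoke :f :read  :value 3}
   {:process 1 :type :ok     :f :read  :value 3}
   {:process 2 :type :invoke :f :cas   :value [4 2]}
   {:process 2 :type :fail   :f :cas   :value [4 2]}
   {:process 1 :type :invoke :f :cas   :value [3 1]}
   {:process 2 :type :invoke :f :write :value 4}
   {:process 2 :type :info   :f :write :value :network-error}
   {:process 1 :type :info   :f :cas   :value :network-error}])

(set (map :value (open-ops sample-history))) ; => #{[3 1] 4}
```

The two crashed operations remain open at the end of the sample, so a checker has to consider them as possibly taking effect at any later point.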

    Not linearizable. Linearizable prefix was:
    2 :invoke :read 3
    4 :invoke :write 3
    ...
    4 :invoke :read 0
    4 :ok :read 0
    :nemesis :info :start "Cut off {:n5 #{:n3 :n1}, :n2 #{:n3 :n1}, :n4 #{:n3 :n1}, :n1 #{:n4 :n2 :n5}, :n3 #{:n4 :n2 :n5}}"
    1 :invoke :cas [1 4]
    1 :fail :cas [1 4]
    3 :invoke :cas [4 4]
    3 :fail :cas [4 4]
    2 :invoke :cas [1 0]
    2 :fail :cas [1 0]
    0 :invoke :read 0
    0 :ok :read 0
    4 :invoke :read 0
    4 :ok :read 0
    1 :invoke :read 0
    1 :ok :read 0
    3 :invoke :cas [2 1]
    3 :fail :cas [2 1]
    2 :invoke :read 0
    2 :ok :read 0
    0 :invoke :cas [0 4]
    4 :invoke :cas [2 3]
    4 :fail :cas [2 3]
    1 :invoke :read 4
    1 :ok :read 4
    3 :invoke :cas [4 2]
    2 :invoke :cas [1 1]
    2 :fail :cas [1 1]
    4 :invoke :write 3
    1 :invoke :read 3
    1 :ok :read 3
    2 :invoke :cas [4 2]
    2 :fail :cas [4 2]
    1 :invoke :cas [3 1]
    2 :invoke :write 4
    0 :info :cas :network-error
    2 :info :write :network-error
    3 :info :cas :network-error
    4 :info :write :network-error
    1 :info :cas :network-error
    5 :invoke :write 1
    5 :fail :write 1
    ... more failing ops which we can ignore since