1. Environment: Maven 3.6.3, spark-core_2.12-3.2.1, Java 1.8

2. Problem description

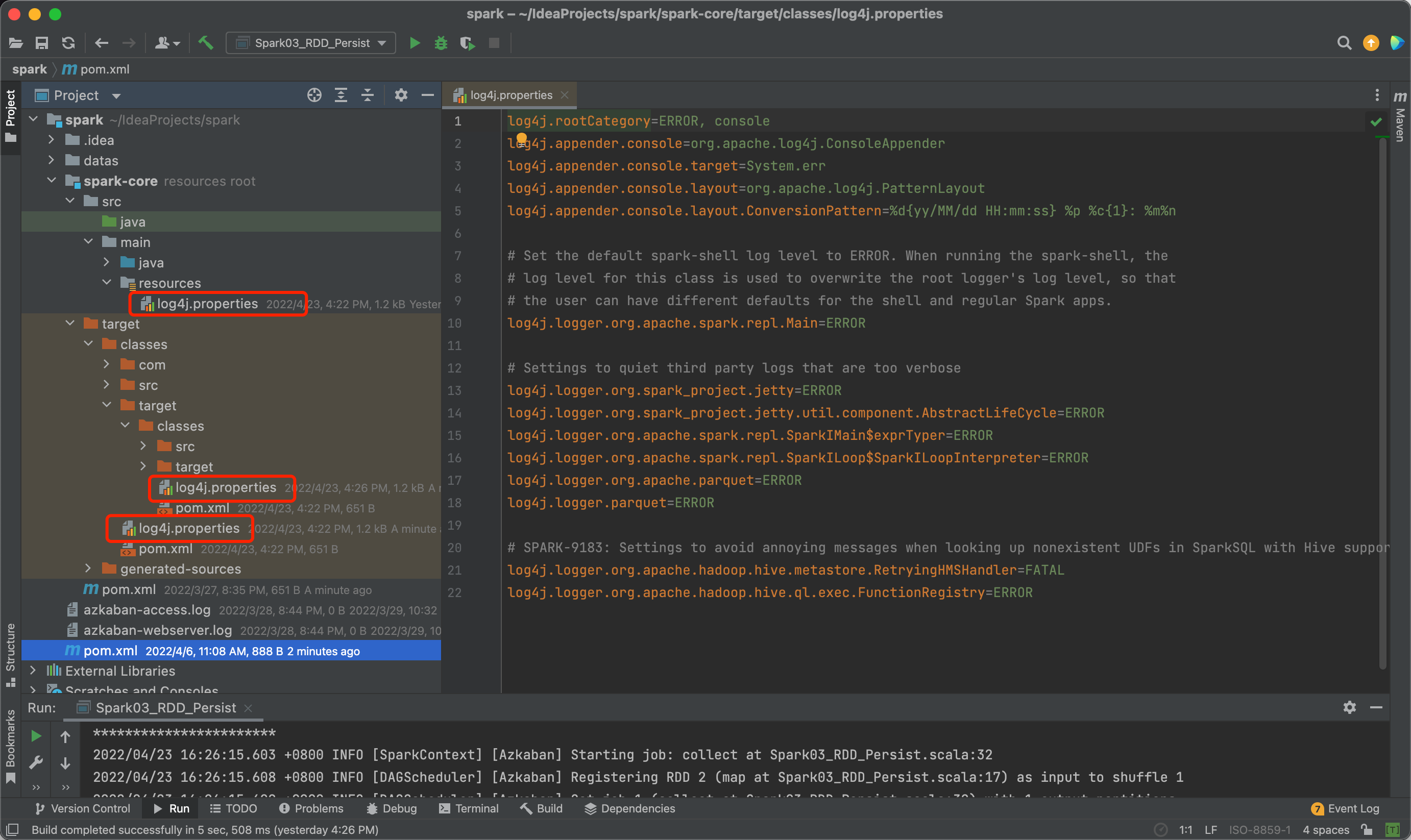

2.1 I added a log4j.properties so that only the program output and ERROR-level messages are printed to the console, but it has no effect.

2.2 log4j.properties

log4j.rootCategory=ERROR, console

log4j.appender.console=org.apache.log4j.ConsoleAppender

log4j.appender.console.target=System.err

log4j.appender.console.layout=org.apache.log4j.PatternLayout

log4j.appender.console.layout.ConversionPattern=%d{yy/MM/dd HH:mm:ss} %p %c{1}: %m%n

# Set the default spark-shell log level to ERROR. When running the spark-shell, the

# log level for this class is used to overwrite the root logger's log level, so that

# the user can have different defaults for the shell and regular Spark apps.

log4j.logger.org.apache.spark.repl.Main=ERROR

# Settings to quiet third party logs that are too verbose

log4j.logger.org.spark_project.jetty=ERROR

log4j.logger.org.spark_project.jetty.util.component.AbstractLifeCycle=ERROR

log4j.logger.org.apache.spark.repl.SparkIMain$exprTyper=ERROR

log4j.logger.org.apache.spark.repl.SparkILoop$SparkILoopInterpreter=ERROR

log4j.logger.org.apache.parquet=ERROR

log4j.logger.parquet=ERROR

# SPARK-9183: Settings to avoid annoying messages when looking up nonexistent UDFs in SparkSQL with Hive support

log4j.logger.org.apache.hadoop.hive.metastore.RetryingHMSHandler=FATAL

log4j.logger.org.apache.hadoop.hive.ql.exec.FunctionRegistry=ERROR
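A quick way to check whether this file is the one the JVM actually picks up is to load the first `log4j.properties` the class loader returns and print its root setting. The sketch below assumes log4j 1.x behaviour (it reads `log4j.rootLogger` and falls back to `log4j.rootCategory`); the class name `EffectiveRootLevel` and its helper methods are made up for illustration.

```java
import java.io.IOException;
import java.io.InputStream;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.net.URL;
import java.util.Properties;

// Hypothetical helper, not part of Spark or log4j.
class EffectiveRootLevel {

    /** Returns the root logger setting: log4j.rootLogger if present, else log4j.rootCategory. */
    static String rootSetting(Properties props) {
        String value = props.getProperty("log4j.rootLogger");
        return value != null ? value : props.getProperty("log4j.rootCategory");
    }

    /** Parses properties text, so the lookup can be tried without a file on disk. */
    static Properties parse(String text) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(text));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return props;
    }

    public static void main(String[] args) throws IOException {
        // getResource returns the first copy in class loader lookup order.
        URL first = EffectiveRootLevel.class.getClassLoader().getResource("log4j.properties");
        if (first == null) {
            System.out.println("no log4j.properties on the classpath");
            return;
        }
        Properties props = new Properties();
        try (InputStream in = first.openStream()) {
            props.load(in);
        }
        // If the printed URL is not your own file, another copy is shadowing it.
        System.out.println(first + " -> " + rootSetting(props));
    }
}
```

If the printed URL points into a jar rather than the project's own resources directory, the file above is being shadowed by another copy.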

2.3 As shown in the screenshot


3. Resolution

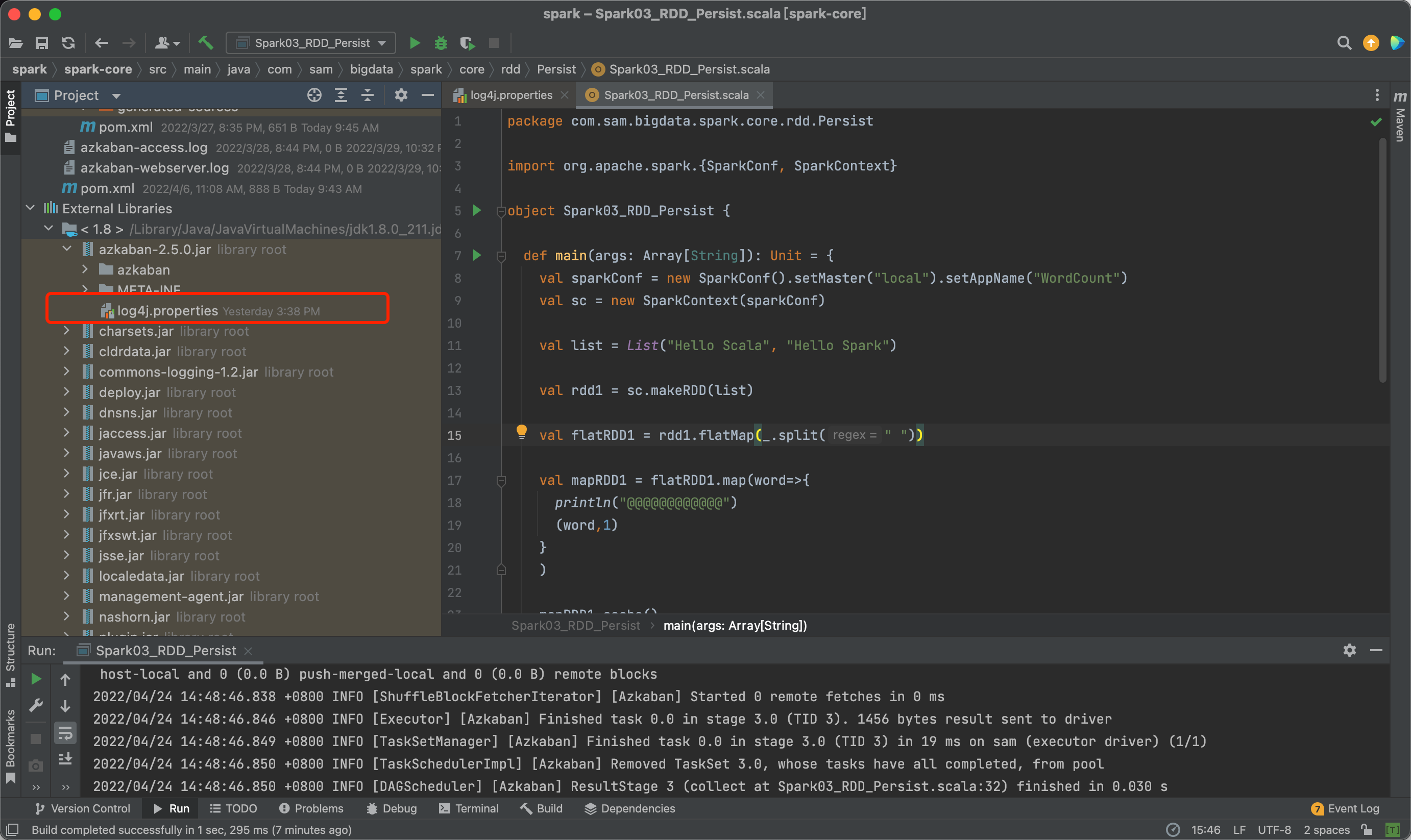

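3.1 After searching online, I found that the azkaban.2.5.0.jar under Java 1.8 already contains a log4j.properties, which took precedence over the one I created. I deleted azkaban.2.5.0.jar to fix this. (If anyone can suggest another solution, I would appreciate it.)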
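One possible alternative to deleting the jar, sketched here under assumptions: list every `log4j.properties` visible on the classpath, then point log4j at the intended copy through the `log4j.configuration` system property, which log4j 1.x consults during its default initialization. The class name `Log4jConfigCheck` and the "first copy outside a jar" heuristic are hypothetical, and this has not been tried against the azkaban setup described above.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.URL;
import java.util.Collections;
import java.util.List;

// Hypothetical helper, not part of Spark or log4j.
class Log4jConfigCheck {

    /** Returns every copy of the named resource the class loader can see, in lookup order. */
    static List<URL> findAll(ClassLoader loader, String resourceName) {
        try {
            return Collections.list(loader.getResources(resourceName));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /**
     * Heuristic (an assumption): a copy under a plain directory such as
     * target/classes has protocol "file", while a copy inside a jar has "jar".
     */
    static URL firstOutsideJar(List<URL> candidates) {
        for (URL url : candidates) {
            if ("file".equals(url.getProtocol())) {
                return url;
            }
        }
        return null;
    }

    public static void main(String[] args) {
        ClassLoader loader = Log4jConfigCheck.class.getClassLoader();
        List<URL> copies = findAll(loader, "log4j.properties");
        for (URL url : copies) {
            System.out.println("found: " + url);
        }
        URL own = firstOutsideJar(copies);
        if (own != null) {
            // Must run before the first Logger or SparkContext is created,
            // because log4j 1.x reads this property only once at startup.
            System.setProperty("log4j.configuration", own.toString());
            System.out.println("pinned: " + own);
        }
    }
}
```

The same property can also be passed on the command line, for example `-Dlog4j.configuration=file:/path/to/log4j.properties` (the path is a placeholder), which avoids changing application code.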


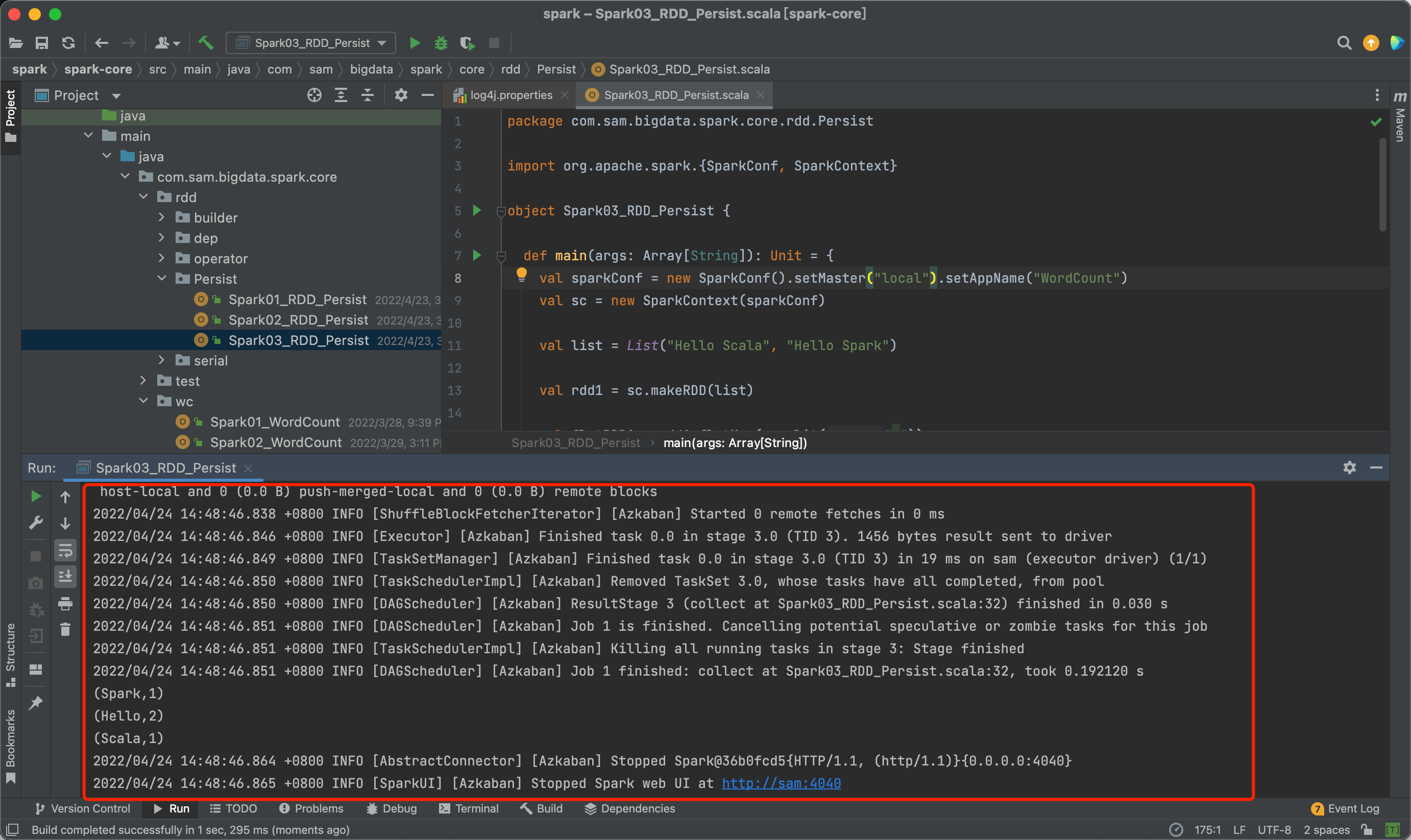

3.1 修改前的运行结果

![image]()

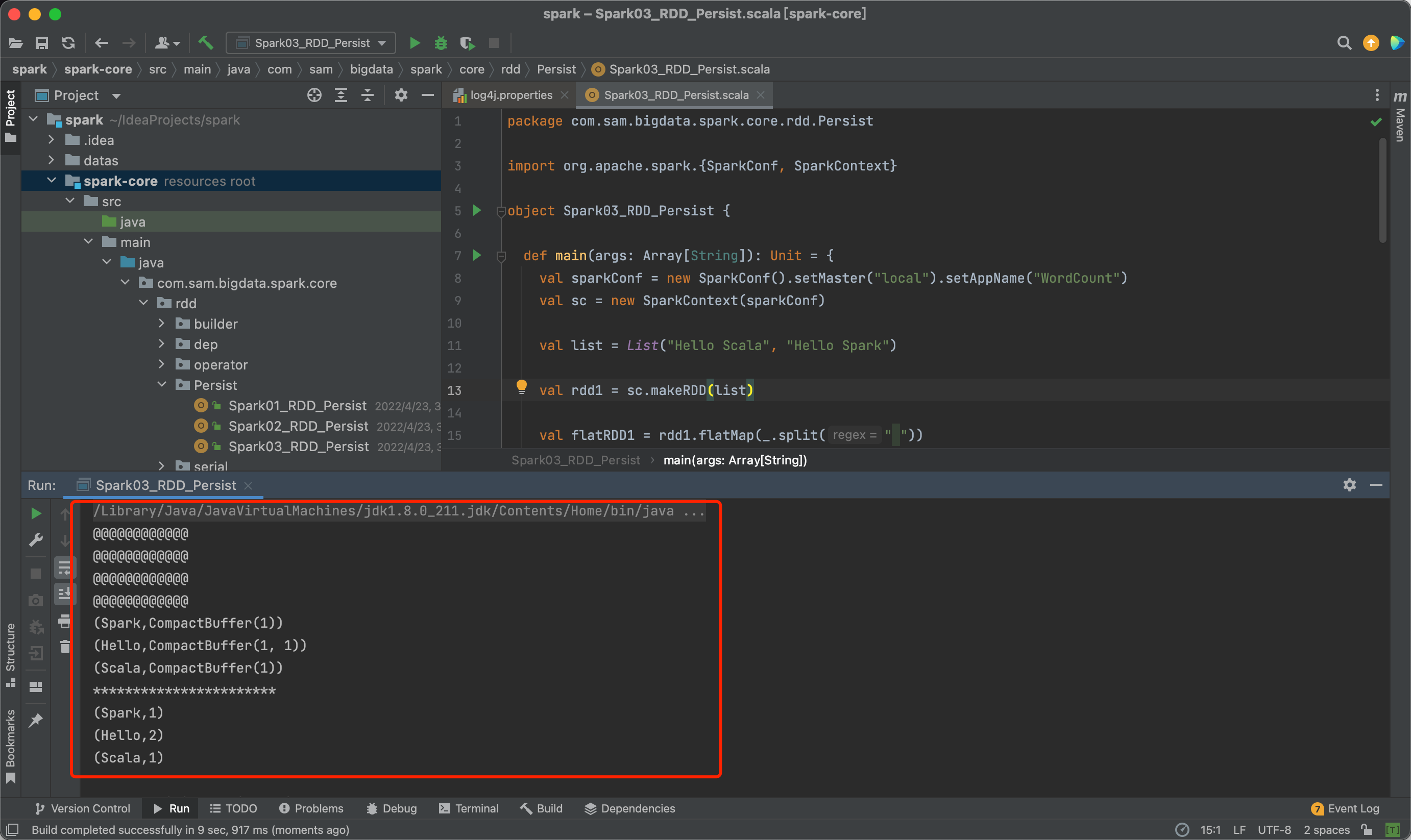

3.2 修改后的运行结果


