Experiment 3: Naive Bayes Algorithm

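【Objectives】

Understand the principle of the naive Bayes algorithm and master its overall framework.

【Tasks】

For the data in the table below, write a Python program that implements the naive Bayes algorithm (without using the sklearn package) and uses it to make predictions on input data;

Get familiar with the naive Bayes classifiers in the sklearn library, write a naive Bayes program with sklearn, and use it to make predictions on input data.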

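【Report Requirements】

Following the tasks above, describe the experimental procedure, the algorithm and the test results;

Keep the code clean: consistent naming conventions and comments;

Consult the literature and discuss application scenarios of the naive Bayes algorithm.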

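| Color (色泽) | Root (根蒂) | Knock sound (敲声) | Texture (纹理) | Navel (脐部) | Touch (触感) | Good melon (好瓜) |
|---|---|---|---|---|---|---|
| green (青绿) | curled (蜷缩) | muffled (浊响) | clear (清晰) | sunken (凹陷) | hard-smooth (硬滑) | yes (是) |
| dark (乌黑) | curled (蜷缩) | dull (沉闷) | clear (清晰) | sunken (凹陷) | hard-smooth (硬滑) | yes (是) |
| dark (乌黑) | curled (蜷缩) | muffled (浊响) | clear (清晰) | sunken (凹陷) | hard-smooth (硬滑) | yes (是) |
| green (青绿) | curled (蜷缩) | dull (沉闷) | clear (清晰) | sunken (凹陷) | hard-smooth (硬滑) | yes (是) |
| light (浅白) | curled (蜷缩) | muffled (浊响) | clear (清晰) | sunken (凹陷) | hard-smooth (硬滑) | yes (是) |
| green (青绿) | slightly curled (稍蜷) | muffled (浊响) | clear (清晰) | slightly sunken (稍凹) | soft-sticky (软粘) | yes (是) |
| dark (乌黑) | slightly curled (稍蜷) | muffled (浊响) | slightly blurry (稍糊) | slightly sunken (稍凹) | soft-sticky (软粘) | yes (是) |
| dark (乌黑) | slightly curled (稍蜷) | muffled (浊响) | clear (清晰) | slightly sunken (稍凹) | hard-smooth (硬滑) | yes (是) |
| dark (乌黑) | slightly curled (稍蜷) | dull (沉闷) | slightly blurry (稍糊) | slightly sunken (稍凹) | hard-smooth (硬滑) | no (否) |
| green (青绿) | stiff (硬挺) | crisp (清脆) | clear (清晰) | flat (平坦) | soft-sticky (软粘) | no (否) |
| light (浅白) | stiff (硬挺) | crisp (清脆) | blurry (模糊) | flat (平坦) | hard-smooth (硬滑) | no (否) |
| light (浅白) | curled (蜷缩) | muffled (浊响) | blurry (模糊) | flat (平坦) | soft-sticky (软粘) | no (否) |
| green (青绿) | slightly curled (稍蜷) | muffled (浊响) | slightly blurry (稍糊) | sunken (凹陷) | hard-smooth (硬滑) | no (否) |
| light (浅白) | slightly curled (稍蜷) | dull (沉闷) | slightly blurry (稍糊) | sunken (凹陷) | hard-smooth (硬滑) | no (否) |
| dark (乌黑) | slightly curled (稍蜷) | muffled (浊响) | clear (清晰) | slightly sunken (稍凹) | soft-sticky (软粘) | no (否) |
| light (浅白) | curled (蜷缩) | muffled (浊响) | blurry (模糊) | flat (平坦) | hard-smooth (硬滑) | no (否) |
| green (青绿) | curled (蜷缩) | dull (沉闷) | slightly blurry (稍糊) | slightly sunken (稍凹) | hard-smooth (硬滑) | no (否) |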

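I. For the data in the table above, write a Python program that implements the naive Bayes algorithm (without using the sklearn package) and uses it to make predictions on input data;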

(1) Creating the data

```python
import numpy as np
import pandas as pd
from math import exp, sqrt, pi


def getDataSet():
    """Return the watermelon samples and the names of their features.

    Each row holds the six discrete attributes, 密度 (density), 含糖量 (sugar content)
    and, last, the label (1 = good melon, 0 = not a good melon).
    """
    dataSet = [
        ['青绿', '蜷缩', '浊响', '清晰', '凹陷', '硬滑', 0.697, 0.460, 1],
        ['乌黑', '蜷缩', '沉闷', '清晰', '凹陷', '硬滑', 0.774, 0.376, 1],
        ['乌黑', '蜷缩', '浊响', '清晰', '凹陷', '硬滑', 0.634, 0.264, 1],
        ['青绿', '蜷缩', '沉闷', '清晰', '凹陷', '硬滑', 0.608, 0.318, 1],
        ['浅白', '蜷缩', '浊响', '清晰', '凹陷', '硬滑', 0.556, 0.215, 1],
        ['青绿', '稍蜷', '浊响', '清晰', '稍凹', '软粘', 0.403, 0.237, 1],
        ['乌黑', '稍蜷', '浊响', '稍糊', '稍凹', '软粘', 0.481, 0.149, 1],
        ['乌黑', '稍蜷', '浊响', '清晰', '稍凹', '硬滑', 0.437, 0.211, 1],
        ['乌黑', '稍蜷', '沉闷', '稍糊', '稍凹', '硬滑', 0.666, 0.091, 0],
        ['青绿', '硬挺', '清脆', '清晰', '平坦', '软粘', 0.243, 0.267, 0],
        ['浅白', '硬挺', '清脆', '模糊', '平坦', '硬滑', 0.245, 0.057, 0],
        ['浅白', '蜷缩', '浊响', '模糊', '平坦', '软粘', 0.343, 0.099, 0],
        ['青绿', '稍蜷', '浊响', '稍糊', '凹陷', '硬滑', 0.639, 0.161, 0],
        ['浅白', '稍蜷', '沉闷', '稍糊', '凹陷', '硬滑', 0.657, 0.198, 0],
        ['乌黑', '稍蜷', '浊响', '清晰', '稍凹', '软粘', 0.360, 0.370, 0],
        ['浅白', '蜷缩', '浊响', '模糊', '平坦', '硬滑', 0.593, 0.042, 0],
        ['青绿', '蜷缩', '沉闷', '稍糊', '稍凹', '硬滑', 0.719, 0.103, 0]
    ]
    features = ['色泽', '根蒂', '敲声', '纹理', '脐部', '触感', '密度', '含糖量']
    return dataSet, features


dataSet, features = getDataSet()
# Put the samples into a DataFrame; the extra last column '好瓜' (good melon) holds the label
dataFrame = pd.DataFrame(dataSet, columns=features + ['好瓜'])
```
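The rows returned by getDataSet also carry two continuous columns, 密度 (density) and 含糖量 (sugar content), and the block imports exp, sqrt and pi, yet the counting model in the next step only handles discrete values. A common way to use such columns, shown here as a sketch and not as the author's method, is to assume each one is normally distributed within each class and use the normal density in place of $P(x_i \mid c)$. The helper names gaussianPdf and fitGaussian are hypothetical, and only the density column is used to keep the example short:

```python
from math import exp, sqrt, pi
from statistics import mean, variance

# Density (密度) values copied from getDataSet, grouped by label (1 = good melon, 0 = not)
densityByClass = {
    1: [0.697, 0.774, 0.634, 0.608, 0.556, 0.403, 0.481, 0.437],
    0: [0.666, 0.243, 0.245, 0.343, 0.639, 0.657, 0.360, 0.593, 0.719],
}


def gaussianPdf(x, mu, var):
    """Normal density N(x; mu, var), used in place of P(x_i | c) for a continuous attribute."""
    return exp(-(x - mu) ** 2 / (2 * var)) / sqrt(2 * pi * var)


def fitGaussian(values):
    """Per-class mean and sample variance (n - 1 in the denominator) of one attribute."""
    return mean(values), variance(values)


params = {label: fitGaussian(values) for label, values in densityByClass.items()}
for label, (mu, var) in params.items():
    # Likelihood of density 0.697 (the first sample) under each class
    print(label, round(mu, 3), round(sqrt(var), 3), round(gaussianPdf(0.697, mu, var), 3))
```

For the first sample this gives a density of roughly 1.96 under "good melon" and 1.20 under "not good". Values above 1 are fine here because these are probability densities, not probabilities; they enter the product exactly like the discrete $P(x_i \mid c)$ factors.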

(2) Algorithm implementation

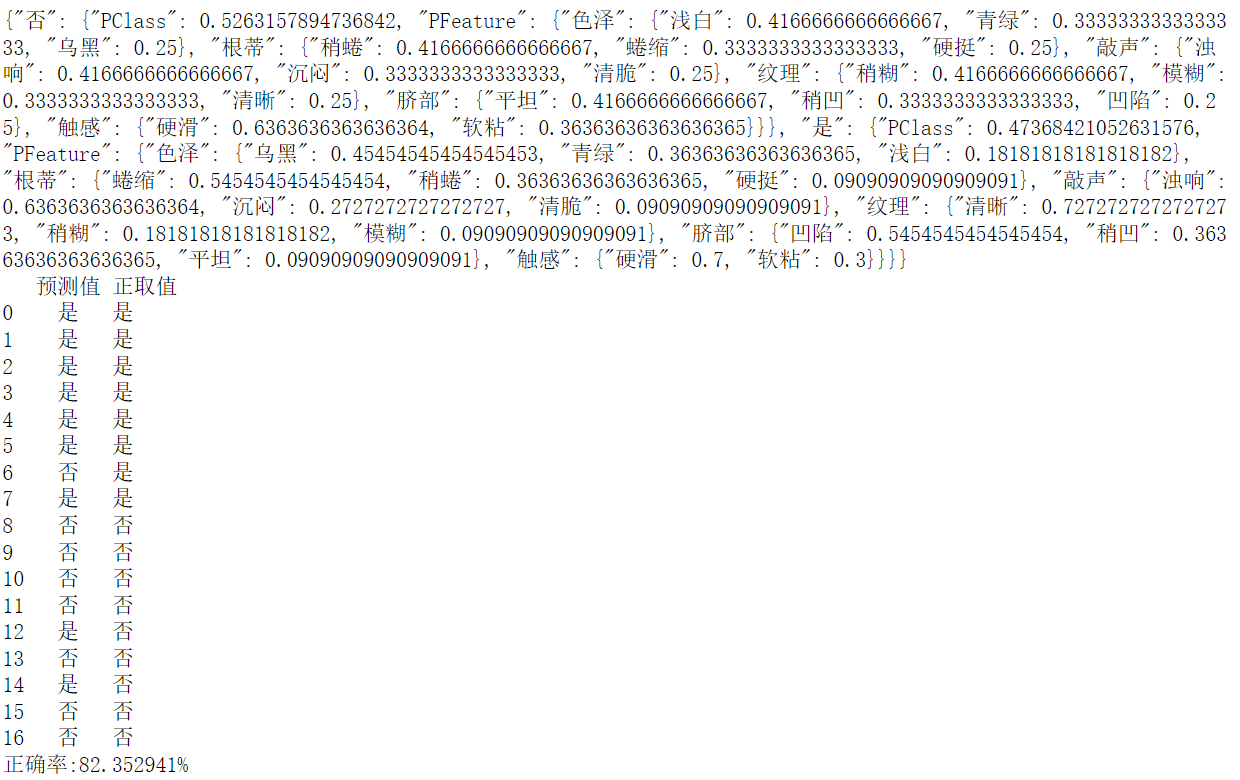

```python
import json

import numpy as np
import pandas as pd


class NaiveBayes:
    def __init__(self):
        # key: class label; value: {'PClass': prior of the class,
        #                            'PFeature': {feature: {value: P(value | class)}}}
        self.model = {}

    def calEntropy(self, y):
        # Entropy of a label Series (not used by the classifier itself)
        valRate = y.value_counts().apply(lambda x: x / y.size)  # relative frequency of each value
        valEntropy = np.inner(valRate, np.log2(valRate)) * -1
        return valEntropy

    def fit(self, xTrain, yTrain=None):
        # If yTrain is not given, the last column of xTrain is used as the class label
        if yTrain is not None:
            xTrain = pd.concat([xTrain, yTrain], axis=1)
        self.model = self.buildNaiveBayes(xTrain)
        return self.model

    def buildNaiveBayes(self, xTrain):
        yTrain = xTrain.iloc[:, -1]
        yTrainCounts = yTrain.value_counts()  # number of samples per class
        # Class priors with Laplace smoothing: (N_c + 1) / (N + number of classes)
        yTrainCounts = yTrainCounts.apply(lambda x: (x + 1) / (yTrain.size + yTrainCounts.size))
        retModel = {}
        for nameClass, val in yTrainCounts.items():
            retModel[nameClass] = {'PClass': val, 'PFeature': {}}

        propNamesAll = xTrain.columns[:-1]
        # All distinct values of each feature across the whole training set
        allPropByFeature = {}
        for nameFeature in propNamesAll:
            allPropByFeature[nameFeature] = list(xTrain[nameFeature].value_counts().index)

        for nameClass, group in xTrain.groupby(xTrain.columns[-1]):
            for nameFeature in propNamesAll:
                propDatas = group[nameFeature]
                # Count each value within this class; values that never occur in the class get 0
                propClassSummary = propDatas.value_counts().reindex(
                    allPropByFeature[nameFeature], fill_value=0)
                Ni = len(allPropByFeature[nameFeature])
                # Conditional probabilities with Laplace smoothing: (N_c,x + 1) / (N_c + N_i)
                propClassSummary = propClassSummary.apply(lambda x: (x + 1) / (propDatas.size + Ni))
                retModel[nameClass]['PFeature'][nameFeature] = dict(propClassSummary.items())
        return retModel

    def predictBySeries(self, data):
        curMaxRate = None
        curClassSelect = None
        for nameClass, infoModel in self.model.items():
            # Sum logs instead of multiplying, so many small probabilities do not underflow to 0
            rate = np.log(infoModel['PClass'])
            PFeature = infoModel['PFeature']
            for nameFeature, val in data.items():
                propsRate = PFeature.get(nameFeature)
                if not propsRate:
                    continue  # skip columns that are not features (e.g. the label)
                rate += np.log(propsRate.get(val, 0))  # a value unseen in training gives log(0) = -inf
            if curMaxRate is None or rate > curMaxRate:
                curMaxRate = rate
                curClassSelect = nameClass
        return curClassSelect

    def predict(self, data):
        if isinstance(data, pd.Series):
            return self.predictBySeries(data)
        return data.apply(lambda d: self.predictBySeries(d), axis=1)


dataTrain = pd.read_csv("D:/机器学习/data_word.csv", encoding="gbk")
naiveBayes = NaiveBayes()
model = naiveBayes.fit(dataTrain)
print(json.dumps(model, ensure_ascii=False))

# Use a new name so the pandas module `pd` is not overwritten
result = pd.DataFrame({'predicted': naiveBayes.predict(dataTrain), 'actual': dataTrain.iloc[:, -1]})
print(result)
print('Accuracy: %f%%' % ((result['predicted'] == result['actual']).mean() * 100.0))
```

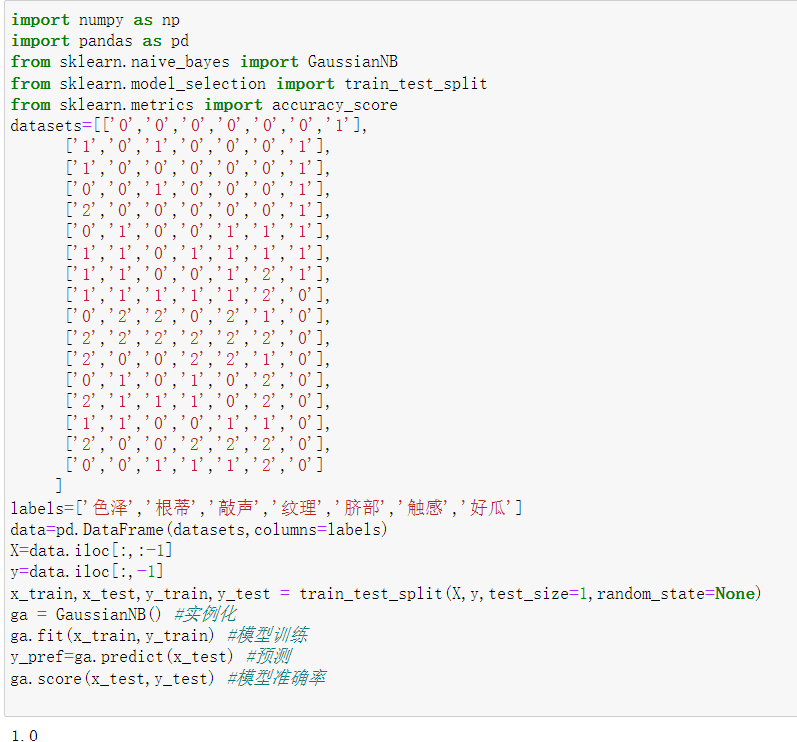
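Because the class above reads its training data from a local CSV file (D:/机器学习/data_word.csv) that is not part of this report, here is a self-contained cross-check that applies the same Laplace-smoothed priors and conditional probabilities, and the same log-sum scoring, directly to the table using only the standard library. It is a sketch, not the author's code; TABLE, fitCounts and predictOne are hypothetical names introduced for illustration:

```python
import math
from collections import Counter, defaultdict

# The 17 rows of the table (Chinese values as in getDataSet); last item is the label 是/否
TABLE = [
    ('青绿', '蜷缩', '浊响', '清晰', '凹陷', '硬滑', '是'),
    ('乌黑', '蜷缩', '沉闷', '清晰', '凹陷', '硬滑', '是'),
    ('乌黑', '蜷缩', '浊响', '清晰', '凹陷', '硬滑', '是'),
    ('青绿', '蜷缩', '沉闷', '清晰', '凹陷', '硬滑', '是'),
    ('浅白', '蜷缩', '浊响', '清晰', '凹陷', '硬滑', '是'),
    ('青绿', '稍蜷', '浊响', '清晰', '稍凹', '软粘', '是'),
    ('乌黑', '稍蜷', '浊响', '稍糊', '稍凹', '软粘', '是'),
    ('乌黑', '稍蜷', '浊响', '清晰', '稍凹', '硬滑', '是'),
    ('乌黑', '稍蜷', '沉闷', '稍糊', '稍凹', '硬滑', '否'),
    ('青绿', '硬挺', '清脆', '清晰', '平坦', '软粘', '否'),
    ('浅白', '硬挺', '清脆', '模糊', '平坦', '硬滑', '否'),
    ('浅白', '蜷缩', '浊响', '模糊', '平坦', '软粘', '否'),
    ('青绿', '稍蜷', '浊响', '稍糊', '凹陷', '硬滑', '否'),
    ('浅白', '稍蜷', '沉闷', '稍糊', '凹陷', '硬滑', '否'),
    ('乌黑', '稍蜷', '浊响', '清晰', '稍凹', '软粘', '否'),
    ('浅白', '蜷缩', '浊响', '模糊', '平坦', '硬滑', '否'),
    ('青绿', '蜷缩', '沉闷', '稍糊', '稍凹', '硬滑', '否'),
]


def fitCounts(rows):
    """Count samples per class, attribute values per class, and distinct values per attribute."""
    classCounts = Counter(row[-1] for row in rows)
    valueCounts = defaultdict(Counter)  # (class, attribute index) -> Counter of values
    distinctValues = defaultdict(set)   # attribute index -> set of values seen in training
    for row in rows:
        for i, value in enumerate(row[:-1]):
            valueCounts[(row[-1], i)][value] += 1
            distinctValues[i].add(value)
    return classCounts, valueCounts, distinctValues


def predictOne(sample, rows):
    """Return the class with the largest Laplace-smoothed log score, plus all scores."""
    classCounts, valueCounts, distinctValues = fitCounts(rows)
    total, numClasses = len(rows), len(classCounts)
    scores = {}
    for label, count in classCounts.items():
        score = math.log((count + 1) / (total + numClasses))  # smoothed prior
        for i, value in enumerate(sample):
            numValues = len(distinctValues[i])  # N_i: distinct values of attribute i
            score += math.log((valueCounts[(label, i)][value] + 1) / (count + numValues))
        scores[label] = score
    return max(scores, key=scores.get), scores


label, scores = predictOne(('青绿', '蜷缩', '浊响', '清晰', '凹陷', '硬滑'), TABLE)
print(label, {c: round(math.exp(s), 5) for c, s in scores.items()})
```

One deliberate difference: a value never seen in training gets the smoothed probability $1/(|D_c| + N_i)$ here, whereas predictBySeries falls back to a probability of 0 for it.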

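II. Get familiar with the naive Bayes classifiers in the sklearn library, and write a naive Bayes program with sklearn that makes predictions on input data;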

```python
import numpy as np
import pandas as pd
from sklearn.naive_bayes import GaussianNB
from sklearn.model_selection import train_test_split
from sklearn.metrics import accuracy_score

# The table above, label-encoded in order of first appearance, e.g.
# 色泽: 青绿=0, 乌黑=1, 浅白=2; 触感: 硬滑 (hard-smooth)=0, 软粘 (soft-sticky)=1; 好瓜: 是=1, 否=0
datasets = [[0, 0, 0, 0, 0, 0, 1],
            [1, 0, 1, 0, 0, 0, 1],
            [1, 0, 0, 0, 0, 0, 1],
            [0, 0, 1, 0, 0, 0, 1],
            [2, 0, 0, 0, 0, 0, 1],
            [0, 1, 0, 0, 1, 1, 1],
            [1, 1, 0, 1, 1, 1, 1],
            [1, 1, 0, 0, 1, 0, 1],
            [1, 1, 1, 1, 1, 0, 0],
            [0, 2, 2, 0, 2, 1, 0],
            [2, 2, 2, 2, 2, 0, 0],
            [2, 0, 0, 2, 2, 1, 0],
            [0, 1, 0, 1, 0, 0, 0],
            [2, 1, 1, 1, 0, 0, 0],
            [1, 1, 0, 0, 1, 1, 0],
            [2, 0, 0, 2, 2, 0, 0],
            [0, 0, 1, 1, 1, 0, 0]]

labels = ['色泽', '根蒂', '敲声', '纹理', '脐部', '触感', '好瓜']
data = pd.DataFrame(datasets, columns=labels)
X = data.iloc[:, :-1]  # the six encoded attributes
y = data.iloc[:, -1]   # label: 1 = good melon, 0 = not

# test_size=1 (an integer) holds out exactly one sample, so the accuracy below is either 0 or 1
x_train, x_test, y_train, y_test = train_test_split(X, y, test_size=1, random_state=None)

ga = GaussianNB()            # instantiate the model
ga.fit(x_train, y_train)     # train
y_pred = ga.predict(x_test)  # predict
print(accuracy_score(y_test, y_pred))  # accuracy, same value as ga.score(x_test, y_test)
```
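GaussianNB treats the integer codes as normally distributed measurements, which is a loose fit for unordered categories such as color. scikit-learn's CategoricalNB instead models each feature as a categorical distribution; its alpha parameter is additive smoothing, so alpha=1.0 gives the same smoothed conditional probabilities $P(x_i \mid c)$ as the hand-written class, although its class priors are plain relative frequencies without smoothing. A minimal sketch, assuming the label encoding below (触感 coded as 硬滑=0, 软粘=1) and scikit-learn 0.24 or later for min_categories; it scores the model with leave-one-out cross-validation instead of a single held-out sample:

```python
import numpy as np
from sklearn.model_selection import LeaveOneOut, cross_val_score
from sklearn.naive_bayes import CategoricalNB

# Encoded table: 色泽, 根蒂, 敲声, 纹理, 脐部, 触感 (硬滑=0, 软粘=1); last column 1 = good melon
data = np.array([
    [0, 0, 0, 0, 0, 0, 1], [1, 0, 1, 0, 0, 0, 1], [1, 0, 0, 0, 0, 0, 1],
    [0, 0, 1, 0, 0, 0, 1], [2, 0, 0, 0, 0, 0, 1], [0, 1, 0, 0, 1, 1, 1],
    [1, 1, 0, 1, 1, 1, 1], [1, 1, 0, 0, 1, 0, 1], [1, 1, 1, 1, 1, 0, 0],
    [0, 2, 2, 0, 2, 1, 0], [2, 2, 2, 2, 2, 0, 0], [2, 0, 0, 2, 2, 1, 0],
    [0, 1, 0, 1, 0, 0, 0], [2, 1, 1, 1, 0, 0, 0], [1, 1, 0, 0, 1, 1, 0],
    [2, 0, 0, 2, 2, 0, 0], [0, 0, 1, 1, 1, 0, 0],
])
X, y = data[:, :-1], data[:, -1]

# alpha=1.0 adds one to every category count (Laplace smoothing); min_categories fixes the
# number of categories per feature, so a category absent from a training fold still gets
# a smoothed probability instead of breaking prediction
minCategories = [3, 3, 3, 3, 3, 2]
clf = CategoricalNB(alpha=1.0, min_categories=minCategories)
clf.fit(X, y)
print(clf.predict(X[:1]), clf.predict_proba(X[:1]))  # first row of the table

# Leave-one-out: each of the 17 samples is predicted by a model trained on the other 16
scores = cross_val_score(CategoricalNB(alpha=1.0, min_categories=minCategories),
                         X, y, cv=LeaveOneOut())
print('leave-one-out accuracy:', scores.mean())
```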

III. Summary

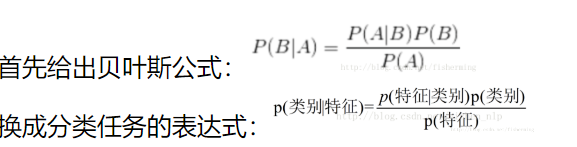

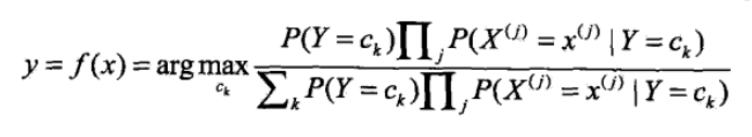

(1) Formula

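Naive Bayes formula. By Bayes' theorem, and assuming the $d$ attributes $x_1, \dots, x_d$ of a sample $\boldsymbol{x}$ are conditionally independent given the class $c$:

$$P(c \mid \boldsymbol{x}) = \frac{P(c)\,P(\boldsymbol{x} \mid c)}{P(\boldsymbol{x})} = \frac{P(c)}{P(\boldsymbol{x})}\prod_{i=1}^{d} P(x_i \mid c)$$

Since $P(\boldsymbol{x})$ is the same for every class, the predicted class is

$$h_{nb}(\boldsymbol{x}) = \arg\max_{c} \; P(c)\prod_{i=1}^{d} P(x_i \mid c)$$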
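As far as the code in part I shows, the hand-written class estimates both factors with Laplace smoothing. Written out with symbols introduced here for illustration ($D$ is the training set, $D_c$ its samples of class $c$, $D_{c,x_i}$ those samples of class $c$ whose attribute $i$ equals $x_i$, $N$ the number of classes and $N_i$ the number of distinct values of attribute $i$), the estimates in buildNaiveBayes correspond to:

$$\hat{P}(c) = \frac{|D_c| + 1}{|D| + N}, \qquad \hat{P}(x_i \mid c) = \frac{|D_{c,x_i}| + 1}{|D_c| + N_i}$$

As a worked check on the first row of the table (green, curled, muffled, clear, sunken, hard-smooth), with $|D| = 17$, $|D_{\text{yes}}| = 8$, $|D_{\text{no}}| = 9$, $N = 2$, $N_i = 3$ for the first five attributes and $N_6 = 2$ for touch:

$$\hat{P}(\text{yes})\prod_{i=1}^{6}\hat{P}(x_i \mid \text{yes}) = \frac{9}{19}\cdot\frac{4}{11}\cdot\frac{6}{11}\cdot\frac{7}{11}\cdot\frac{8}{11}\cdot\frac{6}{11}\cdot\frac{7}{10} \approx 0.0166$$

$$\hat{P}(\text{no})\prod_{i=1}^{6}\hat{P}(x_i \mid \text{no}) = \frac{10}{19}\cdot\frac{4}{12}\cdot\frac{4}{12}\cdot\frac{5}{12}\cdot\frac{3}{12}\cdot\frac{3}{12}\cdot\frac{7}{11} \approx 0.00097$$

so the sample is classified as a good melon.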

(2) Application scenarios

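Naive Bayes plays an important role in text recognition and image recognition: a piece of text or an image of unknown category is assigned to the class with the highest posterior probability, using probabilities estimated from already labeled examples. In practice the algorithm is widely used, for example in text classification, spam filtering, credit assessment and phishing-website detection.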
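Spam filtering is a typical text-classification case: each message is turned into word counts, and a multinomial naive Bayes model compares $P(c)\prod P(\text{word} \mid c)$ across classes. A minimal sketch using scikit-learn's CountVectorizer and MultinomialNB; the four training messages and their labels are invented purely for illustration:

```python
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.naive_bayes import MultinomialNB

# Toy training set, invented for illustration: 1 = spam, 0 = normal mail
messages = [
    "win a free prize now",
    "free money click now",
    "meeting moved to monday",
    "please review the lab report",
]
labels = [1, 1, 0, 0]

vectorizer = CountVectorizer()          # bag-of-words word counts
X = vectorizer.fit_transform(messages)
clf = MultinomialNB(alpha=1.0)          # alpha=1.0: Laplace smoothing of word counts
clf.fit(X, labels)

tests = ["free prize money", "review the meeting notes"]
print(clf.predict(vectorizer.transform(tests)))  # → [1 0]
```

Words that never appeared in training (such as "notes") are simply dropped by the vectorizer, and smoothing keeps a spam word that is absent from normal mail from driving that class's probability to zero.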

Zhejiang Public Security Filing No. 33010602011771
