[saltstack] Automation Architecture (migrated)

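Deployment

This article covers only versions 2015.5 (LTS) and 2019.2.0, which we deployed in our test and production environments.

Latest deployment documentation: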

http://mirrors.nju.edu.cn/saltstack/index.html

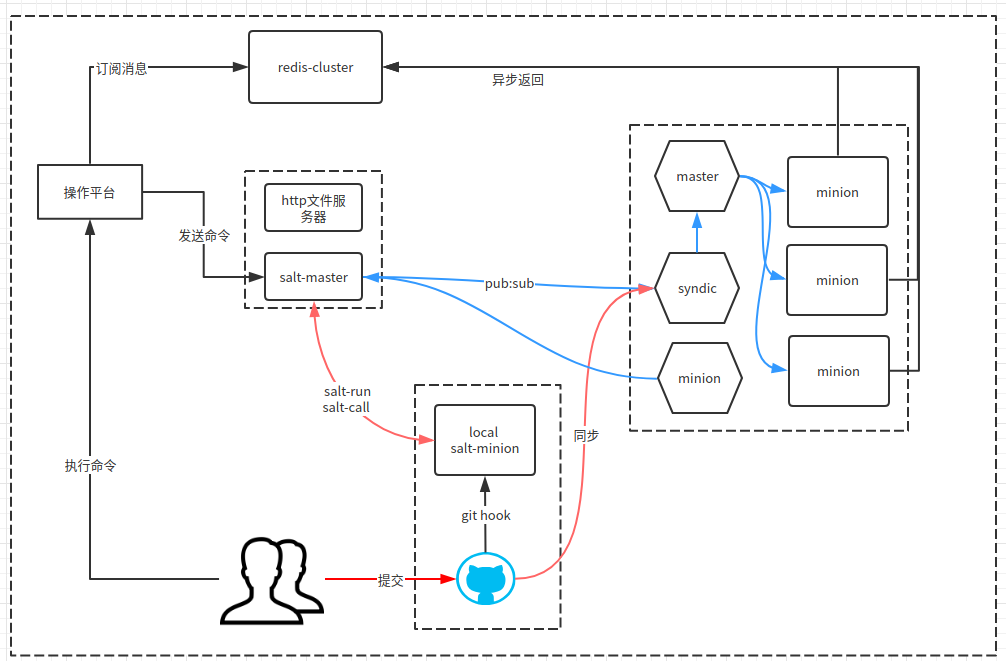

Architecture

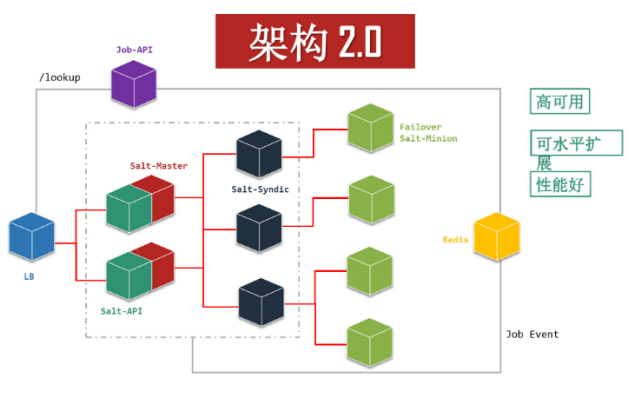

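1 We use a master-syndic-minion topology. Because we do not currently depend heavily on Salt, we did not deploy dual masters with dual syndics. Doing so would require changing the architecture to something like Ctrip's high-availability design.

2 gitfs is used to sync and distribute state files and extension module scripts.

The benefit of gitfs is that every syndic can be configured with the same gitfs backend, which gives a single unified file system that is version-controlled in git.

3 Every node uses a UUID as its minion_id.

Our products are deployed with repeated internal IPs and hostnames, so we name each minion_id after the machine's UUID. The drawback is that, until the machine is recorded in the CMDB, the minion_id alone does not tell you which machine it is.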

master installation and configuration

/etc/yum.repos.d/salt-lastest.repo

[saltstack-repo]
name=SaltStack repo for Red Hat Enterprise Linux $releasever
baseurl=https://repo.saltstack.com/yum/redhat/$releasever/$basearch/latest
enabled=1
gpgcheck=0

Our environments are standardized on 2019.2.0:

[salt-2019.2.0]
name=SaltStack 2019.2 Release Channel for Python 2 RHEL/Centos
baseurl=https://repo.saltstack.com/yum/redhat/6/x86_64/archive/2019.2.0
failovermethod=priority
enabled=1
gpgcheck=0

yum install salt-master salt-*

Check the master version with the command below. The master and minion versions should match; otherwise you will run into painful problems.

# salt-master -V

Salt Version:

Salt: 2019.2.0

Dependency Versions:

cffi: 1.12.3

cherrypy: 5.6.0

dateutil: Not Installed

docker-py: Not Installed

gitdb: Not Installed

gitpython: Not Installed

ioflo: Not Installed

Jinja2: 2.8.1

libgit2: 0.27.3

libnacl: Not Installed

M2Crypto: Not Installed

Mako: Not Installed

msgpack-pure: Not Installed

msgpack-python: 0.5.6

mysql-python: Not Installed

pycparser: 2.17

pycrypto: 2.6.1

pycryptodome: Not Installed

pygit2: 0.27.3

Python: 3.6.8 (default, Apr 25 2019, 21:02:35)

python-gnupg: Not Installed

PyYAML: 3.11

PyZMQ: 15.3.0

RAET: Not Installed

smmap: Not Installed

timelib: Not Installed

Tornado: 4.4.2

ZMQ: 4.1.4

System Versions:

dist: centos 7.6.1810 Core

locale: UTF-8

machine: x86_64

release: 3.10.0-957.el7.x86_64

system: Linux

version: CentOS Linux 7.6.1810 Core
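Since the master and minion versions are expected to match, the comparison can be automated. The following is a minimal sketch, not part of the original setup: it assumes you have captured the text printed by `salt-master -V` and `salt-minion -V` yourself, and the helper names `parse_salt_version` and `versions_match` are hypothetical.

```python
def parse_salt_version(report):
    """Return the value of the 'Salt:' line from a `-V` style report, or None."""
    for line in report.splitlines():
        key, sep, value = line.strip().partition(":")
        # "Salt Version:" is a section header, so only the exact key "Salt" counts.
        if sep and key.strip() == "Salt":
            return value.strip()
    return None


def versions_match(master_report, minion_report):
    """True only when both reports contain a Salt version and they are identical."""
    master_version = parse_salt_version(master_report)
    minion_version = parse_salt_version(minion_report)
    return master_version is not None and master_version == minion_version
```

The reports could be gathered by any means (for example by running the two commands by hand and pasting the output); the sketch only compares the strings and does not contact Salt.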

default_include: master.d/*.conf
order_masters: True    # master of masters
# auto_accept: True
hash_type: sha256
worker_threads: 4
job_cache: False
syndic_wait: 1
# External job cache on the master side. Very useful when several masters form a load-balanced cluster;
# with a single master it adds little and does not make things faster.
# redis.db: '0'
# redis.host: '10.4.231.113'
# redis.port: 6379
# master_job_cache: redis

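In production we use gitfs as the file system; for details see [saltstack] Configuring the Git Fileserver.

In practice we ran into two problems:

- The master's system load became very high. The cause was a duplicated syndic node (the system had been cloned), which put the master and the syndics into what looked like an endless message loop. Watch for this in future maintenance.

- A syndic that had reported in but whose key was not accepted for a long time stopped responding.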
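Because a cloned syndic with a duplicated ID caused the load problem, it can help to check an inventory for repeated IDs before nodes are brought online. A minimal sketch, assuming you already have a list of (host label, Salt ID) pairs from your own records; the function name `find_duplicate_ids` and the sample labels are hypothetical.

```python
from collections import defaultdict


def find_duplicate_ids(inventory):
    """Map each Salt ID that appears more than once to the hosts that use it.

    inventory: iterable of (host_label, salt_id) pairs.
    """
    hosts_by_id = defaultdict(list)
    for host_label, salt_id in inventory:
        hosts_by_id[salt_id].append(host_label)
    return {sid: hosts for sid, hosts in hosts_by_id.items() if len(hosts) > 1}


# Illustrative data only: two hosts that were cloned and share one ID.
sample = [
    ("syndic-a", "d38f1542b81f355c6fbb54a877f3407f"),
    ("syndic-b", "0a1b2c3d4e5f60718293a4b5c6d7e8f9"),
    ("syndic-a-clone", "d38f1542b81f355c6fbb54a877f3407f"),
]
duplicates = find_duplicate_ids(sample)
```

An empty result means no ID is shared; any entry in the result lists the hosts that need a fresh ID.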

Other configuration:

# Single-node master with local gitfs

/etc/salt/master:

interface: 192.168.102.74    # listen address
auto_accept: True            # automatically accept minion keys
file_recv: True              # allow cp.push, i.e. file transfer from minion to master
log_file: /var/log/salt/master    # log file path
log_level: debug             # log level
# /var/cache/salt is the master-side cache
file_roots:                  # file paths served as salt://
  base:
    - /srv/salt
pillar_roots:                # pillar directories
  base:
    - /srv/pillar

# Configure external returners

mysql.host: '127.0.0.1'

#mysql.user: 'jumpserver'

mysql.dbuser: 'jumpserver'

mysql.pass: 'jumpserver'

mysql.db: 'jumpserver'

mysql.port: 3306

master_job_cache: mysql

mongo.db: "mongo_pillar"

mongo.host: "127.0.0.1"

mongo.port: 27017

ext_pillar:

- mongo: {collection: mgo_pillar}

Configuring the MySQL returner on the master side requires no other changes, but the performance gain is limited.

USE `salt`;

--
-- Table structure for table `jids`
--
DROP TABLE IF EXISTS `jids`;
CREATE TABLE `jids` (
  `jid` varchar(255) NOT NULL,
  `load` mediumtext NOT NULL,
  UNIQUE KEY `jid` (`jid`)
) ENGINE=InnoDB DEFAULT CHARSET=utf8;

--
-- Table structure for table `salt_returns`
--
DROP TABLE IF EXISTS `salt_returns`;
CREATE TABLE `salt_returns` (
  `fun` varchar(50) NOT NULL,
  `jid` varchar(255) NOT NULL,
  `return` mediumtext NOT NULL,
  `id` varchar(255) NOT NULL,
  `success` varchar(10) NOT NULL,
  `full_ret` mediumtext NOT NULL,
  `alter_time` TIMESTAMP DEFAULT CURRENT_TIMESTAMP,
  KEY `id` (`id`),
  KEY `jid` (`jid`),
  KEY `fun` (`fun`)
) ENGINE=InnoDB DEFAULT CHARSET=utf8;

syndic installation

/etc/salt/master

default_include: master.d/*.conf
auto_accept: True
hash_type: sha256
syndic_master: xxxxxxxxxx

/etc/salt/proxy

master: xxxxxxxxxxxxxxx
id: d38f1542b81f355c6fbb54a877f3407f

minion installation

Script that obtains each host's UUID, gen_id.sh:

#!/bin/bash

awk '{gsub(/-/,"",$0);var=tolower($0)}END{system("sed -i \"/^id:/c id: "var"\" /etc/salt/minion")}' /sys/class/dmi/id/product_uuid

exit 0

Hook the script into service startup so that every cloned machine picks up a new UUID and no two minions share one:

cat /usr/lib/systemd/system/salt-minion.service | grep ExecStartPre= || sed -i '/ExecStart/a\ExecStartPre=/etc/salt/gen_id.sh' /usr/lib/systemd/system/salt-minion.service && chmod +x /etc/salt/gen_id.sh && systemctl daemon-reload && systemctl restart salt-minion
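The transformation that gen_id.sh applies can be sketched in Python for readers who want to check it offline. This is an illustration of the same steps (strip hyphens, lowercase, replace the existing `id:` line), not a replacement for the script; the function names are hypothetical and the sketch works on strings rather than touching /etc/salt/minion.

```python
def uuid_to_minion_id(raw_uuid):
    """Strip surrounding whitespace, drop hyphens, and lowercase the DMI product UUID."""
    return raw_uuid.strip().replace("-", "").lower()


def set_minion_id(config_text, minion_id):
    """Replace every line that starts with 'id:' (like sed's `/^id:/c`).

    Lines that do not start with 'id:' are left alone, so a config without an
    id line is returned unchanged apart from a normalized trailing newline.
    """
    new_lines = []
    for line in config_text.splitlines():
        if line.startswith("id:"):
            new_lines.append("id: " + minion_id)
        else:
            new_lines.append(line)
    return "\n".join(new_lines) + "\n"


# Illustrative input in the format of /sys/class/dmi/id/product_uuid.
example_id = uuid_to_minion_id("D38F1542-B81F-355C-6FBB-54A877F3407F\n")
example_config = set_minion_id("master: salt.example\nid: old\n", example_id)
```

Note that, like the sed command in the script, this only rewrites an existing `id:` line; a minion config without one needs the line added first.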

/etc/salt/minion

master: xxxxxxxxxxxxx
id: d38f1542b81f355c6fbb54a877f3407f
tcp_keepalive: True
tcp_keepalive_idle: 300
tcp_keepalive_cnt: -1
tcp_keepalive_intvl: -1

Installation problems

If the system Python is 2.4 or older, install Anaconda first.

If the following error appears:

swig -python -I/usr/include/python2.6 -I/usr/include -includeall -o SWIG/_m2crypto_wrap.c SWIG/_m2crypto.i
/usr/include/openssl/opensslconf.h:31: Error: CPP #error ""This openssl-devel package does not work your architecture?"". Use the -cpperraswarn option to continue swig processing.
error: command 'swig' failed with exit status 1

install M2Crypto with:

env SWIG_FEATURES="-cpperraswarn -includeall -D__`uname -m`__ -I/usr/include/openssl" pip install M2Crypto

Installing from source

1 Upgrade to python 2.7
2 Install pip
3 Install salt

./pip install pyyaml
./pip install jinja2
./pip install M2Crypto
./pip install pyjinja2
./pip install msgpack-python
./pip install msgpack-pure
./pip install pycrypto
tar -xf salt-2014.1.13.tar.gz
python setup.py install

Install libzmq:
rpm -ivh libzmq3-3.2.2-13.1.i386.rpm

Install zeromq from zeromq-3.2.5.tar.gz.

Finally, install PyZMQ:
./pip install PyZMQ    -- or use easy_install

Add the python-zmq library path "/usr/lib/" to "/etc/ld.so.conf" and run "ldconfig". Message printed by the PyZMQ build:

If you expected pyzmq to link against an installed libzmq, please check to make sure:
* You have a C compiler installed
* A development version of Python is installed (including headers)
* A development version of ZMQ >= 2.1.4 is installed (including headers)
* If ZMQ is not in a default location, supply the argument --zmq=<path>
* If you did recently install ZMQ to a default location, try rebuilding the ld cache with `sudo ldconfig` or specify zmq location with `--zmq=/usr/local`
You can skip all this detection/waiting nonsense if you know you want pyzmq to bundle libzmq as an extension by passing: `--zmq=bundled`

Network configuration

iptables -A INPUT -p tcp --dport 4506 -j ACCEPT
iptables -A INPUT -p tcp --sport 4505 -j ACCEPT
iptables -A OUTPUT -d 192.168.0.0/16 -p tcp --sport 4506 -j ACCEPT
iptables -A OUTPUT -d 192.168.0.0/16 -p tcp --dport 4506 -j ACCEPT
iptables -A OUTPUT -d 192.168.0.0/16 -p tcp --sport 4505 -j ACCEPT
iptables -A OUTPUT -d 192.168.0.0/16 -p tcp --dport 4505 -j ACCEPT

After startup, the SaltStack master listens on ports 4505 and 4506 by default. Port 4505 (publish_port) serves Salt's message publishing system; port 4506 (ret_port) is the port over which Salt clients communicate with the server.
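A quick way to confirm that the firewall rules let a minion reach both ports is a plain TCP connect test. The sketch below uses only the Python standard library; the helper names `port_open` and `check_master` are hypothetical, and a successful connect shows only that the port is reachable, not that Salt itself is healthy.

```python
import socket


def port_open(host, port, timeout=3.0):
    """Return True if a TCP connection to (host, port) succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


def check_master(host, ports=(4505, 4506)):
    """Check the default publish and return ports; returns {port: reachable}."""
    return {port: port_open(host, port) for port in ports}
```

From a minion, `check_master("192.168.102.74")` (the interface address used in the sample master config above) would report which of the two ports accept connections.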

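Startup and debugging

Start the server: service salt-master start, or systemctl start salt-master
Start the client: service salt-minion start, or systemctl start salt-minion

/usr/bin/salt-master -l debug    # start the master with debug logging
salt 'ttt' test.ping -l debug    # run a command with debug output

A minion has to show up in salt-key -L before it can be recognized; then accept it with -a. If its ID has been changed, it is best to delete the old key with -d.

salt-key -l acc    # list accepted hosts
salt-key -D        # delete all keys
salt-key -d key    # delete a single key
salt-key -A        # accept all keys
salt-key -a key    # accept a single key

# Running two salt-minion instances
On Debian the second instance is started directly as a process (the script could be reworked further):
/usr/bin/python /usr/bin/salt-minion -c /opt/etc/salt -d
On RHEL the original init script was modified instead.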

nodegroup

/etc/salt/master.d/nodegroups.conf

Note that when editing the master configuration, each group name (group1, group2, and so on) must be preceded by 2 spaces.

nodegroups:
  platapp: 'L@platapp1,platapp2,platapp3,platapp4,platapp5,platapp6'
  platZT: 'L@platZT1,platZT2'
  website: 'L@website1,website2,website3'
  nljk: 'E@nljkapp[1-3]|nlkjapp4|vnljkapp[1-4]'
  imlink: 'E@sjkr1[2-3] or L@sjkr8,NEWIM1,sjkr1'
  imloc: 'L@sjkr11,sjkr7,sjkr4'
  sjap: 'L@NEWIM2,newim4,sjkr6,NEWIM8,sjkr12,NEWIM11,NEWIM5'
  pcap: 'L@sjkr8,NEWIM1,sjkr1,sjkr13'
  one_salt: '* and not E@v?dxpt[1-2]|yxrk[1-4]|yxtxmw[1-4]'    # "not" cannot appear as the first condition
  two_salts: 'E@v?dxpt[1-2]|yxrk[1-4]|yxtxmw[1-4]'

Compound matcher: C@
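To see which IDs a nodegroup expression would select, the two matcher prefixes used above can be approximated in a few lines of Python. This is a simplified illustration and not Salt's own matcher: it handles only `L@` lists and `E@` regular expressions joined by `or`, it ignores `and`, `not`, and globs, and it assumes the regex is matched from the start of the ID via `re.match`. The function names are hypothetical.

```python
import re


def match_term(term, minion_id):
    """Evaluate a single 'L@a,b,c' or 'E@regex' term against one minion ID."""
    if term.startswith("L@"):
        return minion_id in term[2:].split(",")
    if term.startswith("E@"):
        # Assumption: anchored at the start of the ID, as re.match does.
        return re.match(term[2:], minion_id) is not None
    raise ValueError("unsupported matcher: " + term)


def expand_group(expression, minion_ids):
    """Return the IDs (in input order) that match any term of an 'or'-joined expression."""
    terms = [t.strip() for t in expression.split(" or ")]
    return [m for m in minion_ids if any(match_term(t, m) for t in terms)]


known_ids = ["sjkr1", "sjkr4", "sjkr8", "sjkr12", "sjkr13", "NEWIM1"]
imlink_members = expand_group("E@sjkr1[2-3] or L@sjkr8,NEWIM1,sjkr1", known_ids)
```

With the sample IDs, `imlink_members` contains every ID except `sjkr4`, which neither the regex nor the list names. For authoritative results, test the real expression against the master.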

Troubleshooting

expected <block end>, but found '<block sequence start>' in "<unicode string>", line 9, column 5: - zeromq
Make sure the "-" is preceded by two spaces.

TypeError encountered executing state.highstate: 'NoneType' object is not iterable.
This is usually a formatting error.

[ ERROR ][9025] Unable to cache file 'salt://test.sh' from saltenv 'base'.
UnicodeEncodeError: 'ascii' codec can't encode character u'\uff1a' in position 3: ordinal not in range(128)'
This is usually caused by Chinese characters in the sls file, especially the full-width colon (:) and comma (,).

Problems after upgrading a minion:
1 Running state.sls fails; msgpack-python has to be upgraded manually (msgpack-python-0.4.6.tar.gz).

Pillar: Specified SLS 'data' in environment 'base' is not available on the salt master
The pillar needs to be refreshed:
salt "the_minion" saltutil.refresh_pillar
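Stray full-width punctuation such as u'\uff1a' is hard to spot by eye, so a small scanner can locate it before the file is pushed. A minimal sketch using only the standard library; the function name `find_non_ascii` is hypothetical, and it flags every non-ASCII character, including legitimate ones in comments, so the results still need a human look.

```python
def find_non_ascii(text):
    """Return (line, column, character) for every non-ASCII character, 1-indexed."""
    hits = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for column, character in enumerate(line, start=1):
            if ord(character) > 127:
                hits.append((line_number, column, character))
    return hits


# Illustrative sls fragment with a full-width colon after "pkg".
sample_sls = "pkg\uff1a\n  - zeromq\n"
problems = find_non_ascii(sample_sls)
```

To check a real file, read it with an explicit encoding first, for example `open(path, encoding="utf-8").read()`, and pass the text to the function.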