Policy Improvement and Policy Iteration

From the last post, we know how to evaluate a policy. But that's not enough, because the purpose of policy evaluation is to improve policies so that finally get the optimal policy. So in this post, we will discuss about how to improve a given policy, and how to from a given policy get to the optimal policy.

Firstly, when you have an evaluated policy, the Action-Value function is known for every state. That is, at a certain state s, we known which action can give the system the largest reward.

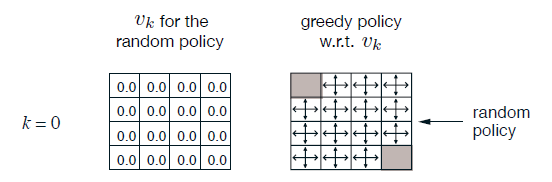

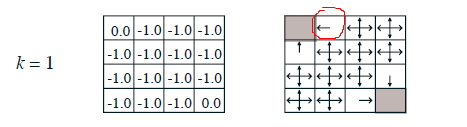

In the puzzle wandering example, we evaluate the random policy. However,the State-Value functions can be used for policy improvement. After 1 step calculating,we can conclude at the circled location, moving left is better than randomly picking a direction because left side has more reward.

After three steps, we've got a much better intuition about the map. We can change the random policy to a new better one.

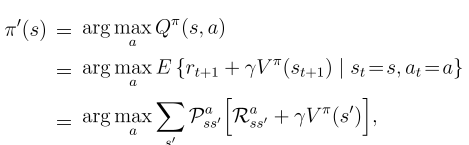

The way to improve the current policy is to greedyly pick actions for every state. It is worth noting that greedily picking actions does not means it only consider one step (too greedy to consider multiple steps). Instead, when k=3, the algorithm can foresee three steps, and the greedy picking algorithm will select the best action for k steps.

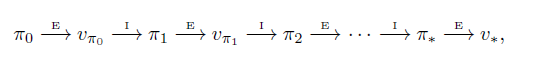

The Policy Iteration Algorithm is keep doing evaluation and improvement tasks untill the policy becomes stable,

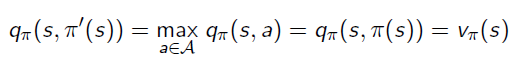

This process means Action-Value function of the improved policy picking the best return from a single action:

The algorithm is:

浙公网安备 33010602011771号

浙公网安备 33010602011771号