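Distributed Storage with Ceph: Deploying Ceph (2)

I. Deployment Preparation

Prepare five machines running CentOS 7.6. Three machines are the minimum if the deploy node and the client share hosts with the Ceph nodes:

1 deploy node (one disk, runs ceph-deploy)

3 Ceph nodes (two disks each: the first is the system disk and also runs the mon, the second is the OSD data disk)

1 client (can use the file system, block storage, and object storage that Ceph provides)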

    [root@ren3 ~]# lsblk
    NAME            MAJ:MIN RM  SIZE RO TYPE MOUNTPOINT
    sda               8:0    0   20G  0 disk
    ├─sda1            8:1    0    1G  0 part /boot
    └─sda2            8:2    0   19G  0 part
      ├─centos-root 253:0    0   17G  0 lvm  /
      └─centos-swap 253:1    0    2G  0 lvm  [SWAP]
    sdb               8:16   0    5G  0 disk
    sdc               8:32   0    5G  0 disk
    sr0              11:0    1  4.3G  0 rom
    [root@ren3 ~]# fdisk /dev/sdb
    Welcome to fdisk (util-linux 2.23.2).
    Changes will remain in memory only, until you decide to write them.
    Be careful before using the write command.
    Device does not contain a recognized partition table
    Building a new DOS disklabel with disk identifier 0xf44577f9.
    Command (m for help): n
    Partition type:
       p   primary (0 primary, 0 extended, 4 free)
       e   extended
    Select (default p):
    Using default response p
    Partition number (1-4, default 1):
    First sector (2048-10485759, default 2048):
    Using default value 2048
    Last sector, +sectors or +size{K,M,G} (2048-10485759, default 10485759):
    Using default value 10485759
    Partition 1 of type Linux and of size 5 GiB is set
    Command (m for help): p
    Disk /dev/sdb: 5368 MB, 5368709120 bytes, 10485760 sectors
    Units = sectors of 1 * 512 = 512 bytes
    Sector size (logical/physical): 512 bytes / 512 bytes
    I/O size (minimum/optimal): 512 bytes / 512 bytes
    Disk label type: dos
    Disk identifier: 0xf44577f9
       Device Boot      Start         End      Blocks   Id  System
    /dev/sdb1            2048    10485759     5241856   83  Linux
    Command (m for help): w
    The partition table has been altered!
    Calling ioctl() to re-read partition table.
    Syncing disks.
    [root@ren3 ~]# fdisk /dev/sdc
    Welcome to fdisk (util-linux 2.23.2).
    Changes will remain in memory only, until you decide to write them.
    Be careful before using the write command.
    Device does not contain a recognized partition table
    Building a new DOS disklabel with disk identifier 0xa7937c9d.
    Command (m for help): n
    Partition type:
       p   primary (0 primary, 0 extended, 4 free)
       e   extended
    Select (default p):
    Using default response p
    Partition number (1-4, default 1):
    First sector (2048-10485759, default 2048):
    Using default value 2048
    Last sector, +sectors or +size{K,M,G} (2048-10485759, default 10485759):
    Using default value 10485759
    Partition 1 of type Linux and of size 5 GiB is set
    Command (m for help): w
    The partition table has been altered!
    Calling ioctl() to re-read partition table.
    Syncing disks.
    [root@ren3 ~]# lsblk
    NAME            MAJ:MIN RM  SIZE RO TYPE MOUNTPOINT
    sda               8:0    0   20G  0 disk
    ├─sda1            8:1    0    1G  0 part /boot
    └─sda2            8:2    0   19G  0 part
      ├─centos-root 253:0    0   17G  0 lvm  /
      └─centos-swap 253:1    0    2G  0 lvm  [SWAP]
    sdb               8:16   0    5G  0 disk
    └─sdb1            8:17   0    5G  0 part
    sdc               8:32   0    5G  0 disk
    └─sdc1            8:33   0    5G  0 part
    sr0              11:0    1  4.3G  0 rom
    [root@ren3 ~]# mkfs -t xfs /dev/sdb1
    meta-data=/dev/sdb1              isize=512    agcount=4, agsize=327616 blks
             =                       sectsz=512   attr=2, projid32bit=1
             =                       crc=1        finobt=0, sparse=0
    data     =                       bsize=4096   blocks=1310464, imaxpct=25
             =                       sunit=0      swidth=0 blks
    naming   =version 2              bsize=4096   ascii-ci=0 ftype=1
    log      =internal log           bsize=4096   blocks=2560, version=2
             =                       sectsz=512   sunit=0 blks, lazy-count=1
    realtime =none                   extsz=4096   blocks=0, rtextents=0
    [root@ren3 ~]# mkfs -t xfs /dev/sdc1
    meta-data=/dev/sdc1              isize=512    agcount=4, agsize=327616 blks
             =                       sectsz=512   attr=2, projid32bit=1
             =                       crc=1        finobt=0, sparse=0
    data     =                       bsize=4096   blocks=1310464, imaxpct=25
             =                       sunit=0      swidth=0 blks
    naming   =version 2              bsize=4096   ascii-ci=0 ftype=1
    log      =internal log           bsize=4096   blocks=2560, version=2
             =                       sectsz=512   sunit=0 blks, lazy-count=1
    realtime =none                   extsz=4096   blocks=0, rtextents=0

1. Configure static name resolution in /etc/hosts on every Ceph cluster node (including the client):

    127.0.0.1    localhost localhost.localdomain localhost4 localhost4.localdomain4
    ::1          localhost localhost.localdomain localhost6 localhost6.localdomain6
    192.168.11.3 ren3   # OpenStack controller node (Ceph node 1)
    192.168.11.4 ren4   # OpenStack compute node (Ceph node 2)
    192.168.11.5 ren5   # OpenStack storage node (Ceph node 3)
    192.168.11.6 ren6   # Ceph deploy node
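Because the same host entries have to exist on every node, it can help to apply them with a small script that is safe to run more than once. The following is a minimal sketch and not part of the original procedure: the names `HOSTS_FILE` and `add_host_entry` are made up for illustration, and by default the script writes to a scratch file rather than to /etc/hosts.

```shell
#!/usr/bin/env bash
# Sketch: add "IP NAME" pairs to a hosts file only when NAME is not listed yet.
# HOSTS_FILE and add_host_entry are illustrative names, not from the original steps.
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"   # scratch file unless HOSTS_FILE is set

add_host_entry() {
    local ip="$1" name="$2"
    # awk exits 0 when a non-comment line already lists NAME as a host name.
    if awk -v n="$name" '
        /^[ \t]*#/ { next }
        { for (i = 2; i <= NF; i++) { if ($i ~ /^#/) break; if ($i == n) found = 1 } }
        END { exit !found }
    ' "$HOSTS_FILE"; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$name" >> "$HOSTS_FILE"
}

add_host_entry 192.168.11.3 ren3
add_host_entry 192.168.11.4 ren4
add_host_entry 192.168.11.5 ren5
add_host_entry 192.168.11.6 ren6
add_host_entry 192.168.11.3 ren3   # repeated call changes nothing

cat "$HOSTS_FILE"
```

To apply it for real, run it as root with `HOSTS_FILE=/etc/hosts`; keeping the default lets you inspect the result first.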

2. On every cluster node (including the client), create the cent user, set its password, and grant it passwordless sudo:

    useradd cent && echo "123" | passwd --stdin cent
    echo -e 'Defaults:cent !requiretty\ncent ALL = (root) NOPASSWD:ALL' | tee /etc/sudoers.d/ceph
    chmod 440 /etc/sudoers.d/ceph
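A syntax error in a sudoers drop-in can break sudo on the node, so one cautious variant is to stage the file somewhere harmless and check it before installing. This is only a sketch under assumptions: `STAGING` is an illustrative scratch path, the check is skipped when `visudo` is not installed, and depending on the sudo version `visudo` may also comment on the scratch file's owner.

```shell
#!/usr/bin/env bash
# Sketch: stage the sudoers drop-in for the cent user and check it before installing.
# STAGING is an illustrative scratch path; nothing under /etc is touched here.
STAGING="$(mktemp)"

printf '%s\n' 'Defaults:cent !requiretty' 'cent ALL = (root) NOPASSWD:ALL' > "$STAGING"
chmod 440 "$STAGING"

# "visudo -c -f FILE" parses FILE in check-only mode; skipped when visudo is absent.
if command -v visudo >/dev/null 2>&1 && ! visudo -c -f "$STAGING"; then
    echo "visudo rejected $STAGING; do not install it" >&2
fi

echo "staged: $STAGING"
echo "install as root with: install -m 0440 -o root -g root $STAGING /etc/sudoers.d/ceph"
```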

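3. On the deploy node, switch to the cent user and set up passwordless (key-based) SSH login to every node, including the client:

    [root@ren6 ~]# su - cent
    [cent@ren6 ~]$ ssh-keygen
    [cent@ren6 ~]$ ssh-copy-id ren3
    [cent@ren6 ~]$ ssh-copy-id ren4
    [cent@ren6 ~]$ ssh-copy-id ren5

ssh-copy-id logs in with a password, so PasswordAuthentication must be set to yes in /etc/ssh/sshd_config on each target node (restart sshd after changing it).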

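4. On the deploy node, as the cent user, create ~/.ssh/config in the cent user's home directory (vi ~/.ssh/config) and define every node and its login user. From this step on, the examples use the host names dlp for the deploy node and node1 to node3 for the Ceph nodes; substitute your own host names (ren6 and ren3 to ren5 in the hosts file above):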

    Host dlp
        Hostname dlp
        User cent

    Host node1
        Hostname node1
        User cent

    Host node2
        Hostname node2
        User cent

    Host node3
        Hostname node3
        User cent

chmod 600 ~/.ssh/config
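Since every stanza in that file follows the same pattern, it can be generated rather than typed. The sketch below is illustrative only: `ssh_config_stanzas` is a made-up helper name, and the script prints to standard output instead of touching ~/.ssh/config.

```shell
#!/usr/bin/env bash
# Sketch: print one "Host" stanza per node in ~/.ssh/config format.
# ssh_config_stanzas is an illustrative helper name, not from the original steps.
ssh_config_stanzas() {
    local user="$1"; shift
    local host
    for host in "$@"; do
        printf 'Host %s\n    Hostname %s\n    User %s\n\n' "$host" "$host" "$user"
    done
}

ssh_config_stanzas cent dlp node1 node2 node3
```

Redirecting the output to ~/.ssh/config and then running `chmod 600 ~/.ssh/config` gives the same result as editing the file by hand.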

II. Configure a China-based Ceph mirror on all nodes and install Ceph

1. On all nodes (including the client), create ceph-yunwei.repo in /etc/yum.repos.d/:

    [ceph-yunwei]
    name=ceph-yunwei-install
    baseurl=https://mirrors.aliyun.com/centos/7/storage/x86_64/ceph-jewel/
    enabled=1
    gpgcheck=0

Alternatively, append the section above to the existing CentOS-Base.repo.

2. Download the RPM packages listed below from the mirror at https://mirrors.aliyun.com/centos/7.6.1810/storage/x86_64/ceph-jewel/. Note: the ceph-deploy RPM only needs to be installed on the deploy node. Download it separately: find the latest matching ceph-deploy-xxxxx.noarch.rpm under https://mirrors.aliyun.com/ceph/rpm-jewel/el7/noarch/.

    ceph-10.2.11-0.el7.x86_64.rpm
    ceph-base-10.2.11-0.el7.x86_64.rpm
    ceph-common-10.2.11-0.el7.x86_64.rpm
    ceph-deploy-1.5.39-0.noarch.rpm
    ceph-devel-compat-10.2.11-0.el7.x86_64.rpm
    cephfs-java-10.2.11-0.el7.x86_64.rpm
    ceph-fuse-10.2.11-0.el7.x86_64.rpm
    ceph-libs-compat-10.2.11-0.el7.x86_64.rpm
    ceph-mds-10.2.11-0.el7.x86_64.rpm
    ceph-mon-10.2.11-0.el7.x86_64.rpm
    ceph-osd-10.2.11-0.el7.x86_64.rpm
    ceph-radosgw-10.2.11-0.el7.x86_64.rpm
    ceph-resource-agents-10.2.11-0.el7.x86_64.rpm
    ceph-selinux-10.2.11-0.el7.x86_64.rpm
    ceph-test-10.2.11-0.el7.x86_64.rpm
    libcephfs1-10.2.11-0.el7.x86_64.rpm
    libcephfs1-devel-10.2.11-0.el7.x86_64.rpm
    libcephfs_jni1-10.2.11-0.el7.x86_64.rpm
    libcephfs_jni1-devel-10.2.11-0.el7.x86_64.rpm
    librados2-10.2.11-0.el7.x86_64.rpm
    librados2-devel-10.2.11-0.el7.x86_64.rpm
    libradosstriper1-10.2.11-0.el7.x86_64.rpm
    libradosstriper1-devel-10.2.11-0.el7.x86_64.rpm
    librbd1-10.2.11-0.el7.x86_64.rpm
    librbd1-devel-10.2.11-0.el7.x86_64.rpm
    librgw2-10.2.11-0.el7.x86_64.rpm
    librgw2-devel-10.2.11-0.el7.x86_64.rpm
    python-ceph-compat-10.2.11-0.el7.x86_64.rpm
    python-cephfs-10.2.11-0.el7.x86_64.rpm
    python-rados-10.2.11-0.el7.x86_64.rpm
    python-rbd-10.2.11-0.el7.x86_64.rpm
    rbd-fuse-10.2.11-0.el7.x86_64.rpm
    rbd-mirror-10.2.11-0.el7.x86_64.rpm
    rbd-nbd-10.2.11-0.el7.x86_64.rpm

3. Copy the downloaded RPMs to all nodes and install them. Note that ceph-deploy-xxxxx.noarch.rpm is only used on the deploy node; the other nodes do not need it. The deploy node still needs all the other RPMs as well.

4. On the deploy node, install ceph-deploy. The installation itself runs as root: change into the directory that holds the downloaded RPMs and run:

yum localinstall -y ./*

(or: sudo yum install ceph-deploy)

Create the Ceph working directory (as the cent user):

mkdir ceph && cd ceph

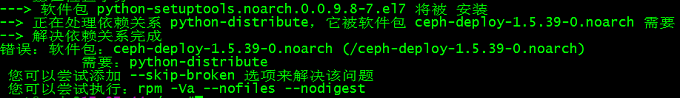

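Note: if the installation fails with the error shown in the screenshot, try one of the following workarounds.

Workaround 1:

Remove python-distribute and run the installation again (or run yum remove python-setuptools -y).

Note: if you install from a network repository rather than from the RPMs downloaded as described above, run yum install ceph ceph-radosgw -y on every node.

Workaround 2:

Install the dependency python-distribute: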

yum install python-distribute -y

Then install ceph-deploy-1.5.39-0.noarch.rpm again:

yum localinstall ceph-deploy-1.5.39-0.noarch.rpm -y

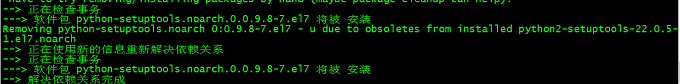

Remove python2-setuptools-22.0.5-1.el7.noarch:

yum remove python2-setuptools-22.0.5-1.el7.noarch -y

Because the openstack-ocata repository is currently enabled, the version that gets installed is python2-setuptools-22.0.5-1.el7.noarch. After changing the yum repositories, the installation succeeds as long as the installed python-setuptools is version 0.9.8-7.el7. Move the openstack-ocata repository aside, or delete it, as follows:

    root@dlp10:30:02/etc/yum.repos.d# mv rdo-release-yunwei.repo old/
    root@dlp10:30:11/etc/yum.repos.d# ls
    Centos7-Base-yunwei.repo  ceph-yunwei.repo  epel-testing.repo  old
    ceph.repo                 epel.repo         epel-yunwei.repo
    root@dlp10:30:12/etc/yum.repos.d# yum clean all
    root@dlp10:30:12/etc/yum.repos.d# yum makecache

yum localinstall ceph-deploy-1.5.39-0.noarch.rpm -y

Check the version:

ceph -v

5. On the deploy node (as the cent user), configure the new cluster:

    ceph-deploy new node1 node2 node3
    vim ./ceph.conf

Append the following settings to ceph.conf (a pool size of 1 keeps only a single copy of the data, so it suits test environments only):

    osd_pool_default_size = 1
    osd_pool_default_min_size = 1
    mon_clock_drift_allowed = 2
    mon_clock_drift_warn_backoff = 3

Optional parameters:

    public_network = 192.168.254.0/24
    cluster_network = 172.16.254.0/24
    osd_pool_default_size = 3
    osd_pool_default_min_size = 1
    osd_pool_default_pg_num = 8
    osd_pool_default_pgp_num = 8
    osd_crush_chooseleaf_type = 1
    [mon]
    mon_clock_drift_allowed = 0.5
    [osd]
    osd_mkfs_type = xfs
    osd_mkfs_options_xfs = -f
    filestore_max_sync_interval = 5
    filestore_min_sync_interval = 0.1
    filestore_fd_cache_size = 655350
    filestore_omap_header_cache_size = 655350
    filestore_fd_cache_random = true
    osd op threads = 8
    osd disk threads = 4
    filestore op threads = 8
    max_open_files = 655350
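When editing these pool defaults by hand, one easy mistake is setting osd_pool_default_min_size higher than osd_pool_default_size. The sketch below shows one way to read the two values back and compare them. It is not a Ceph tool: `conf_get` and `check_pool_defaults` are hypothetical helpers, the parser handles only simple `key = value` lines, and the demo runs against a scratch file rather than the real ceph.conf.

```shell
#!/usr/bin/env bash
# Sketch: read two pool defaults from a ceph.conf-style file and compare them.
# conf_get and check_pool_defaults are illustrative helpers, not Ceph tools.

# Print the last value assigned to KEY in FILE. Spaces in option names are
# normalized to underscores, so "osd pool default size" matches as well.
conf_get() {
    awk -F= -v key="$2" '
        /^[ \t]*[[#;]/ { next }   # skip comments and [section] headers
        NF >= 2 {
            k = $1; v = $2
            gsub(/^[ \t]+|[ \t]+$/, "", k); gsub(/[ \t]+/, "_", k)
            gsub(/^[ \t]+|[ \t]+$/, "", v)
            if (k == key) val = v
        }
        END { if (val != "") print val }
    ' "$1"
}

check_pool_defaults() {
    local size min_size
    size="$(conf_get "$1" osd_pool_default_size)"
    min_size="$(conf_get "$1" osd_pool_default_min_size)"
    if [ -z "$size" ] || [ -z "$min_size" ]; then
        echo "unset"
    elif [ "$min_size" -le "$size" ]; then
        echo "ok"
    else
        echo "min_size exceeds size"
    fi
}

# Demo against a scratch file holding the single-replica values from step 5.
demo_conf="$(mktemp)"
printf '%s\n' '[global]' 'osd_pool_default_size = 1' 'osd_pool_default_min_size = 1' > "$demo_conf"
check_pool_defaults "$demo_conf"
```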

6. Install the Ceph packages locally on every node

Every node should have the following packages:

    root@rab116:13:59~/cephjrpm# ls
    ceph-10.2.11-0.el7.x86_64.rpm               ceph-resource-agents-10.2.11-0.el7.x86_64.rpm    librbd1-10.2.11-0.el7.x86_64.rpm
    ceph-base-10.2.11-0.el7.x86_64.rpm          ceph-selinux-10.2.11-0.el7.x86_64.rpm            librbd1-devel-10.2.11-0.el7.x86_64.rpm
    ceph-common-10.2.11-0.el7.x86_64.rpm        ceph-test-10.2.11-0.el7.x86_64.rpm               librgw2-10.2.11-0.el7.x86_64.rpm
    ceph-devel-compat-10.2.11-0.el7.x86_64.rpm  libcephfs1-10.2.11-0.el7.x86_64.rpm              librgw2-devel-10.2.11-0.el7.x86_64.rpm
    cephfs-java-10.2.11-0.el7.x86_64.rpm        libcephfs1-devel-10.2.11-0.el7.x86_64.rpm        python-ceph-compat-10.2.11-0.el7.x86_64.rpm
    ceph-fuse-10.2.11-0.el7.x86_64.rpm          libcephfs_jni1-10.2.11-0.el7.x86_64.rpm          python-cephfs-10.2.11-0.el7.x86_64.rpm
    ceph-libs-compat-10.2.11-0.el7.x86_64.rpm   libcephfs_jni1-devel-10.2.11-0.el7.x86_64.rpm    python-rados-10.2.11-0.el7.x86_64.rpm
    ceph-mds-10.2.11-0.el7.x86_64.rpm           librados2-10.2.11-0.el7.x86_64.rpm               python-rbd-10.2.11-0.el7.x86_64.rpm
    ceph-mon-10.2.11-0.el7.x86_64.rpm           librados2-devel-10.2.11-0.el7.x86_64.rpm         rbd-fuse-10.2.11-0.el7.x86_64.rpm
    ceph-osd-10.2.11-0.el7.x86_64.rpm           libradosstriper1-10.2.11-0.el7.x86_64.rpm        rbd-mirror-10.2.11-0.el7.x86_64.rpm
    ceph-radosgw-10.2.11-0.el7.x86_64.rpm       libradosstriper1-devel-10.2.11-0.el7.x86_64.rpm  rbd-nbd-10.2.11-0.el7.x86_64.rpm

Install the packages above on all nodes (including the client):

yum localinstall ./* -y

7. On the deploy node, install the Ceph software on all nodes with ceph-deploy:

ceph-deploy install dlp node1 node2 node3

8. On the deploy node (as the cent user), initialize the cluster:

ceph-deploy mon create-initial

9. On the OSD nodes, prepare for the Object Storage Daemon:

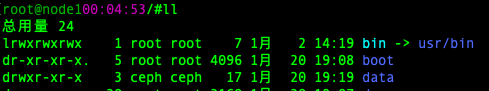

mkdir /data && chown ceph.ceph /data

10. On each node, partition the second disk, format it as XFS, and mount it on /data:

    root@con116:45:22/# fdisk /dev/vdb
    root@con116:45:22/# lsblk
    NAME        MAJ:MIN RM  SIZE RO TYPE MOUNTPOINT
    vda         252:0    0   40G  0 disk
    ├─vda1      252:1    0  512M  0 part /boot
    └─vda2      252:2    0 39.5G  0 part
      ├─cl-root 253:0    0 35.5G  0 lvm  /
      └─cl-swap 253:1    0    4G  0 lvm  [SWAP]
    vdb         252:16   0   10G  0 disk
    └─vdb1      252:17   0   10G  0 part
    root@rab116:54:35/# mkfs -t xfs /dev/vdb1
    root@rab116:54:50/# mount /dev/vdb1 /data/
    root@rab116:56:39/# lsblk
    NAME        MAJ:MIN RM  SIZE RO TYPE MOUNTPOINT
    vda         252:0    0   40G  0 disk
    ├─vda1      252:1    0  512M  0 part /boot
    └─vda2      252:2    0 39.5G  0 part
      ├─cl-root 253:0    0 35.5G  0 lvm  /
      └─cl-swap 253:1    0    4G  0 lvm  [SWAP]
    vdb         252:16   0   10G  0 disk
    └─vdb1      252:17   0   10G  0 part /data

11. Create the OSD directory under /data:

    mkdir /data/osd
    chown -R ceph.ceph /data/
    chmod 750 /data/osd/
    ln -s /data/osd /var/lib/ceph

Note: before running prepare, format the disk as XFS and make it available at /var/lib/ceph/osd, and make sure both the owner and the group are ceph.

12. Prepare the Object Storage Daemon:

ceph-deploy osd prepare node1:/var/lib/ceph/osd node2:/var/lib/ceph/osd node3:/var/lib/ceph/osd

13. Activate the Object Storage Daemon:

ceph-deploy osd activate node1:/var/lib/ceph/osd node2:/var/lib/ceph/osd node3:/var/lib/ceph/osd
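The prepare and activate commands take the same HOST:PATH list, so a wrapper can build that list once and reuse it. The following is a rough sketch rather than a tested deployment script: `DRY_RUN`, `OSD_DIR`, `NODES`, and `run` are illustrative names, and with the default `DRY_RUN=1` the commands are only printed.

```shell
#!/usr/bin/env bash
# Sketch: build the HOST:PATH arguments once and reuse them for prepare and activate.
# DRY_RUN, OSD_DIR, NODES and run are illustrative names; with DRY_RUN=1 (the
# default here) commands are printed instead of executed.
DRY_RUN="${DRY_RUN:-1}"
OSD_DIR="/var/lib/ceph/osd"
NODES=(node1 node2 node3)

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

targets=()
for node in "${NODES[@]}"; do
    targets+=("${node}:${OSD_DIR}")
done

# Chain with && so activate never runs after a failed prepare.
run ceph-deploy osd prepare "${targets[@]}" &&
run ceph-deploy osd activate "${targets[@]}"
```

Running it with `DRY_RUN=0` from the ceph working directory on the deploy node would execute the two commands for real.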

14. On the deploy node, transfer the config files:

    ceph-deploy admin dlp node1 node2 node3
    sudo chmod 644 /etc/ceph/ceph.client.admin.keyring

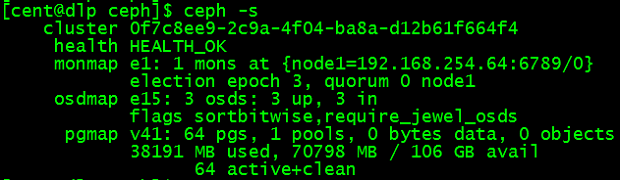

15. Check the cluster status from any node in the Ceph cluster:

ceph -s

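III. Client Setup

1. The client also needs the cent user:

    useradd cent && echo "123" | passwd --stdin cent
    echo -e 'Defaults:cent !requiretty\ncent ALL = (root) NOPASSWD:ALL' | tee /etc/sudoers.d/ceph
    chmod 440 /etc/sudoers.d/ceph

On the deploy node, install the Ceph client packages and push the admin configuration to the client (named controller here):

    ceph-deploy install controller
    ceph-deploy admin controller

2. On the client, run: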

sudo chmod 644 /etc/ceph/ceph.client.admin.keyring

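3. On the client, configure an RBD block device:

Create an RBD image: rbd create disk01 --size 10G --image-feature layering

Delete the image: rbd rm disk01

List RBD images: rbd ls -l

Map the image to a block device: sudo rbd map disk01

Unmap the image: sudo rbd unmap disk01

Show mapped images: rbd showmapped

Format disk01 with an XFS file system: sudo mkfs.xfs /dev/rbd0

Mount the device: sudo mount /dev/rbd0 /mnt

Verify that the mount succeeded: df -h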
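The RBD commands in this step are grouped by function rather than by the order in which they would be run. As a rough illustration, the sketch below arranges them into a setup sequence and a teardown sequence. The `umount` step and the names `DRY_RUN`, `IMAGE`, `MOUNT_POINT`, `DEVICE`, and `step` are additions for illustration, /dev/rbd0 is assumed to be the device the image maps to, and nothing is executed unless `DRY_RUN=0`.

```shell
#!/usr/bin/env bash
# Sketch: the RBD commands from this step in the order they would be run.
# DRY_RUN, IMAGE, MOUNT_POINT, DEVICE and step are illustrative names; commands
# are only printed unless DRY_RUN=0.
DRY_RUN="${DRY_RUN:-1}"
IMAGE="disk01"
MOUNT_POINT="/mnt"
DEVICE="/dev/rbd0"   # assumption: the image is the first one mapped on this client

step() {
    if [ "$DRY_RUN" = "1" ]; then echo "+ $*"; else "$@"; fi
}

setup() {
    step rbd create "$IMAGE" --size 10G --image-feature layering &&
    step sudo rbd map "$IMAGE" &&
    step sudo mkfs.xfs "$DEVICE" &&
    step sudo mount "$DEVICE" "$MOUNT_POINT"
}

teardown() {
    # Reverse order: unmount before unmapping, unmap before deleting the image.
    step sudo umount "$MOUNT_POINT" &&
    step sudo rbd unmap "$IMAGE" &&
    step rbd rm "$IMAGE"
}

setup
teardown
```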

4. Configure the file system (CephFS):

On the deploy node, choose one node on which to create the MDS:

ceph-deploy mds create node1

Run the following on node1:

sudo chmod 644 /etc/ceph/ceph.client.admin.keyring

On the MDS node node1, create the cephfs_data and cephfs_metadata pools:

    ceph osd pool create cephfs_data 128
    ceph osd pool create cephfs_metadata 128
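The value 128 passed to `ceph osd pool create` is the placement-group count. A commonly cited rule of thumb from the Ceph documentation of this era is to aim for roughly (number of OSDs x 100) / replica count placement groups across the whole cluster, rounded up to a power of two. The sketch below only illustrates that arithmetic; `suggest_pg_count` is a hypothetical helper, not a Ceph command, and the result is a starting point to be divided among pools rather than a value to apply blindly.

```shell
#!/usr/bin/env bash
# Sketch of the rule of thumb: (OSDs * 100) / replicas, rounded up to a power of two.
# suggest_pg_count is an illustrative helper, not a Ceph command.
suggest_pg_count() {
    local osds="$1" replicas="$2"
    local target=$(( osds * 100 / replicas ))
    local pg=1
    while [ "$pg" -lt "$target" ]; do
        pg=$(( pg * 2 ))
    done
    echo "$pg"
}

suggest_pg_count 3 3   # three OSDs with three replicas
suggest_pg_count 3 1   # three OSDs with a single replica
```

For three OSDs and three replicas the target is 100, which rounds up to 128.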

Create the file system on the two pools:

ceph fs new cephfs cephfs_metadata cephfs_data

Show the Ceph file system and MDS status:

    ceph fs ls
    ceph mds stat

Run the following on the client. Install ceph-fuse:

yum -y install ceph-fuse

Get the admin key:

    ssh cent@node1 "sudo ceph-authtool -p /etc/ceph/ceph.client.admin.keyring" > admin.key
    chmod 600 admin.key

Mount CephFS:

    mount -t ceph node1:6789:/ /mnt -o name=admin,secretfile=admin.key
    df -hT

Stop the ceph-mds service and remove the file system:

    systemctl stop ceph-mds@node1
    ceph mds fail 0
    ceph fs rm cephfs --yes-i-really-mean-it
    ceph osd lspools

Output: 0 rbd,1 cephfs_data,2 cephfs_metadata,

    ceph osd pool rm cephfs_metadata cephfs_metadata --yes-i-really-really-mean-it

IV. Remove the Environment

    ceph-deploy purge dlp node1 node2 node3 controller
    ceph-deploy purgedata dlp node1 node2 node3 controller
    ceph-deploy forgetkeys
    rm -rf ceph*
