Docker

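Getting to know Docker

Documentation: https://docs.docker.com/ Image registry: https://hub.docker.com/

Drawbacks of traditional virtual machines

They consume a lot of resources, involve many redundant steps, and boot slowly.

Containerization

A container does not emulate a complete operating system. Containers run directly on the host and have no kernel of their own, which makes them lightweight. Each container is isolated from the others and has its own file system, so containers do not interfere with one another.

Containers have fewer abstraction layers than virtual machines: they do not virtualize hardware and use the host's kernel directly.

DevOps (development and operations)

Faster delivery and deployment: package an image, publish it for testing, and run it with one command. Easier upgrades and scaling: deploying applications becomes like stacking building blocks. Development and test environments stay highly consistent.

Image

Multiple containers can be created from a single image.

Container

Containers are operated with basic commands such as start, stop, and delete. A container can be thought of as a minimal Linux system.

Repository

Where images are stored. The public registry is Docker Hub (hosted overseas by default, so configure an Alibaba Cloud registry mirror for faster pulls).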

Installing Docker

Check the cloud host

Kernel version

[root@10-9-48-229 ~]# uname -r

3.10.0-957.1.3.el7.x86_64

OS release

[root@10-9-48-229 ~]# cat /etc/os-release

NAME="CentOS Linux"

VERSION="7 (Core)"

ID="centos"

ID_LIKE="rhel fedora"

VERSION_ID="7"

PRETTY_NAME="CentOS Linux 7 (Core)"

ANSI_COLOR="0;31"

CPE_NAME="cpe:/o:centos:centos:7"

HOME_URL="https://www.centos.org/"

BUG_REPORT_URL="https://bugs.centos.org/"

CENTOS_MANTISBT_PROJECT="CentOS-7"

CENTOS_MANTISBT_PROJECT_VERSION="7"

REDHAT_SUPPORT_PRODUCT="centos"

REDHAT_SUPPORT_PRODUCT_VERSION="7"

Installation guide

https://docs.docker.com/engine/install/centos/

Install the gcc toolchain

yum -y install gcc

yum -y install gcc-c++

Stop Docker (if it is already installed and running)

systemctl stop docker

Remove old versions

sudo yum remove docker \
    docker-client \
    docker-client-latest \
    docker-common \
    docker-latest \
    docker-latest-logrotate \
    docker-logrotate \
    docker-engine

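Uninstall Docker Engine

sudo yum remove docker-ce docker-ce-cli containerd.io

Remove the data directory (this deletes all images, containers, and volumes)

sudo rm -rf /var/lib/docker

Install the required package

sudo yum install -y yum-utils

Set up the package repository (Alibaba Cloud mirror)

sudo yum-config-manager \
    --add-repo \
    http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

Update the yum package index

yum makecache fast

Install Docker Engine (ce is the Community Edition, ee is the Enterprise Edition)

sudo yum install docker-ce docker-ce-cli containerd.io

Start Docker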

systemctl start docker

docker version

Start on boot

# systemd syntax on CentOS 7

systemctl start docker.service

systemctl enable docker.service

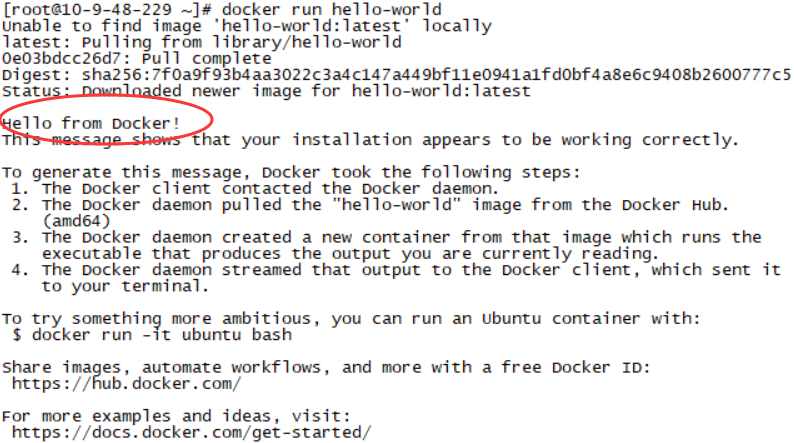

hello world

docker run hello-world

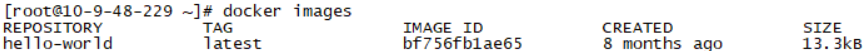

Check the hello-world image

Registry mirror (my host is on UCloud, but you can still register an Alibaba Cloud account and generate the configuration below)

sudo mkdir -p /etc/docker
sudo tee /etc/docker/daemon.json <<-'EOF'
{
  "registry-mirrors": ["https://xnoai74w.mirror.aliyuncs.com"]
}
EOF
sudo systemctl daemon-reload
sudo systemctl restart docker
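Before overwriting /etc/docker/daemon.json, it can help to generate the file somewhere harmless and look at it first. The following is a minimal sketch that writes the same content to a temporary directory; the variable names mirror_url and conf_dir are illustrative, and the mirror address is the one from these notes, which you would replace with your own.

```sh
# Preview the registry-mirror configuration in a scratch directory.
# mirror_url and conf_dir are illustrative names, not Docker settings.
mirror_url="https://xnoai74w.mirror.aliyuncs.com"   # replace with your own mirror address
conf_dir=$(mktemp -d)

cat > "$conf_dir/daemon.json" <<EOF
{
  "registry-mirrors": ["$mirror_url"]
}
EOF

# Show the generated file; copy it to /etc/docker/daemon.json once it looks right.
cat "$conf_dir/daemon.json"
```

After copying the file into place and restarting Docker, docker info should list the address under its registry mirrors section.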

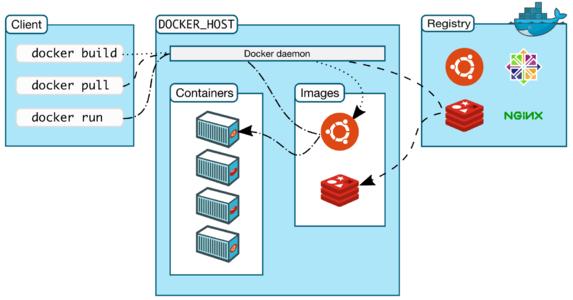

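Client-server architecture

The client is the docker command-line tool on the Linux host

The server is a daemon that hosts and manages the containers

If docker-compose version reports that the command does not exist, follow the Docker Compose installation steps

Docker commands

Help commands

https://docs.docker.com/engine/reference/commandline/docker/

docker version # Docker version information

docker info # system-wide information, including the number of images and containers

docker <command> --help # help for any command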

Image commands

https://docs.docker.com/engine/reference/commandline/images/

List all images on the local host

[root@10-9-48-229 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

hello-world latest bf756fb1ae65 8 months ago 13.3kB

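Columns

REPOSITORY: the repository the image comes from

TAG: the version tag

IMAGE ID: the image ID

CREATED: when the image was created

SIZE: the image size

Options

--all, -a: list all images

--quiet, -q: show only image IDs

Search for images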

[root@10-9-48-229 ~]# docker search mysql

NAME DESCRIPTION STARS OFFICIAL AUTOMATED

mysql MySQL is a widely used, open-source relation… 9918 [OK]

mariadb MariaDB is a community-developed fork of MyS… 3630 [OK]

mysql/mysql-server Optimized MySQL Server Docker images. Create… 723 [OK]

Options

# Filter by number of stars

--filter=STARS=3000

[root@10-9-48-229 ~]# docker search mysql --filter=STARS=3000

NAME DESCRIPTION STARS OFFICIAL AUTOMATED

mysql MySQL is a widely used, open-source relation… 9918 [OK]

mariadb MariaDB is a community-developed fork of MyS… 3630 [OK]

Pull (download) an image

[root@10-9-48-229 ~]# docker pull mysql

Using default tag: latest # defaults to the latest tag; in production, pin a specific version

latest: Pulling from library/mysql

bf5952930446: Downloading [============================> ] 15.61MB/27.09MB

8254623a9871: Download complete # images are downloaded layer by layer; layering on a union file system is the core of Docker images

938e3e06dac4: Download complete

ea28ebf28884: Download complete

f3cef38785c2: Download complete

894f9792565a: Downloading [===================> ] 5.299MB/13.45MB

Digest: sha256:c358e72e100ab493a0304bda35e6f239db2ec8c9bb836d8a427ac34307d074ed # content digest of the image

Status: Downloaded newer image for mysql:latest

docker.io/library/mysql:latest # fully qualified name; docker pull docker.io/library/mysql:latest is equivalent

Pull a specific version

docker pull mysql:5.7 # the tag must exist on https://hub.docker.com/

docker images

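Delete images

docker images

docker rmi -f bf756fb1ae65 # images are usually deleted by image ID

docker rmi -f <image-id> <image-id> <image-id> # delete several images

docker rmi -f $(docker images -aq) # delete all images

Container commands

(A container can only be created from an image, so pull a centos image for testing.)

Pull the centos image

docker pull centos

Create and start a container

docker run [OPTIONS] IMAGE

Options:

--name="Name" container name, used to tell containers apart

-d run in the background (detached)

-it run interactively, with a terminal inside the container

-p publish a container port, for example -p 8080:8080

-p IP:host-port:container-port

-p host-port:container-port (most common)

-p container-port (the host port is chosen automatically)

-P publish all exposed ports to random host ports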
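The three -p forms differ only in how many colon-separated fields they carry. As an illustration (this is not how Docker itself parses the option), the hypothetical helper parse_publish below splits a specification into its parts; it assumes IPv4 addresses and plain port numbers.

```sh
# Illustrative only: split a -p specification into IP, host port and container port.
# parse_publish is a hypothetical helper; IPv6 addresses and port ranges are not handled.
parse_publish() (
  spec=$1
  case "$spec" in
    *:*:*) ip=${spec%%:*}; rest=${spec#*:}; host=${rest%%:*}; container=${rest#*:} ;;
    *:*)   ip=""; host=${spec%%:*}; container=${spec#*:} ;;
    *)     ip=""; host=""; container=$spec ;;
  esac
  echo "ip=${ip:-all} host_port=${host:-random} container_port=$container"
)

parse_publish 127.0.0.1:8080:80   # IP:host-port:container-port
parse_publish 3344:80             # host-port:container-port
parse_publish 80                  # container-port only
```

Reading the output side by side makes it clear that the container port is always the last field, and that omitted fields fall back to all interfaces and a random host port.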

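Start and enter the container (the container is itself a CentOS system)

[root@10-9-48-229 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

centos latest 0d120b6ccaa8 3 weeks ago 215MB

[root@10-9-48-229 ~]# docker run -it centos /bin/bash

[root@06312d33166f /]# ls

bin dev etc home lib lib64 lost+found media mnt opt proc root run sbin srv sys tmp usr var

[root@06312d33166f /]# exit

exit

[root@10-9-48-229 ~]#

List running containers

[root@10-9-48-229 /]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

docker ps -a # list all containers, including stopped ones

-n=3 # show the 3 most recently created containers

-q # show only container IDs

Leave a container

exit # leave the interactive shell and stop the container

Ctrl+P, then Ctrl+Q # detach from the interactive shell without stopping the container

Delete containers

docker ps -aq

docker rm <container-id> # delete a container; a running container cannot be deleted unless -f is added

docker rm -f $(docker ps -aq) # force-delete all containers

docker ps -a -q | xargs docker rm # delete all stopped containers (add -f to docker rm to include running ones)

docker kill <container-id> # force-stop a running container

Start or stop an existing container

docker start 9786d107a853

docker stop 9786d107a853

Other common commands

Start a container in the background

docker run -d centos # runs detached, but docker ps shows that the container has already stopped: it provides no service and has no long-running foreground process, so it exits immediately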

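View logs

docker run -d centos /bin/sh -c "while true;do echo hahaha;sleep 1;done" # a shell loop that makes the container print log lines

docker logs -tf --tail 10 4cf528d77128 # follow the log with timestamps, starting from the last 10 lines

List the processes in a container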

[root@10-9-48-229 /]# docker top 4cf528d77128

UID PID PPID C STIME TTY TIME CMD

root 15545 15529 0 23:21 ? 00:00:00 /bin/sh -c while true;do echo hahaha;sleep 1;done

root 15595 15545 0 23:22 ? 00:00:00 /usr/bin/coreutils --coreutils-prog-shebang=sleep /usr/bin/sleep 1

Inspect the container's metadata

docker inspect 4cf528d77128

[root@10-9-48-229 /]# docker inspect 4cf528d77128

[

{

"Id": "4cf528d77128c6c1d97bd4bd0f96806e06b72f86a77816886cdb65b738ec0a52",

"Created": "2020-09-03T15:16:52.810046849Z",

"Path": "/bin/sh",

"Args": [

"-c",

"while true;do echo hahaha;sleep 1;done"

],

"State": {

"Status": "running",

"Running": true,

"Paused": false,

"Restarting": false,

"OOMKilled": false,

"Dead": false,

"Pid": 15545,

"ExitCode": 0,

"Error": "",

"StartedAt": "2020-09-03T15:21:51.301974968Z",

"FinishedAt": "2020-09-03T15:17:43.866467683Z"

},

"Image": "sha256:0d120b6ccaa8c5e149176798b3501d4dd1885f961922497cd0abef155c869566",

"ResolvConfPath": "/var/lib/docker/containers/4cf528d77128c6c1d97bd4bd0f96806e06b72f86a77816886cdb65b738ec0a52/resolv.conf",

"HostnamePath": "/var/lib/docker/containers/4cf528d77128c6c1d97bd4bd0f96806e06b72f86a77816886cdb65b738ec0a52/hostname",

"HostsPath": "/var/lib/docker/containers/4cf528d77128c6c1d97bd4bd0f96806e06b72f86a77816886cdb65b738ec0a52/hosts",

"LogPath": "/var/lib/docker/containers/4cf528d77128c6c1d97bd4bd0f96806e06b72f86a77816886cdb65b738ec0a52/4cf528d77128c6c1d97bd4bd0f96806e06b72f86a77816886cdb65b738ec0a52-json.log",

"Name": "/nice_dhawan",

"RestartCount": 0,

"Driver": "devicemapper",

"Platform": "linux",

"MountLabel": "",

"ProcessLabel": "",

"AppArmorProfile": "",

"ExecIDs": null,

"HostConfig": {

"Binds": null,

"ContainerIDFile": "",

"LogConfig": {

"Type": "json-file",

"Config": {}

},

"NetworkMode": "default",

"PortBindings": {},

"RestartPolicy": {

"Name": "no",

"MaximumRetryCount": 0

},

"AutoRemove": false,

"VolumeDriver": "",

"VolumesFrom": null,

"CapAdd": null,

"CapDrop": null,

"Capabilities": null,

"Dns": [],

"DnsOptions": [],

"DnsSearch": [],

"ExtraHosts": null,

"GroupAdd": null,

"IpcMode": "private",

"Cgroup": "",

"Links": null,

"OomScoreAdj": 0,

"PidMode": "",

"Privileged": false,

"PublishAllPorts": false,

"ReadonlyRootfs": false,

"SecurityOpt": null,

"UTSMode": "",

"UsernsMode": "",

"ShmSize": 67108864,

"Runtime": "runc",

"ConsoleSize": [

0,

0

],

"Isolation": "",

"CpuShares": 0,

"Memory": 0,

"NanoCpus": 0,

"CgroupParent": "",

"BlkioWeight": 0,

"BlkioWeightDevice": [],

"BlkioDeviceReadBps": null,

"BlkioDeviceWriteBps": null,

"BlkioDeviceReadIOps": null,

"BlkioDeviceWriteIOps": null,

"CpuPeriod": 0,

"CpuQuota": 0,

"CpuRealtimePeriod": 0,

"CpuRealtimeRuntime": 0,

"CpusetCpus": "",

"CpusetMems": "",

"Devices": [],

"DeviceCgroupRules": null,

"DeviceRequests": null,

"KernelMemory": 0,

"KernelMemoryTCP": 0,

"MemoryReservation": 0,

"MemorySwap": 0,

"MemorySwappiness": null,

"OomKillDisable": false,

"PidsLimit": null,

"Ulimits": null,

"CpuCount": 0,

"CpuPercent": 0,

"IOMaximumIOps": 0,

"IOMaximumBandwidth": 0,

"MaskedPaths": [

"/proc/asound",

"/proc/acpi",

"/proc/kcore",

"/proc/keys",

"/proc/latency_stats",

"/proc/timer_list",

"/proc/timer_stats",

"/proc/sched_debug",

"/proc/scsi",

"/sys/firmware"

],

"ReadonlyPaths": [

"/proc/bus",

"/proc/fs",

"/proc/irq",

"/proc/sys",

"/proc/sysrq-trigger"

]

},

"GraphDriver": {

"Data": {

"DeviceId": "39",

"DeviceName": "docker-253:1-3981408-0121446216aa675898726b0b45453e637f27c99aadef67a80dac0ad2a7377027",

"DeviceSize": "10737418240"

},

"Name": "devicemapper"

},

"Mounts": [],

"Config": {

"Hostname": "4cf528d77128",

"Domainname": "",

"User": "",

"AttachStdin": false,

"AttachStdout": false,

"AttachStderr": false,

"Tty": false,

"OpenStdin": false,

"StdinOnce": false,

"Env": [

"PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin"

],

"Cmd": [

"/bin/sh",

"-c",

"while true;do echo hahaha;sleep 1;done"

],

"Image": "centos",

"Volumes": null,

"WorkingDir": "",

"Entrypoint": null,

"OnBuild": null,

"Labels": {

"org.label-schema.build-date": "20200809",

"org.label-schema.license": "GPLv2",

"org.label-schema.name": "CentOS Base Image",

"org.label-schema.schema-version": "1.0",

"org.label-schema.vendor": "CentOS"

}

},

"NetworkSettings": {

"Bridge": "",

"SandboxID": "0ae87e99f87b4871af6045e268ca018e47e8e2e74499374540347b62134412ca",

"HairpinMode": false,

"LinkLocalIPv6Address": "",

"LinkLocalIPv6PrefixLen": 0,

"Ports": {},

"SandboxKey": "/var/run/docker/netns/0ae87e99f87b",

"SecondaryIPAddresses": null,

"SecondaryIPv6Addresses": null,

"EndpointID": "355add0949820be91fd5c7883ddda6e5de56a5888ee39cc40613acd97b7e2ab4",

"Gateway": "172.17.0.1",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"IPAddress": "172.17.0.2",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"MacAddress": "02:42:ac:11:00:02",

"Networks": {

"bridge": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"NetworkID": "a1ab3ade09e14a0f3b2d9a50da9c4008cbc380f8703d560703a94827b4d8d4f9",

"EndpointID": "355add0949820be91fd5c7883ddda6e5de56a5888ee39cc40613acd97b7e2ab4",

"Gateway": "172.17.0.1",

"IPAddress": "172.17.0.2",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"MacAddress": "02:42:ac:11:00:02",

"DriverOpts": null

}

}

}

}

]

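Enter a running container

# Containers usually run in the background; entering one is needed to change configuration

# Option 1: docker exec starts a new shell process inside the container

docker exec -it <container-id> /bin/bash

[root@10-9-48-229 /]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

4cf528d77128 centos "/bin/sh -c 'while t…" 20 minutes ago Up 15 minutes nice_dhawan

[root@10-9-48-229 /]# docker exec -it 4cf528d77128 /bin/bash

[root@4cf528d77128 /]#

# Option 2: docker attach connects to the terminal of the container's main process and starts no new process

docker attach <container-id>

Copy files from a container to the host (later, -v volumes can replace this)

docker cp <container-id>:<path-in-container> <host-destination-path>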

[root@10-9-48-229 ~]# docker run -it centos /bin/bash

[root@6b790ffe6a2a /]# cd home

[root@6b790ffe6a2a home]# ls

[root@6b790ffe6a2a home]# touch xixixi.java

[root@6b790ffe6a2a home]# exit

exit

[root@10-9-48-229 ~]# docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

6b790ffe6a2a centos "/bin/bash" 3 minutes ago Exited (0) 7 seconds ago relaxed_perlman

[root@10-9-48-229 ~]# docker cp 6b790ffe6a2a:/home/xixixi.java /home

[root@10-9-48-229 ~]# cd /home

[root@10-9-48-229 home]# ls

dfs mysql tomcat xixixi.java

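Exercise: run Nginx with Docker

Publish a port

docker search nginx # search for the image; searching on hub.docker.com is preferable

docker pull nginx # download the image

docker images # list images

docker run -d --name nginx01 -p 3344:80 nginx # port 3344 must be open in the host firewall or security group

docker ps # list running containers

curl localhost:3344 # test from the host

# If this fails with "-bash: curl: command not found", install curl:

yum update -y && yum install curl -y

http://xx.xx.xx.xx:3344/ # test from a browser over the public network

docker exec -it nginx01 /bin/bash # enter the container

whereis nginx # locate the nginx files, including the configuration directory

exit # leave the container

docker ps # list running containers

docker stop nginx01 # stop the container

Exercise: run Tomcat with Docker

Look inside the container

docker run -it --rm tomcat:9.0 # the form used in the official docs: --rm deletes the container when it exits, which is handy for testing; docker ps -a shows no trace afterwards

docker pull tomcat # the persistent way

docker run -d -p 3355:8080 --name tomcat01 tomcat # start tomcat

docker exec -it tomcat01 /bin/bash # opens a shell at root@7a16ef3a9238:/usr/local/tomcat#

ls -al # webapps is empty; the default applications are in webapps.dist

cp -r webapps.dist/* webapps

http://xx.xx.xx.xx:3355/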

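Exercise: deploy Elasticsearch and Kibana

Check the container's runtime state, then adjust it

# ES exposes many ports

# ES is very memory-hungry

# ES data should normally be kept in a safe directory via a mount

# Download and start elasticsearch

docker run -d --name elasticsearch -p 9200:9200 -p 9300:9300 -e "discovery.type=single-node" elasticsearch:7.6.2

docker stats # live CPU and memory usage of containers

curl localhost:9200 # check whether ES is up

# Stop the first container, then start a new one with a memory limit; -e sets an environment variable.

# The original attempt did not start. A detached container needs a foreground process to stay alive, and the most likely cause here is that the option string read "-Xms64m Xmx512m", with the dash before Xmx missing. The command below has the dash restored.

docker run -dit --name elasticsearch02 -p 9200:9200 -p 9300:9300 -e "discovery.type=single-node" -e ES_JAVA_OPTS="-Xms64m -Xmx512m" elasticsearch:7.6.2

docker ps -a

docker rm 92ef80884600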
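A JVM option string such as "-Xms64m Xmx512m" is easy to mistype, and the resulting failure only shows up in the container log. The sketch below is a quick sanity check that flags any token without a leading dash before the string is passed to ES_JAVA_OPTS; check_jvm_opts is a hypothetical helper, and it only checks the dash, not whether the option itself is valid.

```sh
# Flag JVM option tokens that do not start with a dash.
# check_jvm_opts is a hypothetical helper for illustration.
check_jvm_opts() {
  for opt in $1; do
    case "$opt" in
      -*) ;;   # looks like an option, keep going
      *)  echo "suspicious JVM option (no leading dash): $opt"; return 1 ;;
    esac
  done
  echo "ok"
}

check_jvm_opts "-Xms64m Xmx512m" || true   # reports Xmx512m
check_jvm_opts "-Xms64m -Xmx512m"          # prints ok
```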

# Connecting Kibana to ES: to be continued

Visualization tools

# portainer (use this first)

# rancher (for CI/CD, later)

docker run -d -p 8088:9000 \
    --restart=always -v /var/run/docker.sock:/var/run/docker.sock --privileged=true portainer/portainer

# Test: http://106.75.32.166:8088/

Committing an image

docker commit -m="commit message" -a="author" <container-id> <target-image>[:tag]

docker run -it -p 8080:8080 tomcat

# In a second terminal session, open a shell in the tomcat container

docker ps

docker exec -it c32c4530f5e6 /bin/bash

cp -r webapps.dist/* webapps

# Now turn this modified tomcat container into an image

exit

docker ps

docker commit -a="chhh" -m="add webapps app" c32c4530f5e6 tomcat02:1.0 # create a new image from the container, similar to a snapshot

docker images # the new image is listed

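Container data volumes

Data is kept outside the container: volumes provide persistence and synchronization for container data, and let containers share data.

Method 1: mount from the command line with -v

docker run -it -v /home/ceshi:/home centos /bin/bash

docker inspect 4f88d6c7d1cf # the "Mounts" section shows the mount; docker inspect also accepts a container name or a volume name

# Create a file in the mounted directory on one side (inside or outside the container) and check that it appears on the other

touch test1.java

# Stop the container, edit test1.java on the host, then start the container again and check (from now on, editing the file on the host changes the file in the container)

docker start 4f88d6c7d1cf

docker attach 4f88d6c7d1cf

Exercise: install MySQL

docker pull mysql:5.7

# From the official docs: docker run --name some-mysql -e MYSQL_ROOT_PASSWORD=my-secret-pw -d mysql:tag

# Publish host port 3310 and name the container mysql01

docker run -d -p 3310:3306 -v /home/mysql_docker_test/conf:/etc/mysql/conf.d -v /home/mysql_docker_test/data:/var/lib/mysql -e MYSQL_ROOT_PASSWORD=root --name mysql01 mysql:5.7

# The container starts and stays up; connecting with a MySQL client succeeds

# Force-remove the container, then check that the files mounted on the host are still there

docker rm -f mysql01

Anonymous mounts, named mounts, and host-path mounts

# Host-path mount: the form used above, with an absolute host path

# Anonymous mount (rarely used): -v is given only the path inside the container, with no host path; -P publishes to random ports

docker run -d -P --name nginx02 -v /etc/nginx nginx

# Named mount

docker run -d -P --name nginx03 -v juming-nginx:/etc/nginx nginx

# List all volumes

docker volume ls

docker inspect juming-nginx # shows the mount point; volumes without a host path are stored under /var/lib/docker/volumes/<name>/_data, here /var/lib/docker/volumes/juming-nginx/_data

# Append :ro or :rw to set the access mode: ro is read-only inside the container (the files can only be changed from the host), rw is read-write (the default). Container names must be unique, so each example uses a new name.

docker run -d -P --name nginx04 -v juming-nginx:/etc/nginx:ro nginx

docker run -d -P --name nginx05 -v juming-nginx:/etc/nginx:rw nginx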
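The three mount styles can be told apart from the shape of the -v argument alone. The sketch below encodes that rule of thumb in a hypothetical helper, mount_kind; it assumes host paths are absolute (start with /) and ignores any other forms.

```sh
# Classify a -v argument by its shape (rule of thumb, not Docker's own parser).
# mount_kind is a hypothetical helper for illustration.
mount_kind() {
  case "$1" in
    /*:*) echo "host-path mount" ;;    # source starts with /, so it is a host directory
    *:*)  echo "named volume" ;;       # source is a bare name
    *)    echo "anonymous volume" ;;   # only the path inside the container is given
  esac
}

mount_kind /etc/nginx                   # anonymous volume
mount_kind juming-nginx:/etc/nginx      # named volume
mount_kind /home/ceshi:/home            # host-path mount
mount_kind juming-nginx:/etc/nginx:ro   # named volume, read-only suffix does not change the kind
```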

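Method 2: declare volumes in the Dockerfile at build time

A Dockerfile is the build file, a script of instructions, used to build a Docker image.

(docker commit can produce your own image, and so can a Dockerfile. Declaring volumes in the Dockerfile is the most common approach, because later on you will build your own images.)

VOLUME ["volume01","volume02"] declares two mount points, volume01 and volume02, inside the container; each is backed by an anonymous volume on the host.

cd /home

mkdir docker-test-volume

cd docker-test-volume

vim dockerfile1 # the image is built from this script; an image is layered, and each instruction in the script produces one layer

FROM centos

VOLUME ["volume01","volume02"]

CMD echo "-----end------"

CMD /bin/bash

docker build -f /home/docker-test-volume/dockerfile1 -t chhh/centos .

docker images # the new image is listed

docker run -it 46a115c3e58d /bin/bash # start a container

ls -al # the directories volume01 and volume02 are present

cd volume01

touch container.txt

# From the host, inspect the container

docker inspect fea68c0b5eb5 # shows the host path of each volume

cd /var/lib/docker/volumes/aa4b0b6c4c28913b88f5c2cc573d0c58dd8b751d3b7d71c18928c3a7c61934f5/_data

ls # container.txt is there, so the mount works

Containers can also share data with each other

docker run -it --name docker01 chhh/centos

# Detach with Ctrl+P, then Ctrl+Q

docker run -it --name docker02 --volumes-from docker01 chhh/centos # a file added in docker01 appears in the same directory in docker02

docker run -it --name docker03 --volumes-from docker01 chhh/centos # --volumes-from works because the docker01 container already has volumes declared

# Create a file in any of them and it is visible in the others

# Deleting the docker01 container does not affect the volumes

Containers can pass configuration to each other this way. A volume shared through --volumes-from stays available as long as at least one container uses it; data persisted to a host directory is not lost when containers are removed.

Several MySQL containers sharing data (only the mounted directories matter)

docker run -d -p 3310:3306 -v /home/mysql_docker_test/conf:/etc/mysql/conf.d -v /home/mysql_docker_test/data:/var/lib/mysql -e MYSQL_ROOT_PASSWORD=root --name mysql01 mysql:5.7

docker run -d -p 3311:3306 -v /home/mysql_docker_test/conf:/etc/mysql/conf.d -v /home/mysql_docker_test/data:/var/lib/mysql -e MYSQL_ROOT_PASSWORD=root --name mysql02 mysql:5.7

Note: two MySQL servers should not use the same data directory at the same time; the second one will typically fail to lock the InnoDB files, so stop mysql01 before starting mysql02.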

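Dockerfile

Previously a finished project was delivered as a jar or war; now the deliverable is a Docker image.

A Dockerfile is the core file used to build that image; the final image contains the runtime environment, the application code, and so on.

Building your own image, step 1: write the Dockerfile

docker run -it centos # the stock centos image has neither vim nor ifconfig; the custom image adds them

# In a new terminal

cd /home

mkdir dockerfile # working directory for the custom image

cd dockerfile/

vim mydockerfile-centos

Contents of the Dockerfile

FROM centos

MAINTAINER chhh<810808038@qq.com>

ENV MYPATH /usr/local

WORKDIR $MYPATH

RUN yum -y install vim

RUN yum -y install net-tools

EXPOSE 80

CMD echo $MYPATH

CMD echo "---end---"

CMD /bin/bash

Build the image from the Dockerfile

docker build -f mydockerfile-centos -t mycentos:0.1 .

docker images

docker history 466c71bf6845 # show how the image was built, layer by layer

docker run -it mycentos:0.1

CMD versus ENTRYPOINT (for reference)

CMD # the command to run when the container starts; only the last CMD takes effect, and arguments given to docker run replace it

ENTRYPOINT # the command to run when the container starts; arguments given to docker run are appended to it

CMD

vim dockerfile-cmd-test

FROM centos

CMD ["ls","-a"]

docker build -f dockerfile-cmd-test -t cmdtest .

docker images

docker run 57f90ddc37cc # ls -a runs

docker run 57f90ddc37cc -l # error: -l replaces the whole CMD and is not an executable

docker run 57f90ddc37cc ls -al # works, because the whole command is replaced

ENTRYPOINT

vim dockerfile-cmd-entrypoint

FROM centos

ENTRYPOINT ["ls","-a"]

docker build -f dockerfile-cmd-entrypoint -t entrypoint-test .

docker run 3bf726e9ed3f

docker run 3bf726e9ed3f -l # no error: -l is appended, so ls -a -l runs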
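The difference between the two instructions comes down to how the final command line is assembled. The following sketch models that rule for the exec form used above; final_command is a hypothetical helper that simulates the behaviour and does not call Docker.

```sh
# Model of the exec-form rule: final command = ENTRYPOINT + (docker run arguments, or CMD if none).
# final_command is a hypothetical helper; usage: final_command "<entrypoint>" "<cmd>" [run args...]
final_command() (
  entrypoint=$1
  cmd=$2
  shift 2
  if [ "$#" -gt 0 ]; then
    tail=$*       # arguments given to docker run replace CMD
  else
    tail=$cmd     # no arguments: CMD supplies the defaults
  fi
  # Join the two parts and trim the spaces left by an empty part.
  printf '%s\n' "$entrypoint $tail" | sed 's/^ *//; s/ *$//'
)

final_command ""      "ls -a"        # CMD only, no arguments: ls -a
final_command ""      "ls -a" -l     # CMD only, -l given: -l (not an executable, hence the error)
final_command "ls -a" ""      -l     # ENTRYPOINT, -l given: ls -a -l
```

The second call shows why docker run with a bare -l fails for the CMD image, and the third why the same argument works for the ENTRYPOINT image.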

Many Dockerfile instructions look alike, so compare them to learn the differences.

Exercise: build a Tomcat image

# Create a directory

[root@10-9-48-229 tomcat]# pwd

/home/chhh/build/tomcat

# Put the archives in it

[root@10-9-48-229 tomcat]# ls

apache-tomcat-9.0.37.tar.gz jdk-8u261-linux-x64.tar.gz

# Add a readme

touch readme.txt

# Write the Dockerfile (with exactly this file name, docker build finds it without -f)

vim Dockerfile

# Build the image

docker build -t diytomcat .

Contents of the Dockerfile (each instruction is one layer)

FROM centos

MAINTAINER chhh<810808038@qq.com>

COPY readme.txt /usr/local/readme.txt

ADD jdk-8u261-linux-x64.tar.gz /usr/local/

ADD apache-tomcat-9.0.37.tar.gz /usr/local/

RUN yum -y install vim

ENV MYPATH /usr/local/

WORKDIR $MYPATH

ENV JAVA_HOME /usr/local/jdk1.8.0_261

ENV CLASSPATH $JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

ENV CATALINA_HOME /usr/local/apache-tomcat-9.0.37

ENV CATALINA_BASE /usr/local/apache-tomcat-9.0.37

ENV PATH $PATH:$JAVA_HOME/bin:$CATALINA_HOME/lib:$CATALINA_HOME/bin

EXPOSE 8080

CMD /usr/local/apache-tomcat-9.0.37/bin/startup.sh && tail -F /usr/local/apache-tomcat-9.0.37/logs/catalina.out

Start a container from the image

docker images # diytomcat is listed

docker run -d -p 9090:8080 --name chhhtomcat -v /home/chhh/build/tomcat/test:/usr/local/apache-tomcat-9.0.37/webapps/test -v /home/chhh/build/tomcat/tomcatlogs/:/usr/local/apache-tomcat-9.0.37/logs diytomcat

curl localhost:9090

docker exec -it dd379f79f0b7 /bin/bash

Publish a project (hot deployment)

# Put the project into the mounted path /home/chhh/build/tomcat/test

vim index.jsp

<%@ page language="java" contentType="text/html; charset=UTF-8"

pageEncoding="UTF-8"%>

<!DOCTYPE html>

<html>

<head>

<meta charset="utf-8">

<title>以后用docker打包发布</title>

</head>

<body>

Hello World!<br/>

<%

System.out.println("---my test web logs---");

%>

</body>

</html>

mkdir WEB-INF

vim WEB-INF/web.xml

<?xml version="1.0" encoding="UTF-8"?>

<web-app xmlns="http://java.sun.com/xml/ns/javaee"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://java.sun.com/xml/ns/javaee

http://java.sun.com/xml/ns/javaee/web-app_2_5.xsd"

version="2.5">

</web-app>

The page loads, and the logs are now available under the mounted log path.

http://106.75.32.166:9090/test/

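Publish your own image

https://hub.docker.com/

docker login -u curryneymar # note to self: the password is in uppercase

# Tag the image

docker tag 544deda75f5c curryneymar/tomcat:1.0

docker images

# Push to the remote registry (this fails here because the server cannot reach the external network)

docker push curryneymar/tomcat:1.0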

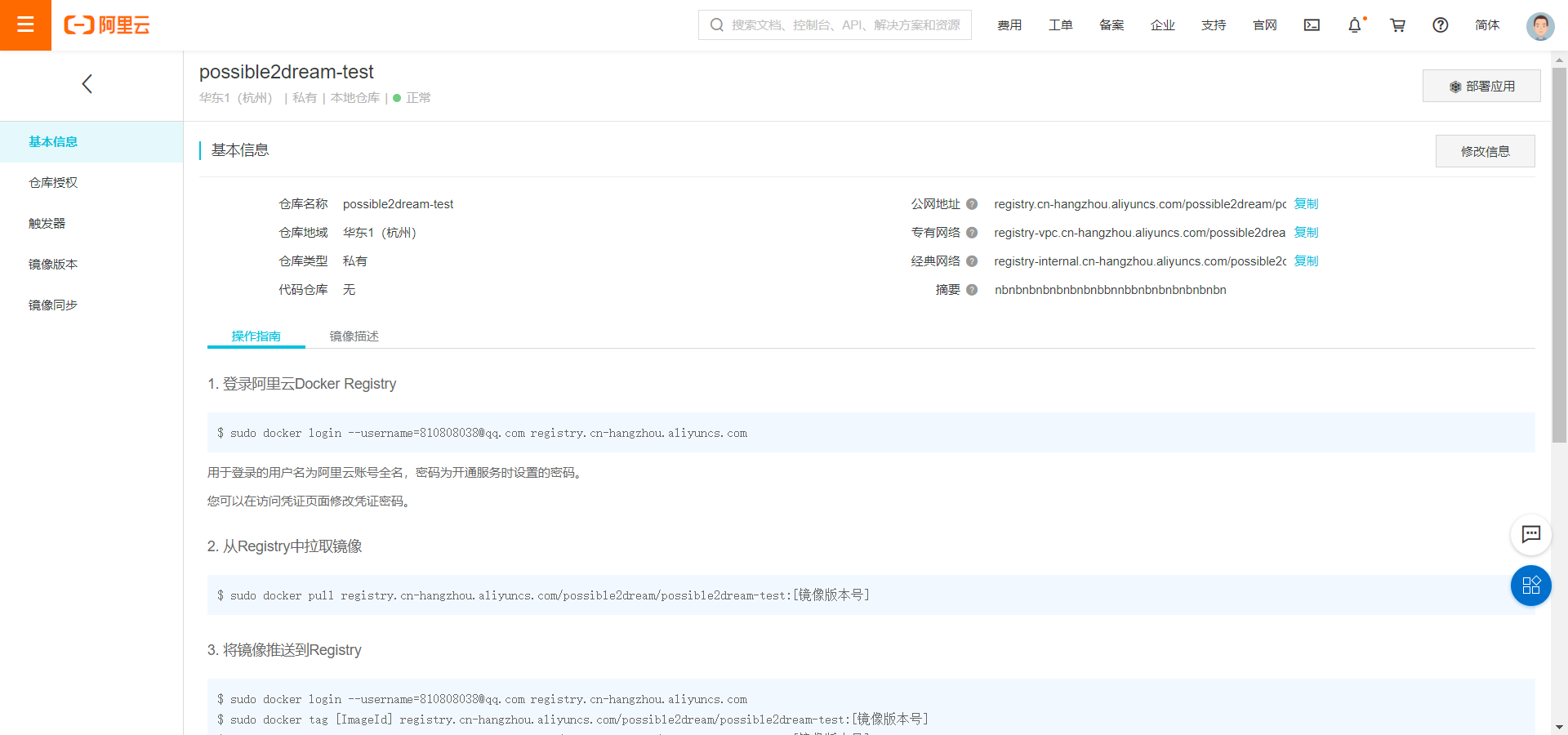

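Publish the image to Alibaba Cloud Container Registry

In the console: open Container Registry, create a namespace, then create an image repository (choose the local repository option)

Alibaba Cloud provides this service free of charge.

docker logout

sudo docker login --username=810808038@qq.com registry.cn-hangzhou.aliyuncs.com

sudo docker tag 544deda75f5c registry.cn-hangzhou.aliyuncs.com/possible2dream/possible2dream-test:1.0

sudo docker push registry.cn-hangzhou.aliyuncs.com/possible2dream/possible2dream-test:1.0

The push still kept getting interrupted.

docker save and docker load can also be used to export an image to a file for a colleague and to load an image file received from one.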

Docker networking

Network interfaces on the CentOS host

[root@10-9-48-229 ~]# ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000 # loopback interface

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1454 qdisc pfifo_fast state UP group default qlen 1000 # private network interface

link/ether 52:54:00:aa:21:ec brd ff:ff:ff:ff:ff:ff

inet 10.9.48.229/16 brd 10.9.255.255 scope global eth0

valid_lft forever preferred_lft forever

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default # Docker bridge

link/ether 02:42:de:f6:71:44 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0 # think of 172.17.0.1 as the router

valid_lft forever preferred_lft forever

Start a tomcat container

docker run -d -P --name tomcat01 tomcat

Show the network interfaces inside the container

[root@10-9-48-229 ~]# docker exec -it tomcat01 ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

86: eth0@if87: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

Ping the container's IP from the Linux host (it responds)

[root@10-9-48-229 ~]# ping 172.17.0.2

PING 172.17.0.2 (172.17.0.2) 56(84) bytes of data.

64 bytes from 172.17.0.2: icmp_seq=1 ttl=64 time=0.065 ms

64 bytes from 172.17.0.2: icmp_seq=2 ttl=64 time=0.059 ms

Installing Docker creates the docker0 interface, which is a bridge; containers are attached to it with veth pairs. Each time a container starts, Docker assigns it a new IP address.

Run ip addr on the host again after the container has started

[root@10-9-48-229 ~]# ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1454 qdisc pfifo_fast state UP group default qlen 1000

link/ether 52:54:00:aa:21:ec brd ff:ff:ff:ff:ff:ff

inet 10.9.48.229/16 brd 10.9.255.255 scope global eth0

valid_lft forever preferred_lft forever

3: docker0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:de:f6:71:44 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

87: vethf192272@if86: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue master docker0 state UP group default

link/ether 52:0e:44:3c:2e:52 brd ff:ff:ff:ff:ff:ff link-netnsid 0

Start another container

docker run -d -P --name tomcat02 tomcat

Check the host's interfaces once more (one more veth interface has appeared)

[root@10-9-48-229 ~]# ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1454 qdisc pfifo_fast state UP group default qlen 1000

link/ether 52:54:00:aa:21:ec brd ff:ff:ff:ff:ff:ff

inet 10.9.48.229/16 brd 10.9.255.255 scope global eth0

valid_lft forever preferred_lft forever

3: docker0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:de:f6:71:44 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

87: vethf192272@if86: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue master docker0 state UP group default

link/ether 52:0e:44:3c:2e:52 brd ff:ff:ff:ff:ff:ff link-netnsid 0

89: vethf48e178@if88: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue master docker0 state UP group default

link/ether ca:65:15:58:68:d2 brd ff:ff:ff:ff:ff:ff link-netnsid 1

Check the network inside the second container

[root@10-9-48-229 ~]# docker exec -it tomcat02 ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

88: eth0@if89: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:ac:11:00:03 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 172.17.0.3/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

Interfaces appear in pairs: Docker uses veth pairs, that is, two linked virtual interfaces, with docker0 acting as the bridge between the containers.
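The pairing is visible in the interface names: in "86: eth0@if87" the interface has index 86 and its peer has index 87, and the host shows the mirror image, "87: vethf192272@if86". As a small illustration, the sketch below checks that relationship on two lines copied from the ip addr output in these notes; own_index and peer_index are hypothetical helper names.

```sh
# Match the two ends of a veth pair by interface index.
# The sample lines are copied from the ip addr output in these notes.
own_index() {
  printf '%s\n' "$1" | sed -n 's/^\([0-9]*\):.*/\1/p'
}
peer_index() {
  printf '%s\n' "$1" | sed -n 's/^[0-9]*: [^@]*@if\([0-9]*\):.*/\1/p'
}

container_line='86: eth0@if87: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500'
host_line='87: vethf192272@if86: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500'

if [ "$(peer_index "$container_line")" = "$(own_index "$host_line")" ] &&
   [ "$(peer_index "$host_line")" = "$(own_index "$container_line")" ]; then
  echo "eth0 in the container and vethf192272 on the host are two ends of one veth pair"
fi
```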

docker0: 172.17.0.1 tomcat01: 172.17.0.2 tomcat02: 172.17.0.3

Ping tomcat01 from tomcat02 (it responds)

[root@10-9-48-229 ~]# docker exec -it tomcat02 ping 172.17.0.2

PING 172.17.0.2 (172.17.0.2) 56(84) bytes of data.

64 bytes from 172.17.0.2: icmp_seq=1 ttl=64 time=0.078 ms

64 bytes from 172.17.0.2: icmp_seq=2 ttl=64 time=0.059 ms

When a container stops, its veth pair is removed automatically.

--link

With --link, container 3 can ping container 2 by name instead of by IP address

docker run -d -P --name tomcat03 --link tomcat02 tomcat

docker exec -it tomcat03 ping tomcat02 # works; the reverse direction, from tomcat02 to tomcat03, does not

How it works (--link is no longer recommended; user-defined networks are used instead)

[root@10-9-48-229 ~]# docker exec -it tomcat03 cat /etc/hosts

127.0.0.1 localhost

::1 localhost ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

172.17.0.3 tomcat02 705da20dfb69

172.17.0.4 e4410508e461
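In other words, --link simply adds a line for the linked container to the hosts file of the new container, which is why the name resolves in one direction only. The sketch below reproduces the lookup against a copy of the relevant entries; resolve is a hypothetical helper and the temporary file stands in for /etc/hosts inside tomcat03.

```sh
# Look up a name in a hosts-format file, the way tomcat03 finds tomcat02.
# hosts_file stands in for /etc/hosts inside the container; resolve is a hypothetical helper.
hosts_file=$(mktemp)
cat > "$hosts_file" <<'EOF'
127.0.0.1 localhost
172.17.0.3 tomcat02 705da20dfb69
172.17.0.4 e4410508e461
EOF

resolve() {
  # Print the address of the first line that lists the name after the IP column.
  awk -v name="$1" '{ for (i = 2; i <= NF; i++) if ($i == name) { print $1; exit } }' "$hosts_file"
}

resolve tomcat02   # prints 172.17.0.3
resolve tomcat01   # prints nothing: there is no entry for that name
```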

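User-defined networks

Goal: connect containers to each other

docker network --help

docker network ls # list all Docker networks

docker network rm mynet # remove a network

Network modes

bridge: bridged networking (user-defined networks also use this driver)

none: no networking

host: share the host's network stack

container: share another container's network stack (rarely used, quite limited)

Create and use a user-defined network

# A container started without --net gets --net bridge by default, that is, docker0; this can be changed

docker run -d -P --name tomcat01 --net bridge tomcat

Containers on docker0 cannot reach each other by name, so create a custom network:

docker network create --driver bridge --subnet 192.168.0.0/16 --gateway 192.168.0.1 mynet

# Aside: in 192.168.0.0/16 the first 16 bits (192.168) are fixed and the remaining 16 bits are free, which gives 2^16 = 65536 addresses (65534 usable for hosts)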
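The size of a subnet follows directly from the prefix length: a /16 leaves 32 - 16 = 16 free bits. A minimal sketch of the arithmetic, with prefix as the only input:

```sh
# Number of addresses in an IPv4 subnet with the given prefix length.
prefix=16
total=$(( 1 << (32 - prefix) ))   # 2^(32 - prefix) addresses in the block
usable=$(( total - 2 ))           # minus the network and broadcast addresses
echo "/$prefix: $total addresses, $usable usable for hosts"
```

For the mynet example one of the usable addresses, 192.168.0.1, is taken by the gateway.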

docker network ls

docker network inspect mynet

docker run -d -P --name tomcat-net-01 --net mynet tomcat

docker run -d -P --name tomcat-net-02 --net mynet tomcat

docker network inspect mynet # both containers are now listed with their IP addresses

docker exec -it tomcat-net-01 ping 192.168.0.3

docker exec -it tomcat-net-01 ping tomcat-net-02 # works by name; I first got "Temporary failure in name resolution", which went away after restarting and recreating the network and containers

(Give different clusters different networks: keeping the networks isolated keeps the clusters healthy)

Connecting networks

Let containers on different networks (docker0 and mynet) ping each other

docker run -d -P --name tomcat-net-01 --net mynet tomcat

docker run -d -P --name tomcat-net-02 --net mynet tomcat

docker run -d -P --name tomcat01 tomcat

docker run -d -P --name tomcat02 tomcat

docker ps

# Pinging tomcat-net-01 on mynet directly from tomcat01 on docker0 fails

docker exec -it tomcat01 ping tomcat-net-01

# See docker network connect --help

# Connect the container tomcat01 to the network mynet

docker network connect mynet tomcat01

docker network inspect mynet

# Now tomcat01 can ping the containers on mynet

Redis cluster deployment in practice

(sharding + high availability + load balancing)

Create a bridge network and subnet for Redis

docker network create --driver bridge --subnet 172.38.0.0/16 --gateway 172.38.0.1 redis

docker network ls

docker network inspect redis

Use a script to create the six Redis configurations, one directory per node (a mistake in this script shows up later, when the nodes fail to start)

for port in $(seq 1 6);
do
mkdir -p /mydata/redis/node-${port}/conf
touch /mydata/redis/node-${port}/conf/redis.conf
cat << EOF >/mydata/redis/node-${port}/conf/redis.conf
port 6379
bind 0.0.0.0
cluster-enabled yes
cluster-config-file nodes.conf
cluster-node-timeout 5000
cluster-announce-ip 172.38.0.1${port}
cluster-announce-port 6379
cluster-announce-bus-port 16379
appendonly yes
EOF
done

Start the Redis nodes

# Node 1
docker run -p 6371:6379 -p 16371:16379 --name redis-1 \
    -v /mydata/redis/node-1/data:/data \
    -v /mydata/redis/node-1/conf/redis.conf:/etc/redis/redis.conf \
    -d --net redis --ip 172.38.0.11 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

# Node 2
docker run -p 6372:6379 -p 16372:16379 --name redis-2 \
    -v /mydata/redis/node-2/data:/data \
    -v /mydata/redis/node-2/conf/redis.conf:/etc/redis/redis.conf \
    -d --net redis --ip 172.38.0.12 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

# Node 3
docker run -p 6373:6379 -p 16373:16379 --name redis-3 \
    -v /mydata/redis/node-3/data:/data \
    -v /mydata/redis/node-3/conf/redis.conf:/etc/redis/redis.conf \
    -d --net redis --ip 172.38.0.13 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

# Node 4
docker run -p 6374:6379 -p 16374:16379 --name redis-4 \
    -v /mydata/redis/node-4/data:/data \
    -v /mydata/redis/node-4/conf/redis.conf:/etc/redis/redis.conf \
    -d --net redis --ip 172.38.0.14 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

# Node 5
docker run -p 6375:6379 -p 16375:16379 --name redis-5 \
    -v /mydata/redis/node-5/data:/data \
    -v /mydata/redis/node-5/conf/redis.conf:/etc/redis/redis.conf \
    -d --net redis --ip 172.38.0.15 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

# Node 6
docker run -p 6376:6379 -p 16376:16379 --name redis-6 \
    -v /mydata/redis/node-6/data:/data \
    -v /mydata/redis/node-6/conf/redis.conf:/etc/redis/redis.conf \
    -d --net redis --ip 172.38.0.16 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf
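The six commands differ only in the node number, so they can also be generated in a loop. The sketch below prints the commands instead of running them, which makes it easy to review them first; redis_run_cmd is a hypothetical helper, and the paths, ports, image tag and addresses are the ones used in these notes.

```sh
# Print (not run) the docker run command for Redis node n, with n from 1 to 6.
# redis_run_cmd is a hypothetical helper; values are taken from the commands in these notes.
redis_run_cmd() {
  n=$1
  echo "docker run -p 637$n:6379 -p 1637$n:16379 --name redis-$n" \
       "-v /mydata/redis/node-$n/data:/data" \
       "-v /mydata/redis/node-$n/conf/redis.conf:/etc/redis/redis.conf" \
       "-d --net redis --ip 172.38.0.1$n redis:5.0.9-alpine3.11" \
       "redis-server /etc/redis/redis.conf"
}

for n in $(seq 1 6); do
  redis_run_cmd "$n"
done
```

Once the printed commands look right, piping the loop's output to sh would execute them.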

Open an interactive shell in one Redis node and create the cluster

[root@10-9-48-229 /]# docker exec -it redis-1 /bin/sh

/data # redis-cli --cluster create 172.38.0.11:6379 172.38.0.12:6379 \
> 172.38.0.13:6379 172.38.0.14:6379 172.38.0.15:6379 \
> 172.38.0.16:6379 --cluster-replicas 1

(The leading > on the second and third lines is the shell's continuation prompt; do not type it.)

>>> Performing hash slots allocation on 6 nodes...

Master[0] -> Slots 0 - 5460

Master[1] -> Slots 5461 - 10922

Master[2] -> Slots 10923 - 16383

Adding replica 172.38.0.15:6379 to 172.38.0.11:6379

Adding replica 172.38.0.16:6379 to 172.38.0.12:6379

Adding replica 172.38.0.14:6379 to 172.38.0.13:6379

M: 25473413da7c71327c6b03fea2d6ee96736a0759 172.38.0.11:6379

slots:[0-5460] (5461 slots) master

M: 2744e22697f4138ae5298e11198efb5f8b993654 172.38.0.12:6379

slots:[5461-10922] (5462 slots) master

M: a9e53fc5b4c2a526e0f589548236d3f05e3913d1 172.38.0.13:6379

slots:[10923-16383] (5461 slots) master

S: b740f3099a7e4087bcef77bc0696b48d5ccee1dc 172.38.0.14:6379

replicates a9e53fc5b4c2a526e0f589548236d3f05e3913d1

S: a4bc0a705ffc5745332581f1fe4f563cfced51b4 172.38.0.15:6379

replicates 25473413da7c71327c6b03fea2d6ee96736a0759

S: 90477b979e90ec3ce96abb4c5e5b768fba81c4fb 172.38.0.16:6379

replicates 2744e22697f4138ae5298e11198efb5f8b993654

Can I set the above configuration? (type 'yes' to accept): yes

>>> Nodes configuration updated

>>> Assign a different config epoch to each node

>>> Sending CLUSTER MEET messages to join the cluster

Waiting for the cluster to join

....

>>> Performing Cluster Check (using node 172.38.0.11:6379)

M: 25473413da7c71327c6b03fea2d6ee96736a0759 172.38.0.11:6379

slots:[0-5460] (5461 slots) master

1 additional replica(s)

M: 2744e22697f4138ae5298e11198efb5f8b993654 172.38.0.12:6379

slots:[5461-10922] (5462 slots) master

1 additional replica(s)

S: b740f3099a7e4087bcef77bc0696b48d5ccee1dc 172.38.0.14:6379

slots: (0 slots) slave

replicates a9e53fc5b4c2a526e0f589548236d3f05e3913d1

M: a9e53fc5b4c2a526e0f589548236d3f05e3913d1 172.38.0.13:6379

slots:[10923-16383] (5461 slots) master

1 additional replica(s)

S: 90477b979e90ec3ce96abb4c5e5b768fba81c4fb 172.38.0.16:6379

slots: (0 slots) slave

replicates 2744e22697f4138ae5298e11198efb5f8b993654

S: a4bc0a705ffc5745332581f1fe4f563cfced51b4 172.38.0.15:6379

slots: (0 slots) slave

replicates 25473413da7c71327c6b03fea2d6ee96736a0759

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

>>> Check slots coverage...

[OK] All 16384 slots covered.

/data #

Test (replication and failover)

/data # redis-cli -c

127.0.0.1:6379> cluster info

cluster_state:ok

cluster_slots_assigned:16384

cluster_slots_ok:16384

cluster_slots_pfail:0

cluster_slots_fail:0

cluster_known_nodes:6

cluster_size:3

cluster_current_epoch:6

cluster_my_epoch:1

cluster_stats_messages_ping_sent:412

cluster_stats_messages_pong_sent:423

cluster_stats_messages_sent:835

cluster_stats_messages_ping_received:418

cluster_stats_messages_pong_received:412

cluster_stats_messages_meet_received:5

cluster_stats_messages_received:835

127.0.0.1:6379> cluster nodes

2744e22697f4138ae5298e11198efb5f8b993654 172.38.0.12:6379@16379 master - 0 1599792899841 2 connected 5461-10922

25473413da7c71327c6b03fea2d6ee96736a0759 172.38.0.11:6379@16379 myself,master - 0 1599792900000 1 connected 0-5460

b740f3099a7e4087bcef77bc0696b48d5ccee1dc 172.38.0.14:6379@16379 slave a9e53fc5b4c2a526e0f589548236d3f05e3913d1 0 1599792900000 4 connected

a9e53fc5b4c2a526e0f589548236d3f05e3913d1 172.38.0.13:6379@16379 master - 0 1599792899540 3 connected 10923-16383

90477b979e90ec3ce96abb4c5e5b768fba81c4fb 172.38.0.16:6379@16379 slave 2744e22697f4138ae5298e11198efb5f8b993654 0 1599792900542 6 connected

a4bc0a705ffc5745332581f1fe4f563cfced51b4 172.38.0.15:6379@16379 slave 25473413da7c71327c6b03fea2d6ee96736a0759 0 1599792899540 5 connected

127.0.0.1:6379> set a b

-> Redirected to slot [15495] located at 172.38.0.13:6379

OK

(Now stop redis-3, the node at 172.38.0.13, from the host: docker stop 34e7ddf06f3b)

172.38.0.13:6379> get a

Error: Operation timed out

/data # redis-cli -c

127.0.0.1:6379> get a

-> Redirected to slot [15495] located at 172.38.0.14:6379

"b"

172.38.0.14:6379> cluster nodes

90477b979e90ec3ce96abb4c5e5b768fba81c4fb 172.38.0.16:6379@16379 slave 2744e22697f4138ae5298e11198efb5f8b993654 0 1599793402383 6 connected

b740f3099a7e4087bcef77bc0696b48d5ccee1dc 172.38.0.14:6379@16379 myself,master - 0 1599793402000 7 connected 10923-16383

25473413da7c71327c6b03fea2d6ee96736a0759 172.38.0.11:6379@16379 master - 0 1599793402000 1 connected 0-5460

2744e22697f4138ae5298e11198efb5f8b993654 172.38.0.12:6379@16379 master - 0 1599793401882 2 connected 5461-10922

a4bc0a705ffc5745332581f1fe4f563cfced51b4 172.38.0.15:6379@16379 slave 25473413da7c71327c6b03fea2d6ee96736a0759 0 1599793402000 5 connected

a9e53fc5b4c2a526e0f589548236d3f05e3913d1 172.38.0.13:6379@16379 master,fail - 1599793229967 1599793228000 3 connected

172.38.0.14:6379>
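The slot number in the redirect message is not arbitrary: Redis Cluster maps a key to CRC16(key) mod 16384, and the three masters each own a range of those slots. The sketch below recomputes the slot for the key a; key_slot is a hypothetical helper that implements the CRC16 variant used by Redis Cluster (XMODEM, polynomial 0x1021) and, as a simplification, ignores hash tags in braces.

```sh
# Compute the Redis Cluster hash slot of a key: CRC16 (XMODEM variant) mod 16384.
# key_slot is a hypothetical helper; hash tags such as {user}:1 are not handled.
key_slot() (
  crc=0
  # od prints every byte of the key as an unsigned decimal number.
  for byte in $(printf '%s' "$1" | od -An -v -tu1); do
    crc=$(( crc ^ (byte << 8) ))
    bit=0
    while [ "$bit" -lt 8 ]; do
      if [ $(( crc & 0x8000 )) -ne 0 ]; then
        crc=$(( ((crc << 1) ^ 0x1021) & 0xFFFF ))
      else
        crc=$(( (crc << 1) & 0xFFFF ))
      fi
      bit=$(( bit + 1 ))
    done
  done
  echo $(( crc % 16384 ))
)

key_slot a   # prints 15495, the slot named in the redirect for "set a b"
```

Slot 15495 falls in the range 10923-16383, which was owned by 172.38.0.13 and, after the failover, by its former replica 172.38.0.14.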

Packaging a Spring Boot microservice as a Docker image

Create a Spring Boot project

package com.example.demo.controller;

import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RestController;

@RestController
public class HelloController {
    @RequestMapping("/hello")
    public String hello(){
        return "hello,xxx";
    }
}

http://localhost:8080/hello responds as expected

Create a Dockerfile in the project (the Docker plugin for IDEA is used here mainly for Dockerfile syntax highlighting)

FROM java:8

COPY *.jar /app.jar

CMD ["--server.port=8080"]

EXPOSE 8080

ENTRYPOINT ["java","-jar","/app.jar"]

Create a directory on the server and upload the files

[root@10-9-48-229 idea]# pwd

/home/idea

[root@10-9-48-229 idea]# ls

demo-0.0.1-SNAPSHOT.jar Dockerfile

Build the image, start a container, and access it

docker build -t chhh192 . # build the image

docker images # list images

docker run -d -P --name chhh-springboot-web chhh192 # start a container from the image

docker ps # find the randomly assigned host port

curl localhost:32773/hello # test from the server's own shell; no firewall port needs to be opened, which is convenient for testing
