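HRegionServer Abnormal Shutdown Problem

==Versions==

Hadoop: 2.7.1

HBase: 1.2.1

Zookeeper: 3.4.6

==Symptom==

After the HBase cluster has been running for a while, some HRegionServers go offline abnormally and then restart (they are started through systemctl, so they are restarted automatically).

==Logs==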

2018-09-21 00:27:51,972 WARN [regionserver/dscn34/10.11.2.32:16020] util.Sleeper: We slept 54143ms instead of 3000ms, this is likely due to a long garbage collecting pause and it's usually bad, see http://hbase.apache.org/book.html#trouble.rs.runtime.zkexpired
2018-09-21 00:27:51,972 INFO [dscn34,16020,1537407400327_ChoreService_2] regionserver.HRegionServer: dscn34,16020,1537407400327-MemstoreFlusherChore requesting flush of DBN_DHL,91,1473221287052.36e00f06c2b59dc4283f5d73ee91efed. because if has an old edit so flush to free WALs after random delay 77512ms
2018-09-21 00:27:51,972 WARN [JvmPauseMonitor] util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 51038ms
GC pool 'ParNew' had collection(s): count=1 time=41ms
2018-09-21 00:27:51,972 INFO [dscn34,16020,1537407400327_ChoreService_2] regionserver.HRegionServer: dscn34,16020,1537407400327-MemstoreFlusherChore requesting flush of DBN_FEDEX,24,1473221280323.b0e731a473f4c6d692dd5e2aa520289a. because if has an old edit so flush to free WALs after random delay 12692ms
2018-09-21 00:27:51,972 INFO [dscn34,16020,1537407400327_ChoreService_2] regionserver.HRegionServer: dscn34,16020,1537407400327-MemstoreFlusherChore requesting flush of DBN_ZTO,722417400271,1522145895300.7d6038e7685346c2a0e13924f123d83e. because if has an old edit so flush to free WALs after random delay 233845ms
2018-09-21 00:27:51,980 INFO [dscn34,16020,1537407400327_ChoreService_2] regionserver.HRegionServer: dscn34,16020,1537407400327-MemstoreFlusherChore requesting flush of DBN_EMS,7837291049398,1534937258760.041625bd69778d1db922c3965ca7cf08. because if has an old edit so flush to free WALs after random delay 179626ms
2018-09-21 00:27:51,980 INFO [dscn34,16020,1537407400327_ChoreService_2] regionserver.HRegionServer: dscn34,16020,1537407400327-MemstoreFlusherChore requesting flush of DBN_APEX,56,1478569982861.aee12cc19b94ea7615a8ba75b6e9f9c6. because if has an old edit so flush to free WALs after random delay 96643ms
2018-09-21 00:27:51,992 WARN [PriorityRpcServer.handler=10,queue=0,port=16020] ipc.RpcServer: (responseTooSlow): {"call":"Scan(org.apache.hadoop.hbase.protobuf.generated.ClientProtos$ScanRequest)","starttimems":1537460820587,"responsesize":1763,"method":"Scan","processingtimems":51404,"client":"10.11.2.32:43512","queuetimems":0,"class":"HRegionServer"}
2018-09-21 00:27:51,994 WARN [PriorityRpcServer.handler=1,queue=1,port=16020] ipc.RpcServer: (responseTooSlow): {"call":"Scan(org.apache.hadoop.hbase.protobuf.generated.ClientProtos$ScanRequest)","starttimems":1537460820588,"responsesize":1851,"method":"Scan","processingtimems":51393,"client":"10.11.2.4:49553","queuetimems":0,"class":"HRegionServer"}
2018-09-21 00:27:51,994 WARN [ResponseProcessor for block BP-1370501599-10.11.2.4-1466755309516:blk_1198373776_124709107] hdfs.DFSClient: Slow ReadProcessor read fields took 51384ms (threshold=30000ms); ack: seqno: 53686 status: SUCCESS status: SUCCESS downstreamAckTimeNanos: 353471 4: "\000\000", targets: [10.11.2.32:50010, 10.11.2.15:50010]
2018-09-21 00:27:51,994 WARN [sync.4] hdfs.DFSClient: Slow waitForAckedSeqno took 51398ms (threshold=30000ms)
2018-09-21 00:27:51,994 WARN [sync.0] hdfs.DFSClient: Slow waitForAckedSeqno took 51398ms (threshold=30000ms)
2018-09-21 00:27:52,000 WARN [sync.2] hdfs.DFSClient: Slow waitForAckedSeqno took 51404ms (threshold=30000ms)
2018-09-21 00:27:52,000 INFO [sync.2] wal.FSHLog: Slow sync cost: 51404 ms, current pipeline: [10.11.2.32:50010, 10.11.2.15:50010]
2018-09-21 00:27:52,000 WARN [PriorityRpcServer.handler=7,queue=1,port=16020] ipc.RpcServer: (responseTooSlow): {"call":"Multi(org.apache.hadoop.hbase.protobuf.generated.ClientProtos$MultiRequest)","starttimems":1537460820589,"responsesize":8,"method":"Multi","processingtimems":51411,"client":"10.11.2.25:41292","queuetimems":0,"class":"HRegionServer"}
2018-09-21 00:27:52,000 WARN [PriorityRpcServer.handler=12,queue=0,port=16020] ipc.RpcServer: (responseTooSlow): {"call":"Multi(org.apache.hadoop.hbase.protobuf.generated.ClientProtos$MultiRequest)","starttimems":1537460820589,"responsesize":8,"method":"Multi","processingtimems":51411,"client":"10.11.2.30:60065","queuetimems":0,"class":"HRegionServer"}
2018-09-21 00:27:52,000 WARN [PriorityRpcServer.handler=18,queue=0,port=16020] ipc.RpcServer: (responseTooSlow): {"call":"Multi(org.apache.hadoop.hbase.protobuf.generated.ClientProtos$MultiRequest)","starttimems":1537460820591,"responsesize":8,"method":"Multi","processingtimems":51409,"client":"10.11.2.31:50843","queuetimems":0,"class":"HRegionServer"}
2018-09-21 00:27:52,000 WARN [ResponseProcessor for block BP-1370501599-10.11.2.4-1466755309516:blk_1198373776_124709107] hdfs.DFSClient: DFSOutputStream ResponseProcessor exception for block BP-1370501599-10.11.2.4-1466755309516:blk_1198373776_124709107
java.io.EOFException: Premature EOF: no length prefix available
    at org.apache.hadoop.hdfs.protocolPB.PBHelper.vintPrefixed(PBHelper.java:2000)
    at org.apache.hadoop.hdfs.protocol.datatransfer.PipelineAck.readFields(PipelineAck.java:176)
    at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer$ResponseProcessor.run(DFSOutputStream.java:798)
2018-09-21 00:27:52,000 WARN [sync.3] hdfs.DFSClient: Slow waitForAckedSeqno took 51404ms (threshold=30000ms)
2018-09-21 00:27:52,001 INFO [sync.3] wal.FSHLog: Slow sync cost: 51405 ms, current pipeline: [10.11.2.32:50010, 10.11.2.15:50010]
2018-09-21 00:27:51,999 WARN [PriorityRpcServer.handler=17,queue=1,port=16020] ipc.RpcServer: (responseTooSlow): {"call":"Multi(org.apache.hadoop.hbase.protobuf.generated.ClientProtos$MultiRequest)","starttimems":1537460820588,"responsesize":8,"method":"Multi","processingtimems":51410,"client":"10.11.2.33:37983","queuetimems":0,"class":"HRegionServer"}
2018-09-21 00:27:51,999 WARN [PriorityRpcServer.handler=13,queue=1,port=16020] ipc.RpcServer: (responseTooSlow): {"call":"Multi(org.apache.hadoop.hbase.protobuf.generated.ClientProtos$MultiRequest)","starttimems":1537460820594,"responsesize":8,"method":"Multi","processingtimems":51404,"client":"10.11.2.31:51053","queuetimems":0,"class":"HRegionServer"}
2018-09-21 00:27:52,001 WARN [PriorityRpcServer.handler=0,queue=0,port=16020] ipc.RpcServer: (responseTooSlow): {"call":"Multi(org.apache.hadoop.hbase.protobuf.generated.ClientProtos$MultiRequest)","starttimems":1537460820589,"responsesize":8,"method":"Multi","processingtimems":51412,"client":"10.11.2.31:50956","queuetimems":0,"class":"HRegionServer"}
2018-09-21 00:27:52,001 WARN [PriorityRpcServer.handler=14,queue=0,port=16020] ipc.RpcServer: (responseTooSlow): {"call":"Scan(org.apache.hadoop.hbase.protobuf.generated.ClientProtos$ScanRequest)","starttimems":1537460820596,"responsesize":987,"method":"Scan","processingtimems":51405,"client":"10.11.2.32:42054","queuetimems":0,"class":"HRegionServer"}
2018-09-21 00:27:52,001 WARN [DataStreamer for file /hbase/WALs/dscn34,16020,1537407400327/dscn34%2C16020%2C1537407400327.default.1537460773988 block BP-1370501599-10.11.2.4-1466755309516:blk_1198373776_124709107] hdfs.DFSClient: Error Recovery for block BP-1370501599-10.11.2.4-1466755309516:blk_1198373776_124709107 in pipeline 10.11.2.32:50010, 10.11.2.15:50010: bad datanode 10.11.2.32:50010
2018-09-21 00:27:51,994 INFO [sync.4] wal.FSHLog: Slow sync cost: 51398 ms, current pipeline: [10.11.2.32:50010, 10.11.2.15:50010]
2018-09-21 00:27:51,994 WARN [sync.1] hdfs.DFSClient: Slow waitForAckedSeqno took 51398ms (threshold=30000ms)
2018-09-21 00:27:52,001 WARN [PriorityRpcServer.handler=2,queue=0,port=16020] ipc.RpcServer: (responseTooSlow): {"call":"Multi(org.apache.hadoop.hbase.protobuf.generated.ClientProtos$MultiRequest)","starttimems":1537460820588,"responsesize":8,"method":"Multi","processingtimems":51413,"client":"10.11.2.24:36206","queuetimems":0,"class":"HRegionServer"}
2018-09-21 00:27:52,000 WARN [PriorityRpcServer.handler=4,queue=0,port=16020] ipc.RpcServer: (responseTooSlow): {"call":"Multi(org.apache.hadoop.hbase.protobuf.generated.ClientProtos$MultiRequest)","starttimems":1537460820590,"responsesize":8,"method":"Multi","processingtimems":51410,"client":"10.11.2.25:41236","queuetimems":0,"class":"HRegionServer"}
2018-09-21 00:27:52,000 WARN [PriorityRpcServer.handler=19,queue=1,port=16020] ipc.RpcServer: (responseTooSlow): {"call":"Multi(org.apache.hadoop.hbase.protobuf.generated.ClientProtos$MultiRequest)","starttimems":1537460820589,"responsesize":8,"method":"Multi","processingtimems":51411,"client":"10.11.2.25:41436","queuetimems":0,"class":"HRegionServer"}
2018-09-21 00:27:52,002 WARN [PriorityRpcServer.handler=15,queue=1,port=16020] ipc.RpcServer: (responseTooSlow): {"call":"Multi(org.apache.hadoop.hbase.protobuf.generated.ClientProtos$MultiRequest)","starttimems":1537460820589,"responsesize":8,"method":"Multi","processingtimems":51413,"client":"10.11.2.31:51096","queuetimems":0,"class":"HRegionServer"}
2018-09-21 00:27:52,002 WARN [sync.1] wal.FSHLog: HDFS pipeline error detected. Found 1 replicas but expecting no less than 2 replicas. Requesting close of WAL. current pipeline: [10.11.2.15:50010]
2018-09-21 00:27:52,002 INFO [sync.1] wal.FSHLog: Slow sync cost: 51406 ms, current pipeline: [10.11.2.15:50010]
2018-09-21 00:27:52,000 INFO [sync.0] wal.FSHLog: Slow sync cost: 51404 ms, current pipeline: [10.11.2.32:50010, 10.11.2.15:50010]
2018-09-21 00:27:52,002 WARN [PriorityRpcServer.handler=8,queue=0,port=16020] ipc.RpcServer: (responseTooSlow): {"call":"Multi(org.apache.hadoop.hbase.protobuf.generated.ClientProtos$MultiRequest)","starttimems":1537460820590,"responsesize":8,"method":"Multi","processingtimems":51412,"client":"10.11.2.32:47637","queuetimems":0,"class":"HRegionServer"}
2018-09-21 00:27:52,007 WARN [PriorityRpcServer.handler=5,queue=1,port=16020] ipc.RpcServer: (responseTooSlow): {"call":"Multi(org.apache.hadoop.hbase.protobuf.generated.ClientProtos$MultiRequest)","starttimems":1537460820591,"responsesize":8,"method":"Multi","processingtimems":51411,"client":"10.11.2.32:47398","queuetimems":0,"class":"HRegionServer"}
2018-09-21 00:27:52,026 WARN [DataStreamer for file /hbase/WALs/dscn34,16020,1537407400327/dscn34%2C16020%2C1537407400327.default.1537460773988 block BP-1370501599-10.11.2.4-1466755309516:blk_1198373776_124709107] hdfs.DFSClient: DataStreamer Exception
org.apache.hadoop.ipc.RemoteException(java.io.IOException): BP-1370501599-10.11.2.4-1466755309516:blk_1198373776_124709107 does not exist or is not under Constructionblk_1198373776_124710071
    at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.checkUCBlock(FSNamesystem.java:6238)
    at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.updateBlockForPipeline(FSNamesystem.java:6305)
    at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.updateBlockForPipeline(NameNodeRpcServer.java:804)
    at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.updateBlockForPipeline(ClientNamenodeProtocolServerSideTranslatorPB.java:955)
    at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java)
    at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:616)
    at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:969)
    at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2049)
    at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2045)
    at java.security.AccessController.doPrivileged(Native Method)
    at javax.security.auth.Subject.doAs(Subject.java:422)
    at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1657)
    at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2043)
    at org.apache.hadoop.ipc.Client.call(Client.java:1411)
    at org.apache.hadoop.ipc.Client.call(Client.java:1364)
    at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:206)
    at com.sun.proxy.$Proxy16.updateBlockForPipeline(Unknown Source)
    at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.updateBlockForPipeline(ClientNamenodeProtocolTranslatorPB.java:832)
    at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
    at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
    at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
    at java.lang.reflect.Method.invoke(Method.java:497)
    at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:187)
    at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:102)
    at com.sun.proxy.$Proxy17.updateBlockForPipeline(Unknown Source)
    at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
    at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
    at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
    at java.lang.reflect.Method.invoke(Method.java:497)
    at org.apache.hadoop.hbase.fs.HFileSystem$1.invoke(HFileSystem.java:279)
    at com.sun.proxy.$Proxy18.updateBlockForPipeline(Unknown Source)
    at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.setupPipelineForAppendOrRecovery(DFSOutputStream.java:1188)
    at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.processDatanodeError(DFSOutputStream.java:933)
    at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:487)
2018-09-21 00:27:52,026 FATAL [regionserver/dscn34/10.11.2.32:16020.logRoller] regionserver.LogRoller: Aborting
java.io.IOException: cannot get log writer
    at org.apache.hadoop.hbase.wal.DefaultWALProvider.createWriter(DefaultWALProvider.java:365)
    at org.apache.hadoop.hbase.regionserver.wal.FSHLog.createWriterInstance(FSHLog.java:724)
    at org.apache.hadoop.hbase.regionserver.wal.FSHLog.rollWriter(FSHLog.java:689)
    at org.apache.hadoop.hbase.regionserver.LogRoller.run(LogRoller.java:148)
    at java.lang.Thread.run(Thread.java:745)
Caused by: java.io.FileNotFoundException: Parent directory doesn't exist: /hbase/WALs/dscn34,16020,1537407400327
    at org.apache.hadoop.hdfs.server.namenode.FSDirectory.verifyParentDir(FSDirectory.java:1722)
    at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.startFileInternal(FSNamesystem.java:2520)
    at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.startFileInt(FSNamesystem.java:2452)
    at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.startFile(FSNamesystem.java:2335)
    at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.create(NameNodeRpcServer.java:623)
    at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.create(ClientNamenodeProtocolServerSideTranslatorPB.java:397)
    at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java)
    at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:616)
    at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:969)
    at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2049)
    at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2045)
    at java.security.AccessController.doPrivileged(Native Method)
    at javax.security.auth.Subject.doAs(Subject.java:422)
    at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1657)
    at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2043)
    at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)
    at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)
    at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)
    at java.lang.reflect.Constructor.newInstance(Constructor.java:422)
    at org.apache.hadoop.ipc.RemoteException.instantiateException(RemoteException.java:106)
    at org.apache.hadoop.ipc.RemoteException.unwrapRemoteException(RemoteException.java:73)
    at org.apache.hadoop.hdfs.DFSOutputStream.newStreamForCreate(DFSOutputStream.java:1616)
    at org.apache.hadoop.hdfs.DFSClient.create(DFSClient.java:1488)
    at org.apache.hadoop.hdfs.DFSClient.create(DFSClient.java:1448)
    at org.apache.hadoop.hdfs.DistributedFileSystem$7.doCall(DistributedFileSystem.java:430)
    at org.apache.hadoop.hdfs.DistributedFileSystem$7.doCall(DistributedFileSystem.java:426)
    at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:81)
    at org.apache.hadoop.hdfs.DistributedFileSystem.createNonRecursive(DistributedFileSystem.java:426)
    at org.apache.hadoop.fs.FileSystem.createNonRecursive(FileSystem.java:1110)
    at org.apache.hadoop.fs.FileSystem.createNonRecursive(FileSystem.java:1086)
    at org.apache.hadoop.hbase.regionserver.wal.ProtobufLogWriter.init(ProtobufLogWriter.java:90)
    at org.apache.hadoop.hbase.wal.DefaultWALProvider.createWriter(DefaultWALProvider.java:361)
    ... 4 more
Caused by: org.apache.hadoop.ipc.RemoteException(java.io.FileNotFoundException): Parent directory doesn't exist: /hbase/WALs/dscn34,16020,1537407400327
    at org.apache.hadoop.hdfs.server.namenode.FSDirectory.verifyParentDir(FSDirectory.java:1722)
    at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.startFileInternal(FSNamesystem.java:2520)
    at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.startFileInt(FSNamesystem.java:2452)
    at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.startFile(FSNamesystem.java:2335)
    at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.create(NameNodeRpcServer.java:623)
    at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.create(ClientNamenodeProtocolServerSideTranslatorPB.java:397)
    at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java)
    at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:616)
    at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:969)
    at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2049)
    at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2045)
    at java.security.AccessController.doPrivileged(Native Method)
    at javax.security.auth.Subject.doAs(Subject.java:422)
    at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1657)
    at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2043)
    at org.apache.hadoop.ipc.Client.call(Client.java:1411)
    at org.apache.hadoop.ipc.Client.call(Client.java:1364)
    at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:206)
    at com.sun.proxy.$Proxy16.create(Unknown Source)
    at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.create(ClientNamenodeProtocolTranslatorPB.java:264)
    at sun.reflect.GeneratedMethodAccessor24.invoke(Unknown Source)
    at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
    at java.lang.reflect.Method.invoke(Method.java:497)
    at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:187)
    at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:102)
    at com.sun.proxy.$Proxy17.create(Unknown Source)
    at sun.reflect.GeneratedMethodAccessor24.invoke(Unknown Source)
    at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
    at java.lang.reflect.Method.invoke(Method.java:497)
    at org.apache.hadoop.hbase.fs.HFileSystem$1.invoke(HFileSystem.java:279)
    at com.sun.proxy.$Proxy18.create(Unknown Source)
    at org.apache.hadoop.hdfs.DFSOutputStream.newStreamForCreate(DFSOutputStream.java:1612)
    ... 14 more

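==Cause==

The log of the HRegionServer that went offline shows a pause of approximately 51038 ms, which JvmPauseMonitor attributes to the JVM or the host machine (for example, GC). Note that the same entry reports only 41 ms for the single ParNew collection, so the log alone does not prove that the whole pause was spent in the collector.

According to reports online, in most cases this happens because the GC pause is too long and exceeds the session timeout between the HRegionServer and ZooKeeper. The server is then judged to be abnormal, which forces the HRegionServer offline.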
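To make the comparison concrete, here is a minimal sketch, assuming the `JvmPauseMonitor` message format seen in the log above, that extracts the reported pause from a log line and compares it with a session timeout supplied by the caller. The class and method names (`PauseLogSketch`, `pauseMillis`, `exceedsTimeout`) are hypothetical helpers for illustration and are not part of HBase.

```java
import java.util.OptionalLong;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper, not part of HBase: parses the pause length out of a
// JvmPauseMonitor log line of the form seen in the log above.
final class PauseLogSketch {
    // Assumption: the message contains "pause of approximately <N>ms".
    private static final Pattern PAUSE =
            Pattern.compile("pause of approximately (\\d+)ms");

    /** Returns the pause in milliseconds, or empty if the line has no pause report. */
    static OptionalLong pauseMillis(String logLine) {
        Matcher m = PAUSE.matcher(logLine);
        return m.find()
                ? OptionalLong.of(Long.parseLong(m.group(1)))
                : OptionalLong.empty();
    }

    /** True when the line reports a pause longer than the given session timeout. */
    static boolean exceedsTimeout(String logLine, long sessionTimeoutMs) {
        OptionalLong pause = pauseMillis(logLine);
        return pause.isPresent() && pause.getAsLong() > sessionTimeoutMs;
    }

    public static void main(String[] args) {
        String line = "2018-09-21 00:27:51,972 WARN [JvmPauseMonitor] util.JvmPauseMonitor: "
                + "Detected pause in JVM or host machine (eg GC): pause of approximately 51038ms";
        System.out.println(pauseMillis(line).getAsLong()); // prints 51038
    }
}
```

The timeout is left as a parameter on purpose: which value is actually in effect depends on both the HBase and the ZooKeeper configuration.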

So what is the session timeout for HBase's connection to ZooKeeper?

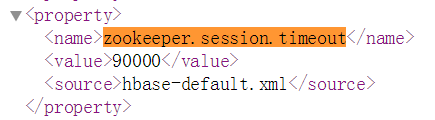

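This is what the HBase configuration page shows; the pause did not exceed the timeout configured there.

Here is how the official HBase documentation describes this setting: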

<property>
  <name>zookeeper.session.timeout</name>
  <value>90000</value>
  <description>ZooKeeper session timeout in milliseconds. It is used in two different ways.
    First, this value is used in the ZK client that HBase uses to connect to the ensemble.
    It is also used by HBase when it starts a ZK server and it is passed as the 'maxSessionTimeout'.
    See http://hadoop.apache.org/zookeeper/docs/current/zookeeperProgrammers.html#ch_zkSessions.
    For example, if a HBase region server connects to a ZK ensemble that's also managed by HBase,
    then the session timeout will be the one specified by this configuration.
    But, a region server that connects to an ensemble managed with a different configuration
    will be subjected that ensemble's maxSessionTimeout.
    So, even though HBase might propose using 90 seconds, the ensemble can have a max timeout
    lower than this and it will take precedence.
    The current default that ZK ships with is 40 seconds, which is lower than HBase's.
  </description>
</property>

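Pay attention to the last sentences:

HBase proposes 90 seconds, but if the ensemble's maximum timeout is lower than that, the lower value takes precedence. Note that ZooKeeper's default is 40 seconds.

That is an important clue, so let's look at the ZooKeeper timeout.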

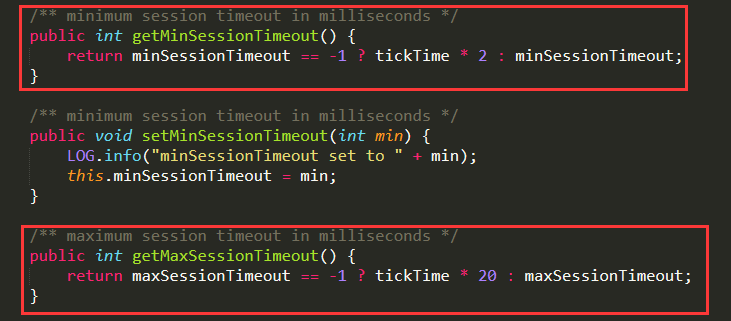

First, look at how the ZooKeeper source code computes the session timeout bounds, as shown in the image above.

The code is as follows:

/** minimum session timeout in milliseconds */
public int getMinSessionTimeout() {
    return minSessionTimeout == -1 ? tickTime * 2 : minSessionTimeout;
}

/** maximum session timeout in milliseconds */
public int getMaxSessionTimeout() {
    return maxSessionTimeout == -1 ? tickTime * 20 : maxSessionTimeout;
}

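Next, the ZooKeeper startup log below shows that tickTime is the default 2000 ms and that minSessionTimeout and maxSessionTimeout are both unset (-1).

So the maximum ZooKeeper session timeout is 2000 * 20 = 40000 ms, that is, 40 s.

In other words, the effective session timeout between the HRegionServer and ZooKeeper is 40 seconds, not the 90 seconds that HBase requests.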

2018-06-14 14:25:32,004 [myid:2] - INFO [main:QuorumPeer@959] - tickTime set to 2000
2018-06-14 14:25:32,004 [myid:2] - INFO [main:QuorumPeer@979] - minSessionTimeout set to -1
2018-06-14 14:25:32,004 [myid:2] - INFO [main:QuorumPeer@990] - maxSessionTimeout set to -1
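The arithmetic can be checked with a small standalone sketch. It reuses the two formulas quoted from the ZooKeeper source and adds a clamping step, on the assumption that the server bounds the client's requested timeout to the range [min, max], as the HBase documentation quoted earlier describes. `SessionTimeoutSketch` and its methods are hypothetical names, not ZooKeeper classes.

```java
// Sketch only: reproduces the timeout bounds quoted from the ZooKeeper source
// and the clamping described in the HBase documentation. Not ZooKeeper code.
final class SessionTimeoutSketch {
    /** Lower bound: tickTime * 2 unless minSessionTimeout is set (-1 means unset). */
    static int minSessionTimeout(int tickTime, int configuredMin) {
        return configuredMin == -1 ? tickTime * 2 : configuredMin;
    }

    /** Upper bound: tickTime * 20 unless maxSessionTimeout is set (-1 means unset). */
    static int maxSessionTimeout(int tickTime, int configuredMax) {
        return configuredMax == -1 ? tickTime * 20 : configuredMax;
    }

    /** Clamps the timeout requested by the client into [min, max]. */
    static int effectiveTimeout(int requested, int tickTime,
                                int configuredMin, int configuredMax) {
        int min = minSessionTimeout(tickTime, configuredMin);
        int max = maxSessionTimeout(tickTime, configuredMax);
        return Math.max(min, Math.min(requested, max));
    }

    public static void main(String[] args) {
        // Values from this article: HBase requests 90000 ms, tickTime is 2000,
        // and both session timeout limits are unset (-1).
        System.out.println(effectiveTimeout(90000, 2000, -1, -1)); // prints 40000
    }
}
```

With these inputs the requested 90000 ms is cut down to 40000 ms, which is shorter than the pause of roughly 51 seconds seen in the HRegionServer log.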

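At this point the answer is basically clear: the pause on the HRegionServer lasted more than 50 seconds, which exceeds the 40-second ZooKeeper session timeout, so the server was judged abnormal and forced offline.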

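==Solution==

The fix commonly recommended online is to tune the JVM parameters.

HBase configuration file: hbase-env.sh

Tuning options:

-XX:+UseParNewGC: use the parallel collector for the young generation.

-XX:+UseConcMarkSweepGC: use the CMS (Concurrent Mark Sweep) collector.

-XX:+UseCompressedClassPointers: compress class pointers.

-XX:+UseCompressedOops: compress ordinary object pointers.

-XX:+UseCMSCompactAtFullCollection: when the concurrent collector is used, compact the old generation on a full collection.

-XX:CMSInitiatingOccupancyFraction=80: start a CMS collection once 80% of the old generation is in use.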

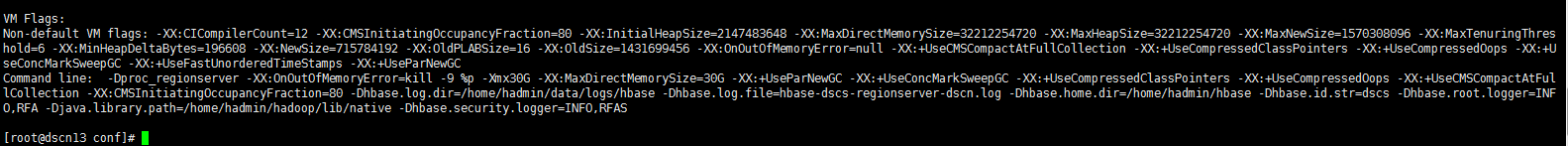

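The resulting configuration is shown in the image above.

After modifying the configuration file, restart the HBase cluster and use jinfo to check whether the HRegionServer's JVM settings have taken effect.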
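jinfo inspects another running process from the outside. As a complementary illustration, the sketch below shows how a Java process can list its own startup arguments through the standard `RuntimeMXBean` and report which of the expected flags are absent. It is only a sketch: `JvmFlagCheckSketch` and `missingFlags` are hypothetical names, it sees only the JVM it runs in (not the HRegionServer), and it only detects flags passed explicitly on the command line, not JVM defaults.

```java
import java.lang.management.ManagementFactory;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper: compares explicit JVM arguments against the flags
// listed in this article. It does not replace jinfo for a remote process.
final class JvmFlagCheckSketch {
    static final List<String> EXPECTED = Arrays.asList(
            "-XX:+UseParNewGC",
            "-XX:+UseConcMarkSweepGC",
            "-XX:+UseCompressedClassPointers",
            "-XX:+UseCompressedOops",
            "-XX:+UseCMSCompactAtFullCollection",
            "-XX:CMSInitiatingOccupancyFraction=80");

    /** Returns the expected flags that do not appear in the given argument list. */
    static List<String> missingFlags(List<String> jvmArgs, List<String> expected) {
        List<String> missing = new ArrayList<>();
        for (String flag : expected) {
            if (!jvmArgs.contains(flag)) {
                missing.add(flag);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        // Arguments the current JVM was started with (explicit flags only).
        List<String> jvmArgs = ManagementFactory.getRuntimeMXBean().getInputArguments();
        System.out.println("Missing flags: " + missingFlags(jvmArgs, EXPECTED));
    }
}
```

An empty list means every expected flag was passed explicitly; a flag that is on by default but not passed will still be reported as missing.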

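==Result==

After HBase had been running for several days, no HRegionServer went offline abnormally.

==Note==

When the following two JVM parameters are used, the JVM heap size must not exceed 32 GB: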

-XX:+UseCompressedClassPointers

-XX:+UseCompressedOops
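As a rough illustration of this limit, the sketch below compares the maximum heap of the JVM it runs in with 32 GB. `CompressedOopsHeapSketch` and `withinLimit` are hypothetical names, and treating exactly 32 GiB as already over the limit is a conservative assumption: the precise cutoff depends on the JVM version and its settings.

```java
// Hypothetical helper: checks a heap size against the 32 GB limit mentioned
// in this article. The strict "<" comparison is a conservative assumption.
final class CompressedOopsHeapSketch {
    static final long LIMIT_BYTES = 32L * 1024 * 1024 * 1024; // 32 GiB

    /** True when the given maximum heap size stays below the 32 GB limit. */
    static boolean withinLimit(long maxHeapBytes) {
        return maxHeapBytes < LIMIT_BYTES;
    }

    public static void main(String[] args) {
        // maxMemory() reports the maximum heap the current JVM will attempt to use.
        long maxHeap = Runtime.getRuntime().maxMemory();
        System.out.println("max heap bytes = " + maxHeap
                + ", within 32 GB limit: " + withinLimit(maxHeap));
    }
}
```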

If the heap exceeds 32 GB, the JVM reports an error.

--END--
