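Deploying a highly available k8s cluster with kubeasz

Before deploying a highly available k8s cluster, let's first look at the single-master and multi-master architectures, and at how each component works in the multi-master architecture.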

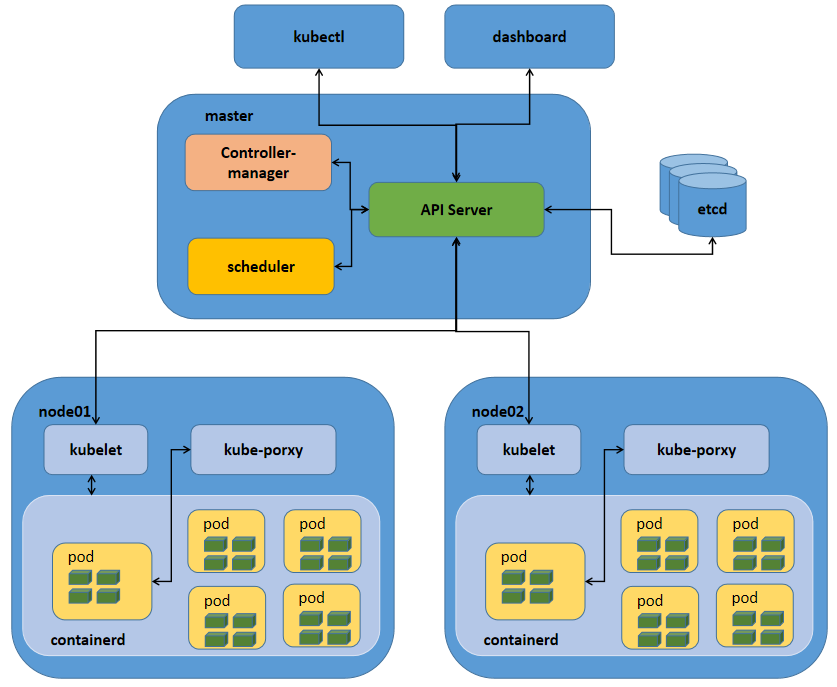

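k8s single-master architecture

Note: the single-master architecture is normally used only in test environments and should never be used in production. First, the master node is a single point of failure: once it goes down, the whole cluster becomes unavailable. Second, the apiserver on a single master is a performance bottleneck. As the figure above shows, every master component, the kubelet on every node, and clients such as kubectl and the dashboard all connect to the apiserver. The apiserver also reads and writes data in etcd and performs authentication and admission control on client requests. It is clearly very busy in this setup and can easily become the bottleneck of the whole k8s cluster, so the single-master architecture is not recommended for production.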

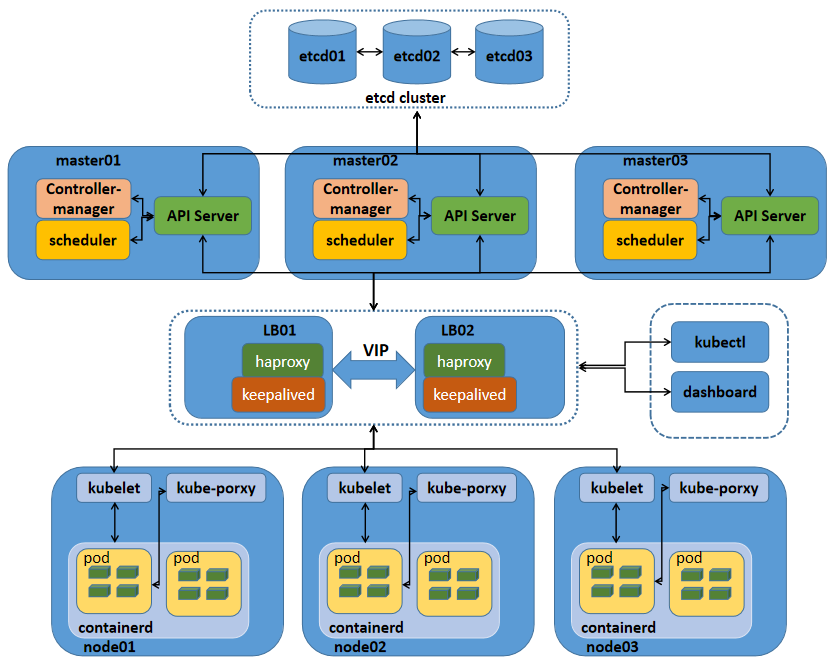

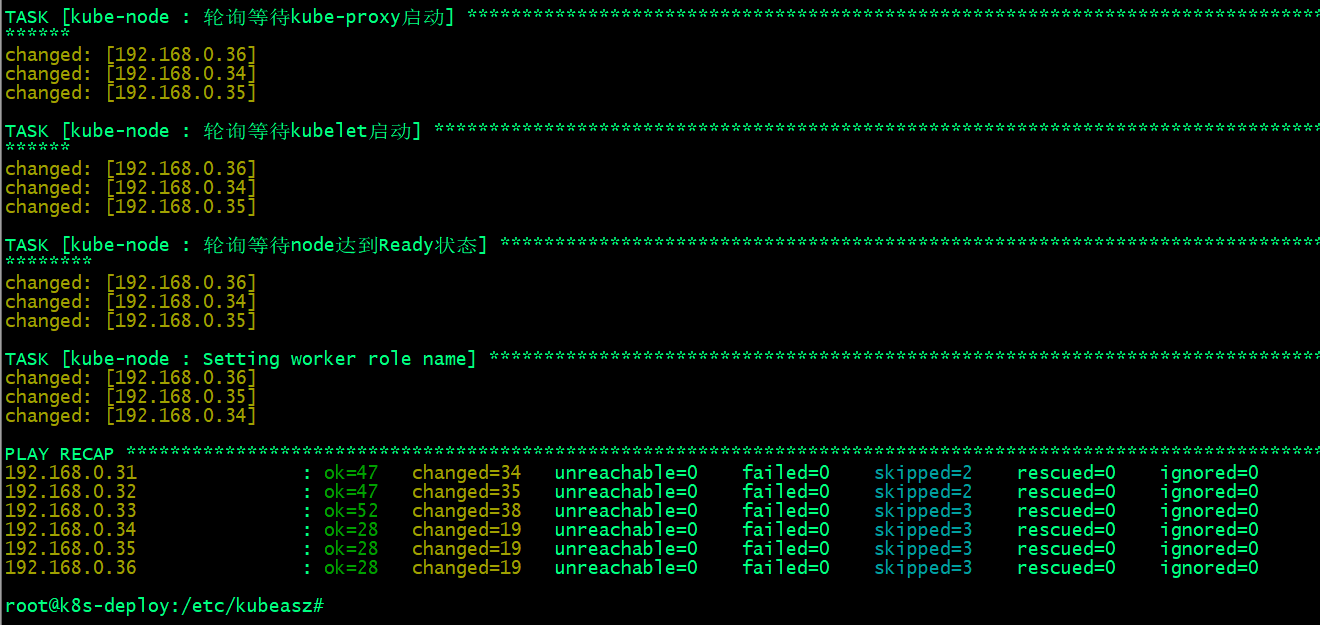

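k8s multi-master architecture

Note: k8s high availability is mainly about making the master components highly available. The apiserver is made highly available by running multiple instances. In a sense the apiserver ought to be a stateful service, but to keep it simple it stores all of its data in etcd, which turns it into a stateless service. To make it highly available we only need to run several instances behind a load balancer that reverse-proxies them; clients and the kubelet on each node then reach the apiserver through the load balancer.

controller-manager and scheduler are also made highly available by running multiple instances. Unlike the apiserver, however, the way they work means that exactly one controller-manager and one scheduler may be active in a cluster at any time, so multiple instances must run in active/standby mode: one active, the rest backups. They elect a leader through a distributed lock, which is a resource object stored through the apiserver (an Endpoints object in older releases, a Lease object in recent ones). The instance that acquires the lock becomes active and does the work for the cluster. It periodically renews the lock through the apiserver as a heartbeat, which keeps it active and prevents the other instances from taking over. The other instances see the renewed lock and stay in backup state. If the lock is not renewed within the allowed time, a new election is triggered and the process repeats.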
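The takeover rule for controller-manager and scheduler can be sketched in a few lines of shell. This is only an illustration under stated assumptions: the function name `lease_expired` and the timestamps are made up, and the real components implement this logic internally through the apiserver.

```shell
# Return success (0) when the lock has not been renewed within the lease
# duration, i.e. when a standby instance may try to acquire it.
#   $1 = current time, $2 = time of the last renewal, $3 = lease duration
# All values are in seconds; the function name and arguments are illustrative.
lease_expired() {
  [ "$1" -gt $(( $2 + $3 )) ]
}

# Hypothetical timeline: the active instance last renewed at t=100 and the
# lease duration is 15 seconds.
for now in 105 115 116; do
  if lease_expired "$now" 100 15; then
    echo "t=$now: lock expired, standby starts an election"
  else
    echo "t=$now: lock still valid, standby keeps waiting"
  fi
done

# On a running cluster that uses Lease objects, the current holder can
# usually be read with:
#   kubectl -n kube-system get lease kube-scheduler -o jsonpath='{.spec.holderIdentity}'
```

Because only the lock holder acts, a standby that starts up while the lock is still valid simply waits; nothing needs to be coordinated between the instances themselves.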

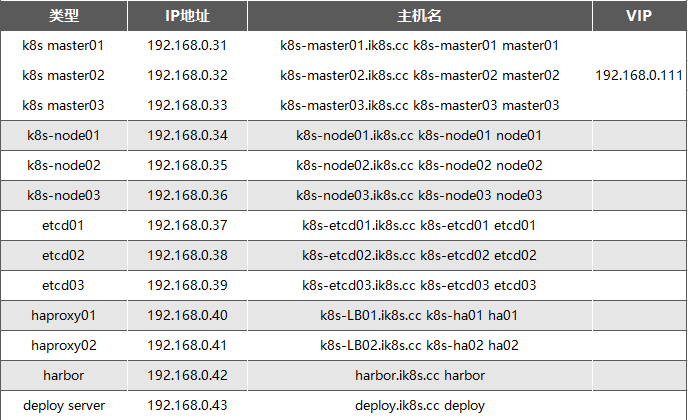

Server planning

Base environment setup

Regenerate the machine-id

root@k8s-deploy:~# cat /etc/machine-id

1d2aeda997bd417c838377e601fd8e10

root@k8s-deploy:~# rm -rf /etc/machine-id && dbus-uuidgen --ensure=/etc/machine-id && cat /etc/machine-id

8340419bb01397bf654c596f6443cabf

root@k8s-deploy:~#

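Note: if your VMs were cloned from a snapshot of the same virtual machine, they very likely share the same machine-id; the command above gives a VM a new one. Do not send the command to several VMs at once from SecureCRT: when it runs on multiple VMs simultaneously, the generated machine-ids come out identical.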
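A quick way to confirm that no two nodes share a machine-id is to collect the IDs and look for duplicates. The sketch below assumes the IDs have been gathered as `<host> <machine-id>` lines; the function name and the sample data are illustrative.

```shell
# Read "<host> <machine-id>" pairs on stdin and print every machine-id that
# appears more than once (function name is illustrative).
duplicate_machine_ids() {
  awk 'NF >= 2 { print $2 }' | sort | uniq -d
}

# Self-contained demonstration with made-up host names.
printf '%s\n' \
  'node01 1d2aeda997bd417c838377e601fd8e10' \
  'node02 8340419bb01397bf654c596f6443cabf' \
  'node03 1d2aeda997bd417c838377e601fd8e10' \
  | duplicate_machine_ids

# Real input could be collected over ssh, for example:
#   for h in k8s-master01 k8s-node03; do
#     echo "$h $(ssh "$h" cat /etc/machine-id)"
#   done | duplicate_machine_ids
```

An empty result means every node has a unique ID.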

Kernel parameter tuning

root@deploy:~# cat /etc/sysctl.conf

net.ipv4.ip_forward=1

vm.max_map_count=262144

kernel.pid_max=4194303

fs.file-max=1000000

net.ipv4.tcp_max_tw_buckets=6000

net.netfilter.nf_conntrack_max=2097152

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

vm.swappiness=0

System resource limits

root@deploy:~# tail -10 /etc/security/limits.conf

root soft core unlimited

root hard core unlimited

root soft nproc 1000000

root hard nproc 1000000

root soft nofile 1000000

root hard nofile 1000000

root soft memlock 32000

root hard memlock 32000

root soft msgqueue 8192000

root hard msgqueue 8192000

root@deploy:~#

Loading kernel modules

root@deploy:~# cat /etc/modules-load.d/modules.conf

# /etc/modules: kernel modules to load at boot time.

#

# This file contains the names of kernel modules that should be loaded

# at boot time, one per line. Lines beginning with "#" are ignored.

ip_vs

ip_vs_lc

ip_vs_lblc

ip_vs_lblcr

ip_vs_rr

ip_vs_wrr

ip_vs_sh

ip_vs_dh

ip_vs_fo

ip_vs_nq

ip_vs_sed

ip_vs_ftp

ip_vs_sh

ip_tables

ip_set

ipt_set

ipt_rpfilter

ipt_REJECT

ipip

xt_set

br_netfilter

nf_conntrack

overlay

root@deploy:~#
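The module list is only read at boot. To load the same modules immediately, the file can be reduced to a clean list of names first. The helper below is a sketch; `list_modules` is an illustrative name, and it also drops duplicate entries such as the repeated `ip_vs_sh`.

```shell
# Print the module names from a modules-load.d style file: drop comments and
# blank lines, and list each module only once (function name is illustrative).
list_modules() {
  sed -e 's/#.*//' -e 's/[[:space:]]*$//' -e '/^$/d' "$1" | awk '!seen[$0]++'
}

# Demonstration on a temporary file; ip_vs_sh is listed twice on purpose.
demo=$(mktemp)
printf '%s\n' '# comment line' 'ip_vs' 'ip_vs_sh' '' 'ip_vs_sh' 'br_netfilter' > "$demo"
list_modules "$demo"
rm -f "$demo"

# On a real node (as root) the modules could then be loaded without a reboot:
#   list_modules /etc/modules-load.d/modules.conf | xargs -n1 modprobe
```

Loading `br_netfilter` and `nf_conntrack` before running `sysctl -p` matters, because the `net.bridge.*` and `net.netfilter.*` keys only exist once those modules are loaded.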

Disable swap

root@deploy:~# free -mh

total used free shared buff/cache available

Mem: 3.8Gi 249Mi 3.3Gi 1.0Mi 244Mi 3.3Gi

Swap: 3.8Gi 0B 3.8Gi

root@deploy:~# swapoff -a

root@deploy:~# sed -i '/swap/s@^@#@' /etc/fstab

root@deploy:~# cat /etc/fstab

# /etc/fstab: static file system information.

#

# Use 'blkid' to print the universally unique identifier for a

# device; this may be used with UUID= as a more robust way to name devices

# that works even if disks are added and removed. See fstab(5).

#

# <file system> <mount point> <type> <options> <dump> <pass>

# / was on /dev/ubuntu-vg/ubuntu-lv during curtin installation

/dev/disk/by-id/dm-uuid-LVM-yecQxSAXrKdCNj1XNrQeaacvLAmKdL5SVadOXV0zHSlfkdpBEsaVZ9erw8Ac9gpm / ext4 defaults 0 1

# /boot was on /dev/sda2 during curtin installation

/dev/disk/by-uuid/80fe59b8-eb79-4ce9-a87d-134bc160e976 /boot ext4 defaults 0 1

#/swap.img none swap sw 0 0

root@deploy:~#
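The sed command used above prefixes every line that contains the word swap, including lines that are already commented out. A slightly stricter variant, shown here as a dry run that prints to stdout, only touches active swap entries. The function name is illustrative and the sample fstab lines are made up.

```shell
# Print an fstab-style file with active swap entries commented out. Lines
# that already start with '#' are left alone, so the result is the same no
# matter how often the filter is applied.
preview_swap_disabled() {
  sed -E '/^[[:space:]]*[^#[:space:]].*[[:space:]]swap[[:space:]]/ s/^/#/' "$1"
}

# Demonstration on a temporary file.
demo=$(mktemp)
printf '%s\n' \
  '/dev/sda2 /boot ext4 defaults 0 1' \
  '/swap.img none swap sw 0 0' > "$demo"
preview_swap_disabled "$demo"
rm -f "$demo"
```

Once the preview looks right, the same expression can be applied to /etc/fstab in place with `sed -i -E`.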

Note: it is recommended to perform the steps above on every node and then reboot all nodes.

1. Deploying a highly available load balancer with keepalived and haproxy

Install keepalived and haproxy

root@k8s-ha01:~# apt update && apt install keepalived haproxy -y

root@k8s-ha02:~# apt update && apt install keepalived haproxy -y

Create /etc/keepalived/keepalived.conf on ha01

root@k8s-ha01:~# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

acassen

}

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 192.168.200.1

smtp_connect_timeout 30

router_id LVS_DEVEL

}

vrrp_instance VI_1 {

state MASTER

interface ens160

garp_master_delay 10

smtp_alert

virtual_router_id 51

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.0.111 dev ens160 label ens160:0

}

}

root@k8s-ha01:~#

Copy the configuration file to ha02

root@k8s-ha01:~# scp /etc/keepalived/keepalived.conf ha02:/etc/keepalived/keepalived.conf

keepalived.conf 100% 545 896.6KB/s 00:00

root@k8s-ha01:~#

Edit /etc/keepalived/keepalived.conf on ha02

Note: on ha02 the main changes are the priority and the declared role state, as shown in the screenshot.
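The edit on ha02 can be sketched as a small sed filter. This is only an illustration: the function name `to_backup_config`, the `BACKUP` state and the priority `80` are assumptions, since the actual values are only visible in the screenshot. Any priority lower than ha01's 100 would have the same effect.

```shell
# Turn a copy of the MASTER configuration into a BACKUP one by changing the
# role and lowering the priority. BACKUP and 80 are assumed values.
# The result is printed to stdout; the input file is not modified.
to_backup_config() {
  sed -E \
    -e 's/^([[:space:]]*state)[[:space:]]+MASTER/\1 BACKUP/' \
    -e 's/^([[:space:]]*priority)[[:space:]]+[0-9]+/\1 80/' \
    "$1"
}

# Demonstration on a shortened, made-up excerpt of the configuration.
demo=$(mktemp)
printf '%s\n' 'vrrp_instance VI_1 {' '    state MASTER' '    priority 100' '}' > "$demo"
to_backup_config "$demo"
rm -f "$demo"
```

On ha02 the output could be reviewed first and then written back to /etc/keepalived/keepalived.conf.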

Start keepalived on ha02 and enable it at boot

root@k8s-ha02:~# systemctl start keepalived

root@k8s-ha02:~# systemctl enable keepalived

Synchronizing state of keepalived.service with SysV service script with /lib/systemd/systemd-sysv-install.

Executing: /lib/systemd/systemd-sysv-install enable keepalived

root@k8s-ha02:~#

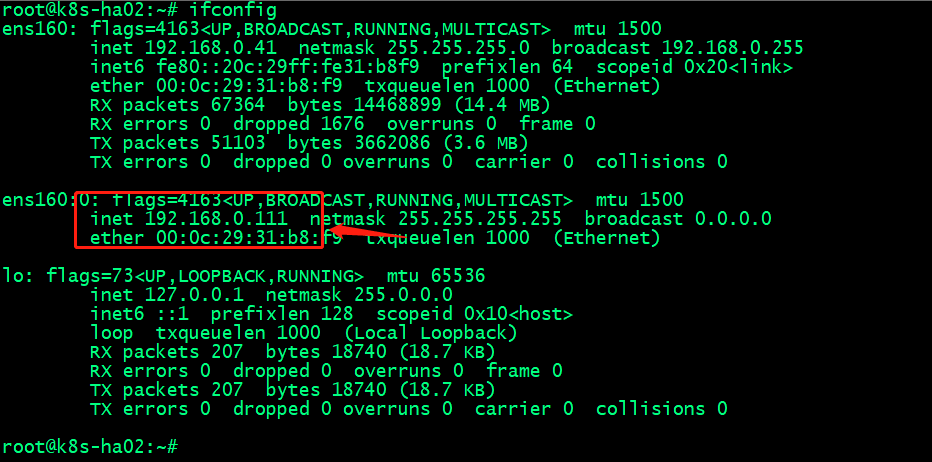

Verify: check whether the VIP is present on ha02.

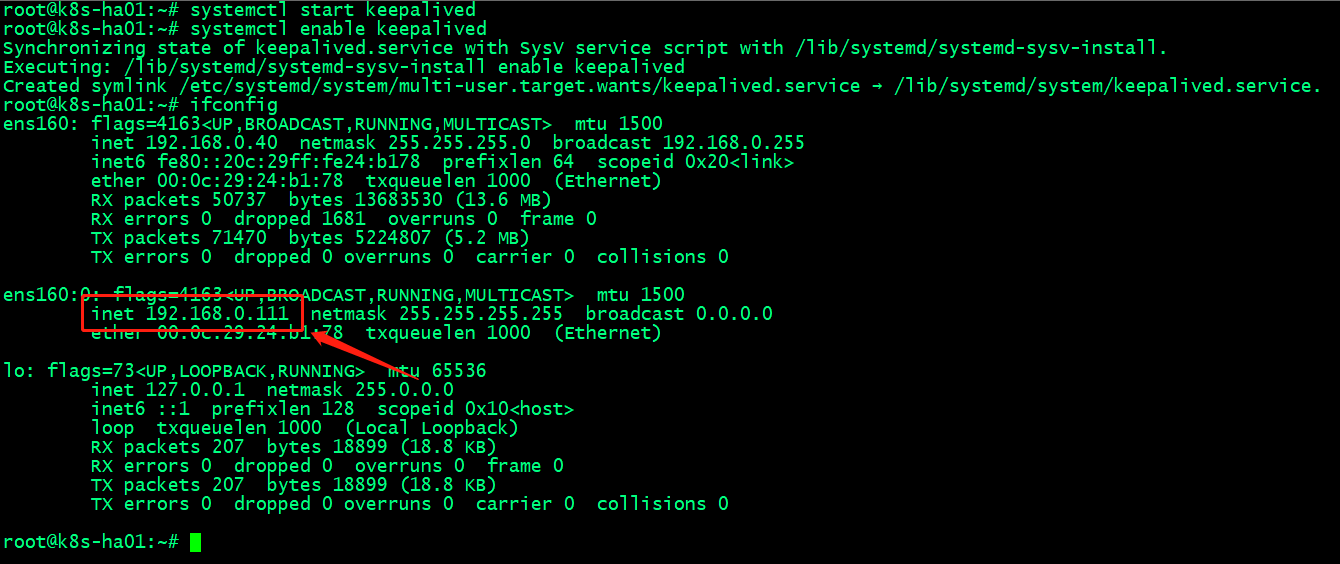

Start keepalived on ha01 and enable it at boot, then check whether the VIP moves to ha01.

Note: once keepalived is started on ha01, the VIP moves to ha01, because keepalived on ha01 has a higher priority than on ha02.

Test: stop keepalived on ha01 and check whether the VIP moves to ha02.

Note: after keepalived is stopped on ha01, the VIP automatically moves to ha02.

Verify: ping the VIP from another host in the cluster and check whether it responds.

root@k8s-node03:~# ping 192.168.0.111

PING 192.168.0.111 (192.168.0.111) 56(84) bytes of data.

64 bytes from 192.168.0.111: icmp_seq=1 ttl=64 time=2.04 ms

64 bytes from 192.168.0.111: icmp_seq=2 ttl=64 time=1.61 ms

^C

--- 192.168.0.111 ping statistics ---

2 packets transmitted, 2 received, 0% packet loss, time 1005ms

rtt min/avg/max/mdev = 1.611/1.827/2.043/0.216 ms

root@k8s-node03:~#

Note: the VIP answers ping from another cluster host, so it is usable. keepalived is now configured.
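To see from the command line which node currently holds the VIP, the output of `ip` can be filtered for the address. A minimal sketch, assuming the one-line-per-address format printed by `ip -4 -o addr show`; the function name and the sample line are illustrative.

```shell
# Succeed when the given IPv4 address appears in `ip -4 -o addr show` style
# output read from stdin. The trailing "/" keeps 192.168.0.11 from matching
# 192.168.0.111.
has_address() {
  grep -Fq "inet $1/"
}

# Demonstration with a made-up sample line.
sample='2: ens160    inet 192.168.0.111/32 scope global ens160:0'
if printf '%s\n' "$sample" | has_address 192.168.0.111; then
  echo "VIP is on this node"
else
  echo "VIP is elsewhere"
fi

# On ha01 or ha02 the real check would be:
#   ip -4 -o addr show dev ens160 | has_address 192.168.0.111 && echo "VIP is on this node"
```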

Configure haproxy

Edit /etc/haproxy/haproxy.cfg

root@k8s-ha01:~# cat /etc/haproxy/haproxy.cfg

global

log /dev/log local0

log /dev/log local1 notice

chroot /var/lib/haproxy

stats socket /run/haproxy/admin.sock mode 660 level admin expose-fd listeners

stats timeout 30s

user haproxy

group haproxy

daemon

# Default SSL material locations

ca-base /etc/ssl/certs

crt-base /etc/ssl/private

# See: https://ssl-config.mozilla.org/#server=haproxy&server-version=2.0.3&config=intermediate

ssl-default-bind-ciphers ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256:ECDHE-ECDSA-AES256-GCM-SHA384:ECDHE-RSA-AES256-GCM-SHA384:ECDHE-ECDSA-CHACHA20-POLY1305:ECDHE-RSA-CHACHA20-POLY1305:DHE-RSA-AES128-GCM-SHA256:DHE-RSA-AES256-GCM-SHA384

ssl-default-bind-ciphersuites TLS_AES_128_GCM_SHA256:TLS_AES_256_GCM_SHA384:TLS_CHACHA20_POLY1305_SHA256

ssl-default-bind-options ssl-min-ver TLSv1.2 no-tls-tickets

defaults

log global

mode http

option httplog

option dontlognull

timeout connect 5000

timeout client 50000

timeout server 50000

errorfile 400 /etc/haproxy/errors/400.http

errorfile 403 /etc/haproxy/errors/403.http

errorfile 408 /etc/haproxy/errors/408.http

errorfile 500 /etc/haproxy/errors/500.http

errorfile 502 /etc/haproxy/errors/502.http

errorfile 503 /etc/haproxy/errors/503.http

errorfile 504 /etc/haproxy/errors/504.http

listen k8s_apiserver_6443

bind 192.168.0.111:6443

mode tcp

#balance leastconn

server k8s-master01 192.168.0.31:6443 check inter 2000 fall 3 rise 5

server k8s-master02 192.168.0.32:6443 check inter 2000 fall 3 rise 5

server k8s-master03 192.168.0.33:6443 check inter 2000 fall 3 rise 5

root@k8s-ha01:~#

Copy the configuration above to ha02

root@k8s-ha01:~# scp /etc/haproxy/haproxy.cfg ha02:/etc/haproxy/haproxy.cfg

haproxy.cfg 100% 1591 1.7MB/s 00:00

root@k8s-ha01:~#

Start haproxy on ha01 and enable it at boot

root@k8s-ha01:~# systemctl start haproxy

Job for haproxy.service failed because the control process exited with error code.

See "systemctl status haproxy.service" and "journalctl -xeu haproxy.service" for details.

root@k8s-ha01:~# systemctl status haproxy

× haproxy.service - HAProxy Load Balancer

Loaded: loaded (/lib/systemd/system/haproxy.service; disabled; vendor preset: enabled)

Active: failed (Result: exit-code) since Sat 2023-04-22 12:13:34 UTC; 6s ago

Docs: man:haproxy(1)

file:/usr/share/doc/haproxy/configuration.txt.gz

Process: 1281 ExecStartPre=/usr/sbin/haproxy -Ws -f $CONFIG -c -q $EXTRAOPTS (code=exited, status=0/SUCCESS)

Process: 1283 ExecStart=/usr/sbin/haproxy -Ws -f $CONFIG -p $PIDFILE $EXTRAOPTS (code=exited, status=1/FAILURE)

Main PID: 1283 (code=exited, status=1/FAILURE)

CPU: 141ms

Apr 22 12:13:34 k8s-ha01.ik8s.cc systemd[1]: haproxy.service: Scheduled restart job, restart counter is at 5.

Apr 22 12:13:34 k8s-ha01.ik8s.cc systemd[1]: Stopped HAProxy Load Balancer.

Apr 22 12:13:34 k8s-ha01.ik8s.cc systemd[1]: haproxy.service: Start request repeated too quickly.

Apr 22 12:13:34 k8s-ha01.ik8s.cc systemd[1]: haproxy.service: Failed with result 'exit-code'.

Apr 22 12:13:34 k8s-ha01.ik8s.cc systemd[1]: Failed to start HAProxy Load Balancer.

root@k8s-ha01:~#

Note: the start fails because, by default, the kernel does not allow a process to bind to an IP address that is not configured on the local host. haproxy is configured to bind the VIP at 192.168.0.111:6443, which fails on a node that does not currently hold the VIP. We need to change a kernel parameter to allow binding to a non-local address.

Change the kernel parameter

root@k8s-ha01:~# sysctl -a |grep bind

net.ipv4.ip_autobind_reuse = 0

net.ipv4.ip_nonlocal_bind = 0

net.ipv6.bindv6only = 0

net.ipv6.ip_nonlocal_bind = 0

root@k8s-ha01:~# echo "net.ipv4.ip_nonlocal_bind = 1">> /etc/sysctl.conf

root@k8s-ha01:~# cat /etc/sysctl.conf

net.ipv4.ip_forward=1

vm.max_map_count=262144

kernel.pid_max=4194303

fs.file-max=1000000

net.ipv4.tcp_max_tw_buckets=6000

net.netfilter.nf_conntrack_max=2097152

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

vm.swappiness=0

net.ipv4.ip_nonlocal_bind = 1

root@k8s-ha01:~# sysctl -p

net.ipv4.ip_forward = 1

vm.max_map_count = 262144

kernel.pid_max = 4194303

fs.file-max = 1000000

net.ipv4.tcp_max_tw_buckets = 6000

net.netfilter.nf_conntrack_max = 2097152

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

vm.swappiness = 0

net.ipv4.ip_nonlocal_bind = 1

root@k8s-ha01:~#
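Appending with `echo >>` adds a second copy of the line each time the step is repeated. Since the same change is needed on ha02 as well, a helper that sets a key exactly once can be handy. The sketch below is an assumption-laden illustration: `ensure_sysctl` is a made-up name and it only handles simple `key = value` lines.

```shell
# Set "key = value" in a sysctl-style file exactly once: replace the line if
# the key is already present, append it otherwise (names are illustrative).
ensure_sysctl() {
  file=$1; key=$2; value=$3
  # Escape the dots in the key so they match literally in the regex.
  pattern="^[[:space:]]*$(printf '%s' "$key" | sed 's/\./\\./g')[[:space:]]*="
  if grep -Eq "$pattern" "$file"; then
    _tmp=$(mktemp)
    sed -E "s|$pattern.*|$key = $value|" "$file" > "$_tmp" && cat "$_tmp" > "$file"
    rm -f "$_tmp"
  else
    printf '%s = %s\n' "$key" "$value" >> "$file"
  fi
}

# Demonstration on a temporary file; the second call changes nothing.
conf=$(mktemp)
printf 'vm.swappiness=0\n' > "$conf"
ensure_sysctl "$conf" net.ipv4.ip_nonlocal_bind 1
ensure_sysctl "$conf" net.ipv4.ip_nonlocal_bind 1
cat "$conf"
rm -f "$conf"
```

On a real node the call would be `ensure_sysctl /etc/sysctl.conf net.ipv4.ip_nonlocal_bind 1` followed by `sysctl -p`.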

Verify: restart haproxy and check whether it now listens on port 6443.

root@k8s-ha01:~# systemctl restart haproxy

root@k8s-ha01:~# systemctl status haproxy

● haproxy.service - HAProxy Load Balancer

Loaded: loaded (/lib/systemd/system/haproxy.service; enabled; vendor preset: enabled)

Active: active (running) since Sat 2023-04-22 12:19:50 UTC; 7s ago

Docs: man:haproxy(1)

file:/usr/share/doc/haproxy/configuration.txt.gz

Process: 1441 ExecStartPre=/usr/sbin/haproxy -Ws -f $CONFIG -c -q $EXTRAOPTS (code=exited, status=0/SUCCESS)

Main PID: 1443 (haproxy)

Tasks: 5 (limit: 4571)

Memory: 70.1M

CPU: 309ms

CGroup: /system.slice/haproxy.service

├─1443 /usr/sbin/haproxy -Ws -f /etc/haproxy/haproxy.cfg -p /run/haproxy.pid -S /run/haproxy-master.sock

└─1445 /usr/sbin/haproxy -Ws -f /etc/haproxy/haproxy.cfg -p /run/haproxy.pid -S /run/haproxy-master.sock

Apr 22 12:19:50 k8s-ha01.ik8s.cc haproxy[1443]: [WARNING] (1443) : parsing [/etc/haproxy/haproxy.cfg:23] : 'option httplog' not usable with proxy 'k8s_apiserver_6443' (needs 'mode http'). Falling back to 'option tcplog'.

Apr 22 12:19:50 k8s-ha01.ik8s.cc haproxy[1443]: [NOTICE] (1443) : New worker #1 (1445) forked

Apr 22 12:19:50 k8s-ha01.ik8s.cc systemd[1]: Started HAProxy Load Balancer.

Apr 22 12:19:50 k8s-ha01.ik8s.cc haproxy[1445]: [WARNING] (1445) : Server k8s_apiserver_6443/k8s-master01 is DOWN, reason: Layer4 connection problem, info: "Connection refused", check duration: 0ms. 2 active and 0 backup servers left. 0 sessions active, 0 requeued, 0 remaining in queue.

Apr 22 12:19:50 k8s-ha01.ik8s.cc haproxy[1445]: [NOTICE] (1445) : haproxy version is 2.4.18-0ubuntu1.3

Apr 22 12:19:50 k8s-ha01.ik8s.cc haproxy[1445]: [NOTICE] (1445) : path to executable is /usr/sbin/haproxy

Apr 22 12:19:50 k8s-ha01.ik8s.cc haproxy[1445]: [ALERT] (1445) : sendmsg()/writev() failed in logger #1: No such file or directory (errno=2)

Apr 22 12:19:50 k8s-ha01.ik8s.cc haproxy[1445]: [WARNING] (1445) : Server k8s_apiserver_6443/k8s-master02 is DOWN, reason: Layer4 connection problem, info: "Connection refused", check duration: 0ms. 1 active and 0 backup servers left. 0 sessions active, 0 requeued, 0 remaining in queue.

Apr 22 12:19:51 k8s-ha01.ik8s.cc haproxy[1445]: [WARNING] (1445) : Server k8s_apiserver_6443/k8s-master03 is DOWN, reason: Layer4 connection problem, info: "Connection refused", check duration: 0ms. 0 active and 0 backup servers left. 0 sessions active, 0 requeued, 0 remaining in queue.

Apr 22 12:19:51 k8s-ha01.ik8s.cc haproxy[1445]: [ALERT] (1445) : proxy 'k8s_apiserver_6443' has no server available!

root@k8s-ha01:~# ss -tnl

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 4096 192.168.0.111:6443 0.0.0.0:*

LISTEN 0 4096 127.0.0.53%lo:53 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

root@k8s-ha01:~#

Note: after changing the kernel parameter and restarting haproxy, port 6443 on the VIP is now listening locally. ha02 needs the same kernel parameter change; after that, start haproxy there and enable it at boot.

Restart haproxy on ha02 and enable it at boot

root@k8s-ha02:~# systemctl restart haproxy

root@k8s-ha02:~# systemctl enable haproxy

Synchronizing state of haproxy.service with SysV service script with /lib/systemd/systemd-sysv-install.

Executing: /lib/systemd/systemd-sysv-install enable haproxy

root@k8s-ha02:~# ss -tnl

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 4096 127.0.0.53%lo:53 0.0.0.0:*

LISTEN 0 4096 192.168.0.111:6443 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

root@k8s-ha02:~#

Note: whichever node holds the VIP, requests now follow the VIP when it moves. This completes the deployment of the highly available load balancer based on keepalived and haproxy.

2. Deploying an HTTPS Harbor service for image distribution

Configure the docker-ce repository on the Harbor server

root@harbor:~# apt-get update && apt-get -y install apt-transport-https ca-certificates curl software-properties-common && curl -fsSL https://mirrors.aliyun.com/docker-ce/linux/ubuntu/gpg | sudo apt-key add - && add-apt-repository "deb [arch=amd64] https://mirrors.aliyun.com/docker-ce/linux/ubuntu $(lsb_release -cs) stable" && apt-get -y update

Install docker and docker-compose on the Harbor server

root@harbor:~# apt-cache madison docker-ce

docker-ce | 5:23.0.3-1~ubuntu.22.04~jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:23.0.2-1~ubuntu.22.04~jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:23.0.1-1~ubuntu.22.04~jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:23.0.0-1~ubuntu.22.04~jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.24~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.23~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.22~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.21~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.20~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.19~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.18~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.17~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.16~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.15~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.14~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.13~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

root@harbor:~# apt install -y docker-ce=5:20.10.19~3-0~ubuntu-jammy

Verify the docker version

root@harbor:~# docker version

Client: Docker Engine - Community

Version: 23.0.3

API version: 1.41 (downgraded from 1.42)

Go version: go1.19.7

Git commit: 3e7cbfd

Built: Tue Apr 4 22:05:48 2023

OS/Arch: linux/amd64

Context: default

Server: Docker Engine - Community

Engine:

Version: 20.10.19

API version: 1.41 (minimum version 1.12)

Go version: go1.18.7

Git commit: c964641

Built: Thu Oct 13 16:44:47 2022

OS/Arch: linux/amd64

Experimental: false

containerd:

Version: 1.6.20

GitCommit: 2806fc1057397dbaeefbea0e4e17bddfbd388f38

runc:

Version: 1.1.5

GitCommit: v1.1.5-0-gf19387a

docker-init:

Version: 0.19.0

GitCommit: de40ad0

root@harbor:~#

Download the docker-compose binary

root@harbor:~# wget https://github.com/docker/compose/releases/download/v2.17.2/docker-compose-linux-x86_64 -O /usr/local/bin/docker-compose

Make the docker-compose binary executable and verify its version

root@harbor:~# cd /usr/local/bin/

root@harbor:/usr/local/bin# ll

total 53188

drwxr-xr-x 2 root root 4096 Apr 22 07:05 ./

drwxr-xr-x 10 root root 4096 Feb 17 17:19 ../

-rw-r--r-- 1 root root 54453847 Apr 22 07:03 docker-compose

root@harbor:/usr/local/bin# chmod a+x docker-compose

root@harbor:/usr/local/bin# cd

root@harbor:~# docker-compose -v

Docker Compose version v2.17.2

root@harbor:~#

Download the Harbor offline installer

root@harbor:~# wget https://github.com/goharbor/harbor/releases/download/v2.8.0/harbor-offline-installer-v2.8.0.tgz

Create a directory for the Harbor offline installer and extract the package into it

root@harbor:~# ls

harbor-offline-installer-v2.8.0.tgz

root@harbor:~# mkdir /app

root@harbor:~# tar xf harbor-offline-installer-v2.8.0.tgz -C /app/

root@harbor:~# cd /app/

root@harbor:/app# ls

harbor

root@harbor:/app#

Create the certs directory for the certificates

root@harbor:/app# ls

harbor

root@harbor:/app# mkdir certs

root@harbor:/app# ls

certs harbor

root@harbor:/app#

Upload the certificate

root@harbor:/app/certs# ls

9529909_harbor.ik8s.cc_nginx.zip

root@harbor:/app/certs# unzip 9529909_harbor.ik8s.cc_nginx.zip

Archive: 9529909_harbor.ik8s.cc_nginx.zip

Aliyun Certificate Download

inflating: 9529909_harbor.ik8s.cc.pem

inflating: 9529909_harbor.ik8s.cc.key

root@harbor:/app/certs# ls

9529909_harbor.ik8s.cc.key 9529909_harbor.ik8s.cc.pem 9529909_harbor.ik8s.cc_nginx.zip

root@harbor:/app/certs#

Copy the Harbor configuration template to harbor.yml

root@harbor:/app/certs# cd ..

root@harbor:/app# ls

certs harbor

root@harbor:/app# cd harbor/

root@harbor:/app/harbor# ls

LICENSE common.sh harbor.v2.8.0.tar.gz harbor.yml.tmpl install.sh prepare

root@harbor:/app/harbor# cp harbor.yml.tmpl harbor.yml

root@harbor:/app/harbor#

Edit the harbor.yml file

root@harbor:/app/harbor# grep -v "#" harbor.yml | grep -v "^#"

hostname: harbor.ik8s.cc

http:

  port: 80

https:

  port: 443

  certificate: /app/certs/9529909_harbor.ik8s.cc.pem

  private_key: /app/certs/9529909_harbor.ik8s.cc.key

harbor_admin_password: admin123.com

database:

  password: root123

  max_idle_conns: 100

  max_open_conns: 900

  conn_max_lifetime: 5m

  conn_max_idle_time: 0

data_volume: /data

trivy:

  ignore_unfixed: false

  skip_update: false

  offline_scan: false

  security_check: vuln

  insecure: false

jobservice:

  max_job_workers: 10

notification:

  webhook_job_max_retry: 3

log:

  level: info

  local:

    rotate_count: 50

    rotate_size: 200M

    location: /var/log/harbor

_version: 2.8.0

proxy:

  http_proxy:

  https_proxy:

  no_proxy:

  components:

    - core

    - jobservice

    - trivy

upload_purging:

  enabled: true

  age: 168h

  interval: 24h

  dryrun: false

cache:

  enabled: false

  expire_hours: 24

root@harbor:/app/harbor#

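Note: the configuration above changes hostname, which sets the site's domain name and must match the domain the certificate was issued for. It also sets the certificate and private key paths and Harbor's default login password (harbor_admin_password).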
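Before running the installer, it can help to confirm that the certificate and key paths written into harbor.yml actually exist. The helper below is a rough sketch: both function names are illustrative, and the lookup is a plain text match on `key: value` lines rather than a real YAML parser.

```shell
# Print the value of a simple "key: value" line from a YAML file. This is a
# plain text match, not a YAML parser, so it only suits flat scalar entries.
# Commented-out lines are ignored because their first field is "#".
yaml_value() {
  awk -v k="$2:" '$1 == k { print $2; exit }' "$1"
}

# Check that the certificate and private key named in the config exist.
# Returns non-zero as soon as one of them is missing.
check_harbor_tls_files() {
  for key in certificate private_key; do
    path=$(yaml_value "$1" "$key")
    if [ -n "$path" ] && [ -f "$path" ]; then
      echo "ok: $key -> $path"
    else
      echo "missing: $key -> ${path:-<not set>}"
      return 1
    fi
  done
}

# Intended use on the Harbor server:
#   check_harbor_tls_files /app/harbor/harbor.yml
```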

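Create the Harbor data directory at the path given in the configuration file

root@harbor:/app/harbor# grep "data_volume" harbor.yml

data_volume: /data

root@harbor:/app/harbor# mkdir /data

root@harbor:/app/harbor#

Note: to avoid data loss, it is recommended to back this directory with a mounted network file system such as NFS.

Run the Harbor installation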

root@harbor:/app/harbor# ./install.sh --with-notary --with-trivy

[Step 0]: checking if docker is installed ...

Note: docker version: 23.0.3

[Step 1]: checking docker-compose is installed ...

Note: Docker Compose version v2.17.2

[Step 2]: loading Harbor images ...

17d981d1fd47: Loading layer [==================================================>] 37.78MB/37.78MB

31886c65da47: Loading layer [==================================================>] 99.07MB/99.07MB

22b8b3f55675: Loading layer [==================================================>] 3.584kB/3.584kB

e0d07daed386: Loading layer [==================================================>] 3.072kB/3.072kB

192e4941b719: Loading layer [==================================================>] 2.56kB/2.56kB

ea466c659008: Loading layer [==================================================>] 3.072kB/3.072kB

0a9da2a9c15e: Loading layer [==================================================>] 3.584kB/3.584kB

b8d43ab61309: Loading layer [==================================================>] 20.48kB/20.48kB

Loaded image: goharbor/harbor-log:v2.8.0

91ff9ec8c599: Loading layer [==================================================>] 5.762MB/5.762MB

7b3b74d0bc46: Loading layer [==================================================>] 9.137MB/9.137MB

415c34d8de89: Loading layer [==================================================>] 14.47MB/14.47MB

d5f96f4cee68: Loading layer [==================================================>] 29.29MB/29.29MB

2e13bf4c5a45: Loading layer [==================================================>] 22.02kB/22.02kB

3065ef318899: Loading layer [==================================================>] 14.47MB/14.47MB

Loaded image: goharbor/notary-signer-photon:v2.8.0

10b1cdff4db0: Loading layer [==================================================>] 5.767MB/5.767MB

8ca511ff01d7: Loading layer [==================================================>] 4.096kB/4.096kB

c561ee469bc5: Loading layer [==================================================>] 17.57MB/17.57MB

88b0cf5853d2: Loading layer [==================================================>] 3.072kB/3.072kB

f68cc37aeda4: Loading layer [==================================================>] 31.01MB/31.01MB

f96735fc99d1: Loading layer [==================================================>] 49.37MB/49.37MB

Loaded image: goharbor/harbor-registryctl:v2.8.0

117dfa0ad222: Loading layer [==================================================>] 8.91MB/8.91MB

fad9e0a04e3e: Loading layer [==================================================>] 25.92MB/25.92MB

b5e945e047c5: Loading layer [==================================================>] 4.608kB/4.608kB

5b87a66594e3: Loading layer [==================================================>] 26.71MB/26.71MB

Loaded image: goharbor/harbor-exporter:v2.8.0

844a11bc472a: Loading layer [==================================================>] 91.99MB/91.99MB

329ec42b7278: Loading layer [==================================================>] 3.072kB/3.072kB

479889c4a17d: Loading layer [==================================================>] 59.9kB/59.9kB

9d7cf0ba93a4: Loading layer [==================================================>] 61.95kB/61.95kB

Loaded image: goharbor/redis-photon:v2.8.0

d78edf9b37a0: Loading layer [==================================================>] 5.762MB/5.762MB

6b1886c87164: Loading layer [==================================================>] 9.137MB/9.137MB

8e04b3ba3694: Loading layer [==================================================>] 15.88MB/15.88MB

1859c0f529e2: Loading layer [==================================================>] 29.29MB/29.29MB

5a4832cc0365: Loading layer [==================================================>] 22.02kB/22.02kB

32e5f34311d8: Loading layer [==================================================>] 15.88MB/15.88MB

Loaded image: goharbor/notary-server-photon:v2.8.0

157c80352244: Loading layer [==================================================>] 44.11MB/44.11MB

ae9e333084d9: Loading layer [==================================================>] 65.86MB/65.86MB

9172f69ba869: Loading layer [==================================================>] 24.09MB/24.09MB

78d4f0b7a9dd: Loading layer [==================================================>] 65.54kB/65.54kB

66e1120f8426: Loading layer [==================================================>] 2.56kB/2.56kB

d2e29dcfd3b2: Loading layer [==================================================>] 1.536kB/1.536kB

e2862979f5b1: Loading layer [==================================================>] 12.29kB/12.29kB

f060948ced19: Loading layer [==================================================>] 2.621MB/2.621MB

4f1f83dea031: Loading layer [==================================================>] 416.8kB/416.8kB

Loaded image: goharbor/prepare:v2.8.0

b757f0470527: Loading layer [==================================================>] 8.909MB/8.909MB

45f3777da07b: Loading layer [==================================================>] 3.584kB/3.584kB

1ec69429b88c: Loading layer [==================================================>] 2.56kB/2.56kB

54ae653f9340: Loading layer [==================================================>] 47.5MB/47.5MB

2b5374d50351: Loading layer [==================================================>] 48.29MB/48.29MB

Loaded image: goharbor/harbor-jobservice:v2.8.0

138a75d30165: Loading layer [==================================================>] 6.295MB/6.295MB

37678a05d20c: Loading layer [==================================================>] 4.096kB/4.096kB

62ab39a1f583: Loading layer [==================================================>] 3.072kB/3.072kB

dc2c8ea056cc: Loading layer [==================================================>] 191.2MB/191.2MB

eef0034d3cf6: Loading layer [==================================================>] 14.03MB/14.03MB

8ef49e77e2da: Loading layer [==================================================>] 206MB/206MB

Loaded image: goharbor/trivy-adapter-photon:v2.8.0

7b11ded34da7: Loading layer [==================================================>] 5.767MB/5.767MB

db79ebbe62ed: Loading layer [==================================================>] 4.096kB/4.096kB

7008de4e1efa: Loading layer [==================================================>] 3.072kB/3.072kB

78e690f643e2: Loading layer [==================================================>] 17.57MB/17.57MB

c59eb6af140b: Loading layer [==================================================>] 18.36MB/18.36MB

Loaded image: goharbor/registry-photon:v2.8.0

697d673e9002: Loading layer [==================================================>] 91.15MB/91.15MB

73dc6648b3fc: Loading layer [==================================================>] 6.097MB/6.097MB

7c040ff2580b: Loading layer [==================================================>] 1.233MB/1.233MB

Loaded image: goharbor/harbor-portal:v2.8.0

ed7088f4a42d: Loading layer [==================================================>] 8.91MB/8.91MB

5fb2a39a2645: Loading layer [==================================================>] 3.584kB/3.584kB

eed4c9aebbc2: Loading layer [==================================================>] 2.56kB/2.56kB

5a03baf4cc2c: Loading layer [==================================================>] 59.24MB/59.24MB

23c80bc54f04: Loading layer [==================================================>] 5.632kB/5.632kB

f7e397f31506: Loading layer [==================================================>] 115.7kB/115.7kB

78504c142fac: Loading layer [==================================================>] 44.03kB/44.03kB

ec904722ce15: Loading layer [==================================================>] 60.19MB/60.19MB

4746711ff0cc: Loading layer [==================================================>] 2.56kB/2.56kB

Loaded image: goharbor/harbor-core:v2.8.0

6460b77e3fdb: Loading layer [==================================================>] 123.4MB/123.4MB

19620cea1000: Loading layer [==================================================>] 22.57MB/22.57MB

d9674d59d34c: Loading layer [==================================================>] 5.12kB/5.12kB

f3b8b5f2a0b2: Loading layer [==================================================>] 6.144kB/6.144kB

948463f03a69: Loading layer [==================================================>] 3.072kB/3.072kB

674b9b213d01: Loading layer [==================================================>] 2.048kB/2.048kB

77dff9e1e728: Loading layer [==================================================>] 2.56kB/2.56kB

7c74827c6695: Loading layer [==================================================>] 2.56kB/2.56kB

254ef4a11cdc: Loading layer [==================================================>] 2.56kB/2.56kB

23cb6acbaaad: Loading layer [==================================================>] 9.728kB/9.728kB

Loaded image: goharbor/harbor-db:v2.8.0

6ca8f9c9b7ce: Loading layer [==================================================>] 91.15MB/91.15MB

Loaded image: goharbor/nginx-photon:v2.8.0

[Step 3]: preparing environment ...

[Step 4]: preparing harbor configs ...

prepare base dir is set to /app/harbor

Generated configuration file: /config/portal/nginx.conf

Generated configuration file: /config/log/logrotate.conf

Generated configuration file: /config/log/rsyslog_docker.conf

Generated configuration file: /config/nginx/nginx.conf

Generated configuration file: /config/core/env

Generated configuration file: /config/core/app.conf

Generated configuration file: /config/registry/config.yml

Generated configuration file: /config/registryctl/env

Generated configuration file: /config/registryctl/config.yml

Generated configuration file: /config/db/env

Generated configuration file: /config/jobservice/env

Generated configuration file: /config/jobservice/config.yml

Generated and saved secret to file: /data/secret/keys/secretkey

Successfully called func: create_root_cert

Successfully called func: create_root_cert

Successfully called func: create_cert

Copying certs for notary signer

Copying nginx configuration file for notary

Generated configuration file: /config/nginx/conf.d/notary.upstream.conf

Generated configuration file: /config/nginx/conf.d/notary.server.conf

Generated configuration file: /config/notary/server-config.postgres.json

Generated configuration file: /config/notary/server_env

Generated and saved secret to file: /data/secret/keys/defaultalias

Generated configuration file: /config/notary/signer_env

Generated configuration file: /config/notary/signer-config.postgres.json

Generated configuration file: /config/trivy-adapter/env

Generated configuration file: /compose_location/docker-compose.yml

Clean up the input dir

Note: stopping existing Harbor instance ...

[Step 5]: starting Harbor ...

➜

Notary will be deprecated as of Harbor v2.6.0 and start to be removed in v2.8.0 or later.

You can use cosign for signature instead since Harbor v2.5.0.

Please see discussion here for more details. https://github.com/goharbor/harbor/discussions/16612

[+] Running 15/15

✔ Network harbor_harbor Created 0.2s

✔ Network harbor_notary-sig Created 0.2s

✔ Network harbor_harbor-notary Created 0.1s

✔ Container harbor-log Started 5.3s

✔ Container harbor-db Started 7.2s

✔ Container redis Started 6.4s

✔ Container registry Started 6.3s

✔ Container harbor-portal Started 6.9s

✔ Container registryctl Started 6.4s

✔ Container trivy-adapter Started 6.0s

✔ Container harbor-core Started 7.4s

✔ Container notary-signer Started 7.1s

✔ Container nginx Started 8.9s

✔ Container harbor-jobservice Started 8.8s

✔ Container notary-server Started 7.2s

✔ ----Harbor has been installed and started successfully.----

root@harbor:/app/harbor#

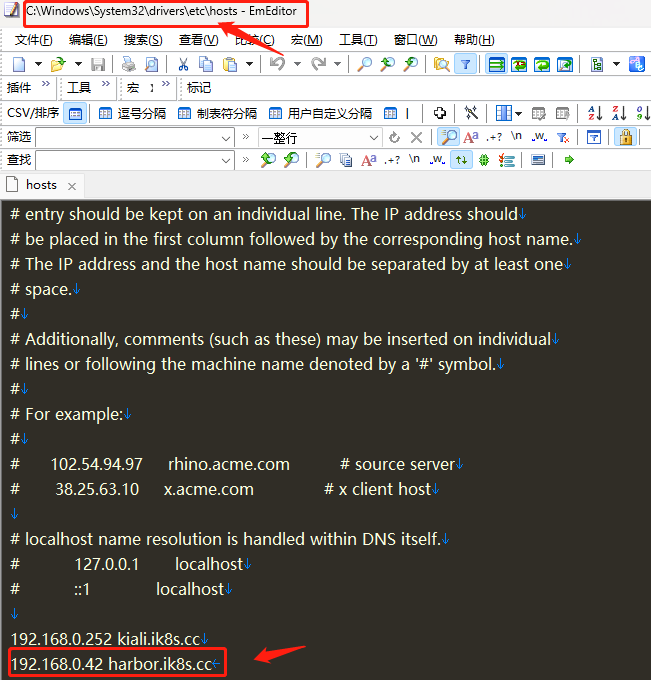

DNS resolution: point the domain name used when the certificate was issued at the Harbor server.

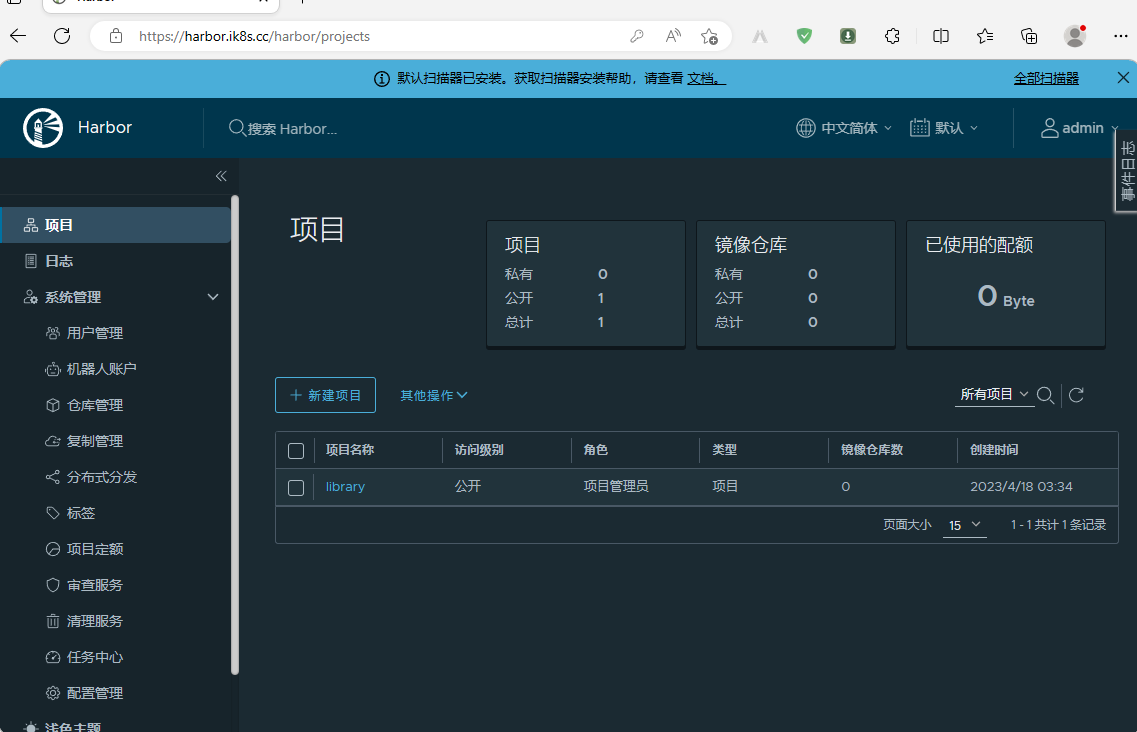

验证:通过windows的浏览器访问harbor.ik8s.cc,看看对应harbor是否能够正常访问到?

使用我配置的密码登录harbor,看看是否可用正常登录?

创建项目是否可用正常创建?

提示:可用看到我们在web网页上能够成功创建项目;

扩展:给harbor提供service文件,实现开机自动启动

root@harbor:/app/harbor# cat /usr/lib/systemd/system/harbor.service [Unit] Description=Harbor After=docker.service systemd-networkd.service systemd-resolved.service Requires=docker.service Documentation=http://github.com/vmware/harbor [Service] Type=simple Restart=on-failure RestartSec=5 ExecStart=/usr/local/bin/docker-compose -f /app/harbor/docker-compose.yml up ExecStop=/usr/local/bin/docker-compose -f /app/harbor/docker-compose.yml down [Install] WantedBy=multi-user.target root@harbor:/app/harbor#

Reload systemd so that it picks up harbor.service, restart Harbor, and enable it at boot

root@harbor:/app/harbor# systemctl daemon-reload

root@harbor:/app/harbor# systemctl restart harbor

root@harbor:/app/harbor# systemctl enable harbor

Created symlink /etc/systemd/system/multi-user.target.wants/harbor.service → /lib/systemd/system/harbor.service.

root@harbor:/app/harbor#

3. Test that nerdctl can log in to the HTTPS Harbor registry and distribute images

Log in to Harbor with nerdctl

root@k8s-node02:~# nerdctl login harbor.ik8s.cc

Enter Username: admin

Enter Password:

WARN[0005] skipping verifying HTTPS certs for "harbor.ik8s.cc"

WARNING: Your password will be stored unencrypted in /root/.docker/config.json.

Configure a credential helper to remove this warning. See

https://docs.docker.com/engine/reference/commandline/login/#credentials-store

Login Succeeded

root@k8s-node02:~#

Note: DNS resolution for the Harbor domain is needed on this node as well.

Test pushing an image from node02 to Harbor

root@k8s-node02:~# nerdctl pull nginx

WARN[0000] skipping verifying HTTPS certs for "docker.io"

docker.io/library/nginx:latest: resolved |++++++++++++++++++++++++++++++++++++++|

index-sha256:63b44e8ddb83d5dd8020327c1f40436e37a6fffd3ef2498a6204df23be6e7e94: done |++++++++++++++++++++++++++++++++++++++|

manifest-sha256:f2fee5c7194cbbfb9d2711fa5de094c797a42a51aa42b0c8ee8ca31547c872b1: done |++++++++++++++++++++++++++++++++++++++|

config-sha256:6efc10a0510f143a90b69dc564a914574973223e88418d65c1f8809e08dc0a1f: done |++++++++++++++++++++++++++++++++++++++|

layer-sha256:75576236abf5959ff23b741ed8c4786e244155b9265db5e6ecda9d8261de529f: done |++++++++++++++++++++++++++++++++++++++|

layer-sha256:26c5c85e47da3022f1bdb9a112103646c5c29517d757e95426f16e4bd9533405: done |++++++++++++++++++++++++++++++++++++++|

layer-sha256:8c767bdbc9aedd4bbf276c6f28aad18251cceacb768967c5702974ae1eac23cd: done |++++++++++++++++++++++++++++++++++++++|

layer-sha256:78e14bb05fd35b58587cd0c5ca2c2eb12b15031633ec30daa21c0ea3d2bb2a15: done |++++++++++++++++++++++++++++++++++++++|

layer-sha256:4f3256bdf66bf00bcec08043e67a80981428f0e0de12f963eac3c753b14d101d: done |++++++++++++++++++++++++++++++++++++++|

layer-sha256:2019c71d56550b97ce01e0b6ef8e971fec705186f2927d2cb109ac3e18edb0ac: done |++++++++++++++++++++++++++++++++++++++|

elapsed: 41.1s total: 54.4 M (1.3 MiB/s)

root@k8s-node02:~# nerdctl images

REPOSITORY TAG IMAGE ID CREATED PLATFORM SIZE BLOB SIZE

nginx latest 63b44e8ddb83 10 seconds ago linux/amd64 149.8 MiB 54.4 MiB

<none> <none> 63b44e8ddb83 10 seconds ago linux/amd64 149.8 MiB 54.4 MiB

root@k8s-node02:~# nerdctl tag nginx harbor.ik8s.cc/baseimages/nginx:v1

root@k8s-node02:~# nerdctl images

REPOSITORY TAG IMAGE ID CREATED PLATFORM SIZE BLOB SIZE

nginx latest 63b44e8ddb83 51 seconds ago linux/amd64 149.8 MiB 54.4 MiB

harbor.ik8s.cc/baseimages/nginx v1 63b44e8ddb83 5 seconds ago linux/amd64 149.8 MiB 54.4 MiB

<none> <none> 63b44e8ddb83 51 seconds ago linux/amd64 149.8 MiB 54.4 MiB

root@k8s-node02:~# nerdctl push harbor.ik8s.cc/baseimages/nginx:v1

INFO[0000] pushing as a reduced-platform image (application/vnd.docker.distribution.manifest.list.v2+json, sha256:c45a31532f8fcd4db2302631bc1644322aa43c396fabbf3f9e9038ff09688c26)

WARN[0000] skipping verifying HTTPS certs for "harbor.ik8s.cc"

index-sha256:c45a31532f8fcd4db2302631bc1644322aa43c396fabbf3f9e9038ff09688c26: done |++++++++++++++++++++++++++++++++++++++|

manifest-sha256:f2fee5c7194cbbfb9d2711fa5de094c797a42a51aa42b0c8ee8ca31547c872b1: done |++++++++++++++++++++++++++++++++++++++|

config-sha256:6efc10a0510f143a90b69dc564a914574973223e88418d65c1f8809e08dc0a1f: done |++++++++++++++++++++++++++++++++++++++|

elapsed: 4.2 s total: 9.6 Ki (2.3 KiB/s)

root@k8s-node02:~#

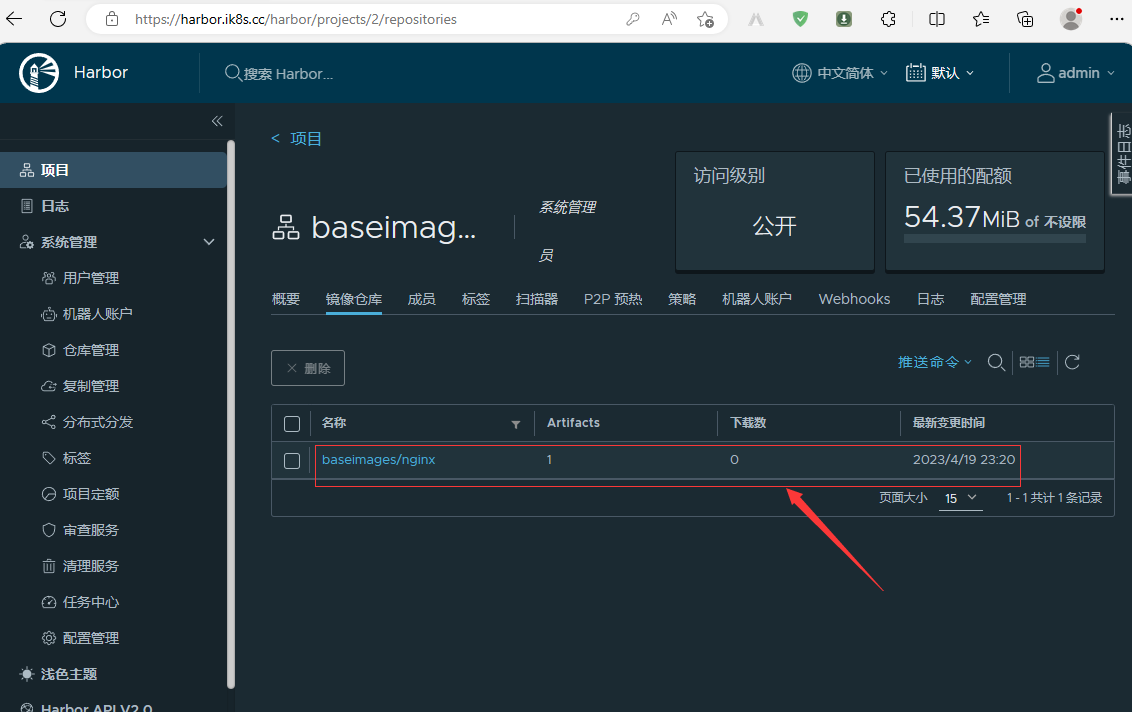

Verify: check in the web UI that the nginx:v1 image we pushed is in the repository.

Test pulling an image from the Harbor registry to a local node

root@k8s-node03:~# nerdctl images

REPOSITORY TAG IMAGE ID CREATED PLATFORM SIZE BLOB SIZE

root@k8s-node03:~# nerdctl login harbor.ik8s.cc

Enter Username: admin

Enter Password:

WARN[0004] skipping verifying HTTPS certs for "harbor.ik8s.cc"

WARNING: Your password will be stored unencrypted in /root/.docker/config.json.

Configure a credential helper to remove this warning. See

https://docs.docker.com/engine/reference/commandline/login/#credentials-store

Login Succeeded

root@k8s-node03:~# nerdctl pull harbor.ik8s.cc/baseimages/nginx:v1

WARN[0000] skipping verifying HTTPS certs for "harbor.ik8s.cc"

harbor.ik8s.cc/baseimages/nginx:v1: resolved |++++++++++++++++++++++++++++++++++++++|

index-sha256:c45a31532f8fcd4db2302631bc1644322aa43c396fabbf3f9e9038ff09688c26: done |++++++++++++++++++++++++++++++++++++++|

manifest-sha256:f2fee5c7194cbbfb9d2711fa5de094c797a42a51aa42b0c8ee8ca31547c872b1: done |++++++++++++++++++++++++++++++++++++++|

config-sha256:6efc10a0510f143a90b69dc564a914574973223e88418d65c1f8809e08dc0a1f: done |++++++++++++++++++++++++++++++++++++++|

layer-sha256:75576236abf5959ff23b741ed8c4786e244155b9265db5e6ecda9d8261de529f: done |++++++++++++++++++++++++++++++++++++++|

layer-sha256:2019c71d56550b97ce01e0b6ef8e971fec705186f2927d2cb109ac3e18edb0ac: done |++++++++++++++++++++++++++++++++++++++|

layer-sha256:26c5c85e47da3022f1bdb9a112103646c5c29517d757e95426f16e4bd9533405: done |++++++++++++++++++++++++++++++++++++++|

layer-sha256:8c767bdbc9aedd4bbf276c6f28aad18251cceacb768967c5702974ae1eac23cd: done |++++++++++++++++++++++++++++++++++++++|

layer-sha256:4f3256bdf66bf00bcec08043e67a80981428f0e0de12f963eac3c753b14d101d: done |++++++++++++++++++++++++++++++++++++++|

layer-sha256:78e14bb05fd35b58587cd0c5ca2c2eb12b15031633ec30daa21c0ea3d2bb2a15: done |++++++++++++++++++++++++++++++++++++++|

elapsed: 7.3 s total: 54.4 M (7.4 MiB/s)

root@k8s-node03:~# nerdctl images

REPOSITORY TAG IMAGE ID CREATED PLATFORM SIZE BLOB SIZE

harbor.ik8s.cc/baseimages/nginx v1 c45a31532f8f 5 seconds ago linux/amd64 149.8 MiB 54.4 MiB

<none> <none> c45a31532f8f 5 seconds ago linux/amd64 149.8 MiB 54.4 MiB

root@k8s-node03:~#

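These tests show that the Harbor registry we deployed can both accept pushed images and serve pulls. That completes the HTTPS Harbor registry built on a free certificate from a commercial CA.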
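The tag-and-push steps above follow a fixed naming pattern: registry, then project, then image name and tag. A minimal sketch of that pattern as a helper is shown here. The function name `harbor_ref` is hypothetical; only the `harbor.ik8s.cc/baseimages/...` layout comes from the commands above.

```shell
# harbor_ref REGISTRY PROJECT IMAGE [TAG]
# Prints the reference an image would get inside a Harbor project.
# Any upstream registry/namespace prefix and tag on IMAGE are dropped.
harbor_ref() {
  registry=$1
  project=$2
  image=$3
  tag=${4:-latest}
  name=${image##*/}   # strip "registry/namespace/" if present
  name=${name%%:*}    # strip ":tag" if present
  echo "${registry}/${project}/${name}:${tag}"
}
```

For example, `nerdctl tag nginx "$(harbor_ref harbor.ik8s.cc baseimages nginx v1)"` would apply the same tag as the manual command shown earlier.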

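4. Deploy a highly available Kubernetes cluster with kubeasz

Initialize the environment on the deploy node

This time we use the kubeasz project to deploy a highly available Kubernetes cluster from binaries. Project URL: https://github.com/easzlab/kubeasz. The project automates the installation with ansible-playbook; it provides a one-click install script and also lets you install each component step by step. The deploy node therefore needs Ansible installed first. The project also uses Docker to download the images and binaries needed during deployment, so Docker should be installed on the deploy node as well; if it is not, kubeasz installs it for you automatically.

Configure the Docker apt repository on the deploy node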

root@deploy:~# apt-get update && apt-get -y install apt-transport-https ca-certificates curl software-properties-common && curl -fsSL https://mirrors.aliyun.com/docker-ce/linux/ubuntu/gpg | sudo apt-key add - && add-apt-repository "deb [arch=amd64] https://mirrors.aliyun.com/docker-ce/linux/ubuntu $(lsb_release -cs) stable" && apt-get -y update

Install Ansible and Docker on the deploy node

root@deploy:~# apt-cache madison ansible docker-ce

ansible | 2.10.7+merged+base+2.10.8+dfsg-1 | http://mirrors.aliyun.com/ubuntu jammy/universe amd64 Packages

ansible | 2.10.7+merged+base+2.10.8+dfsg-1 | http://mirrors.aliyun.com/ubuntu jammy/universe Sources

docker-ce | 5:23.0.4-1~ubuntu.22.04~jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:23.0.3-1~ubuntu.22.04~jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:23.0.2-1~ubuntu.22.04~jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:23.0.1-1~ubuntu.22.04~jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:23.0.0-1~ubuntu.22.04~jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.24~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.23~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.22~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.21~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.20~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.19~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.18~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.17~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.16~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.15~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.14~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

docker-ce | 5:20.10.13~3-0~ubuntu-jammy | https://mirrors.aliyun.com/docker-ce/linux/ubuntu jammy/stable amd64 Packages

root@deploy:~# apt install ansible docker-ce -y

Install sshpass on the deploy node; it is used to copy the public key to each Kubernetes server

root@deploy:~# apt install sshpass -y

Generate a key pair on the deploy node

root@deploy:~# ssh-keygen -t rsa-sha2-512 -b 4096

Generating public/private rsa-sha2-512 key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

/root/.ssh/id_rsa already exists.

Overwrite (y/n)? y

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa

Your public key has been saved in /root/.ssh/id_rsa.pub

The key fingerprint is:

SHA256:uZ7jOnS/r0FNsPRpvvachoFwrUo2X0wbJ2Ve/wm596I root@deploy.ik8s.cc

The key's randomart image is:

+---[RSA 4096]----+

| o |

| . + .o .|

| ..=+...|

| ...==oo .|

| So.=o=o o|

| . =oo =o o.|

| . +.=..oo. .|

| ..o.oo.oo..|

| .++.o+Eo+. |

+----[SHA256]-----+

root@deploy:~#

Write a script to distribute the public key

root@k8s-deploy:~# cat pub-key-scp.sh

#!/bin/bash

# List of target hosts

HOSTS="

192.168.0.31

192.168.0.32

192.168.0.33

192.168.0.34

192.168.0.35

192.168.0.36

192.168.0.37

192.168.0.38

192.168.0.39

"

REMOTE_PORT="22"

REMOTE_USER="root"

REMOTE_PASS="admin"

for REMOTE_HOST in ${HOSTS};do

REMOTE_CMD="echo ${REMOTE_HOST} is successfully!"

# Add the remote host's public key to known_hosts

ssh-keyscan -p "${REMOTE_PORT}" "${REMOTE_HOST}" >> ~/.ssh/known_hosts

# Use sshpass to set up passwordless (key-based) login, then create the python3 symlink

sshpass -p "${REMOTE_PASS}" ssh-copy-id -p "${REMOTE_PORT}" "${REMOTE_USER}@${REMOTE_HOST}"

ssh -p "${REMOTE_PORT}" "${REMOTE_USER}@${REMOTE_HOST}" ln -sv /usr/bin/python3 /usr/bin/python

echo "${REMOTE_HOST}: passwordless login configured"

done

root@k8s-deploy:~#

Run the script to distribute the SSH public key to the master, node, and etcd hosts for passwordless login

root@deploy:~# sh pub-key-scp.sh

Verify: from the deploy node, SSH to any host in the cluster and check that login works without a password.

root@k8s-deploy:~# ssh 192.168.0.33

Welcome to Ubuntu 22.04.2 LTS (GNU/Linux 5.15.0-70-generic x86_64)

* Documentation: https://help.ubuntu.com

* Management: https://landscape.canonical.com

* Support: https://ubuntu.com/advantage

This system has been minimized by removing packages and content that are not required on a system that users do not log into.

To restore this content, you can run the 'unminimize' command.

Last login: Sat Apr 22 11:45:25 2023 from 192.168.0.232

root@k8s-master03:~# exit

logout

Connection to 192.168.0.33 closed.

root@k8s-deploy:~#

Note: logging in without a password confirms that the script set up key-based login correctly.
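Checking one host by hand works, but with nine hosts a loop is less error-prone. The following is a rough sketch rather than part of the original procedure: the function name `check_ssh` and the `SSH_BIN` override are hypothetical, and it assumes the `root` user used throughout this post. `BatchMode=yes` makes ssh fail instead of prompting for a password, so a host that still needs a password is reported as failed.

```shell
# check_ssh HOST...
# Tries a key-only login on each host and reports the result.
# Returns non-zero if any host refused key-based login.
check_ssh() {
  failed=0
  for host in "$@"; do
    # SSH_BIN is a hypothetical override, mainly useful for dry runs.
    if "${SSH_BIN:-ssh}" -o BatchMode=yes -o ConnectTimeout=5 "root@${host}" true >/dev/null 2>&1; then
      echo "${host}: ok"
    else
      echo "${host}: FAILED"
      failed=1
    fi
  done
  return "$failed"
}
```

It could be called as `check_ssh 192.168.0.31 192.168.0.32 192.168.0.33` with the addresses from the host list in the script.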

Download the kubeasz install script

root@deploy:~# apt install git -y

root@deploy:~# export release=3.5.2

root@deploy:~# wget https://github.com/easzlab/kubeasz/releases/download/${release}/ezdown

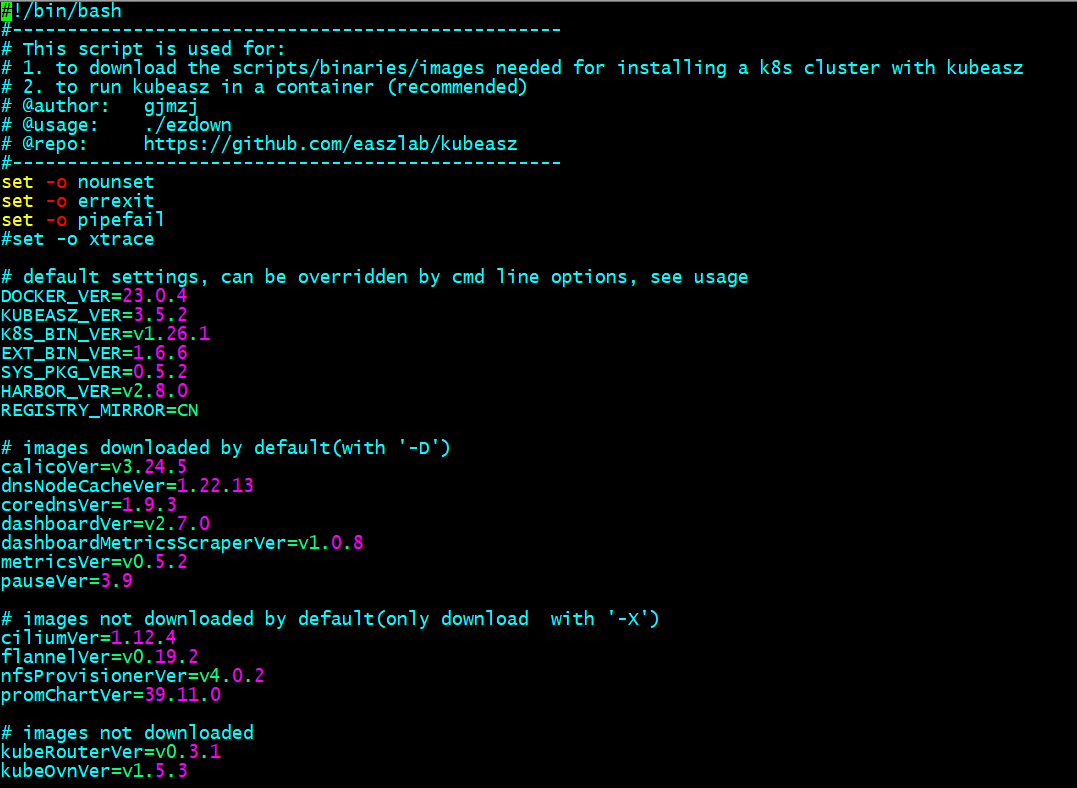

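Edit ezdown

Note: editing the ezdown script is mainly about setting the versions of the components to download and install; adjust them to suit your environment.

Make the script executable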

root@k8s-deploy:~# chmod a+x ezdown

root@k8s-deploy:~# ll ezdown

-rwxr-xr-x 1 root root 25433 Feb 9 15:11 ezdown*

root@k8s-deploy:~#

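Run the script to download the kubeasz project and its components

root@deploy:~# ./ezdown -D

Note: running ezdown downloads a number of images and binary tools, and stores the binaries together with the kubeasz project under /etc/kubeasz/.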

root@k8s-deploy:~# ll /etc/kubeasz/

total 140

drwxrwxr-x 13 root root 4096 Apr 22 07:59 ./

drwxr-xr-x 83 root root 4096 Apr 22 11:53 ../

drwxrwxr-x 3 root root 4096 Feb 9 15:14 .github/

-rw-rw-r-- 1 root root 301 Feb 9 14:50 .gitignore

-rw-rw-r-- 1 root root 5556 Feb 9 14:50 README.md

-rw-rw-r-- 1 root root 20304 Feb 9 14:50 ansible.cfg

drwxr-xr-x 3 root root 4096 Apr 22 07:50 bin/

drwxr-xr-x 3 root root 4096 Apr 22 07:59 clusters/

drwxrwxr-x 8 root root 4096 Feb 9 15:14 docs/

drwxr-xr-x 2 root root 4096 Apr 22 07:59 down/

drwxrwxr-x 2 root root 4096 Feb 9 15:14 example/

-rwxrwxr-x 1 root root 26174 Feb 9 14:50 ezctl*

-rwxrwxr-x 1 root root 25433 Feb 9 14:50 ezdown*

drwxrwxr-x 10 root root 4096 Feb 9 15:14 manifests/

drwxrwxr-x 2 root root 4096 Feb 9 15:14 pics/

drwxrwxr-x 2 root root 4096 Apr 22 08:07 playbooks/

drwxrwxr-x 22 root root 4096 Feb 9 15:14 roles/

drwxrwxr-x 2 root root 4096 Feb 9 15:14 tools/

root@k8s-deploy:~#
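Before moving on, it can be worth confirming that the download really produced the pieces the later steps rely on. The sketch below is an illustration only: the function name `check_kubeasz_dir` is hypothetical, and the list of entries is simply taken from the directory listing above, not from any official kubeasz check.

```shell
# check_kubeasz_dir [DIR]
# Reports entries from the listing above that are missing under DIR
# (default /etc/kubeasz). Returns non-zero if anything is missing.
check_kubeasz_dir() {
  dir=${1:-/etc/kubeasz}
  missing=0
  for entry in ezctl ezdown bin playbooks roles; do
    if [ ! -e "${dir}/${entry}" ]; then
      echo "missing: ${dir}/${entry}"
      missing=1
    fi
  done
  return "$missing"
}
```

Running `check_kubeasz_dir` with no argument checks the default location used by ezdown in this post.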

View the ezctl usage help

root@deploy:~# cd /etc/kubeasz/

root@deploy:/etc/kubeasz# ./ezctl --help

Usage: ezctl COMMAND [args]

-------------------------------------------------------------------------------------

Cluster setups:

list to list all of the managed clusters

checkout <cluster> to switch default kubeconfig of the cluster

new <cluster> to start a new k8s deploy with name 'cluster'

setup <cluster> <step> to setup a cluster, also supporting a step-by-step way

start <cluster> to start all of the k8s services stopped by 'ezctl stop'

stop <cluster> to stop all of the k8s services temporarily

upgrade <cluster> to upgrade the k8s cluster

destroy <cluster> to destroy the k8s cluster

backup <cluster> to backup the cluster state (etcd snapshot)

restore <cluster> to restore the cluster state from backups

start-aio to quickly setup an all-in-one cluster with default settings

Cluster ops:

add-etcd <cluster> <ip> to add a etcd-node to the etcd cluster

add-master <cluster> <ip> to add a master node to the k8s cluster

add-node <cluster> <ip> to add a work node to the k8s cluster

del-etcd <cluster> <ip> to delete a etcd-node from the etcd cluster

del-master <cluster> <ip> to delete a master node from the k8s cluster

del-node <cluster> <ip> to delete a work node from the k8s cluster

Extra operation:

kca-renew <cluster> to force renew CA certs and all the other certs (with caution)

kcfg-adm <cluster> <args> to manage client kubeconfig of the k8s cluster

Use "ezctl help <command>" for more information about a given command.

root@deploy:/etc/kubeasz#

Use ezctl to generate the config file and the hosts file

root@k8s-deploy:~# cd /etc/kubeasz/

root@k8s-deploy:/etc/kubeasz# ./ezctl new k8s-cluster01

2023-04-22 13:27:51 DEBUG generate custom cluster files in /etc/kubeasz/clusters/k8s-cluster01

2023-04-22 13:27:51 DEBUG set versions

2023-04-22 13:27:51 DEBUG disable registry mirrors

2023-04-22 13:27:51 DEBUG cluster k8s-cluster01: files successfully created.

2023-04-22 13:27:51 INFO next steps 1: to config '/etc/kubeasz/clusters/k8s-cluster01/hosts'

2023-04-22 13:27:51 INFO next steps 2: to config '/etc/kubeasz/clusters/k8s-cluster01/config.yml'

root@k8s-deploy:/etc/kubeasz#

Edit the Ansible hosts file

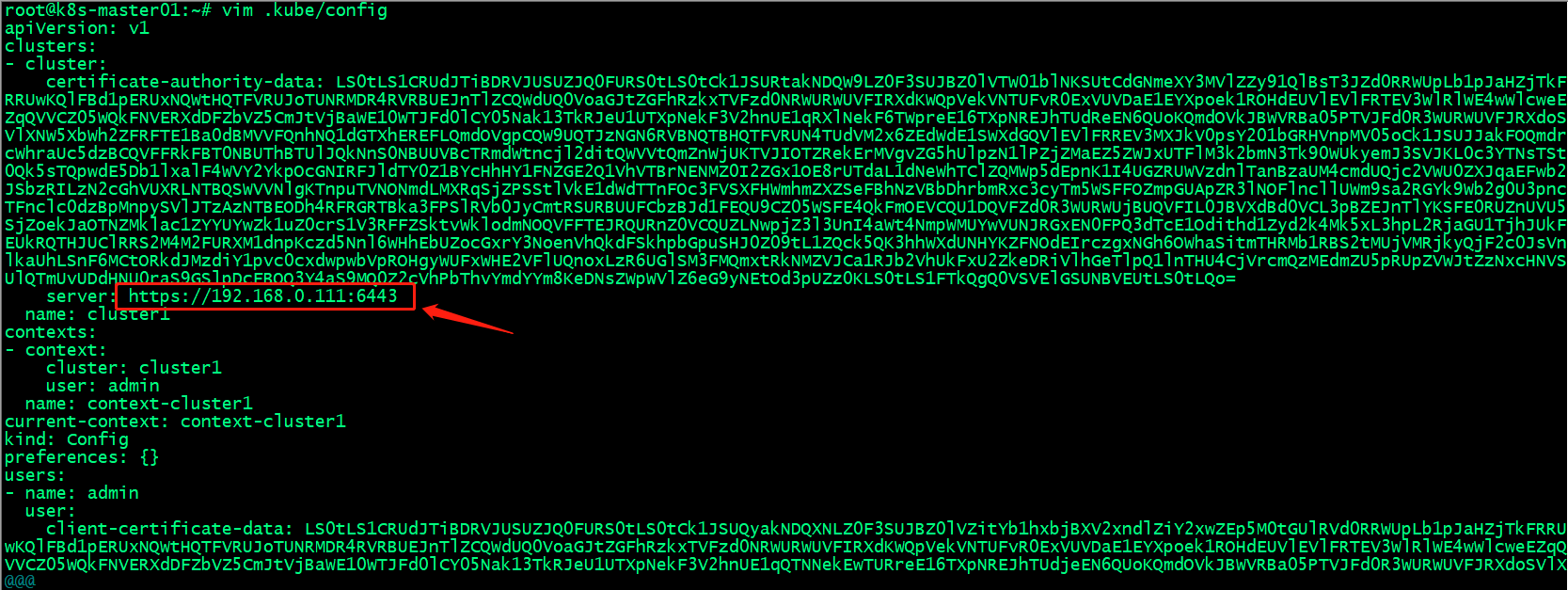

root@k8s-deploy:/etc/kubeasz# cat /etc/kubeasz/clusters/k8s-cluster01/hosts

# 'etcd' cluster should have odd member(s) (1,3,5,...)

[etcd]

192.168.0.37

192.168.0.38

192.168.0.39

# master node(s), set unique 'k8s_nodename' for each node

# CAUTION: 'k8s_nodename' must consist of lower case alphanumeric characters, '-' or '.',

# and must start and end with an alphanumeric character

[kube_master]

192.168.0.31 k8s_nodename='192.168.0.31'

192.168.0.32 k8s_nodename='192.168.0.32'

#192.168.0.33 k8s_nodename='192.168.0.33'

# work node(s), set unique 'k8s_nodename' for each node

# CAUTION: 'k8s_nodename' must consist of lower case alphanumeric characters, '-' or '.',

# and must start and end with an alphanumeric character

[kube_node]

192.168.0.34 k8s_nodename='192.168.0.34'

192.168.0.35 k8s_nodename='192.168.0.35'

# [optional] harbor server, a private docker registry

# 'NEW_INSTALL': 'true' to install a harbor server; 'false' to integrate with existed one

[harbor]

#192.168.1.8 NEW_INSTALL=false

# [optional] loadbalance for accessing k8s from outside

[ex_lb]

#192.168.1.6 LB_ROLE=backup EX_APISERVER_VIP=192.168.1.250 EX_APISERVER_PORT=8443

#192.168.1.7 LB_ROLE=master EX_APISERVER_VIP=192.168.1.250 EX_APISERVER_PORT=8443

# [optional] ntp server for the cluster

[chrony]

#192.168.1.1

[all:vars]

# --------- Main Variables ---------------

# Secure port for apiservers

SECURE_PORT="6443"

# Cluster container-runtime supported: docker, containerd

# if k8s version >= 1.24, docker is not supported

CONTAINER_RUNTIME="containerd"

# Network plugins supported: calico, flannel, kube-router, cilium, kube-ovn

CLUSTER_NETWORK="calico"

# Service proxy mode of kube-proxy: 'iptables' or 'ipvs'

PROXY_MODE="ipvs"

# K8S Service CIDR, not overlap with node(host) networking

SERVICE_CIDR="10.100.0.0/16"

# Cluster CIDR (Pod CIDR), not overlap with node(host) networking

CLUSTER_CIDR="10.200.0.0/16"

# NodePort Range

NODE_PORT_RANGE="30000-32767"

# Cluster DNS Domain

CLUSTER_DNS_DOMAIN="cluster.local"

# -------- Additional Variables (don't change the default value right now) ---

# Binaries Directory

bin_dir="/usr/local/bin"

# Deploy Directory (kubeasz workspace)

base_dir="/etc/kubeasz"

# Directory for a specific cluster

cluster_dir="{{ base_dir }}/clusters/k8s-cluster01"

# CA and other components cert/key Directory

ca_dir="/etc/kubernetes/ssl"

# Default 'k8s_nodename' is empty

k8s_nodename=''

root@k8s-deploy:/etc/kubeasz#

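Note: the hosts file above specifies the etcd, master, and worker nodes, the VIP, the container runtime, the network plugin type, and the Service and Pod IP ranges, among other settings.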
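The comments in the hosts file require that SERVICE_CIDR and CLUSTER_CIDR do not overlap with the node network. Two CIDR blocks overlap exactly when they agree on the shorter of the two prefixes, which can be checked with integer arithmetic. The sketch below is one way to do that; the function names are hypothetical, and treating the node network as 192.168.0.0/24 is an assumption (the post only shows node addresses in 192.168.0.x, not the netmask).

```shell
# ip_to_int A.B.C.D: prints the IPv4 address as a 32-bit integer.
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1          # split the dotted quad into $1..$4
  IFS=$old_ifs
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# cidr_overlap NET1/LEN1 NET2/LEN2: succeeds (exit 0) if the blocks overlap.
cidr_overlap() {
  a_ip=${1%/*}; a_len=${1#*/}
  b_ip=${2%/*}; b_len=${2#*/}
  # Compare both networks under the shorter prefix.
  if [ "$a_len" -lt "$b_len" ]; then len=$a_len; else len=$b_len; fi
  mask=$(( (0xFFFFFFFF << (32 - len)) & 0xFFFFFFFF ))
  a_net=$(( $(ip_to_int "$a_ip") & mask ))
  b_net=$(( $(ip_to_int "$b_ip") & mask ))
  [ "$a_net" -eq "$b_net" ]
}
```

With the values from the hosts file, `cidr_overlap 10.100.0.0/16 10.200.0.0/16` and `cidr_overlap 10.200.0.0/16 192.168.0.0/24` both fail, meaning no overlap under the assumed node netmask.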

Edit the cluster's config.yml file

root@k8s-deploy:/etc/kubeasz# cat /etc/kubeasz/clusters/k8s-cluster01/config.yml

############################

# prepare

############################

# Optionally install system packages offline (offline|online)

INSTALL_SOURCE: "online"

# Optionally apply OS security hardening: github.com/dev-sec/ansible-collection-hardening

OS_HARDEN: false

############################

# role:deploy

############################

# default: ca will expire in 100 years

# default: certs issued by the ca will expire in 50 years

CA_EXPIRY: "876000h"

CERT_EXPIRY: "438000h"

# force to recreate CA and other certs, not suggested to set 'true'

CHANGE_CA: false

# kubeconfig parameters

CLUSTER_NAME: "cluster1"

CONTEXT_NAME: "context-{{ CLUSTER_NAME }}"

# k8s version

K8S_VER: "1.26.1"

# set unique 'k8s_nodename' for each node, if not set(default:'') ip add will be used

# CAUTION: 'k8s_nodename' must consist of lower case alphanumeric characters, '-' or '.',

# and must start and end with an alphanumeric character (e.g. 'example.com'),

# regex used for validation is '[a-z0-9]([-a-z0-9]*[a-z0-9])?(\.[a-z0-9]([-a-z0-9]*[a-z0-9])?)*'

K8S_NODENAME: "{%- if k8s_nodename != '' -%} \

{{ k8s_nodename|replace('_', '-')|lower }} \

{%- else -%} \

{{ inventory_hostname }} \

{%- endif -%}"

############################

# role:etcd

############################

# Using a separate WAL directory avoids disk I/O contention and improves performance

ETCD_DATA_DIR: "/var/lib/etcd"

ETCD_WAL_DIR: ""

############################

# role:runtime [containerd,docker]

############################

# ------------------------------------------- containerd

# [.] enable container registry mirrors

ENABLE_MIRROR_REGISTRY: true

# [containerd] sandbox (pause) image

SANDBOX_IMAGE: "harbor.ik8s.cc/baseimages/pause:3.9"

# [containerd] persistent storage directory for containers

CONTAINERD_STORAGE_DIR: "/var/lib/containerd"

# ------------------------------------------- docker

# [docker] container storage directory

DOCKER_STORAGE_DIR: "/var/lib/docker"

# [docker] enable the RESTful API

ENABLE_REMOTE_API: false

# [docker] trusted HTTP registries

INSECURE_REG: '["http://easzlab.io.local:5000"]'

############################

# role:kube-master

############################

# Certificate hosts for the k8s master nodes; multiple IPs and domain names can be added (for example a public IP and domain name)

MASTER_CERT_HOSTS:

- "192.168.0.111"

- "kubeapi.ik8s.cc"

#- "www.test.com"

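# Prefix length of the pod subnet on each node (determines the maximum number of pod IPs a node can allocate)

# If flannel runs with --kube-subnet-mgr, it reads this setting to assign a pod subnet to each node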

# https://github.com/coreos/flannel/issues/847

NODE_CIDR_LEN: 24

############################

# role:kube-node

############################

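# kubelet root directory

KUBELET_ROOT_DIR: "/var/lib/kubelet"

# Maximum number of pods per node

MAX_PODS: 200

# Resources reserved for kube components (kubelet, kube-proxy, dockerd, etc.)

# See templates/kubelet-config.yaml.j2 for the values

KUBE_RESERVED_ENABLED: "no"

# Upstream Kubernetes advises against enabling system-reserved casually, unless long-term monitoring has shown you how the system uses resources;

# the reservation may also need to grow as the system keeps running; see templates/kubelet-config.yaml.j2 for the values

# The system reservation assumes a 4-core/8 GB VM with a minimal set of system services; increase it on high-performance physical machines

# Also, apiserver and other components briefly use more resources during cluster installation; reserving at least 1 GB of memory is recommended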

SYS_RESERVED_ENABLED: "no"

############################

# role:network [flannel,calico,cilium,kube-ovn,kube-router]

############################

# ------------------------------------------- flannel

# [flannel] flannel backend: "host-gw", "vxlan", etc.

FLANNEL_BACKEND: "vxlan"

DIRECT_ROUTING: false

# [flannel]

flannel_ver: "v0.19.2"

# ------------------------------------------- calico

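# [calico] IPIP tunnel mode options: [Always, CrossSubnet, Never]. For nodes spread across subnets use Always or CrossSubnet (on public clouds Always is the simplest choice; otherwise the cloud's network configuration has to be changed, see each provider's documentation)

# CrossSubnet is a hybrid of tunneling and BGP routing that can improve network performance; when all nodes share one subnet, Never is enough.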

CALICO_IPV4POOL_IPIP: "Always"

# [calico] host IP used by calico-node; BGP peers connect over this address. It can be set manually or auto-detected

IP_AUTODETECTION_METHOD: "can-reach={{ groups['kube_master'][0] }}"

# [calico] calico networking backend: brid, vxlan, none

CALICO_NETWORKING_BACKEND: "brid"

# [calico] whether calico uses route reflectors

# Recommended when the cluster has more than 50 nodes

CALICO_RR_ENABLED: false

# CALICO_RR_NODES lists the route reflector nodes; if unset, the cluster master nodes are used

# CALICO_RR_NODES: ["192.168.1.1", "192.168.1.2"]

CALICO_RR_NODES: []

# [calico] supported calico versions: ["3.19", "3.23"]

calico_ver: "v3.24.5"

# [calico] calico major.minor version

calico_ver_main: "{{ calico_ver.split('.')[0] }}.{{ calico_ver.split('.')[1] }}"

# ------------------------------------------- cilium

# [cilium] image version

cilium_ver: "1.12.4"

cilium_connectivity_check: true

cilium_hubble_enabled: false

cilium_hubble_ui_enabled: false

# ------------------------------------------- kube-ovn

# [kube-ovn] node for the OVN DB and OVN control plane; defaults to the first master node

OVN_DB_NODE: "{{ groups['kube_master'][0] }}"

# [kube-ovn] offline image tarball

kube_ovn_ver: "v1.5.3"

# ------------------------------------------- kube-router

# [kube-router] public clouds impose restrictions, so ipinip generally has to stay enabled; in your own environment this can be set to "subnet"

OVERLAY_TYPE: "full"

# [kube-router] NetworkPolicy support switch

FIREWALL_ENABLE: true

# [kube-router] kube-router image version

kube_router_ver: "v0.3.1"

busybox_ver: "1.28.4"

############################

# role:cluster-addon

############################

# install coredns automatically

dns_install: "no"

corednsVer: "1.9.3"

ENABLE_LOCAL_DNS_CACHE: false

dnsNodeCacheVer: "1.22.13"

# local dns cache address

LOCAL_DNS_CACHE: "169.254.20.10"

# install metrics server automatically

metricsserver_install: "no"

metricsVer: "v0.5.2"

# install dashboard automatically

dashboard_install: "no"

dashboardVer: "v2.7.0"

dashboardMetricsScraperVer: "v1.0.8"

# install prometheus automatically

prom_install: "no"

prom_namespace: "monitor"

prom_chart_ver: "39.11.0"

# install nfs-provisioner automatically

nfs_provisioner_install: "no"

nfs_provisioner_namespace: "kube-system"

nfs_provisioner_ver: "v4.0.2"

nfs_storage_class: "managed-nfs-storage"

nfs_server: "192.168.1.10"

nfs_path: "/data/nfs"

# install network-check automatically

network_check_enabled: false

network_check_schedule: "*/5 * * * *"

############################

# role:harbor

############################

# harbor version, full version number

HARBOR_VER: "v2.6.3"

HARBOR_DOMAIN: "harbor.easzlab.io.local"

HARBOR_PATH: /var/data

HARBOR_TLS_PORT: 8443

HARBOR_REGISTRY: "{{ HARBOR_DOMAIN }}:{{ HARBOR_TLS_PORT }}"

# if set 'false', you need to put certs named harbor.pem and harbor-key.pem in directory 'down'

HARBOR_SELF_SIGNED_CERT: true

# install extra component

HARBOR_WITH_NOTARY: false

HARBOR_WITH_TRIVY: false

HARBOR_WITH_CHARTMUSEUM: true

root@k8s-deploy:/etc/kubeasz#

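Note: this config file mainly defines the CA and certificate lifetimes, the kubeconfig parameters, the Kubernetes version, the etcd data directory, the container runtime parameters, the names included in the master certificate, the prefix length of each node's pod subnet, the kubelet root directory, the maximum number of pods per node, the network plugin parameters, and the settings for installing cluster add-ons.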

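Note: one point needs attention here. Although we are not installing coredns automatically, these two variables still need to be set. If ENABLE_LOCAL_DNS_CACHE is true, set LOCAL_DNS_CACHE below to the IP address of the coredns Service; if ENABLE_LOCAL_DNS_CACHE is false, the value of LOCAL_DNS_CACHE does not matter.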
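Several of the numbers in this config file are easier to judge after a little arithmetic. The sketch below is only an illustration with hypothetical function names; it uses the values shown above (a /16 CLUSTER_CIDR from the hosts file, NODE_CIDR_LEN 24, and the two expiry settings) and assumes 8760 hours per year.

```shell
# node_subnets CLUSTER_PREFIX NODE_PREFIX
# Number of per-node pod subnets that fit into the cluster CIDR.
node_subnets() {
  echo $(( 1 << ($2 - $1) ))
}

# addrs_per_node NODE_PREFIX
# Number of addresses in one node's pod subnet.
addrs_per_node() {
  echo $(( 1 << (32 - $1) ))
}

# hours_to_years 876000h
# Converts an expiry such as CA_EXPIRY to whole years (365-day years).
hours_to_years() {
  echo $(( ${1%h} / 8760 ))
}

node_subnets 16 24      # -> 256
addrs_per_node 24       # -> 256
hours_to_years 876000h  # -> 100
hours_to_years 438000h  # -> 50
```

A /24 per node gives 256 addresses, which leaves room for the MAX_PODS value of 200, and the two expiry values match the 100-year and 50-year figures in the file's own comments.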

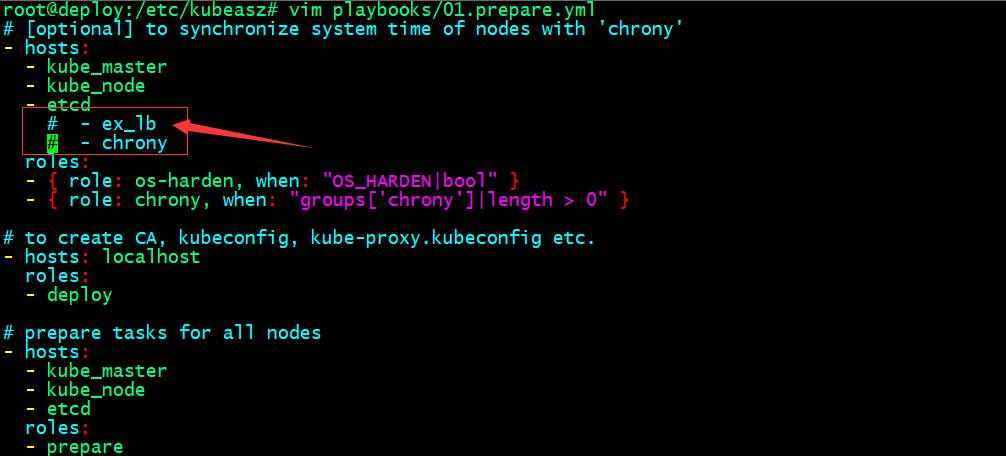

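Edit the host list for the base system initialization

Note: commenting out ex_lb and chrony above means we set up those two kinds of hosts ourselves and do not need kubeasz to initialize them; system initialization then applies only to the master, node, and etcd hosts.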

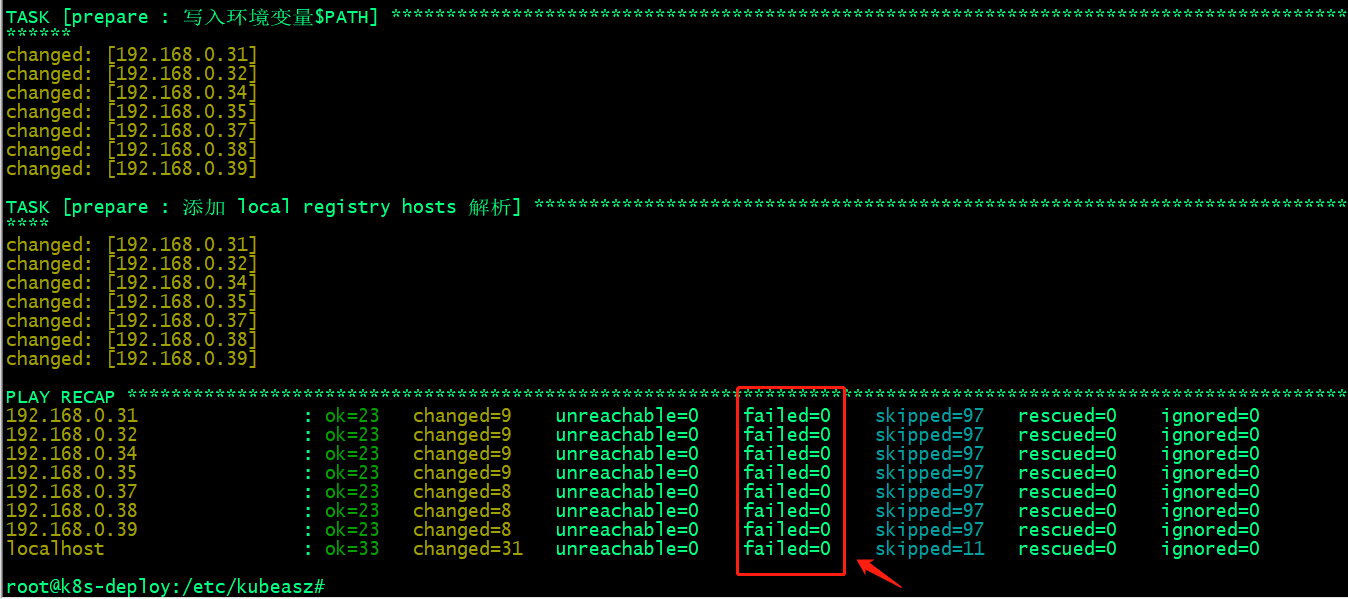

Prepare the CA and initialize the base environment

root@deploy:/etc/kubeasz# ./ezctl setup k8s-cluster01 01

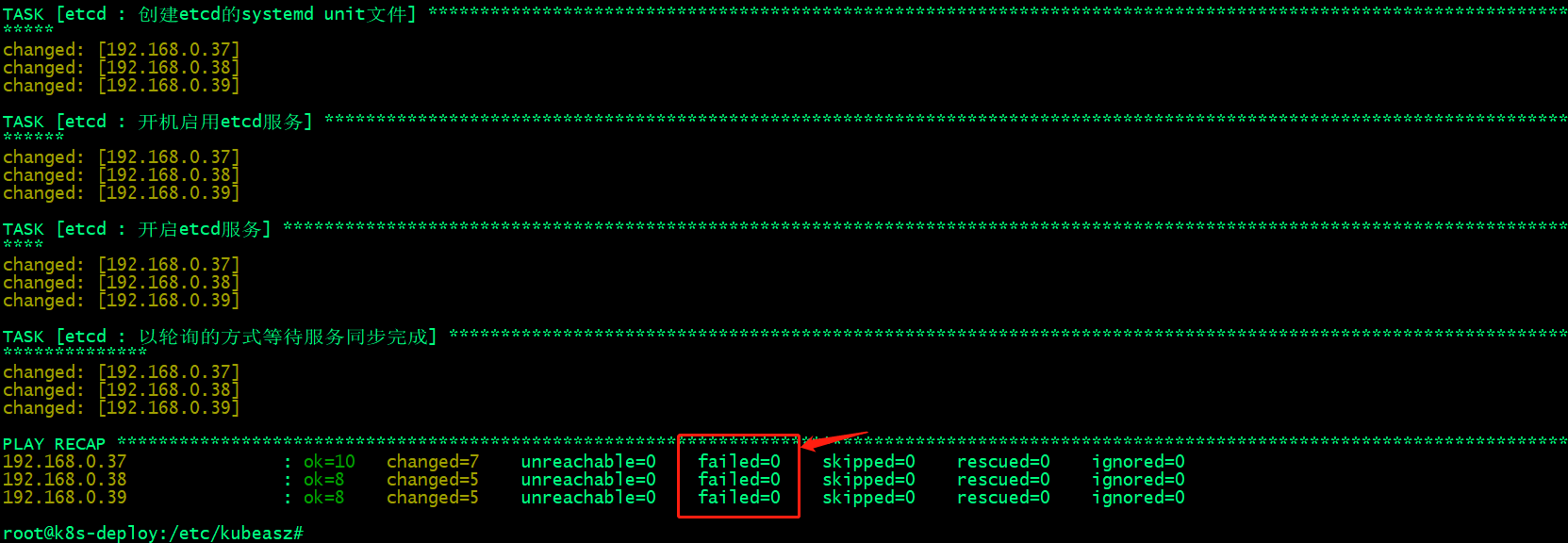

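Note: when the command finishes with failed=0 for every host, the base environment on those nodes is ready, and we can move on to the second step, deploying the etcd nodes.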
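Reading the recap by eye is fine for a handful of hosts; for a saved log the same check can be scripted. This is a sketch under assumptions: the function name `recap_ok` is hypothetical, and it relies on Ansible's usual PLAY RECAP format of `key=value` counters per host. The sample line in the comment is made up for illustration, not taken from the author's run.

```shell
# recap_ok: reads Ansible PLAY RECAP lines on stdin and succeeds only if
# no host reports a non-zero "failed" or "unreachable" counter.
# Example line (hypothetical values):
#   192.168.0.31 : ok=27 changed=23 unreachable=0 failed=0 skipped=110 rescued=0 ignored=0
recap_ok() {
  if grep -Eq '(failed|unreachable)=[1-9]'; then
    return 1
  fi
  return 0
}
```

Assuming the playbook output was saved to a file of your choosing, it could be used as `recap_ok < setup-01.log && echo "step 01 clean"`.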

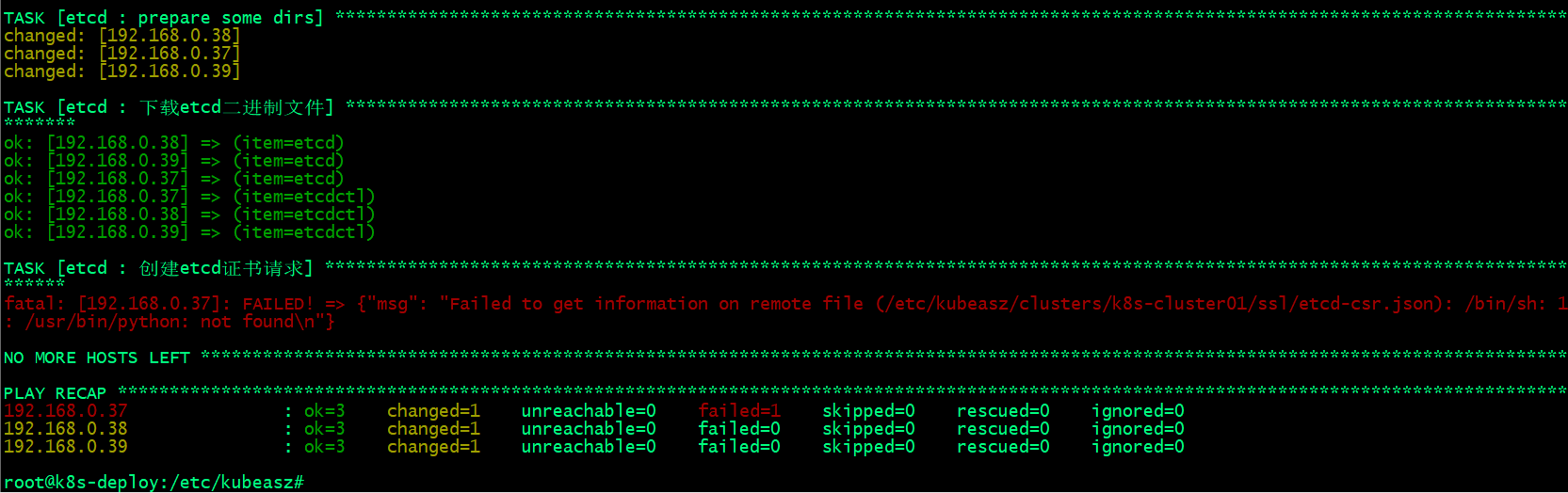

Deploy the etcd cluster

root@deploy:/etc/kubeasz# ./ezctl setup k8s-cluster01 02

Note: the error here says that /usr/bin/python was not found, so the information in /etc/kubeasz/clusters/k8s-cluster01/ssl/etcd-csr.json could not be read.

The fix is to symlink /usr/bin/python3 to /usr/bin/python on the deploy node:

root@deploy:/etc/kubeasz# ln -sv /usr/bin/python3 /usr/bin/python

'/usr/bin/python' -> '/usr/bin/python3'

root@deploy:/etc/kubeasz#

Run the deployment step above again

Verify that the etcd cluster is healthy

root@k8s-etcd01:~# export NODE_IPS="192.168.0.37 192.168.0.38 192.168.0.39"

root@k8s-etcd01:~# for ip in ${NODE_IPS}; do ETCDCTL_API=3 /usr/local/bin/etcdctl --endpoints=https://${ip}:2379 --cacert=/etc/kubernetes/ssl/ca.pem --cert=/etc/kubernetes/ssl/etcd.pem --key=/etc/kubernetes/ssl/etcd-key.pem endpoint health; done

https://192.168.0.37:2379 is healthy: successfully committed proposal: took = 32.64189ms

https://192.168.0.38:2379 is healthy: successfully committed proposal: took = 30.249623ms

https://192.168.0.39:2379 is healthy: successfully committed proposal: took = 32.747586ms

root@k8s-etcd01:~#

Note: every endpoint above reports healthy, which shows that the etcd cluster is working.
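The health loop above prints one line per endpoint. A small sketch of how that output could be turned into a pass/fail result is shown below; the function name `etcd_all_healthy` is hypothetical, and the matched text is the "is healthy: successfully committed proposal" message from the output above.

```shell
# etcd_all_healthy EXPECTED
# Reads `etcdctl endpoint health` output on stdin and succeeds only if
# exactly EXPECTED endpoints report healthy.
etcd_all_healthy() {
  expected=$1
  # grep -c prints 0 and exits non-zero when nothing matches, hence "|| true".
  healthy=$(grep -c 'is healthy: successfully committed proposal' || true)
  [ "$healthy" -eq "$expected" ]
}
```

Piping the for-loop from the verification step into `etcd_all_healthy 3` would succeed only when all three members answer.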

Deploy the containerd container runtime

Check the sandbox (pause) image setting

root@deploy:/etc/kubeasz# grep SANDBOX_IMAGE ./clusters/* -R

./clusters/k8s-cluster01/config.yml:SANDBOX_IMAGE: "harbor.ik8s.cc/baseimages/pause:3.9"

root@deploy:/etc/kubeasz#

Pull the pause image locally, retag it, and push it to Harbor

root@deploy:/etc/kubeasz# docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.9

3.9: Pulling from google_containers/pause

61fec91190a0: Already exists

Digest: sha256:7031c1b283388d2c2e09b57badb803c05ebed362dc88d84b480cc47f72a21097

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.9

registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.9

root@deploy:/etc/kubeasz# docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.9 harbor.ik8s.cc/baseimages/pause:3.9

root@deploy:/etc/kubeasz# docker login harbor.ik8s.cc

Username: admin

Password:

WARNING! Your password will be stored unencrypted in /root/.docker/config.json.

Configure a credential helper to remove this warning. See

https://docs.docker.com/engine/reference/commandline/login/#credentials-store

Login Succeeded

root@deploy:/etc/kubeasz# docker push harbor.ik8s.cc/baseimages/pause:3.9

The push refers to repository [harbor.ik8s.cc/baseimages/pause]

e3e5579ddd43: Pushed

3.9: digest: sha256:0fc1f3b764be56f7c881a69cbd553ae25a2b5523c6901fbacb8270307c29d0c4 size: 526

root@deploy:/etc/kubeasz#

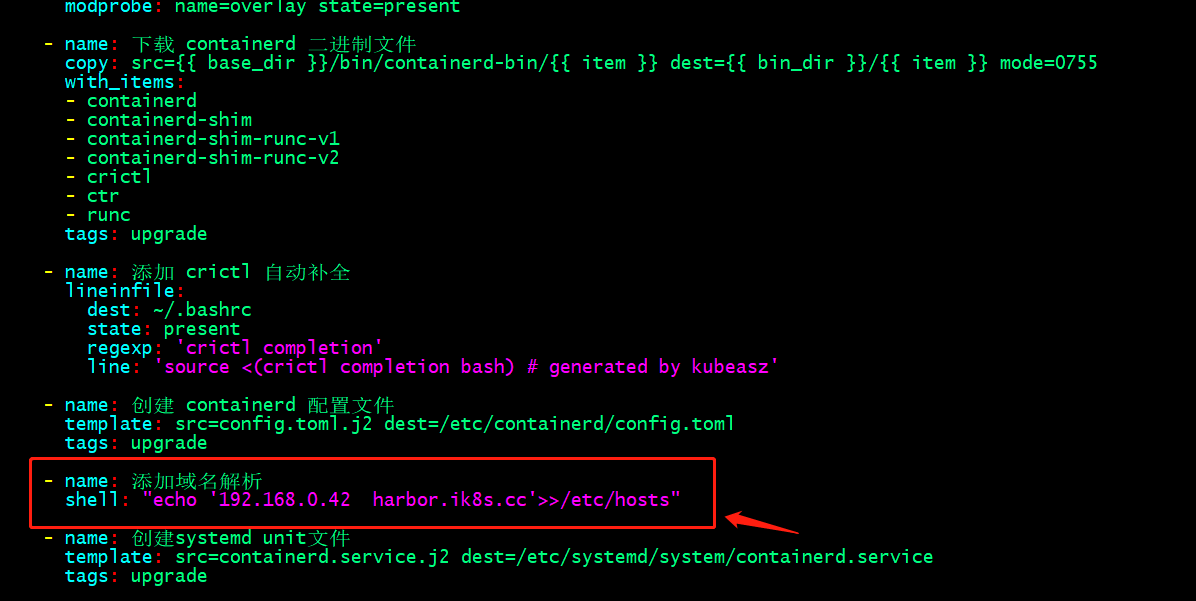

Configure name resolution for the Harbor registry domain (in a company environment a DNS server would handle this)

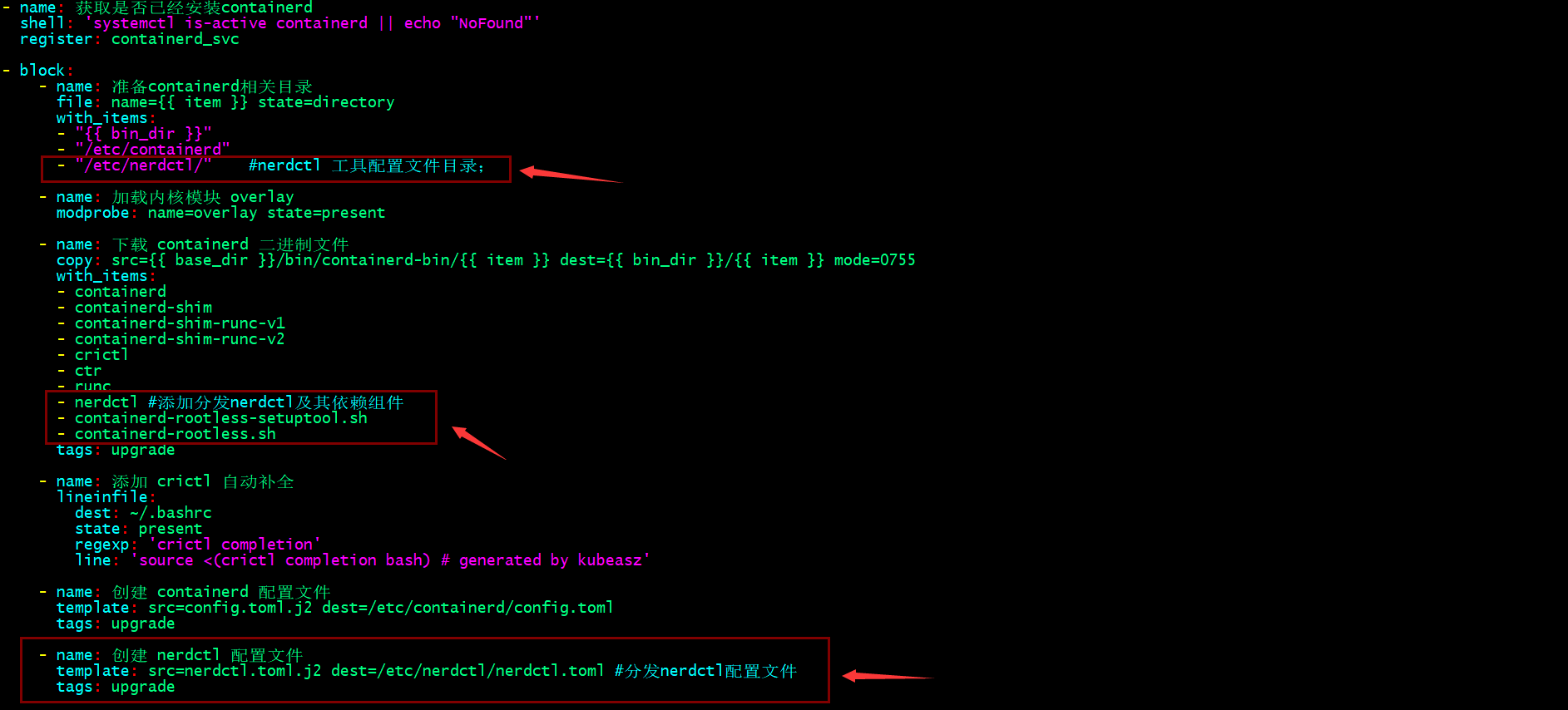

Note: edit /etc/kubeasz/roles/containerd/tasks/main.yml and add the task above anywhere inside the block section.

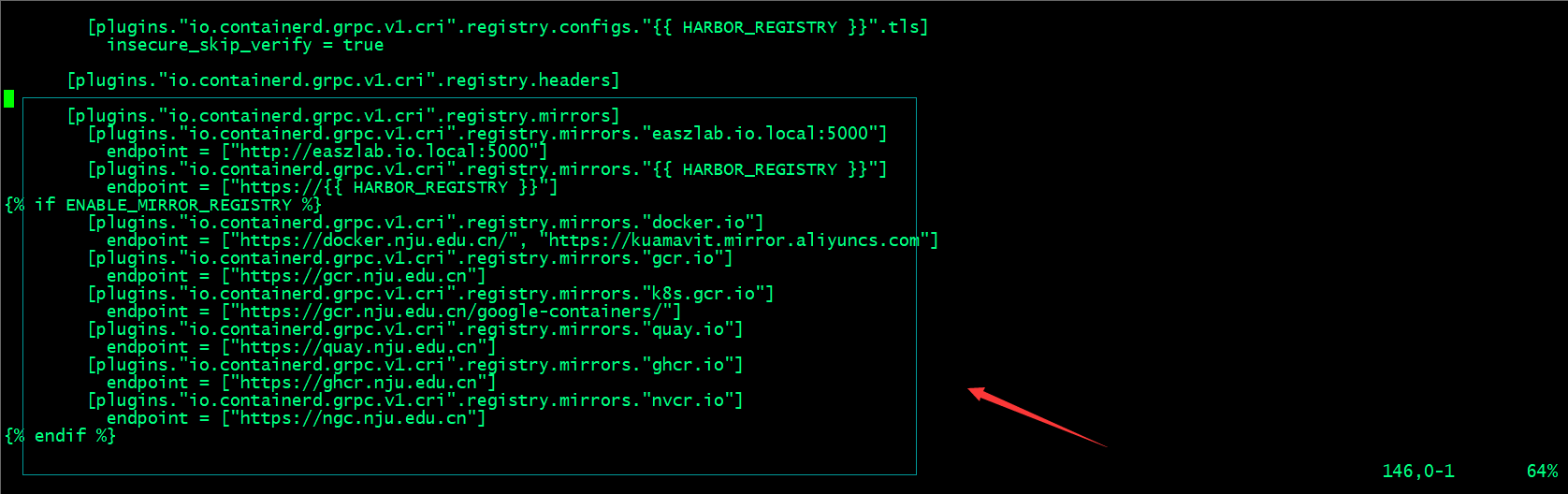

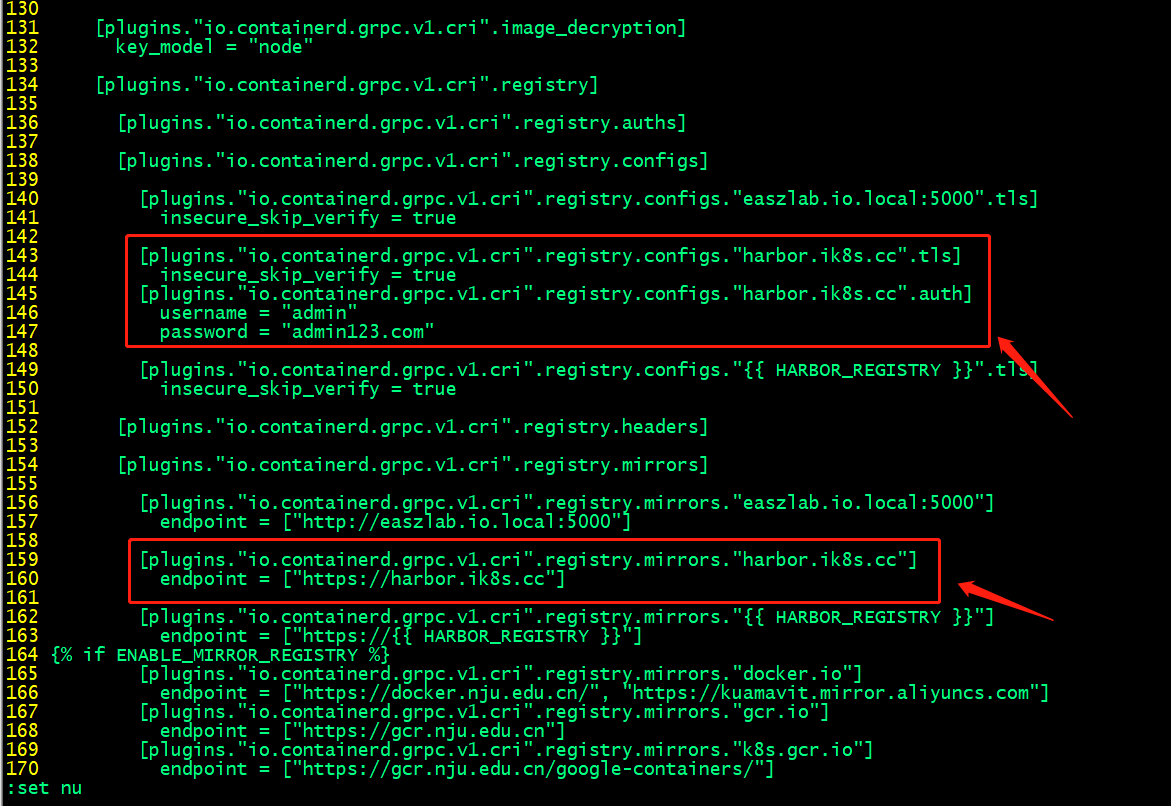

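Edit /etc/kubeasz/roles/containerd/templates/config.toml.j2 to customize the containerd config file template.

Note: on Ubuntu 22.04 this parameter must be changed to true; otherwise the pods in the cluster restart continuously. In general, keep it consistent with the kubelet setting.

Note: configure registry mirror addresses here to suit your own environment.

Configure pull credentials for a private HTTPS/HTTP registry

Note: if your registry is a private HTTPS/HTTP registry (not public, so pulling images requires a username and password), then with the configuration above containerd uses the username and password configured here when it pulls images from that registry.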
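The exact lines added to the template are not shown in the text, so the following is an assumption rather than a copy of the author's configuration: it prints a credentials section in the layout that containerd 1.6's CRI plugin accepts under `registry.configs`. The function name `registry_auth_toml` and the password value are placeholders.

```shell
# registry_auth_toml REGISTRY USER PASSWORD
# Prints a containerd (1.6-style CRI plugin) auth section for one registry.
# Assumed layout; compare against your own config.toml.j2 before using it.
registry_auth_toml() {
  registry=$1
  user=$2
  pass=$3
  cat <<EOF
[plugins."io.containerd.grpc.v1.cri".registry.configs."${registry}".auth]
  username = "${user}"
  password = "${pass}"
EOF
}
```

For instance, `registry_auth_toml harbor.ik8s.cc admin 'example-password'` prints a fragment that could be pasted into the registry section of the template; storing a real password there means it ends up in plain text on every node.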

Configure the nerdctl client

Note: edit /etc/kubeasz/roles/containerd/tasks/main.yml and add the nerdctl-related tasks.

Prepare the nerdctl binaries, dependencies, and config file on the deploy node

root@k8s-deploy:/etc/kubeasz/bin/containerd-bin# ll

total 184572

drwxr-xr-x 2 root root 4096 Jan 26 01:51 ./

drwxr-xr-x 3 root root 4096 Apr 22 13:17 ../

-rwxr-xr-x 1 root root 51529720 Dec 19 16:53 containerd*

-rwxr-xr-x 1 root root 7254016 Dec 19 16:53 containerd-shim*

-rwxr-xr-x 1 root root 9359360 Dec 19 16:53 containerd-shim-runc-v1*

-rwxr-xr-x 1 root root 9375744 Dec 19 16:53 containerd-shim-runc-v2*

-rwxr-xr-x 1 root root 22735256 Dec 19 16:53 containerd-stress*

-rwxr-xr-x 1 root root 52586151 Dec 14 07:20 crictl*

-rwxr-xr-x 1 root root 26712216 Dec 19 16:53 ctr*

-rwxr-xr-x 1 root root 9431456 Aug 25 2022 runc*

root@k8s-deploy:/etc/kubeasz/bin/containerd-bin# tar xf /root/nerdctl-1.3.0-linux-amd64.tar.gz -C .

root@k8s-deploy:/etc/kubeasz/bin/containerd-bin# ll

total 208940

drwxr-xr-x 2 root root 4096 Apr 22 14:00 ./

drwxr-xr-x 3 root root 4096 Apr 22 13:17 ../

-rwxr-xr-x 1 root root 51529720 Dec 19 16:53 containerd*

-rwxr-xr-x 1 root root 21622 Apr 5 12:21 containerd-rootless-setuptool.sh*

-rwxr-xr-x 1 root root 7032 Apr 5 12:21 containerd-rootless.sh*

-rwxr-xr-x 1 root root 7254016 Dec 19 16:53 containerd-shim*

-rwxr-xr-x 1 root root 9359360 Dec 19 16:53 containerd-shim-runc-v1*

-rwxr-xr-x 1 root root 9375744 Dec 19 16:53 containerd-shim-runc-v2*

-rwxr-xr-x 1 root root 22735256 Dec 19 16:53 containerd-stress*

-rwxr-xr-x 1 root root 52586151 Dec 14 07:20 crictl*

-rwxr-xr-x 1 root root 26712216 Dec 19 16:53 ctr*

-rwxr-xr-x 1 root root 24920064 Apr 5 12:22 nerdctl*

-rwxr-xr-x 1 root root 9431456 Aug 25 2022 runc*

root@k8s-deploy:/etc/kubeasz/bin/containerd-bin# cat /etc/kubeasz/roles/containerd/templates/nerdctl.toml.j2

namespace = "k8s.io"

debug = false

debug_full = false

insecure_registry = true

root@k8s-deploy:/etc/kubeasz/bin/containerd-bin#

Note: once the nerdctl files are ready, the containerd deployment task can be run.

Run the containerd deployment

root@deploy:/etc/kubeasz# ./ezctl setup k8s-cluster01 03

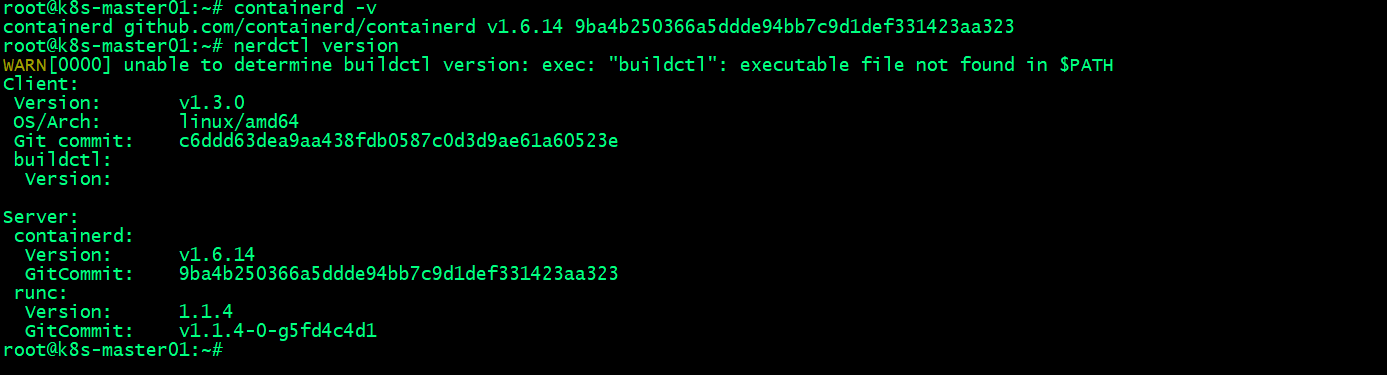

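Verify: on a node or a master, check the containerd version and the nerdctl version

Note: being able to see the containerd and nerdctl versions on a master or node shows that the containerd runtime has been deployed.

Test: pull an image with nerdctl on a master and check that the download works.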

root@k8s-master01:~# nerdctl pull ubuntu:22.04

WARN[0000] skipping verifying HTTPS certs for "docker.io"

docker.io/library/ubuntu:22.04: resolved |++++++++++++++++++++++++++++++++++++++|

index-sha256:67211c14fa74f070d27cc59d69a7fa9aeff8e28ea118ef3babc295a0428a6d21: done |++++++++++++++++++++++++++++++++++++++|

manifest-sha256:7a57c69fe1e9d5b97c5fe649849e79f2cfc3bf11d10bbd5218b4eb61716aebe6: done |++++++++++++++++++++++++++++++++++++++|

config-sha256:08d22c0ceb150ddeb2237c5fa3129c0183f3cc6f5eeb2e7aa4016da3ad02140a: done |++++++++++++++++++++++++++++++++++++++|

layer-sha256:2ab09b027e7f3a0c2e8bb1944ac46de38cebab7145f0bd6effebfe5492c818b6: done |++++++++++++++++++++++++++++++++++++++|

elapsed: 28.4s total: 28.2 M (1015.6 KiB/s)

root@k8s-master01:~# nerdctl images

REPOSITORY TAG IMAGE ID CREATED PLATFORM SIZE BLOB SIZE

ubuntu 22.04 67211c14fa74 6 seconds ago linux/amd64 83.4 MiB 28.2 MiB

<none> <none> 67211c14fa74 6 seconds ago linux/amd64 83.4 MiB 28.2 MiB

root@k8s-master01:~#

Test: log in to the Harbor registry from a master

root@k8s-master01:~# nerdctl login harbor.ik8s.cc

Enter Username: admin

Enter Password:

WARN[0005] skipping verifying HTTPS certs for "harbor.ik8s.cc"

WARNING: Your password will be stored unencrypted in /root/.docker/config.json.

Configure a credential helper to remove this warning. See

https://docs.docker.com/engine/reference/commandline/login/#credentials-store

Login Succeeded

root@k8s-master01:~#

Test: check that pushing an image from a master to Harbor works

root@k8s-master01:~# nerdctl pull ubuntu:22.04

WARN[0000] skipping verifying HTTPS certs for "docker.io"

docker.io/library/ubuntu:22.04: resolved |++++++++++++++++++++++++++++++++++++++|

index-sha256:67211c14fa74f070d27cc59d69a7fa9aeff8e28ea118ef3babc295a0428a6d21: done |++++++++++++++++++++++++++++++++++++++|

manifest-sha256:7a57c69fe1e9d5b97c5fe649849e79f2cfc3bf11d10bbd5218b4eb61716aebe6: done |++++++++++++++++++++++++++++++++++++++|

config-sha256:08d22c0ceb150ddeb2237c5fa3129c0183f3cc6f5eeb2e7aa4016da3ad02140a: done |++++++++++++++++++++++++++++++++++++++|

layer-sha256:2ab09b027e7f3a0c2e8bb1944ac46de38cebab7145f0bd6effebfe5492c818b6: done |++++++++++++++++++++++++++++++++++++++|

elapsed: 22.3s total: 28.2 M (1.3 MiB/s)

root@k8s-master01:~# nerdctl login harbor.ik8s.cc

WARN[0000] skipping verifying HTTPS certs for "harbor.ik8s.cc"

WARNING: Your password will be stored unencrypted in /root/.docker/config.json.

Configure a credential helper to remove this warning. See

https://docs.docker.com/engine/reference/commandline/login/#credentials-store

Login Succeeded

root@k8s-master01:~# nerdctl tag ubuntu:22.04 harbor.ik8s.cc/baseimages/ubuntu:22.04

root@k8s-master01:~# nerdctl push harbor.ik8s.cc/baseimages/ubuntu:22.04

INFO[0000] pushing as a reduced-platform image (application/vnd.oci.image.index.v1+json, sha256:730821bd93846fe61e18dd16cb476ef8d11489bab10a894e8acc7eb0405cc68e)

WARN[0000] skipping verifying HTTPS certs for "harbor.ik8s.cc"

index-sha256:730821bd93846fe61e18dd16cb476ef8d11489bab10a894e8acc7eb0405cc68e: done |++++++++++++++++++++++++++++++++++++++|

manifest-sha256:7a57c69fe1e9d5b97c5fe649849e79f2cfc3bf11d10bbd5218b4eb61716aebe6: done |++++++++++++++++++++++++++++++++++++++|

config-sha256:08d22c0ceb150ddeb2237c5fa3129c0183f3cc6f5eeb2e7aa4016da3ad02140a: done |++++++++++++++++++++++++++++++++++++++|

elapsed: 0.5 s total: 2.9 Ki (5.9 KiB/s)

root@k8s-master01:~#

Test: log in to Harbor from a worker node and pull the ubuntu:22.04 image that was just pushed. Does the pull succeed?

root@k8s-node01:~# nerdctl images
REPOSITORY    TAG    IMAGE ID    CREATED    PLATFORM    SIZE    BLOB SIZE
root@k8s-node01:~# nerdctl login harbor.ik8s.cc
WARN[0000] skipping verifying HTTPS certs for "harbor.ik8s.cc"
WARNING: Your password will be stored unencrypted in /root/.docker/config.json.
Configure a credential helper to remove this warning. See
https://docs.docker.com/engine/reference/commandline/login/#credentials-store
Login Succeeded
root@k8s-node01:~# nerdctl pull harbor.ik8s.cc/baseimages/ubuntu:22.04
WARN[0000] skipping verifying HTTPS certs for "harbor.ik8s.cc"
harbor.ik8s.cc/baseimages/ubuntu:22.04: resolved |++++++++++++++++++++++++++++++++++++++|
index-sha256:730821bd93846fe61e18dd16cb476ef8d11489bab10a894e8acc7eb0405cc68e: done |++++++++++++++++++++++++++++++++++++++|
manifest-sha256:7a57c69fe1e9d5b97c5fe649849e79f2cfc3bf11d10bbd5218b4eb61716aebe6: done |++++++++++++++++++++++++++++++++++++++|
config-sha256:08d22c0ceb150ddeb2237c5fa3129c0183f3cc6f5eeb2e7aa4016da3ad02140a: done |++++++++++++++++++++++++++++++++++++++|
layer-sha256:2ab09b027e7f3a0c2e8bb1944ac46de38cebab7145f0bd6effebfe5492c818b6: done |++++++++++++++++++++++++++++++++++++++|
elapsed: 4.7 s total: 28.2 M (6.0 MiB/s)
root@k8s-node01:~# nerdctl images
REPOSITORY                          TAG       IMAGE ID        CREATED          PLATFORM       SIZE        BLOB SIZE
harbor.ik8s.cc/baseimages/ubuntu    22.04     730821bd9384    5 seconds ago    linux/amd64    83.4 MiB    28.2 MiB
<none>                              <none>    730821bd9384    5 seconds ago    linux/amd64    83.4 MiB    28.2 MiB
root@k8s-node01:~#

Note: if nerdctl on the master and worker nodes can push images to Harbor and pull images from Harbor, the containerd runtime we deployed is working. Next, we can deploy the k8s master nodes.
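The manual check above (pull, tag, push on one host, then pull on another) can be wrapped in two small functions. This is only a sketch: `CTR_CLI`, `push_to_registry`, and `pull_from_registry` are hypothetical names introduced here, and it assumes `nerdctl login` has already succeeded on the host.

```shell
# Hypothetical override: which container CLI to call (defaults to nerdctl, as used above).
CTR_CLI="${CTR_CLI:-nerdctl}"

# push_to_registry SRC DST
# Pull SRC from its upstream registry, retag it as DST, and push DST.
# Stops at the first step that fails.
push_to_registry() {
  "$CTR_CLI" pull "$1" || return 1
  "$CTR_CLI" tag "$1" "$2" || return 1
  "$CTR_CLI" push "$2"
}

# pull_from_registry IMAGE
# Pull IMAGE back; run this on a different host to confirm it is really served by the registry.
pull_from_registry() {
  "$CTR_CLI" pull "$1"
}

# Example (not executed here):
#   push_to_registry ubuntu:22.04 harbor.ik8s.cc/baseimages/ubuntu:22.04   # on a master
#   pull_from_registry harbor.ik8s.cc/baseimages/ubuntu:22.04              # on a worker
```

Because each step short-circuits on failure, the function's exit status tells you whether the whole round trip worked.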

Deploying the k8s master nodes

root@deploy:/etc/kubeasz# cat roles/kube-master/tasks/main.yml

- name: Download kube_master binaries
  copy: src={{ base_dir }}/bin/{{ item }} dest={{ bin_dir }}/{{ item }} mode=0755
  with_items:
  - kube-apiserver
  - kube-controller-manager
  - kube-scheduler
  - kubectl
  tags: upgrade_k8s

- name: Distribute the controller/scheduler kubeconfig files
  copy: src={{ cluster_dir }}/{{ item }} dest=/etc/kubernetes/{{ item }}
  with_items:
  - kube-controller-manager.kubeconfig
  - kube-scheduler.kubeconfig
  tags: force_change_certs

- name: Create the kubernetes certificate signing request
  template: src=kubernetes-csr.json.j2 dest={{ cluster_dir }}/ssl/kubernetes-csr.json
  tags: change_cert, force_change_certs
  connection: local

- name: Create the kubernetes certificate and private key
  shell: "cd {{ cluster_dir }}/ssl && {{ base_dir }}/bin/cfssl gencert \
        -ca=ca.pem \
        -ca-key=ca-key.pem \
        -config=ca-config.json \
        -profile=kubernetes kubernetes-csr.json | {{ base_dir }}/bin/cfssljson -bare kubernetes"
  tags: change_cert, force_change_certs
  connection: local

# Create the aggregator proxy certificates
- name: Create the aggregator proxy certificate signing request
  template: src=aggregator-proxy-csr.json.j2 dest={{ cluster_dir }}/ssl/aggregator-proxy-csr.json
  connection: local
  tags: force_change_certs

- name: Create the aggregator-proxy certificate and private key
  shell: "cd {{ cluster_dir }}/ssl && {{ base_dir }}/bin/cfssl gencert \
        -ca=ca.pem \
        -ca-key=ca-key.pem \
        -config=ca-config.json \
        -profile=kubernetes aggregator-proxy-csr.json | {{ base_dir }}/bin/cfssljson -bare aggregator-proxy"
  connection: local
  tags: force_change_certs

- name: Distribute the kubernetes certificates
  copy: src={{ cluster_dir }}/ssl/{{ item }} dest={{ ca_dir }}/{{ item }}
  with_items:
  - ca.pem
  - ca-key.pem
  - kubernetes.pem
  - kubernetes-key.pem
  - aggregator-proxy.pem
  - aggregator-proxy-key.pem
  tags: change_cert, force_change_certs

- name: Replace the apiserver address in the kubeconfig files
  lineinfile:
    dest: "{{ item }}"
    regexp: "^    server"
    line: "    server: https://127.0.0.1:{{ SECURE_PORT }}"
  with_items:
  - "/etc/kubernetes/kube-controller-manager.kubeconfig"
  - "/etc/kubernetes/kube-scheduler.kubeconfig"
  tags: force_change_certs

- name: Create the systemd unit files for the master services
  template: src={{ item }}.j2 dest=/etc/systemd/system/{{ item }}
  with_items:
  - kube-apiserver.service
  - kube-controller-manager.service
  - kube-scheduler.service
  tags: restart_master, upgrade_k8s

- name: Enable the master services
  shell: systemctl enable kube-apiserver kube-controller-manager kube-scheduler
  ignore_errors: true

- name: Start the master services
  shell: "systemctl daemon-reload && systemctl restart kube-apiserver && \
        systemctl restart kube-controller-manager && systemctl restart kube-scheduler"
  tags: upgrade_k8s, restart_master, force_change_certs

# Poll until kube-apiserver has started
- name: Poll until kube-apiserver is up
  shell: "systemctl is-active kube-apiserver.service"
  register: api_status
  until: '"active" in api_status.stdout'
  retries: 10
  delay: 3
  tags: upgrade_k8s, restart_master, force_change_certs

# Poll until kube-controller-manager has started
- name: Poll until kube-controller-manager is up
  shell: "systemctl is-active kube-controller-manager.service"
  register: cm_status
  until: '"active" in cm_status.stdout'
  retries: 8
  delay: 3
  tags: upgrade_k8s, restart_master, force_change_certs

# Poll until kube-scheduler has started
- name: Poll until kube-scheduler is up
  shell: "systemctl is-active kube-scheduler.service"
  register: sch_status
  until: '"active" in sch_status.stdout'
  retries: 8
  delay: 3
  tags: upgrade_k8s, restart_master, force_change_certs

- block:
    - name: Copy kubectl.kubeconfig
      shell: 'cd {{ cluster_dir }} && cp -f kubectl.kubeconfig {{ K8S_NODENAME }}-kubectl.kubeconfig'
      tags: upgrade_k8s, restart_master, force_change_certs

    - name: Replace the apiserver address in the kubeconfig
      lineinfile:
        dest: "{{ cluster_dir }}/{{ K8S_NODENAME }}-kubectl.kubeconfig"
        regexp: "^    server"
        line: "    server: https://{{ inventory_hostname }}:{{ SECURE_PORT }}"
      tags: upgrade_k8s, restart_master, force_change_certs

    - name: Poll until the master services are up
      command: "{{ base_dir }}/bin/kubectl --kubeconfig={{ cluster_dir }}/{{ K8S_NODENAME }}-kubectl.kubeconfig get node"
      register: result
      until: result.rc == 0
      retries: 5
      delay: 6
      tags: upgrade_k8s, restart_master, force_change_certs

    - name: Check whether user:kubernetes is already bound to the role
      shell: "{{ base_dir }}/bin/kubectl get clusterrolebindings|grep kubernetes-crb || echo 'notfound'"
      register: crb_info
      run_once: true

    - name: Create the role binding for user:kubernetes
      command: "{{ base_dir }}/bin/kubectl create clusterrolebinding kubernetes-crb --clusterrole=system:kubelet-api-admin --user=kubernetes"
      run_once: true
      when: "'notfound' in crb_info.stdout"
  connection: local

root@deploy:/etc/kubeasz#
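The last two tasks in the file form a "check, then create only if missing" pair, which is what makes the role binding safe to re-run. A rough shell equivalent is sketched below. Note that it is not what kubeasz runs: it looks the binding up by name instead of grepping the full list, and `KUBECTL` and `ensure_clusterrolebinding` are hypothetical names introduced for illustration.

```shell
# Hypothetical override: path to the kubectl binary.
KUBECTL="${KUBECTL:-kubectl}"

# ensure_clusterrolebinding NAME CLUSTERROLE USER
# Create the ClusterRoleBinding only when a lookup by name fails.
# (Simplification: any lookup failure is treated as "not found".)
ensure_clusterrolebinding() {
  if "$KUBECTL" get clusterrolebinding "$1" >/dev/null 2>&1; then
    echo "exists: $1"
  else
    "$KUBECTL" create clusterrolebinding "$1" --clusterrole="$2" --user="$3"
  fi
}

# Example (not executed here), using the values from the task file:
#   ensure_clusterrolebinding kubernetes-crb system:kubelet-api-admin kubernetes
```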

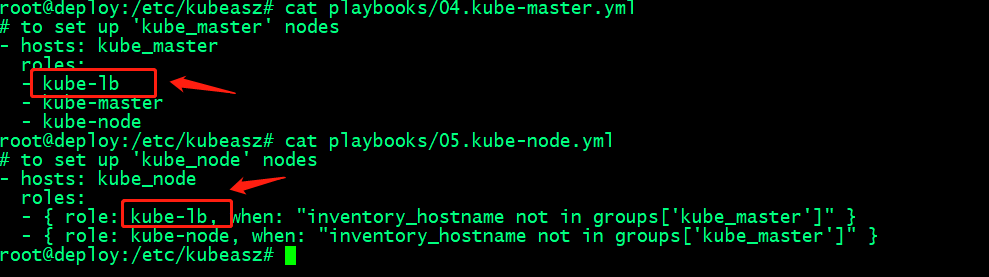

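Note: the kubeasz tasks above for deploying master nodes mainly copy the binaries that the masters need, distribute the configuration files, certificates, keys, and service unit files, and finally start the services. To customize the deployment, edit the task file above.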
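Several tasks in that file wait for a service by combining Ansible's `until`, `retries`, and `delay` keywords. When debugging by hand, the same polling pattern can be approximated in plain shell. This is a minimal sketch; `retry_until` is a hypothetical helper, and its first argument is the total number of attempts, which is not necessarily how Ansible counts `retries`.

```shell
# retry_until MAX_ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Run COMMAND until it exits 0, at most MAX_ATTEMPTS times,
# sleeping DELAY_SECONDS between attempts. Returns 1 if it never succeeds.
retry_until() {
  local attempts="$1" delay="$2" n=1
  shift 2
  while [ "$n" -le "$attempts" ]; do
    if "$@"; then
      return 0
    fi
    if [ "$n" -lt "$attempts" ]; then
      sleep "$delay"
    fi
    n=$((n + 1))
  done
  return 1
}

# Example (not executed here): wait up to 10 attempts, 3 seconds apart.
#   retry_until 10 3 systemctl is-active --quiet kube-apiserver.service
```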

Running the master deployment

root@deploy:/etc/kubeasz# ./ezctl setup k8s-cluster01 04

On the deploy node, verify that the masters are usable by checking whether node information can be retrieved.

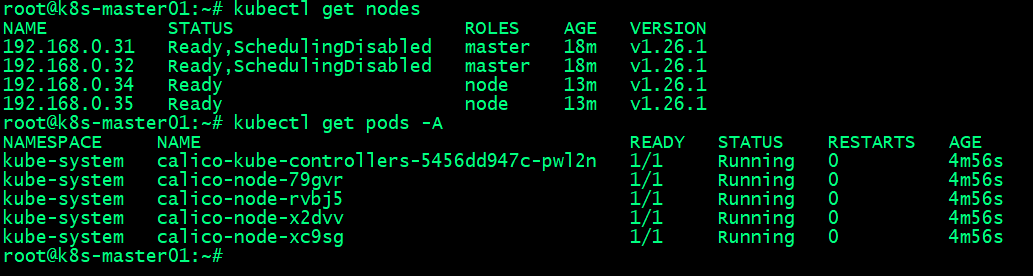

root@k8s-deploy:/etc/kubeasz# kubectl get nodes
NAME           STATUS                     ROLES    AGE    VERSION
192.168.0.31   Ready,SchedulingDisabled   master   2m6s   v1.26.1
192.168.0.32   Ready,SchedulingDisabled   master   2m6s   v1.26.1
root@k8s-deploy:/etc/kubeasz#

Note: being able to retrieve node information with kubectl means the masters were deployed successfully.
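To turn that visual check into something scriptable, the STATUS column can be filtered with awk. The sketch below assumes the default column layout of `kubectl get nodes` shown above; `not_ready_nodes` is a hypothetical helper name.

```shell
# not_ready_nodes
# Read `kubectl get nodes` output on stdin and print the NAME of every node
# whose STATUS is not "Ready" or "Ready,<something>" (the header row is skipped).
not_ready_nodes() {
  awk 'NR > 1 && $2 !~ /^Ready(,|$)/ { print $1 }'
}

# Example (not executed here):
#   kubectl get nodes | not_ready_nodes
```

Empty output means every listed node reports Ready, including masters that are marked `Ready,SchedulingDisabled`.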

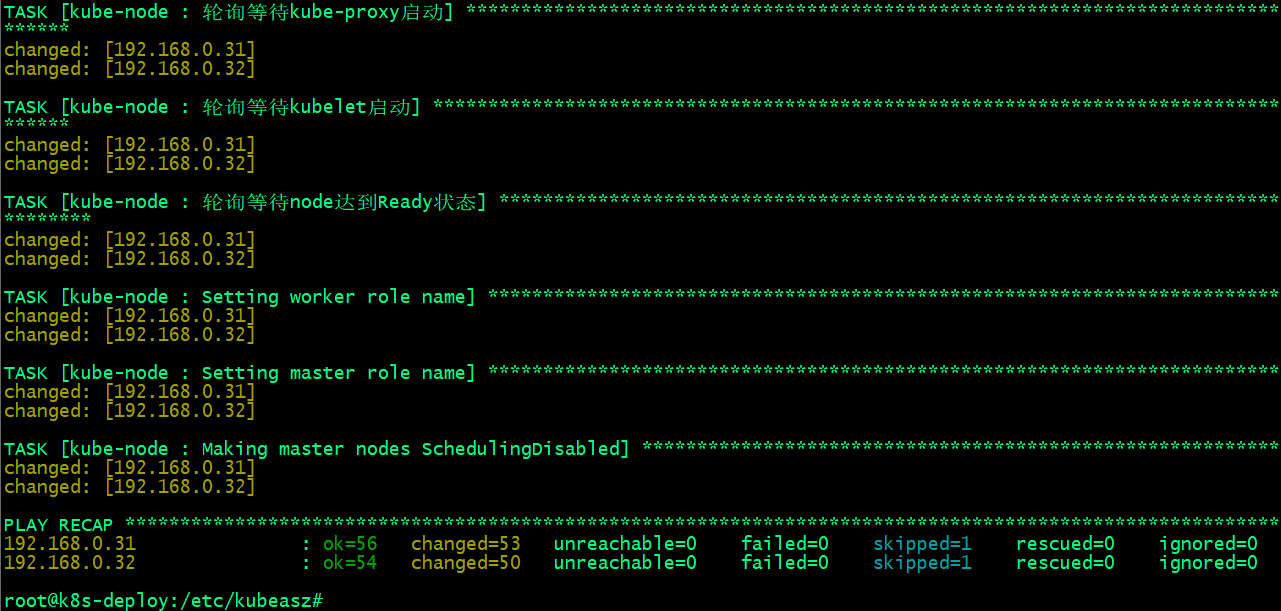

Deploying the k8s worker nodes

root@deploy:/etc/kubeasz# cat roles/kube-node/tasks/main.yml

- name: Create the kube_node directories
  file: name={{ item }} state=directory
  with_items:
  - /var/lib/kubelet
  - /var/lib/kube-proxy

- name: Download the kubelet and kube-proxy binaries and the base cni plugins
  copy: src={{ base_dir }}/bin/{{ item }} dest={{ bin_dir }}/{{ item }} mode=0755
  with_items:
  - kubectl
  - kubelet
  - kube-proxy
  - bridge
  - host-local
  - loopback
  tags: upgrade_k8s

- name: Add kubectl shell completion
  lineinfile:
    dest: ~/.bashrc
    state: present
    regexp: 'kubectl completion'
    line: 'source <(kubectl completion bash) # generated by kubeasz'

##----------kubelet configuration--------------
# Create the kubelet certificates and kubelet.kubeconfig
- import_tasks: create-kubelet-kubeconfig.yml
  tags: force_change_certs

- name: Prepare the cni configuration file
  template: src=cni-default.conf.j2 dest=/etc/cni/net.d/10-default.conf

- name: Create the kubelet configuration file
  template: src=kubelet-config.yaml.j2 dest=/var/lib/kubelet/config.yaml
  tags: upgrade_k8s, restart_node

- name: Create the kubelet systemd unit file
  template: src=kubelet.service.j2 dest=/etc/systemd/system/kubelet.service
  tags: upgrade_k8s, restart_node

- name: Enable the kubelet service at boot
  shell: systemctl enable kubelet
  ignore_errors: true

- name: Start the kubelet service
  shell: systemctl daemon-reload && systemctl restart kubelet
  tags: upgrade_k8s, restart_node, force_change_certs

##-------kube-proxy configuration----------------
- name: Distribute the kube-proxy.kubeconfig file
  copy: src={{ cluster_dir }}/kube-proxy.kubeconfig dest=/etc/kubernetes/kube-proxy.kubeconfig
  tags: force_change_certs

- name: Replace the apiserver address in kube-proxy.kubeconfig
  lineinfile:
    dest: /etc/kubernetes/kube-proxy.kubeconfig
    regexp: "^    server"
    line: "    server: {{ KUBE_APISERVER }}"
  tags: force_change_certs

- name: Create the kube-proxy configuration
  template: src=kube-proxy-config.yaml.j2 dest=/var/lib/kube-proxy/kube-proxy-config.yaml
  tags: reload-kube-proxy, restart_node, upgrade_k8s

- name: Create the kube-proxy service file
  template: src=kube-proxy.service.j2 dest=/etc/systemd/system/kube-proxy.service
  tags: reload-kube-proxy, restart_node, upgrade_k8s

- name: Enable the kube-proxy service at boot
  shell: systemctl enable kube-proxy
  ignore_errors: true

- name: Start the kube-proxy service
  shell: systemctl daemon-reload && systemctl restart kube-proxy
  tags: reload-kube-proxy, upgrade_k8s, restart_node, force_change_certs

# Add name resolution for k8s_nodename to /etc/hosts
- name: Add name resolution for k8s_nodename to /etc/hosts
  lineinfile:
    dest: /etc/hosts
    state: present
    regexp: "{{ K8S_NODENAME }}"
    line: "{{ inventory_hostname }} {{ K8S_NODENAME }}"
  delegate_to: "{{ item }}"
  with_items: "{{ groups.kube_master }}"
  when: "inventory_hostname != K8S_NODENAME"

# Poll until kube-proxy has started
- name: Poll until kube-proxy is up
  shell: "systemctl is-active kube-proxy.service"
  register: kubeproxy_status
  until: '"active" in kubeproxy_status.stdout'
  retries: 4
  delay: 2
  tags: reload-kube-proxy, upgrade_k8s, restart_node, force_change_certs

# Poll until kubelet has started
- name: Poll until kubelet is up
  shell: "systemctl is-active kubelet.service"
  register: kubelet_status
  until: '"active" in kubelet_status.stdout'
  retries: 4
  delay: 2
  tags: reload-kube-proxy, upgrade_k8s, restart_node, force_change_certs

- name: Poll until the node reaches the Ready state
  shell: "{{ base_dir }}/bin/kubectl get node {{ K8S_NODENAME }}|awk 'NR>1{print $2}'"
  register: node_status
  until: node_status.stdout == "Ready" or node_status.stdout == "Ready,SchedulingDisabled"
  retries: 8
  delay: 8
  tags: upgrade_k8s, restart_node, force_change_certs
  connection: local

- block:

- name: Setting worker role name

shell: "{{ base_dir }}/bin/kubectl label node {{ K8S_NODENAME }} kubernetes.io/role=node --overwrite"

- name: Setting master role name

shell: "{{ base_dir }}/bin/kubectl label node {{ K8S_NODENAME }} kubernetes.io/role=master --overwrite"

when: "inventory_hostname in groups['kube_master']"

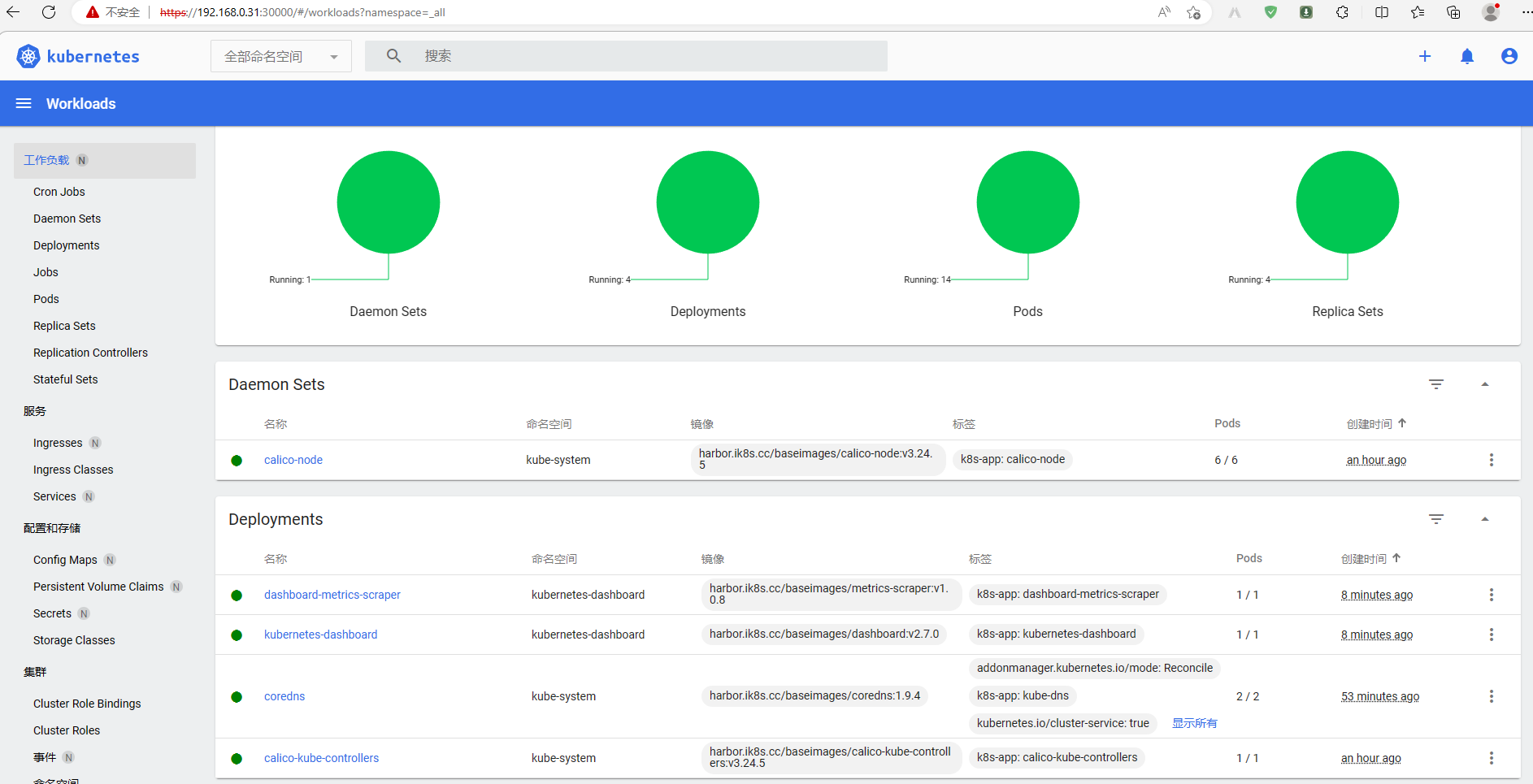

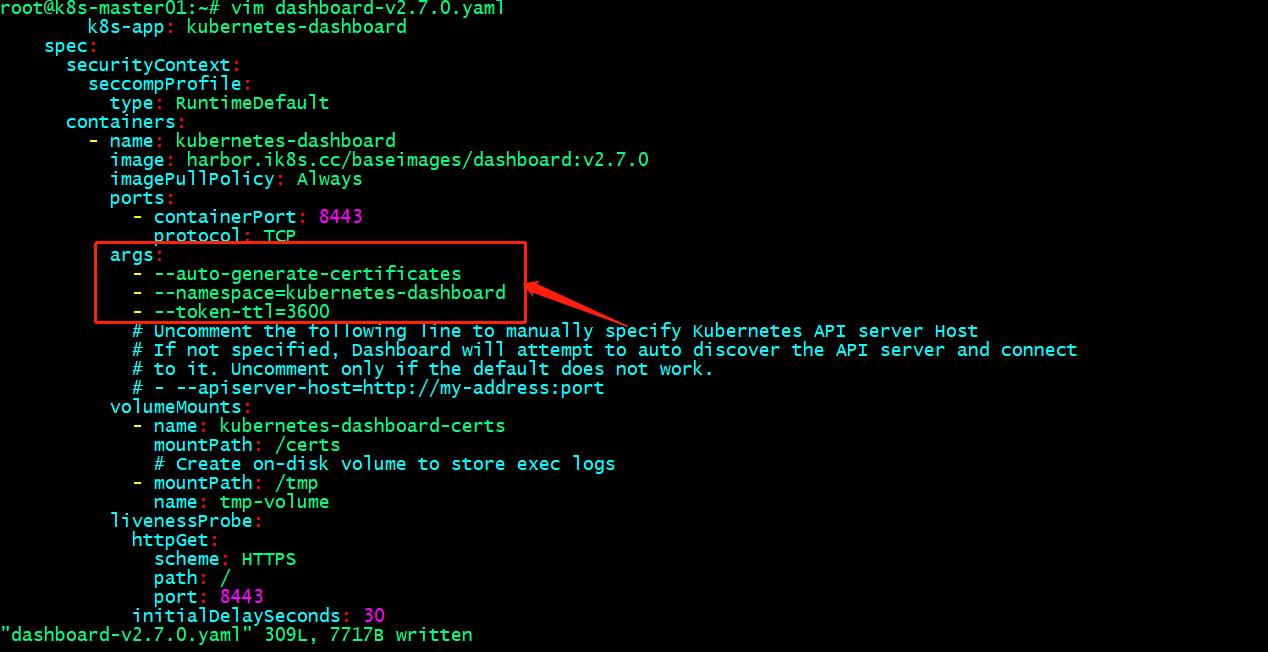

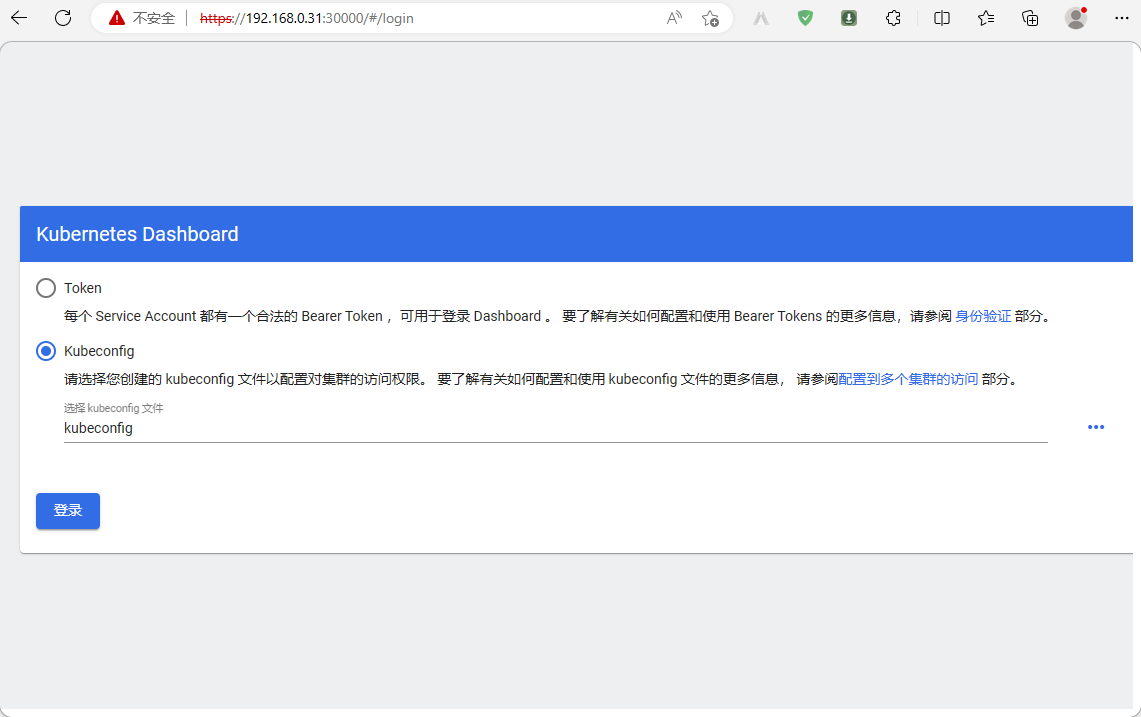

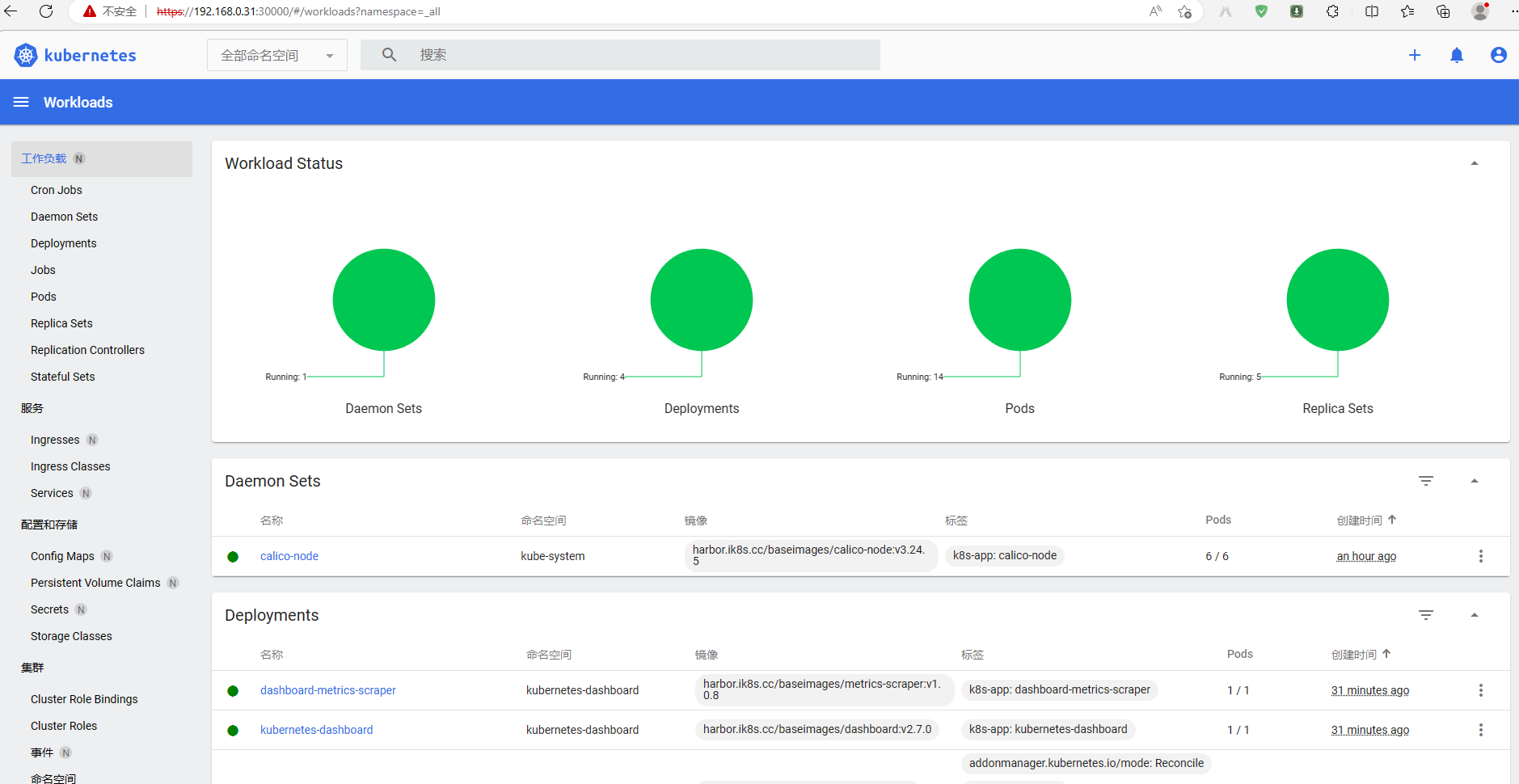

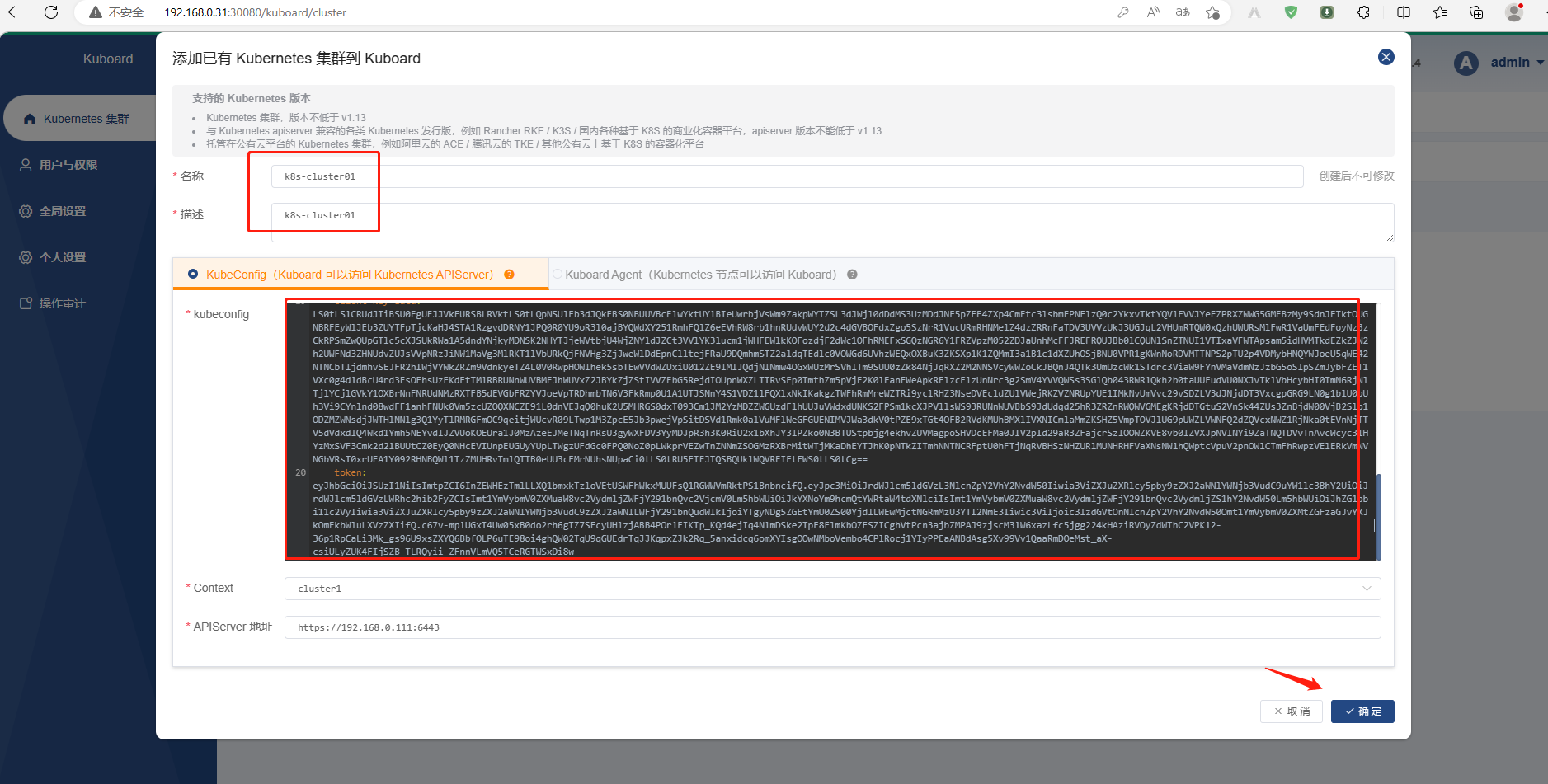

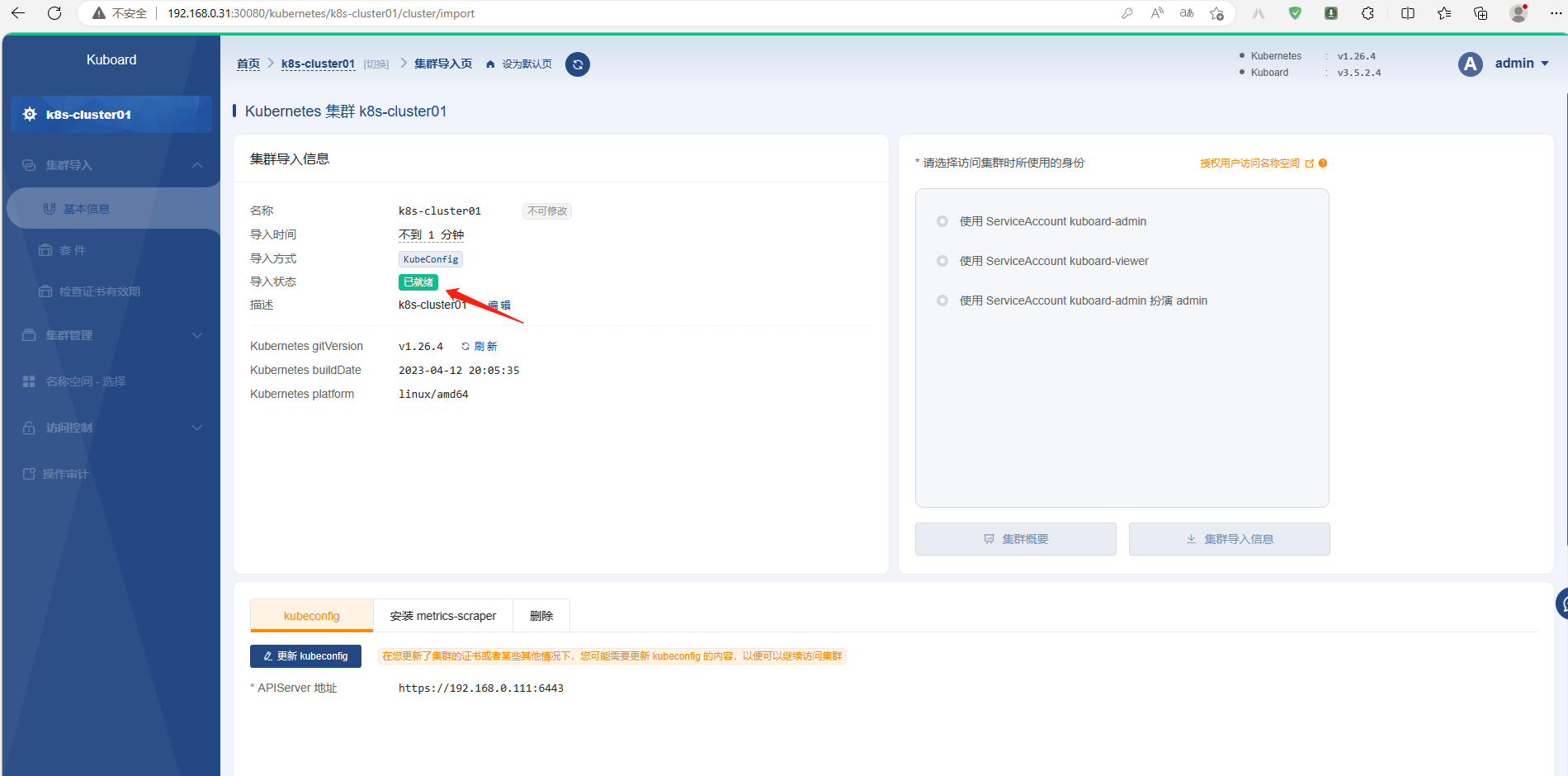

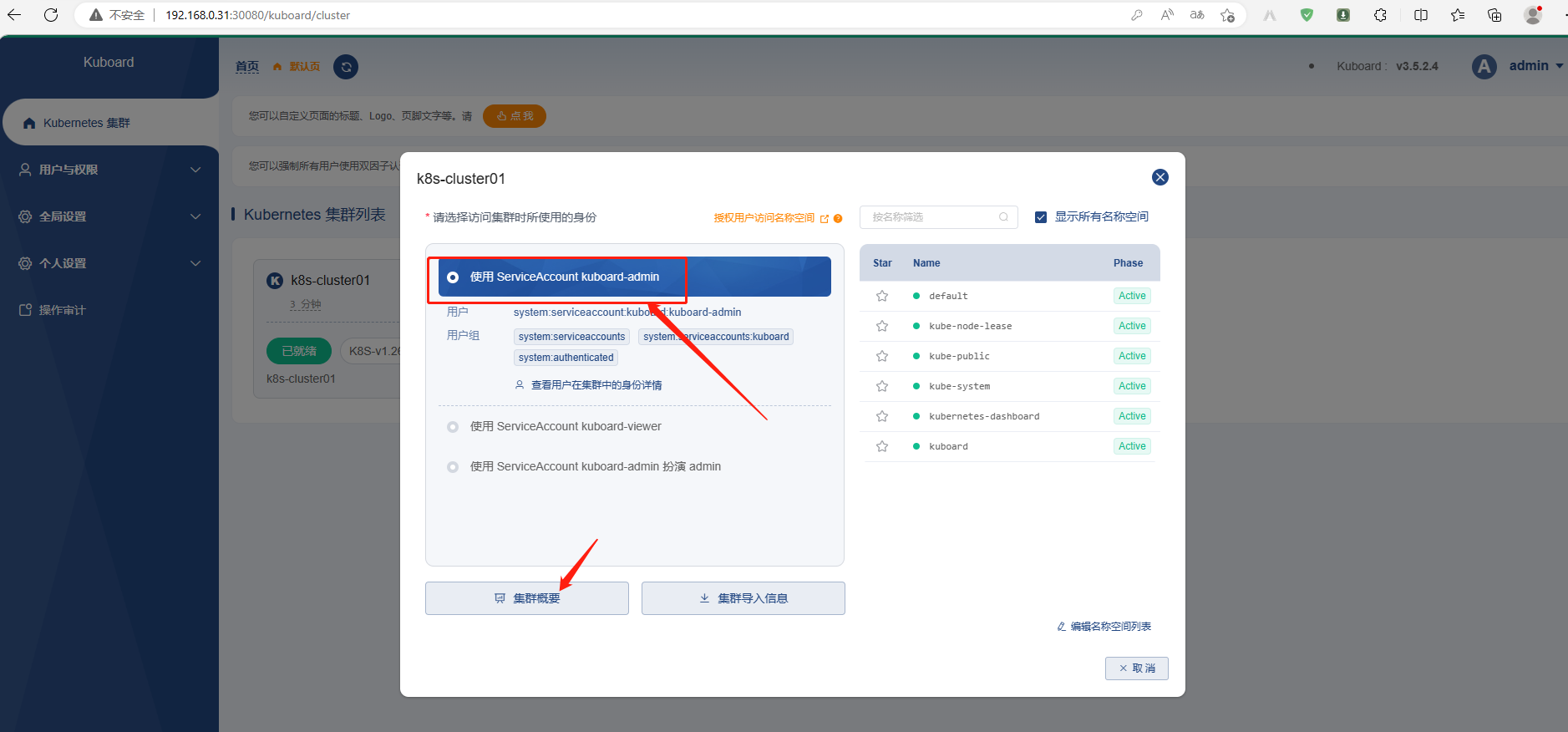

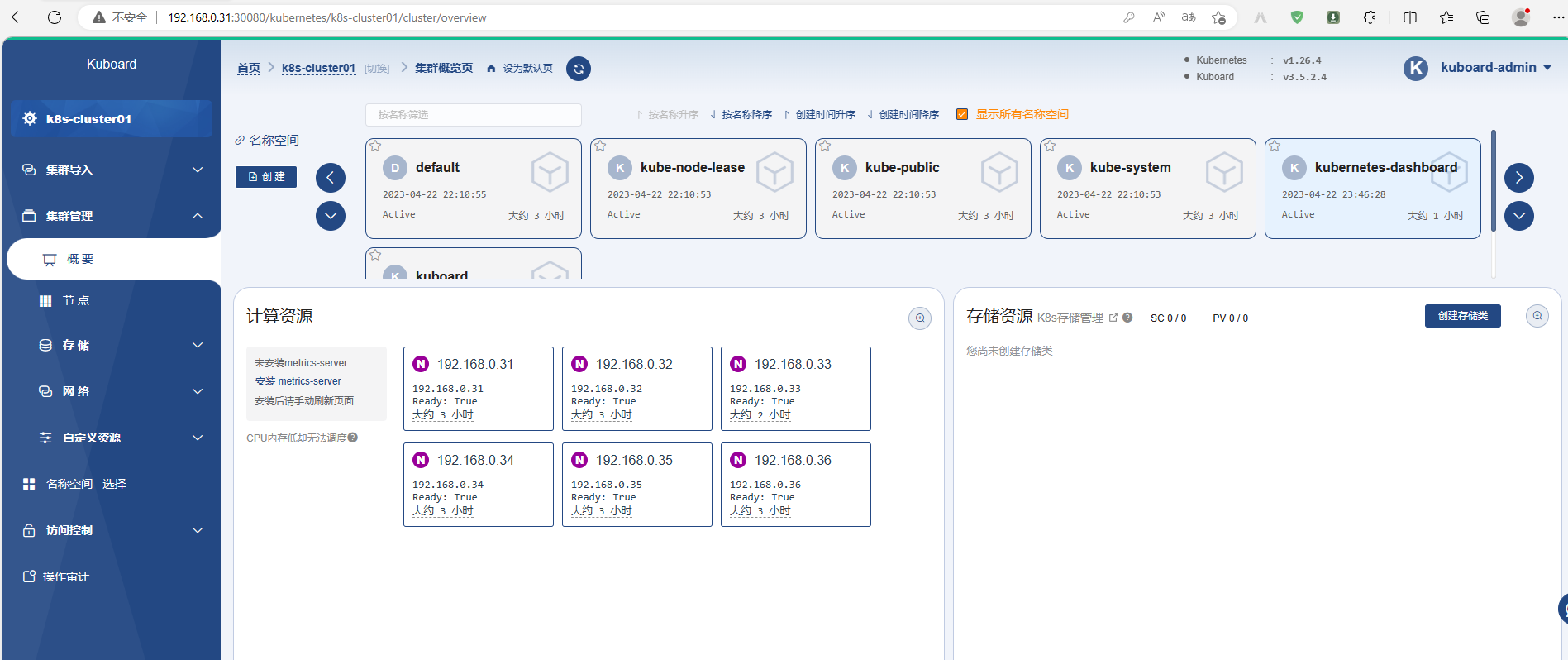

- name: Making master nodes SchedulingDisabled