分布式存储系统之Ceph集群MDS扩展

前文我们了解了cephfs使用相关话题,回顾请参考https://www.cnblogs.com/qiuhom-1874/p/16758866.html;今天我们来聊一聊MDS组件扩展相关话题;

我们知道MDS是为了实现cephfs而运行的进程,主要负责管理文件系统元数据信息;这意味着客户端使用cephfs存取数据,都会先联系mds找元数据;然后mds再去元数据存储池读取数据,然后返回给客户端;即元素存储池只能由mds操作;换句话说,mds是访问cephfs的唯一入口;那么问题来了,如果ceph集群上只有一个mds进程,很多个客户端来访问cephfs,那么mds肯定会成为瓶颈,所以为了提高cephfs的性能,我们必须提供多个mds供客户端使用;那mds该怎么扩展呢?前边我们说过,mds是管理文件系统元素信息,将元素信息存储池至rados集群的指定存储池中,使得mds从有状态变为无状态;那么对于mds来说,扩展mds就是多运行几个进程而已;但是由于文件系统元数据的工作特性,我们不能像扩展其他无状态应用那样扩展;比如,在ceph集群上有两个mds,他们同时操作一个存储池中的一个文件,那么最后合并时发现,一个删除文件,一个修改了文件,合并文件系统崩溃了;即两个mds同时操作存储池的同一个文件那么对应mds需要同步和数据一致,这和副本有什么区别呢?对于客户端读请求可以由多个mds分散负载,对于客户端的写请求呢,向a写入,b该怎么办呢?b只能从a这边同步,或者a向b写入,这样一来对于客户端的写请求并不能分散负载,即当客户端增多,瓶颈依然存在;

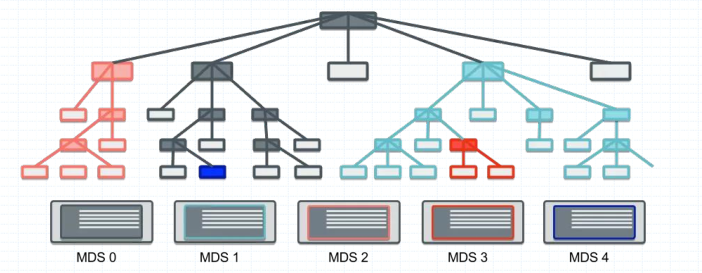

为了解决分散负载文件系统的读写请求,分布式文件系统业界提供了将名称空间分割治理的解决方案,通过将文件系统根树及其热点子树分别部署于不同的元数据服务器进行负载均衡,从而赋予了元数据存储线性扩展的可能;简单讲就是一个mds只复制一个子目录的元数据信息;

元数据分区

提示:如上所示,我们将一个文件系统可以分成多颗子树,一个mds只复制其中一颗子树,从而实现元数据信息的读写分散负载;

常用的元数据分区方式

1、静态子树分区:所谓静态子树分区,就是管理员手动指定某颗指数,由某个元数据服务器负责;如,我们将nfs挂载之一个目录下,这种方式就是静态子树分区,通过将一个子目录关联到另外一个分区上去,从而实现减轻当前文件系统的负载;

2、静态hash分区:所谓静态hash分区是指,有多个目录,对应文件存储到那个目录下,不是管理员指定而是通过对文件名做一致性hash或者hash再取模等等,最终落到那个目录就存储到那个目录;从而减轻对应子目录在当前文件系统的负载;

3、惰性混编分区:所谓惰性混编分区是指将静态hash方式和传统文件系统的方式结合使用;

4、动态子树分区:所谓动态子树分区就是根据文件系统的负载能力动态调整对应子树;cephfs就是使用这种方式实现多活mds;在ceph上多主MDS模式是指CephFS将整个文件系统的名称空间切分为多个子树并配置到多个MDS之上,不过,读写操作的负载均衡策略分别是子树切分和目录副本;将写操作负载较重的目录切分成多个子目录以分散负载;为读操作负载较重的目录创建多个副本以均衡负载;子树分区和迁移的决策是一个同步过程,各MDS每10秒钟做一次独立的迁移决策,每个MDS并不存在一个一致的名称空间视图,且MDS集群也不存在一个全局调度器负责统一的调度决策;各MDS彼此间通过交换心跳信息(HeartBeat,简称HB)及负载状态来确定是否要进行迁移、如何分区名称空间,以及是否需要目录切分为子树等;管理员也可以配置CephFS负载的计算方式从而影响MDS的负载决策,目前,CephFS支持基于CPU负载、文件系统负载及混合此两种的决策机制;

动态子树分区依赖于共享存储完成热点负载在MDS间的迁移,于是Ceph把MDS的元数据存储于后面的RADOS集群上的专用存储池中,此存储池可由多个MDS共享;MDS对元数据的访问并不直接基于RADOS进行,而是为其提供了一个基于内存的缓存区以缓存热点元数据,并且在元数据相关日志条目过期之前将一直存储于内存中;

CephFS使用元数据日志来解决容错问题

元数据日志信息流式存储于CephFS元数据存储池中的元数据日志文件上,类似于LFS(Log-Structured File System)和WAFL( Write Anywhere File Layout)的工作机制, CephFS元数据日志文件的体积可以无限增长以确保日志信息能顺序写入RADOS,并额外赋予守护进程修剪冗余或不相关日志条目的能力;

Multi MDS

每个CephFS都会有一个易读的文件系统名称和一个称为FSCID标识符ID,并且每个CephFS默认情况下都只配置一个Active MDS守护进程;一个MDS集群中可处于Active状态的MDS数量的上限由max_mds参数配置,它控制着可用的rank数量,默认值为1; rank是指CephFS上可同时处于Active状态的MDS守护进程的可用编号,其范围从0到max_mds-1;一个rank编号意味着一个可承载CephFS层级文件系统目录子树 目录子树元数据管理功能的Active状态的ceph-mds守护进程编制,max_mds的值为1时意味着仅有一个0号rank可用; 刚启动的ceph-mds守护进程没有接管任何rank,它随后由MON按需进行分配;一个ceph-mds一次仅可占据一个rank,并且在守护进程终止时将其释放;即rank分配出去以后具有排它性;一个rank可以处于下列三种状态中的某一种,Up:rank已经由某个ceph-mds守护进程接管; Failed:rank未被任何ceph-mds守护进程接管; Damaged:rank处于损坏状态,其元数据处于崩溃或丢失状态;在管理员修复问题并对其运行“ceph mds repaired”命令之前,处于Damaged状态的rank不能分配给其它任何MDS守护进程;

查看ceph集群mds状态

[root@ceph-admin ~]# ceph mds stat

cephfs-1/1/1 up {0=ceph-mon02=up:active}

[root@ceph-admin ~]#

提示:可以看到当前集群有一个mds运行在ceph-mon02节点并处于up活动状态;

部署多个mds

[root@ceph-admin ~]# ceph-deploy mds create ceph-mon01 ceph-mon03

[ceph_deploy.conf][DEBUG ] found configuration file at: /root/.cephdeploy.conf

[ceph_deploy.cli][INFO ] Invoked (2.0.1): /usr/bin/ceph-deploy mds create ceph-mon01 ceph-mon03

[ceph_deploy.cli][INFO ] ceph-deploy options:

[ceph_deploy.cli][INFO ] username : None

[ceph_deploy.cli][INFO ] verbose : False

[ceph_deploy.cli][INFO ] overwrite_conf : False

[ceph_deploy.cli][INFO ] subcommand : create

[ceph_deploy.cli][INFO ] quiet : False

[ceph_deploy.cli][INFO ] cd_conf : <ceph_deploy.conf.cephdeploy.Conf instance at 0x7f9478f34830>

[ceph_deploy.cli][INFO ] cluster : ceph

[ceph_deploy.cli][INFO ] func : <function mds at 0x7f947918d050>

[ceph_deploy.cli][INFO ] ceph_conf : None

[ceph_deploy.cli][INFO ] mds : [('ceph-mon01', 'ceph-mon01'), ('ceph-mon03', 'ceph-mon03')]

[ceph_deploy.cli][INFO ] default_release : False

[ceph_deploy][ERROR ] ConfigError: Cannot load config: [Errno 2] No such file or directory: 'ceph.conf'; has `ceph-deploy new` been run in this directory?

[root@ceph-admin ~]# su - cephadm

Last login: Thu Sep 29 23:09:04 CST 2022 on pts/0

[cephadm@ceph-admin ~]$ ls

cephadm@ceph-mgr01 cephadm@ceph-mgr02 cephadm@ceph-mon01 cephadm@ceph-mon02 cephadm@ceph-mon03 ceph-cluster

[cephadm@ceph-admin ~]$ cd ceph-cluster/

[cephadm@ceph-admin ceph-cluster]$ ls

ceph.bootstrap-mds.keyring ceph.bootstrap-osd.keyring ceph.client.admin.keyring ceph-deploy-ceph.log

ceph.bootstrap-mgr.keyring ceph.bootstrap-rgw.keyring ceph.conf ceph.mon.keyring

[cephadm@ceph-admin ceph-cluster]$ ceph-deploy mds create ceph-mon01 ceph-mon03

[ceph_deploy.conf][DEBUG ] found configuration file at: /home/cephadm/.cephdeploy.conf

[ceph_deploy.cli][INFO ] Invoked (2.0.1): /bin/ceph-deploy mds create ceph-mon01 ceph-mon03

[ceph_deploy.cli][INFO ] ceph-deploy options:

[ceph_deploy.cli][INFO ] username : None

[ceph_deploy.cli][INFO ] verbose : False

[ceph_deploy.cli][INFO ] overwrite_conf : False

[ceph_deploy.cli][INFO ] subcommand : create

[ceph_deploy.cli][INFO ] quiet : False

[ceph_deploy.cli][INFO ] cd_conf : <ceph_deploy.conf.cephdeploy.Conf instance at 0x7f2c575ba7e8>

[ceph_deploy.cli][INFO ] cluster : ceph

[ceph_deploy.cli][INFO ] func : <function mds at 0x7f2c57813050>

[ceph_deploy.cli][INFO ] ceph_conf : None

[ceph_deploy.cli][INFO ] mds : [('ceph-mon01', 'ceph-mon01'), ('ceph-mon03', 'ceph-mon03')]

[ceph_deploy.cli][INFO ] default_release : False

[ceph_deploy.mds][DEBUG ] Deploying mds, cluster ceph hosts ceph-mon01:ceph-mon01 ceph-mon03:ceph-mon03

[ceph-mon01][DEBUG ] connection detected need for sudo

[ceph-mon01][DEBUG ] connected to host: ceph-mon01

[ceph-mon01][DEBUG ] detect platform information from remote host

[ceph-mon01][DEBUG ] detect machine type

[ceph_deploy.mds][INFO ] Distro info: CentOS Linux 7.9.2009 Core

[ceph_deploy.mds][DEBUG ] remote host will use systemd

[ceph_deploy.mds][DEBUG ] deploying mds bootstrap to ceph-mon01

[ceph-mon01][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[ceph_deploy.mds][ERROR ] RuntimeError: config file /etc/ceph/ceph.conf exists with different content; use --overwrite-conf to overwrite

[ceph-mon03][DEBUG ] connection detected need for sudo

[ceph-mon03][DEBUG ] connected to host: ceph-mon03

[ceph-mon03][DEBUG ] detect platform information from remote host

[ceph-mon03][DEBUG ] detect machine type

[ceph_deploy.mds][INFO ] Distro info: CentOS Linux 7.9.2009 Core

[ceph_deploy.mds][DEBUG ] remote host will use systemd

[ceph_deploy.mds][DEBUG ] deploying mds bootstrap to ceph-mon03

[ceph-mon03][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[ceph-mon03][WARNIN] mds keyring does not exist yet, creating one

[ceph-mon03][DEBUG ] create a keyring file

[ceph-mon03][DEBUG ] create path if it doesn't exist

[ceph-mon03][INFO ] Running command: sudo ceph --cluster ceph --name client.bootstrap-mds --keyring /var/lib/ceph/bootstrap-mds/ceph.keyring auth get-or-create mds.ceph-mon03 osd allow rwx mds allow mon allow profile mds -o /var/lib/ceph/mds/ceph-ceph-mon03/keyring

[ceph-mon03][INFO ] Running command: sudo systemctl enable ceph-mds@ceph-mon03

[ceph-mon03][WARNIN] Created symlink from /etc/systemd/system/ceph-mds.target.wants/ceph-mds@ceph-mon03.service to /usr/lib/systemd/system/ceph-mds@.service.

[ceph-mon03][INFO ] Running command: sudo systemctl start ceph-mds@ceph-mon03

[ceph-mon03][INFO ] Running command: sudo systemctl enable ceph.target

[ceph_deploy][ERROR ] GenericError: Failed to create 1 MDSs

[cephadm@ceph-admin ceph-cluster]$

提示:这里出了两个错误,第一个错误是没有找到ceph.conf文件,解决办法就是切换至cephadm用户执行ceph-deploy mds create命令;第二个错误是告诉我们说远程主机上的配置文件和我们本地配置文件不一样;解决办法,可以先推送配置文件到集群各主机之上或者从集群主机拉取配置文件到本地然后在分发配置文件,然后在部署mds;

查看本地配置文件和远程集群主机配置文件

[cephadm@ceph-admin ceph-cluster]$ cat /etc/ceph/ceph.conf [global] fsid = 7fd4a619-9767-4b46-9cee-78b9dfe88f34 mon_initial_members = ceph-mon01 mon_host = 192.168.0.71 public_network = 192.168.0.0/24 cluster_network = 172.16.30.0/24 auth_cluster_required = cephx auth_service_required = cephx auth_client_required = cephx [cephadm@ceph-admin ceph-cluster]$ ssh ceph-mon01 'cat /etc/ceph/ceph.conf' [global] fsid = 7fd4a619-9767-4b46-9cee-78b9dfe88f34 mon_initial_members = ceph-mon01 mon_host = 192.168.0.71 public_network = 192.168.0.0/24 cluster_network = 172.16.30.0/24 auth_cluster_required = cephx auth_service_required = cephx auth_client_required = cephx [client] rgw_frontends = "civetweb port=8080" [cephadm@ceph-admin ceph-cluster]$

提示:可以看到ceph-mon01节点上的配置文件中多了一个client的配置段;

从ceph-mon01拉去配置文件到本地

[cephadm@ceph-admin ceph-cluster]$ ceph-deploy config pull ceph-mon01 [ceph_deploy.conf][DEBUG ] found configuration file at: /home/cephadm/.cephdeploy.conf [ceph_deploy.cli][INFO ] Invoked (2.0.1): /bin/ceph-deploy config pull ceph-mon01 [ceph_deploy.cli][INFO ] ceph-deploy options: [ceph_deploy.cli][INFO ] username : None [ceph_deploy.cli][INFO ] verbose : False [ceph_deploy.cli][INFO ] overwrite_conf : False [ceph_deploy.cli][INFO ] subcommand : pull [ceph_deploy.cli][INFO ] quiet : False [ceph_deploy.cli][INFO ] cd_conf : <ceph_deploy.conf.cephdeploy.Conf instance at 0x7f966fb478c0> [ceph_deploy.cli][INFO ] cluster : ceph [ceph_deploy.cli][INFO ] client : ['ceph-mon01'] [ceph_deploy.cli][INFO ] func : <function config at 0x7f966fd76cf8> [ceph_deploy.cli][INFO ] ceph_conf : None [ceph_deploy.cli][INFO ] default_release : False [ceph_deploy.config][DEBUG ] Checking ceph-mon01 for /etc/ceph/ceph.conf [ceph-mon01][DEBUG ] connection detected need for sudo [ceph-mon01][DEBUG ] connected to host: ceph-mon01 [ceph-mon01][DEBUG ] detect platform information from remote host [ceph-mon01][DEBUG ] detect machine type [ceph-mon01][DEBUG ] fetch remote file [ceph_deploy.config][DEBUG ] Got /etc/ceph/ceph.conf from ceph-mon01 [ceph_deploy.config][ERROR ] local config file ceph.conf exists with different content; use --overwrite-conf to overwrite [ceph_deploy.config][ERROR ] Unable to pull /etc/ceph/ceph.conf from ceph-mon01 [ceph_deploy][ERROR ] GenericError: Failed to fetch config from 1 hosts [cephadm@ceph-admin ceph-cluster]$ ceph-deploy --overwrite-conf config pull ceph-mon01 [ceph_deploy.conf][DEBUG ] found configuration file at: /home/cephadm/.cephdeploy.conf [ceph_deploy.cli][INFO ] Invoked (2.0.1): /bin/ceph-deploy --overwrite-conf config pull ceph-mon01 [ceph_deploy.cli][INFO ] ceph-deploy options: [ceph_deploy.cli][INFO ] username : None [ceph_deploy.cli][INFO ] verbose : False [ceph_deploy.cli][INFO ] overwrite_conf : True [ceph_deploy.cli][INFO ] subcommand : pull [ceph_deploy.cli][INFO ] quiet : False [ceph_deploy.cli][INFO ] cd_conf : <ceph_deploy.conf.cephdeploy.Conf instance at 0x7fa2f65438c0> [ceph_deploy.cli][INFO ] cluster : ceph [ceph_deploy.cli][INFO ] client : ['ceph-mon01'] [ceph_deploy.cli][INFO ] func : <function config at 0x7fa2f6772cf8> [ceph_deploy.cli][INFO ] ceph_conf : None [ceph_deploy.cli][INFO ] default_release : False [ceph_deploy.config][DEBUG ] Checking ceph-mon01 for /etc/ceph/ceph.conf [ceph-mon01][DEBUG ] connection detected need for sudo [ceph-mon01][DEBUG ] connected to host: ceph-mon01 [ceph-mon01][DEBUG ] detect platform information from remote host [ceph-mon01][DEBUG ] detect machine type [ceph-mon01][DEBUG ] fetch remote file [ceph_deploy.config][DEBUG ] Got /etc/ceph/ceph.conf from ceph-mon01 [cephadm@ceph-admin ceph-cluster]$ ls ceph.bootstrap-mds.keyring ceph.bootstrap-osd.keyring ceph.client.admin.keyring ceph-deploy-ceph.log ceph.bootstrap-mgr.keyring ceph.bootstrap-rgw.keyring ceph.conf ceph.mon.keyring [cephadm@ceph-admin ceph-cluster]$ cat ceph.conf [global] fsid = 7fd4a619-9767-4b46-9cee-78b9dfe88f34 mon_initial_members = ceph-mon01 mon_host = 192.168.0.71 public_network = 192.168.0.0/24 cluster_network = 172.16.30.0/24 auth_cluster_required = cephx auth_service_required = cephx auth_client_required = cephx [client] rgw_frontends = "civetweb port=8080" [cephadm@ceph-admin ceph-cluster]$

提示:如果本地配置文件存在需要加上--overwrite-conf选项强制将覆盖原有配置文件

再次将本地配置文件分发至集群各主机

[cephadm@ceph-admin ceph-cluster]$ ceph-deploy --overwrite-conf config push ceph-mon01 ceph-mon02 ceph-mon03 ceph-mgr01 ceph-mgr02

[ceph_deploy.conf][DEBUG ] found configuration file at: /home/cephadm/.cephdeploy.conf

[ceph_deploy.cli][INFO ] Invoked (2.0.1): /bin/ceph-deploy --overwrite-conf config push ceph-mon01 ceph-mon02 ceph-mon03 ceph-mgr01 ceph-mgr02

[ceph_deploy.cli][INFO ] ceph-deploy options:

[ceph_deploy.cli][INFO ] username : None

[ceph_deploy.cli][INFO ] verbose : False

[ceph_deploy.cli][INFO ] overwrite_conf : True

[ceph_deploy.cli][INFO ] subcommand : push

[ceph_deploy.cli][INFO ] quiet : False

[ceph_deploy.cli][INFO ] cd_conf : <ceph_deploy.conf.cephdeploy.Conf instance at 0x7fcf983488c0>

[ceph_deploy.cli][INFO ] cluster : ceph

[ceph_deploy.cli][INFO ] client : ['ceph-mon01', 'ceph-mon02', 'ceph-mon03', 'ceph-mgr01', 'ceph-mgr02']

[ceph_deploy.cli][INFO ] func : <function config at 0x7fcf98577cf8>

[ceph_deploy.cli][INFO ] ceph_conf : None

[ceph_deploy.cli][INFO ] default_release : False

[ceph_deploy.config][DEBUG ] Pushing config to ceph-mon01

[ceph-mon01][DEBUG ] connection detected need for sudo

[ceph-mon01][DEBUG ] connected to host: ceph-mon01

[ceph-mon01][DEBUG ] detect platform information from remote host

[ceph-mon01][DEBUG ] detect machine type

[ceph-mon01][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[ceph_deploy.config][DEBUG ] Pushing config to ceph-mon02

[ceph-mon02][DEBUG ] connection detected need for sudo

[ceph-mon02][DEBUG ] connected to host: ceph-mon02

[ceph-mon02][DEBUG ] detect platform information from remote host

[ceph-mon02][DEBUG ] detect machine type

[ceph-mon02][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[ceph_deploy.config][DEBUG ] Pushing config to ceph-mon03

[ceph-mon03][DEBUG ] connection detected need for sudo

[ceph-mon03][DEBUG ] connected to host: ceph-mon03

[ceph-mon03][DEBUG ] detect platform information from remote host

[ceph-mon03][DEBUG ] detect machine type

[ceph-mon03][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[ceph_deploy.config][DEBUG ] Pushing config to ceph-mgr01

[ceph-mgr01][DEBUG ] connection detected need for sudo

[ceph-mgr01][DEBUG ] connected to host: ceph-mgr01

[ceph-mgr01][DEBUG ] detect platform information from remote host

[ceph-mgr01][DEBUG ] detect machine type

[ceph-mgr01][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[ceph_deploy.config][DEBUG ] Pushing config to ceph-mgr02

[ceph-mgr02][DEBUG ] connection detected need for sudo

[ceph-mgr02][DEBUG ] connected to host: ceph-mgr02

[ceph-mgr02][DEBUG ] detect platform information from remote host

[ceph-mgr02][DEBUG ] detect machine type

[ceph-mgr02][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[cephadm@ceph-admin ceph-cluster]$

再次部署MDS

[cephadm@ceph-admin ceph-cluster]$ ceph-deploy mds create ceph-mon01 ceph-mon03

[ceph_deploy.conf][DEBUG ] found configuration file at: /home/cephadm/.cephdeploy.conf

[ceph_deploy.cli][INFO ] Invoked (2.0.1): /bin/ceph-deploy mds create ceph-mon01 ceph-mon03

[ceph_deploy.cli][INFO ] ceph-deploy options:

[ceph_deploy.cli][INFO ] username : None

[ceph_deploy.cli][INFO ] verbose : False

[ceph_deploy.cli][INFO ] overwrite_conf : False

[ceph_deploy.cli][INFO ] subcommand : create

[ceph_deploy.cli][INFO ] quiet : False

[ceph_deploy.cli][INFO ] cd_conf : <ceph_deploy.conf.cephdeploy.Conf instance at 0x7fc39019c7e8>

[ceph_deploy.cli][INFO ] cluster : ceph

[ceph_deploy.cli][INFO ] func : <function mds at 0x7fc3903f5050>

[ceph_deploy.cli][INFO ] ceph_conf : None

[ceph_deploy.cli][INFO ] mds : [('ceph-mon01', 'ceph-mon01'), ('ceph-mon03', 'ceph-mon03')]

[ceph_deploy.cli][INFO ] default_release : False

[ceph_deploy.mds][DEBUG ] Deploying mds, cluster ceph hosts ceph-mon01:ceph-mon01 ceph-mon03:ceph-mon03

[ceph-mon01][DEBUG ] connection detected need for sudo

[ceph-mon01][DEBUG ] connected to host: ceph-mon01

[ceph-mon01][DEBUG ] detect platform information from remote host

[ceph-mon01][DEBUG ] detect machine type

[ceph_deploy.mds][INFO ] Distro info: CentOS Linux 7.9.2009 Core

[ceph_deploy.mds][DEBUG ] remote host will use systemd

[ceph_deploy.mds][DEBUG ] deploying mds bootstrap to ceph-mon01

[ceph-mon01][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[ceph-mon01][WARNIN] mds keyring does not exist yet, creating one

[ceph-mon01][DEBUG ] create a keyring file

[ceph-mon01][DEBUG ] create path if it doesn't exist

[ceph-mon01][INFO ] Running command: sudo ceph --cluster ceph --name client.bootstrap-mds --keyring /var/lib/ceph/bootstrap-mds/ceph.keyring auth get-or-create mds.ceph-mon01 osd allow rwx mds allow mon allow profile mds -o /var/lib/ceph/mds/ceph-ceph-mon01/keyring

[ceph-mon01][INFO ] Running command: sudo systemctl enable ceph-mds@ceph-mon01

[ceph-mon01][WARNIN] Created symlink from /etc/systemd/system/ceph-mds.target.wants/ceph-mds@ceph-mon01.service to /usr/lib/systemd/system/ceph-mds@.service.

[ceph-mon01][INFO ] Running command: sudo systemctl start ceph-mds@ceph-mon01

[ceph-mon01][INFO ] Running command: sudo systemctl enable ceph.target

[ceph-mon03][DEBUG ] connection detected need for sudo

[ceph-mon03][DEBUG ] connected to host: ceph-mon03

[ceph-mon03][DEBUG ] detect platform information from remote host

[ceph-mon03][DEBUG ] detect machine type

[ceph_deploy.mds][INFO ] Distro info: CentOS Linux 7.9.2009 Core

[ceph_deploy.mds][DEBUG ] remote host will use systemd

[ceph_deploy.mds][DEBUG ] deploying mds bootstrap to ceph-mon03

[ceph-mon03][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[ceph-mon03][DEBUG ] create path if it doesn't exist

[ceph-mon03][INFO ] Running command: sudo ceph --cluster ceph --name client.bootstrap-mds --keyring /var/lib/ceph/bootstrap-mds/ceph.keyring auth get-or-create mds.ceph-mon03 osd allow rwx mds allow mon allow profile mds -o /var/lib/ceph/mds/ceph-ceph-mon03/keyring

[ceph-mon03][INFO ] Running command: sudo systemctl enable ceph-mds@ceph-mon03

[ceph-mon03][INFO ] Running command: sudo systemctl start ceph-mds@ceph-mon03

[ceph-mon03][INFO ] Running command: sudo systemctl enable ceph.target

[cephadm@ceph-admin ceph-cluster]$

查看msd状态

[cephadm@ceph-admin ceph-cluster]$ ceph mds stat

cephfs-1/1/1 up {0=ceph-mon02=up:active}, 2 up:standby

[cephadm@ceph-admin ceph-cluster]$ ceph fs status cephfs

cephfs - 0 clients

======

+------+--------+------------+---------------+-------+-------+

| Rank | State | MDS | Activity | dns | inos |

+------+--------+------------+---------------+-------+-------+

| 0 | active | ceph-mon02 | Reqs: 0 /s | 18 | 17 |

+------+--------+------------+---------------+-------+-------+

+---------------------+----------+-------+-------+

| Pool | type | used | avail |

+---------------------+----------+-------+-------+

| cephfs-metadatapool | metadata | 59.8k | 280G |

| cephfs-datapool | data | 3391k | 280G |

+---------------------+----------+-------+-------+

+-------------+

| Standby MDS |

+-------------+

| ceph-mon03 |

| ceph-mon01 |

+-------------+

MDS version: ceph version 13.2.10 (564bdc4ae87418a232fc901524470e1a0f76d641) mimic (stable)

[cephadm@ceph-admin ceph-cluster]$

提示:可以看到现在有两个mds处于standby状态,一个active状态mds;

管理rank

增加Active MDS的数量命令格式:ceph fs set <fsname> max_mds <number>

[cephadm@ceph-admin ceph-cluster]$ ceph fs set cephfs max_mds 2 [cephadm@ceph-admin ceph-cluster]$ ceph fs status cephfs cephfs - 0 clients ====== +------+--------+------------+---------------+-------+-------+ | Rank | State | MDS | Activity | dns | inos | +------+--------+------------+---------------+-------+-------+ | 0 | active | ceph-mon02 | Reqs: 0 /s | 18 | 17 | | 1 | active | ceph-mon01 | Reqs: 0 /s | 10 | 13 | +------+--------+------------+---------------+-------+-------+ +---------------------+----------+-------+-------+ | Pool | type | used | avail | +---------------------+----------+-------+-------+ | cephfs-metadatapool | metadata | 61.1k | 280G | | cephfs-datapool | data | 3391k | 280G | +---------------------+----------+-------+-------+ +-------------+ | Standby MDS | +-------------+ | ceph-mon03 | +-------------+ MDS version: ceph version 13.2.10 (564bdc4ae87418a232fc901524470e1a0f76d641) mimic (stable) [cephadm@ceph-admin ceph-cluster]$

提示:仅当存在某个备用守护进程可供新rank使用时,文件系统中的实际rank数才会增加;多活MDS的场景依然要求存在备用的冗余主机以实现服务HA,因此max_mds的值总是应该比实际可用的MDS数量至少小1;

降低Acitve MDS的数量

减小max_mds的值仅会限制新的rank的创建,对于已经存在的Active MDS及持有的rank不造成真正的影响,因此降低max_mds的值后,管理员需要手动关闭不再不再被需要的rank;命令格式:ceph mds deactivate {System:rank|FSID:rank|rank}

[cephadm@ceph-admin ceph-cluster]$ ceph fs set cephfs max_mds 1 [cephadm@ceph-admin ceph-cluster]$ ceph fs status cephfs - 0 clients ====== +------+----------+------------+---------------+-------+-------+ | Rank | State | MDS | Activity | dns | inos | +------+----------+------------+---------------+-------+-------+ | 0 | active | ceph-mon02 | Reqs: 0 /s | 18 | 17 | | 1 | stopping | ceph-mon01 | | 10 | 13 | +------+----------+------------+---------------+-------+-------+ +---------------------+----------+-------+-------+ | Pool | type | used | avail | +---------------------+----------+-------+-------+ | cephfs-metadatapool | metadata | 61.6k | 280G | | cephfs-datapool | data | 3391k | 280G | +---------------------+----------+-------+-------+ +-------------+ | Standby MDS | +-------------+ | ceph-mon03 | +-------------+ MDS version: ceph version 13.2.10 (564bdc4ae87418a232fc901524470e1a0f76d641) mimic (stable) [cephadm@ceph-admin ceph-cluster]$ ceph mds deactivate cephfs:1 Error ENOTSUP: command is obsolete; please check usage and/or man page [cephadm@ceph-admin ceph-cluster]$ ceph fs status cephfs - 0 clients ====== +------+--------+------------+---------------+-------+-------+ | Rank | State | MDS | Activity | dns | inos | +------+--------+------------+---------------+-------+-------+ | 0 | active | ceph-mon02 | Reqs: 0 /s | 18 | 17 | +------+--------+------------+---------------+-------+-------+ +---------------------+----------+-------+-------+ | Pool | type | used | avail | +---------------------+----------+-------+-------+ | cephfs-metadatapool | metadata | 62.1k | 280G | | cephfs-datapool | data | 3391k | 280G | +---------------------+----------+-------+-------+ +-------------+ | Standby MDS | +-------------+ | ceph-mon03 | | ceph-mon01 | +-------------+ MDS version: ceph version 13.2.10 (564bdc4ae87418a232fc901524470e1a0f76d641) mimic (stable) [cephadm@ceph-admin ceph-cluster]$

提示:虽然我们执行ceph deactivate 命令对应提示我们命令过时,但对应mds还是被还原了;

手动分配目录子树至rank

多Active MDS的CephFS集群上会运行一个均衡器用于调度元数据负载,这种模式通常足以满足大多数用户的需求;个别场景中,用户需要使用元数据到特定级别的显式映射来覆盖动态平衡器,以在整个集群上自定义分配应用负载;针对此目的提供的机制称为“导出关联”,它是目录的扩展属性ceph.dir.pin;目录属性设置命令:setfattr -n ceph.dir.pin -v RANK /PATH/TO/DIR;扩展属性的值 ( -v ) 是要将目录子树指定到的rank 默认为-1,表示不关联该目录;目录导出关联继承自设置了导出关联的最近的父级,因此,对某个目录设置导出关联会影响该目录的所有子级目录;

[cephadm@ceph-admin ceph-cluster]$ sefattr -bash: sefattr: command not found [cephadm@ceph-admin ceph-cluster]$ yum provides setfattr Loaded plugins: fastestmirror Repository epel is listed more than once in the configuration Repository epel-debuginfo is listed more than once in the configuration Repository epel-source is listed more than once in the configuration Loading mirror speeds from cached hostfile * base: mirrors.aliyun.com * extras: mirrors.aliyun.com * updates: mirrors.aliyun.com attr-2.4.46-13.el7.x86_64 : Utilities for managing filesystem extended attributes Repo : base Matched from: Filename : /usr/bin/setfattr [cephadm@ceph-admin ceph-cluster]$

提示:前提是我们系统上要有setfattr命令,如果没有可以安装attr这个包即可;

MDS故障转移机制

出于冗余的目的,每个CephFS上都应该配置一定数量Standby状态的ceph-mds守护进程等着接替失效的rank,CephFS提供了四个选项用于控制Standby状态的MDS守护进程如何工作;

1、 mds_standby_replay:布尔型值,true表示当前MDS守护进程将持续读取某个特定的Up状态的rank的元数据日志,从而持有相关rank的元数据缓存,并在此rank失效时加速故障切换; 一个Up状态的rank仅能拥有一个replay守护进程,多出的会被自动降级为正常的非replay型MDS;

2、 mds_standby_for_name:设置当前MDS进程仅备用于指定名称的rank;

3、 mds_standby_for_rank:设置当前MDS进程仅备用于指定的rank,它不会接替任何其它失效的rank;不过,在有着多个CephFS的场景中,可联合使用下面的参数来指定为哪个文件系统的rank进行冗余;

4、 mds_standby_for_fscid:联合mds_standby_for_rank参数的值协同生效;同时设置了mds_standby_for_rank:备用于指定fscid的指定rank;未设置mds_standby_for_rank时:备用于指定fscid的任意rank;

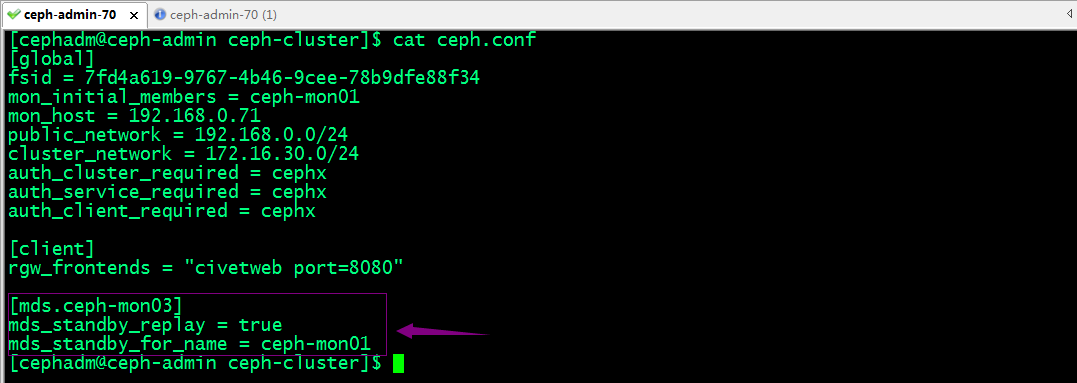

配置冗余mds

提示:上述配置表示ceph-mon03这个冗余的mds开启对ceph-mon01做实时备份,但ceph-mon01故障,对应ceph-mon03自动接管ceph-mon01负责的rank;

推送配置到集群各主机

[cephadm@ceph-admin ceph-cluster]$ ceph-deploy --overwrite-conf config push ceph-mon01 ceph-mon02 ceph-mon03 ceph-mgr01 ceph-mgr02

[ceph_deploy.conf][DEBUG ] found configuration file at: /home/cephadm/.cephdeploy.conf

[ceph_deploy.cli][INFO ] Invoked (2.0.1): /bin/ceph-deploy --overwrite-conf config push ceph-mon01 ceph-mon02 ceph-mon03 ceph-mgr01 ceph-mgr02

[ceph_deploy.cli][INFO ] ceph-deploy options:

[ceph_deploy.cli][INFO ] username : None

[ceph_deploy.cli][INFO ] verbose : False

[ceph_deploy.cli][INFO ] overwrite_conf : True

[ceph_deploy.cli][INFO ] subcommand : push

[ceph_deploy.cli][INFO ] quiet : False

[ceph_deploy.cli][INFO ] cd_conf : <ceph_deploy.conf.cephdeploy.Conf instance at 0x7f03332968c0>

[ceph_deploy.cli][INFO ] cluster : ceph

[ceph_deploy.cli][INFO ] client : ['ceph-mon01', 'ceph-mon02', 'ceph-mon03', 'ceph-mgr01', 'ceph-mgr02']

[ceph_deploy.cli][INFO ] func : <function config at 0x7f03334c5cf8>

[ceph_deploy.cli][INFO ] ceph_conf : None

[ceph_deploy.cli][INFO ] default_release : False

[ceph_deploy.config][DEBUG ] Pushing config to ceph-mon01

[ceph-mon01][DEBUG ] connection detected need for sudo

[ceph-mon01][DEBUG ] connected to host: ceph-mon01

[ceph-mon01][DEBUG ] detect platform information from remote host

[ceph-mon01][DEBUG ] detect machine type

[ceph-mon01][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[ceph_deploy.config][DEBUG ] Pushing config to ceph-mon02

[ceph-mon02][DEBUG ] connection detected need for sudo

[ceph-mon02][DEBUG ] connected to host: ceph-mon02

[ceph-mon02][DEBUG ] detect platform information from remote host

[ceph-mon02][DEBUG ] detect machine type

[ceph-mon02][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[ceph_deploy.config][DEBUG ] Pushing config to ceph-mon03

[ceph-mon03][DEBUG ] connection detected need for sudo

[ceph-mon03][DEBUG ] connected to host: ceph-mon03

[ceph-mon03][DEBUG ] detect platform information from remote host

[ceph-mon03][DEBUG ] detect machine type

[ceph-mon03][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[ceph_deploy.config][DEBUG ] Pushing config to ceph-mgr01

[ceph-mgr01][DEBUG ] connection detected need for sudo

[ceph-mgr01][DEBUG ] connected to host: ceph-mgr01

[ceph-mgr01][DEBUG ] detect platform information from remote host

[ceph-mgr01][DEBUG ] detect machine type

[ceph-mgr01][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[ceph_deploy.config][DEBUG ] Pushing config to ceph-mgr02

[ceph-mgr02][DEBUG ] connection detected need for sudo

[ceph-mgr02][DEBUG ] connected to host: ceph-mgr02

[ceph-mgr02][DEBUG ] detect platform information from remote host

[ceph-mgr02][DEBUG ] detect machine type

[ceph-mgr02][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[cephadm@ceph-admin ceph-cluster]$

停止ceph-mon01上的mds进程,看看对应ceph-mon03是否接管?

[cephadm@ceph-admin ceph-cluster]$ ceph fs status cephfs cephfs - 0 clients ====== +------+--------+------------+---------------+-------+-------+ | Rank | State | MDS | Activity | dns | inos | +------+--------+------------+---------------+-------+-------+ | 0 | active | ceph-mon02 | Reqs: 0 /s | 18 | 17 | | 1 | active | ceph-mon01 | Reqs: 0 /s | 10 | 13 | +------+--------+------------+---------------+-------+-------+ +---------------------+----------+-------+-------+ | Pool | type | used | avail | +---------------------+----------+-------+-------+ | cephfs-metadatapool | metadata | 65.3k | 280G | | cephfs-datapool | data | 3391k | 280G | +---------------------+----------+-------+-------+ +-------------+ | Standby MDS | +-------------+ | ceph-mon03 | +-------------+ MDS version: ceph version 13.2.10 (564bdc4ae87418a232fc901524470e1a0f76d641) mimic (stable) [cephadm@ceph-admin ceph-cluster]$ ssh ceph-mon01 'systemctl stop ceph-mds@ceph-mon01.service' Failed to stop ceph-mds@ceph-mon01.service: Interactive authentication required. See system logs and 'systemctl status ceph-mds@ceph-mon01.service' for details. [cephadm@ceph-admin ceph-cluster]$ ssh ceph-mon01 'sudo systemctl stop ceph-mds@ceph-mon01.service' [cephadm@ceph-admin ceph-cluster]$ ceph fs status cephfs cephfs - 0 clients ====== +------+--------+------------+---------------+-------+-------+ | Rank | State | MDS | Activity | dns | inos | +------+--------+------------+---------------+-------+-------+ | 0 | active | ceph-mon02 | Reqs: 0 /s | 18 | 17 | | 1 | rejoin | ceph-mon03 | | 0 | 3 | +------+--------+------------+---------------+-------+-------+ +---------------------+----------+-------+-------+ | Pool | type | used | avail | +---------------------+----------+-------+-------+ | cephfs-metadatapool | metadata | 65.3k | 280G | | cephfs-datapool | data | 3391k | 280G | +---------------------+----------+-------+-------+ +-------------+ | Standby MDS | +-------------+ +-------------+ MDS version: ceph version 13.2.10 (564bdc4ae87418a232fc901524470e1a0f76d641) mimic (stable) [cephadm@ceph-admin ceph-cluster]$ ceph fs status cephfs cephfs - 0 clients ====== +------+--------+------------+---------------+-------+-------+ | Rank | State | MDS | Activity | dns | inos | +------+--------+------------+---------------+-------+-------+ | 0 | active | ceph-mon02 | Reqs: 0 /s | 18 | 17 | | 1 | active | ceph-mon03 | Reqs: 0 /s | 10 | 13 | +------+--------+------------+---------------+-------+-------+ +---------------------+----------+-------+-------+ | Pool | type | used | avail | +---------------------+----------+-------+-------+ | cephfs-metadatapool | metadata | 65.3k | 280G | | cephfs-datapool | data | 3391k | 280G | +---------------------+----------+-------+-------+ +-------------+ | Standby MDS | +-------------+ +-------------+ MDS version: ceph version 13.2.10 (564bdc4ae87418a232fc901524470e1a0f76d641) mimic (stable) [cephadm@ceph-admin ceph-cluster]$

提示:可以看到当ceph-mon01故障以后,对应ceph-mon03自动接管了ceph-mon01负责的rank;

恢复ceph-mon01

[cephadm@ceph-admin ceph-cluster]$ ceph fs status cephfs - 0 clients ====== +------+--------+------------+---------------+-------+-------+ | Rank | State | MDS | Activity | dns | inos | +------+--------+------------+---------------+-------+-------+ | 0 | active | ceph-mon02 | Reqs: 0 /s | 18 | 17 | | 1 | active | ceph-mon03 | Reqs: 0 /s | 10 | 13 | +------+--------+------------+---------------+-------+-------+ +---------------------+----------+-------+-------+ | Pool | type | used | avail | +---------------------+----------+-------+-------+ | cephfs-metadatapool | metadata | 65.3k | 280G | | cephfs-datapool | data | 3391k | 280G | +---------------------+----------+-------+-------+ +-------------+ | Standby MDS | +-------------+ +-------------+ MDS version: ceph version 13.2.10 (564bdc4ae87418a232fc901524470e1a0f76d641) mimic (stable) [cephadm@ceph-admin ceph-cluster]$ ssh ceph-mon01 'sudo systemctl start ceph-mds@ceph-mon01.service' [cephadm@ceph-admin ceph-cluster]$ ceph fs status cephfs - 0 clients ====== +------+--------+------------+---------------+-------+-------+ | Rank | State | MDS | Activity | dns | inos | +------+--------+------------+---------------+-------+-------+ | 0 | active | ceph-mon02 | Reqs: 0 /s | 18 | 17 | | 1 | active | ceph-mon03 | Reqs: 0 /s | 10 | 13 | +------+--------+------------+---------------+-------+-------+ +---------------------+----------+-------+-------+ | Pool | type | used | avail | +---------------------+----------+-------+-------+ | cephfs-metadatapool | metadata | 65.3k | 280G | | cephfs-datapool | data | 3391k | 280G | +---------------------+----------+-------+-------+ +-------------+ | Standby MDS | +-------------+ | ceph-mon01 | +-------------+ MDS version: ceph version 13.2.10 (564bdc4ae87418a232fc901524470e1a0f76d641) mimic (stable) [cephadm@ceph-admin ceph-cluster]$ ssh ceph-mon03 'sudo systemctl restart ceph-mds@ceph-mon03.service' [cephadm@ceph-admin ceph-cluster]$ ceph fs status cephfs - 0 clients ====== +------+----------------+------------+---------------+-------+-------+ | Rank | State | MDS | Activity | dns | inos | +------+----------------+------------+---------------+-------+-------+ | 0 | active | ceph-mon02 | Reqs: 0 /s | 18 | 17 | | 1 | active | ceph-mon01 | Reqs: 0 /s | 10 | 13 | | 1-s | standby-replay | ceph-mon03 | Evts: 0 /s | 0 | 3 | +------+----------------+------------+---------------+-------+-------+ +---------------------+----------+-------+-------+ | Pool | type | used | avail | +---------------------+----------+-------+-------+ | cephfs-metadatapool | metadata | 65.3k | 280G | | cephfs-datapool | data | 3391k | 280G | +---------------------+----------+-------+-------+ +-------------+ | Standby MDS | +-------------+ +-------------+ MDS version: ceph version 13.2.10 (564bdc4ae87418a232fc901524470e1a0f76d641) mimic (stable) [cephadm@ceph-admin ceph-cluster]$

提示:重新恢复ceph-mon01以后,对应不会进行抢占,它会自动沦为standby状态;并且当ceph-mon03重启或故障后对应ceph-mon01也会自动接管对应rank;

所谓动态子树分区就是根据文件系统的负载能力动态调整对应子树;cephfs就是使用这种方式实现多活mds;在ceph上多主MDS模式是指CephFS将整个文件系统的名称空间切分为多个子树并配置到多个MDS之上,不过,读写操作的负载均衡策略分别是子树切分和目录副本;将写操作负载较重的目录切分成多个子目录以分散负载;为读操作负载较重的目录创建多个副本以均衡负载;子树分区和迁移的决策是一个同步过程,各MDS每10秒钟做一次独立的迁移决策,每个MDS并不存在一个一致的名称空间视图,且MDS集群也不存在一个全局调度器负责统一的调度决策;各MDS彼此间通过交换心跳信息(HeartBeat,简称HB)及负载状态来确定是否要进行迁移、如何分区名称空间,以及是否需要目录切分为子树等;管理员也可以配置CephFS负载的计算方式从而影响MDS的负载决策,目前,CephFS支持基于CPU负载、文件系统负载及混合此两种的决策机制;

所谓动态子树分区就是根据文件系统的负载能力动态调整对应子树;cephfs就是使用这种方式实现多活mds;在ceph上多主MDS模式是指CephFS将整个文件系统的名称空间切分为多个子树并配置到多个MDS之上,不过,读写操作的负载均衡策略分别是子树切分和目录副本;将写操作负载较重的目录切分成多个子目录以分散负载;为读操作负载较重的目录创建多个副本以均衡负载;子树分区和迁移的决策是一个同步过程,各MDS每10秒钟做一次独立的迁移决策,每个MDS并不存在一个一致的名称空间视图,且MDS集群也不存在一个全局调度器负责统一的调度决策;各MDS彼此间通过交换心跳信息(HeartBeat,简称HB)及负载状态来确定是否要进行迁移、如何分区名称空间,以及是否需要目录切分为子树等;管理员也可以配置CephFS负载的计算方式从而影响MDS的负载决策,目前,CephFS支持基于CPU负载、文件系统负载及混合此两种的决策机制;