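Container Orchestration with K8s: The flannel Network Model

In the previous post we covered installing the k8s web UI and authorizing users for it; for a refresher, see https://www.cnblogs.com/qiuhom-1874/p/14222930.html. Today we turn to networking on k8s.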

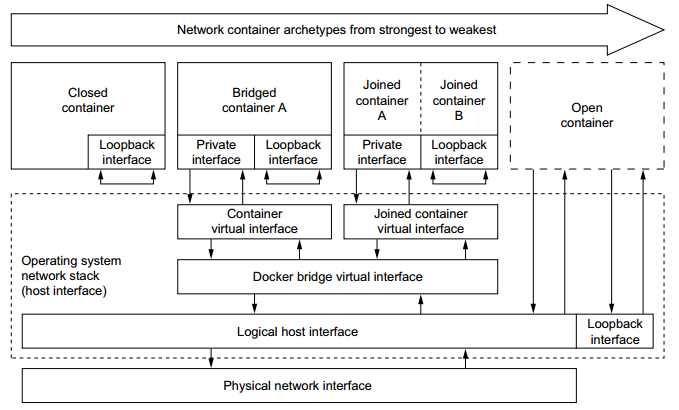

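Before getting into k8s networking, let's review how networking works in Docker. Docker containers come in four network types. The first is the closed container: it has only a lo interface inside and cannot communicate with any external network. The second is the bridged container, which is the default type: the container's virtual NIC is one end of a veth pair whose other end is attached to the docker0 bridge on the host, so the container sits on the same layer-2 segment as docker0 and can reach external networks through it. The third is the joined container, a shared-network container: when you start it, you name an existing container whose network namespace it should share. This model is essentially still a form of bridging; the difference from a bridged container is that several containers can share one network namespace. For example, if container a needs to talk to container b, container a can simply join container b's network namespace, which puts both containers in the same namespace. The fourth is the open container, which shares the host's network namespace directly, as shown in the figure.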

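Note: from the description above, the Docker network models that reach other hosts by default are built on bridging; the closed container has no external connectivity at all, and the open container simply uses the host's network stack.

How does Docker handle cross-host container communication?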

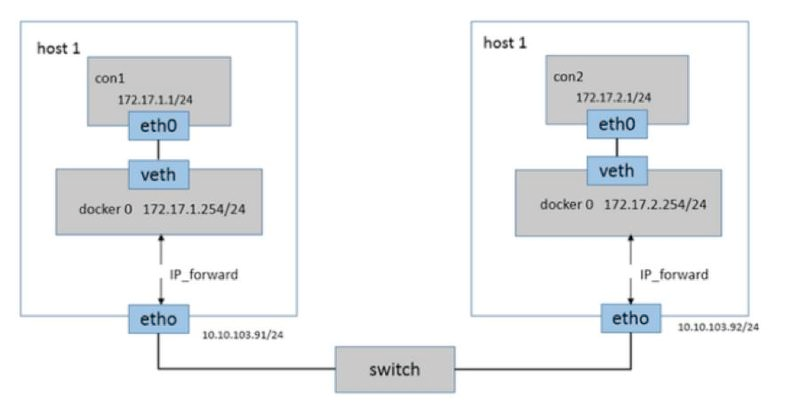

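As described above, a Docker container that wants to talk to the outside world first has to be attached to a bridge that can reach the outside. By default Docker attaches the container's virtual NIC to docker0, a virtual bridge on the host that works as a NAT bridge. It can reach external networks because the SNAT rules in the host's iptables translate the source address. By the same token, for a container on docker0 to be reachable from outside, a DNAT rule in the host's iptables is needed: external clients connect to the host's IP address, and DNAT delivers the request to the container, which responds, as shown in the figure.

Note: containers on the same host talk to each other directly over the docker0 bridge. Across hosts, traffic has to rely on the iptables rules on each host: SNAT to route a container's requests out, and DNAT to forward external requests to the container that answers them.

Networking on k8s

As we know, k8s has three networks. The first is the host (node) network; it is outside the scope of container orchestration and is maintained by the cluster administrator. The second is the service network, also called the cluster network. It does not exist on any network interface; it is realized by the iptables or ipvs rules that kube-proxy generates on every node. It mainly load-balances access to pods, and it is also the network through which services reach each other. The third is the pod network, used mainly for pod-to-pod communication, as shown in the figure.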

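Note: pod-to-pod communication on k8s does not rely on SNAT or DNAT in iptables, and it does not go through the docker0 bridge either; it is provided by an external network plugin. Many plugins implement k8s networking; the best known are flannel and calico. Both let pods talk to each other without SNAT or DNAT in iptables. The difference is that flannel does not support network policies, while calico does.

How does flannel implement pod-to-pod communication?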

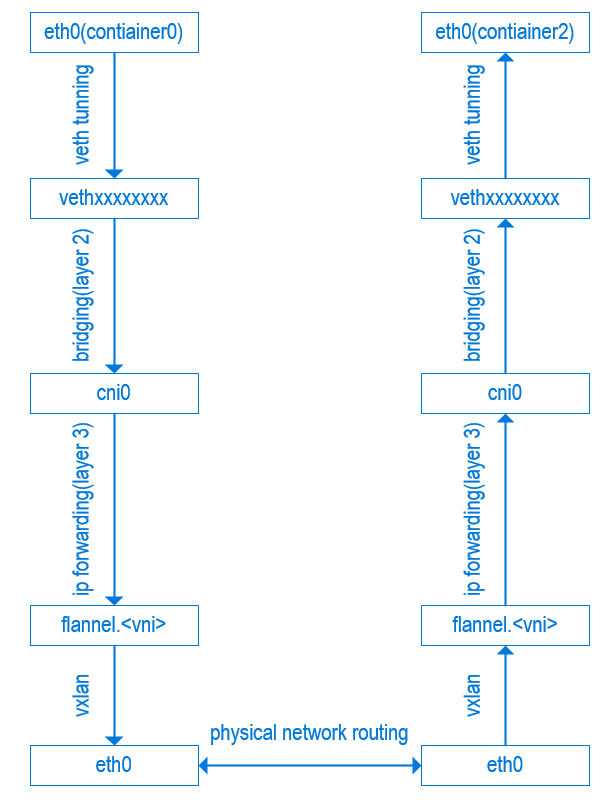

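As we know, the IP address of a pod on k8s depends on the network plugin in use. With flannel, we specify the pod network (10.244.0.0/16) when initializing the cluster; with calico, we specify 192.168.0.0/16. Giving the cluster the matching pod network is what lets it work with the plugin out of the box, because 10.244.0.0/16 is the range flannel's default configuration uses and 192.168.0.0/16 is calico's default. These defaults can of course be changed.

Taking flannel as the example, how does it let pods talk to each other directly? In a Docker environment, two containers on different nodes may well have the same address, which is why cross-node container traffic has to go through SNAT or DNAT. For a k8s network plugin to offer direct pod-to-pod communication, the first thing to guarantee is that pod IPs never conflict. flannel does this by splitting 10.244.0.0/16 into subnets and giving each node its own subnet; pods on a node take addresses from that node's subnet, so two pods on different nodes can never end up with the same IP. For example, the network can be split into 256 subnets: pods on the first node use addresses from 10.244.0.0/24, pods on the second node use 10.244.1.0/24, and so on for the third and fourth nodes.

With address conflicts out of the way, how do pods reach each other directly? Option 1: following the Docker approach, we could bridge each container's virtual NIC straight onto the host's physical NIC, so every pod communicates through the host NIC. The drawback is that as pods multiply, ARP broadcasts on the host network multiply too and can turn into an ARP flood that congests the network until it is unusable. Some other mechanism is then needed, for instance one VLAN per node subnet, with nodes exchanging data over the VLANs. But then the VLANs have to be managed by hand, which is clearly not what we want.

Option 2: instead of bridging the container's virtual NIC onto the host NIC, attach it to a virtual bridge, build a tunnel between hosts by some mechanism, and generate the matching routes. Pods on the same node then communicate directly over the virtual bridge. Traffic for a pod on another node is routed from the virtual bridge to the tunnel interface, encapsulated in the tunnel protocol, and sent out through the node's physical NIC. The receiving host strips the encapsulation layer by layer and delivers the packet to the target pod, which gives us direct pod-to-pod communication. The pods are unaware of any of this, because the packet that finally reaches a pod always has pod IPs as both its source and its destination.

That raises a question: how does the tunnel interface learn the address of the virtual bridge on the other side, and how does it know which host to send the packet to? That depends on the network plugin. flannel keeps this network information in a data store such as etcd. Once flannel is installed on k8s, it runs a daemon on every host and creates a cni0 interface on every node; this is the virtual bridge mentioned above. It also creates a flannel.1 interface, which is the tunnel interface. flannel then divides 10.244.0.0/16 into subnets and maps each subnet to the IP address and MAC address of the physical NIC on the node that owns it. For example, node 1 has subnet 10.244.0.0/24, and its physical NIC has IP address 192.168.0.41 and MAC address xxx; node 2's subnet is mapped to its node IP and MAC address in the same way, and all of these mappings are saved in etcd. When a pod on node 1 needs to talk to a pod on node 2, the matching entry is looked up from the information kept in etcd and the packet is encapsulated accordingly.

In one sentence: on k8s, flannel avoids pod IP conflicts by giving every node its own subnet of the pod network, and it uses VXLAN to build tunnels between nodes, so pods on k8s can communicate with each other directly, as shown in the figure.

Diagram: path of a cross-node pod packet on k8s with the flannel network plugin

Note: put simply, VXLAN is a layer-2 tunnel that uses the physical NICs to carry the pod network. Pods communicate through this tunnel directly, with no NAT anywhere along the way. VXLAN is widely used elsewhere too, for example in OpenStack self-service networks and for container-to-container traffic in docker swarm.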
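The per-node subnet split described above can be sketched with a few lines of shell arithmetic. This is only an illustration: `node_subnet` is a hypothetical helper, and the sequential index-to-subnet mapping is an assumption made for the example; flannel hands out subnet leases itself and does not promise this ordering.

```shell
# Sketch: carve 10.244.0.0/16 into /24 subnets, one per node.
# node_subnet is a hypothetical helper, not part of flannel.

# Print the /24 subnet for a zero-based node index (0..255).
node_subnet() {
  if [ "$1" -lt 0 ] || [ "$1" -gt 255 ]; then
    echo "index out of range: $1" >&2
    return 1
  fi
  echo "10.244.$1.0/24"
}

# A /16 split into /24 blocks yields 2^(24-16) subnets.
subnet_count=$(( 1 << (24 - 16) ))
echo "subnets available: ${subnet_count}"

for i in 0 1 2 3; do
  echo "node index ${i} -> $(node_subnet "$i")"
done
```

Because every node draws pod addresses from a different /24, a pod IP such as 10.244.2.9 also tells you which node subnet it belongs to.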

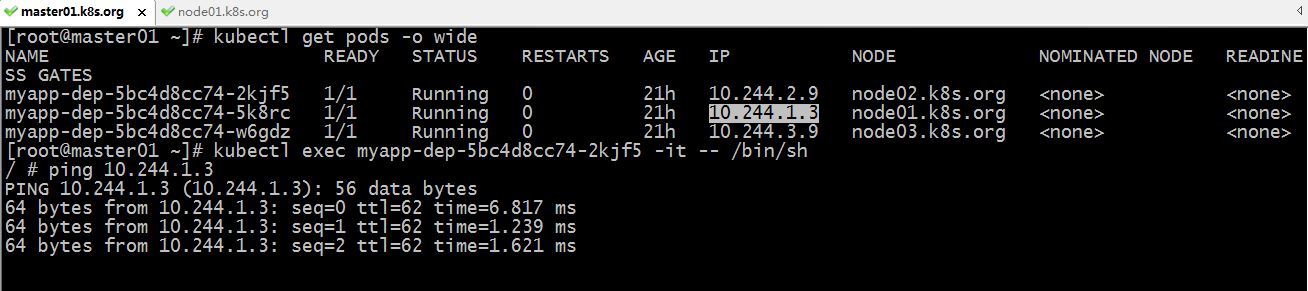

Test: run pods on k8s, then ping a pod on another node from inside a pod and see how the traffic actually flows.

[root@master01 ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

myapp-dep-5bc4d8cc74-2kjf5 1/1 Running 0 20h 10.244.2.9 node02.k8s.org <none> <none>

myapp-dep-5bc4d8cc74-5k8rc 1/1 Running 0 20h 10.244.1.3 node01.k8s.org <none> <none>

myapp-dep-5bc4d8cc74-w6gdz 1/1 Running 0 20h 10.244.3.9 node03.k8s.org <none> <none>

[root@master01 ~]#

Note: three pods are running in the default namespace, one scheduled onto each of the three nodes.

Enter one of the pods and ping another pod from inside it.

[root@master01 ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

myapp-dep-5bc4d8cc74-2kjf5 1/1 Running 0 20h 10.244.2.9 node02.k8s.org <none> <none>

myapp-dep-5bc4d8cc74-5k8rc 1/1 Running 0 20h 10.244.1.3 node01.k8s.org <none> <none>

myapp-dep-5bc4d8cc74-w6gdz 1/1 Running 0 20h 10.244.3.9 node03.k8s.org <none> <none>

[root@master01 ~]# kubectl exec -it myapp-dep-5bc4d8cc74-2kjf5 -- /bin/sh

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

3: eth0@if15: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1450 qdisc noqueue state UP

link/ether 5a:2a:ca:ec:83:65 brd ff:ff:ff:ff:ff:ff

inet 10.244.2.9/24 brd 10.244.2.255 scope global eth0

valid_lft forever preferred_lft forever

/ # ping 10.244.1.3

PING 10.244.1.3 (10.244.1.3): 56 data bytes

64 bytes from 10.244.1.3: seq=0 ttl=62 time=9.944 ms

64 bytes from 10.244.1.3: seq=1 ttl=62 time=1.974 ms

64 bytes from 10.244.1.3: seq=2 ttl=62 time=2.115 ms
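The ttl=62 in the replies fits a routed path with no address rewriting along the way. A rough sketch of the arithmetic, assuming the replying pod starts from the common Linux default TTL of 64 (an assumption, not something the output states) and that each of the two nodes forwards the packet once between its bridge and its tunnel interface:

```shell
# Sketch: expected TTL at the receiving pod.
initial_ttl=64   # assumption: default initial TTL of the replying pod
routed_hops=2    # one forwarding step on node01, one on node02
observed_ttl=$(( initial_ttl - routed_hops ))
echo "expected ttl at the receiving pod: ${observed_ttl}"
```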

Log in to node01 and look at its network interfaces.

[root@node01 ~]# ip a l

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP qlen 1000

link/ether 00:0c:29:01:21:41 brd ff:ff:ff:ff:ff:ff

inet 172.16.11.4/24 brd 172.16.11.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe01:2141/64 scope link

valid_lft forever preferred_lft forever

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN

link/ether 02:42:e1:a6:d7:1a brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

4: flannel.1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UNKNOWN

link/ether 76:12:1a:11:62:86 brd ff:ff:ff:ff:ff:ff

inet 10.244.1.0/32 brd 10.244.1.0 scope global flannel.1

valid_lft forever preferred_lft forever

inet6 fe80::7412:1aff:fe11:6286/64 scope link

valid_lft forever preferred_lft forever

5: cni0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UP qlen 1000

link/ether 52:6f:30:31:77:86 brd ff:ff:ff:ff:ff:ff

inet 10.244.1.1/24 brd 10.244.1.255 scope global cni0

valid_lft forever preferred_lft forever

inet6 fe80::506f:30ff:fe31:7786/64 scope link

valid_lft forever preferred_lft forever

7: vethce8e4bf2@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master cni0 state UP

link/ether 9a:22:8e:d7:78:33 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet6 fe80::9822:8eff:fed7:7833/64 scope link

valid_lft forever preferred_lft forever

[root@node01 ~]#

Note: the node has both a cni0 interface and a flannel.1 interface.

Capture packets on cni0 on node01.
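The interface listing shows mtu 1500 on eth0 but mtu 1450 on flannel.1 and cni0. The 50-byte gap matches the standard VXLAN encapsulation overhead over IPv4, which the following sketch adds up. The header sizes are the usual protocol values, not figures taken from this cluster.

```shell
# Sketch: why the overlay interfaces report mtu 1450 when eth0 has mtu 1500.
physical_mtu=1500
outer_ipv4=20      # outer IPv4 header
outer_udp=8        # outer UDP header
vxlan_header=8     # VXLAN header carrying the network identifier
inner_ethernet=14  # Ethernet header of the inner frame carried in the tunnel

overhead=$(( outer_ipv4 + outer_udp + vxlan_header + inner_ethernet ))
overlay_mtu=$(( physical_mtu - overhead ))
echo "vxlan overhead: ${overhead} bytes"
echo "overlay mtu: ${overlay_mtu}"
```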

[root@node01 ~]# tcpdump -i cni0 -nn icmp

tcpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on cni0, link-type EN10MB (Ethernet), capture size 262144 bytes

18:55:18.469861 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 13568, seq 225, length 64

18:55:18.470073 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 13568, seq 225, length 64

18:55:19.471439 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 13568, seq 226, length 64

18:55:19.471575 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 13568, seq 226, length 64

18:55:20.472470 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 13568, seq 227, length 64

18:55:20.472608 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 13568, seq 227, length 64

18:55:21.473084 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 13568, seq 228, length 64

18:55:21.473223 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 13568, seq 228, length 64

18:55:22.474856 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 13568, seq 229, length 64

18:55:22.474922 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 13568, seq 229, length 64

18:55:23.475499 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 13568, seq 230, length 64

18:55:23.475685 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 13568, seq 230, length 64

18:55:24.476694 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 13568, seq 231, length 64

18:55:24.476854 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 13568, seq 231, length 64

^C

14 packets captured

14 packets received by filter

0 packets dropped by kernel

[root@node01 ~]#

Note: on cni0 we can see 10.244.2.9 pinging 10.244.1.3, which shows that pod-to-pod traffic passes through the cni0 interface.

Capture ICMP packets on the flannel.1 interface on node01.

[root@node01 ~]# tcpdump -i flannel.1 -nn icmp

tcpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on flannel.1, link-type EN10MB (Ethernet), capture size 262144 bytes

18:57:03.607093 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 13568, seq 330, length 64

18:57:03.607273 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 13568, seq 330, length 64

18:57:04.607604 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 13568, seq 331, length 64

18:57:04.607819 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 13568, seq 331, length 64

18:57:05.608172 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 13568, seq 332, length 64

18:57:05.608369 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 13568, seq 332, length 64

18:57:06.609825 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 13568, seq 333, length 64

18:57:06.610106 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 13568, seq 333, length 64

18:57:07.610310 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 13568, seq 334, length 64

18:57:07.612417 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 13568, seq 334, length 64

^C

10 packets captured

10 packets received by filter

0 packets dropped by kernel

[root@node01 ~]#

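Note: capturing ICMP on node01's flannel.1 interface also shows 10.244.2.9 pinging 10.244.1.3, so the packets do reach the flannel.1 interface.

Now capture ICMP on node01's physical interface. Will the same ICMP packets show up there?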

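[root@node01 ~]# tcpdump -i eth0 -nn icmp

tcpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on eth0, link-type EN10MB (Ethernet), capture size 262144 bytes

Note: capturing ICMP on node01's physical interface yields nothing at all. The reason is that once the tunnel interface has encapsulated the packets, they are no longer ICMP packets on the physical interface.

On node01's physical interface, capture the traffic exchanged with node02's IP address and see what turns up.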

[root@node01 ~]# tcpdump -i eth0 -nn host 172.16.11.5

tcpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on eth0, link-type EN10MB (Ethernet), capture size 262144 bytes

19:02:36.139552 IP 172.16.11.5.46521 > 172.16.11.4.8472: OTV, flags [I] (0x08), overlay 0, instance 1

IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 13568, seq 662, length 64

19:02:36.139935 IP 172.16.11.4.57232 > 172.16.11.5.8472: OTV, flags [I] (0x08), overlay 0, instance 1

IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 13568, seq 662, length 64

19:02:37.143339 IP 172.16.11.5.46521 > 172.16.11.4.8472: OTV, flags [I] (0x08), overlay 0, instance 1

IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 13568, seq 663, length 64

19:02:37.143587 IP 172.16.11.4.57232 > 172.16.11.5.8472: OTV, flags [I] (0x08), overlay 0, instance 1

IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 13568, seq 663, length 64

19:02:38.144569 IP 172.16.11.5.46521 > 172.16.11.4.8472: OTV, flags [I] (0x08), overlay 0, instance 1

IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 13568, seq 664, length 64

19:02:38.145276 IP 172.16.11.4.57232 > 172.16.11.5.8472: OTV, flags [I] (0x08), overlay 0, instance 1

IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 13568, seq 664, length 64

19:02:39.144889 IP 172.16.11.5.46521 > 172.16.11.4.8472: OTV, flags [I] (0x08), overlay 0, instance 1

IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 13568, seq 665, length 64

19:02:39.145126 IP 172.16.11.4.57232 > 172.16.11.5.8472: OTV, flags [I] (0x08), overlay 0, instance 1

IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 13568, seq 665, length 64

19:02:40.145727 IP 172.16.11.5.46521 > 172.16.11.4.8472: OTV, flags [I] (0x08), overlay 0, instance 1

IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 13568, seq 666, length 64

19:02:40.145976 IP 172.16.11.4.57232 > 172.16.11.5.8472: OTV, flags [I] (0x08), overlay 0, instance 1

IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 13568, seq 666, length 64

^C

10 packets captured

10 packets received by filter

0 packets dropped by kernel

[root@node01 ~]#

Note: the capture shows node01's physical interface receiving packets from node02. The outer header carries the two node IP addresses, and the pod IPs travel inside it. This confirms that pod-to-pod communication on k8s really involves no NAT at all; the pods talk to each other directly through the VXLAN tunnel.
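In the capture above the encapsulated traffic is addressed to UDP port 8472, and tcpdump labels it OTV because it decodes that port as OTV; the payload is the VXLAN tunnel traffic. To look only at tunnel traffic rather than everything exchanged with the peer node, the filter can be narrowed to that port. A minimal sketch: `vxlan_capture_cmd` is a hypothetical helper that only prints the command so it can be reviewed before being run as root, and port 8472 is taken from the output above, so treat it as an assumption for other clusters.

```shell
# Sketch: build a tcpdump command line for flannel's VXLAN traffic.
# vxlan_capture_cmd is a hypothetical helper; it prints the command only.
#   $1: physical interface, $2: peer node IP, $3: UDP port (default 8472)
vxlan_capture_cmd() {
  echo "tcpdump -i $1 -nn udp port ${3:-8472} and host $2"
}

vxlan_capture_cmd eth0 172.16.11.5
```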

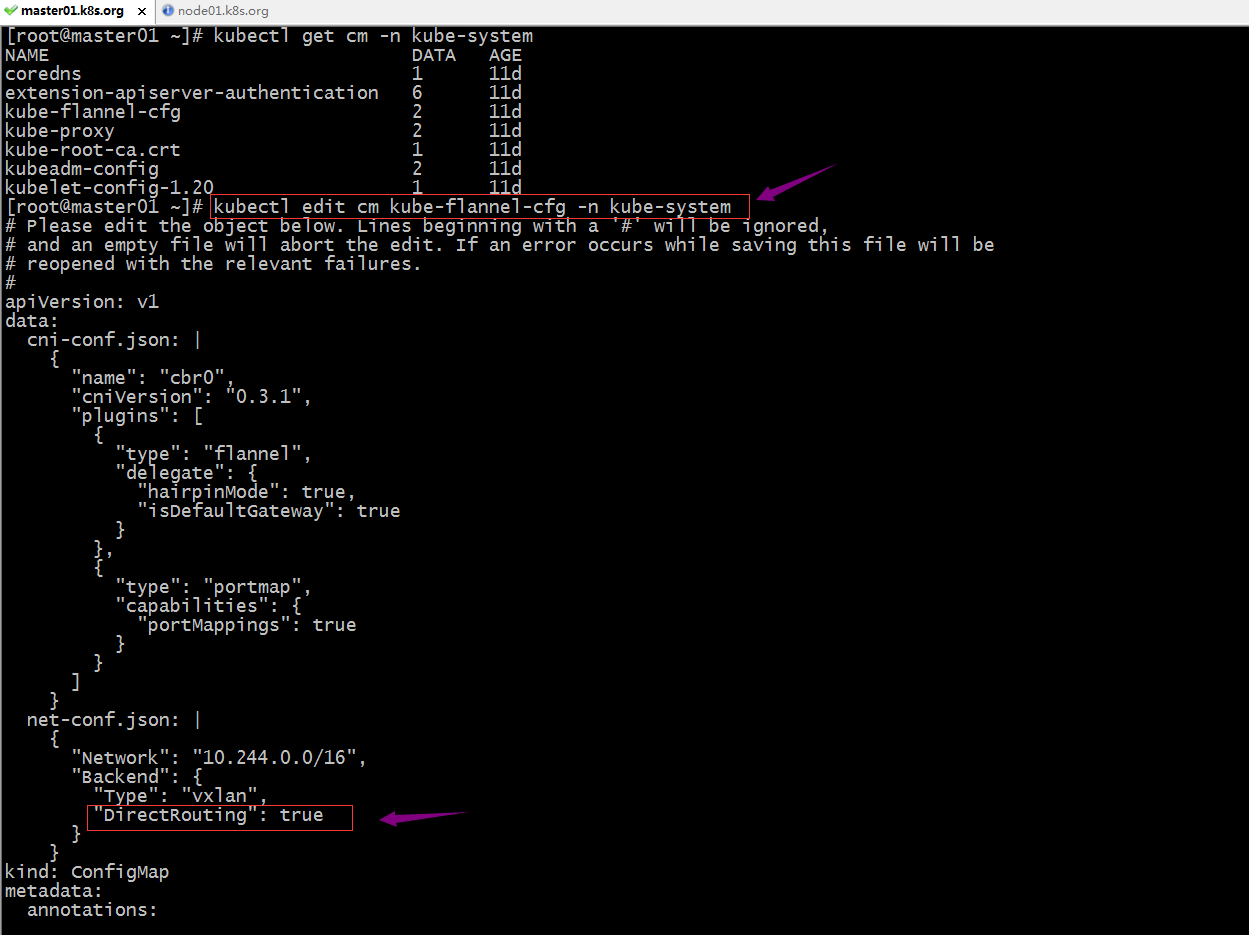

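Switch flannel to direct routing mode, so that pod-to-pod traffic bypasses the flannel.1 interface and goes straight out the physical interface.

Note: in the backend section of the flannel configuration, add "DirectRouting": true. Keep in mind that once this line is added, the Type line above it needs a trailing comma as a separator. Save and exit when done.

Delete the existing flannel pods so that they are recreated automatically and pick up the new configuration.

Before deleting them, check the node's routing table.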
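For reference, here is a sketch of what the network configuration could look like after the change. The Network and Type values are the ones used in this article; `print_net_conf` is a hypothetical helper that just prints the JSON. The assumption here is a deployment from the stock flannel manifest, where this JSON usually lives under the net-conf.json key of a ConfigMap named kube-flannel-cfg; check the names in your own cluster.

```shell
# Sketch: net-conf.json with direct routing enabled on the vxlan backend.
# Values mirror this article; adjust Network to your own pod CIDR.
print_net_conf() {
  cat <<'EOF'
{
  "Network": "10.244.0.0/16",
  "Backend": {
    "Type": "vxlan",
    "DirectRouting": true
  }
}
EOF
}

print_net_conf
```

Under the same assumption, the ConfigMap could be opened for editing with kubectl edit configmap kube-flannel-cfg -n kube-system.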

[root@master01 ~]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 172.16.11.2 0.0.0.0 UG 0 0 0 eth0

0.0.0.0 172.16.11.2 0.0.0.0 UG 100 0 0 eth0

10.244.0.0 0.0.0.0 255.255.255.0 U 0 0 0 cni0

10.244.1.0 10.244.1.0 255.255.255.0 UG 0 0 0 flannel.1

10.244.2.0 10.244.2.0 255.255.255.0 UG 0 0 0 flannel.1

10.244.3.0 10.244.3.0 255.255.255.0 UG 0 0 0 flannel.1

169.254.0.0 0.0.0.0 255.255.0.0 U 1002 0 0 eth0

172.16.11.0 0.0.0.0 255.255.255.0 U 100 0 0 eth0

172.17.0.0 0.0.0.0 255.255.0.0 U 0 0 0 docker0

[root@master01 ~]#

Note: before the flannel pods are deleted, the routes for 10.244.1.0/24, 10.244.2.0/24 and 10.244.3.0/24 on this node all point to the flannel.1 interface.

Delete the existing flannel pods.

[root@master01 ~]# kubectl get pods -n kube-system --show-labels

NAME READY STATUS RESTARTS AGE LABELS

coredns-7f89b7bc75-9s8wr 1/1 Running 0 11d k8s-app=kube-dns,pod-template-hash=7f89b7bc75

coredns-7f89b7bc75-ck8gl 1/1 Running 0 11d k8s-app=kube-dns,pod-template-hash=7f89b7bc75

etcd-master01.k8s.org 1/1 Running 1 11d component=etcd,tier=control-plane

kube-apiserver-master01.k8s.org 1/1 Running 1 11d component=kube-apiserver,tier=control-plane

kube-controller-manager-master01.k8s.org 1/1 Running 3 11d component=kube-controller-manager,tier=control-plane

kube-flannel-ds-2z7sk 1/1 Running 2 11d app=flannel,controller-revision-hash=64465d999,pod-template-generation=1,tier=node

kube-flannel-ds-57fng 1/1 Running 0 11d app=flannel,controller-revision-hash=64465d999,pod-template-generation=1,tier=node

kube-flannel-ds-vt2jv 1/1 Running 0 11d app=flannel,controller-revision-hash=64465d999,pod-template-generation=1,tier=node

kube-flannel-ds-wk52c 1/1 Running 2 11d app=flannel,controller-revision-hash=64465d999,pod-template-generation=1,tier=node

kube-proxy-2hcd9 1/1 Running 0 11d controller-revision-hash=c449f5b75,k8s-app=kube-proxy,pod-template-generation=1

kube-proxy-m9s45 1/1 Running 0 11d controller-revision-hash=c449f5b75,k8s-app=kube-proxy,pod-template-generation=1

kube-proxy-mh9nx 1/1 Running 0 11d controller-revision-hash=c449f5b75,k8s-app=kube-proxy,pod-template-generation=1

kube-proxy-t57x8 1/1 Running 0 11d controller-revision-hash=c449f5b75,k8s-app=kube-proxy,pod-template-generation=1

kube-scheduler-master01.k8s.org 1/1 Running 3 11d component=kube-scheduler,tier=control-plane

[root@master01 ~]# kubectl delete pod -l app=flannel -n kube-system

pod "kube-flannel-ds-2z7sk" deleted

pod "kube-flannel-ds-57fng" deleted

pod "kube-flannel-ds-vt2jv" deleted

pod "kube-flannel-ds-wk52c" deleted

[root@master01 ~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-7f89b7bc75-9s8wr 1/1 Running 0 11d

coredns-7f89b7bc75-ck8gl 1/1 Running 0 11d

etcd-master01.k8s.org 1/1 Running 1 11d

kube-apiserver-master01.k8s.org 1/1 Running 1 11d

kube-controller-manager-master01.k8s.org 1/1 Running 3 11d

kube-flannel-ds-9ww8d 1/1 Running 0 39s

kube-flannel-ds-gd45l 1/1 Running 0 79s

kube-flannel-ds-ps6c5 1/1 Running 0 27s

kube-flannel-ds-x642z 1/1 Running 0 70s

kube-proxy-2hcd9 1/1 Running 0 11d

kube-proxy-m9s45 1/1 Running 0 11d

kube-proxy-mh9nx 1/1 Running 0 11d

kube-proxy-t57x8 1/1 Running 0 11d

kube-scheduler-master01.k8s.org 1/1 Running 3 11d

[root@master01 ~]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 172.16.11.2 0.0.0.0 UG 0 0 0 eth0

0.0.0.0 172.16.11.2 0.0.0.0 UG 100 0 0 eth0

10.244.0.0 0.0.0.0 255.255.255.0 U 0 0 0 cni0

10.244.1.0 172.16.11.4 255.255.255.0 UG 0 0 0 eth0

10.244.2.0 172.16.11.5 255.255.255.0 UG 0 0 0 eth0

10.244.3.0 172.16.11.6 255.255.255.0 UG 0 0 0 eth0

169.254.0.0 0.0.0.0 255.255.0.0 U 1002 0 0 eth0

172.16.11.0 0.0.0.0 255.255.255.0 U 100 0 0 eth0

172.17.0.0 0.0.0.0 255.255.0.0 U 0 0 0 docker0

[root@master01 ~]#

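Note: after the flannel pods are recreated, the routing table has changed; no route goes through the flannel.1 interface any more.

Verify: from inside one pod, ping another pod's IP and see which path the packets take.

Capture packets on node 1.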
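Direct routes like these only make sense when the nodes can reach each other without an intermediate router; with DirectRouting enabled, flannel is documented to keep using VXLAN for hosts on different subnets. The sketch below approximates that decision with a plain same-subnet test. `ip_to_int` and `same_subnet` are hypothetical helpers written for this illustration, and the check is a simplification, not flannel's actual code.

```shell
# Sketch: would two nodes get a direct route or VXLAN encapsulation?

# Convert a dotted IPv4 address to a 32-bit integer.
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1            # split the address into its four octets
  IFS=$old_ifs
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Succeed when both addresses fall in the same network.
#   $1, $2: node IPs, $3: prefix length of the node network
same_subnet() {
  mask=$(( (0xFFFFFFFF << (32 - $3)) & 0xFFFFFFFF ))
  a=$(ip_to_int "$1")
  b=$(ip_to_int "$2")
  [ $(( a & mask )) -eq $(( b & mask )) ]
}

if same_subnet 172.16.11.4 172.16.11.5 24; then
  echo "same subnet: direct route through the physical interface"
else
  echo "different subnets: vxlan encapsulation"
fi
```

With the node addresses from this article (172.16.11.4 and 172.16.11.5 in a /24), the check succeeds, which is in line with the routes above pointing at eth0.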

[root@node01 ~]# tcpdump -i cni0 -nn icmp

tcpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on cni0, link-type EN10MB (Ethernet), capture size 262144 bytes

19:45:55.693118 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 18944, seq 32, length 64

19:45:55.693285 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 18944, seq 32, length 64

19:45:56.693771 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 18944, seq 33, length 64

19:45:56.693941 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 18944, seq 33, length 64

19:45:57.695549 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 18944, seq 34, length 64

19:45:57.695905 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 18944, seq 34, length 64

19:45:58.696517 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 18944, seq 35, length 64

19:45:58.697035 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 18944, seq 35, length 64

^C

8 packets captured

8 packets received by filter

0 packets dropped by kernel

[root@node01 ~]# tcpdump -i flannel.1 -nn icmp

tcpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on flannel.1, link-type EN10MB (Ethernet), capture size 262144 bytes

^C

0 packets captured

0 packets received by filter

0 packets dropped by kernel

[root@node01 ~]# tcpdump -i eth0 -nn icmp

tcpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on eth0, link-type EN10MB (Ethernet), capture size 262144 bytes

19:46:24.737002 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 18944, seq 61, length 64

19:46:24.737350 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 18944, seq 61, length 64

19:46:25.737664 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 18944, seq 62, length 64

19:46:25.737987 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 18944, seq 62, length 64

19:46:26.739459 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 18944, seq 63, length 64

19:46:26.739705 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 18944, seq 63, length 64

19:46:27.739800 IP 10.244.2.9 > 10.244.1.3: ICMP echo request, id 18944, seq 64, length 64

19:46:27.740026 IP 10.244.1.3 > 10.244.2.9: ICMP echo reply, id 18944, seq 64, length 64

^C

8 packets captured

8 packets received by filter

0 packets dropped by kernel

[root@node01 ~]#

Note: no ICMP packets show up on node01's flannel.1 interface any more, while they can now be captured on the physical interface, and the capture shows that it is 10.244.2.9 pinging 10.244.1.3.


浙公网安备 33010602011771号
