ELK日志收集平台部署

需求背景

由于公司的后台服务有三台,每当后台服务运行异常,需要看日志排查错误的时候,都必须开启3个ssh窗口进行查看,研发们觉得很不方便,于是便有了统一日志收集与查看的需求。

这里,我用ELK集群,通过收集三台后台服务的日志,再统一进行日志展示,实现了这一需求。

当然,当前只是进行了简单的日志采集,如果后期相对某些日志字段进行分析,则可以通过logstash以及Kibana来实现。

部署环境

系统:CentOS 7

软件:

elasticsearch-6.1.1

logstash-6.1.1

kibana-6.1.1

下载地址:https://www.elastic.co/cn/products

搭建步骤

一:elasticsearch:

elasticsearch是用于存储日志的数据库。

下载elasticsearch软件,解压:

|

1

2

|

# tar -zxvf elasticsearch-6.1.1.tar.gz # mv elasticsearch-6.1.1 /opt/apps/elasticsearch |

由于elasticsearch建议使用非root用户启动,使用root启动会报错,故需创建一个普通用户,并进行一些简单配置:

|

1

2

3

4

5

6

|

# useradd elk# vi /opt/apps/elasticsearch/config/elasticsearch.ymlnetwork.host: 0.0.0.0http.port: 9200http.cors.enabled: truehttp.cors.allow-origin: "*" |

启动,并验证:

|

1

2

3

4

5

6

7

|

# su - elk$ nohup /opt/apps/elasticsearch/bin/elasticsearch &# netstat -ntpl | grep 9200tcp 0 0 0.0.0.0:9200 0.0.0.0:* LISTEN 6637/java #curl 'localhost:9200/_cat/health?v'epoch timestamp cluster status node.total node.data shards pri relo init unassign pending_tasks max_task_wait_time active_shards_percent1514858033 09:53:53 elasticsearch yellow 1 1 241 241 0 0 241 0 - 50.0% |

如果报错:OpenJDK 64-Bit Server VM warning: If the number of processors is expected to increase from one, then you should configure the number of parallel GC threads appropriately using -XX:ParallelGCThreads=N 说明需要加CPU和内存

bootstrap checks failed

max file descriptors [65535] for elasticsearch process is too low, increase to at least [65536]

[1]: max virtual memory areas vm.max_map_count [65530] is too low, increase to at least [262144]

解决方案

1、vi /etc/sysctl.conf

设置fs.file-max=655350

vm.max_map_count=262144

保存之后sysctl -p使设置生效

2、vi /etc/security/limits.conf 新增

* soft nofile 655350

* hard nofile 655350

3、重新使用SSH登录,再次启动elasticsearch即可。

二:logstash

logstash用于收集各服务器上的日志,然后把收集到的日志,存储进elasticsearch。收集日志的方式有很多种,例如结合redis或者filebeat,这里我们使用redis收集的方式。

安装logstash:

|

1

2

3

|

在所有服务器上:# tar -zxvf logstash-6.1.1.tar.gz# mv logstash-6.1.1 /opt/apps/logstash/ |

配置后台服务器,收集相关的日志:

|

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

|

在三台后台服务器上新建logstash文件,配置日志收集:# vi /opt/conf/logstash/logstash.conf input { file { #指定type type => "web_stderr" #匹配多行的日志 codec => multiline { pattern => "^[^0-9]" what => "previous" } #指定本地的日志路径 path => [ "/opt/logs/web-stderr.log"] sincedb_path => "/opt/logs/logstash/sincedb-access" } file { type => "web_stdout" codec => multiline { pattern => "^[^0-9]" what => "previous" } path => [ "/opt/logs/web-stdout.log"] sincedb_path => "/opt/logs/logstash/sincedb-access" } #收集nginx日志 file { type => "nginx" path => [ "/opt/logs/nginx/*.log"] sincedb_path => "/opt/logs/logstash/sincedb-access" }}output { #指定输出的目标redis redis { host => "xx.xx.xx.xx" port => "6379" data_type => "list" key => "logstash" }} |

配置elk日志服务器上的logstash,从redis队列中读取日志,并存储到elasticsearch中:

|

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

|

# vi /opt/conf/logstash/logstash-server.conf#配置从redis队列中读取收集的日志input { redis { host => "xx.xx.xx.xx" port => "6379" type => "redis-input" data_type => "list" key => "logstash" threads => 10 }}#把日志输出到elasticsearch中output { elasticsearch { hosts => "localhost:9200" index => "logstash-%{type}.%{+YYYY.MM.dd}" } #这里把日志收集到本地文件 file { path => "/opt/logs/logstash/%{type}.%{+yyyy-MM-dd}" codec => line { format => "%{message}"} }} |

启动logstash进程:

|

1

2

3

4

|

后台服务器:# nohup /opt/apps/logstash/bin/logstash -f /opt/conf/logstash/logstash.conf --path.data=/opt/data/logstash/logstash &elk日志服务器:# nohup /opt/apps/logstash/bin/logstash -f /opt/conf/logstash/logstash-server.conf --path.data=/opt/data/logstash/logstash-server & |

三:kibana

kibana用于日志的前端展示。

安装、配置kibana:

|

1

2

3

4

5

6

7

8

|

# tar -zxvf kibana-6.1.1-linux-x86_64.tar.gz# mv kibana-6.1.1-linux-x86_64 /opt/apps/kibana配置elasticsearch链接:# vi /opt/apps/kibana/config/kibana.ymlserver.port: 5601server.host: "0.0.0.0"#配置elasticsearch链接:elasticsearch.url: "http://localhost:9200" |

启动kibana:

|

1

|

nohup /opt/apps/kibana/bin/kibana & |

访问kibana:

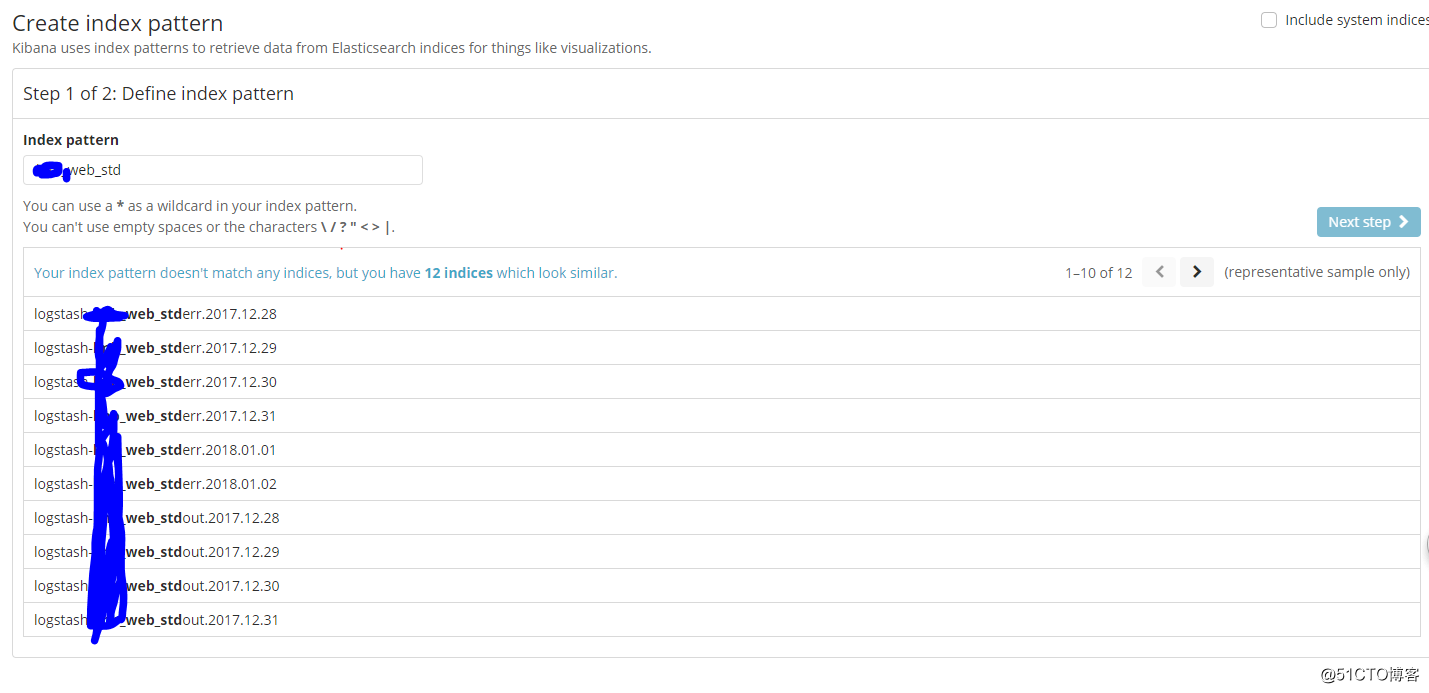

可以根据我们在logstash中配置的type,创建索引:

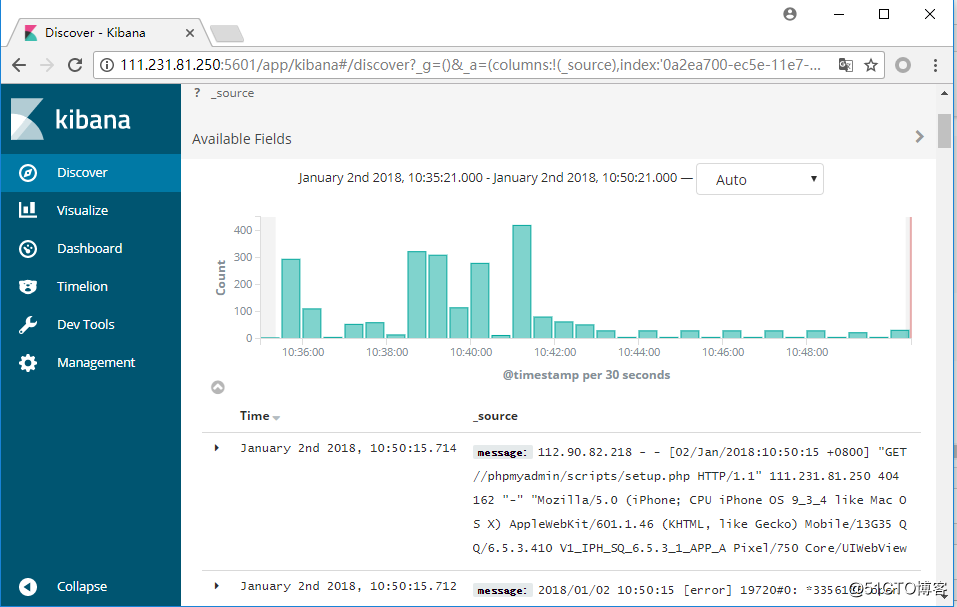

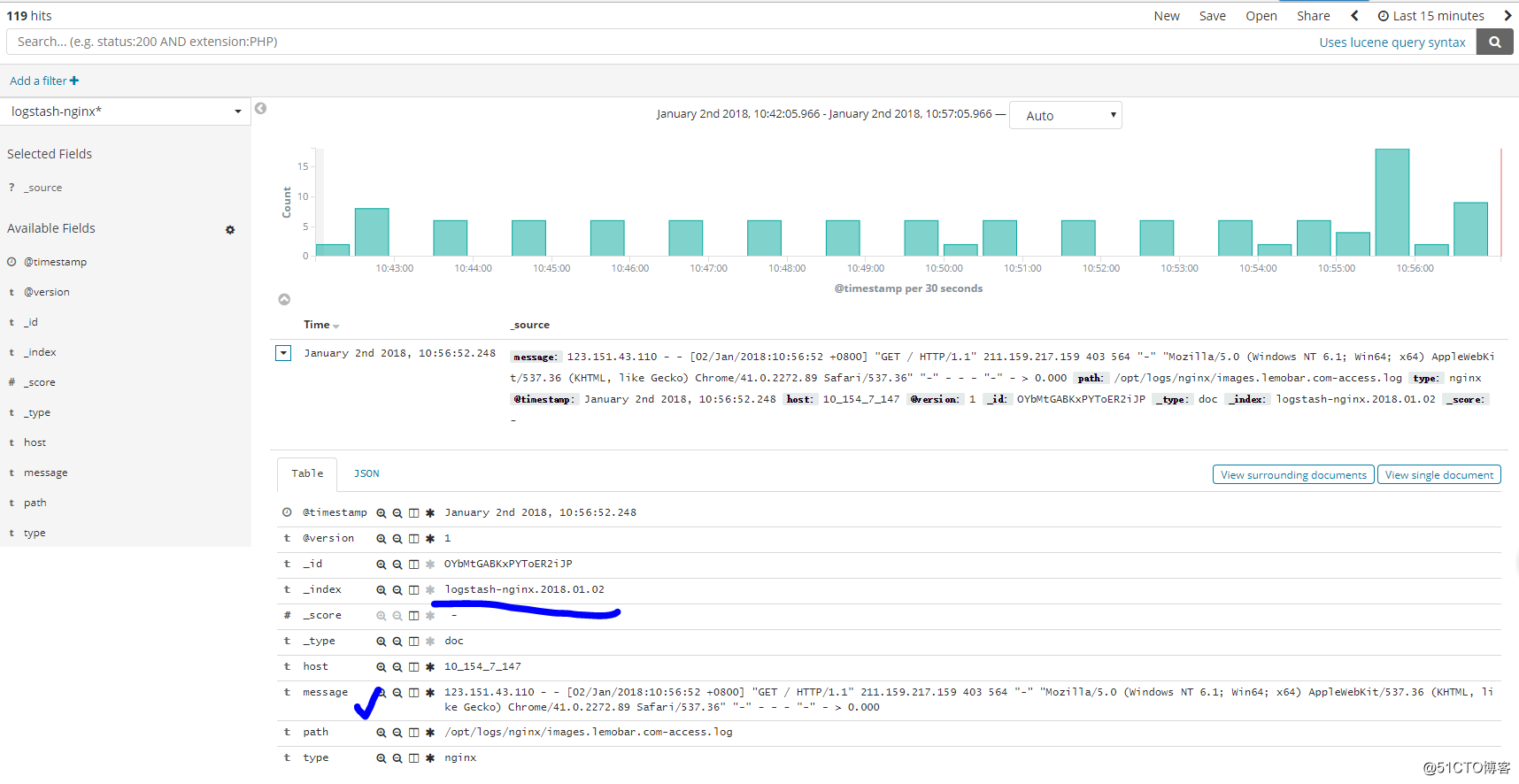

可以根据我们创建的索引,进行查看(这里查看nginx日志):

后记:

当然了,结合logstash和kibana不单单仅能实现收集日志的功能,通过对字段的匹配、筛选以及结合kibana的图标功能,能对我们想要的字段进行分析,实现相应的数据报表等。