Writing a Simple Web Crawler in Python

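Goal: crawl the Baidu Baike entry for Python and the entries linked from it, extract each page's title and summary, and write the results to an HTML table.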

Entry page: the Python entry on Baidu Baike, https://baike.baidu.com/item/Python/407313

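URL format of linked entry pages: `/item/%E8%AE%A1%E7%AE%97%E6%9C%BA%E7%A8%8B%E5%BA%8F%E8%AE%BE%E8%AE%A1%E8%AF%AD%E8%A8%80`. Note: this is a relative URL, not a complete one, so it has to be joined with the URL of the page it appears on.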
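A quick way to see what such a relative link means and how it becomes a full URL is to use Python 3's standard `urllib.parse`. The article's own code uses the Python 2 equivalent, `urlparse.urljoin`. The variable names below are only for illustration.

```python
from urllib.parse import unquote, urljoin

# The entry page the crawler starts from, and a relative link found on it
page_url = 'https://baike.baidu.com/item/Python/407313'
relative = '/item/%E8%AE%A1%E7%AE%97%E6%9C%BA%E7%A8%8B%E5%BA%8F%E8%AE%BE%E8%AE%A1%E8%AF%AD%E8%A8%80'

# A path starting with '/' replaces the whole path of page_url but keeps scheme and host
full_url = urljoin(page_url, relative)
print(full_url)

# The %XX sequences are the percent-encoded UTF-8 bytes of the entry name
print(unquote(relative))  # /item/计算机程序设计语言
```

The decoded name, 计算机程序设计语言, means "computer programming language". That is the entry this link points to.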

Data format:

- Title: `<dd class="lemmaWgt-lemmaTitle-title"><h1>***</h1></dd>`
- Summary: `<div class="lemma-summary">***</div>`
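The sketch below checks the two patterns against a page using only Python 3's standard `html.parser.HTMLParser`, with no third-party package. The class name `LemmaExtractor` and the sample HTML are made up for illustration. The crawler itself uses BeautifulSoup, and the live page markup may have changed since this article was written.

```python
from html.parser import HTMLParser


class LemmaExtractor(HTMLParser):
    """Hypothetical helper: collects the text of the title <h1> and the summary <div>."""

    def __init__(self):
        super().__init__()
        self.title = ''
        self.summary = ''
        self._in_title_dd = False
        self._in_h1 = False
        self._summary_depth = 0  # > 0 while inside the summary <div> (counts nested divs)

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get('class') or '').split()
        if tag == 'dd' and 'lemmaWgt-lemmaTitle-title' in classes:
            self._in_title_dd = True
        elif tag == 'h1' and self._in_title_dd:
            self._in_h1 = True
        elif tag == 'div':
            if self._summary_depth:
                self._summary_depth += 1
            elif 'lemma-summary' in classes:
                self._summary_depth = 1

    def handle_endtag(self, tag):
        if tag == 'h1':
            self._in_h1 = False
        elif tag == 'dd':
            self._in_title_dd = False
        elif tag == 'div' and self._summary_depth:
            self._summary_depth -= 1

    def handle_data(self, data):
        if self._in_h1:
            self.title += data
        elif self._summary_depth:
            self.summary += data


# Made-up sample markup following the two patterns above
sample = ('<dd class="lemmaWgt-lemmaTitle-title"><h1>Python</h1></dd>'
          '<div class="lemma-summary" label-module="lemmaSummary">'
          '<div class="para">Python is a programming language.</div></div>')
extractor = LemmaExtractor()
extractor.feed(sample)
print(extractor.title)    # Python
print(extractor.summary)  # Python is a programming language.
```

A counter is used for the summary instead of a flag because the summary `<div>` can contain nested `<div>` elements. A flag would be switched off by the first inner `</div>`.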

Page encoding: UTF-8

Example code (Python 2):

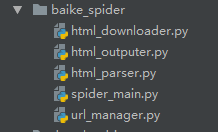

The figure above shows the project's file and directory structure.

Entry point (spider_main.py):

```python
# coding:utf-8
import url_manager
import html_parser
import html_downloader
import html_outputer


class SpiderMain(object):
    def __init__(self):
        self.urls = url_manager.UrlManager()
        self.downloader = html_downloader.HtmlDownloader()
        self.parser = html_parser.HtmlParser()
        self.outputer = html_outputer.HtmlOutputer()

    def craw(self, root_url):
        count = 1
        self.urls.add_new_url(root_url)
        while self.urls.has_new_url():  # while there are URLs waiting to be crawled
            try:
                new_url = self.urls.get_new_url()  # take one URL to crawl
                print 'craw %d:%s' % (count, new_url)
                html_cont = self.downloader.download(new_url)  # download the page
                new_urls, new_data = self.parser.parse(new_url, html_cont)  # parse out new URLs and the page's data
                self.urls.add_new_urls(new_urls)  # hand the new URLs to the URL manager
                self.outputer.collect_data(new_data)  # collect the data for the output file
                if count == 10:  # stop after 10 pages
                    break
                count += 1
            except Exception as e:
                print e
        self.outputer.output_html()


if __name__ == '__main__':
    obj_spider = SpiderMain()
    obj_spider.craw('https://baike.baidu.com/item/Python/407313')
```
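The control flow of `craw` can be tried offline: take a URL, download it, parse it, queue the new links, collect the data, and stop after a fixed number of pages. The following Python 3 sketch replaces the four modules with plain functions passed in as arguments. The names `crawl`, `fetch`, `parse` and `max_pages`, and the tiny in-memory "site", are hypothetical. Unlike `UrlManager`, this sketch keeps pending URLs in a list, so pages are visited in the order they were discovered.

```python
def crawl(root_url, fetch, parse, max_pages=10):
    """Hypothetical sketch of SpiderMain.craw with the modules swapped for functions."""
    new_urls = [root_url]   # URLs waiting to be crawled, oldest first
    old_urls = set()        # URLs already taken from the queue
    results = []            # one data dict per successfully parsed page
    while new_urls and len(results) < max_pages:
        url = new_urls.pop(0)
        old_urls.add(url)
        print('craw %d:%s' % (len(results) + 1, url))
        try:
            html_cont = fetch(url)               # download the page
            links, data = parse(url, html_cont)  # new links plus this page's data
        except Exception as exc:                 # one bad page should not stop the crawl
            print('failed: %r' % exc)
            continue
        for link in links:
            if link not in old_urls and link not in new_urls:
                new_urls.append(link)
        results.append(data)
    return results


# A tiny fake "site": each page maps to the pages it links to; 'X' does not exist
site = {'A': ['B', 'C'], 'B': ['A', 'D'], 'C': ['X'], 'D': []}


def fetch(url):
    return site[url]  # raises KeyError for a missing page, like a failed download


def parse(url, links):
    return links, {'url': url}


pages = crawl('A', fetch, parse, max_pages=3)
print([p['url'] for p in pages])  # ['A', 'B', 'C']
```

As in the original, a failed page does not count toward the limit, and the crawl continues with the next queued URL.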

URL manager (url_manager.py):

```python
# coding:utf-8
class UrlManager(object):
    def __init__(self):
        self.new_urls = set()  # URLs waiting to be crawled
        self.old_urls = set()  # URLs already crawled

    def add_new_url(self, url):
        if url is None:
            return
        if url not in self.new_urls and url not in self.old_urls:
            self.new_urls.add(url)

    def add_new_urls(self, urls):
        if urls is None or len(urls) == 0:
            return
        for url in urls:
            self.add_new_url(url)

    def has_new_url(self):
        return len(self.new_urls) != 0

    def get_new_url(self):
        new_url = self.new_urls.pop()  # remove and return an arbitrary URL from the set
        self.old_urls.add(new_url)
        return new_url
```
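`set.pop()` removes an arbitrary element, so the crawl order with `UrlManager` is unpredictable. If you want breadth-first order, where pages closest to the entry page come first, a variant with the same method names could keep a `collections.deque` alongside a "seen" set. This is a sketch of that alternative, not the article's code, and the class name `FifoUrlManager` is hypothetical.

```python
from collections import deque


class FifoUrlManager(object):
    """Hypothetical drop-in variant of UrlManager that hands out URLs in insertion order."""

    def __init__(self):
        self.new_urls = deque()  # URLs waiting to be crawled, oldest first
        self.seen = set()        # every URL ever queued, crawled or not

    def add_new_url(self, url):
        if url is None or url in self.seen:
            return
        self.seen.add(url)
        self.new_urls.append(url)

    def add_new_urls(self, urls):
        for url in urls or ():
            self.add_new_url(url)

    def has_new_url(self):
        return len(self.new_urls) != 0

    def get_new_url(self):
        return self.new_urls.popleft()  # oldest pending URL first


m = FifoUrlManager()
m.add_new_url('root')
m.add_new_urls(['a', 'b', 'root', 'a'])
order = []
while m.has_new_url():
    order.append(m.get_new_url())
print(order)  # ['root', 'a', 'b']
```

A single `seen` set covers both "pending" and "done", so duplicate checks stay constant-time even though the queue is a deque.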

Page downloader (html_downloader.py):

```python
# coding:utf-8
import urllib2


class HtmlDownloader(object):
    def download(self, url):
        if url is None:
            return None
        response = urllib2.urlopen(url)
        if response.getcode() != 200:  # only accept HTTP 200 OK
            return None
        return response.read()  # raw page bytes
```
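`urllib2` exists only in Python 2. A Python 3 sketch of the same downloader could use `urllib.request`. The browser-like `User-Agent` header, the 10-second timeout and the helper name `build_request` are assumptions added here and are not part of the original. Some sites reject urllib's default User-Agent, which is why the header is included.

```python
import urllib.request

# Assumption: a browser-like User-Agent; some sites refuse urllib's default one
HEADERS = {'User-Agent': 'Mozilla/5.0 (compatible; simple-spider)'}


def build_request(url):
    """Hypothetical helper: wrap the URL in a Request carrying our headers."""
    return urllib.request.Request(url, headers=HEADERS)


class HtmlDownloader(object):
    def download(self, url, timeout=10):
        if url is None:
            return None
        # urlopen raises urllib.error.HTTPError for error status codes
        with urllib.request.urlopen(build_request(url), timeout=timeout) as response:
            if response.status != 200:
                return None
            return response.read()  # raw bytes; the parser decodes them as UTF-8
```

The `with` block closes the connection once the body has been read. Timeouts and HTTP errors surface as exceptions, which the `try`/`except` in `craw` already catches.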

HTML parser (html_parser.py):

```python
# coding:utf-8
from bs4 import BeautifulSoup
import urlparse
import re


class HtmlParser(object):
    def _get_new_urls(self, page_url, soup):
        new_urls = set()
        # Links on the page look like /item/xxx
        links = soup.find_all('a', href=re.compile(r"/item/(.*)"))  # all <a> tags pointing to entries
        for link in links:
            new_url = link['href']  # the (usually relative) link
            new_full_url = urlparse.urljoin(page_url, new_url)  # resolve it against page_url into a full URL
            new_urls.add(new_full_url)
        return new_urls

    def _get_new_data(self, page_url, soup):
        res_data = {}
        res_data['url'] = page_url
        # <dd class="lemmaWgt-lemmaTitle-title"><h1>Python</h1></dd>
        title_node = soup.find('dd', class_='lemmaWgt-lemmaTitle-title').find('h1')
        res_data['title'] = title_node.get_text()
        # The summary HTML: <div class="lemma-summary" label-module="lemmaSummary">
        summary_node = soup.find('div', class_='lemma-summary')
        res_data['summary'] = summary_node.get_text()
        return res_data

    def parse(self, page_url, html_cont):
        if page_url is None or html_cont is None:
            return None, None  # nothing to parse; the caller's None checks skip both values
        soup = BeautifulSoup(html_cont, 'html.parser', from_encoding='utf-8')
        new_urls = self._get_new_urls(page_url, soup)
        new_data = self._get_new_data(page_url, soup)
        return new_urls, new_data
```
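`_get_new_urls` keeps every `<a>` whose `href` matches `/item/(.*)` and resolves it against the current page. The same idea is sketched below using only the Python 3 standard library (`html.parser` plus `urllib.parse.urljoin`). The class name `ItemLinkCollector` and the sample markup are hypothetical. Like BeautifulSoup's regular-expression filter, the sketch uses `search`, so `/item/` may appear anywhere in the `href`, including in absolute URLs.

```python
import re
from html.parser import HTMLParser
from urllib.parse import urljoin

ITEM_LINK = re.compile(r'/item/(.*)')  # same pattern as the article's parser


class ItemLinkCollector(HTMLParser):
    """Hypothetical helper: collects absolute URLs of <a href> links containing /item/."""

    def __init__(self, page_url):
        super().__init__()
        self.page_url = page_url
        self.new_urls = set()

    def handle_starttag(self, tag, attrs):
        if tag != 'a':
            return
        href = dict(attrs).get('href')
        if href and ITEM_LINK.search(href):
            # Resolve relative links such as /item/xxx against the current page
            self.new_urls.add(urljoin(self.page_url, href))


collector = ItemLinkCollector('https://baike.baidu.com/item/Python/407313')
collector.feed('<a href="/item/Guido">x</a> <a href="/help">y</a> '
               '<a href="https://baike.baidu.com/item/Java">z</a>')
print(sorted(collector.new_urls))
# ['https://baike.baidu.com/item/Guido', 'https://baike.baidu.com/item/Java']
```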

Writing out the crawled data (html_outputer.py):

```python
# coding:utf-8
class HtmlOutputer(object):
    def __init__(self):
        self.datas = []

    def collect_data(self, data):  # collect one page's data
        if data is None:
            return
        self.datas.append(data)

    def output_html(self):  # write the collected data out as an HTML table
        with open('output.html', 'w') as fout:  # 'with' closes the file when done
            fout.write('<html>')
            fout.write('<head><meta charset="utf-8"></head>')  # so browsers decode the Chinese text correctly
            fout.write('<body>')
            fout.write('<table>')
            for data in self.datas:
                fout.write('<tr>')
                fout.write('<td>%s</td>' % data['url'])
                fout.write('<td>%s</td>' % data['title'].encode('utf-8'))
                fout.write('<td>%s</td>' % data['summary'].encode('utf-8'))
                fout.write('</tr>')
            fout.write('</table>')
            fout.write('</body>')
            fout.write('</html>')
```
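In Python 3, strings are already Unicode. The `.encode('utf-8')` calls can therefore be dropped in favor of opening the file with `encoding='utf-8'`. The sketch below also escapes each cell with `html.escape`, because a summary containing `<` or `&` would otherwise break the table. It also declares the charset in a `<meta>` tag. The function names `render_table` and `write_html` are hypothetical.

```python
import html


def render_table(datas):
    """Hypothetical helper: build the output page as one string from collected dicts."""
    rows = []
    for data in datas:
        cells = ''.join('<td>%s</td>' % html.escape(data[key])
                        for key in ('url', 'title', 'summary'))
        rows.append('<tr>%s</tr>' % cells)
    return ('<html><head><meta charset="utf-8"></head><body><table>'
            + ''.join(rows) + '</table></body></html>')


def write_html(datas, path='output.html'):
    # 'with' closes (and flushes) the file even if writing fails midway
    with open(path, 'w', encoding='utf-8') as fout:
        fout.write(render_table(datas))


page = render_table([{'url': 'https://baike.baidu.com/item/Python/407313',
                      'title': 'Python', 'summary': 'a < b & c'}])
print('a &lt; b &amp; c' in page)  # True
```

Building the page as a string first keeps the rendering testable without touching the file system.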

浙公网安备 33010602011771号
