import torch
import torch.nn as nn
import torch.nn.functional as F

class DMlp(nn.Module):
    '''
    Extracts local features.
    '''
    def __init__(self, dim, growth_rate=2.0):
        super().__init__()
        hidden_dim = int(dim * growth_rate)
        self.conv_0 = nn.Sequential(
            # 3x3 grouped conv (groups=dim): hidden_dim must be a multiple of dim
            nn.Conv2d(dim, hidden_dim, 3, 1, 1, groups=dim),
            # 1x1 conv mixes information across channels
            nn.Conv2d(hidden_dim, hidden_dim, 1, 1, 0)
        )
        self.act = nn.GELU()
        # 1x1 conv projects back to dim channels
        self.conv_1 = nn.Conv2d(hidden_dim, dim, 1, 1, 0)

    def forward(self, x):
        x = self.conv_0(x)
        x = self.act(x)
        x = self.conv_1(x)
        return x
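The first convolution in `DMlp` uses `groups=dim`, which constrains how `growth_rate` can be chosen. The following is a minimal sketch of that constraint, assuming `dim=32` and a 16x16 input (both values are picked only for illustration): it builds the same two layers as `conv_0`, inspects the grouped weight shape, and checks that a `hidden_dim` that is not a multiple of `dim` is rejected.

```python
import torch
import torch.nn as nn

# Illustrative values, not taken from the original post
dim, growth_rate = 32, 2.0
hidden_dim = int(dim * growth_rate)  # 64

# groups=dim gives each input channel its own group; every group produces
# hidden_dim // dim output channels, so hidden_dim must be divisible by dim.
grouped = nn.Conv2d(dim, hidden_dim, 3, 1, 1, groups=dim)
pointwise = nn.Conv2d(hidden_dim, hidden_dim, 1, 1, 0)

x = torch.rand(1, dim, 16, 16)
out = pointwise(grouped(x))

# Weight layout is (out_channels, in_channels // groups, kH, kW)
weight_shape = tuple(grouped.weight.shape)
out_shape = tuple(out.shape)

# growth_rate=1.5 would give hidden_dim=48, which is not a multiple of 32
try:
    nn.Conv2d(dim, int(dim * 1.5), 3, 1, 1, groups=dim)
    invalid_accepted = True
except ValueError:
    invalid_accepted = False

print(weight_shape, out_shape, invalid_accepted)
```

With the default `growth_rate=2.0` the constraint always holds, since `hidden_dim` is exactly `2 * dim`.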

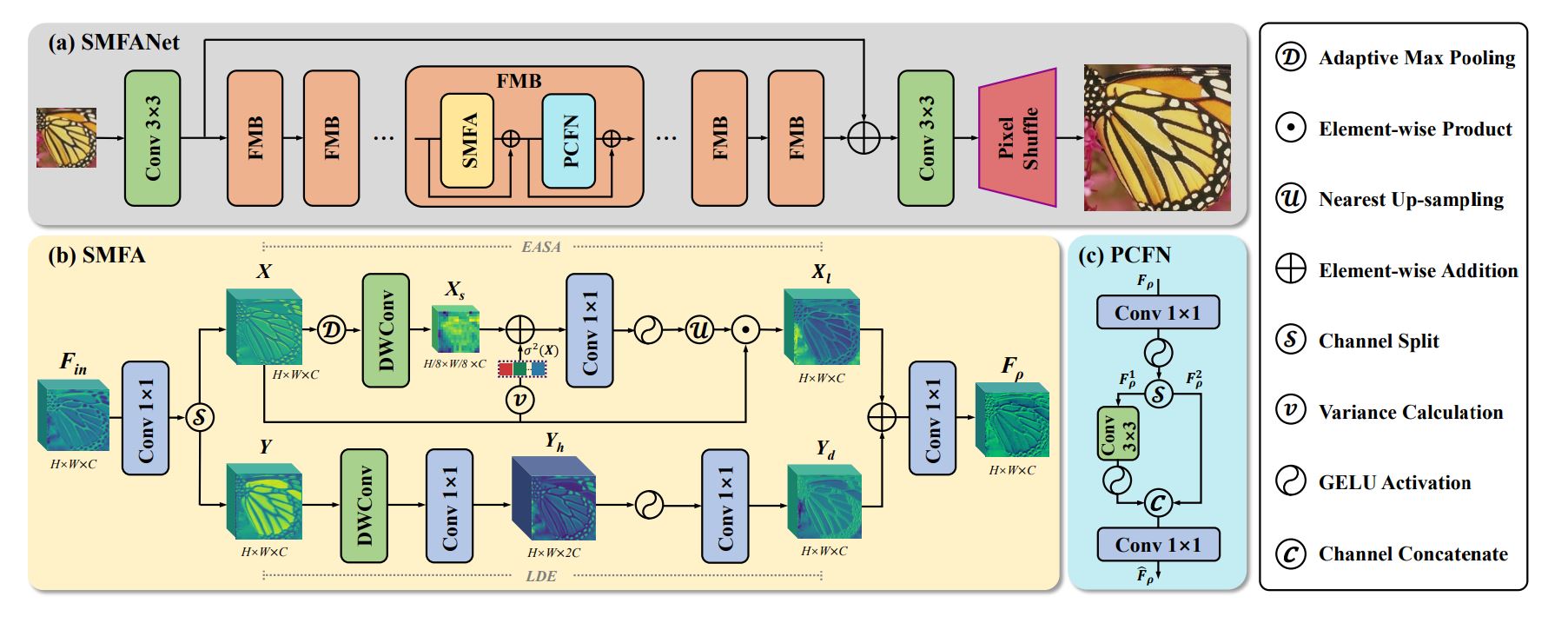

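class SMFA(nn.Module):
    '''
    A possible replacement for self-attention. Extracts global features,
    and also includes local feature extraction.
    '''
    def __init__(self, dim=36):
        super(SMFA, self).__init__()
        self.linear_0 = nn.Conv2d(dim, dim * 2, 1, 1, 0)
        self.linear_1 = nn.Conv2d(dim, dim, 1, 1, 0)
        self.linear_2 = nn.Conv2d(dim, dim, 1, 1, 0)
        self.lde = DMlp(dim, 2)
        self.dw_conv = nn.Conv2d(dim, dim, 3, 1, 1, groups=dim)
        self.gelu = nn.GELU()
        self.down_scale = 8
        # Per-channel weights for the pooled features (alpha) and the variance (belt)
        self.alpha = nn.Parameter(torch.ones((1, dim, 1, 1)))
        self.belt = nn.Parameter(torch.zeros((1, dim, 1, 1)))

    def forward(self, f):
        _, _, h, w = f.shape
        # Double the channels of the input, then split along the channel axis into y and x
        y, x = self.linear_0(f).chunk(2, dim=1)
        # Max-pool x down by down_scale, then apply a depthwise conv: global features.
        # Note: h and w must be at least down_scale, otherwise the pooled size is 0.
        x_s = self.dw_conv(F.adaptive_max_pool2d(x, (h // self.down_scale, w // self.down_scale)))
        # Per-channel spatial variance of x: a statistic of how much x varies over space
        x_v = torch.var(x, dim=(-2, -1), keepdim=True)
        # Weighted sum of the global features and the variance, a 1x1 conv to mix channels,
        # an activation, then interpolation back to the size of x, then multiply by x
        x_l = x * F.interpolate(self.gelu(self.linear_1(x_s * self.alpha + x_v * self.belt)), size=(h, w), mode='nearest')
        # Inverted residual structure; this is the local feature module
        y_d = self.lde(y)
        # Fuse the processed x and y by addition followed by a 1x1 conv
        return self.linear_2(x_l + y_d)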
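The modulation branch in `forward` combines tensors of three different spatial sizes, which is easy to lose track of. Here is a minimal sketch of just the tensor operations, assuming a 4-channel 64x64 input and leaving out the learned layers (`dw_conv`, `linear_1`, `gelu`), so it shows the shapes and broadcasting only, not the module's actual output. The names `mixed` and `mod` are introduced here for illustration.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
x = torch.rand(1, 4, 64, 64)  # illustrative input, not from the original post
_, _, h, w = x.shape
down_scale = 8

# Pooled map: (1, 4, 8, 8)
x_s = F.adaptive_max_pool2d(x, (h // down_scale, w // down_scale))
# Per-channel spatial variance: (1, 4, 1, 1)
x_v = torch.var(x, dim=(-2, -1), keepdim=True)

# alpha starts at ones and belt at zeros, so at initialization the
# variance term contributes nothing; both are learned per channel.
alpha = torch.ones(1, 4, 1, 1)
belt = torch.zeros(1, 4, 1, 1)
mixed = x_s * alpha + x_v * belt  # x_v broadcasts over the 8x8 grid

# Nearest-neighbor upsampling back to (h, w): each value fills an 8x8 block
mod = F.interpolate(mixed, size=(h, w), mode='nearest')
x_l = x * mod

print(x_s.shape, x_v.shape, mod.shape, x_l.shape)
```

Because the upsampling mode is `'nearest'`, the modulation map is piecewise constant over 8x8 blocks when `h` and `w` are multiples of `down_scale`.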

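if __name__ == '__main__':
    block = SMFA(dim=32)
    # Named x rather than input to avoid shadowing the built-in input()
    x = torch.rand(1, 32, 64, 64)
    output = block(x)
    print(x.size())       # torch.Size([1, 32, 64, 64])
    print(output.size())  # torch.Size([1, 32, 64, 64])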


浙公网安备 33010602011771号